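1. Before implementing a neural network, the model parameters must be initialized. If the weights and biases of every layer are initialized to 0, then after the first forward pass all hidden-layer neurons have the same activation value, and during backpropagation all of their weights receive the same update as well. The hidden neurons therefore never differ from one another, which is known as the symmetric weight phenomenon.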

import torch.nn as nn
import torch.nn.functional as F
import torch
import math
import matplotlib.pyplot as plt


def make_moons(n_samples=1000, shuffle=True, noise=None):
    """Generate two interleaving half-moon classes, optionally with noise.

    Inputs:
        n_samples: number of samples, int
        shuffle: whether to shuffle the data, bool
        noise: how much noise to add, None or float; None means no noise
    Outputs:
        X: features, shape=[n_samples, 2]
        y: labels, shape=[n_samples]
    """
    n_samples_out = n_samples // 2
    n_samples_in = n_samples - n_samples_out

    # First class, with features (x, y):
    # use 'torch.linspace' to take n_samples_out evenly spaced values in [0, pi],
    # then 'torch.cos' gives feature 1 and 'torch.sin' gives feature 2
    outer_circ_x = torch.cos(torch.linspace(0, math.pi, n_samples_out))
    outer_circ_y = torch.sin(torch.linspace(0, math.pi, n_samples_out))

    # Second class: the same curve, shifted and flipped
    inner_circ_x = 1 - torch.cos(torch.linspace(0, math.pi, n_samples_in))
    inner_circ_y = 0.5 - torch.sin(torch.linspace(0, math.pi, n_samples_in))

    print('outer_circ_x.shape:', outer_circ_x.shape, 'outer_circ_y.shape:', outer_circ_y.shape)
    print('inner_circ_x.shape:', inner_circ_x.shape, 'inner_circ_y.shape:', inner_circ_y.shape)

    # Use 'torch.cat' to join feature 1 and feature 2 of both classes along dim 0,
    # then 'torch.stack' to stack the two features along dim 1
    X = torch.stack(
        [torch.cat([outer_circ_x, inner_circ_x]),
         torch.cat([outer_circ_y, inner_circ_y])],
        dim=1  # the dimension along which to stack
    )
    print('after concat shape:', torch.cat([outer_circ_x, inner_circ_x]).shape)
    print('X shape:', X.shape)

    # Labels: 0 for the first class, 1 for the second
    y = torch.cat(
        [torch.zeros([n_samples_out]), torch.ones([n_samples_in])]
    )
    print('y shape:', y.shape)

    # If shuffle is True, shuffle all samples
    if shuffle:
        # 'torch.randperm' returns a random permutation of 0 .. X.shape[0]-1,
        # used here as an index to shuffle the data
        idx = torch.randperm(X.shape[0])
        X = X[idx]
        y = y[idx]

    # If noise is not None, add noise to the features
    if noise is not None:
        # 'torch.normal' draws normally distributed noise that is added to the features
        X += torch.normal(mean=torch.zeros_like(X), std=noise * torch.ones_like(X))

    return X, y

n_samples = 1000
X, y = make_moons(n_samples=n_samples, shuffle=True, noise=0.15)

num_train = 640
num_dev = 160
num_test = 200

X_train, y_train = X[:num_train], y[:num_train]
X_dev, y_dev = X[num_train:num_train + num_dev], y[num_train:num_train + num_dev]
X_test, y_test = X[num_train + num_dev:], y[num_train + num_dev:]

y_train = y_train.reshape([-1, 1])
y_dev = y_dev.reshape([-1, 1])
y_test = y_test.reshape([-1, 1])

class Model_MLP_L2_V4(torch.nn.Module):
    def __init__(self, input_size, hidden_size, output_size):
        super(Model_MLP_L2_V4, self).__init__()
        # Define the linear layers with 'torch.nn.Linear';
        # in_features is the input dimension, out_features the output dimension
        self.fc1 = nn.Linear(input_size, hidden_size)
        # Initialize all weights and biases to 0.
        # 'nn.init.constant_' fills the parameter in place, so no reassignment is needed
        nn.init.constant_(self.fc1.weight, val=0.0)
        nn.init.constant_(self.fc1.bias, val=0.0)
        self.fc2 = nn.Linear(hidden_size, output_size)
        nn.init.constant_(self.fc2.weight, val=0.0)
        nn.init.constant_(self.fc2.bias, val=0.0)
        # Use 'torch.sigmoid' as the Logistic activation function
        self.act_fn = torch.sigmoid

    # Forward pass
    def forward(self, inputs):
        z1 = self.fc1(inputs)
        a1 = self.act_fn(z1)
        z2 = self.fc2(a1)
        a2 = self.act_fn(z2)
        return a2

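def print_weights(runner):
    print('The weights of the Layers:')
    # PyTorch modules have no sublayers()/full_name() (those are Paddle APIs);
    # iterate over the named child modules instead
    for name, item in runner.model.named_children():
        print(name)
        for param in item.parameters():
            print(param.detach().numpy())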

class RunnerV2_2(object):
    def __init__(self, model, optimizer, metric, loss_fn, **kwargs):
        self.model = model
        self.optimizer = optimizer
        self.loss_fn = loss_fn
        self.metric = metric

        # Evaluation scores recorded during training
        self.train_scores = []
        self.dev_scores = []

        # Losses recorded during training
        self.train_loss = []
        self.dev_loss = []

    def train(self, train_set, dev_set, **kwargs):
        # Number of training epochs; defaults to 0 if not given
        num_epochs = kwargs.get("num_epochs", 0)
        # Logging interval in epochs; defaults to 100 if not given
        log_epochs = kwargs.get("log_epochs", 100)
        # Path for saving the model; defaults to "best_model.pdparams" if not given
        save_path = kwargs.get("save_path", "best_model.pdparams")
        # Custom logging function; defaults to None if not given
        custom_print_log = kwargs.get("custom_print_log", None)

        # Best dev score seen so far
        best_score = 0

        # Train for num_epochs epochs
        for epoch in range(num_epochs):
            # Switch to training mode (evaluate() below switches to eval mode)
            self.model.train()
            X, y = train_set

            # Model predictions
            logits = self.model(X.to(torch.float32))
            # Cross-entropy loss
            trn_loss = self.loss_fn(logits, y)
            self.train_loss.append(trn_loss.item())
            # Evaluation metric
            trn_score = self.metric(logits, y).item()
            self.train_scores.append(trn_score)

            # Compute the parameter gradients automatically
            trn_loss.backward()
            if custom_print_log is not None:
                # Print the gradients of every layer
                custom_print_log(self)

            # Update the parameters
            self.optimizer.step()
            # Reset the gradients
            self.optimizer.zero_grad()

            dev_score, dev_loss = self.evaluate(dev_set)
            # Save the model if the current score is the best so far
            if dev_score > best_score:
                self.save_model(save_path)
                print(f"[Evaluate] best accuracy performance has been updated: {best_score:.5f} --> {dev_score:.5f}")
                best_score = dev_score

            if log_epochs and epoch % log_epochs == 0:
                print(f"[Train] epoch: {epoch}/{num_epochs}, loss: {trn_loss.item()}")

    @torch.no_grad()
    def evaluate(self, data_set):
        # Switch to evaluation mode
        self.model.eval()
        X, y = data_set
        # Model output
        logits = self.model(X)
        # Loss
        loss = self.loss_fn(logits, y).item()
        self.dev_loss.append(loss)
        # Evaluation metric
        score = self.metric(logits, y).item()
        self.dev_scores.append(score)
        return score, loss

    # At test time, 'torch.no_grad()' disables gradient computation and storage
    @torch.no_grad()
    def predict(self, X):
        # Switch to evaluation mode
        self.model.eval()
        return self.model(X)

    # Get the model parameters with 'model.state_dict()' and save them
    def save_model(self, saved_path):
        torch.save(self.model.state_dict(), saved_path)

    # Load the model parameters with 'model.load_state_dict'
    def load_model(self, model_path):
        state_dict = torch.load(model_path)
        self.model.load_state_dict(state_dict)

def accuracy(preds, labels):
    # preds.shape[1] == 1 means binary classification,
    # preds.shape[1] > 1 means multi-class classification
    if preds.shape[1] == 1:
        # Threshold the predicted probability at 0.5
        preds = (preds >= 0.5).to(torch.float32)
    else:
        preds = torch.argmax(preds, dim=1).int()
    return torch.mean((preds == labels).float())

def plot(runner, fig_name):
    plt.figure(figsize=(10, 5))
    epochs = [i for i in range(len(runner.train_scores))]

    # Left panel: training and dev loss
    plt.subplot(1, 2, 1)
    plt.plot(epochs, runner.train_loss, color='#e4007f', label="Train loss")
    plt.plot(epochs, runner.dev_loss, color='#f19ec2', linestyle='--', label="Dev loss")
    # Axis labels and legend
    plt.ylabel("loss", fontsize='large')
    plt.xlabel("epoch", fontsize='large')
    plt.legend(loc='upper right', fontsize='x-large')

    # Right panel: training and dev accuracy
    plt.subplot(1, 2, 2)
    plt.plot(epochs, runner.train_scores, color='#e4007f', label="Train accuracy")
    plt.plot(epochs, runner.dev_scores, color='#f19ec2', linestyle='--', label="Dev accuracy")
    # Axis labels and legend
    plt.ylabel("score", fontsize='large')
    plt.xlabel("epoch", fontsize='large')
    plt.legend(loc='lower right', fontsize='x-large')

    plt.savefig(fig_name)
    plt.show()

input_size = 2
hidden_size = 5
output_size = 1
model = Model_MLP_L2_V4(input_size=input_size, hidden_size=hidden_size, output_size=output_size)

# Loss function
loss_fn = F.binary_cross_entropy

# Optimizer
learning_rate = 0.02
optimizer = torch.optim.SGD(model.parameters(), lr=learning_rate)

# Evaluation metric
metric = accuracy

# Other settings
epoch = 2000
saved_path = 'best_model.pdparams'

# Instantiate RunnerV2_2 with the training configuration
runner = RunnerV2_2(model, optimizer, metric, loss_fn)
runner.train([X_train, y_train], [X_dev, y_dev], num_epochs=epoch, log_epochs=50, save_path=saved_path)
plot(runner, "fw-zero.pdf")

# Evaluate the model
runner.load_model(saved_path)
score, loss = runner.evaluate([X_test, y_test])
print("[Test] score/loss: {:.4f}/{:.4f}".format(score, loss))

# Generate 40000 evenly spaced grid points
x1, x2 = torch.meshgrid(torch.linspace(-math.pi, math.pi, 200), torch.linspace(-math.pi, math.pi, 200), indexing='ij')
x = torch.stack([torch.flatten(x1), torch.flatten(x2)], dim=1)

# Predict the class of every grid point
y = runner.predict(x)
y = (y >= 0.5).float().squeeze(dim=-1)

# Plot the class regions
plt.ylabel('x2')
plt.xlabel('x1')
plt.scatter(x[:, 0].tolist(), x[:, 1].tolist(), c=y.tolist(), cmap=plt.cm.Spectral)
plt.scatter(X_train[:, 0].tolist(), X_train[:, 1].tolist(), marker='*', c=torch.squeeze(y_train, dim=-1).tolist())
plt.scatter(X_dev[:, 0].tolist(), X_dev[:, 1].tolist(), marker='*', c=torch.squeeze(y_dev, dim=-1).tolist())
plt.scatter(X_test[:, 0].tolist(), X_test[:, 1].tolist(), marker='*', c=torch.squeeze(y_test, dim=-1).tolist())
plt.show()

The code above produces the following results:

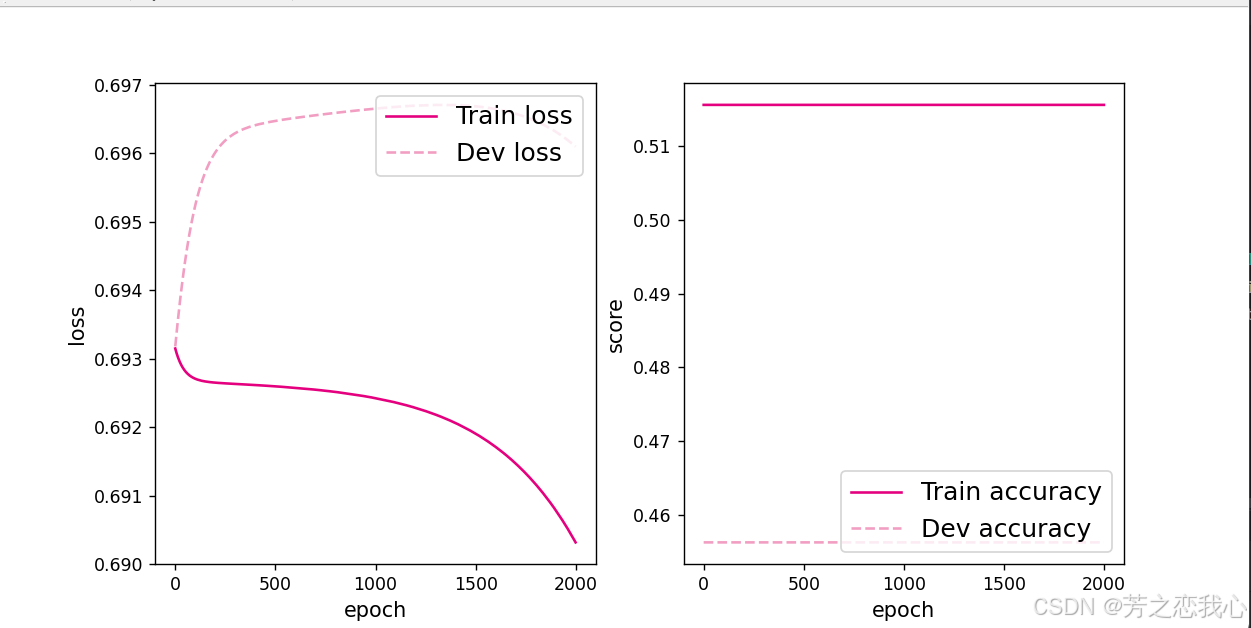

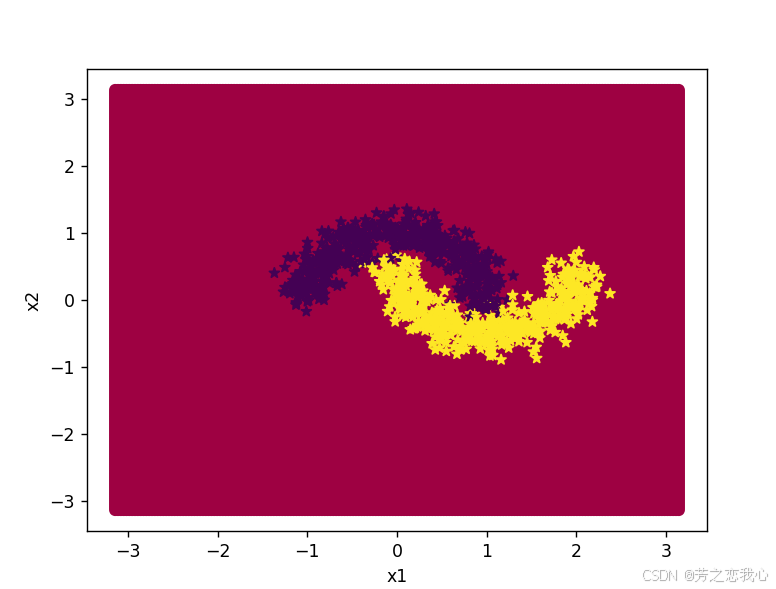

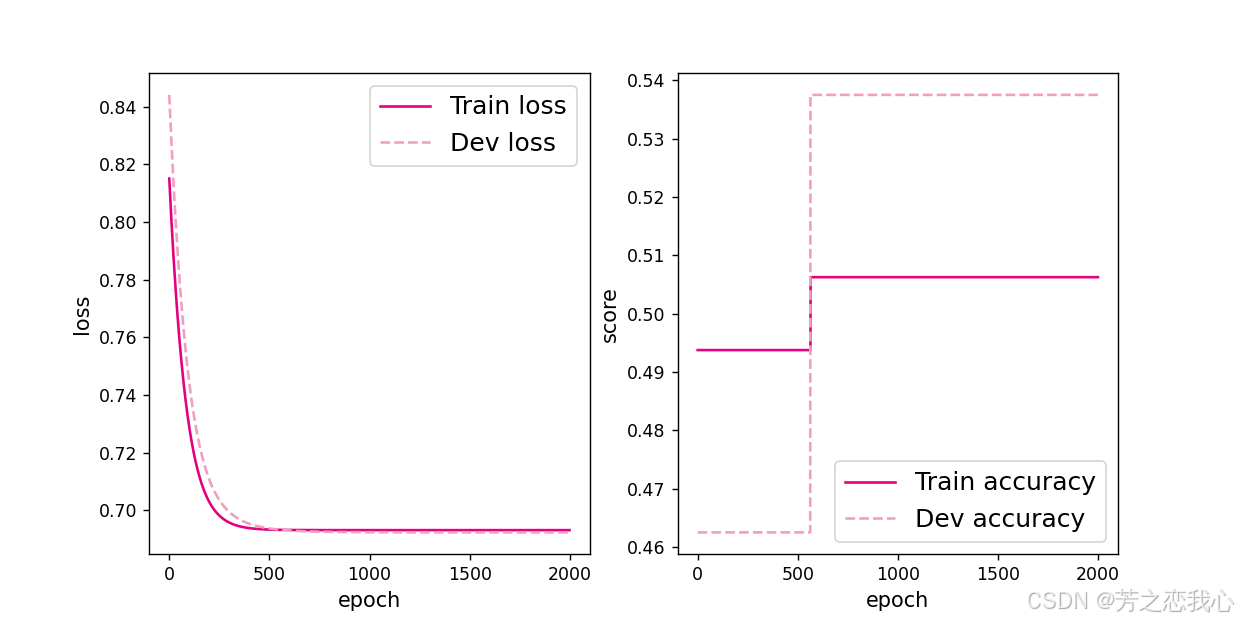

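The results show that the loss curve does not go down and that the plotted decision regions do not separate the classes, which means the model has learned nothing. To avoid the symmetric weight phenomenon, do not initialize the parameters of a neural network to 0.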
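The symmetry can also be checked directly on the gradients. The following is a minimal sketch, not the experiment above: it assumes a small deterministic toy dataset (64 points, a quarter of them positive) instead of the moons data, an illustrative learning rate of 0.5, and a zero-initialized 2-5-1 sigmoid network. Each row of `fc1.weight.grad` belongs to one hidden neuron, so identical rows mean identical updates.

```python
import torch
import torch.nn as nn
import torch.nn.functional as F

# Deterministic toy data (an assumption for illustration, not the moons set)
x1 = torch.linspace(-1.0, 1.0, 64)
X = torch.stack([x1, x1 ** 2], dim=1)
y = (x1 > 0.5).float().reshape(-1, 1)

# Two linear layers with every weight and bias set to 0
fc1, fc2 = nn.Linear(2, 5), nn.Linear(5, 1)
for layer in (fc1, fc2):
    nn.init.constant_(layer.weight, 0.0)
    nn.init.constant_(layer.bias, 0.0)

params = list(fc1.parameters()) + list(fc2.parameters())
optimizer = torch.optim.SGD(params, lr=0.5)  # illustrative learning rate

for step in range(3):
    optimizer.zero_grad()
    pred = torch.sigmoid(fc2(torch.sigmoid(fc1(X))))
    loss = F.binary_cross_entropy(pred, y)
    loss.backward()
    # One row per hidden neuron
    print(step, fc1.weight.grad)
    optimizer.step()

# Compare every row of the last gradient with its first row
grad = fc1.weight.grad
rows_identical = torch.allclose(grad, grad[0].expand_as(grad))
print('gradient rows identical:', rows_identical)
```

In the first step the gradient of `fc1` is zero because `fc2.weight` is still zero; in later steps the rows are non-zero but equal, so the five hidden neurons keep computing the same function.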

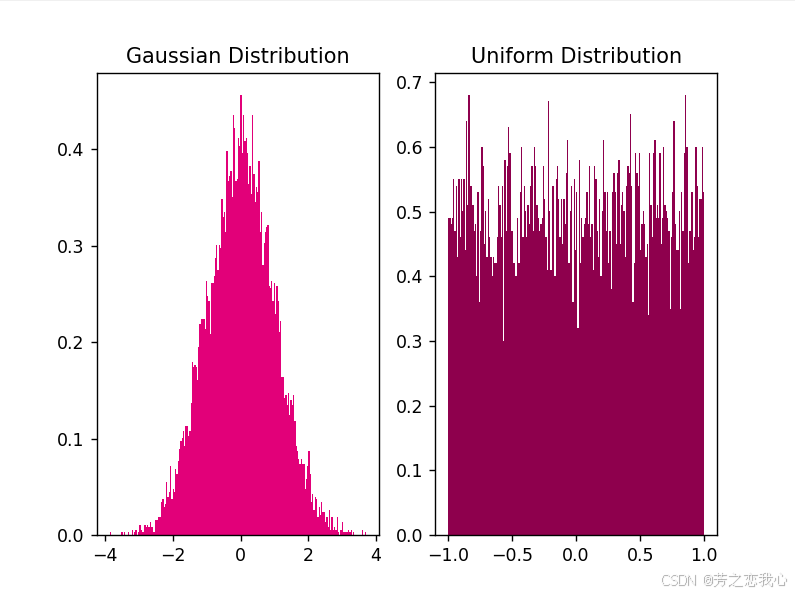

To avoid the symmetric weight phenomenon, initialize the parameters of the neural network from a Gaussian or a uniform distribution.
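A possible way to do this in PyTorch is sketched below with `nn.init.normal_` and `nn.init.uniform_`. The helper names `init_linear` and `rows_all_equal`, the standard deviation 0.01, and the range [-0.1, 0.1] are assumptions chosen for illustration; they are not values taken from the experiment.

```python
import torch
import torch.nn as nn


def init_linear(layer, method="normal"):
    """Hypothetical helper: randomly initialize one nn.Linear layer in place."""
    if method == "normal":
        # Gaussian initialization (std chosen for illustration)
        nn.init.normal_(layer.weight, mean=0.0, std=0.01)
    elif method == "uniform":
        # Uniform initialization (range chosen for illustration)
        nn.init.uniform_(layer.weight, a=-0.1, b=0.1)
    else:
        raise ValueError("method must be 'normal' or 'uniform'")
    nn.init.constant_(layer.bias, 0.0)
    return layer


def rows_all_equal(weight):
    """True if every row (hidden neuron) of the weight matrix is the same."""
    w = weight.detach()
    return bool(torch.allclose(w, w[0].expand_as(w)))


torch.manual_seed(0)
fc_normal = init_linear(nn.Linear(2, 5), "normal")
fc_uniform = init_linear(nn.Linear(2, 5), "uniform")
print(rows_all_equal(fc_normal.weight), rows_all_equal(fc_uniform.weight))
```

Because the rows differ from the start, the hidden neurons receive different gradients and can learn different features.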

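2. When building a neural network, adding more layers should in theory improve its fitting capacity. As the network gets deeper, however, parameter learning becomes harder and the vanishing gradient problem tends to appear. Because sigmoid-type functions saturate, their derivative is close to 0 in the saturated regions, so the error is attenuated every time it is passed back through a layer. When the network is very deep, the gradient keeps shrinking and may even vanish, which makes the whole network hard to train. This is the so-called vanishing gradient problem. Modify Model_MLP_L5 and train it with the sigmoid function; the code is as follows: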

import torch.nn as nn
import torch.nn.functional as F
import torch
import math
import matplotlib.pyplot as plt


def make_moons(n_samples=1000, shuffle=True, noise=None):
    """Generate two interleaving half-moon classes, optionally with noise.

    Inputs:
        n_samples: number of samples, int
        shuffle: whether to shuffle the data, bool
        noise: how much noise to add, None or float; None means no noise
    Outputs:
        X: features, shape=[n_samples, 2]
        y: labels, shape=[n_samples]
    """
    n_samples_out = n_samples // 2
    n_samples_in = n_samples - n_samples_out

    # First class, with features (x, y):
    # use 'torch.linspace' to take n_samples_out evenly spaced values in [0, pi],
    # then 'torch.cos' gives feature 1 and 'torch.sin' gives feature 2
    outer_circ_x = torch.cos(torch.linspace(0, math.pi, n_samples_out))
    outer_circ_y = torch.sin(torch.linspace(0, math.pi, n_samples_out))

    # Second class: the same curve, shifted and flipped
    inner_circ_x = 1 - torch.cos(torch.linspace(0, math.pi, n_samples_in))
    inner_circ_y = 0.5 - torch.sin(torch.linspace(0, math.pi, n_samples_in))

    print('outer_circ_x.shape:', outer_circ_x.shape, 'outer_circ_y.shape:', outer_circ_y.shape)
    print('inner_circ_x.shape:', inner_circ_x.shape, 'inner_circ_y.shape:', inner_circ_y.shape)

    # Use 'torch.cat' to join feature 1 and feature 2 of both classes along dim 0,
    # then 'torch.stack' to stack the two features along dim 1
    X = torch.stack(
        [torch.cat([outer_circ_x, inner_circ_x]),
         torch.cat([outer_circ_y, inner_circ_y])],
        dim=1  # the dimension along which to stack
    )
    print('after concat shape:', torch.cat([outer_circ_x, inner_circ_x]).shape)
    print('X shape:', X.shape)

    # Labels: 0 for the first class, 1 for the second
    y = torch.cat(
        [torch.zeros([n_samples_out]), torch.ones([n_samples_in])]
    )
    print('y shape:', y.shape)

    # If shuffle is True, shuffle all samples
    if shuffle:
        # 'torch.randperm' returns a random permutation of 0 .. X.shape[0]-1,
        # used here as an index to shuffle the data
        idx = torch.randperm(X.shape[0])
        X = X[idx]
        y = y[idx]

    # If noise is not None, add noise to the features
    if noise is not None:
        # 'torch.normal' draws normally distributed noise that is added to the features
        X += torch.normal(mean=torch.zeros_like(X), std=noise * torch.ones_like(X))

    return X, y

n_samples = 1000
X, y = make_moons(n_samples=n_samples, shuffle=True, noise=0.15)

num_train = 640
num_dev = 160
num_test = 200

X_train, y_train = X[:num_train], y[:num_train]
X_dev, y_dev = X[num_train:num_train + num_dev], y[num_train:num_train + num_dev]
X_test, y_test = X[num_train + num_dev:], y[num_train + num_dev:]

y_train = y_train.reshape([-1, 1])
y_dev = y_dev.reshape([-1, 1])
y_test = y_test.reshape([-1, 1])

class Model_MLP_L5(torch.nn.Module):
    def __init__(self, input_size, output_size, act='relu'):
        super(Model_MLP_L5, self).__init__()
        self.fc1 = torch.nn.Linear(input_size, 3)
        self.fc2 = torch.nn.Linear(3, 3)
        self.fc3 = torch.nn.Linear(3, 3)
        self.fc4 = torch.nn.Linear(3, 3)
        self.fc5 = torch.nn.Linear(3, output_size)

        # Weights drawn from N(0, 0.01^2), biases set to 1.0.
        # Every layer is initialized in place: wrapping one tensor in several
        # nn.Parameter objects would make fc2, fc3 and fc4 share the same storage
        for layer in [self.fc1, self.fc2, self.fc3, self.fc4, self.fc5]:
            nn.init.normal_(layer.weight, mean=0.0, std=0.01)
            nn.init.constant_(layer.bias, val=1.0)

        # Activation function used by the network
        if act == 'sigmoid':
            self.act = torch.sigmoid
        elif act == 'relu':
            self.act = F.relu
        elif act == 'lrelu':
            self.act = F.leaky_relu
        else:
            raise ValueError("Please enter sigmoid relu or lrelu!")

    def forward(self, inputs):
        outputs = self.fc1(inputs.to(torch.float32))
        outputs = self.act(outputs)
        outputs = self.fc2(outputs)
        outputs = self.act(outputs)
        outputs = self.fc3(outputs)
        outputs = self.act(outputs)
        outputs = self.fc4(outputs)
        outputs = self.act(outputs)
        outputs = self.fc5(outputs)
        # The output layer always uses sigmoid to produce a probability
        outputs = torch.sigmoid(outputs)
        return outputs

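def print_weights(runner):
    print('The weights of the Layers:')
    # PyTorch modules have no sublayers()/full_name() (those are Paddle APIs);
    # iterate over the named child modules instead
    for name, item in runner.model.named_children():
        print(name)
        for param in item.parameters():
            print(param.detach().numpy())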

class RunnerV2_2(object):
    def __init__(self, model, optimizer, metric, loss_fn, **kwargs):
        self.model = model
        self.optimizer = optimizer
        self.loss_fn = loss_fn
        self.metric = metric

        # Evaluation scores recorded during training
        self.train_scores = []
        self.dev_scores = []

        # Losses recorded during training
        self.train_loss = []
        self.dev_loss = []

    def train(self, train_set, dev_set, **kwargs):
        # Number of training epochs; defaults to 0 if not given
        num_epochs = kwargs.get("num_epochs", 0)
        # Logging interval in epochs; defaults to 100 if not given
        log_epochs = kwargs.get("log_epochs", 100)
        # Path for saving the model; defaults to "best_model.pdparams" if not given
        save_path = kwargs.get("save_path", "best_model.pdparams")
        # Custom logging function; defaults to None if not given
        custom_print_log = kwargs.get("custom_print_log", None)

        # Best dev score seen so far
        best_score = 0

        # Train for num_epochs epochs
        for epoch in range(num_epochs):
            # Switch to training mode (evaluate() below switches to eval mode)
            self.model.train()
            X, y = train_set

            # Model predictions
            logits = self.model(X.to(torch.float32))
            # Cross-entropy loss
            trn_loss = self.loss_fn(logits, y)
            self.train_loss.append(trn_loss.item())
            # Evaluation metric
            trn_score = self.metric(logits, y).item()
            self.train_scores.append(trn_score)

            # Compute the parameter gradients automatically
            trn_loss.backward()
            if custom_print_log is not None:
                # Print the gradients of every layer
                custom_print_log(self)

            # Update the parameters
            self.optimizer.step()
            # Reset the gradients
            self.optimizer.zero_grad()

            dev_score, dev_loss = self.evaluate(dev_set)
            # Save the model if the current score is the best so far
            if dev_score > best_score:
                self.save_model(save_path)
                print(f"[Evaluate] best accuracy performance has been updated: {best_score:.5f} --> {dev_score:.5f}")
                best_score = dev_score

            if log_epochs and epoch % log_epochs == 0:
                print(f"[Train] epoch: {epoch}/{num_epochs}, loss: {trn_loss.item()}")

    @torch.no_grad()
    def evaluate(self, data_set):
        # Switch to evaluation mode
        self.model.eval()
        X, y = data_set
        # Model output
        logits = self.model(X)
        # Loss
        loss = self.loss_fn(logits, y).item()
        self.dev_loss.append(loss)
        # Evaluation metric
        score = self.metric(logits, y).item()
        self.dev_scores.append(score)
        return score, loss

    # At test time, 'torch.no_grad()' disables gradient computation and storage
    @torch.no_grad()
    def predict(self, X):
        # Switch to evaluation mode
        self.model.eval()
        return self.model(X)

    # Get the model parameters with 'model.state_dict()' and save them
    def save_model(self, saved_path):
        torch.save(self.model.state_dict(), saved_path)

    # Load the model parameters with 'model.load_state_dict'
    def load_model(self, model_path):
        state_dict = torch.load(model_path)
        self.model.load_state_dict(state_dict)

def accuracy(preds, labels):
    # preds.shape[1] == 1 means binary classification,
    # preds.shape[1] > 1 means multi-class classification
    if preds.shape[1] == 1:
        # Threshold the predicted probability at 0.5
        preds = (preds >= 0.5).to(torch.float32)
    else:
        preds = torch.argmax(preds, dim=1).int()
    return torch.mean((preds == labels).float())

def plot(runner, fig_name):
    plt.figure(figsize=(10, 5))
    epochs = [i for i in range(len(runner.train_scores))]

    # Left panel: training and dev loss
    plt.subplot(1, 2, 1)
    plt.plot(epochs, runner.train_loss, color='#e4007f', label="Train loss")
    plt.plot(epochs, runner.dev_loss, color='#f19ec2', linestyle='--', label="Dev loss")
    # Axis labels and legend
    plt.ylabel("loss", fontsize='large')
    plt.xlabel("epoch", fontsize='large')
    plt.legend(loc='upper right', fontsize='x-large')

    # Right panel: training and dev accuracy
    plt.subplot(1, 2, 2)
    plt.plot(epochs, runner.train_scores, color='#e4007f', label="Train accuracy")
    plt.plot(epochs, runner.dev_scores, color='#f19ec2', linestyle='--', label="Dev accuracy")
    # Axis labels and legend
    plt.ylabel("score", fontsize='large')
    plt.xlabel("epoch", fontsize='large')
    plt.legend(loc='lower right', fontsize='x-large')

    plt.savefig(fig_name)
    plt.show()

def print_grads(runner):
    # Print the L2 norm of the weight gradient of every Linear layer
    print('The gradient of the Layers:')
    for item in runner.model.modules():
        if isinstance(item, nn.Linear):
            # Take the gradient of the weights and compute its norm
            grad_norm = item.weight.grad.norm(2).item()
            print(item.__class__.__name__, grad_norm)

# Learning rate
lr = 0.01

# Build the network with the sigmoid activation function
model = Model_MLP_L5(input_size=2, output_size=1, act='sigmoid')

# Optimizer
optimizer = torch.optim.SGD(model.parameters(), lr=lr)

# Loss function: binary cross-entropy
loss_fn = F.binary_cross_entropy

# Evaluation metric
metric = accuracy

# Function used to print the gradients
custom_print_log = print_grads

# Number of epochs
epoch = 2000
saved_path = 'best_model.pdparams'

# Instantiate RunnerV2_2 with the training configuration
runner = RunnerV2_2(model, optimizer, metric, loss_fn)
runner.train([X_train, y_train], [X_dev, y_dev], num_epochs=epoch, log_epochs=50, save_path=saved_path)
plot(runner, "fw-zero.pdf")

# Evaluate the model
runner.load_model(saved_path)
score, loss = runner.evaluate([X_test, y_test])
print("[Test] score/loss: {:.4f}/{:.4f}".format(score, loss))

# Run one more epoch with the gradient-printing function enabled
runner.train([X_train, y_train], [X_dev, y_dev], num_epochs=1, log_epochs=None, save_path=saved_path, custom_print_log=custom_print_log)

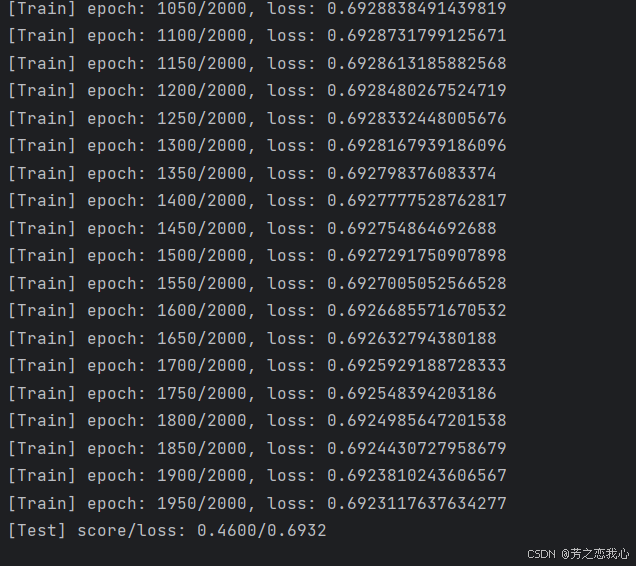

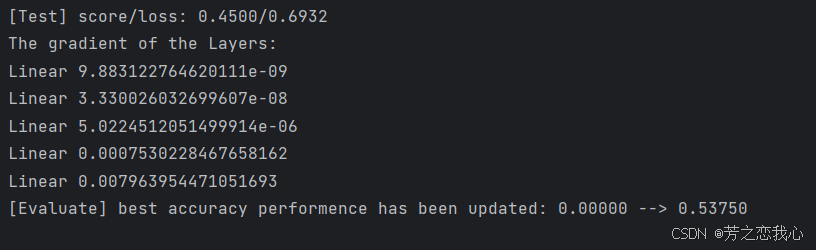

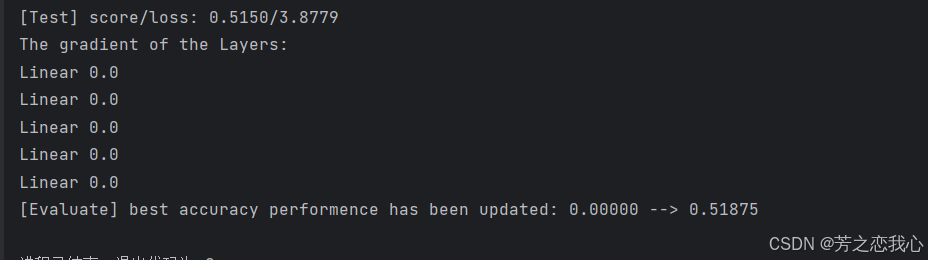

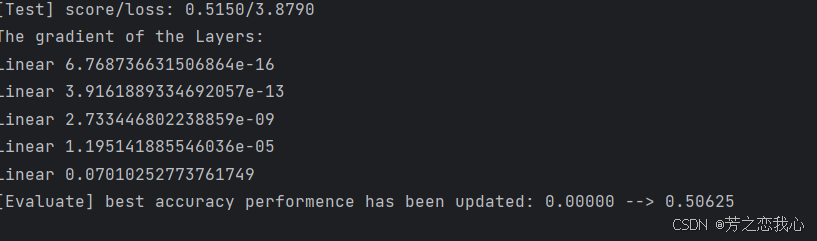

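Results:

The values printed for the Linear layers show the gradient falling from 9.88 to 0.007. The gradient shrinks step by step, so the vanishing gradient phenomenon has appeared.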
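The size of this decay can be motivated with the derivative of the logistic function, σ'(x) = σ(x)(1 − σ(x)), which never exceeds 0.25 and is much smaller in the saturated regions. The sketch below checks this with autograd; `sigmoid_grad` is a hypothetical helper name, and the factor 0.25 ** 4 only bounds the contribution of four sigmoid activations, ignoring the weights.

```python
import torch


def sigmoid_grad(value):
    """Derivative of the logistic sigmoid at `value`, computed with autograd."""
    x = torch.tensor(float(value), requires_grad=True)
    torch.sigmoid(x).backward()
    return x.grad.item()


# The derivative peaks at x = 0 and decays quickly as |x| grows
for v in (0.0, 2.0, 5.0):
    print(v, sigmoid_grad(v))

# Upper bound on the factor contributed by the activation derivatives alone
# when the error is passed back through 4 sigmoid layers
max_factor = 0.25 ** 4
print(max_factor)
```

Even in the best case, four sigmoid activations scale the error by less than 0.4%, which is consistent with the first layers receiving much smaller gradients than the last one.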

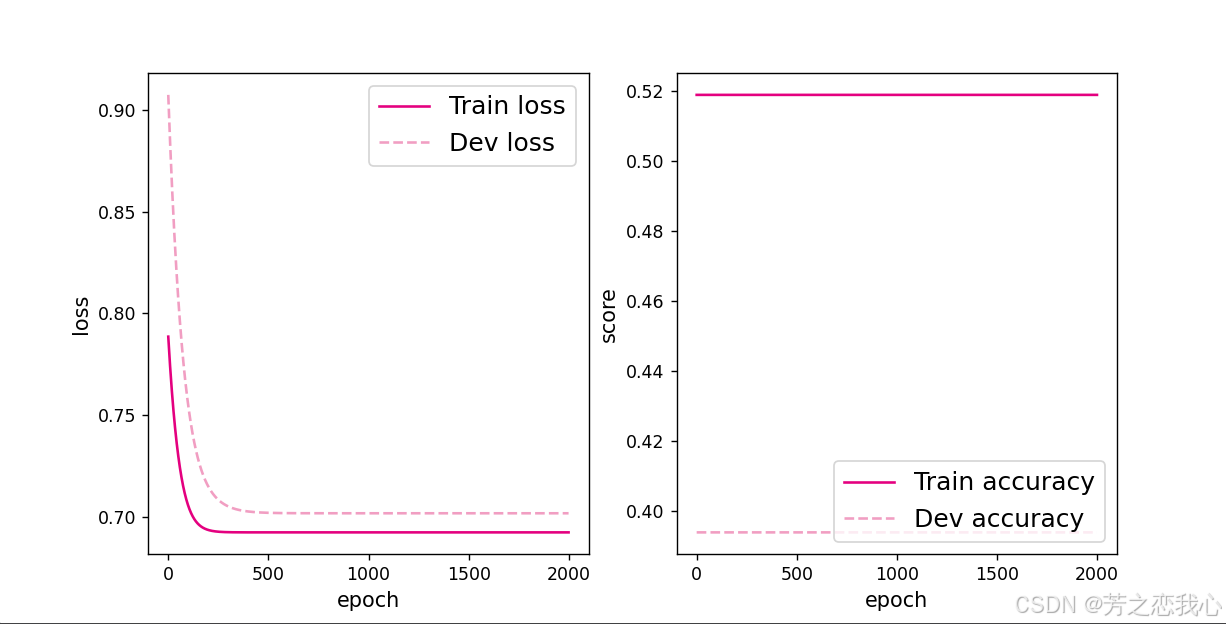

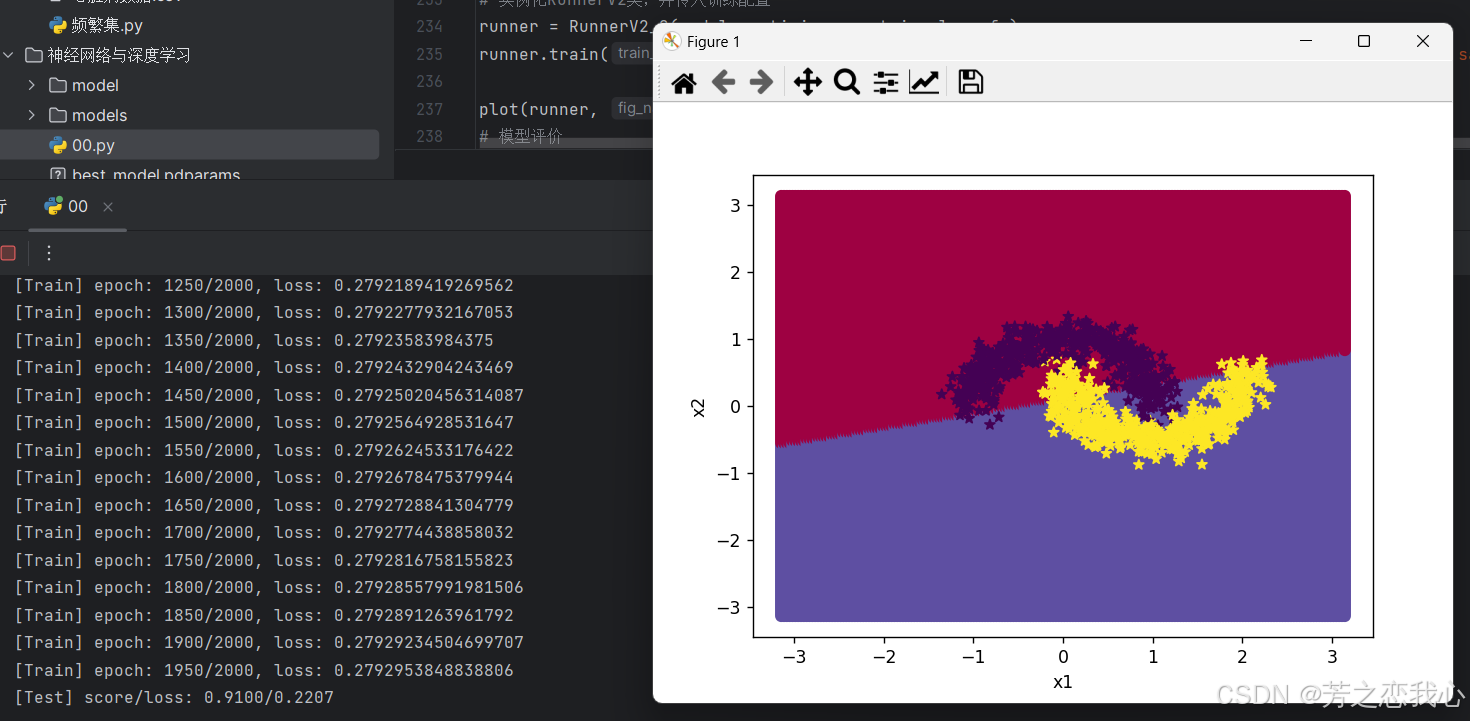

To train with the ReLU function instead, change model = Model_MLP_L5(input_size=2, output_size=1, act='sigmoid') to model = Model_MLP_L5(input_size=2, output_size=1, act='relu') and run the code again. Results:

The results show that the vanishing gradient problem is somewhat alleviated.

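3. The ReLU activation function can alleviate the vanishing gradient problem to some extent, but in some cases it is prone to the dying ReLU problem, which makes the network hard to train. The cause is that the output of ReLU is always 0 when x<0. If, after an unfortunate parameter update during training, a ReLU neuron is not activated on any of the training data (its output is always 0), then the gradient of that neuron's own parameters is always 0, and the neuron can never be activated again for the rest of training. A simple and effective remedy is to replace the activation function with a ReLU variant such as Leaky ReLU or ELU.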

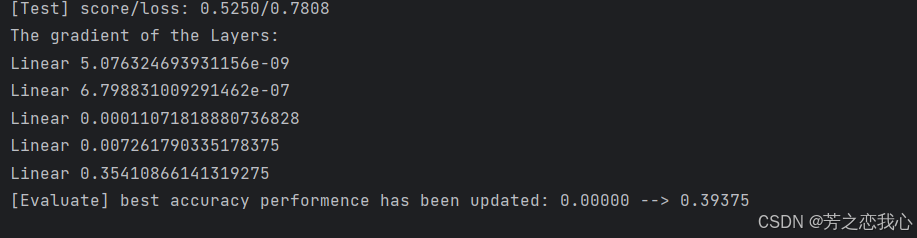

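After setting the model's parameter to -0.8 and running the code, the values printed for the Linear layers are 0, so the dying ReLU phenomenon has appeared.

Next, replace the activation function with Leaky ReLU, train the model, and observe the gradients.

The results show that the gradients are updated normally, so the dying ReLU problem is alleviated.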
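The difference between the two activations can be reproduced in isolation on a single layer. This is a minimal sketch under assumptions: the -0.8 above is taken to be the bias value, the weights are small (std 0.01), the inputs lie in [-1, 1), and `weight_grad_norm` is a hypothetical helper, not part of the experiment code.

```python
import torch
import torch.nn as nn
import torch.nn.functional as F


def weight_grad_norm(act, bias_value=-0.8):
    """Gradient norm of one Linear layer's weights when followed by `act`."""
    torch.manual_seed(0)
    layer = nn.Linear(2, 3)
    nn.init.normal_(layer.weight, mean=0.0, std=0.01)
    # Negative bias: with small weights, every pre-activation is below 0
    nn.init.constant_(layer.bias, bias_value)
    X = torch.rand(16, 2) * 2 - 1  # inputs in [-1, 1)
    out = act(layer(X)).sum()
    out.backward()
    return layer.weight.grad.norm(2).item()


relu_norm = weight_grad_norm(F.relu)
lrelu_norm = weight_grad_norm(F.leaky_relu)
print('ReLU:', relu_norm, 'Leaky ReLU:', lrelu_norm)
```

With ReLU every neuron outputs 0 and passes back a zero gradient, so the weights cannot recover; Leaky ReLU keeps a small slope for negative inputs (0.01 by default in `F.leaky_relu`), so a non-zero gradient still reaches the weights.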

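4. Experiment summary

In the experiments, the vanishing gradient problem became especially pronounced when the network had many layers. With the Sigmoid activation function in particular, the derivative approaches zero in the saturated regions, so the gradient keeps shrinking during backpropagation and the weights close to the input layer are barely updated. To address the vanishing gradient problem, the experiments tried replacing the activation function with ReLU and Leaky ReLU. ReLU does not saturate for positive inputs (its derivative is always 1 when x > 0), so it passes gradients through deep networks effectively and alleviates the vanishing gradient problem. It also introduces the "dying ReLU" problem: once a ReLU neuron becomes inactive after some update (its output is always zero), its gradient is always zero as well, and the neuron is useless for the rest of training. Using Leaky ReLU as the activation function addresses this. Leaky ReLU keeps a small slope when x < 0, so a neuron still produces a small output for negative inputs and does not "die" completely even after an unfortunate weight update.

In the experiment for problem 1, I also raised the learning rate (to 5) and found that the model could learn something.

My impression is that a higher learning rate may mitigate the symmetric weight phenomenon. (I am not sure about this and could not find any references.)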
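One way to probe this guess is to train the same zero-initialized network with a small and a large learning rate and check whether the hidden neurons ever become different. The sketch below is an assumption-laden toy setup rather than the original experiment: it uses a deterministic 64-point dataset, 20 full-batch SGD steps, float64 arithmetic to keep round-off negligible, and a hypothetical helper `train_zero_init`.

```python
import torch
import torch.nn as nn
import torch.nn.functional as F


def train_zero_init(lr, steps=20):
    """Train a zero-initialized 2-5-1 sigmoid MLP.

    Returns (first loss, last loss, fc1 weights).
    """
    # Deterministic toy data (an assumption, not the moons set)
    x1 = torch.linspace(-1.0, 1.0, 64, dtype=torch.float64)
    X = torch.stack([x1, x1 ** 2], dim=1)
    y = (x1 > 0.5).to(torch.float64).reshape(-1, 1)

    fc1 = nn.Linear(2, 5).double()
    fc2 = nn.Linear(5, 1).double()
    for layer in (fc1, fc2):
        nn.init.constant_(layer.weight, 0.0)
        nn.init.constant_(layer.bias, 0.0)

    params = list(fc1.parameters()) + list(fc2.parameters())
    opt = torch.optim.SGD(params, lr=lr)
    losses = []
    for _ in range(steps):
        opt.zero_grad()
        pred = torch.sigmoid(fc2(torch.sigmoid(fc1(X))))
        loss = F.binary_cross_entropy(pred, y)
        loss.backward()
        opt.step()
        losses.append(loss.item())
    return losses[0], losses[-1], fc1.weight.detach()


for lr in (0.02, 5.0):
    first, last, w1 = train_zero_init(lr)
    # Identical rows mean the hidden neurons are still copies of each other
    symmetric = torch.allclose(w1, w1[0].expand_as(w1))
    print(f"lr={lr}: loss {first:.4f} -> {last:.4f}, rows identical: {symmetric}")
```

If the rows are identical for both learning rates, the larger learning rate is speeding up learning within the symmetric solution (all hidden neurons acting as one) rather than removing the symmetry. This matches the argument that identical neurons receive identical gradients, which does not depend on the step size.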
