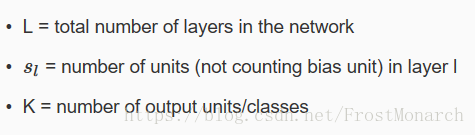

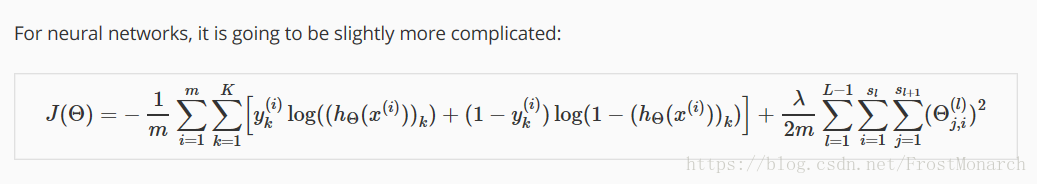

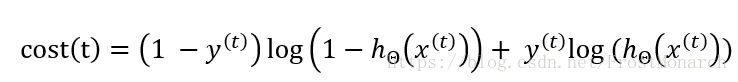

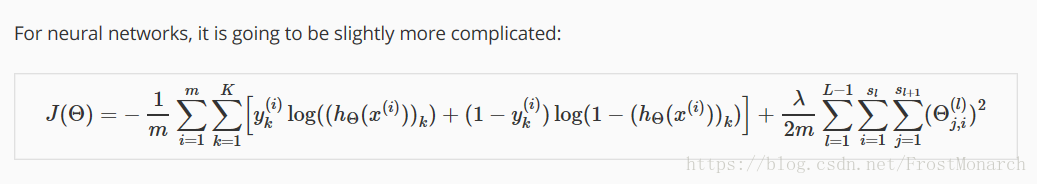

Cost function for the neural network model

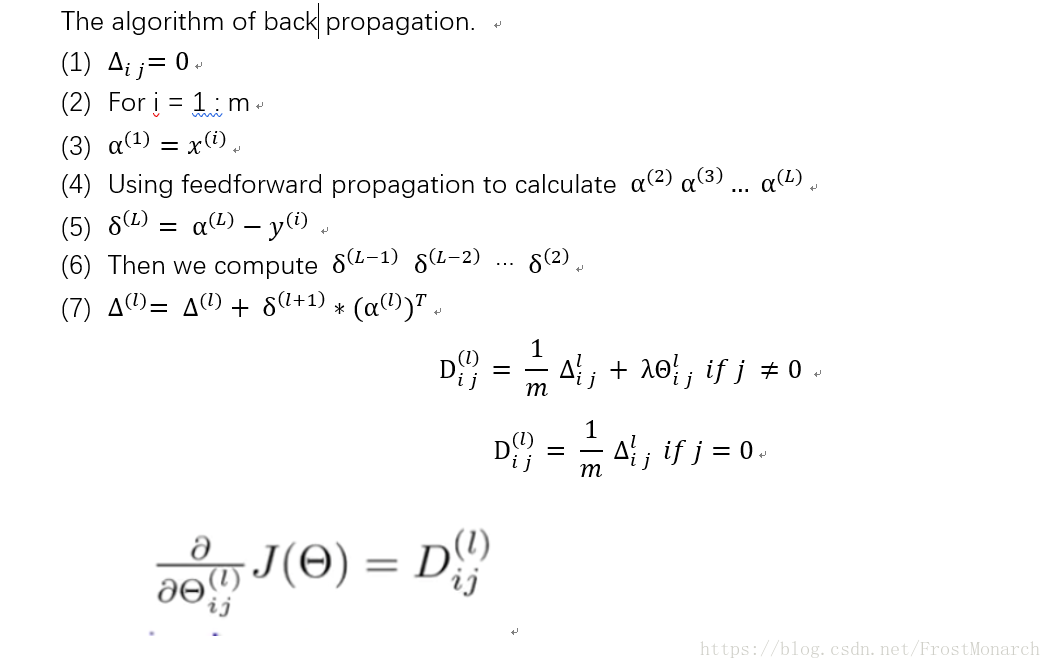

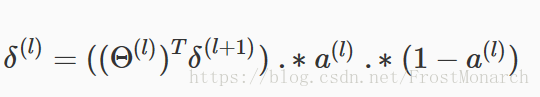

Back propagation

More intuition about back prop

We assume that we have just one unit in the output layer, and we define cost(i) as follows:

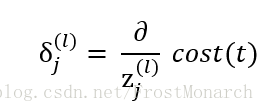

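The delta term (error term) is the partial derivative of cost(i) with respect to the weighted input z of a unit:

$$\delta_j^{(l)} = \frac{\partial}{\partial z_j^{(l)}} \mathrm{cost}(i)$$

Delta measures the slope of the cost with respect to that unit's input. The steeper the slope, the more the cost changes when that unit's input changes, so the learning algorithm works to shrink it (in fact, this is what a learning algorithm should do). That is why we still need the delta term even when we are facing multi-class classification!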

Vector -> matrix and matrix -> vector in Octave

Let's assume that we have the matrices theta1 (10*11), theta2 (10*11) and theta3 (1*11).

Then we can unroll them into a single vector:

matdev = [theta1(:) ; theta2(:); theta3(:)];

This vector can be turned back into the matrices:

theta1 = reshape(matdev(1:110),10,11);

theta2 = reshape(matdev(111:220),10,11);

theta3 = reshape(matdev(221:231),1,11);
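As a quick sanity check, the unroll and reshape steps above can be verified with a round trip. This is only a sketch: the random contents of the matrices and the helper names n1, n2, r1, r2, r3 and roundtrip_ok are made up for illustration, and the index ranges are computed with numel instead of being typed by hand.

```octave
% Example matrices with the sizes used in the text (contents are arbitrary)
theta1 = rand(10, 11);
theta2 = rand(10, 11);
theta3 = rand(1, 11);

% Unroll all three matrices into one long column vector (231 x 1)
matdev = [theta1(:); theta2(:); theta3(:)];

% Recover the matrices; numel gives the number of elements in each one
n1 = numel(theta1);   % 110
n2 = numel(theta2);   % 110
r1 = reshape(matdev(1:n1), size(theta1));
r2 = reshape(matdev(n1+1:n1+n2), size(theta2));
r3 = reshape(matdev(n1+n2+1:end), size(theta3));

% True when every recovered matrix equals the original
roundtrip_ok = isequal(r1, theta1) && isequal(r2, theta2) && isequal(r3, theta3);
```

Computing the offsets from numel avoids off-by-one mistakes when the layer sizes change.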

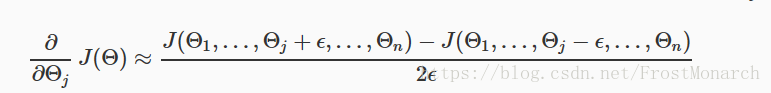

Gradient checking

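To check whether our partial derivatives are correct, we can use the following two-sided difference formula:

$$\frac{\partial}{\partial \theta} J(\theta) \approx \frac{J(\theta + \epsilon) - J(\theta - \epsilon)}{2\epsilon}$$

A small value for epsilon is about 10^(-4). We then compare this numerical estimate with the D term, which is the same partial derivative computed by back propagation.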
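A minimal sketch of gradient checking, assuming a toy cost function whose gradient is known exactly. The cost J(theta) = sum(theta.^2), the values in theta, and the names numgrad, analytic and relerr are all made up for illustration; in a real network the analytic value would come from back propagation instead.

```octave
% Toy cost with a known gradient: dJ/dtheta = 2 * theta
J = @(t) sum(t .^ 2);
theta = [1; -2; 0.5];
epsilon = 1e-4;

% Perturb one parameter at a time and apply the two-sided difference
numgrad = zeros(size(theta));
for p = 1:numel(theta)
  perturb = zeros(size(theta));
  perturb(p) = epsilon;
  numgrad(p) = (J(theta + perturb) - J(theta - perturb)) / (2 * epsilon);
end

% Compare the numerical estimate with the analytic gradient
analytic = 2 * theta;
relerr = norm(numgrad - analytic) / norm(numgrad + analytic);
```

If the two gradients agree, relerr should be very small. Gradient checking is slow because it evaluates the cost twice per parameter, so it is usually switched off once the back propagation code has been verified.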

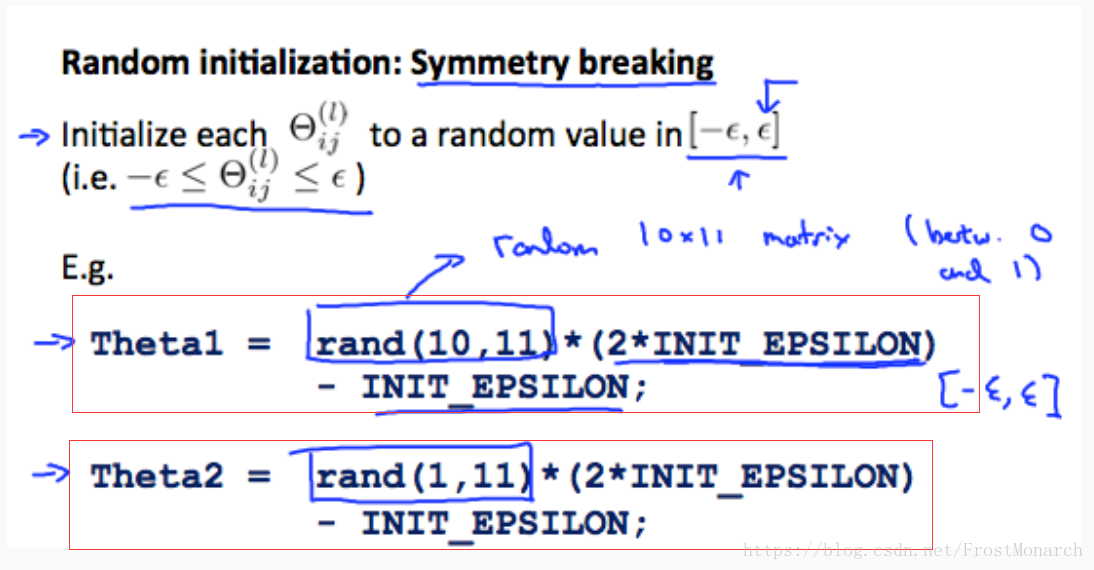

Initial theta

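We cannot use the zeros function to initialize our theta, because every hidden unit would then compute the same value and receive the same update. Instead, we initialize each element to a small random value in the range [-epsilon, +epsilon], for example with the rand function.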
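One way to write this random initialization in Octave is sketched below. The name INIT_EPSILON and the matrix sizes are assumptions taken from the examples in this post, and 1e-4 is the value mentioned in the review section, not a required constant.

```octave
% Small range for the initial weights (value taken from the text)
INIT_EPSILON = 1e-4;

% rand returns values in (0, 1); scale and shift them into
% the range [-INIT_EPSILON, +INIT_EPSILON]
theta1 = rand(10, 11) * (2 * INIT_EPSILON) - INIT_EPSILON;
theta2 = rand(10, 11) * (2 * INIT_EPSILON) - INIT_EPSILON;
theta3 = rand(1, 11) * (2 * INIT_EPSILON) - INIT_EPSILON;
```

Because every element gets a different random value, the hidden units start out different from each other and can learn different features.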

Review

Since the back prop algorithm is a little complicated, I would like to write a summary to help us understand the whole procedure.

(1) We should decide on the structure of the neural network first. We need to know how many input units and output units we have, and then we usually use 1 hidden layer. For the number of hidden units, usually the more the better, although more units also cost more computation.

(2) We use the rand function to initialize the theta matrices, making sure that every element in the theta matrices is in the range [-epsilon, +epsilon]. Epsilon is a small number such as 10^-4.

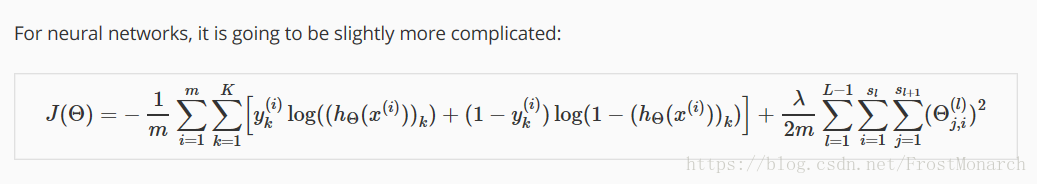

(3) We need to compute the cost function.

(4) Then we use a for loop over the training examples {x(i), y(i)} (i = 1:m), running forward propagation and back propagation on each example.

Pay attention to the capital delta term for layer l: we should discard the first column.

(5) After computing the gradient, we should use the gradient checking function to compare the numerical and analytical results.
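The steps above can be sketched for a tiny network as follows. Everything specific here is an assumption made for illustration: the XOR-style data in X and y, the layer sizes (2 inputs, 3 hidden units, 1 output), the initialization range of 0.1, the variable names, and the choice of an unregularized cross-entropy cost with sigmoid units. In this sketch the bias entry of the hidden-layer delta is dropped before the accumulation step.

```octave
% Hypothetical training set: 4 examples, 2 features each
X = [0 0; 0 1; 1 0; 1 1];
y = [0; 1; 1; 0];
m = size(X, 1);
sigmoid = @(z) 1 ./ (1 + exp(-z));

% Step (2): random initialization (rows = units, columns = inputs + bias)
Theta1 = rand(3, 3) * 0.2 - 0.1;
Theta2 = rand(1, 4) * 0.2 - 0.1;

% Step (3): unregularized cross-entropy cost as a function of the weights
h = @(T1, T2) sigmoid([ones(m, 1), sigmoid([ones(m, 1), X] * T1')] * T2');
J = @(T1, T2) -mean(y .* log(h(T1, T2)) + (1 - y) .* log(1 - h(T1, T2)));
cost = J(Theta1, Theta2);

% Step (4): loop over the examples, accumulating the capital delta terms
Delta1 = zeros(size(Theta1));
Delta2 = zeros(size(Theta2));
for i = 1:m
  % Forward propagation
  a1 = [1; X(i, :)'];        % input plus bias unit
  z2 = Theta1 * a1;
  a2 = [1; sigmoid(z2)];     % hidden activations plus bias unit
  z3 = Theta2 * a2;
  a3 = sigmoid(z3);

  % Back propagation
  delta3 = a3 - y(i);
  delta2 = (Theta2' * delta3) .* (a2 .* (1 - a2));
  delta2 = delta2(2:end);    % drop the entry that belongs to the bias unit

  Delta2 = Delta2 + delta3 * a2';
  Delta1 = Delta1 + delta2 * a1';
end

% Average over the examples to get the partial derivatives (the D terms)
Theta1_grad = Delta1 / m;
Theta2_grad = Delta2 / m;
```

Step (5) would then compare entries of Theta1_grad and Theta2_grad against two-sided differences of J before handing the gradients to an optimizer.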

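This article takes a close look at the back propagation algorithm in neural networks, including the key steps of defining the cost function, gradient checking and initialization, and gives concrete examples of matrix operations in Octave.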
