一、任务背景

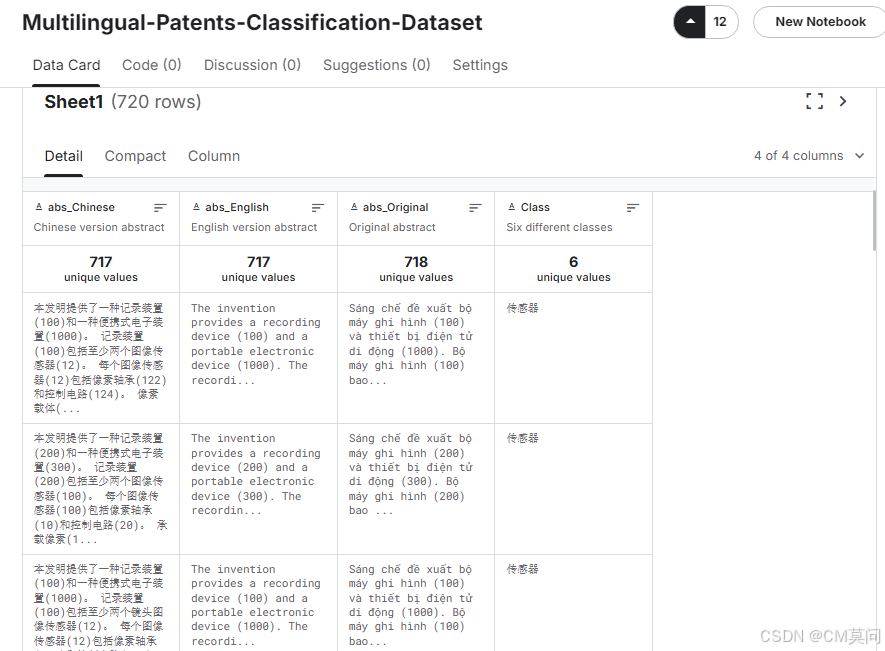

本文的任务是构建一个简易的小型跨语言文本数据库,并实现给定检索Query,算法从数据库中返回相关多语言检索结果的功能。这里,我们使用从kaggle上获取的小型专利摘要数据集《Multilingual-Patents-Classification-Dataset》。为了便于展示,本文仅在python环境中实现数据库的构建,不依赖MySQL等外部数据库工具,检索的过程也通过Python来实现。

这个项目的重点是训练一个跨语言的文本表示模型,模型应当生成有效的文本表示,从而使得我们能够在对Query进行向量化之后检索得到高度相关的信息。建模方案上,我们使用HuggingFace提供的多语言Bert模型作为baseline,通过搭建对比学习框架,构建对比损失,并结合我们的目标数据集对模型进行进一步的微调来实现任务目标。

二、python建模

1、数据读取

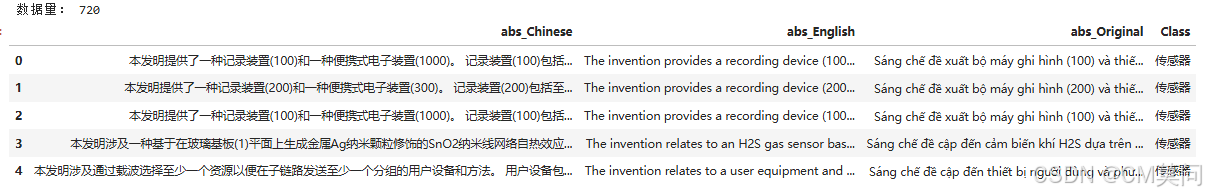

首先,读取excel数据。从下图中可以看到总体的数据量是720。为了构建跨语言训练数据集,我们需要将每一条专利信息转化为数据对,例如“中文-英文数据对”、“中文-小语种数据对”、“英文-小语种数据对”,那么就是720*3=2160条训练正例数据。然后,我们再随机两两配对构建2160条负例数据,就可以得到我们最终的模型训练数据集了。

df = pd.read_excel('/kaggle/input/multilingualpatentsclassification-dataset/Multilingual_classification_dataset.xlsx')

print('数据量:', len(df))

df.head()

2、数据集构建

按照1中所述,我们先构建正例样本对和负例样本对。在负例的构建过程中,我们选择随机打乱原有的数据对应顺序来实现错配。如果读者有精力的话也可以采用更加严谨的方式来构建负例数据集,毕竟目前的构建方式有可能会恰好匹配到对应的正例,相当于引入噪声了,当然从概率的角度来看这样的数据量应当是极少的,尤其是随着数据规模的不断增大。

import random

abs_cn, abs_en, abs_org = df['abs_Chinese'].tolist(), df['abs_English'].tolist(), df['abs_Original'].tolist()

# random.shuffle是原地修改数据,因此这里用.sample

abs_cn_shuffled, abs_en_shuffled, abs_org_shuffled = random.sample(abs_cn, len(abs_cn)), random.sample(abs_en, len(abs_en)), random.sample(abs_org, len(abs_org))

pos_samples = []

neg_samples = []

for cn, en, org, cn_s, en_s, org_s in zip(abs_cn, abs_en, abs_org, abs_cn_shuffled, abs_en_shuffled, abs_org_shuffled):

pos_samples += [[cn, en], [cn, org], [en, org]]

neg_samples += [[cn_s, en_s], [cn_s, org_s], [en_s, org_s]]

print('正例数量:', len(pos_samples))

print('负例数量:', len(neg_samples))接着,我们构建一个taskDataset类,用于读取输入样本对并转化为模型需要的输入格式。因为我们需要进行对比学习(通过对比损失实现),所以taskDataset输入数据应当是样本对,输出也是样本对中每条样本对应的向量表示。

from torch.utils.data import Dataset, DataLoader

from transformers import BertTokenizer, BertModel

# 加载跨语言BERT模型和Tokenizer

model_name = 'bert-base-multilingual-cased'

tokenizer = BertTokenizer.from_pretrained(model_name)

class taskDataset(Dataset):

def __init__(self, sample_pairs, labels, tokenizer, max_len):

self.samples = sample_pairs

self.labels = labels

self.tokenizer = tokenizer

self.max_len = max_len

def __len__(self):

return len(self.labels)

def __getitem__(self, idx):

text1, text2 = self.samples[idx]

# 使用BERT的Tokenizer对样本对进行编码

encoded_text1 = self.tokenizer(

text1,

padding='max_length',

truncation=True,

max_length=self.max_len,

return_tensors='pt'

)

encoded_text2 = self.tokenizer(

text2,

padding='max_length',

truncation=True,

max_length=self.max_len,

return_tensors='pt'

)

# 返回编码后的输入张量

return encoded_text1['input_ids'].squeeze(0), encoded_text1['attention_mask'].squeeze(0), encoded_text1['token_type_ids'].squeeze(0), \

encoded_text2['input_ids'].squeeze(0), encoded_text2['attention_mask'].squeeze(0), encoded_text2['token_type_ids'].squeeze(0), \

self.labels[idx]接着,我们实例化数据集类并创建DataLoader实例。

from sklearn.model_selection import train_test_split

labels = [1]*len(pos_samples)+[0]*len(neg_samples)

X_train, X_test, y_train, y_test = train_test_split(pos_samples+neg_samples, labels, stratify=labels, test_size=0.2, random_state=2025)

train_dataset = taskDataset(X_train, y_train, tokenizer, max_len=128)

test_dataset = taskDataset(X_test, y_test, tokenizer, max_len=128)

train_loader = DataLoader(train_dataset, batch_size=16, shuffle=True)

test_loader = DataLoader(test_dataset, batch_size=16, shuffle=False)3、模型构建

这里,我们使用多语言bert作为文本表示模型。由于我们仅生成文本表示,这里后面不再接入额外的层。当然,现在的设计中模型的输入和输出都是768维的,如果需要更低维度的输出,则可以在bert层后面接入简单的全连接层来实现降维。但需要注意,模型输出的维度应当足够用于进行相似度计算,如果输出只是一个数值,那么就无法进行对比学习了。

import torch.nn as nn

class taskModel(nn.Module):

def __init__(self, out_size, model_name):

super(taskModel, self).__init__()

self.bert = BertModel.from_pretrained(model_name)

def forward(self, ids, msk, ttids):

_, out = self.bert(ids, msk, ttids, return_dict=False)

return out然后,选择我们想要参与训练的参数。

model = taskModel(1, model_name)

for name, param in model.named_parameters():

if '.11' in name:

param.requires_grad = True

else:

param.requires_grad = False

for name, param in model.named_parameters():

if param.requires_grad:

print(name,param.requires_grad)4、损失定义

定义对比损失(Contrastive Loss)是本任务的核心内容。对比损失是一种用于度量学习(Metric Learning)中的损失函数,常用于训练模型以学习特征表示,使得相似的样本在特征空间中更接近,而不相似的样本更远离。通用公式如下:

其中,N是样本对的数量;是第i个样本对的标签;

是第i个样本对在向量空间中的距离,例如欧氏距离;m是超参数,作为边界值来控制不相似样本对之间的最小距离。在上面的公式中,显然

为1表示正例,为0表示负例。如果我们使用的标签相反,那么也应当相应地调整损失函数中的计算逻辑。调整的目标就是当样本不相似时损失尽可能大,样本相似时损失尽可能小。

import torch

import torch.nn.functional as F

class ContrastiveLoss(nn.Module):

def __init__(self, margin=1.0):

super(ContrastiveLoss, self).__init__()

self.margin = margin

def forward(self, output1, output2, label):

euclidean_distance = F.pairwise_distance(output1, output2)

loss_contrastive = torch.mean((label) * torch.pow(euclidean_distance, 2) +

(1-label) * torch.pow(torch.clamp(self.margin - euclidean_distance, min=0.0), 2))

return loss_contrastive

criterion = ContrastiveLoss()

optimizer = torch.optim.Adam(model.parameters())5、模型训练

from tqdm import tqdm

epochs = 5

model.train()

for epoch in range(epochs):

total_loss = 0

for ids1, msk1, ttids1, ids2, msk2, ttids2, label in tqdm(train_loader):

optimizer.zero_grad()

out1 = model(ids1, msk1, ttids1)

out2 = model(ids2, msk2, ttids2)

loss = criterion(out1, out2, label)

total_loss += loss.item()

loss.backward()

optimizer.step()

print(f"Epoch: {epoch}, loss: {total_loss/len(train_loader)}")6、效果评估

这里我们来获取模型在测试集上输出的欧氏距离。

from sklearn.metrics import precision_score, recall_score, f1_score

model.eval()

distance = []

real_label = []

with torch.no_grad():

for ids1, msk1, ttids1, ids2, msk2, ttids2, label in tqdm(test_loader):

ids1, msk1, ttids1, ids2, msk2, ttids2, label = ids1.to(device), msk1.to(device), ttids1.to(device), ids2.to(device), msk2.to(device), ttids2.to(device), label.to(device)

out1 = model(ids1, msk1, ttids1)

out2 = model(ids2, msk2, ttids2)

euclidean_distance = F.pairwise_distance(out1, out2)

distance += list(euclidean_distance.detach().cpu().numpy())

real_label += list(label.detach().cpu().numpy())

pred_label = [1 if dist<=0.5 else 0 for dist in distance]

print('Prec:', precision_score(real_label, pred_label))

print('Rec:', recall_score(real_label, pred_label))

print('F1', f1_score(real_label, pred_label))7、向量检索实践

这里,我们使用cdist来快速计算向量相似度。需要注意,cdist适用于万级以下的数据规模,如果数据量非常大建议使用faiss。从结果上看,检索返回的item与query的相似度高,但人工评估发现实际的相关性并没有很高。这是因为我们的训练数据量较小,且对bert模型没有进行更进一步的调优。通过对向量化模型进一步调优、更换更强大的文本表示模型等方式,可以使得检索效果得到更大的提升。

texts = []

for line in df.values.tolist():

texts += line[:-1]

def vectorize_texts(texts, tokenizer, model, batch_size=32):

vectors = []

for i in range(0, len(texts), batch_size):

batch = texts[i:i+batch_size]

inputs = tokenizer(batch,

padding=True,

truncation=True,

max_length=128,

return_tensors="pt")

inputs = inputs.to(device)

with torch.no_grad():

outputs = model(inputs['input_ids'], inputs['attention_mask'], inputs['token_type_ids'])

batch_vectors = outputs.cpu().numpy()

vectors.append(batch_vectors)

return np.concatenate(vectors, axis=0)

single_tokenizer = BertTokenizer.from_pretrained(model_name)

vectors = vectorize_texts(texts, single_tokenizer, model)

# 存储为内存友好格式(推荐两种方式)

np.save('/kaggle/working/vectors.npy', vectors) # 方式1:numpy二进制格式

from scipy.spatial.distance import cdist

class VectorSearcher:

def __init__(self, vectors, texts, metric='cosine'):

self.vectors = vectors.astype('float32') # 存储原始向量

self.texts = texts

self.metric = metric # 支持cdist所有度量方式

def search(self, query_vector, top_k=5):

# 计算查询与所有向量的距离

distances = cdist(query_vector.reshape(1, -1),

self.vectors,

metric=self.metric)

# 获取top_k索引(注意余弦距离需要取最小,相似度取最大)

if self.metric == 'cosine':

indices = np.argsort(distances[0])[:top_k] # 余弦距离越小越相似

else:

indices = np.argsort(distances[0])[:top_k]

# 组装结果

return [(self.texts[i], 1 - distances[0][i] if self.metric == 'cosine' else distances[0][i])

for i in indices]

# 初始化搜索引擎

vectors = np.load('/kaggle/working/vectors.npy')

searcher = VectorSearcher(vectors, texts)

# 查询使用示例

query = "便携式电子装置"

query_vec = vectorize_texts([query], single_tokenizer, model)[0]

results = searcher.search(query_vec.reshape(1,-1))

print(results)三、完整代码

from torch.utils.data import Dataset, DataLoader

from transformers import BertTokenizer, BertModel

from sklearn.model_selection import train_test_split

from sklearn.metrics import precision_score, recall_score, f1_score

import torch.nn.functional as F

import torch.nn as nn

from tqdm import tqdm

import numpy as np

import pandas as pd

import random

import torch

df = pd.read_excel('/kaggle/input/multilingualpatentsclassification-dataset/Multilingual_classification_dataset.xlsx')

print('数据量:', len(df))

abs_cn, abs_en, abs_org = df['abs_Chinese'].tolist(), df['abs_English'].tolist(), df['abs_Original'].tolist()

# random.shuffle是原地修改数据,因此这里用.sample

abs_cn_shuffled, abs_en_shuffled, abs_org_shuffled = random.sample(abs_cn, len(abs_cn)), random.sample(abs_en, len(abs_en)), random.sample(abs_org, len(abs_org))

pos_samples = []

neg_samples = []

for cn, en, org, cn_s, en_s, org_s in zip(abs_cn, abs_en, abs_org, abs_cn_shuffled, abs_en_shuffled, abs_org_shuffled):

pos_samples += [[cn, en], [cn, org], [en, org]]

neg_samples += [[cn_s, en_s], [cn_s, org_s], [en_s, org_s]]

print('正例数量:', len(pos_samples))

print('负例数量:', len(neg_samples))

# 加载跨语言BERT模型和Tokenizer

model_name = 'bert-base-multilingual-cased'

tokenizer = BertTokenizer.from_pretrained(model_name)

class taskDataset(Dataset):

def __init__(self, sample_pairs, labels, tokenizer, max_len):

self.samples = sample_pairs

self.labels = labels

self.tokenizer = tokenizer

self.max_len = max_len

def __len__(self):

return len(self.labels)

def __getitem__(self, idx):

text1, text2 = self.samples[idx]

# 使用BERT的Tokenizer对样本对进行编码

encoded_text1 = self.tokenizer(

text1,

padding='max_length',

truncation=True,

max_length=self.max_len,

return_tensors='pt'

)

encoded_text2 = self.tokenizer(

text2,

padding='max_length',

truncation=True,

max_length=self.max_len,

return_tensors='pt'

)

# 返回编码后的输入张量

return encoded_text1['input_ids'].squeeze(0), encoded_text1['attention_mask'].squeeze(0), encoded_text1['token_type_ids'].squeeze(0), \

encoded_text2['input_ids'].squeeze(0), encoded_text2['attention_mask'].squeeze(0), encoded_text2['token_type_ids'].squeeze(0), \

self.labels[idx]

labels = [1]*len(pos_samples)+[0]*len(neg_samples)

X_train, X_test, y_train, y_test = train_test_split(pos_samples+neg_samples, labels, stratify=labels, test_size=0.2, random_state=2025)

train_dataset = taskDataset(X_train, y_train, tokenizer, max_len=128)

test_dataset = taskDataset(X_test, y_test, tokenizer, max_len=128)

train_loader = DataLoader(train_dataset, batch_size=16, shuffle=True)

test_loader = DataLoader(test_dataset, batch_size=16, shuffle=False)

class taskModel(nn.Module):

def __init__(self, out_size, model_name):

super(taskModel, self).__init__()

self.bert = BertModel.from_pretrained(model_name)

def forward(self, ids, msk, ttids):

_, out = self.bert(ids, msk, ttids, return_dict=False)

return out

model = taskModel(1, model_name)

for name, param in model.named_parameters():

if '.11.out' in name:

param.requires_grad = True

else:

param.requires_grad = False

for name, param in model.named_parameters():

if param.requires_grad:

print(name,param.requires_grad)

class ContrastiveLoss(nn.Module):

def __init__(self, margin=1.0):

super(ContrastiveLoss, self).__init__()

self.margin = margin

def forward(self, output1, output2, label):

euclidean_distance = F.pairwise_distance(output1, output2)

loss_contrastive = torch.mean((label) * torch.pow(euclidean_distance, 2) +

(1-label) * torch.pow(torch.clamp(self.margin - euclidean_distance, min=0.0), 2))

return loss_contrastive

criterion = ContrastiveLoss()

optimizer = torch.optim.Adam(model.parameters())

epochs = 5

model.train()

for epoch in range(epochs):

total_loss = 0

for ids1, msk1, ttids1, ids2, msk2, ttids2, label in tqdm(train_loader):

optimizer.zero_grad()

out1 = model(ids1, msk1, ttids1)

out2 = model(ids2, msk2, ttids2)

loss = criterion(out1, out2, label)

total_loss += loss.item()

loss.backward()

optimizer.step()

print(f"Epoch: {epoch}, loss: {total_loss/len(train_loader)}")

model.eval()

distance = []

real_label = []

with torch.no_grad():

for ids1, msk1, ttids1, ids2, msk2, ttids2, label in tqdm(test_loader):

ids1, msk1, ttids1, ids2, msk2, ttids2, label = ids1.to(device), msk1.to(device), ttids1.to(device), ids2.to(device), msk2.to(device), ttids2.to(device), label.to(device)

out1 = model(ids1, msk1, ttids1)

out2 = model(ids2, msk2, ttids2)

euclidean_distance = F.pairwise_distance(out1, out2)

distance += list(euclidean_distance.detach().cpu().numpy())

real_label += list(label.detach().cpu().numpy())

pred_label = [1 if dist<=0.5 else 0 for dist in distance]

print('Prec:', precision_score(real_label, pred_label))

print('Rec:', recall_score(real_label, pred_label))

print('F1', f1_score(real_label, pred_label))

texts = []

for line in df.values.tolist():

texts += line[:-1]

def vectorize_texts(texts, tokenizer, model, batch_size=32):

vectors = []

for i in range(0, len(texts), batch_size):

batch = texts[i:i+batch_size]

inputs = tokenizer(batch,

padding=True,

truncation=True,

max_length=128,

return_tensors="pt")

inputs = inputs.to(device)

with torch.no_grad():

outputs = model(inputs['input_ids'], inputs['attention_mask'], inputs['token_type_ids'])

batch_vectors = outputs.cpu().numpy()

vectors.append(batch_vectors)

return np.concatenate(vectors, axis=0)

single_tokenizer = BertTokenizer.from_pretrained(model_name)

vectors = vectorize_texts(texts, single_tokenizer, model)

# 存储为内存友好格式(推荐两种方式)

np.save('/kaggle/working/vectors.npy', vectors) # 方式1:numpy二进制格式

from scipy.spatial.distance import cdist

class VectorSearcher:

def __init__(self, vectors, texts, metric='cosine'):

self.vectors = vectors.astype('float32') # 存储原始向量

self.texts = texts

self.metric = metric # 支持cdist所有度量方式

def search(self, query_vector, top_k=5):

# 计算查询与所有向量的距离

distances = cdist(query_vector.reshape(1, -1),

self.vectors,

metric=self.metric)

# 获取top_k索引(注意余弦距离需要取最小,相似度取最大)

if self.metric == 'cosine':

indices = np.argsort(distances[0])[:top_k] # 余弦距离越小越相似

else:

indices = np.argsort(distances[0])[:top_k]

# 组装结果

return [(self.texts[i], 1 - distances[0][i] if self.metric == 'cosine' else distances[0][i])

for i in indices]

# 初始化搜索引擎

vectors = np.load('/kaggle/working/vectors.npy')

searcher = VectorSearcher(vectors, texts)

# 查询使用示例

query = "便携式电子装置"

query_vec = vectorize_texts([query], single_tokenizer, model)[0]

results = searcher.search(query_vec.reshape(1,-1))

print(results)

——基于对比学习的跨语言文本对齐&spm=1001.2101.3001.5002&articleId=144425053&d=1&t=3&u=ee19c985edbd4671947f186ab6656aa7)

636

636

被折叠的 条评论

为什么被折叠?

被折叠的 条评论

为什么被折叠?