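- This article is an internal article of the 「365天深度学习训练营」 (365-Day Deep Learning Training Camp)

- If you write an article that draws on this one, include the 「🔗 声明」 (declaration) at the top of your article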

import tensorflow as tf

gpus = tf.config.list_physical_devices("GPU")

if gpus:
    tf.config.experimental.set_memory_growth(gpus[0], True)  # Allocate GPU memory on demand
    tf.config.set_visible_devices([gpus[0]], "GPU")

# Print the GPU info to confirm that a GPU is available
print(gpus)

import numpy as np

import matplotlib.pyplot as plt

# Enable Chinese text in plots
plt.rcParams['font.sans-serif'] = ['SimHei']  # Display Chinese labels correctly
plt.rcParams['axes.unicode_minus'] = False  # Display the minus sign correctly

import os, PIL, pathlib

# Suppress warnings
import warnings
warnings.filterwarnings('ignore')

data_dir = "E:/T3/365-8-data"

data_dir = pathlib.Path(data_dir)

image_count = len(list(data_dir.glob('*/*')))

print("图片总数为:", image_count)  # "图片总数为" means "Total number of images"

图片总数为: 3400

batch_size = 64

img_height = 224

img_width = 224

# For a detailed introduction to image_dataset_from_directory(), see:
# https://mtyjkh.blog.youkuaiyun.com/article/details/117018789
train_ds = tf.keras.preprocessing.image_dataset_from_directory(
    data_dir,
    validation_split=0.2,
    subset="training",
    seed=12,
    image_size=(img_height, img_width),
    batch_size=batch_size)

Found 3400 files belonging to 2 classes.

Using 2720 files for training.

# The validation subset uses the same validation_split and seed as the training subset
val_ds = tf.keras.preprocessing.image_dataset_from_directory(
    data_dir,
    validation_split=0.2,
    subset="validation",
    seed=12,
    image_size=(img_height, img_width),
    batch_size=batch_size)

Found 3400 files belonging to 2 classes.

Using 680 files for validation.
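The file counts reported above follow from simple arithmetic on `validation_split`, and the same arithmetic gives the number of batches per epoch. The sketch below is a plain-Python illustration, not the Keras implementation. The helper name `split_and_batch_counts` is hypothetical, and it assumes that the validation count is the truncated product of the split fraction and the total, and that a final partial batch is kept.

```python
import math

def split_and_batch_counts(total_files, validation_split, batch_size):
    """Return (train_files, val_files, train_batches, val_batches)."""
    # Assumption: the validation subset size is the truncated product.
    val_files = int(total_files * validation_split)
    train_files = total_files - val_files
    # The last batch may be smaller than batch_size, hence the ceiling.
    train_batches = math.ceil(train_files / batch_size)
    val_batches = math.ceil(val_files / batch_size)
    return train_files, val_files, train_batches, val_batches

counts = split_and_batch_counts(3400, 0.2, 64)
print(counts)
```

With these inputs the sketch gives 2720 and 680 files, and 43 and 11 batches, which agrees with the `43/43` and `11/11` progress bars in the training log.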

class_names = train_ds.class_names
print(class_names)

['cat', 'dog']

for image_batch, labels_batch in train_ds:
    print(image_batch.shape)
    print(labels_batch.shape)
    break

(64, 224, 224, 3)

(64,)

AUTOTUNE = tf.data.AUTOTUNE

def preprocess_image(image, label):
    # Scale pixel values from [0, 255] to [0, 1]
    return (image / 255.0, label)

# Normalization
train_ds = train_ds.map(preprocess_image, num_parallel_calls=AUTOTUNE)
val_ds = val_ds.map(preprocess_image, num_parallel_calls=AUTOTUNE)

train_ds = train_ds.cache().shuffle(1000).prefetch(buffer_size=AUTOTUNE)
val_ds = val_ds.cache().prefetch(buffer_size=AUTOTUNE)

plt.figure(figsize=(15, 10))  # Figure is 15 wide and 10 high

for images, labels in train_ds.take(1):
    for i in range(8):
        ax = plt.subplot(5, 8, i + 1)
        plt.imshow(images[i])
        plt.title(class_names[labels[i]])
        plt.axis("off")

from tensorflow.keras import layers, models, Input
from tensorflow.keras.models import Model
from tensorflow.keras.layers import Conv2D, MaxPooling2D, Dense, Flatten, Dropout

def VGG16(nb_classes, input_shape):
    input_tensor = Input(shape=input_shape)
    # 1st block
    x = Conv2D(64, (3,3), activation='relu', padding='same', name='block1_conv1')(input_tensor)
    x = Conv2D(64, (3,3), activation='relu', padding='same', name='block1_conv2')(x)
    x = MaxPooling2D((2,2), strides=(2,2), name='block1_pool')(x)
    # 2nd block
    x = Conv2D(128, (3,3), activation='relu', padding='same', name='block2_conv1')(x)
    x = Conv2D(128, (3,3), activation='relu', padding='same', name='block2_conv2')(x)
    x = MaxPooling2D((2,2), strides=(2,2), name='block2_pool')(x)
    # 3rd block
    x = Conv2D(256, (3,3), activation='relu', padding='same', name='block3_conv1')(x)
    x = Conv2D(256, (3,3), activation='relu', padding='same', name='block3_conv2')(x)
    x = Conv2D(256, (3,3), activation='relu', padding='same', name='block3_conv3')(x)
    x = MaxPooling2D((2,2), strides=(2,2), name='block3_pool')(x)
    # 4th block
    x = Conv2D(512, (3,3), activation='relu', padding='same', name='block4_conv1')(x)
    x = Conv2D(512, (3,3), activation='relu', padding='same', name='block4_conv2')(x)
    x = Conv2D(512, (3,3), activation='relu', padding='same', name='block4_conv3')(x)
    x = MaxPooling2D((2,2), strides=(2,2), name='block4_pool')(x)
    # 5th block
    x = Conv2D(512, (3,3), activation='relu', padding='same', name='block5_conv1')(x)
    x = Conv2D(512, (3,3), activation='relu', padding='same', name='block5_conv2')(x)
    x = Conv2D(512, (3,3), activation='relu', padding='same', name='block5_conv3')(x)
    x = MaxPooling2D((2,2), strides=(2,2), name='block5_pool')(x)
    # Fully connected layers
    x = Flatten()(x)
    x = Dense(4096, activation='relu', name='fc1')(x)
    x = Dense(4096, activation='relu', name='fc2')(x)
    output_tensor = Dense(nb_classes, activation='softmax', name='predictions')(x)

    model = Model(input_tensor, output_tensor)
    return model

# Note: the dataset has only 2 classes ('cat', 'dog'); 1000 output units are kept here to match the summary output
# Keras expects input_shape as (height, width, channels)
model = VGG16(1000, (img_height, img_width, 3))
model.summary()

Model: "functional"

| Layer (type) | Output Shape | Param # |
| --- | --- | --- |
| input_layer (InputLayer) | (None, 224, 224, 3) | 0 |
| block1_conv1 (Conv2D) | (None, 224, 224, 64) | 1,792 |
| block1_conv2 (Conv2D) | (None, 224, 224, 64) | 36,928 |
| block1_pool (MaxPooling2D) | (None, 112, 112, 64) | 0 |
| block2_conv1 (Conv2D) | (None, 112, 112, 128) | 73,856 |
| block2_conv2 (Conv2D) | (None, 112, 112, 128) | 147,584 |
| block2_pool (MaxPooling2D) | (None, 56, 56, 128) | 0 |
| block3_conv1 (Conv2D) | (None, 56, 56, 256) | 295,168 |
| block3_conv2 (Conv2D) | (None, 56, 56, 256) | 590,080 |
| block3_conv3 (Conv2D) | (None, 56, 56, 256) | 590,080 |
| block3_pool (MaxPooling2D) | (None, 28, 28, 256) | 0 |
| block4_conv1 (Conv2D) | (None, 28, 28, 512) | 1,180,160 |
| block4_conv2 (Conv2D) | (None, 28, 28, 512) | 2,359,808 |
| block4_conv3 (Conv2D) | (None, 28, 28, 512) | 2,359,808 |
| block4_pool (MaxPooling2D) | (None, 14, 14, 512) | 0 |
| block5_conv1 (Conv2D) | (None, 14, 14, 512) | 2,359,808 |
| block5_conv2 (Conv2D) | (None, 14, 14, 512) | 2,359,808 |
| block5_conv3 (Conv2D) | (None, 14, 14, 512) | 2,359,808 |
| block5_pool (MaxPooling2D) | (None, 7, 7, 512) | 0 |
| flatten (Flatten) | (None, 25088) | 0 |
| fc1 (Dense) | (None, 4096) | 102,764,544 |
| fc2 (Dense) | (None, 4096) | 16,781,312 |
| predictions (Dense) | (None, 1000) | 4,097,000 |

Total params: 138,357,544 (527.79 MB)

Trainable params: 138,357,544 (527.79 MB)

Non-trainable params: 0 (0.00 B)
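The parameter counts in the summary can be reproduced by hand: a 3×3 convolution has `(3 * 3 * in_channels + 1) * out_channels` parameters, a dense layer has `(in_units + 1) * out_units`, and each 2×2 pooling layer halves the spatial size (224 → 112 → 56 → 28 → 14 → 7). The sketch below does that arithmetic in plain Python. The helper names are hypothetical and are not part of Keras.

```python
def conv_params(in_channels, out_channels, kernel=3):
    """Weights plus one bias per output channel of a Conv2D layer."""
    return (kernel * kernel * in_channels + 1) * out_channels

def dense_params(in_units, out_units):
    """Weights plus one bias per output unit of a Dense layer."""
    return (in_units + 1) * out_units

def vgg16_param_count(nb_classes, input_size=224, input_channels=3):
    # (number of conv layers, filters) for the five blocks of the VGG16 function
    blocks = [(2, 64), (2, 128), (3, 256), (3, 512), (3, 512)]
    total, channels, size = 0, input_channels, input_size
    for n_convs, filters in blocks:
        for _ in range(n_convs):
            total += conv_params(channels, filters)
            channels = filters
        size //= 2  # each 2x2 max pool with stride 2 halves height and width
    flat = size * size * channels  # 7 * 7 * 512 = 25088 for a 224x224 input
    total += dense_params(flat, 4096)
    total += dense_params(4096, 4096)
    total += dense_params(4096, nb_classes)
    return total

print(vgg16_param_count(1000))  # 138357544, the total in the summary
print(vgg16_param_count(2))     # 134268738 with a 2-unit output layer
```

The second call shows how the total would change if the output layer had one unit per class in this dataset; only the `predictions` layer is affected.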

model.compile(optimizer="adam",
              loss='sparse_categorical_crossentropy',
              metrics=['accuracy'])

from tqdm import tqdm
import tensorflow.keras.backend as K

epochs = 10

lr = 1e-4

# Record the training metrics for later analysis

history_train_loss = []

history_train_accuracy = []

history_val_loss = []

history_val_accuracy = []

for epoch in range(epochs):
    train_total = len(train_ds)
    val_total = len(val_ds)

    # total: expected number of iterations
    # ncols: width of the progress bar
    # mininterval: minimum interval between progress updates, in seconds (default: 0.1)
    with tqdm(total=train_total, desc=f'Epoch {epoch + 1}/{epochs}', mininterval=1, ncols=100) as pbar:

        # Decay the learning rate by a factor of 0.92 at the start of every epoch
        lr = lr * 0.92
        model.optimizer.learning_rate.assign(lr)

        train_loss = []
        train_accuracy = []
        for image, label in train_ds:
            # Train on one batch. Put simply, train_on_batch is a lower-level,
            # more flexible alternative to model.fit().
            # For more detail on train_on_batch, see my article:
            # https://www.yuque.com/mingtian-fkmxf/hv4lcq/ztt4gy

            # This returns the loss and accuracy for each batch
            history = model.train_on_batch(image, label)

            train_loss.append(history[0])
            train_accuracy.append(history[1])

            pbar.set_postfix({"train_loss": "%.4f" % history[0],
                              "train_acc": "%.4f" % history[1],
                              "lr": model.optimizer.learning_rate.numpy()})
            pbar.update(1)

        history_train_loss.append(np.mean(train_loss))
        history_train_accuracy.append(np.mean(train_accuracy))

    print('开始验证!')  # "Validation started!"

    with tqdm(total=val_total, desc=f'Epoch {epoch + 1}/{epochs}', mininterval=0.3, ncols=100) as pbar:

        val_loss = []
        val_accuracy = []
        for image, label in val_ds:
            # This returns the loss and accuracy for each batch
            history = model.test_on_batch(image, label)

            val_loss.append(history[0])
            val_accuracy.append(history[1])

            pbar.set_postfix({"val_loss": "%.4f" % history[0],
                              "val_acc": "%.4f" % history[1]})
            pbar.update(1)

        history_val_loss.append(np.mean(val_loss))
        history_val_accuracy.append(np.mean(val_accuracy))

    print('结束验证!')  # "Validation finished!"
    print("验证loss为:%.4f" % np.mean(val_loss))  # validation loss
    print("验证准确率为:%.4f" % np.mean(val_accuracy))  # validation accuracy

Epoch 1/10: 100%|███| 43/43 [07:03<00:00, 9.86s/it, train_loss=1.5739, train_acc=0.4752, lr=9.2e-5]

开始验证!

Epoch 1/10: 100%|██████████████████| 11/11 [00:30<00:00, 2.79s/it, val_loss=1.4039, val_acc=0.4818]

结束验证! 验证loss为:1.4716 验证准确率为:0.4813

Epoch 2/10: 100%|██| 43/43 [07:42<00:00, 10.75s/it, train_loss=1.0875, train_acc=0.5082, lr=8.46e-5]

开始验证!

Epoch 2/10: 100%|██████████████████| 11/11 [00:31<00:00, 2.85s/it, val_loss=1.0451, val_acc=0.5223]

结束验证! 验证loss为:1.0630 验证准确率为:0.5157

Epoch 3/10: 100%|██| 43/43 [07:50<00:00, 10.94s/it, train_loss=0.9377, train_acc=0.5479, lr=7.79e-5]

开始验证!

Epoch 3/10: 100%|██████████████████| 11/11 [00:32<00:00, 2.94s/it, val_loss=0.9184, val_acc=0.5582]

结束验证! 验证loss为:0.9267 验证准确率为:0.5532

Epoch 4/10: 100%|██| 43/43 [07:46<00:00, 10.84s/it, train_loss=0.8519, train_acc=0.5814, lr=7.16e-5]

开始验证!

Epoch 4/10: 100%|██████████████████| 11/11 [00:34<00:00, 3.10s/it, val_loss=0.8373, val_acc=0.5876]

结束验证! 验证loss为:0.8435 验证准确率为:0.5850

Epoch 5/10: 100%|██| 43/43 [07:51<00:00, 10.96s/it, train_loss=0.7650, train_acc=0.6249, lr=6.59e-5]

开始验证!

Epoch 5/10: 100%|██████████████████| 11/11 [00:34<00:00, 3.11s/it, val_loss=0.7473, val_acc=0.6348]

结束验证! 验证loss为:0.7549 验证准确率为:0.6308

Epoch 6/10: 100%|██| 43/43 [07:57<00:00, 11.10s/it, train_loss=0.6646, train_acc=0.6772, lr=6.06e-5]

开始验证!

Epoch 6/10: 100%|██████████████████| 11/11 [00:35<00:00, 3.19s/it, val_loss=0.6456, val_acc=0.6870]

结束验证! 验证loss为:0.6536 验证准确率为:0.6827

Epoch 7/10: 100%|██| 43/43 [08:10<00:00, 11.40s/it, train_loss=0.5803, train_acc=0.7202, lr=5.58e-5]

开始验证!

Epoch 7/10: 100%|██████████████████| 11/11 [00:31<00:00, 2.90s/it, val_loss=0.5656, val_acc=0.7276]

结束验证! 验证loss为:0.5718 验证准确率为:0.7244

Epoch 8/10: 100%|██| 43/43 [07:53<00:00, 11.01s/it, train_loss=0.5129, train_acc=0.7536, lr=5.13e-5]

开始验证!

Epoch 8/10: 100%|██████████████████| 11/11 [00:32<00:00, 2.97s/it, val_loss=0.5016, val_acc=0.7594]

结束验证! 验证loss为:0.5065 验证准确率为:0.7569

Epoch 9/10: 100%|██| 43/43 [07:49<00:00, 10.93s/it, train_loss=0.4592, train_acc=0.7802, lr=4.72e-5]

开始验证!

Epoch 9/10: 100%|██████████████████| 11/11 [00:33<00:00, 3.02s/it, val_loss=0.4500, val_acc=0.7847]

结束验证! 验证loss为:0.4539 验证准确率为:0.7827

Epoch 10/10: 100%|█| 43/43 [07:46<00:00, 10.86s/it, train_loss=0.4162, train_acc=0.8014, lr=4.34e-5]

开始验证!

Epoch 10/10: 100%|█████████████████| 11/11 [00:34<00:00, 3.16s/it, val_loss=0.4094, val_acc=0.8049]

结束验证! 验证loss为:0.4124 验证准确率为:0.8034
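The `lr` column in the log follows from the training loop, which multiplies the learning rate by 0.92 at the start of every epoch, starting from `1e-4`. A minimal sketch of that schedule in plain Python is shown here; the function name `lr_schedule` is hypothetical and is introduced only for illustration.

```python
def lr_schedule(initial_lr, decay, epochs):
    """Learning rate used in each epoch when lr is multiplied by `decay` at the start of every epoch."""
    rates = []
    lr = initial_lr
    for _ in range(epochs):
        lr = lr * decay  # the decay is applied before the first batch of the epoch
        rates.append(lr)
    return rates

rates = lr_schedule(1e-4, 0.92, 10)
for epoch, lr in enumerate(rates, start=1):
    print(f"epoch {epoch:2d}: lr = {lr:.3g}")
```

Because the decay is applied before the first batch, epoch 1 already trains at `9.2e-5` rather than `1e-4`, and epoch 10 ends at about `4.34e-5`, as in the log.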

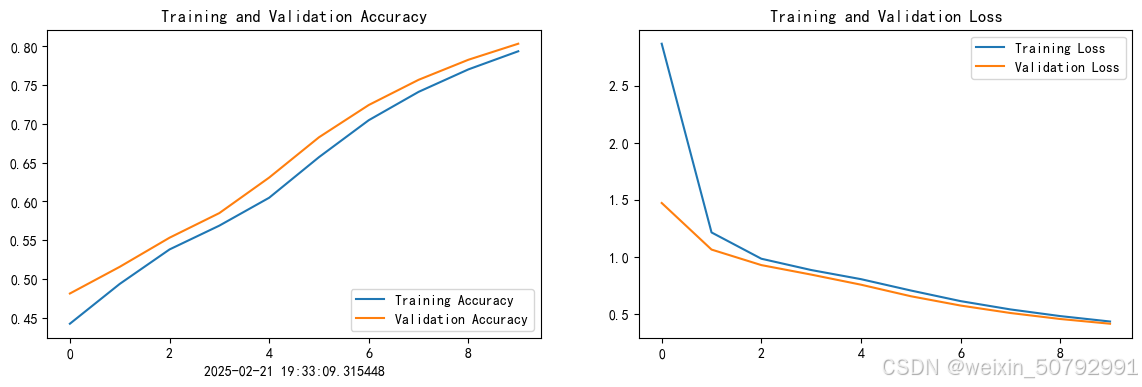

from datetime import datetime

current_time = datetime.now()  # Get the current time

epochs_range = range(epochs)

plt.figure(figsize=(14, 4))

plt.subplot(1, 2, 1)

plt.plot(epochs_range, history_train_accuracy, label='Training Accuracy')

plt.plot(epochs_range, history_val_accuracy, label='Validation Accuracy')

plt.legend(loc='lower right')

plt.title('Training and Validation Accuracy')

plt.xlabel(current_time)  # Include the timestamp when checking in; otherwise the code screenshot is not valid

plt.subplot(1, 2, 2)

plt.plot(epochs_range, history_train_loss, label='Training Loss')

plt.plot(epochs_range, history_val_loss, label='Validation Loss')

plt.legend(loc='upper right')

plt.title('Training and Validation Loss')

plt.show()
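The per-epoch values plotted here are `np.mean` over per-batch values, which weights every batch equally even though the last batch is smaller (2720 = 42 × 64 + 32 training files). If each per-batch value describes only that batch, a sample-weighted mean gives the exact per-epoch figure. The sketch below illustrates the difference in plain Python. The function name `weighted_mean` and the accuracy values are made up for illustration and are not results from this run.

```python
def weighted_mean(values, batch_sizes):
    """Mean of per-batch values weighted by the number of samples in each batch."""
    total = sum(batch_sizes)
    return sum(v * n for v, n in zip(values, batch_sizes)) / total

# Hypothetical per-batch accuracies: two full batches of 64 and a final batch of 32.
batch_sizes = [64, 64, 32]
batch_acc = [0.75, 0.75, 0.5]

unweighted = sum(batch_acc) / len(batch_acc)
weighted = weighted_mean(batch_acc, batch_sizes)
print(unweighted, weighted)
```

With 43 batches and only one short batch, the gap between the two means is small, so the plain mean is a reasonable approximation for the curves.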

import numpy as np

# Use the trained model (model) to look at the prediction results
plt.figure(figsize=(18, 3))  # Figure is 18 wide and 3 high
plt.suptitle("预测结果展示")  # "Prediction results"

for images, labels in val_ds.take(1):
    for i in range(8):
        ax = plt.subplot(1, 8, i + 1)

        # Show the image
        plt.imshow(images[i].numpy())

        # Add a batch dimension to the image
        img_array = tf.expand_dims(images[i], 0)

        # Use the model to predict the class of the image
        predictions = model.predict(img_array)
        plt.title(class_names[np.argmax(predictions)])

        plt.axis("off")

1/1 ━━━━━━━━━━━━━━━━━━━━ 0s 179ms/step

1/1 ━━━━━━━━━━━━━━━━━━━━ 0s 88ms/step

1/1 ━━━━━━━━━━━━━━━━━━━━ 0s 87ms/step

1/1 ━━━━━━━━━━━━━━━━━━━━ 0s 105ms/step

1/1 ━━━━━━━━━━━━━━━━━━━━ 0s 89ms/step

1/1 ━━━━━━━━━━━━━━━━━━━━ 0s 88ms/step

1/1 ━━━━━━━━━━━━━━━━━━━━ 0s 95ms/step

1/1 ━━━━━━━━━━━━━━━━━━━━ 0s 88ms/step
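The title of each subplot comes from taking the index of the largest predicted probability and looking it up in `class_names`. The following sketch shows that step in plain Python with made-up probabilities. Because the model is built with 1000 output units while `class_names` has only 2 entries, an index of 2 or more would raise an `IndexError` in the lookup; the hypothetical helper `predicted_label` makes that case explicit.

```python
def predicted_label(probabilities, class_names):
    """Return the class name for the highest-scoring output, or None if the index has no name."""
    best_index = max(range(len(probabilities)), key=lambda i: probabilities[i])
    if best_index >= len(class_names):
        return None  # the model predicted an output unit that has no class name
    return class_names[best_index]

class_names = ['cat', 'dog']
print(predicted_label([0.2, 0.8], class_names))
print(predicted_label([0.1, 0.2, 0.7], class_names))
```

Building the model with `len(class_names)` output units would remove the out-of-range case altogether.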

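Takeaways: I now have a rough understanding of the detailed update steps. Next, I will keep studying how the related code runs, so that I can learn more clearly how to optimize and improve the algorithm.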
