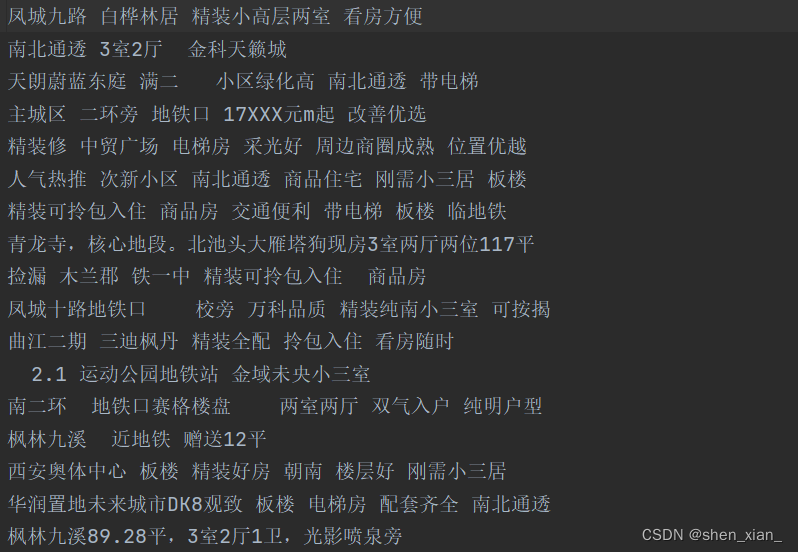

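1. Scraping second-hand housing listings from 58.com (58同城)

The page is parsed with XPath so that the title of each second-hand housing listing can be extracted precisely.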

    from lxml import etree
    import requests

    # Scrape second-hand housing listing titles from 58.com
    if __name__ == '__main__':
        # Target URL
        url = 'https://xa.58.com/ershoufang/'
        # Request headers: send a browser-like User-Agent
        headers = {
            'User-Agent': 'Mozilla/5.0 (Windows NT 10.0; Win64; x64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/114.0.0.0 Safari/537.36'
        }
        page_text = requests.get(url=url, headers=headers).text

        # Parse the fetched page with XPath
        tree = etree.HTML(page_text)
        divs = tree.xpath('//section[@class="list"]/div')

        # Write the scraped titles to a file; "with" closes it automatically
        with open('58.txt', 'w', encoding='utf-8') as fp:
            for div in divs:
                titles = div.xpath('./a/div[2]/div/div/h3/text()')
                if not titles:
                    continue  # skip entries that have no title node
                title = titles[0]
                print(title)
                fp.write(title + '\n')

The scraped data is shown in the figure below.

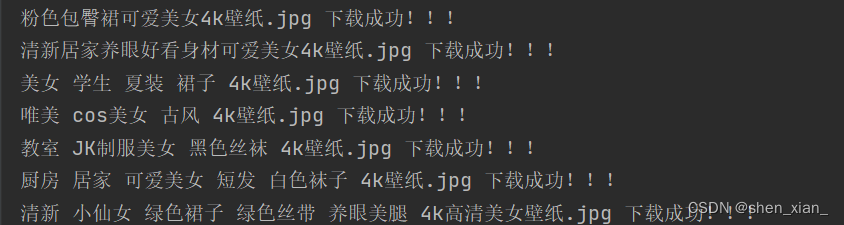
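To try the XPath logic without hitting the live site, the same two expressions can be run against a small inline HTML string. The following is only a sketch: `SAMPLE_HTML` and `extract_titles` are hypothetical names, and the markup is an assumption that mimics the structure the script expects. The real page may differ and can change at any time.

```python
from lxml import etree

# Hypothetical markup imitating the structure the XPath expressions expect.
SAMPLE_HTML = """
<html><body>
<section class="list">
  <div><a href="#"><div>img</div><div><div><div><h3>Listing A</h3></div></div></div></a></div>
  <div><a href="#"><div>img</div><div><div><div><h3>Listing B</h3></div></div></div></a></div>
  <div><a href="#"><div>img</div></a></div>
</section>
</body></html>
"""


def extract_titles(html):
    """Return the <h3> title of every listing block that has one."""
    tree = etree.HTML(html)
    titles = []
    for item in tree.xpath('//section[@class="list"]/div'):
        # A relative path (leading "./") searches only inside this block.
        found = item.xpath('./a/div[2]/div/div/h3/text()')
        if found:  # the third sample block has no title and is skipped
            titles.append(found[0].strip())
    return titles


if __name__ == '__main__':
    for title in extract_titles(SAMPLE_HTML):
        print(title)
```

Checking that the result list is non-empty before indexing it avoids an `IndexError` when a block (an ad slot, for instance) lacks the expected `<h3>` node.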

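2. Batch-downloading images from a website

The image entries in the page are parsed, and each image is then fetched and saved to a local folder, so that the data is collected in bulk.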

    from lxml import etree
    import requests
    import os

    # Batch-scrape the images listed on the page
    if __name__ == '__main__':
        url = 'http://pic.netbian.com/4kmeinv/'
        headers = {
            'User-Agent': 'Mozilla/5.0 (Windows NT 10.0; Win64; x64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/114.0.0.0 Safari/537.36'
        }
        response = requests.get(url=url, headers=headers)
        # Optionally set the response encoding by hand
        # response.encoding = 'utf-8'
        page_text = response.text
        tree = etree.HTML(page_text)
        li_list = tree.xpath('//div[@class="slist"]/ul/li')

        # Create the output folder if it does not exist yet
        os.makedirs('./picLibs', exist_ok=True)

        # Download each image and write it into the folder
        for li in li_list:
            image_src = 'http://pic.netbian.com' + li.xpath('./a/img/@src')[0]
            image_name = li.xpath('./a/img/@alt')[0] + '.jpg'
            # General fix for garbled Chinese text: undo the ISO-8859-1
            # decoding and decode the same bytes as GBK instead
            image_name = image_name.encode('iso-8859-1').decode('gbk')
            # print(image_src)
            image_data = requests.get(url=image_src, headers=headers).content
            image_path = 'picLibs/' + image_name
            with open(image_path, 'wb') as fp:
                fp.write(image_data)
            print(image_name, 'downloaded successfully!')
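The re-encoding step in the script works because `requests` falls back to ISO-8859-1 when a text response declares no charset, so GBK bytes get decoded with the wrong codec. The sketch below reproduces that round trip offline; `fix_mojibake` is a hypothetical helper and the sample string is made up for illustration. It assumes the page really is GBK-encoded, as the script does.

```python
def fix_mojibake(text, wrong='iso-8859-1', right='gbk'):
    """Undo a decode done with the wrong codec.

    Returns the text unchanged when it cannot be re-encoded, which is the
    case for text that was decoded correctly in the first place.
    """
    try:
        return text.encode(wrong).decode(right)
    except (UnicodeEncodeError, UnicodeDecodeError):
        return text


if __name__ == '__main__':
    original = '风景壁纸'  # made-up sample title
    # Simulate the damage: GBK bytes decoded as ISO-8859-1.
    garbled = original.encode('gbk').decode('iso-8859-1')
    print(repr(garbled))
    print(fix_mojibake(garbled))
```

An alternative, under the same assumption about the page encoding, is to set `response.encoding = 'gbk'` before reading `response.text`, so that every string extracted from the page is already decoded correctly.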

The result of the crawl is shown in the figure.

The files saved in the folder are shown in the figure.
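Two details of the download loop can be made a little more robust: building the image URL and turning the `alt` text into a file name. This is a minimal sketch using only the standard library. `build_image_url` and `safe_filename` are hypothetical helpers, and the example paths are invented, not taken from the site.

```python
import re
from urllib.parse import urljoin

BASE_URL = 'http://pic.netbian.com/4kmeinv/'


def build_image_url(src, base=BASE_URL):
    """Resolve a src attribute against the page URL.

    urljoin handles root-relative paths and leaves absolute URLs untouched,
    so no manual string concatenation is needed.
    """
    return urljoin(base, src)


def safe_filename(name, ext='.jpg'):
    """Replace characters that are not allowed in Windows file names."""
    cleaned = re.sub(r'[\\/:*?"<>|]', '_', name).strip()
    return (cleaned or 'untitled') + ext


if __name__ == '__main__':
    print(build_image_url('/uploads/sample.jpg'))  # invented path
    print(safe_filename('a/b: c'))
```

Sanitizing the name matters because an `alt` text containing a character such as `/` or `?` would otherwise make `open()` fail or write to an unintended path.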
