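Convolutional Neural Networks

A convolutional neural network is essentially a fully connected network with shared weights and sparse connections.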
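To make the effect of weight sharing concrete, the sketch below counts the weights of a 3×3 convolution and of a dense layer that maps the same input to an output of the same size. The 28×28 single-channel input and the 10 output channels are assumptions borrowed from the MNIST model used later in this post, and the helper names `conv_params` and `dense_params` are hypothetical.

```python
# Parameter count: 3x3 convolution vs. a fully connected layer with the same
# input and output sizes. Pure arithmetic, no framework needed.

def conv_params(in_ch, out_ch, k):
    # One k x k kernel per (input channel, output channel) pair, no bias
    return in_ch * out_ch * k * k

def dense_params(in_features, out_features):
    # One weight per (input, output) pair, no bias
    return in_features * out_features

in_ch, out_ch, k, size = 1, 10, 3, 28
out_size = size - k + 1  # 26 with stride 1 and no padding

n_conv = conv_params(in_ch, out_ch, k)
n_dense = dense_params(in_ch * size * size, out_ch * out_size * out_size)
print(n_conv, n_dense)
```

The convolution needs far fewer weights because each kernel is reused at every spatial position and only looks at a 3×3 neighbourhood.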

Implementation steps

The steps for building a neural network are almost always the same, roughly as follows:

- Prepare the dataset

# A more advanced CNN
import torch
import torch.nn as nn
import torch.nn.functional as F
import torchvision
import torchvision.transforms as transforms
import torch.optim as optim

# Prepare the dataset
batch_size = 64
# Named `transform` so that the `transforms` module is not shadowed.
# 0.1307 and 0.3081 are the mean and standard deviation of the MNIST training set.
transform = transforms.Compose([
    transforms.ToTensor(),
    transforms.Normalize((0.1307,), (0.3081,)),
])
trainset = torchvision.datasets.MNIST(root=r'../data/mnist',
                                      train=True,
                                      download=True,
                                      transform=transform)
trainloader = torch.utils.data.DataLoader(trainset, batch_size=batch_size, shuffle=True)
testset = torchvision.datasets.MNIST(root=r'../data/mnist',
                                     train=False,
                                     download=True,
                                     transform=transform)
testloader = torch.utils.data.DataLoader(testset, batch_size=batch_size, shuffle=False)

If you do not want to use the built-in dataset loader, you can subclass Dataset and override its methods:

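import numpy as np
import torch
from torch.utils.data import Dataset

class Mydataset(Dataset):
    def __init__(self, filepath):
        # Each row of the CSV file holds the features followed by the label
        xy = np.loadtxt(filepath, delimiter=',', dtype=np.float32)
        self.len = xy.shape[0]
        self.x_data = torch.from_numpy(xy[:, :-1])
        self.y_data = torch.from_numpy(xy[:, [-1]])

    # Magic method: lets dataset[index] return one (features, label) pair
    def __getitem__(self, index):
        return self.x_data[index], self.y_data[index]

    def __len__(self):
        return self.len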
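As a rough illustration of how a custom Dataset plugs into DataLoader, the sketch below uses a hypothetical in-memory `ToyDataset` (10 made-up samples instead of a CSV file) and inspects the batches it yields. Only `__getitem__` and `__len__` are needed for batching to work.

```python
import torch
from torch.utils.data import Dataset, DataLoader

class ToyDataset(Dataset):
    """Hypothetical stand-in for a file-backed dataset: 10 samples, 2 features."""
    def __init__(self):
        self.x_data = torch.arange(20, dtype=torch.float32).view(10, 2)
        self.y_data = torch.arange(10, dtype=torch.float32).view(10, 1)

    def __getitem__(self, index):
        return self.x_data[index], self.y_data[index]

    def __len__(self):
        return len(self.x_data)

loader = DataLoader(ToyDataset(), batch_size=4, shuffle=False)
# Collect the (features, labels) shapes of every batch
shapes = [(x.shape, y.shape) for x, y in loader]
print(len(loader), shapes[0], shapes[-1])
```

With 10 samples and a batch size of 4, the last batch is smaller than the others unless `drop_last=True` is passed to the DataLoader.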

- Build the model

class CNN_net(nn.Module):
    def __init__(self):
        super(CNN_net, self).__init__()
        self.conv1 = nn.Conv2d(1, 10, kernel_size=3, bias=False)   # output: [10, 26, 26]
        self.conv2 = nn.Conv2d(10, 20, kernel_size=3, bias=False)  # output: [20, 11, 11], [20, 5, 5] after pooling
        self.pooling = nn.MaxPool2d(2, 2)  # halves the height and width
        self.fc1 = nn.Linear(500, 10)      # 20 * 5 * 5 = 500 input features

    def forward(self, x):
        batch_size = x.size(0)
        x = F.relu(self.pooling(self.conv1(x)))
        x = F.relu(self.pooling(self.conv2(x)))
        # Flatten to [batch_size, 500] for the fully connected layer
        x = x.view(batch_size, -1)
        # Return raw logits without a final ReLU: CrossEntropyLoss applies log-softmax itself
        x = self.fc1(x)
        return x
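The 500 input features of the final linear layer follow from the layer sizes. The sketch below traces the spatial size of a 28×28 MNIST image through the two convolution and pooling stages using the standard output-size formula; the helper `conv_out` is a hypothetical name introduced only for this illustration.

```python
def conv_out(size, kernel, stride=1, padding=0):
    # Standard output-size formula for a convolution or pooling window
    return (size + 2 * padding - kernel) // stride + 1

size = 28                                  # MNIST images are 28 x 28
size = conv_out(size, kernel=3)            # after conv1
size = conv_out(size, kernel=2, stride=2)  # after the first pooling
size = conv_out(size, kernel=3)            # after conv2
size = conv_out(size, kernel=2, stride=2)  # after the second pooling
flat_features = 20 * size * size           # conv2 produces 20 channels
print(size, flat_features)
```

If the kernel size, padding, or input resolution changes, the same calculation gives the new input size for the linear layer.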

If you want to use a residual network, you can define a residual block:

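# Define the residual block
class ResidualBlock(nn.Module):
    def __init__(self, channels):
        super(ResidualBlock, self).__init__()
        # Both convolutions keep the channel count and, with padding=1, the spatial size,
        # so the output can be added to the input
        self.conv1 = nn.Conv2d(channels, channels, kernel_size=3, padding=1, bias=False)
        self.conv2 = nn.Conv2d(channels, channels, kernel_size=3, padding=1, bias=False)

    def forward(self, x):
        out = F.relu(self.conv1(x))
        out = self.conv2(out)
        # Skip connection: add the input before the final activation
        return F.relu(out + x)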
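The skip connection only works if the convolution output has exactly the same shape as the block's input. A minimal check, assuming a made-up batch of two 10-channel 13×13 feature maps:

```python
import torch
import torch.nn as nn

# A 3x3 convolution with padding=1 and equal in/out channels keeps the shape,
# so its output can be added element-wise to the block's input.
conv = nn.Conv2d(10, 10, kernel_size=3, padding=1, bias=False)
x = torch.randn(2, 10, 13, 13)  # hypothetical batch of 2 feature maps
out = conv(x)
skip_sum = out + x
print(out.shape, skip_sum.shape)
```

Without `padding=1` the output would shrink to 11×11 and the addition would raise a shape mismatch error.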

The residual blocks can then be added to the network's forward pass:

class CNN_net(nn.Module):
    def __init__(self):
        super(CNN_net, self).__init__()
        self.conv1 = nn.Conv2d(1, 10, kernel_size=3, bias=False)   # output: [10, 26, 26]
        self.conv2 = nn.Conv2d(10, 20, kernel_size=3, bias=False)  # output: [20, 11, 11], [20, 5, 5] after pooling
        self.resblock1 = ResidualBlock(10)
        self.resblock2 = ResidualBlock(20)
        self.pooling = nn.MaxPool2d(2, 2)  # halves the height and width
        self.fc1 = nn.Linear(500, 10)      # 20 * 5 * 5 = 500 input features

    def forward(self, x):
        batch_size = x.size(0)
        x = F.relu(self.pooling(self.conv1(x)))
        x = self.resblock1(x)  # shape unchanged: [10, 13, 13]
        x = F.relu(self.pooling(self.conv2(x)))
        x = self.resblock2(x)  # shape unchanged: [20, 5, 5]
        # Flatten to [batch_size, 500] for the fully connected layer
        x = x.view(batch_size, -1)
        # Return raw logits without a final ReLU: CrossEntropyLoss applies log-softmax itself
        x = self.fc1(x)
        return x

- Instantiate the model and define the loss function and optimizer

# Build the model and the loss
model = CNN_net()
# Pick a device: the first CUDA GPU if one is available, otherwise the CPU
device = torch.device("cuda:0" if torch.cuda.is_available() else "cpu")
print(torch.cuda.is_available())
# Move the model to the selected device
model.to(device)
criterion = nn.CrossEntropyLoss()
optimizer = optim.SGD(model.parameters(), lr=0.001, momentum=0.9)
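`nn.CrossEntropyLoss` expects raw scores (logits) and integer class indices, and applies log-softmax internally. The sketch below, using made-up logits for two samples and three classes, compares it with the same quantity computed by hand:

```python
import torch
import torch.nn as nn
import torch.nn.functional as F

logits = torch.tensor([[2.0, 0.5, -1.0],
                       [0.1, 0.2, 3.0]])  # made-up scores, 2 samples x 3 classes
targets = torch.tensor([0, 2])            # class indices, not one-hot vectors

loss = nn.CrossEntropyLoss()(logits, targets)

# Same value computed by hand: mean negative log-softmax of the target class
log_probs = F.log_softmax(logits, dim=1)
manual = -log_probs[torch.arange(2), targets].mean()
print(loss.item(), manual.item())
```

This is why the model's last layer should output unnormalized scores rather than probabilities.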

- Train (and test) the model

def train(epoch):

running_loss = 0.0

for batch_idx, (inputs, targets) in enumerate(trainloader):

inputs,targets = inputs.to(device),targets.to(device)

optimizer.zero_grad()

outputs = model(inputs)

loss = criterion(outputs, targets)

loss.backward()

optimizer.step()

#需要将张量转换为浮点数运算

running_loss += loss.item()

if batch_idx % 100 == 0:

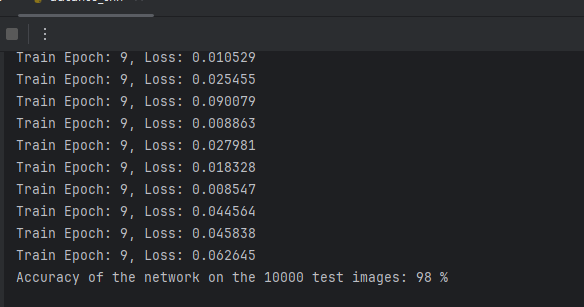

print('Train Epoch: {}, Loss: {:.6f}'.format(epoch, loss.item()))

running_loss = 0

def test(epoch):
    correct = 0
    total = 0
    # No gradients are needed for evaluation
    with torch.no_grad():
        for batch_idx, (inputs, targets) in enumerate(testloader):
            inputs, targets = inputs.to(device), targets.to(device)
            outputs = model(inputs)
            # The index of the largest logit is the predicted class
            _, predicted = torch.max(outputs, 1)
            total += targets.size(0)
            correct += (predicted == targets).sum().item()
    print('Accuracy of the network on the 10000 test images: %.2f %%' % (100 * correct / total))

Full code

# A more advanced CNN
import torch
import torch.nn as nn
import torch.nn.functional as F
import torchvision
import torchvision.transforms as transforms
import torch.optim as optim

# Prepare the dataset
batch_size = 64
# Named `transform` so that the `transforms` module is not shadowed.
# 0.1307 and 0.3081 are the mean and standard deviation of the MNIST training set.
transform = transforms.Compose([
    transforms.ToTensor(),
    transforms.Normalize((0.1307,), (0.3081,)),
])
trainset = torchvision.datasets.MNIST(root=r'../data/mnist',
                                      train=True,
                                      download=True,
                                      transform=transform)
trainloader = torch.utils.data.DataLoader(trainset, batch_size=batch_size, shuffle=True)
testset = torchvision.datasets.MNIST(root=r'../data/mnist',
                                     train=False,
                                     download=True,
                                     transform=transform)
testloader = torch.utils.data.DataLoader(testset, batch_size=batch_size, shuffle=False)

# Define the residual block
class ResidualBlock(nn.Module):
    def __init__(self, channels):
        super(ResidualBlock, self).__init__()
        self.conv1 = nn.Conv2d(channels, channels, kernel_size=3, padding=1, bias=False)
        self.conv2 = nn.Conv2d(channels, channels, kernel_size=3, padding=1, bias=False)

    def forward(self, x):
        out = F.relu(self.conv1(x))
        out = self.conv2(out)
        # Skip connection: add the input before the final activation
        return F.relu(out + x)

# Define the convolutional neural network
class CNN_net(nn.Module):
    def __init__(self):
        super(CNN_net, self).__init__()
        self.conv1 = nn.Conv2d(1, 10, kernel_size=3, bias=False)   # output: [10, 26, 26]
        self.conv2 = nn.Conv2d(10, 20, kernel_size=3, bias=False)  # output: [20, 11, 11], [20, 5, 5] after pooling
        self.resblock1 = ResidualBlock(10)
        self.resblock2 = ResidualBlock(20)
        self.pooling = nn.MaxPool2d(2, 2)  # halves the height and width
        self.fc1 = nn.Linear(500, 10)      # 20 * 5 * 5 = 500 input features

    def forward(self, x):
        batch_size = x.size(0)
        x = F.relu(self.pooling(self.conv1(x)))
        x = self.resblock1(x)
        x = F.relu(self.pooling(self.conv2(x)))
        x = self.resblock2(x)
        # Flatten to [batch_size, 500] for the fully connected layer
        x = x.view(batch_size, -1)
        # Return raw logits without a final ReLU: CrossEntropyLoss applies log-softmax itself
        x = self.fc1(x)
        return x

# Build the model and the loss
model = CNN_net()
# Pick a device: the first CUDA GPU if one is available, otherwise the CPU
device = torch.device("cuda:0" if torch.cuda.is_available() else "cpu")
print(torch.cuda.is_available())
# Move the model to the selected device
model.to(device)
criterion = nn.CrossEntropyLoss()
optimizer = optim.SGD(model.parameters(), lr=0.001, momentum=0.9)

def train(epoch):
    running_loss = 0.0
    for batch_idx, (inputs, targets) in enumerate(trainloader):
        inputs, targets = inputs.to(device), targets.to(device)
        optimizer.zero_grad()
        outputs = model(inputs)
        loss = criterion(outputs, targets)
        loss.backward()
        optimizer.step()
        # .item() converts the one-element loss tensor to a Python float
        running_loss += loss.item()
        # Report the average loss over the last 100 batches
        if batch_idx % 100 == 99:
            print('Train Epoch: {}, Batch: {}, Loss: {:.6f}'.format(epoch, batch_idx + 1, running_loss / 100))
            running_loss = 0.0

def test(epoch):
    correct = 0
    total = 0
    # No gradients are needed for evaluation
    with torch.no_grad():
        for batch_idx, (inputs, targets) in enumerate(testloader):
            inputs, targets = inputs.to(device), targets.to(device)
            outputs = model(inputs)
            # The index of the largest logit is the predicted class
            _, predicted = torch.max(outputs, 1)
            total += targets.size(0)
            correct += (predicted == targets).sum().item()
    print('Accuracy of the network on the 10000 test images: %.2f %%' % (100 * correct / total))

if __name__ == '__main__':
    for epoch in range(10):
        train(epoch)
        test(epoch)

Running the script prints the training loss every 100 batches and the test accuracy after each epoch.
