Softmax Sigmoid

Introduction to Deep Neural Networks

https://www.ritchieng.com/machine-learning/deep-learning/neural-nets/

Logistic Regression

https://www.cntk.ai/pythondocs/CNTK_103B_MNIST_LogisticRegression.html

A logistic regression (LR) network is a simple building block that has been effectively powering many ML applications in the past decade.

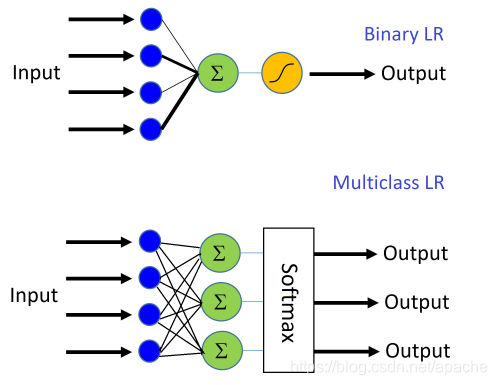

Binary Logistic Regression

Multinomial Logistic Regression

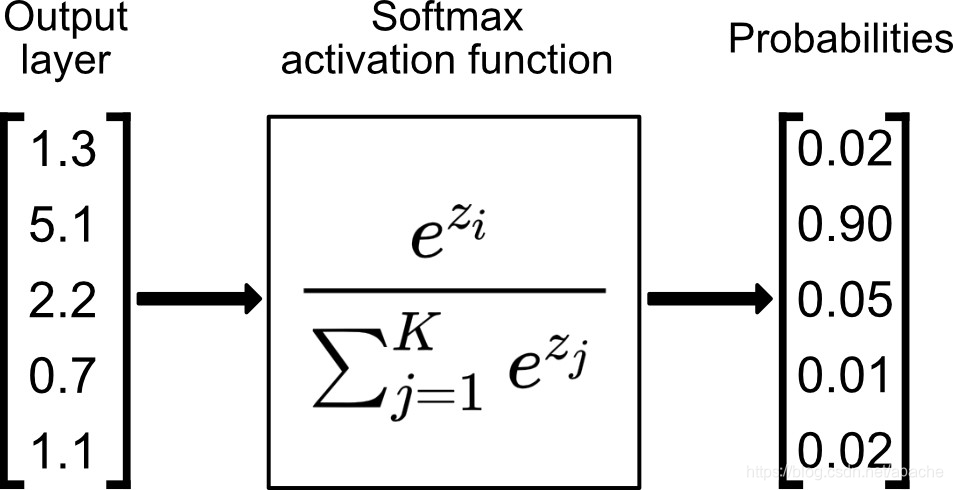

Softmax for multi-class classification

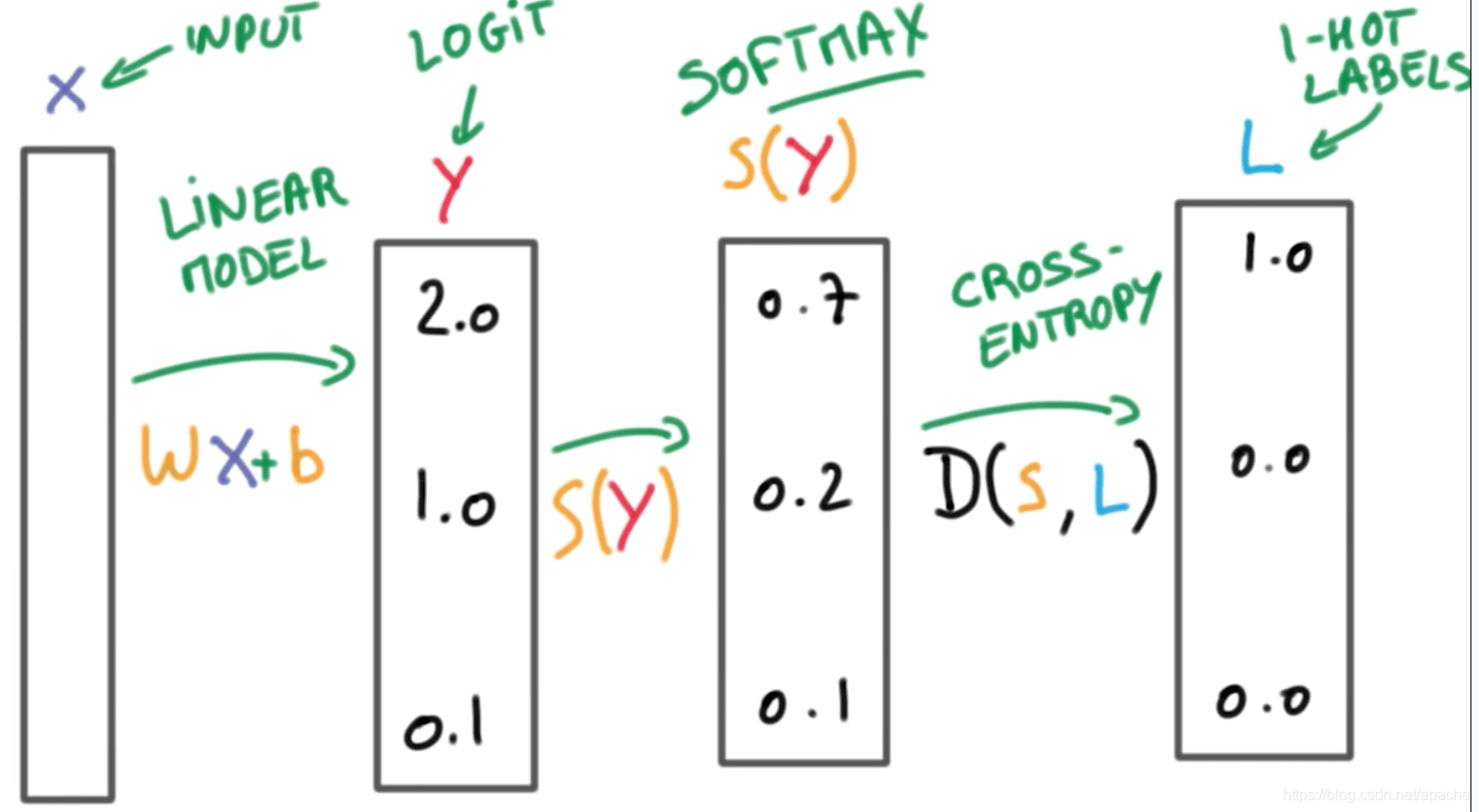

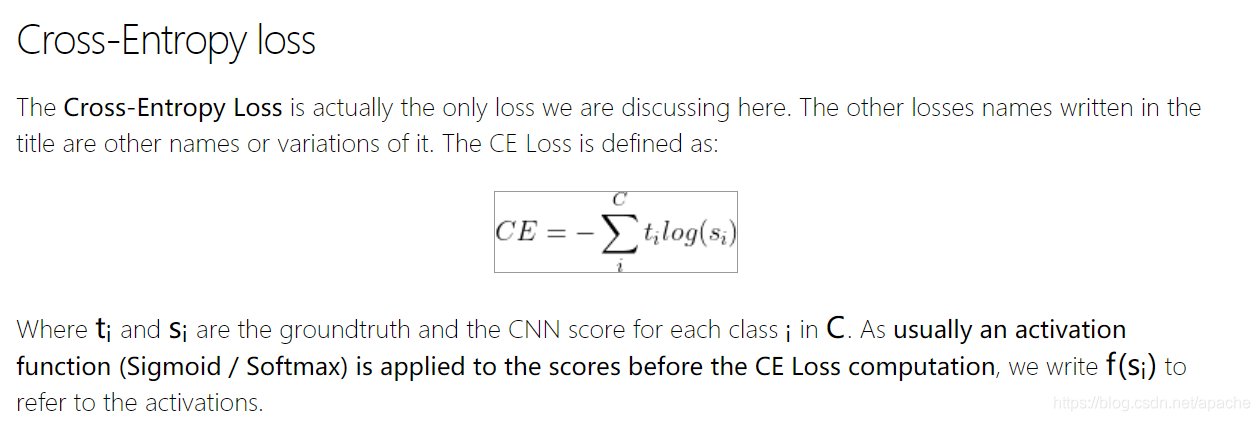

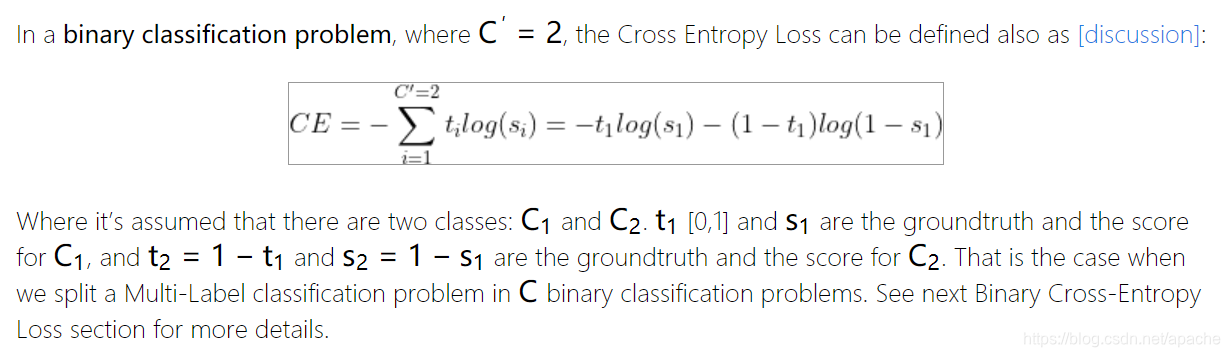
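As a minimal sketch of the softmax step: it exponentiates each score and divides by the sum, so the outputs are positive and add up to 1. The function name and the example scores below are illustrative assumptions, not taken from the linked pages.

```python
import math

def softmax(scores):
    """Map a list of real-valued scores to probabilities that sum to 1."""
    # Subtracting the max leaves the result unchanged but avoids overflow in exp().
    shift = max(scores)
    exps = [math.exp(s - shift) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

scores = [2.0, 1.0, 0.1]          # hypothetical network outputs for three classes
probs = softmax(scores)
print(probs)                      # three positive values that sum to 1
print(probs.index(max(probs)))    # index of the most likely class
```

Adding the same constant to every score leaves the probabilities unchanged, which is why the max-subtraction trick is safe.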

LOSS

When the multi-class problem reduces to binary classification, the cross-entropy loss is the same as the logistic regression loss.

https://gombru.github.io/2018/05/23/cross_entropy_loss/
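A short derivation of why the two losses coincide. The notation is introduced here for illustration: the two-class softmax takes logits $(z_0, z_1)$, the label is $y \in \{0, 1\}$, $\sigma$ is the sigmoid, and $z = z_1 - z_0$.

$$
p_1 = \frac{e^{z_1}}{e^{z_0} + e^{z_1}} = \frac{1}{1 + e^{-(z_1 - z_0)}} = \sigma(z), \qquad p_0 = 1 - p_1 = 1 - \sigma(z)
$$

$$
L_{\text{CE}} = -\sum_{k \in \{0, 1\}} \mathbb{1}[y = k] \log p_k = -\big[\, y \log \sigma(z) + (1 - y) \log\big(1 - \sigma(z)\big) \big]
$$

The right-hand side is the logistic regression (binary cross-entropy) loss on the single logit $z$, so only the difference between the two softmax logits matters.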

Ref

https://dataaspirant.com/difference-between-softmax-function-and-sigmoid-function/

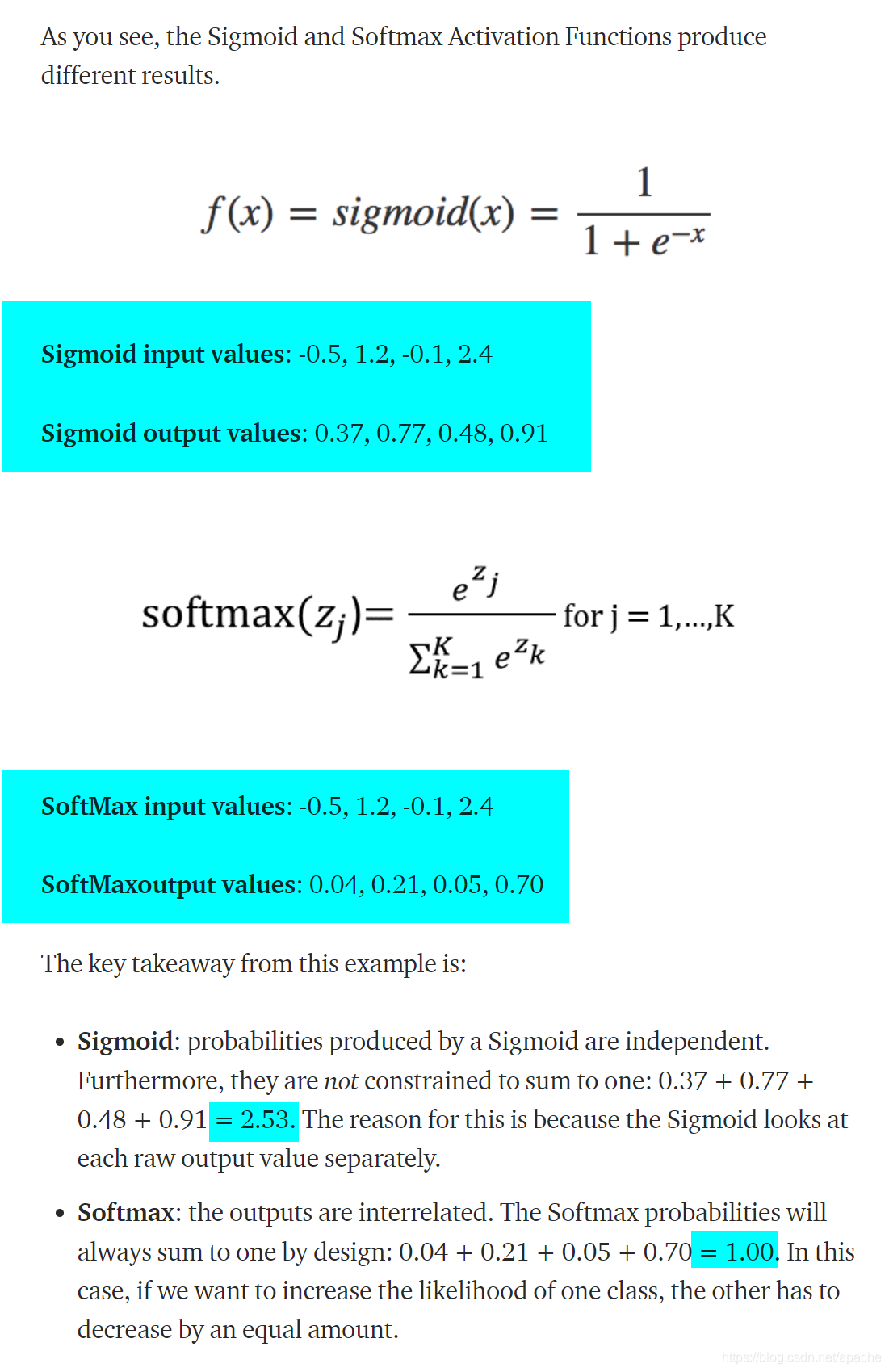

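This post covers two important activation functions in deep learning: Softmax and Sigmoid. Softmax is mainly used for multi-class classification, where it converts the network's outputs into a probability distribution. Sigmoid is commonly used for binary classification; its output lies between 0 and 1 and represents the probability of the class. The two are closely linked under the cross-entropy loss: when a multi-class problem reduces to two classes, the softmax loss is equivalent to the sigmoid loss.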
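The equivalence can also be checked numerically. The sketch below assumes a two-class softmax whose logits are `[0.0, z]`, so that index 1 plays the role of the positive class; all function names and the sample logit are hypothetical.

```python
import math

def sigmoid(z):
    """Logistic sigmoid: maps a real score to (0, 1)."""
    return 1.0 / (1.0 + math.exp(-z))

def softmax(scores):
    """Softmax with max-subtraction for numerical stability."""
    shift = max(scores)
    exps = [math.exp(s - shift) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def binary_cross_entropy(p, y):
    """Logistic regression loss for one example; y is 0 or 1."""
    return -(y * math.log(p) + (1 - y) * math.log(1 - p))

def categorical_cross_entropy(probs, label):
    """Cross-entropy for one example with a one-hot target at index `label`."""
    return -math.log(probs[label])

z = 1.3  # hypothetical logit for the positive class
for y in (0, 1):
    bce = binary_cross_entropy(sigmoid(z), y)
    cce = categorical_cross_entropy(softmax([0.0, z]), y)
    # The two losses agree up to floating-point rounding.
    print(y, bce, cce, math.isclose(bce, cce))
```

Fixing one logit at 0 loses no generality here, because softmax depends only on the difference between the two logits.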
