Code: https://github.com/xinyu-ch/faster-rcnn.pytorch/blob/master/lib/model/faster_rcnn/resnet.py

Preface

For the underlying theory, see: https://www.cnblogs.com/shouhuxianjian/p/7766441.html

1 Overall structure

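Below is the main body of ResNet. The __init__ function selects which ResNet variant to build: block is the building block (BasicBlock, the basic structure, or Bottleneck, the bottleneck structure), and layers gives the number of blocks in each of the four stages ([2, 2, 2, 2] for res18, [3, 4, 6, 3] with BasicBlock for res34, [3, 4, 6, 3] with Bottleneck for res50, [3, 4, 23, 3] for res101; see the detailed ResNet architecture for the full table). The default number of classes, num_classes, is 1000. _make_layer builds a stage out of structurally similar blocks; the downsample module it creates is handed to the first block of the stage, and both the basic structure and the bottleneck structure described later accept it. self.modules() iterates over every module in the model, and the for loop here initializes the weights of the convolution layers and the batch normalization layers. layer1, layer2, layer3, and layer4 are residual stages with the same structure but different input and output channel counts; they are explained in detail below.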
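The convolution initialization in the `for m in self.modules()` loop draws weights from a normal distribution with standard deviation sqrt(2 / n), where n = kernel_height * kernel_width * out_channels. The following is a minimal sketch of that arithmetic in plain Python (no PyTorch); the helper name `conv_init_std` is hypothetical and is not part of the repository.

```python
import math


def conv_init_std(kernel_h, kernel_w, out_channels):
    """Standard deviation used for a conv layer: sqrt(2 / n), n = kh * kw * out_channels."""
    n = kernel_h * kernel_w * out_channels
    return math.sqrt(2.0 / n)


# conv1 in the listing: 7x7 kernel, 64 output channels, so n = 3136.
std_conv1 = conv_init_std(7, 7, 64)

# A 3x3 convolution with 512 output channels, so n = 4608.
std_3x3_512 = conv_init_std(3, 3, 512)

print(std_conv1, std_3x3_512)
```

Layers with larger kernels or more output channels get a smaller standard deviation, which keeps the scale of the activations roughly comparable from layer to layer.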

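Layer composition of Res101 (each Bottleneck block contains 3 convolutions): layer 1 is the conv1 convolution + bn + relu + maxpool; layers 2 to 10 (3×3) are layer1; layers 11 to 22 (4×3) are layer2; layers 23 to 91 (23×3) are layer3; layers 92 to 100 (3×3) are layer4; layer 101 is the avgpool layer + fc layer.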
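The count above can be checked with a few lines of Python. This is a sketch under the usual naming convention, which counts conv1, the convolutions inside the blocks, and the final fc layer, and leaves out the 1x1 convolutions in the downsample branches; `count_weighted_layers` is a hypothetical helper name.

```python
def count_weighted_layers(blocks_per_stage, convs_per_block):
    """Count conv1 + all convolutions inside the residual blocks + the fc layer."""
    stem = 1  # conv1
    body = sum(blocks_per_stage) * convs_per_block
    head = 1  # fc
    return stem + body + head


# Bottleneck has 3 convolutions per block, BasicBlock has 2.
res101 = count_weighted_layers([3, 4, 23, 3], convs_per_block=3)
res50 = count_weighted_layers([3, 4, 6, 3], convs_per_block=3)
res34 = count_weighted_layers([3, 4, 6, 3], convs_per_block=2)
res18 = count_weighted_layers([2, 2, 2, 2], convs_per_block=2)

print(res101, res50, res34, res18)
```

The same `layers` list [3, 4, 6, 3] yields 34 or 50 layers depending only on which block type is used.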

class ResNet(nn.Module):
    def __init__(self, block, layers, num_classes=1000):
        self.inplanes = 64
        super(ResNet, self).__init__()
        self.conv1 = nn.Conv2d(3, 64, kernel_size=7, stride=2, padding=3,
                               bias=False)
        self.bn1 = nn.BatchNorm2d(64)
        self.relu = nn.ReLU(inplace=True)
        self.maxpool = nn.MaxPool2d(kernel_size=3, stride=2, padding=0, ceil_mode=True)  # change
        self.layer1 = self._make_layer(block, 64, layers[0])
        self.layer2 = self._make_layer(block, 128, layers[1], stride=2)
        self.layer3 = self._make_layer(block, 256, layers[2], stride=2)
        self.layer4 = self._make_layer(block, 512, layers[3], stride=2)
        # it is slightly better whereas slower to set stride = 1
        # self.layer4 = self._make_layer(block, 512, layers[3], stride=1)
        self.avgpool = nn.AvgPool2d(7)
        self.fc = nn.Linear(512 * block.expansion, num_classes)

        for m in self.modules():
            if isinstance(m, nn.Conv2d):
                n = m.kernel_size[0] * m.kernel_size[1] * m.out_channels
                m.weight.data.normal_(0, math.sqrt(2. / n))
            elif isinstance(m, nn.BatchNorm2d):
                m.weight.data.fill_(1)
                m.bias.data.zero_()

    def _make_layer(self, block, planes, blocks, stride=1):
        downsample = None
        if stride != 1 or self.inplanes != planes * block.expansion:
            downsample = nn.Sequential(
                nn.Conv2d(self.inplanes, planes * block.expansion,
                          kernel_size=1, stride=stride, bias=False),
                nn.BatchNorm2d(planes * block.expansion),
            )

        layers = []
        layers.append(block(self.inplanes, planes, stride, downsample))
        self.inplanes = planes * block.expansion
        for i in range(1, blocks):
            layers.append(block(self.inplanes, planes))

        return nn.Sequential(*layers)

    def forward(self, x):
        x = self.conv1(x)
        x = self.bn1(x)
        x = self.relu(x)
        x = self.maxpool(x)

        x = self.layer1(x)
        x = self.layer2(x)
        x = self.layer3(x)
        x = self.layer4(x)

        x = self.avgpool(x)
        x = x.view(x.size(0), -1)
        x = self.fc(x)

        return x
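To see how the forward pass shrinks the feature map, the sketch below traces the side length of a square input through the stages using the standard output-size formulas for convolution and pooling. It is plain Python arithmetic rather than PyTorch, it assumes a 224x224 input for illustration, and the helper names (`conv_out`, `pool_out_ceil`, `trace_spatial_size`) are hypothetical.

```python
import math


def conv_out(size, kernel, stride, padding):
    """Output length of a convolution along one spatial axis (floor rounding)."""
    return (size + 2 * padding - kernel) // stride + 1


def pool_out_ceil(size, kernel, stride, padding):
    """Output length of a pooling layer with ceil_mode=True."""
    out = math.ceil((size + 2 * padding - kernel) / stride) + 1
    # With ceil rounding, the last window must still start inside the input
    # (or its left padding); otherwise it is dropped.
    if (out - 1) * stride >= size + padding:
        out -= 1
    return out


def trace_spatial_size(size):
    """Return the feature-map side length after each stage of the listing."""
    sizes = {}
    size = conv_out(size, kernel=7, stride=2, padding=3)
    sizes["conv1"] = size
    size = pool_out_ceil(size, kernel=3, stride=2, padding=0)
    sizes["maxpool"] = size
    # layer1 keeps the resolution; layer2..layer4 each halve it once, in their
    # first block, through a stride-2 1x1 convolution (Bottleneck.conv1 here).
    for name, stride in (("layer1", 1), ("layer2", 2), ("layer3", 2), ("layer4", 2)):
        size = conv_out(size, kernel=1, stride=stride, padding=0)
        sizes[name] = size
    # nn.AvgPool2d(7): the stride defaults to the kernel size.
    size = conv_out(size, kernel=7, stride=7, padding=0)
    sizes["avgpool"] = size
    return sizes


print(trace_spatial_size(224))
```

For a 224x224 input the trace is 112 after conv1, 56 after maxpool and layer1, then 28, 14, and 7 after layer2, layer3, and layer4, and 1 after avgpool, which is why the flattened vector fed to fc has exactly 512 * block.expansion elements.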

2 Basic residual block structures

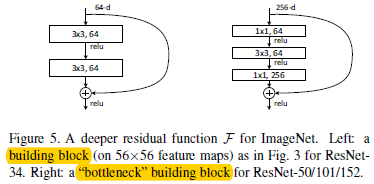

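ResNet mainly uses two building blocks: the basic structure and the bottleneck structure. In the figure, the left side is the basic structure, which is generally used in the shallower variants (res18 and res34); the right side is the bottleneck structure, which is generally used in the deeper variants (res50 and above), where it greatly reduces the number of parameters. As the figure shows, the two branches of the basic structure have the same dimensions at the addition and can be added directly; its expansion=1. In the bottleneck structure the output has 4 times as many channels as planes (expansion=4), so when the input and output dimensions differ, the input must first go through downsample before the two can be added.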
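The parameter saving can be made concrete with a quick count. The sketch below assumes a block whose input and output both have 256 channels and counts convolution weights only (the convolutions in this file use bias=False; batch normalization parameters are ignored). It compares two 3x3 convolutions at full width against the 1x1, 3x3, 1x1 bottleneck with planes=64; `conv_params` is a hypothetical helper.

```python
def conv_params(in_channels, out_channels, kernel):
    """Number of weights in a bias-free 2D convolution with a square kernel."""
    return in_channels * out_channels * kernel * kernel


# Two 3x3 convolutions that keep all 256 channels (basic-style block).
basic_style = 2 * conv_params(256, 256, 3)

# Bottleneck with planes=64: 1x1 reduce, 3x3 at reduced width, 1x1 expand.
bottleneck = (
    conv_params(256, 64, 1)
    + conv_params(64, 64, 3)
    + conv_params(64, 256, 1)
)

print(basic_style, bottleneck, basic_style / bottleneck)
```

Under these assumptions the bottleneck needs roughly one seventeenth of the weights, because the expensive 3x3 convolution runs on 64 channels instead of 256.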

Basic structure code:

class BasicBlock(nn.Module):
    expansion = 1

    def __init__(self, inplanes, planes, stride=1, downsample=None):
        super(BasicBlock, self).__init__()
        self.conv1 = conv3x3(inplanes, planes, stride)
        self.bn1 = nn.BatchNorm2d(planes)
        self.relu = nn.ReLU(inplace=True)
        self.conv2 = conv3x3(planes, planes)
        self.bn2 = nn.BatchNorm2d(planes)
        self.downsample = downsample
        self.stride = stride

    def forward(self, x):
        residual = x

        out = self.conv1(x)
        out = self.bn1(out)
        out = self.relu(out)

        out = self.conv2(out)
        out = self.bn2(out)

        if self.downsample is not None:
            residual = self.downsample(x)

        out += residual
        out = self.relu(out)

        return out

Bottleneck structure:

class Bottleneck(nn.Module):
    expansion = 4

    def __init__(self, inplanes, planes, stride=1, downsample=None):
        super(Bottleneck, self).__init__()
        self.conv1 = nn.Conv2d(inplanes, planes, kernel_size=1, stride=stride, bias=False)  # change
        self.bn1 = nn.BatchNorm2d(planes)
        self.conv2 = nn.Conv2d(planes, planes, kernel_size=3, stride=1,  # change
                               padding=1, bias=False)
        self.bn2 = nn.BatchNorm2d(planes)
        self.conv3 = nn.Conv2d(planes, planes * 4, kernel_size=1, bias=False)
        self.bn3 = nn.BatchNorm2d(planes * 4)
        self.relu = nn.ReLU(inplace=True)
        self.downsample = downsample
        self.stride = stride

    def forward(self, x):
        residual = x

        out = self.conv1(x)
        out = self.bn1(out)
        out = self.relu(out)

        out = self.conv2(out)
        out = self.bn2(out)
        out = self.relu(out)

        out = self.conv3(out)
        out = self.bn3(out)

        if self.downsample is not None:
            residual = self.downsample(x)

        out += residual
        out = self.relu(out)

        return out

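The code matches the structure shown in the figure and is worth reading closely. The bottleneck structure uses downsample, but within each of layer1, layer2, layer3, and layer4 only the first block uses it.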
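Which blocks actually receive a downsample module follows from the condition in _make_layer (stride != 1 or self.inplanes != planes * block.expansion). The sketch below replays that bookkeeping in plain Python without building any network; `downsample_plan` is a hypothetical helper, and the stage widths and strides are copied from the ResNet listing.

```python
def downsample_plan(expansion, blocks_per_stage):
    """For each stage, list per block whether it gets a downsample module."""
    inplanes = 64  # channels coming out of conv1 / maxpool
    stage_planes = (64, 128, 256, 512)
    stage_strides = (1, 2, 2, 2)
    plan = []
    for planes, blocks, stride in zip(stage_planes, blocks_per_stage, stage_strides):
        out_channels = planes * expansion
        needs_downsample = stride != 1 or inplanes != out_channels
        # Only the first block of a stage is built with the downsample module;
        # the remaining blocks already see matching input and output shapes.
        plan.append([needs_downsample] + [False] * (blocks - 1))
        inplanes = out_channels
    return plan


res101_plan = downsample_plan(expansion=4, blocks_per_stage=[3, 4, 23, 3])
res18_plan = downsample_plan(expansion=1, blocks_per_stage=[2, 2, 2, 2])

print([stage[0] for stage in res101_plan])
print([stage[0] for stage in res18_plan])
```

With Bottleneck, even layer1 needs downsample in its first block because the channel count grows from 64 to 256. With BasicBlock, layer1 needs none, while layer2 through layer4 still need it in their first block because of the stride of 2.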

3 Faster R-CNN with a ResNet backbone

__init__ initializes the model and sets the related parameters. _init_modules initializes the model's modules.

class resnet(_fasterRCNN):
    def __init__(self, classes, num_layers=101, pretrained=False, class_agnostic=False):
        self.model_path = 'data/pretrained_model/resnet101_caffe.pth'
        self.dout_base_model = 1024
        self.pretrained = pretrained
        self.class_agnostic = class_agnostic

        _fasterRCNN.__init__(self, classes, class_agnostic)

    def _init_modules(self):
        resnet = resnet101()

        if self.pretrained == True:
            print("Loading pretrained weights from %s" %(self.model_path))
            state_dict = torch.load(self.model_path)
            resnet.load_state_dict({k:v for k,v in state_dict.items() if k in resnet.state_dict()})

        # Build resnet.
        self.RCNN_base = nn.Sequential(resnet.conv1, resnet.bn1,resnet.relu,
            resnet.maxpool,resnet.layer1,resnet.layer2,resnet.layer3)

        self.RCNN_top = nn.Sequential(resnet.layer4)

        self.RCNN_cls_score = nn.Linear(2048, self.n_classes)
        if self.class_agnostic:
            self.RCNN_bbox_pred = nn.Linear(2048, 4)
        else:
            self.RCNN_bbox_pred = nn.Linear(2048, 4 * self.n_classes)

        # Fix blocks
        for p in self.RCNN_base[0].parameters(): p.requires_grad=False
        for p in self.RCNN_base[1].parameters(): p.requires_grad=False

        assert (0 <= cfg.RESNET.FIXED_BLOCKS < 4)
        if cfg.RESNET.FIXED_BLOCKS >= 3:
            for p in self.RCNN_base[6].parameters(): p.requires_grad=False
        if cfg.RESNET.FIXED_BLOCKS >= 2:
            for p in self.RCNN_base[5].parameters(): p.requires_grad=False
        if cfg.RESNET.FIXED_BLOCKS >= 1:
            for p in self.RCNN_base[4].parameters(): p.requires_grad=False

        def set_bn_fix(m):
            classname = m.__class__.__name__
            if classname.find('BatchNorm') != -1:
                for p in m.parameters(): p.requires_grad=False

        self.RCNN_base.apply(set_bn_fix)
        self.RCNN_top.apply(set_bn_fix)

    def train(self, mode=True):
        # Override train so that the training mode is set as we want
        nn.Module.train(self, mode)
        if mode:
            # Set fixed blocks to be in eval mode
            self.RCNN_base.eval()
            self.RCNN_base[5].train()
            self.RCNN_base[6].train()

            def set_bn_eval(m):
                classname = m.__class__.__name__
                if classname.find('BatchNorm') != -1:
                    m.eval()

            self.RCNN_base.apply(set_bn_eval)
            self.RCNN_top.apply(set_bn_eval)

    def _head_to_tail(self, pool5):
        fc7 = self.RCNN_top(pool5).mean(3).mean(2)
        return fc7
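The "Fix blocks" logic in _init_modules indexes into RCNN_base by position, which is easy to misread. The sketch below maps those indices back to module names and lists which ones have their parameters frozen for a given value of cfg.RESNET.FIXED_BLOCKS. It is plain Python with a hypothetical helper `frozen_base_modules`, and it does not cover the separate set_bn_fix pass, which additionally freezes every BatchNorm parameter in RCNN_base and RCNN_top.

```python
# Order of the modules inside RCNN_base, as built in _init_modules.
BASE_MODULES = ["conv1", "bn1", "relu", "maxpool", "layer1", "layer2", "layer3"]


def frozen_base_modules(fixed_blocks):
    """Names of the RCNN_base modules frozen by the 'Fix blocks' section."""
    assert 0 <= fixed_blocks < 4
    frozen = [0, 1]  # conv1 and bn1 are always frozen
    # layer k sits at index 3 + k, and FIXED_BLOCKS >= k freezes it.
    frozen += [3 + k for k in range(1, fixed_blocks + 1)]
    return [BASE_MODULES[i] for i in frozen]


for fixed_blocks in range(4):
    print(fixed_blocks, frozen_base_modules(fixed_blocks))
```

Indices 2 and 3 (relu and maxpool) never appear because those modules have no parameters. The overridden train() keeps the same picture at run time: it puts RCNN_base in eval mode and then switches only layer2 and layer3 (indices 5 and 6) back to training mode.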

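This article takes a close look at the Res101 network structure used in Faster R-CNN, covering ResNet's basic and bottleneck structures and how they are applied in Faster R-CNN. It walks through the code to analyze the layer composition of Res101, such as conv1 and layer1 through layer4, and the role of downsample in the bottleneck structure.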
