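Training a Model with the torch API

Learning Objectives

This course introduces the APIs for common machine learning tasks. By the end, learners will be able to combine several torch APIs to train a model and then save and load it.

Key Topics

- Training a model with the torch API

Course Content

1 Training a Model with the torch API

1.1 Working with Data

PyTorch offers domain-specific libraries such as TorchText, TorchVision, and TorchAudio, all of which include datasets. This course uses the TorchVision library to load data.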

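The torchvision.datasets module contains Dataset objects for many real-world vision datasets such as CIFAR and COCO (the full list is in the TorchVision documentation). This course uses the FashionMNIST dataset. Every TorchVision Dataset accepts two arguments, transform and target_transform, which modify the samples and the labels respectively.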
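To make the transform and target_transform convention concrete, here is a minimal sketch of an in-memory dataset that follows it. ToyDataset, its data, and the two lambda transforms are hypothetical names made up for illustration; they are not part of TorchVision.

```python
import torch
from torch.utils.data import Dataset


class ToyDataset(Dataset):
    """Hypothetical in-memory dataset mirroring the TorchVision argument convention."""

    def __init__(self, samples, labels, transform=None, target_transform=None):
        self.samples = samples
        self.labels = labels
        self.transform = transform                # applied to each sample
        self.target_transform = target_transform  # applied to each label

    def __len__(self):
        return len(self.samples)

    def __getitem__(self, idx):
        x, y = self.samples[idx], self.labels[idx]
        if self.transform is not None:
            x = self.transform(x)
        if self.target_transform is not None:
            y = self.target_transform(y)
        return x, y


samples = torch.arange(12, dtype=torch.float32).reshape(4, 3)  # 4 samples, 3 features each
labels = [0, 1, 2, 1]

toy = ToyDataset(
    samples,
    labels,
    transform=lambda x: x / 10.0,                # scale the features
    target_transform=lambda y: torch.tensor(y),  # turn an int label into a tensor
)

x0, y0 = toy[1]
print(len(toy), x0, y0)
```

In the FashionMNIST calls used in this course, transform=ToTensor() plays the role of the sample transform, and no target_transform is passed, so labels are returned unchanged.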

import torch
from torch import nn
from torch.utils.data import DataLoader
from torchvision import datasets
from torchvision.transforms import ToTensor
import torch_npu  # Ascend NPU backend for PyTorch

Download the dataset with wget and extract it using the following commands:

!wget --no-check-certificate https://model-community-picture.obs.cn-north-4.myhuaweicloud.com/ascend-zone/notebook_datasets/2590e70a2bdb11f0825afa163edcddae/FashionMNIST.zip

!unzip FashionMNIST.zip

Next, select the device to run on and load the training and test splits of FashionMNIST. Because the archive was already downloaded and extracted, download is set to False.

device = torch.device(
    "mps" if torch.backends.mps.is_available()
    else "cuda" if torch.cuda.is_available()
    else "npu:0" if hasattr(torch_npu, "npu") and torch_npu.npu.is_available()
    else "cpu"
)
print(f"Using {device} device")

# Load the training split from the local copy under data/ (download=False).
training_data = datasets.FashionMNIST(
    root="data",
    train=True,
    download=False,
    transform=ToTensor(),
)

# Load the test split from the same local copy.
test_data = datasets.FashionMNIST(
    root="data",
    train=False,
    download=False,
    transform=ToTensor(),
)

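Pass the Dataset as an argument to DataLoader. This wraps the dataset in an iterable and supports automatic batching, sampling, shuffling, and multiprocess data loading. Here the batch size is 64, so each element of the DataLoader iterable returns a batch of 64 features and their labels.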

batch_size = 64

# Create the data loaders; only the training data is shuffled.
train_dataloader = DataLoader(training_data, batch_size=batch_size, shuffle=True, num_workers=0)
test_dataloader = DataLoader(test_data, batch_size=batch_size, shuffle=False, num_workers=0)

for X, y in test_dataloader:
    print(f"Shape of X [N, C, H, W]: {X.shape}")
    print(f"Shape of y: {y.shape} {y.dtype}")
    break

Shape of X [N, C, H, W]: torch.Size([64, 1, 28, 28])

Shape of y: torch.Size([64]) torch.int64
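The batching behaviour described above can be checked without downloading anything. The sketch below assumes a synthetic TensorDataset of 150 blank images as a stand-in for FashionMNIST; the size 150 is arbitrary and chosen so that the last batch is smaller than the others.

```python
import torch
from torch.utils.data import DataLoader, TensorDataset

# Synthetic stand-in for FashionMNIST: 150 grayscale 28x28 "images" with labels 0..9.
features = torch.zeros(150, 1, 28, 28)
targets = torch.arange(150) % 10
toy_data = TensorDataset(features, targets)

loader = DataLoader(toy_data, batch_size=64, shuffle=False)

# Collect the shape of every batch the loader yields.
batch_shapes = [(tuple(X.shape), tuple(y.shape)) for X, y in loader]
for x_shape, y_shape in batch_shapes:
    print(x_shape, y_shape)

print(len(loader))  # number of batches, not number of samples
```

The final batch holds the 22 leftover samples. Passing drop_last=True to DataLoader would discard that partial batch instead.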

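1.2 Creating the Model

To define a neural network in PyTorch, create a class that inherits from nn.Module. Define the layers of the network in the __init__ function, and specify how data passes through the network in the forward function. To accelerate operations in the neural network, move it to an accelerator such as CUDA, MPS, MTIA, or NPU. If an accelerator is available, it is used; otherwise the CPU is used.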

# Define the model
class NeuralNetwork(nn.Module):
    def __init__(self):
        super().__init__()
        self.flatten = nn.Flatten()
        self.linear_relu_stack = nn.Sequential(
            nn.Linear(28*28, 512),
            nn.ReLU(),
            nn.Linear(512, 512),
            nn.ReLU(),
            nn.Linear(512, 10)
        )

    def forward(self, x):
        x = self.flatten(x)
        return self.linear_relu_stack(x)

model = NeuralNetwork().to(device)
print(model)

[W compiler_depend.ts:623] Warning: expandable_segments currently defaults to false. You can enable this feature by `export PYTORCH_NPU_ALLOC_CONF = expandable_segments:True`. (function operator())

NeuralNetwork(
  (flatten): Flatten(start_dim=1, end_dim=-1)
  (linear_relu_stack): Sequential(
    (0): Linear(in_features=784, out_features=512, bias=True)
    (1): ReLU()
    (2): Linear(in_features=512, out_features=512, bias=True)
    (3): ReLU()
    (4): Linear(in_features=512, out_features=10, bias=True)
  )
)
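The printed structure also tells us how many trainable parameters the network has. As a rough check, the sketch below rebuilds only the linear_relu_stack part (under the local name stack, chosen here for illustration) and compares the parameter count against a hand calculation.

```python
from torch import nn

# Same layer sizes as the NeuralNetwork stack above, rebuilt here so the snippet runs on its own.
stack = nn.Sequential(
    nn.Linear(28 * 28, 512),
    nn.ReLU(),
    nn.Linear(512, 512),
    nn.ReLU(),
    nn.Linear(512, 10),
)

# Each Linear layer holds in_features * out_features weights plus out_features biases.
expected = (784 * 512 + 512) + (512 * 512 + 512) + (512 * 10 + 10)
total = sum(p.numel() for p in stack.parameters())
print(total, expected)
```

Both numbers come out to 669,706. ReLU and Flatten contribute no parameters.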

1.3 Training the Model

To train the model, a loss function and an optimizer are needed.

loss_fn = nn.CrossEntropyLoss()

optimizer = torch.optim.SGD(model.parameters(), lr=1e-3)
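nn.CrossEntropyLoss takes raw, unnormalized scores (logits) and integer class indices, so the model does not need a softmax layer at the end. The sketch below uses made-up logits for two samples to show that a model guessing uniformly over 10 classes has a loss of ln(10), roughly 2.30, which is close to the first loss value in the training log of this section.

```python
import math

import torch
from torch import nn

loss_fn = nn.CrossEntropyLoss()

# Raw, unnormalized scores (logits) for 2 samples and 10 classes.
# All-zero logits correspond to a uniform guess over the classes.
logits = torch.zeros(2, 10)
labels = torch.tensor([3, 7])  # class indices, dtype int64

uniform_loss = loss_fn(logits, labels).item()
print(uniform_loss, math.log(10))

# Raising the logit of the correct class lowers the loss.
logits[0, 3] = 5.0
logits[1, 7] = 5.0
confident_loss = loss_fn(logits, labels).item()
print(confident_loss)
```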

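In a single training loop, the model makes predictions on the training dataset (fed to it in batches) and backpropagates the prediction error to adjust the model's parameters.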

def train(dataloader, model, loss_fn, optimizer):
    size = len(dataloader.dataset)
    model.train()
    for batch, (X, y) in enumerate(dataloader):
        X, y = X.to(device), y.to(device)

        # Compute prediction error
        pred = model(X)
        loss = loss_fn(pred, y)

        # Backpropagation
        loss.backward()
        optimizer.step()
        optimizer.zero_grad()

        if batch % 100 == 0:
            loss, current = loss.item(), (batch + 1) * len(X)
            print(f"loss: {loss:>7f} [{current:>5d}/{size:>5d}]")
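The backward, step, zero_grad sequence in the loop is easier to follow on a problem small enough to compute by hand. The sketch below is a toy setup, not the course model: a single scalar parameter w with loss w squared, and a learning rate of 0.1 picked for round numbers.

```python
import torch

# One scalar parameter w with loss = w ** 2, so d(loss)/dw = 2 * w.
w = torch.tensor(3.0, requires_grad=True)
optimizer = torch.optim.SGD([w], lr=0.1)

loss = w ** 2
loss.backward()                 # fills w.grad with 2 * 3.0 = 6.0
grad_after_backward = w.grad.item()

optimizer.step()                # w <- w - lr * grad = 3.0 - 0.1 * 6.0
w_after_step = w.item()

optimizer.zero_grad()           # clear the gradient before the next batch
# Depending on the PyTorch version, the cleared gradient is either None or zero.
grad_cleared = w.grad is None or w.grad.item() == 0.0

print(grad_after_backward, w_after_step, grad_cleared)
```

Without the zero_grad call, the next backward pass would add its gradient to the old one, because PyTorch accumulates gradients by default.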

The model's performance can also be checked against the test dataset to confirm that it is learning.

def test(dataloader, model, loss_fn):
    size = len(dataloader.dataset)
    num_batches = len(dataloader)
    model.eval()
    test_loss, correct = 0, 0
    with torch.no_grad():
        for X, y in dataloader:
            X, y = X.to(device), y.to(device)
            pred = model(X)
            test_loss += loss_fn(pred, y).item()
            correct += (pred.argmax(1) == y).type(torch.float).sum().item()
    test_loss /= num_batches
    correct /= size
    print(f"Test Error: \n Accuracy: {(100*correct):>0.1f}%, Avg loss: {test_loss:>8f} \n")
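The accuracy line in test() packs several steps into one expression. Here is the same computation on a hand-written batch of logits; the values of pred and y are invented for illustration.

```python
import torch

# Logits for 4 samples and 3 classes; the largest value in each row is the predicted class.
pred = torch.tensor([
    [2.0, 0.1, 0.3],   # predicts class 0
    [0.2, 1.5, 0.1],   # predicts class 1
    [0.3, 0.2, 0.9],   # predicts class 2
    [1.1, 0.4, 0.2],   # predicts class 0
])
y = torch.tensor([0, 1, 1, 0])  # ground-truth labels

predicted_classes = pred.argmax(1)  # index of the largest logit per row
correct = (predicted_classes == y).type(torch.float).sum().item()
accuracy = correct / len(y)
print(predicted_classes.tolist(), correct, accuracy)
```

Three of the four predictions match the labels, so the accuracy is 0.75. In test(), the per-batch counts are summed and divided by the dataset size once at the end.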

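Training runs over several iterations (epochs). During each epoch the model adjusts its parameters to make better predictions. The model's accuracy and loss are printed after every epoch; the accuracy should generally rise and the loss should fall as training proceeds.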

epochs = 5
for t in range(epochs):
    print(f"Epoch {t+1}\n-------------------------------")
    train(train_dataloader, model, loss_fn, optimizer)
    test(test_dataloader, model, loss_fn)
print("Done!")

Epoch 1

-------------------------------

loss: 2.307599 [ 64/60000]

loss: 2.294127 [ 6464/60000]

loss: 2.280530 [12864/60000]

loss: 2.271653 [19264/60000]

loss: 2.261745 [25664/60000]

loss: 2.237861 [32064/60000]

loss: 2.214950 [38464/60000]

loss: 2.204939 [44864/60000]

loss: 2.205576 [51264/60000]

loss: 2.168304 [57664/60000]

Test Error:

Accuracy: 52.7%, Avg loss: 2.162874

Epoch 2

-------------------------------

loss: 2.179749 [ 64/60000]

loss: 2.168770 [ 6464/60000]

loss: 2.110500 [12864/60000]

loss: 2.094859 [19264/60000]

loss: 2.073327 [25664/60000]

loss: 2.050367 [32064/60000]

loss: 2.024948 [38464/60000]

loss: 1.984296 [44864/60000]

loss: 1.950111 [51264/60000]

loss: 1.959091 [57664/60000]

Test Error:

Accuracy: 61.2%, Avg loss: 1.896798

Epoch 3

-------------------------------

loss: 1.896815 [ 64/60000]

loss: 1.868473 [ 6464/60000]

loss: 1.896794 [12864/60000]

loss: 1.695260 [19264/60000]

loss: 1.778525 [25664/60000]

loss: 1.709584 [32064/60000]

loss: 1.585671 [38464/60000]

loss: 1.606815 [44864/60000]

loss: 1.555282 [51264/60000]

loss: 1.545494 [57664/60000]

Test Error:

Accuracy: 60.2%, Avg loss: 1.519633

Epoch 4

-------------------------------

loss: 1.516972 [ 64/60000]

loss: 1.509254 [ 6464/60000]

loss: 1.378001 [12864/60000]

loss: 1.427272 [19264/60000]

loss: 1.333346 [25664/60000]

loss: 1.245684 [32064/60000]

loss: 1.358380 [38464/60000]

loss: 1.363098 [44864/60000]

loss: 1.288136 [51264/60000]

loss: 1.267256 [57664/60000]

Test Error:

Accuracy: 62.8%, Avg loss: 1.247218

Epoch 5

-------------------------------

loss: 1.358569 [ 64/60000]

loss: 1.166384 [ 6464/60000]

loss: 1.063975 [12864/60000]

loss: 1.200364 [19264/60000]

loss: 1.147708 [25664/60000]

loss: 1.104832 [32064/60000]

loss: 1.006688 [38464/60000]

loss: 0.997465 [44864/60000]

loss: 1.162314 [51264/60000]

loss: 1.065279 [57664/60000]

Test Error:

Accuracy: 64.0%, Avg loss: 1.083212

Done!
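The sample counters in the log (64, 6464, ..., 57664) follow directly from the batch size and the logging condition in train(). The plain-Python sketch below reproduces them, taking the dataset size of 60000 from the log itself.

```python
import math

dataset_size = 60000   # FashionMNIST training samples, as shown in the log
batch_size = 64

num_batches = math.ceil(dataset_size / batch_size)
last_batch_size = dataset_size - (num_batches - 1) * batch_size

# train() prints when batch % 100 == 0 and reports (batch + 1) * len(X).
logged = [(b + 1) * batch_size for b in range(num_batches) if b % 100 == 0]

print(num_batches, last_batch_size)
print(logged)
```

Each epoch therefore has 938 batches, the last of which holds only 32 samples, and ten loss lines are printed per epoch.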

1.4 Saving the Model

A common way to save a model is to serialize its internal state dictionary, which contains the model's parameters.

torch.save(model.state_dict(), "model.pth")

print("Saved PyTorch Model State to model.pth")

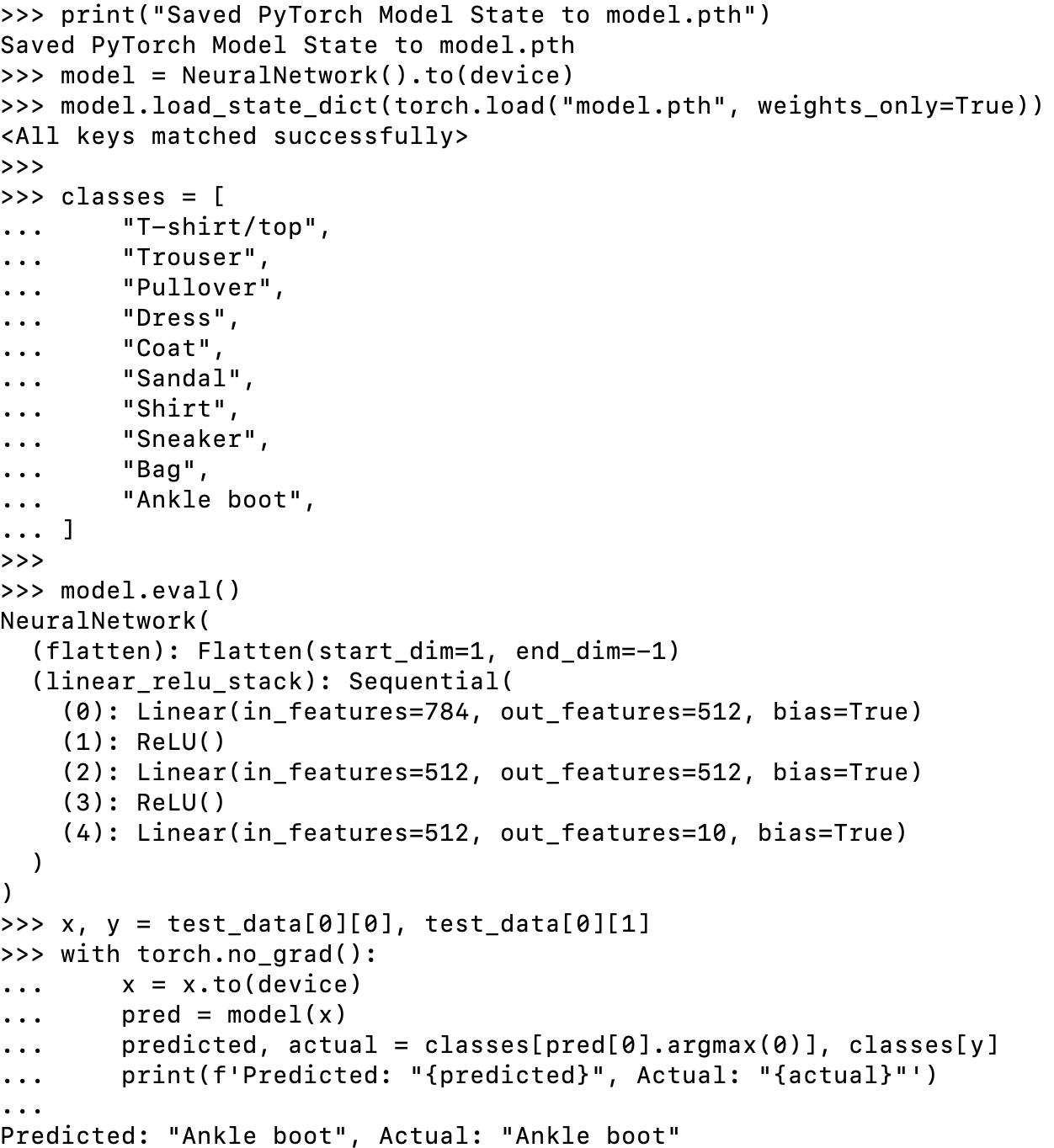
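To see what a state dictionary actually holds, the sketch below lists the entries of a small model. The model tiny and its layer sizes are hypothetical and chosen only to keep the output short.

```python
from torch import nn

# Small hypothetical model; the layer sizes are chosen only for illustration.
tiny = nn.Sequential(nn.Linear(4, 3), nn.ReLU(), nn.Linear(3, 2))

state = tiny.state_dict()
# Map each parameter name to the shape of its tensor.
shapes = {name: tuple(tensor.shape) for name, tensor in state.items()}
for name, shape in shapes.items():
    print(name, shape)
```

Only layers with parameters appear, keyed by their position in the Sequential container. The ReLU at index 1 has no entry.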

1.5 Loading the Model

Loading a model involves re-creating the model structure and then loading the state dictionary into it.

model = NeuralNetwork().to(device)

model.load_state_dict(torch.load("model.pth", weights_only=True))
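A quick way to confirm that a save and load round trip preserved the weights is to compare the outputs of the original and the restored model on the same input. The sketch below does this with a small hypothetical network (make_model) and a temporary file, so it does not touch model.pth. The map_location="cpu" argument is an addition not used in the course code; it asks torch.load to place the tensors on the CPU.

```python
import os
import tempfile

import torch
from torch import nn


def make_model():
    # Hypothetical small network; any nn.Module with the same structure works.
    return nn.Sequential(nn.Linear(4, 3), nn.ReLU(), nn.Linear(3, 2))


original = make_model()
x = torch.ones(1, 4)

with tempfile.TemporaryDirectory() as tmp:
    path = os.path.join(tmp, "tiny.pth")
    torch.save(original.state_dict(), path)

    restored = make_model()  # fresh random weights
    # map_location="cpu" loads the tensors onto the CPU regardless of where they were saved.
    state = torch.load(path, map_location="cpu", weights_only=True)
    result = restored.load_state_dict(state)

original.eval()
restored.eval()
with torch.no_grad():
    same_output = torch.equal(original(x), restored(x))

print(result, same_output)
```

load_state_dict returns an object listing missing_keys and unexpected_keys; both are empty when the saved dictionary matches the model structure exactly.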

The model can now be used to make predictions.

classes = [
    "T-shirt/top",
    "Trouser",
    "Pullover",
    "Dress",
    "Coat",
    "Sandal",
    "Shirt",
    "Sneaker",
    "Bag",
    "Ankle boot",
]

model.eval()
x, y = test_data[0][0], test_data[0][1]
with torch.no_grad():
    x = x.to(device)
    pred = model(x)
    predicted, actual = classes[pred[0].argmax(0)], classes[y]
    print(f'Predicted: "{predicted}", Actual: "{actual}"')

Predicted: "Ankle boot", Actual: "Ankle boot"
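The model output is a row of logits, and argmax only tells us which class scored highest. If a confidence value is also wanted, one option is to apply softmax first. The sketch below uses made-up logits and a shortened class list rather than the trained model.

```python
import torch

classes = ["T-shirt/top", "Trouser", "Pullover"]  # shortened list, for illustration only

# Hypothetical logits for one sample, shaped [1, num_classes] like the model output.
pred = torch.tensor([[1.0, 3.0, 0.5]])

probs = torch.softmax(pred, dim=1)       # each row now sums to 1
confidence, index = probs[0].max(dim=0)  # highest probability and its class index
print(classes[index.item()], round(confidence.item(), 3))
```

Softmax does not change which class wins, so the predicted label is the same as with argmax on the raw logits.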
