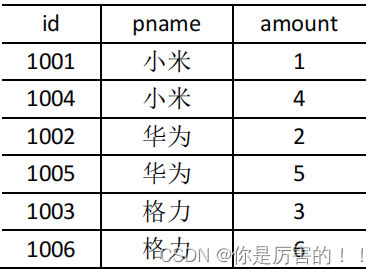

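Main work on the Map side: tag each key/value pair coming from a different table or file so that records from different sources can be told apart. Then emit the join field as the key, and the remaining fields plus the newly added tag as the value. Main work on the Reduce side: by the time the data reaches the Reduce side, grouping by the join field is already done, so within each group we only need to separate the records that came from different files (they were tagged in the Map phase) and then merge them.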
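The tag, group, and merge flow described above can be sketched in plain Java without any Hadoop classes. This is only an illustration under stated assumptions: the class `ReduceJoinSketch`, its method names, and the sample rows are made up for this sketch, and a `TreeMap` stands in for the grouping that the shuffle performs.

```java
import java.util.ArrayList;
import java.util.Arrays;
import java.util.List;
import java.util.Map;
import java.util.TreeMap;

// Hypothetical plain-Java simulation of a reduce-side join (no Hadoop involved).
final class ReduceJoinSketch {

    // "Map side": tag every record with its source and key it by the join field (pid).
    // Each value is {tag, payload}; the TreeMap imitates the shuffle's grouping by key.
    static Map<String, List<String[]>> mapAndShuffle(List<String> orders, List<String> products) {
        Map<String, List<String[]>> groups = new TreeMap<>();
        for (String line : orders) {                 // assumed layout: id \t pid \t amount
            String[] f = line.split("\t");
            groups.computeIfAbsent(f[1], k -> new ArrayList<>())
                  .add(new String[] {"order", f[0] + "\t" + f[2]});
        }
        for (String line : products) {               // assumed layout: pid \t pname
            String[] f = line.split("\t");
            groups.computeIfAbsent(f[0], k -> new ArrayList<>())
                  .add(new String[] {"pd", f[1]});
        }
        return groups;
    }

    // "Reduce side": inside each group, separate the records by tag, then merge them.
    static List<String> reduce(Map<String, List<String[]>> groups) {
        List<String> out = new ArrayList<>();
        for (List<String[]> group : groups.values()) {
            String pname = "";
            List<String> orderParts = new ArrayList<>();
            for (String[] tagged : group) {
                if ("order".equals(tagged[0])) {
                    orderParts.add(tagged[1]);
                } else {
                    pname = tagged[1];
                }
            }
            for (String part : orderParts) {
                String[] f = part.split("\t");       // f[0] = id, f[1] = amount
                out.add(f[0] + "\t" + pname + "\t" + f[1]);
            }
        }
        return out;
    }

    public static void main(String[] args) {
        // Made-up sample rows, used only for illustration.
        List<String> orders = Arrays.asList("1001\t01\t1", "1002\t02\t2", "1004\t01\t4");
        List<String> products = Arrays.asList("01\tXiaomi", "02\tHuawei");
        reduce(mapAndShuffle(orders, products)).forEach(System.out::println);
    }
}
```

In the real job the grouping is done by the framework between Map and Reduce; the sketch only makes visible which work belongs to which side.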

(1) Requirements

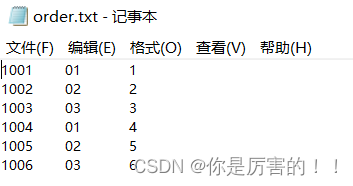

Format and contents of the two files

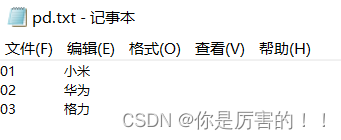

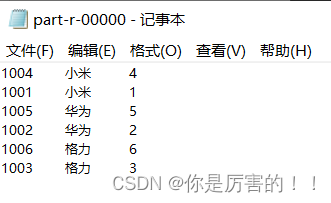

Final output:

(2) Requirements analysis

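The join condition is used as the Map output key, so that the records of both tables that satisfy the Join condition, each carrying information about the file it came from, are sent to the same ReduceTask. The data is then stitched together in Reduce.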

(3) Code implementation

The implementation uses a Maven project.

pom.xml configuration file (adds the dependencies and the packaging plugin)

<?xml version="1.0" encoding="UTF-8"?>
<project xmlns="http://maven.apache.org/POM/4.0.0"
         xmlns:xsi="http://www.w3.org/2001/XMLSchema-instance"
         xsi:schemaLocation="http://maven.apache.org/POM/4.0.0 http://maven.apache.org/xsd/maven-4.0.0.xsd">
    <modelVersion>4.0.0</modelVersion>

    <groupId>org.hadoop</groupId>
    <artifactId>MapReduceDemo</artifactId>
    <version>1.0-SNAPSHOT</version>

    <properties>
        <maven.compiler.source>8</maven.compiler.source>
        <maven.compiler.target>8</maven.compiler.target>
        <project.build.sourceEncoding>UTF-8</project.build.sourceEncoding>
    </properties>

    <dependencies>
        <dependency>
            <groupId>org.apache.hadoop</groupId>
            <artifactId>hadoop-client</artifactId>
            <version>3.1.3</version>
        </dependency>
        <dependency>
            <groupId>junit</groupId>
            <artifactId>junit</artifactId>
            <version>4.12</version>
        </dependency>
        <dependency>
            <groupId>org.slf4j</groupId>
            <artifactId>slf4j-log4j12</artifactId>
            <version>1.7.30</version>
        </dependency>
    </dependencies>

    <build>
        <plugins>
            <plugin>
                <artifactId>maven-compiler-plugin</artifactId>
                <version>3.6.1</version>
                <configuration>
                    <source>1.8</source>
                    <target>1.8</target>
                </configuration>
            </plugin>
            <plugin>
                <artifactId>maven-assembly-plugin</artifactId>
                <configuration>
                    <descriptorRefs>
                        <descriptorRef>jar-with-dependencies</descriptorRef>
                    </descriptorRefs>
                </configuration>
                <executions>
                    <execution>
                        <id>make-assembly</id>
                        <phase>package</phase>
                        <goals>
                            <goal>single</goal>
                        </goals>
                    </execution>
                </executions>
            </plugin>
        </plugins>
    </build>
</project>

(1) Write the TableBean class

package com.hadoop.mapreduce.reduceJoin;

import org.apache.hadoop.io.Writable;

import java.io.DataInput;
import java.io.DataOutput;
import java.io.IOException;

/**
 * @author codestart
 * @create 2023-06-21 10:41
 */
public class TableBean implements Writable {

    // Fields to create: id, pid, amount, pname
    private String id;     // order id
    private String pid;    // product id
    private int amount;    // order amount
    private String pname;  // product name
    private String flag;   // marks which table the record came from

    // No-arg constructor, needed so the framework can instantiate the bean when deserializing
    public TableBean() {
    }

    public String getId() {
        return id;
    }

    public void setId(String id) {
        this.id = id;
    }

    public String getPid() {
        return pid;
    }

    public void setPid(String pid) {
        this.pid = pid;
    }

    public int getAmount() {
        return amount;
    }

    public void setAmount(int amount) {
        this.amount = amount;
    }

    public String getPname() {
        return pname;
    }

    public void setPname(String pname) {
        this.pname = pname;
    }

    public String getFlag() {
        return flag;
    }

    public void setFlag(String flag) {
        this.flag = flag;
    }

    // Serialization: the field order here must match readFields exactly
    @Override
    public void write(DataOutput out) throws IOException {
        out.writeUTF(id);
        out.writeUTF(pid);
        out.writeInt(amount);
        out.writeUTF(pname);
        out.writeUTF(flag);
    }

    // Deserialization: read the fields in the same order they were written
    @Override
    public void readFields(DataInput in) throws IOException {
        this.id = in.readUTF();
        this.pid = in.readUTF();
        this.amount = in.readInt();
        this.pname = in.readUTF();
        this.flag = in.readUTF();
    }

    // Format of each line in the final output: id, pname, amount
    @Override
    public String toString() {
        return id + '\t' + pname + '\t' + amount;
    }
}
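Two details of `write` and `readFields` are easy to miss: the fields must be read back in the order they were written, and `writeUTF` throws a `NullPointerException` when it is given `null`, which is presumably why the Mapper below fills the unused String fields with `""`. The following stand-alone sketch uses only `java.io`; the class `FieldOrderSketch` and its `roundTrip` helper are hypothetical names introduced for illustration, not part of the project.

```java
import java.io.ByteArrayInputStream;
import java.io.ByteArrayOutputStream;
import java.io.DataInput;
import java.io.DataInputStream;
import java.io.DataOutput;
import java.io.DataOutputStream;
import java.io.IOException;
import java.io.UncheckedIOException;

// Hypothetical stand-in for TableBean that needs no Hadoop dependency.
final class FieldOrderSketch {
    String id = "";
    String pid = "";
    int amount;
    String pname = "";
    String flag = "";

    // Same field order as TableBean.write in the article.
    void write(DataOutput out) throws IOException {
        out.writeUTF(id);
        out.writeUTF(pid);
        out.writeInt(amount);
        out.writeUTF(pname);
        out.writeUTF(flag);
    }

    // Reads the fields back in exactly the order they were written.
    void readFields(DataInput in) throws IOException {
        id = in.readUTF();
        pid = in.readUTF();
        amount = in.readInt();
        pname = in.readUTF();
        flag = in.readUTF();
    }

    // Serializes src into a byte array and deserializes it into a fresh object.
    static FieldOrderSketch roundTrip(FieldOrderSketch src) {
        try {
            ByteArrayOutputStream buffer = new ByteArrayOutputStream();
            try (DataOutputStream out = new DataOutputStream(buffer)) {
                src.write(out);
            }
            FieldOrderSketch copy = new FieldOrderSketch();
            try (DataInputStream in = new DataInputStream(
                    new ByteArrayInputStream(buffer.toByteArray()))) {
                copy.readFields(in);
            }
            return copy;
        } catch (IOException e) {
            throw new UncheckedIOException(e);
        }
    }

    public static void main(String[] args) {
        FieldOrderSketch order = new FieldOrderSketch();
        order.id = "1001";
        order.pid = "01";
        order.amount = 1;
        order.flag = "order";          // pname stays "" rather than null
        FieldOrderSketch copy = roundTrip(order);
        System.out.println(copy.id + "\t" + copy.pid + "\t" + copy.amount + "\t" + copy.flag);
    }
}
```

Swapping two lines in `readFields` (for example reading `amount` before `pid`) would corrupt the values or fail at read time, so it is worth keeping the two methods visually aligned.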

(2) Write the Mapper class

package com.hadoop.mapreduce.reduceJoin;

import org.apache.hadoop.io.LongWritable;
import org.apache.hadoop.io.Text;
import org.apache.hadoop.mapreduce.Mapper;
import org.apache.hadoop.mapreduce.lib.input.FileSplit;

import java.io.IOException;

/**
 * @author codestart
 * @create 2023-06-21 10:56
 */
public class TableMapper extends Mapper<LongWritable, Text, Text, TableBean> {

    private String filename;
    private Text outK = new Text();
    private TableBean outV = new TableBean();

    @Override
    protected void setup(Context context) throws IOException, InterruptedException {
        // Get the input split; its file name tells us which table this task is reading
        FileSplit split = (FileSplit) context.getInputSplit();
        filename = split.getPath().getName();
    }

    @Override
    protected void map(LongWritable key, Text value, Context context) throws IOException, InterruptedException {
        // 1. Read one line and split it on tabs
        String line = value.toString();
        String[] fields = line.split("\t");

        // 2. Decide which file the line came from
        if (filename.contains("order")) { // order table: id, pid, amount
            // Populate the output key and value
            outK.set(fields[1]);
            outV.setPid(fields[1]);
            outV.setId(fields[0]);
            outV.setAmount(Integer.parseInt(fields[2]));
            outV.setPname("");
            outV.setFlag("order");
        } else { // product table: pid, pname
            // Populate the output key and value
            outK.set(fields[0]);
            outV.setPid(fields[0]);
            outV.setId("");
            outV.setAmount(0);
            outV.setPname(fields[1]);
            outV.setFlag("pd");
        }

        // 3. Write out
        context.write(outK, outV);
    }
}

(3) Write the Reducer class

package com.hadoop.mapreduce.reduceJoin;

import org.apache.commons.beanutils.BeanUtils;
import org.apache.hadoop.io.NullWritable;
import org.apache.hadoop.io.Text;
import org.apache.hadoop.mapreduce.Reducer;

import java.io.IOException;
import java.lang.reflect.InvocationTargetException;
import java.util.ArrayList;

/**
 * @author codestart
 * @create 2023-06-21 11:18
 */
public class TableReducer extends Reducer<Text, TableBean, TableBean, NullWritable> {

    @Override
    protected void reduce(Text key, Iterable<TableBean> values, Context context) throws IOException, InterruptedException {
        // Collections for a group that looks like this:
        // 01 1001 1 order
        // 01 1004 4 order
        // 01 Xiaomi pd
        ArrayList<TableBean> orderBeans = new ArrayList<>();
        TableBean pdBean = new TableBean();

        // Sort the values into the two holders.
        // The framework reuses the value object, so each record is copied before it is kept.
        for (TableBean value : values) {
            try {
                if ("order".equals(value.getFlag())) { // order table
                    TableBean tmpTableBean = new TableBean();
                    BeanUtils.copyProperties(tmpTableBean, value);
                    orderBeans.add(tmpTableBean);
                } else { // product table
                    BeanUtils.copyProperties(pdBean, value);
                }
            } catch (IllegalAccessException | InvocationTargetException e) {
                // Fail the task instead of silently emitting a half-filled bean
                throw new IOException("Failed to copy TableBean", e);
            }
        }

        // Fill in pname on every order record and write it out
        for (TableBean orderBean : orderBeans) {
            orderBean.setPname(pdBean.getPname());
            context.write(orderBean, NullWritable.get());
        }
    }
}
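The Reducer copies each order record into a fresh `TableBean` before adding it to the list because Hadoop reuses the value object it hands to `reduce` while iterating. The effect can be reproduced without Hadoop. In the sketch below, `ValueReuseSketch`, its nested `Bean`, and `reusingValues` are hypothetical names; the iterable only imitates that reuse behaviour by refilling one shared instance on every `next()`.

```java
import java.util.ArrayList;
import java.util.Iterator;
import java.util.List;
import java.util.NoSuchElementException;

// Hypothetical demo of why values must be copied before being stored in a list.
final class ValueReuseSketch {

    static final class Bean {
        String id;
    }

    // Imitates an iterator that refills a single shared object on every step.
    static Iterable<Bean> reusingValues(String... ids) {
        final Bean shared = new Bean();
        return () -> new Iterator<Bean>() {
            private int pos = 0;

            @Override
            public boolean hasNext() {
                return pos < ids.length;
            }

            @Override
            public Bean next() {
                if (!hasNext()) {
                    throw new NoSuchElementException();
                }
                shared.id = ids[pos++];
                return shared;
            }
        };
    }

    // Stores the references directly: every list entry points at the same object.
    static List<String> idsWithoutCopy(Iterable<Bean> values) {
        List<Bean> kept = new ArrayList<>();
        for (Bean v : values) {
            kept.add(v);
        }
        return ids(kept);
    }

    // Copies each value first, which is the role BeanUtils.copyProperties plays in the Reducer.
    static List<String> idsWithCopy(Iterable<Bean> values) {
        List<Bean> kept = new ArrayList<>();
        for (Bean v : values) {
            Bean copy = new Bean();
            copy.id = v.id;
            kept.add(copy);
        }
        return ids(kept);
    }

    private static List<String> ids(List<Bean> beans) {
        List<String> result = new ArrayList<>();
        for (Bean b : beans) {
            result.add(b.id);
        }
        return result;
    }

    public static void main(String[] args) {
        System.out.println(idsWithoutCopy(reusingValues("1001", "1004"))); // [1004, 1004]
        System.out.println(idsWithCopy(reusingValues("1001", "1004")));    // [1001, 1004]
    }
}
```

Without the copy, every element of the list ends up showing the last record read, which in the join would make all output rows for a product carry the same order id.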

(4) Write the Driver class

package com.hadoop.mapreduce.reduceJoin;

import org.apache.hadoop.conf.Configuration;
import org.apache.hadoop.fs.Path;
import org.apache.hadoop.io.NullWritable;
import org.apache.hadoop.io.Text;
import org.apache.hadoop.mapreduce.Job;
import org.apache.hadoop.mapreduce.lib.input.FileInputFormat;
import org.apache.hadoop.mapreduce.lib.output.FileOutputFormat;

import java.io.IOException;

/**
 * @author codestart
 * @create 2023-06-21 22:36
 */
public class TableDriver {

    public static void main(String[] args) throws IOException, InterruptedException, ClassNotFoundException {
        // 1. Get the job object
        Configuration conf = new Configuration();
        Job job = Job.getInstance(conf);

        // 2. Set the location of the driver jar
        job.setJarByClass(TableDriver.class);

        // 3. Associate the Mapper and the Reducer
        job.setMapperClass(TableMapper.class);
        job.setReducerClass(TableReducer.class);

        // 4. Key/value types of the Map output
        job.setMapOutputKeyClass(Text.class);
        job.setMapOutputValueClass(TableBean.class);

        // 5. Key/value types of the final output
        //    (the value type must be Hadoop's NullWritable, not commons-io's NullWriter)
        job.setOutputKeyClass(TableBean.class);
        job.setOutputValueClass(NullWritable.class);

        // 6. Set the input and output paths
        FileInputFormat.setInputPaths(job, new Path("D:\\data\\input\\inputtable"));
        FileOutputFormat.setOutputPath(job, new Path("D:\\data\\output\\output3"));

        // 7. Submit the job
        boolean b = job.waitForCompletion(true);
        System.exit(b ? 0 : 1);
    }
}

(4) Final result and summary

Final result

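Summary: a Reduce Join connects the two datasets in the Reduce phase, so all of the merging work lands on the Reduce side while the Map side does comparatively little. When one dataset is very large and the other is very small, this makes data skew likely, so in that case a Map Join can be used to join the datasets instead.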
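To make the alternative concrete, here is a minimal plain-Java sketch of the Map Join idea: the small table is loaded into memory once, and each record of the large table is joined by a lookup, so no shuffle or Reduce step is needed. `MapJoinSketch`, its method names, and the sample rows are assumptions for illustration. In a real Hadoop job the small file is typically distributed with `job.addCacheFile(...)` and loaded in the Mapper's `setup` method, with the number of reduce tasks set to 0; that wiring is not shown here.

```java
import java.util.ArrayList;
import java.util.Arrays;
import java.util.HashMap;
import java.util.List;
import java.util.Map;

// Hypothetical plain-Java illustration of a map-side join (no Hadoop involved).
final class MapJoinSketch {

    // Plays the role of Mapper.setup: load the small product table into memory once.
    static Map<String, String> loadSmallTable(List<String> productLines) {
        Map<String, String> pnameByPid = new HashMap<>();
        for (String line : productLines) {       // assumed layout: pid \t pname
            String[] f = line.split("\t");
            pnameByPid.put(f[0], f[1]);
        }
        return pnameByPid;
    }

    // Plays the role of Mapper.map: join each order line with a lookup, record by record.
    static List<String> join(List<String> orderLines, Map<String, String> pnameByPid) {
        List<String> out = new ArrayList<>();
        for (String line : orderLines) {         // assumed layout: id \t pid \t amount
            String[] f = line.split("\t");
            String pname = pnameByPid.getOrDefault(f[1], "");
            out.add(f[0] + "\t" + pname + "\t" + f[2]);
        }
        return out;
    }

    public static void main(String[] args) {
        // Made-up sample rows, used only for illustration.
        Map<String, String> products = loadSmallTable(Arrays.asList("01\tXiaomi", "02\tHuawei"));
        List<String> orders = Arrays.asList("1001\t01\t1", "1002\t02\t2", "1004\t01\t4");
        join(orders, products).forEach(System.out::println);
    }
}
```

The trade-off is memory: this only works when the small table fits comfortably in each map task's heap.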

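The above is the process I worked through and summarized while studying online. I am publishing it as a blog post, first so that I do not forget what I have learned, and second to share the results of my study. If anything here duplicates someone else's work, please contact me!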
