InternLM Practical Camp (书生大模型实战营): InternLM + LlamaIndex RAG in Practice

Task overview

- InternLM + LlamaIndex RAG in practice

基础任务

-

任务要求1:基于 LlamaIndex 构建自己的 RAG 知识库,寻找一个问题 A 在使用 LlamaIndex 之前 浦语 API 不会回答,借助 LlamaIndex 后 浦语 API 具备回答 A 的能力,截图保存。

-

任务要求2:基于 LlamaIndex 构建自己的 RAG 知识库,寻找一个问题 A 在使用 LlamaIndex 之前 InternLM2-Chat-1.8B 模型不会回答,借助 LlamaIndex 后 InternLM2-Chat-1.8B 模型具备回答 A 的能力,截图保存。

-

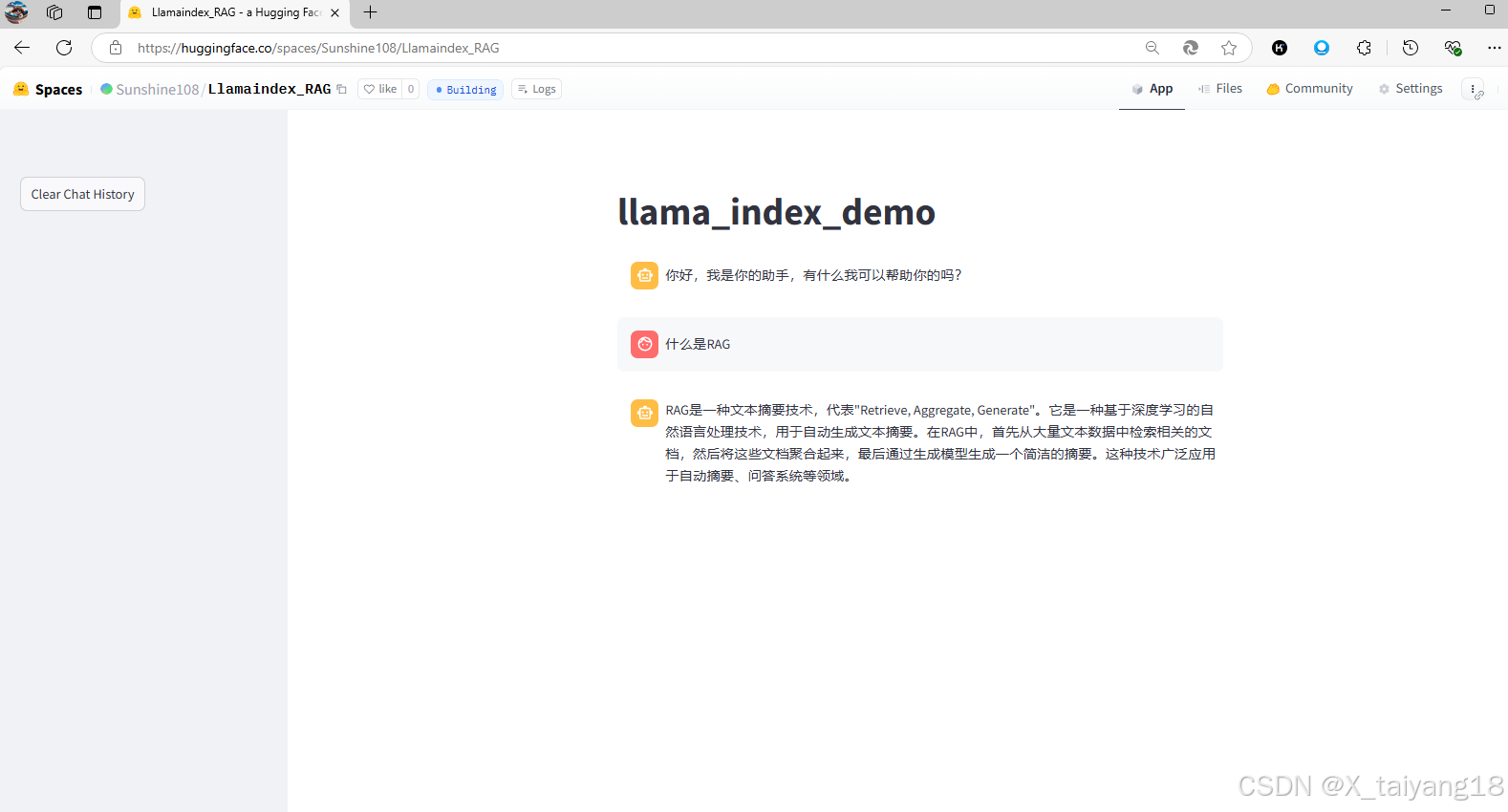

任务要求3 :将 Streamlit+LlamaIndex+浦语API的 Space 部署到 Hugging Face。

Walkthrough

Start a new dev machine with 30% of an A100 and the Cuda12.2-conda image.

Puyu API + LlamaIndex in practice

# Create a new environment

conda create -n llamaindex python=3.10

conda env list

conda activate llamaindex

# Install LlamaIndex and related packages

pip install einops==0.7.0 protobuf==5.26.1

pip install llama-index==0.11.20

pip install llama-index-llms-replicate==0.3.0

pip install llama-index-llms-openai-like==0.2.0

pip install llama-index-embeddings-huggingface==0.3.1

pip install llama-index-embeddings-instructor==0.2.1

pip install torch==2.5.0 torchvision==0.20.0 torchaudio==2.5.0 --index-url https://download.pytorch.org/whl/cu121

# Download the Sentence Transformer model

cd ~

mkdir llamaindex_demo

mkdir model

cd ~/llamaindex_demo

touch download_hf.py

# Code in download_hf.py

import os

# Point huggingface-cli at the hf-mirror.com mirror

os.environ['HF_ENDPOINT'] = 'https://hf-mirror.com'

# Download the model

os.system('huggingface-cli download --resume-download sentence-transformers/paraphrase-multilingual-MiniLM-L12-v2 --local-dir /root/model/sentence-transformer')

# Run the script

cd /root/llamaindex_demo

conda activate llamaindex

python download_hf.py

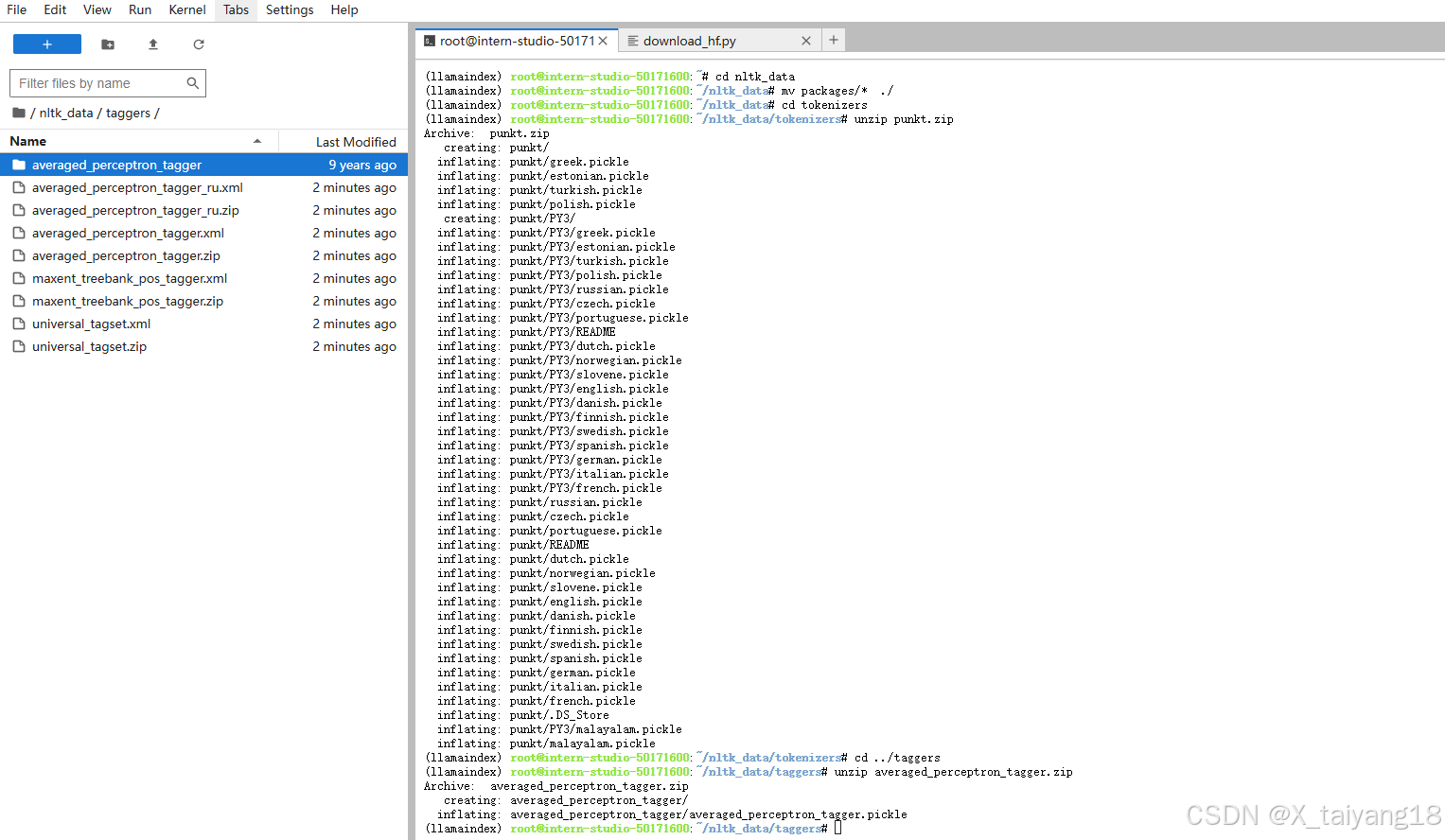
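A variant of download_hf.py can be sketched with `subprocess` instead of `os.system`, so that a failed download raises an error instead of passing silently. This is only a sketch: the helper names `build_download` and `download` are hypothetical, and the `huggingface-cli` arguments are copied from the command above. By default it only prints the command (dry run).

```python
import os
import subprocess


def build_download(repo_id, local_dir, endpoint="https://hf-mirror.com"):
    """Return (argv, env) for the same huggingface-cli call used in download_hf.py."""
    # Copy the current environment and add the mirror endpoint.
    env = dict(os.environ, HF_ENDPOINT=endpoint)
    argv = [
        "huggingface-cli", "download", "--resume-download",
        repo_id, "--local-dir", local_dir,
    ]
    return argv, env


def download(repo_id, local_dir, dry_run=True):
    """Print the command when dry_run is True, otherwise run it."""
    argv, env = build_download(repo_id, local_dir)
    if dry_run:
        print(" ".join(argv))
        return 0
    # check=True raises CalledProcessError on a non-zero exit code.
    return subprocess.run(argv, env=env, check=True).returncode


download(
    "sentence-transformers/paraphrase-multilingual-MiniLM-L12-v2",
    "/root/model/sentence-transformer",
)
```

Passing `dry_run=False` would actually start the download.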

# Download the NLTK resources

cd /root

git clone https://gitee.com/yzy0612/nltk_data.git --branch gh-pages

cd nltk_data

mv packages/* ./

cd tokenizers

unzip punkt.zip

cd ../taggers

unzip averaged_perceptron_tagger.zip
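Before moving on, it may be worth checking that the directories used by the later scripts exist. The sketch below is a hypothetical pre-flight helper, not part of the course material. It assumes that the two archives unpack into `punkt` and `averaged_perceptron_tagger` folders; the other paths are taken from the steps above.

```python
from pathlib import Path

# Paths taken from the setup steps; the two NLTK folder names are assumptions
# about what the zip archives unpack to.
EXPECTED_PATHS = [
    "/root/model/sentence-transformer",
    "/root/nltk_data/tokenizers/punkt",
    "/root/nltk_data/taggers/averaged_perceptron_tagger",
]


def missing_paths(paths):
    """Return the subset of paths that do not exist on disk."""
    return [p for p in paths if not Path(p).exists()]


for path in missing_paths(EXPECTED_PATHS):
    print(f"missing: {path}")
```

If nothing is printed, all three directories were found.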

Comparison before and after using LlamaIndex

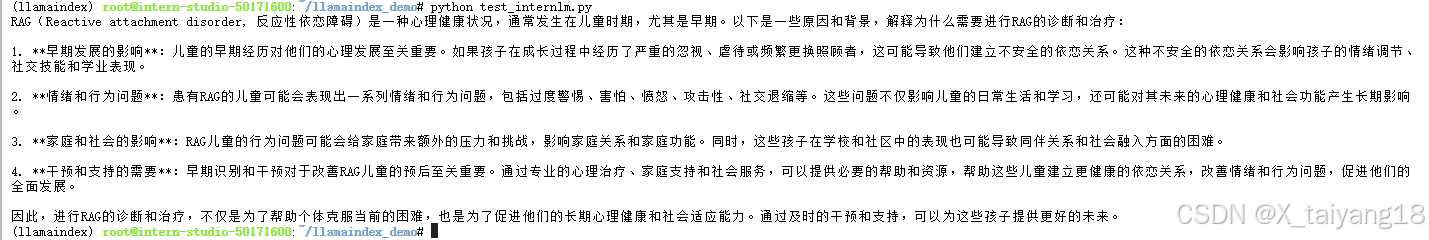

- Without LlamaIndex RAG (API only)

Run the following commands to create a Python file:

    cd ~/llamaindex_demo
    touch test_internlm.py

Open test_internlm.py and paste in the following code:

    from openai import OpenAI

    base_url = "https://internlm-chat.intern-ai.org.cn/puyu/api/v1/"
    # Fill in your own Puyu API token; never publish a real key.
    api_key = "YOUR_API_KEY"
    model = "internlm2.5-latest"

    # Alternative endpoint (SiliconFlow):
    # base_url = "https://api.siliconflow.cn/v1"
    # api_key = "sk-<fill in a valid token>"
    # model = "internlm/internlm2_5-7b-chat"

    client = OpenAI(
        api_key=api_key,
        base_url=base_url,
    )

    # The question is "Why do RAG?"; it is asked in Chinese to match the Chinese knowledge base used later.
    chat_rsp = client.chat.completions.create(
        model=model,
        messages=[{"role": "user", "content": "为什么要做 RAG ?"}],
    )

    for choice in chat_rsp.choices:
        print(choice.message.content)

Then run:

    conda activate llamaindex
    cd ~/llamaindex_demo/
    python test_internlm.py

The result shows that the answer is not very good.
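The scripts in this walkthrough need an API token, and a token pasted into source code is easy to leak when the file is shared or pushed. One common alternative is to read it from an environment variable. The sketch below assumes a variable named `INTERNLM_API_KEY`; both that name and the helper `load_api_key` are made up for illustration.

```python
import os


def load_api_key(env_var="INTERNLM_API_KEY"):
    """Read the API token from an environment variable (hypothetical name)."""
    key = os.environ.get(env_var, "").strip()
    if not key:
        # Fail early with a clear message instead of sending an empty token.
        raise RuntimeError(f"Set {env_var} before running the script")
    return key
```

After `export INTERNLM_API_KEY=...` in the shell, a script would use `api_key = load_api_key()` in place of a hard-coded string.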

- With API + LlamaIndex

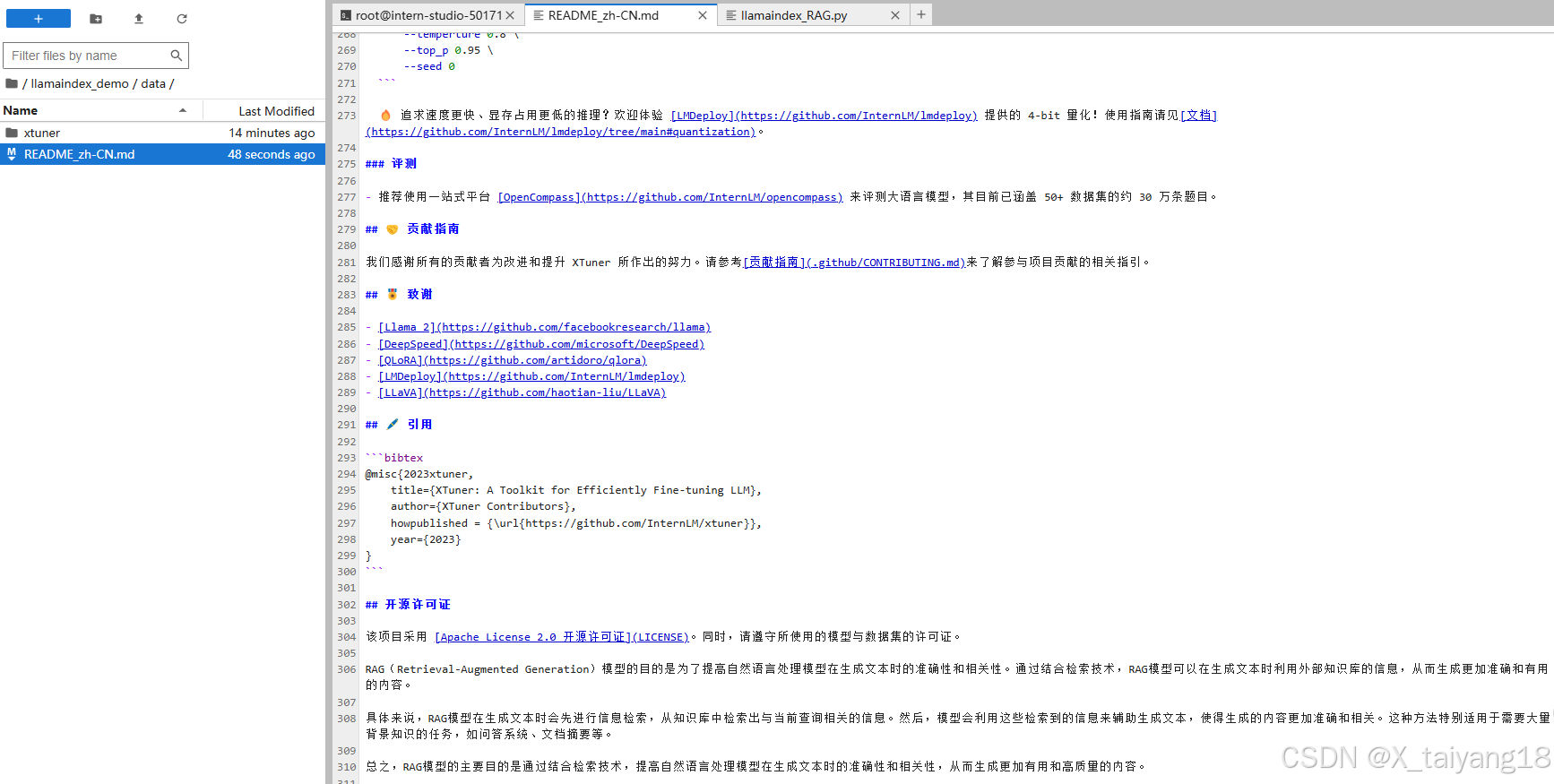

Run the following commands to fetch the knowledge base:

    cd ~/llamaindex_demo
    mkdir data
    cd data
    git clone https://github.com/InternLM/xtuner.git
    mv xtuner/README_zh-CN.md ./

Edit README_zh-CN.md and add the RAG-related content that the model should draw on.

Run the following commands to create a Python file:

    cd ~/llamaindex_demo
    touch llamaindex_RAG.py

Open llamaindex_RAG.py and paste in the following code:

    import os
    os.environ['NLTK_DATA'] = '/root/nltk_data'

    from llama_index.core import VectorStoreIndex, SimpleDirectoryReader
    from llama_index.core.settings import Settings
    from llama_index.core.callbacks import CallbackManager
    from llama_index.embeddings.huggingface import HuggingFaceEmbedding
    from llama_index.llms.openai_like import OpenAILike

    # Create an instance of CallbackManager
    callback_manager = CallbackManager()

    api_base_url = "https://internlm-chat.intern-ai.org.cn/puyu/api/v1/"
    model = "internlm2.5-latest"
    # Fill in your own Puyu API token; never publish a real key.
    api_key = "YOUR_API_KEY"

    # Alternative endpoint (SiliconFlow):
    # api_base_url = "https://api.siliconflow.cn/v1"
    # model = "internlm/internlm2_5-7b-chat"
    # api_key = "<fill in your API key>"

    llm = OpenAILike(model=model, api_base=api_base_url, api_key=api_key, is_chat_model=True, callback_manager=callback_manager)

    # Initialize a HuggingFaceEmbedding object that converts text into vectors
    embed_model = HuggingFaceEmbedding(
        # Path to the pretrained sentence-transformer model
        model_name="/root/model/sentence-transformer"
    )
    # Assign the embedding model to the global settings
    # so that index construction uses it.
    Settings.embed_model = embed_model

    # Register the LLM in the global settings
    Settings.llm = llm

    # Read every document in the directory and load it into memory
    documents = SimpleDirectoryReader("/root/llamaindex_demo/data").load_data()
    # Build a VectorStoreIndex from the loaded documents.
    # The index converts the documents into vectors and stores them for fast retrieval.
    index = VectorStoreIndex.from_documents(documents)
    # Create a query engine that takes a question and answers it from the retrieved documents.
    query_engine = index.as_query_engine()
    # The question is "Why do RAG?"
    response = query_engine.query("为什么要做 RAG ?")

    print(response)

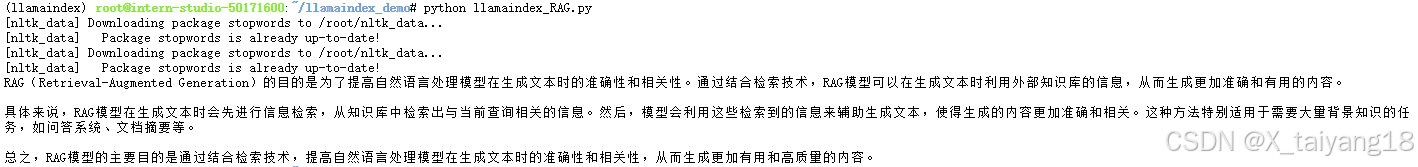

Then run:

    conda activate llamaindex
    cd ~/llamaindex_demo/
    python llamaindex_RAG.py

The result:

With RAG, the model now returns the answer we want.

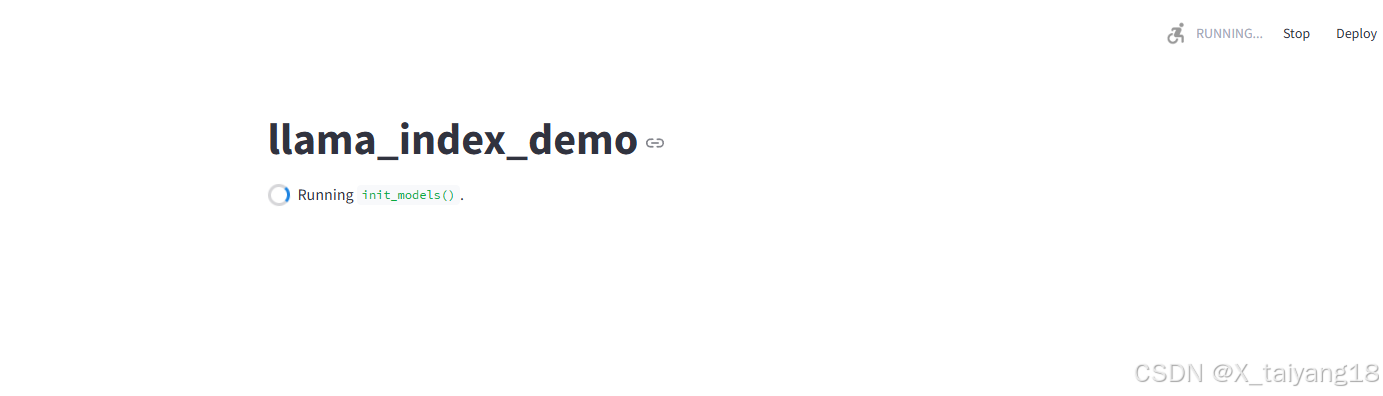

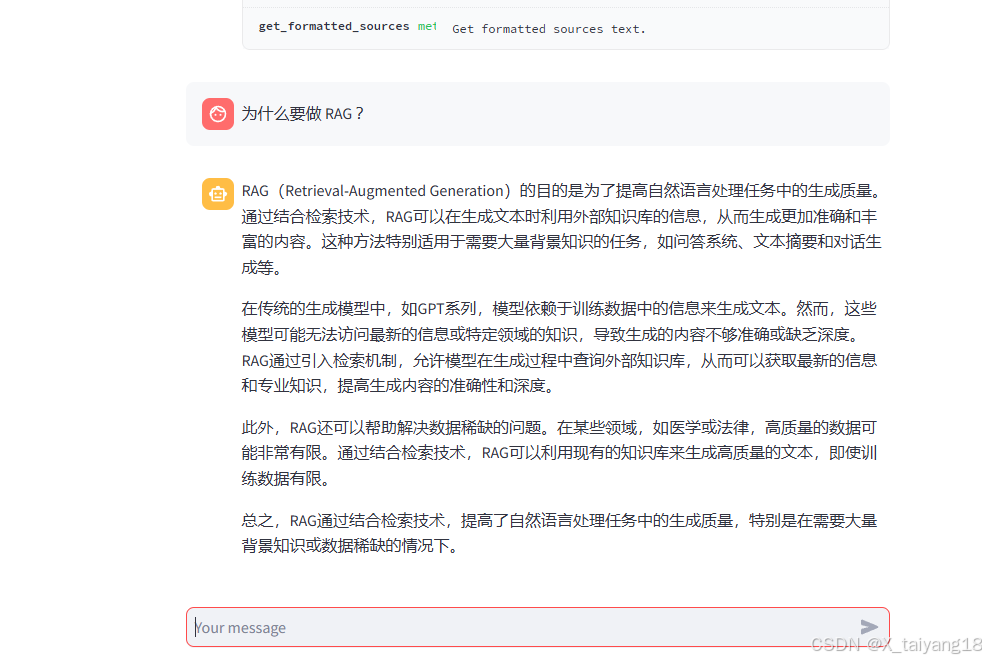
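To see why the answer improves, it helps to look at what retrieval-augmented generation does in principle: find the stored text most similar to the question, then hand it to the model as context. The sketch below is a deliberately simplified stand-in. It uses word-count vectors and cosine similarity instead of a sentence-transformer embedding, and none of the function names or sample sentences come from LlamaIndex or from the course data.

```python
import math
import re
from collections import Counter


def tokenize(text):
    # Lowercased ASCII words plus single CJK characters; a crude substitute
    # for a real embedding model.
    return re.findall(r"[a-z0-9]+|[\u4e00-\u9fff]", text.lower())


def cosine(a, b):
    """Cosine similarity between two token-count vectors."""
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(
        sum(v * v for v in b.values())
    )
    return dot / norm if norm else 0.0


def retrieve(question, chunks, top_k=1):
    """Return the top_k chunks most similar to the question."""
    q = Counter(tokenize(question))
    ranked = sorted(
        chunks, key=lambda c: cosine(q, Counter(tokenize(c))), reverse=True
    )
    return ranked[:top_k]


def build_prompt(question, context_chunks):
    """Put the retrieved text in front of the question."""
    context = "\n".join(context_chunks)
    return (
        f"Context:\n{context}\n\n"
        f"Answer the question using the context.\nQuestion: {question}"
    )


# Made-up sample chunks for illustration only.
chunks = [
    "XTuner is a toolkit for fine-tuning large language models.",
    "RAG retrieves relevant documents and feeds them to the model as context.",
]
question = "What does RAG feed to the model?"
print(build_prompt(question, retrieve(question, chunks)))
```

The prompt sent to the model now contains the relevant sentence, which is why a model that could not answer on its own can answer after retrieval.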

LlamaIndex web

- 运行之前首先安装依赖

pip install streamlit==1.39.0 - 运行以下指令,新建一个python文件

cd ~/llamaindex_demo touch app.py - 打开app.py贴入以下代码

import streamlit as st from llama_index.core import VectorStoreIndex, SimpleDirectoryReader, Settings from llama_index.embeddings.huggingface import HuggingFaceEmbedding from llama_index.legacy.callbacks import CallbackManager from llama_index.llms.openai_like import OpenAILike # Create an instance of CallbackManager callback_manager = CallbackManager() api_base_url = "https://internlm-chat.intern-ai.org.cn/puyu/api/v1/" model = "internlm2.5-latest" api_key = "eyJ0eXBlIjoiSldUIiwiYWxnIjoiSFM1MTIifQ.eyJqdGkiOiI1MDE3MTYwMCIsInJvbCI6IlJPTEVfUkVHSVNURVIiLCJpc3MiOiJPcGVuWExhYiIsImlhdCI6MTczMjg4NDg3NiwiY2xpZW50SWQiOiJlYm1ydm9kNnlvMG5semFlazF5cCIsInBob25lIjoiMTUyOTY2MjY3OTIiLCJ1dWlkIjoiYjA2NDA1MmQtNmEzYy00MDUwLThjOTgtNjc1ZDJmYzhhNTYxIiwiZW1haWwiOiIiLCJleHAiOjE3NDg0MzY4NzZ9.QRz3xCB7fE8rwNTlqHZ2H9K7wum0gt_pGEk7PtmxihKEnRKJ9oL-ULjaPr9SLTFUCGu_GRcTuNSWGWMDGyDZWw" # api_base_url = "https://api.siliconflow.cn/v1" # model = "internlm/internlm2_5-7b-chat" # api_key = "请填写 API Key" llm =OpenAILike(model=model, api_base=api_base_url, api_key=api_key, is_chat_model=True,callback_manager=callback_manager) st.set_page_config(page_title="llama_index_demo", page_icon="🦜🔗") st.title("llama_index_demo") # 初始化模型 @st.cache_resource def init_models(): embed_model = HuggingFaceEmbedding( model_name="/root/model/sentence-transformer" ) Settings.embed_model = embed_model #用初始化llm Settings.llm = llm documents = SimpleDirectoryReader("/root/llamaindex_demo/data").load_data() index = VectorStoreIndex.from_documents(documents) query_engine = index.as_query_engine() return query_engine # 检查是否需要初始化模型 if 'query_engine' not in st.session_state: st.session_state['query_engine'] = init_models() def greet2(question): response = st.session_state['query_engine'].query(question) return response # Store LLM generated responses if "messages" not in st.session_state.keys(): st.session_state.messages = [{"role": "assistant", "content": "你好,我是你的助手,有什么我可以帮助你的吗?"}] # Display or clear chat messages for message in 
st.session_state.messages: with st.chat_message(message["role"]): st.write(message["content"]) def clear_chat_history(): st.session_state.messages = [{"role": "assistant", "content": "你好,我是你的助手,有什么我可以帮助你的吗?"}] st.sidebar.button('Clear Chat History', on_click=clear_chat_history) # Function for generating LLaMA2 response def generate_llama_index_response(prompt_input): return greet2(prompt_input) # User-provided prompt if prompt := st.chat_input(): st.session_state.messages.append({"role": "user", "content": prompt}) with st.chat_message("user"): st.write(prompt) # Gegenerate_llama_index_response last message is not from assistant if st.session_state.messages[-1]["role"] != "assistant": with st.chat_message("assistant"): with st.spinner("Thinking..."): response = generate_llama_index_response(prompt) placeholder = st.empty() placeholder.markdown(response) message = {"role": "assistant", "content": response} st.session_state.messages.append(message) - 之后运行

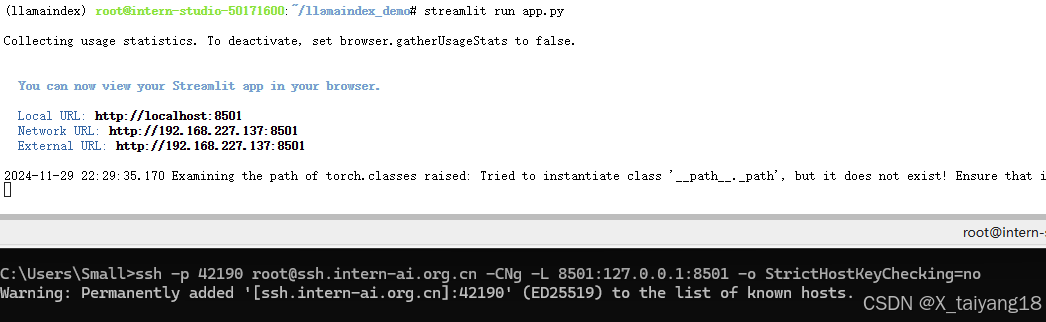

streamlit run app.py - 在本地终端运行(端口映射)

ssh -p 42190 root@ssh.intern-ai.org.cn -CNg -L 8501:127.0.0.1:8501 -o StrictHostKeyChecking=no -o StrictHostKeyChecking=no -o UserKnownHostsFile=/dev/null - 浏览器访问该url

http://localhost:8501/

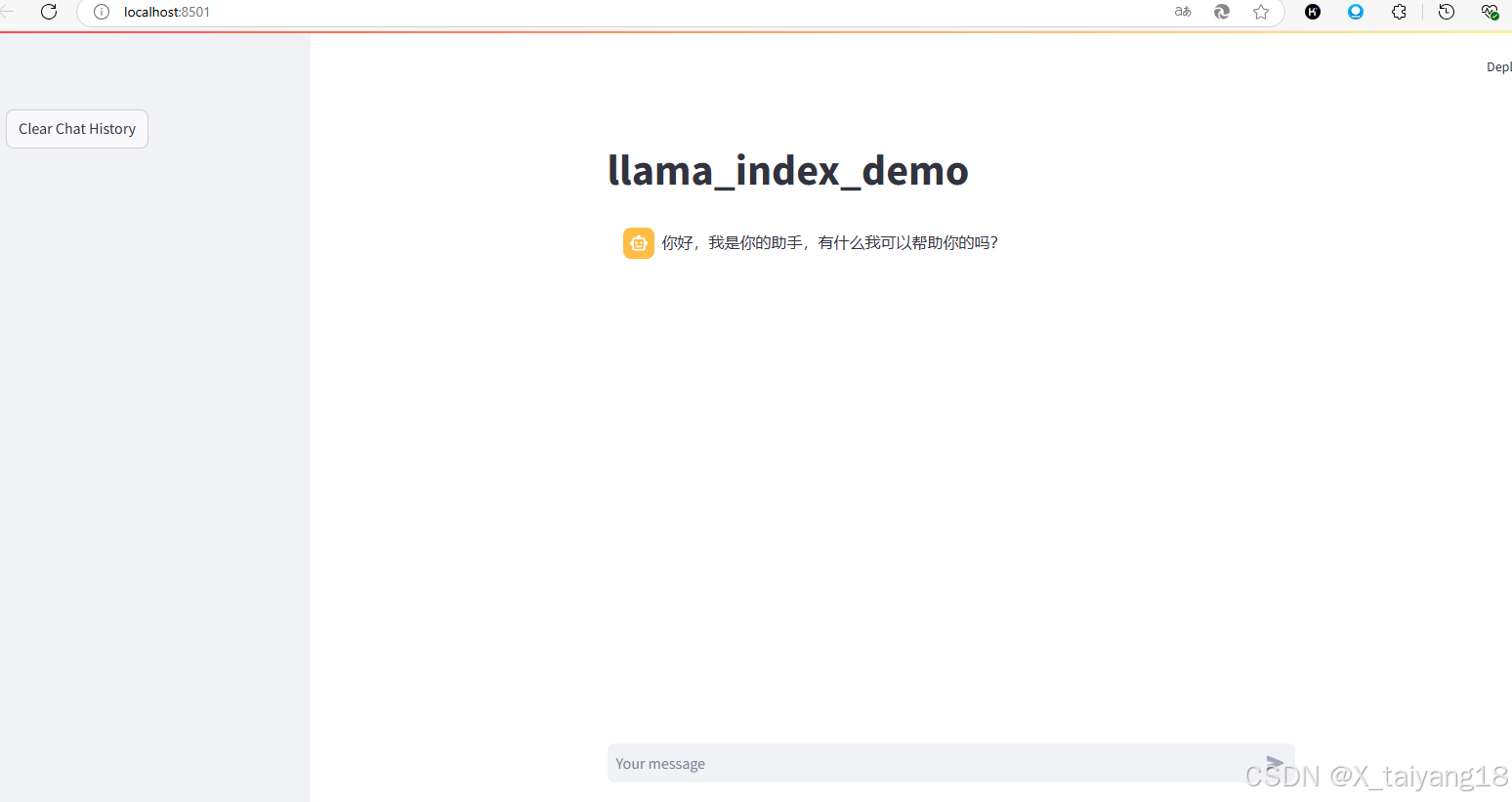

- On this page you can start asking questions.

- The query result is shown in the screenshots.
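The chat handling in app.py follows a simple pattern: keep a list of role/content messages, append the user's prompt, and generate a reply only when the last message is not already from the assistant. The sketch below shows that pattern without Streamlit so it can be run on its own. The names `new_history` and `handle_turn` are hypothetical, and `answer_fn` stands in for the query engine.

```python
GREETING = {
    "role": "assistant",
    "content": "Hello, I am your assistant. How can I help you?",
}


def new_history():
    """Start a conversation with the assistant's greeting."""
    return [dict(GREETING)]


def handle_turn(history, prompt, answer_fn):
    """Append the user prompt, then an assistant reply if one is still due."""
    history.append({"role": "user", "content": prompt})
    # Same guard as in app.py: reply only if the last message is not ours.
    if history[-1]["role"] != "assistant":
        reply = str(answer_fn(prompt))  # str() mirrors printing a Response
        history.append({"role": "assistant", "content": reply})
    return history


history = handle_turn(new_history(), "hi", lambda q: f"echo: {q}")
for message in history:
    print(message["role"], "->", message["content"])
```

In the Streamlit app the list lives in `st.session_state.messages`, so it survives the script reruns that happen on every interaction.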

Local deployment: InternLM + LlamaIndex in practice

- 安装相关的包

pip install huggingface_hub llama-index-llms-huggingface sentencepiece sentence-transformers - 运行以下指令,把

InternLM2 1.8B软连接出来cd ~/model ln -s /root/share/new_models/Shanghai_AI_Laboratory/internlm2-chat-1_8b/ ./ - 运行以下指令,新建一个 python 文件

cd ~/llamaindex_demo touch llamaindex_internlm.py - 打开 llamaindex_internlm.py 贴入以下代码

from llama_index.llms.huggingface import HuggingFaceLLM from llama_index.core.llms import ChatMessage llm = HuggingFaceLLM( model_name="/root/model/internlm2-chat-1_8b", tokenizer_name="/root/model/internlm2-chat-1_8b", model_kwargs={"trust_remote_code":True}, tokenizer_kwargs={"trust_remote_code":True} ) rsp = llm.chat(messages=[ChatMessage(content="为什么要做 RAG ?")]) print(rsp) - 之后运行

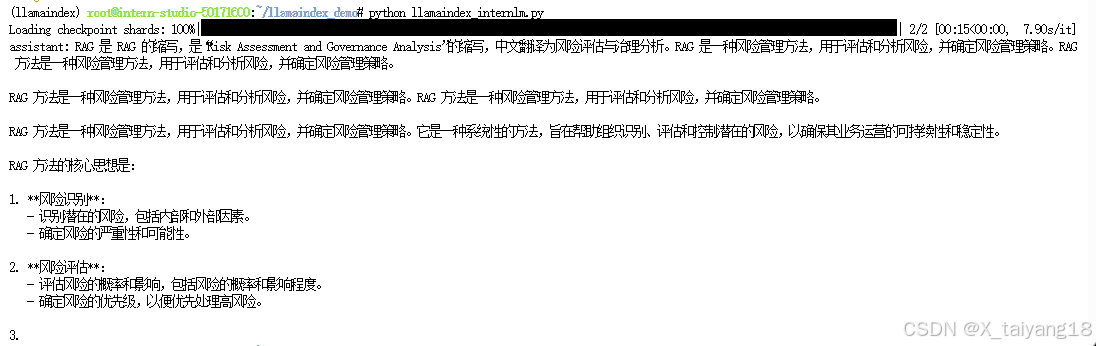

conda activate llamaindex cd ~/llamaindex_demo/ python llamaindex_internlm.py - 结果为:

目前回答的效果并不好,并不是我们想要的。

- 运行以下指令,新建一个 python 文件

cd ~/llamaindex_demo touch llamaindex_internlm_RAG.py - 打开llamaindex_internlm_RAG.py贴入以下代码

from llama_index.core import VectorStoreIndex, SimpleDirectoryReader, Settings from llama_index.embeddings.huggingface import HuggingFaceEmbedding from llama_index.llms.huggingface import HuggingFaceLLM #初始化一个HuggingFaceEmbedding对象,用于将文本转换为向量表示 embed_model = HuggingFaceEmbedding( #指定了一个预训练的sentence-transformer模型的路径 model_name="/root/model/sentence-transformer" ) #将创建的嵌入模型赋值给全局设置的embed_model属性, #这样在后续的索引构建过程中就会使用这个模型。 Settings.embed_model = embed_model llm = HuggingFaceLLM( model_name="/root/model/internlm2-chat-1_8b", tokenizer_name="/root/model/internlm2-chat-1_8b", model_kwargs={"trust_remote_code":True}, tokenizer_kwargs={"trust_remote_code":True} ) #设置全局的llm属性,这样在索引查询时会使用这个模型。 Settings.llm = llm #从指定目录读取所有文档,并加载数据到内存中 documents = SimpleDirectoryReader("/root/llamaindex_demo/data").load_data() #创建一个VectorStoreIndex,并使用之前加载的文档来构建索引。 # 此索引将文档转换为向量,并存储这些向量以便于快速检索。 index = VectorStoreIndex.from_documents(documents) # 创建一个查询引擎,这个引擎可以接收查询并返回相关文档的响应。 query_engine = index.as_query_engine() response = query_engine.query("为什么要做 RAG ?") print(response) - 之后运行

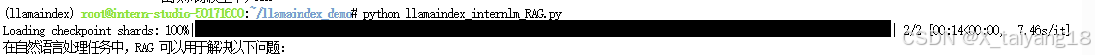

conda activate llamaindex cd ~/llamaindex_demo/ python llamaindex_internlm_RAG.py

结果为:

借助 RAG 技术后,就能获得我们想要的答案了
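The index does not embed a whole file as a single vector. Documents are first split into smaller chunks, and each chunk gets its own embedding. The sketch below illustrates the idea with a plain character window and overlap. It is a simplification for illustration only: LlamaIndex's own splitter is sentence-aware and token-based, and the function name and default sizes here are made up.

```python
def chunk_text(text, chunk_size=200, overlap=50):
    """Split text into fixed-size character windows that overlap."""
    if not 0 <= overlap < chunk_size:
        raise ValueError("overlap must be >= 0 and smaller than chunk_size")
    if not text:
        return []
    step = chunk_size - overlap
    # Stop once the remaining tail is already covered by the previous chunk.
    last_start = max(len(text) - overlap, 1)
    return [text[i:i + chunk_size] for i in range(0, last_start, step)]


for chunk in chunk_text("0123456789", chunk_size=4, overlap=2):
    print(chunk)
```

The overlap keeps a sentence that straddles a boundary visible in both neighboring chunks, at the cost of storing some text twice.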

Run the following commands to create a Python file:

    cd ~/llamaindex_demo
    touch app_internlm.py

Open app_internlm.py and paste in the following code:

    import streamlit as st
    from llama_index.core import VectorStoreIndex, SimpleDirectoryReader, Settings
    from llama_index.embeddings.huggingface import HuggingFaceEmbedding
    from llama_index.llms.huggingface import HuggingFaceLLM

    st.set_page_config(page_title="llama_index_demo", page_icon="🦜🔗")
    st.title("llama_index_demo")

    # Initialize the models (cached across reruns)
    @st.cache_resource
    def init_models():
        embed_model = HuggingFaceEmbedding(
            model_name="/root/model/sentence-transformer"
        )
        Settings.embed_model = embed_model

        llm = HuggingFaceLLM(
            model_name="/root/model/internlm2-chat-1_8b",
            tokenizer_name="/root/model/internlm2-chat-1_8b",
            model_kwargs={"trust_remote_code": True},
            tokenizer_kwargs={"trust_remote_code": True}
        )
        Settings.llm = llm

        documents = SimpleDirectoryReader("/root/llamaindex_demo/data").load_data()
        index = VectorStoreIndex.from_documents(documents)
        query_engine = index.as_query_engine()

        return query_engine

    # Initialize the query engine once per session
    if 'query_engine' not in st.session_state:
        st.session_state['query_engine'] = init_models()

    def greet2(question):
        response = st.session_state['query_engine'].query(question)
        # Convert the Response object to plain text for display and chat history
        return str(response)

    # Store LLM generated responses
    if "messages" not in st.session_state.keys():
        st.session_state.messages = [{"role": "assistant", "content": "Hello, I am your assistant. How can I help you?"}]

    # Display or clear chat messages
    for message in st.session_state.messages:
        with st.chat_message(message["role"]):
            st.write(message["content"])

    def clear_chat_history():
        st.session_state.messages = [{"role": "assistant", "content": "Hello, I am your assistant. How can I help you?"}]

    st.sidebar.button('Clear Chat History', on_click=clear_chat_history)

    # Generate a response through the LlamaIndex query engine
    def generate_llama_index_response(prompt_input):
        return greet2(prompt_input)

    # User-provided prompt
    if prompt := st.chat_input():
        st.session_state.messages.append({"role": "user", "content": prompt})
        with st.chat_message("user"):
            st.write(prompt)

    # Generate a new response if the last message is not from the assistant
    if st.session_state.messages[-1]["role"] != "assistant":
        with st.chat_message("assistant"):
            with st.spinner("Thinking..."):
                response = generate_llama_index_response(prompt)
                placeholder = st.empty()
                placeholder.markdown(response)
        message = {"role": "assistant", "content": response}
        st.session_state.messages.append(message)

Then run:

    streamlit run app_internlm.py

In a terminal on your local machine, set up port forwarding, using your own dev machine's SSH port (42190 here):

    ssh -p 42190 root@ssh.intern-ai.org.cn -CNg -L 8501:127.0.0.1:8501 -o StrictHostKeyChecking=no -o UserKnownHostsFile=/dev/null

Open this URL in a browser:

http://localhost:8501/
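If the page does not load, a quick first check is whether anything is listening on the forwarded port on the local machine. The sketch below uses only the standard library. The helper name `port_is_open` is hypothetical, and 8501 is the port used in the ssh command above.

```python
import socket


def port_is_open(host="127.0.0.1", port=8501, timeout=1.0):
    """Return True if a TCP connection to host:port succeeds."""
    try:
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:
        # Connection refused, timed out, or host unreachable.
        return False


print("tunnel reachable:", port_is_open())
```

A `False` result usually means that the ssh tunnel is not running or that the Streamlit app has not started on the remote side.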

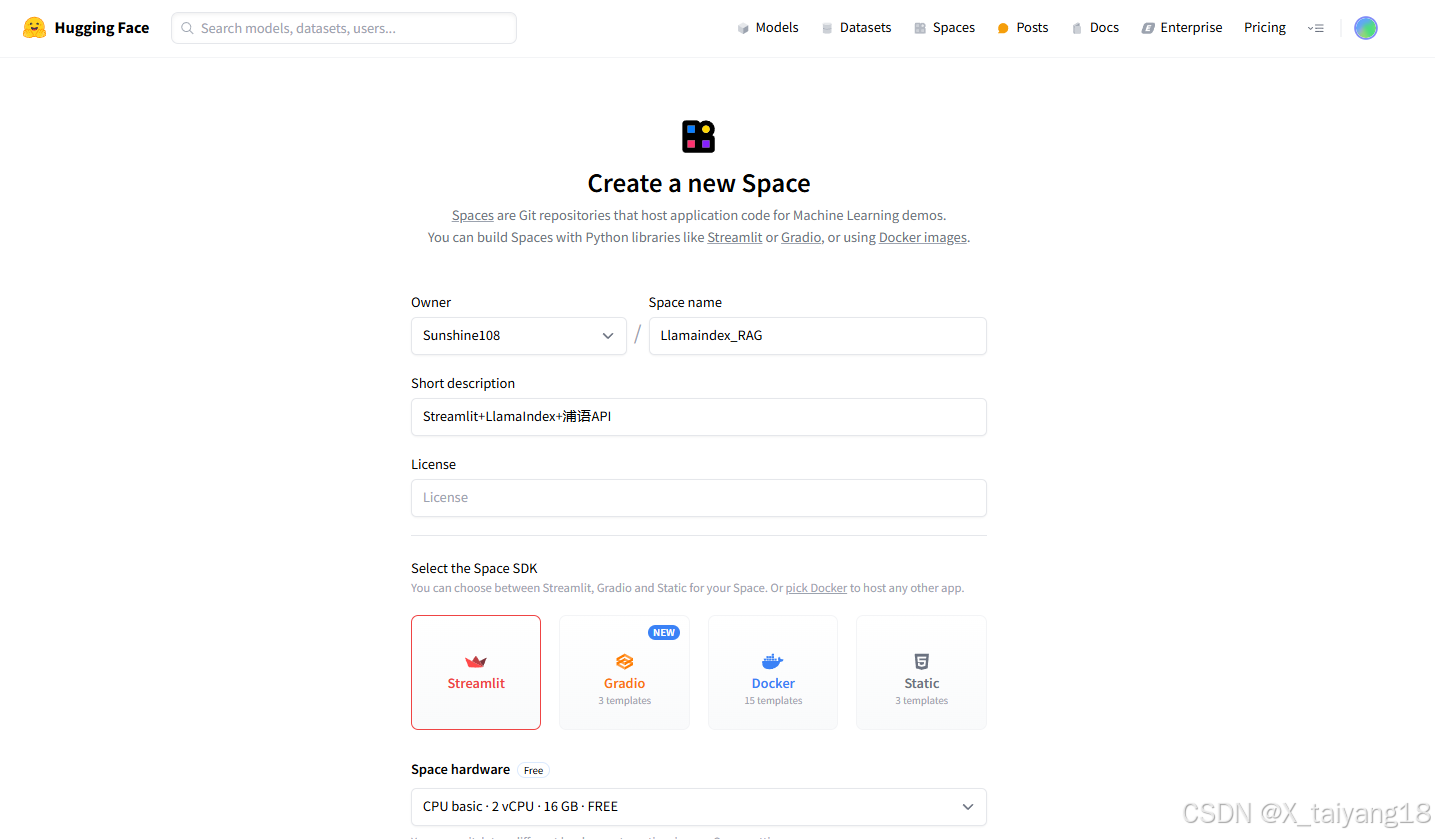

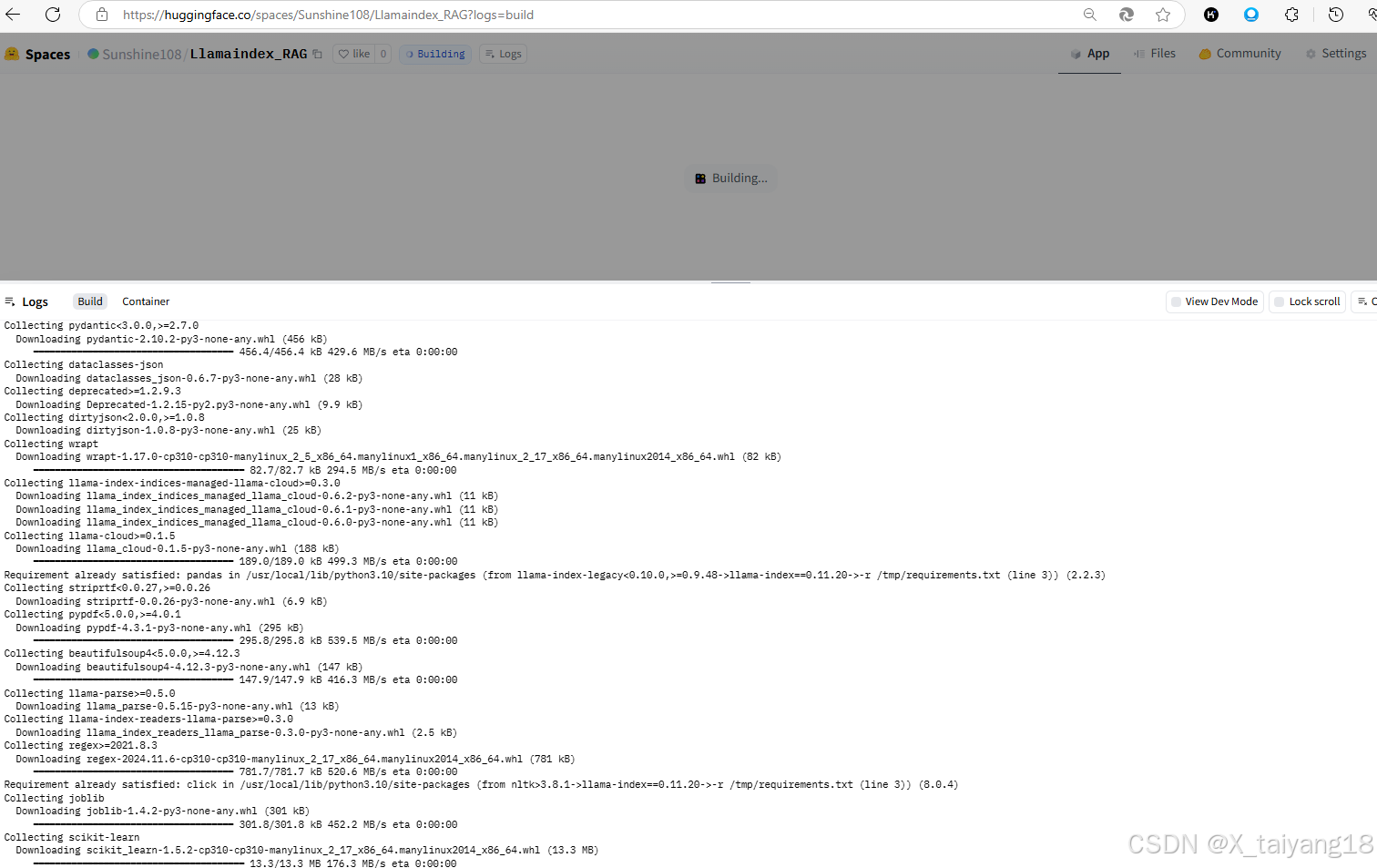

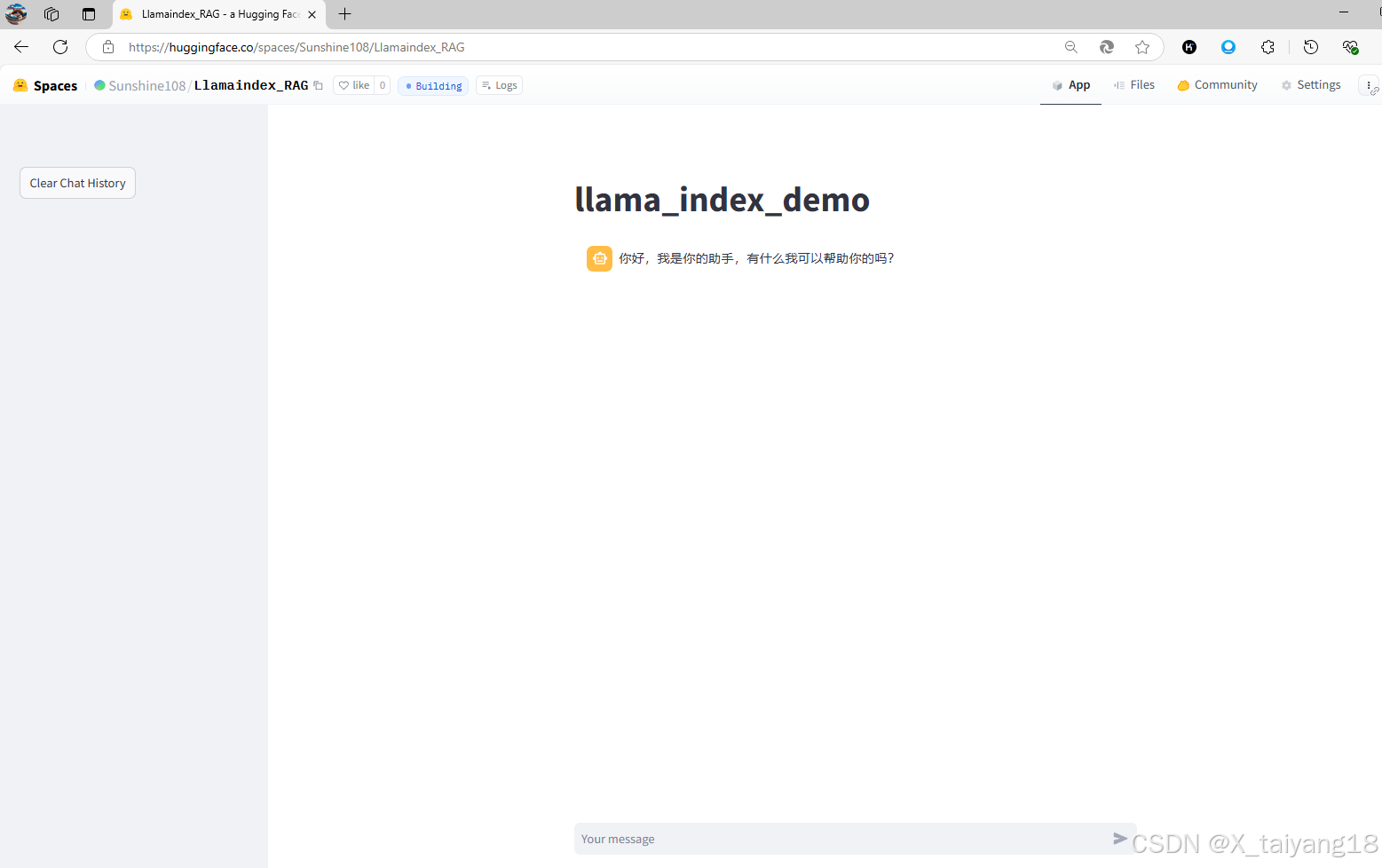

Deploy to Hugging Face

- 登录 Hugging Face 平台

进入Hugging Face官网,点击右上角头像,New Space,选择Streamlit。

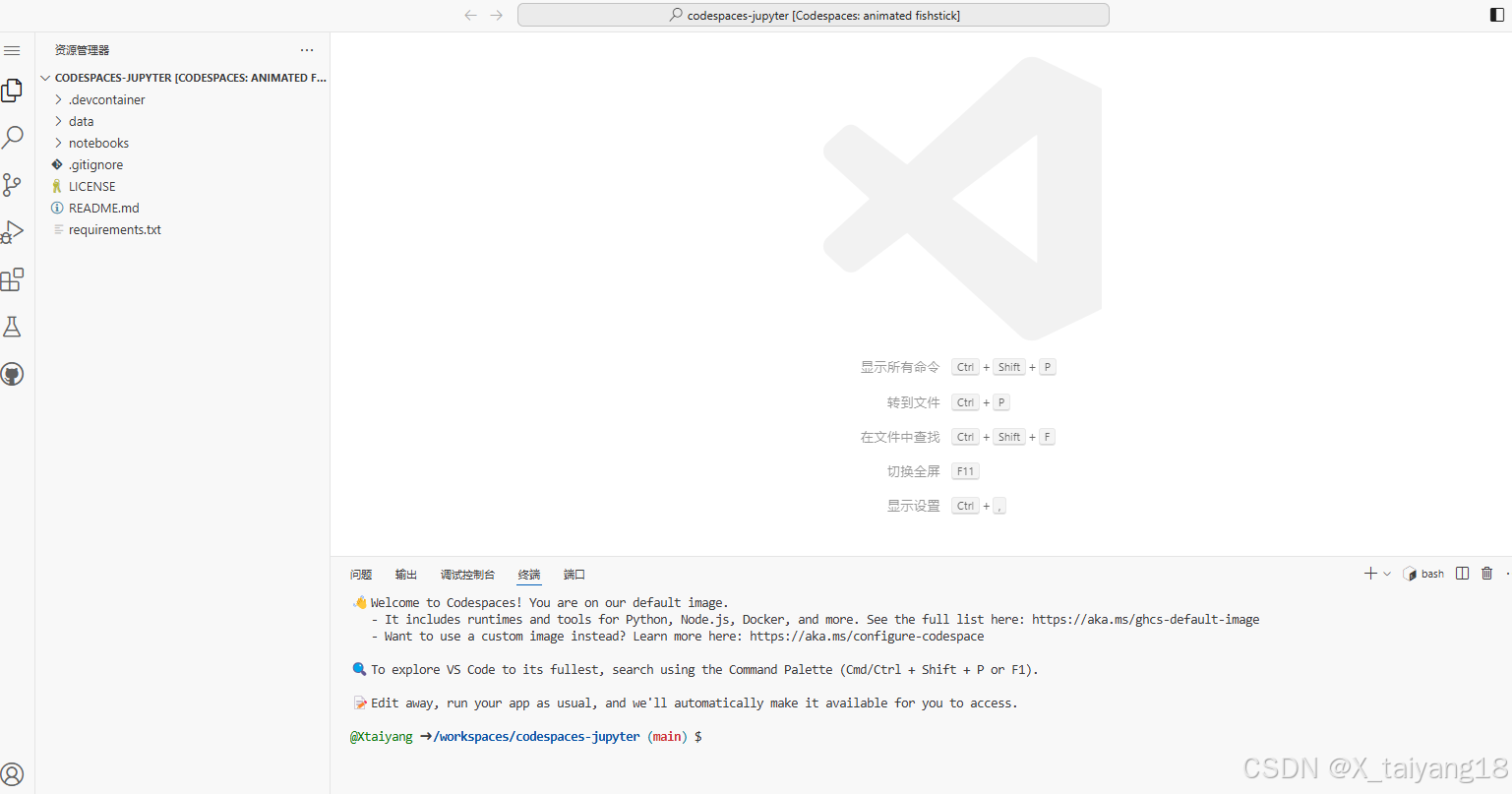

- 登录 GitHub CodeSpace 平台

- 创建requirements.txt,space在运行时会自动安装requirements.txt中的库。

einops==0.7.0 protobuf==3.20.3 llama-index==0.11.20 llama-index-llms-replicate==0.3.0 llama-index-llms-openai-like==0.2.0 llama-index-embeddings-huggingface==0.3.1 llama-index-embeddings-instructor==0.2.1 torch==2.5.0 torchvision==0.20.0 torchaudio==2.5.0 - 克隆自己在huggingface上刚刚创建的项目:

pip install huggingface_hub git clone https://huggingface.co/spaces/Sunshine108/Llamaindex_RAG - 切换至Llamaindex_RAG目录下,运行以下指令,新建一个 python 文件

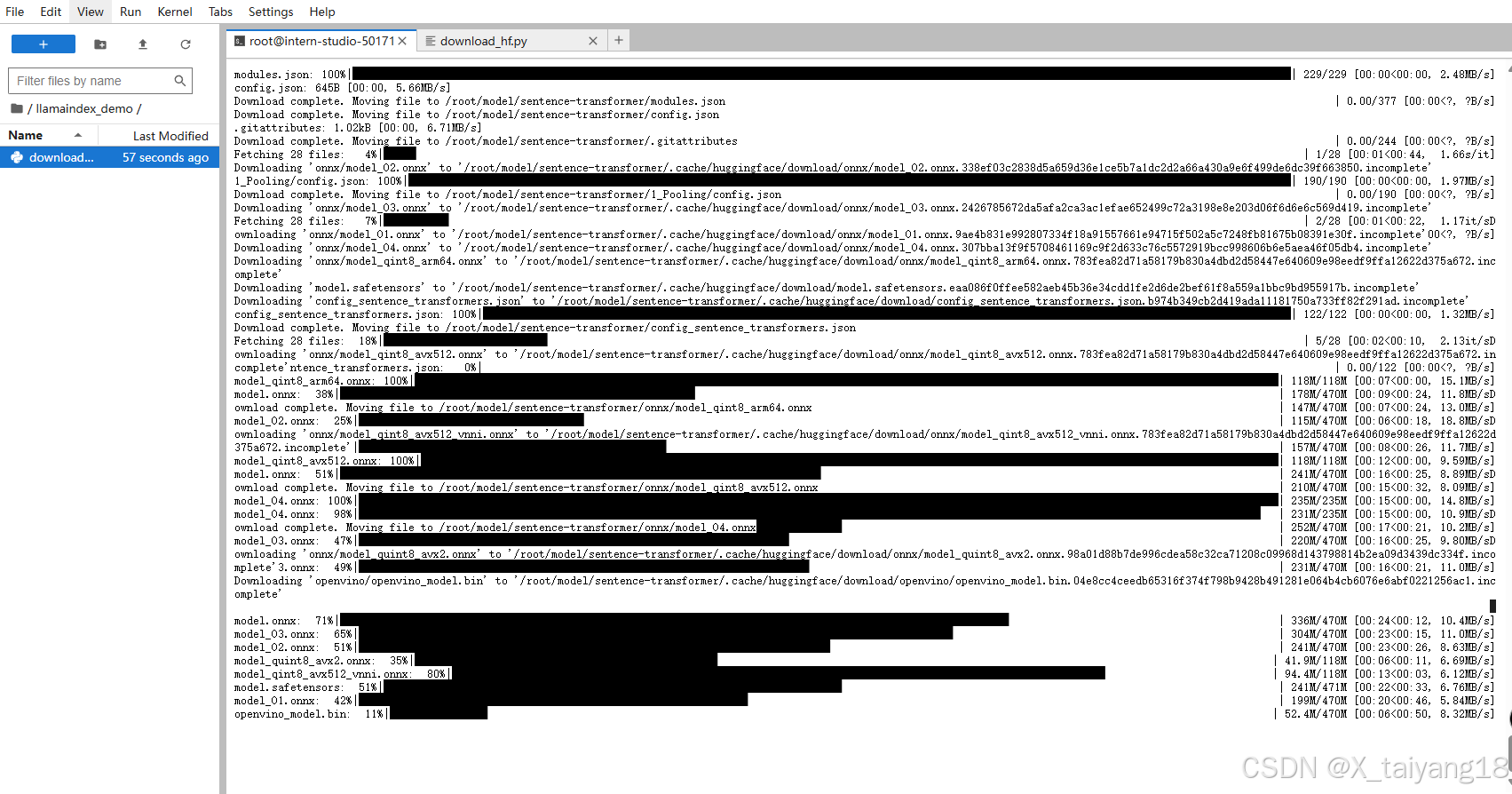

cd /workspaces/codespaces-jupyter/Llamaindex_RAG touch app.py - 在Llamaindex_RAG目录下创建model文件夹并下载sentence-transformer:

mkdir model huggingface-cli download --resume-download sentence-transformers/paraphrase-multilingual-MiniLM-L12-v2 --local-dir /workspaces/codespaces-jupyter/Llamaindex_RAG/model - 打开app.py贴入以下代码

import streamlit as st from llama_index.core import VectorStoreIndex, SimpleDirectoryReader, Settings from llama_index.embeddings.huggingface import HuggingFaceEmbedding from llama_index.legacy.callbacks import CallbackManager from llama_index.llms.openai_like import OpenAILike # Create an instance of CallbackManager callback_manager = CallbackManager() api_base_url = "https://internlm-chat.intern-ai.org.cn/puyu/api/v1/" model = "internlm2.5-latest" api_key = "eyJ0eXBlIjoiSldUIiwiYWxnIjoiSFM1MTIifQ.eyJqdGkiOiI1MDE3MTYwMCIsInJvbCI6IlJPTEVfUkVHSVNURVIiLCJpc3MiOiJPcGVuWExhYiIsImlhdCI6MTczMjg4NDg3NiwiY2xpZW50SWQiOiJlYm1ydm9kNnlvMG5semFlazF5cCIsInBob25lIjoiMTUyOTY2MjY3OTIiLCJ1dWlkIjoiYjA2NDA1MmQtNmEzYy00MDUwLThjOTgtNjc1ZDJmYzhhNTYxIiwiZW1haWwiOiIiLCJleHAiOjE3NDg0MzY4NzZ9.QRz3xCB7fE8rwNTlqHZ2H9K7wum0gt_pGEk7PtmxihKEnRKJ9oL-ULjaPr9SLTFUCGu_GRcTuNSWGWMDGyDZWw" # api_base_url = "https://api.siliconflow.cn/v1" # model = "internlm/internlm2_5-7b-chat" # api_key = "请填写 API Key" llm =OpenAILike(model=model, api_base=api_base_url, api_key=api_key, is_chat_model=True,callback_manager=callback_manager) st.set_page_config(page_title="llama_index_demo", page_icon="🦜🔗") st.title("llama_index_demo") # 初始化模型 @st.cache_resource def init_models(): embed_model = HuggingFaceEmbedding( model_name="model" ) Settings.embed_model = embed_model #用初始化llm Settings.llm = llm documents = SimpleDirectoryReader("data").load_data() index = VectorStoreIndex.from_documents(documents) query_engine = index.as_query_engine() return query_engine # 检查是否需要初始化模型 if 'query_engine' not in st.session_state: st.session_state['query_engine'] = init_models() def greet2(question): response = st.session_state['query_engine'].query(question) return response # Store LLM generated responses if "messages" not in st.session_state.keys(): st.session_state.messages = [{"role": "assistant", "content": "你好,我是你的助手,有什么我可以帮助你的吗?"}] # Display or clear chat messages for message in st.session_state.messages: with 
st.chat_message(message["role"]): st.write(message["content"]) def clear_chat_history(): st.session_state.messages = [{"role": "assistant", "content": "你好,我是你的助手,有什么我可以帮助你的吗?"}] st.sidebar.button('Clear Chat History', on_click=clear_chat_history) # Function for generating LLaMA2 response def generate_llama_index_response(prompt_input): return greet2(prompt_input) # User-provided prompt if prompt := st.chat_input(): st.session_state.messages.append({"role": "user", "content": prompt}) with st.chat_message("user"): st.write(prompt) # Gegenerate_llama_index_response last message is not from assistant if st.session_state.messages[-1]["role"] != "assistant": with st.chat_message("assistant"): with st.spinner("Thinking..."): response = generate_llama_index_response(prompt) placeholder = st.empty() placeholder.markdown(response) message = {"role": "assistant", "content": response} st.session_state.messages.append(message) - 在Llamaindex_RAG目录下创建data文件夹和README.md

mkdir data cd data git clone https://github.com/InternLM/xtuner.git mv xtuner/README_zh-CN.md ./

Contents of README.md:

    ---
    title: Llamaindex_RAG
    emoji: 🦙
    colorFrom: green
    colorTo: blue
    sdk: streamlit
    sdk_version: "1.39.0"
    app_file: app.py
    pinned: false
    ---

    # Llamaindex_RAG

    Welcome to Llamaindex_RAG.
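The block between the two `---` lines in README.md is the configuration that Hugging Face reads for the Space, so a typo there can keep the app from starting. The sketch below reads flat `key: value` pairs from such a block and checks a few fields. It is a minimal illustration rather than a YAML parser, and the function name `parse_front_matter` is made up.

```python
def parse_front_matter(text):
    """Parse flat key: value pairs between the first two --- lines."""
    lines = [ln.strip() for ln in text.splitlines() if ln.strip()]
    if not lines or lines[0] != "---":
        raise ValueError("front matter must start with ---")
    try:
        end = lines.index("---", 1)
    except ValueError:
        raise ValueError("missing closing ---") from None
    fields = {}
    for ln in lines[1:end]:
        key, _, value = ln.partition(":")
        # Drop surrounding whitespace and optional double quotes.
        fields[key.strip()] = value.strip().strip('"')
    return fields


readme = """---
title: Llamaindex_RAG
sdk: streamlit
sdk_version: "1.39.0"
app_file: app.py
pinned: false
---

# Llamaindex_RAG
"""

config = parse_front_matter(readme)
print(config["sdk"], config["sdk_version"], config["app_file"])
```

Here `sdk_version` matches the `streamlit==1.39.0` installed locally earlier, and `app_file` points at the app.py created above.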

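Now you can push to the remote repository:

    git add .
    git commit -m "demo"
    # Replace <your_hf_token> with your own Hugging Face access token; never publish a real token.
    git remote set-url origin https://Sunshine108:<your_hf_token>@huggingface.co/spaces/Sunshine108/Llamaindex_RAG
    git push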
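One detail is worth checking when a Space behaves differently from the dev machine: the requirements.txt for the Space pins protobuf==3.20.3, while the local environment earlier installed protobuf==5.26.1. The sketch below compares two lists of `name==version` pins and reports where they disagree. The helper names are hypothetical, and only three of the pins are reproduced here.

```python
def parse_pins(lines):
    """Map package name to pinned version for lines of the form name==version."""
    pins = {}
    for line in lines:
        line = line.split("#", 1)[0].strip()  # drop comments and whitespace
        if "==" in line:
            name, version = line.split("==", 1)
            pins[name.strip().lower()] = version.strip()
    return pins


def pin_conflicts(a, b):
    """Return {name: (version_in_a, version_in_b)} for differing pins."""
    return {n: (a[n], b[n]) for n in sorted(a.keys() & b.keys()) if a[n] != b[n]}


local_env = parse_pins(["einops==0.7.0", "protobuf==5.26.1", "llama-index==0.11.20"])
space_env = parse_pins(["einops==0.7.0", "protobuf==3.20.3", "llama-index==0.11.20"])
print(pin_conflicts(local_env, space_env))
```

Whether the protobuf difference matters depends on the other packages; the comparison only points out where the two environments diverge.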

Summary

As heaven moves with vigor, so the noble person strives unceasingly to improve (天行健,君子以自强不息).
