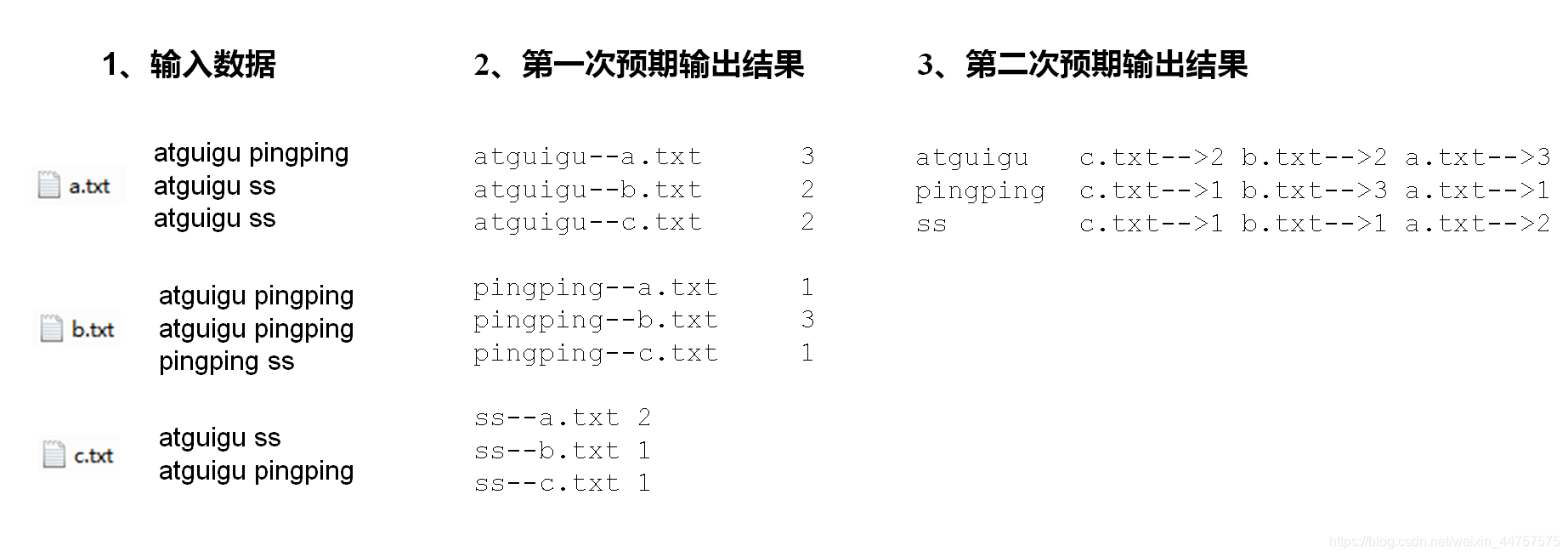

Inverted index example (chaining multiple jobs)

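- Requirements and analysis: given a large collection of text (documents, web pages), build a search index (as shown in the figure below)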

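- First pass:

(1) First pass: write the OneIndexMapper class

import java.io.IOException;

import org.apache.hadoop.io.IntWritable;
import org.apache.hadoop.io.LongWritable;
import org.apache.hadoop.io.Text;
import org.apache.hadoop.mapreduce.Mapper;
import org.apache.hadoop.mapreduce.lib.input.FileSplit;

public class OneIndexMapper extends Mapper<LongWritable, Text, Text, IntWritable>{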

String name;

Text k = new Text();

IntWritable v = new IntWritable();

@Override

protected void setup(Context context)throws IOException, InterruptedException {

// Get the name of the file this split belongs to

FileSplit split = (FileSplit) context.getInputSplit();

name = split.getPath().getName();

}

@Override

protected void map(LongWritable key, Text value, Context context) throws IOException, InterruptedException {

// 1 Read one line

String line = value.toString();

// 2 Split on spaces

String[] fields = line.split(" ");

for (String word : fields) {

// 3 Build the composite key: word--fileName

k.set(word+"--"+name);

v.set(1);

// 4 Emit

context.write(k, v);

}

}

}
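The effect of the first pass can be sketched without Hadoop. The snippet below is only an illustration under stated assumptions: it mimics the mapper's `word--fileName` composite key and then sums the occurrences per key, which is the job the pass-one reducer performs after the shuffle. `PassOneSketch`, `countPerFile`, and the sample data are hypothetical names and values, not part of the original code.

```java
import java.util.Arrays;
import java.util.List;
import java.util.Map;
import java.util.TreeMap;

// Plain-Java sketch of pass one (no Hadoop involved).
final class PassOneSketch {

    // files: file name -> lines of that file.
    // Returns "word--fileName" -> number of occurrences, sorted by key.
    static Map<String, Integer> countPerFile(Map<String, List<String>> files) {
        Map<String, Integer> counts = new TreeMap<>();
        for (Map.Entry<String, List<String>> file : files.entrySet()) {
            for (String line : file.getValue()) {
                for (String word : line.split(" ")) {
                    // Same composite key the mapper builds; adding 1 per
                    // occurrence stands in for emitting (key, 1) and summing.
                    counts.merge(word + "--" + file.getKey(), 1, Integer::sum);
                }
            }
        }
        return counts;
    }

    public static void main(String[] args) {
        Map<String, List<String>> files = new TreeMap<>();
        files.put("a.txt", Arrays.asList("atguigu pingping", "atguigu ss"));
        files.put("b.txt", Arrays.asList("atguigu pingping"));
        // Prints one "key<TAB>count" line per composite key.
        countPerFile(files).forEach((k, v) -> System.out.println(k + "\t" + v));
    }
}
```

Because the file name is part of the key, occurrences of the same word in different files are counted separately, which is what the second pass relies on.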

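(2) First pass: write the OneIndexReducer class

import java.io.IOException;

import org.apache.hadoop.io.IntWritable;
import org.apache.hadoop.io.Text;
import org.apache.hadoop.mapreduce.Reducer;

public class OneIndexReducer extends Reducer<Text, IntWritable, Text, IntWritable>{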

IntWritable v = new IntWritable();

@Override

protected void reduce(Text key, Iterable<IntWritable> values,Context context) throws IOException, InterruptedException {

int sum = 0;

// 1 Sum the counts

for(IntWritable value: values){

sum +=value.get();

}

v.set(sum);

// 2 Emit

context.write(key, v);

}

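}

(3) First pass: write the OneIndexDriver class

import java.io.IOException;

import org.apache.hadoop.conf.Configuration;
import org.apache.hadoop.fs.Path;
import org.apache.hadoop.io.IntWritable;
import org.apache.hadoop.io.Text;
import org.apache.hadoop.mapreduce.Job;
import org.apache.hadoop.mapreduce.lib.input.FileInputFormat;
import org.apache.hadoop.mapreduce.lib.output.FileOutputFormat;

public class OneIndexDriver {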

public static void main(String[] args) throws IOException, ClassNotFoundException, InterruptedException {

String inputPath = "E:\\input\\input4";

String outputPath = "E:\\output\\output6";

Configuration conf = new Configuration();

Job job = Job.getInstance(conf);

job.setJarByClass(OneIndexDriver.class);

job.setMapperClass(OneIndexMapper.class);

job.setReducerClass(OneIndexReducer.class);

job.setMapOutputKeyClass(Text.class);

job.setMapOutputValueClass(IntWritable.class);

job.setOutputKeyClass(Text.class);

job.setOutputValueClass(IntWritable.class);

FileInputFormat.setInputPaths(job,new Path(inputPath));

FileOutputFormat.setOutputPath(job,new Path(outputPath));

boolean res = job.waitForCompletion(true);

System.exit(res ? 0 : 1);

}

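}

- Second pass:

(1) Second pass: write the TwoIndexMapper class

import java.io.IOException;

import org.apache.hadoop.io.LongWritable;
import org.apache.hadoop.io.Text;
import org.apache.hadoop.mapreduce.Mapper;

public class TwoIndexMapper extends Mapper<LongWritable,Text,Text,Text> {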

Text k = new Text();

Text v = new Text();

@Override

protected void map(LongWritable key, Text value, Context context) throws IOException, InterruptedException {

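// Each input line is a pass-one output record: word--fileName<TAB>count

String line = value.toString();

// Split into the word and the remainder "fileName<TAB>count"

String[] split = line.split("--");

k.set(split[0]);

v.set(split[1]);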

context.write(k,v);

}

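}

(2) Second pass: write the TwoIndexReducer class

import java.io.IOException;

import org.apache.hadoop.io.Text;
import org.apache.hadoop.mapreduce.Reducer;

public class TwoIndexReducer extends Reducer<Text,Text,Text,Text> {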

Text value = new Text();

@Override

protected void reduce(Text key, Iterable<Text> values, Context context) throws IOException, InterruptedException {

// atguigu a.txt 3

// atguigu b.txt 2

// atguigu c.txt 2

// atguigu c.txt-->2 b.txt-->2 a.txt-->3

StringBuilder sb = new StringBuilder();

for (Text text : values) {

// Concatenate: turn "fileName<TAB>count" into "fileName-->count"

sb.append(text.toString().replace("\t","-->")+"\t");

}

value.set(sb.toString());

context.write(key,value);

}

}
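The second pass can also be sketched in plain Java. This is a minimal illustration, assuming the input lines have the `word--fileName<TAB>count` shape that the first job writes. `PassTwoSketch` and `buildIndex` are hypothetical names. Unlike the mapper above, the sketch splits with a limit of 2 and skips malformed lines, and it keeps postings in input order, whereas the order of values reaching a real reducer is not guaranteed.

```java
import java.util.Arrays;
import java.util.List;
import java.util.Map;
import java.util.TreeMap;

// Plain-Java sketch of pass two (no Hadoop involved).
final class PassTwoSketch {

    // passOneLines: lines shaped like "word--fileName<TAB>count".
    // Returns word -> "fileName-->count<TAB>fileName-->count<TAB>...".
    static Map<String, String> buildIndex(List<String> passOneLines) {
        Map<String, StringBuilder> grouped = new TreeMap<>();
        for (String line : passOneLines) {
            // "Map" step: separate the word from "fileName<TAB>count".
            String[] parts = line.split("--", 2);
            if (parts.length < 2) {
                continue; // malformed line: skipped in this sketch
            }
            // "Reduce" step: append the posting to the word's list.
            String posting = parts[1].replace("\t", "-->");
            grouped.computeIfAbsent(parts[0], w -> new StringBuilder())
                   .append(posting).append("\t");
        }
        Map<String, String> index = new TreeMap<>();
        grouped.forEach((word, sb) -> index.put(word, sb.toString()));
        return index;
    }

    public static void main(String[] args) {
        List<String> lines = Arrays.asList(
                "atguigu--a.txt\t3",
                "atguigu--b.txt\t2",
                "pingping--a.txt\t1");
        buildIndex(lines).forEach((k, v) -> System.out.println(k + "\t" + v));
    }
}
```

As in the reducer above, each posting list ends with a trailing tab; trimming it would be a small follow-up if the output is consumed by another tool.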

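(3) Second pass: write the TwoIndexDriver class

import org.apache.hadoop.conf.Configuration;
import org.apache.hadoop.fs.Path;
import org.apache.hadoop.io.Text;
import org.apache.hadoop.mapreduce.Job;
import org.apache.hadoop.mapreduce.lib.input.FileInputFormat;
import org.apache.hadoop.mapreduce.lib.output.FileOutputFormat;

public class TwoIndexDriver {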

public static void main(String[] args) throws Exception {

String inputPath = "E:\\output\\output6";

String outputPath = "E:\\output\\output2";

Configuration conf = new Configuration();

Job job = Job.getInstance(conf);

job.setJarByClass(TwoIndexDriver.class);

job.setMapperClass(TwoIndexMapper.class);

job.setReducerClass(TwoIndexReducer.class);

job.setMapOutputKeyClass(Text.class);

job.setMapOutputValueClass(Text.class);

job.setOutputKeyClass(Text.class);

job.setOutputValueClass(Text.class);

FileInputFormat.setInputPaths(job,new Path(inputPath));

FileOutputFormat.setOutputPath(job,new Path(outputPath));

boolean res = job.waitForCompletion(true);

System.exit(res ? 0 : 1);

}

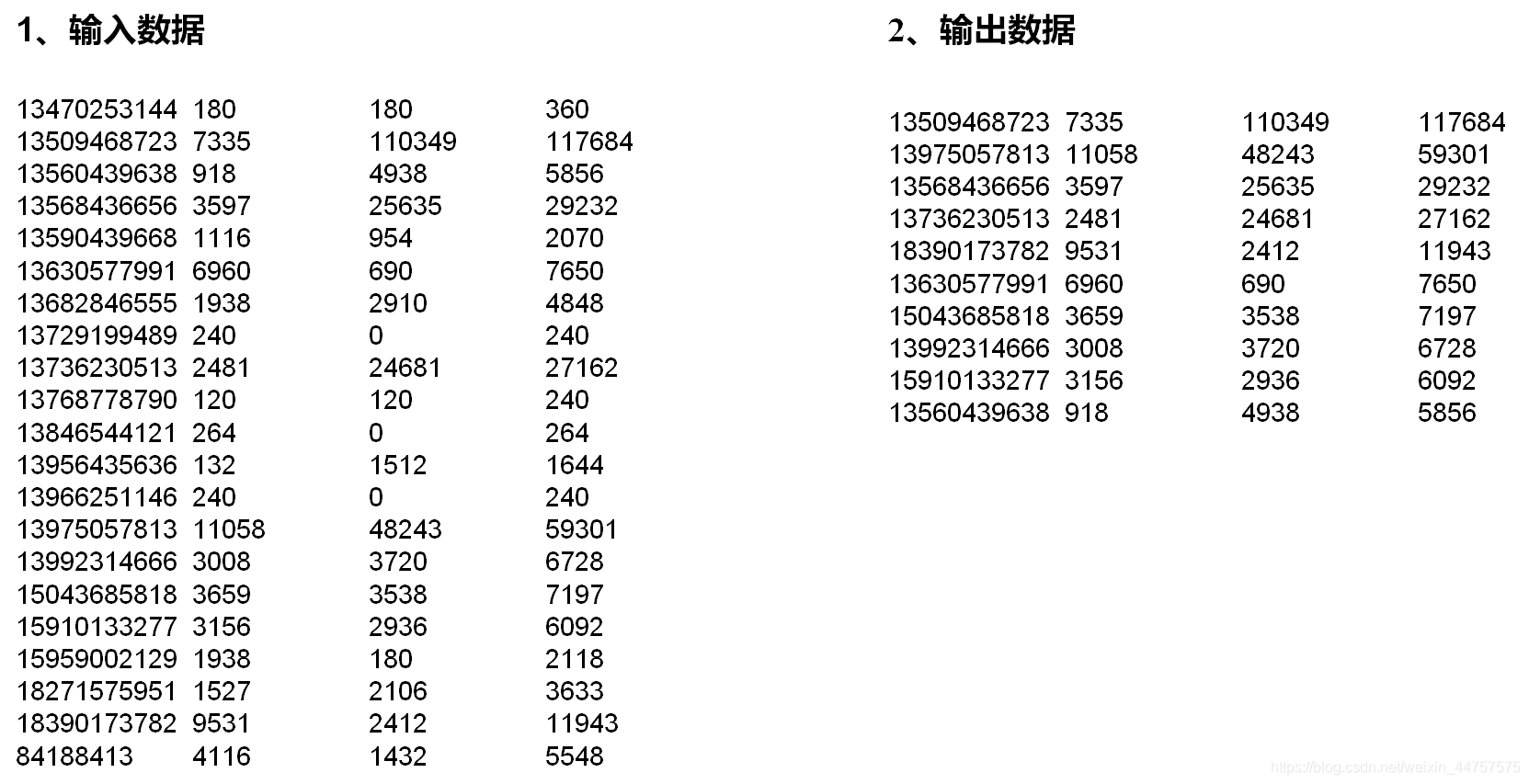

}TopN案例

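Requirements: output the 10 records with the largest total flow (upstream plus downstream).

Implementation:

(1) Write the FlowBean class:

import java.io.DataInput;
import java.io.DataOutput;
import java.io.IOException;

import org.apache.hadoop.io.WritableComparable;

public class FlowBean implements WritableComparable<FlowBean> {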

private long upFlow;

private long downFlow;

private long sumFlow;

public FlowBean() {

super();

}

public FlowBean(long upFlow, long downFlow) {

super();

this.upFlow = upFlow;

this.downFlow = downFlow;

// Keep the total consistent with set(upFlow, downFlow)

this.sumFlow = upFlow + downFlow;

}

@Override

public void write(DataOutput dataOutput) throws IOException {

dataOutput.writeLong(upFlow);

dataOutput.writeLong(downFlow);

dataOutput.writeLong(sumFlow);

}

@Override

public void readFields(DataInput dataInput) throws IOException {

upFlow = dataInput.readLong();

downFlow = dataInput.readLong();

sumFlow = dataInput.readLong();

}

public long getUpFlow() {

return upFlow;

}

public void setUpFlow(long upFlow) {

this.upFlow = upFlow;

}

public long getDownFlow() {

return downFlow;

}

public void setDownFlow(long downFlow) {

this.downFlow = downFlow;

}

public long getSumFlow() {

return sumFlow;

}

public void setSumFlow(long sumFlow) {

this.sumFlow = sumFlow;

}

public void set(long upFlow, long downFlow) {

this.upFlow = upFlow;

this.downFlow = downFlow;

this.sumFlow = upFlow + downFlow;

}

@Override

public String toString() {

return upFlow + "\t" + downFlow + "\t" + sumFlow;

}

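// Sort by total flow in descending order. Beans with equal totals compare as 0,
// so a TreeMap treats them as the same key and keeps only one of them.

@Override

public int compareTo(FlowBean flowBean) {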

if (this.getSumFlow() > flowBean.getSumFlow())

return -1;

else if (this.getSumFlow() < flowBean.getSumFlow())

return 1;

else

return 0;

}

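}

(2) Write the TopNMapper class

import java.io.IOException;
import java.util.Iterator;
import java.util.TreeMap;

import org.apache.hadoop.io.LongWritable;
import org.apache.hadoop.io.Text;
import org.apache.hadoop.mapreduce.Mapper;

public class TopNMapper extends Mapper<LongWritable, Text, FlowBean, Text> {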

private FlowBean k;

private Text v;

// A TreeMap serves as the container; it keeps its entries sorted by key

private TreeMap<FlowBean, Text> flowMap = new TreeMap<FlowBean, Text>();

@Override

protected void map(LongWritable key, Text value, Context context) throws IOException, InterruptedException {

k = new FlowBean();

v = new Text();

// 1 Read one line

String line = value.toString();

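// 2 Split on tabs

String[] fields = line.split("\t");

// 3 Populate the key and value: the second column becomes the value,
// the third-to-last and second-to-last columns are the upstream and downstream flow

v.set(fields[1]);

String upFlow = fields[fields.length - 3];

String downFlow = fields[fields.length - 2];

k.set(Long.parseLong(upFlow), Long.parseLong(downFlow));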

// 4 Add the record to the TreeMap

flowMap.put(k,v);

// 5 Cap the TreeMap at 10 entries: once it holds more, remove the entry with the smallest total flow

if(flowMap.size() > 10){

flowMap.remove(flowMap.lastKey());

}

}

@Override

protected void cleanup(Context context) throws IOException, InterruptedException {

// 6 Iterate over the TreeMap and emit its entries

Iterator<FlowBean> iterator = flowMap.keySet().iterator();

while (iterator.hasNext()){

FlowBean k = iterator.next();

context.write(k,flowMap.get(k));

}

}

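}

(3) Write the TopNReducer class

import java.io.IOException;
import java.util.Iterator;
import java.util.TreeMap;

import org.apache.hadoop.io.Text;
import org.apache.hadoop.mapreduce.Reducer;

public class TopNReducer extends Reducer<FlowBean,Text,Text,FlowBean> {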

// A TreeMap serves as the container; it keeps its entries sorted by key

TreeMap<FlowBean, Text> flowMap = new TreeMap<FlowBean, Text>();

@Override

protected void reduce(FlowBean key, Iterable<Text> values, Context context) throws IOException, InterruptedException {

for (Text v : values) {

FlowBean k = new FlowBean();

k.set(key.getUpFlow(),key.getDownFlow());

// 1 Add the record to the TreeMap

flowMap.put(k,new Text(v));

// 2 Cap the TreeMap at 10 entries: once it holds more, remove the entry with the smallest total flow

if (flowMap.size()>10)

flowMap.remove(flowMap.lastKey());

}

}

@Override

protected void cleanup(Context context) throws IOException, InterruptedException {

// 3 Iterate over the TreeMap and emit its entries

Iterator<FlowBean> iterator = flowMap.keySet().iterator();

while (iterator.hasNext()){

FlowBean v = iterator.next();

context.write(new Text(flowMap.get(v)),v);

}

}

}
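The mapper and reducer above share one pattern: insert into a `TreeMap` sorted in descending order, then drop `lastKey()` whenever the map grows past the limit. The sketch below isolates that pattern in plain Java, using a `Long` total as the key in place of `FlowBean`. `BoundedTopN`, `topN`, and the sample labels are hypothetical. It also shows a consequence of keying on the total alone: two entries with the same total occupy one slot, so the later one replaces the earlier one.

```java
import java.util.ArrayList;
import java.util.Comparator;
import java.util.LinkedHashMap;
import java.util.List;
import java.util.Map;
import java.util.TreeMap;

// Plain-Java sketch of the bounded TreeMap pattern (no Hadoop involved).
final class BoundedTopN {

    // Returns the labels of the n largest totals, largest first.
    static List<String> topN(Map<String, Long> totalByLabel, int n) {
        // Descending key order, so lastKey() is always the smallest total held.
        TreeMap<Long, String> top =
                new TreeMap<Long, String>(Comparator.<Long>reverseOrder());
        for (Map.Entry<String, Long> e : totalByLabel.entrySet()) {
            top.put(e.getValue(), e.getKey()); // an equal total replaces the earlier label
            if (top.size() > n) {
                top.remove(top.lastKey());     // evict the smallest total
            }
        }
        return new ArrayList<>(top.values());
    }

    public static void main(String[] args) {
        Map<String, Long> totals = new LinkedHashMap<>();
        totals.put("A", 100L);
        totals.put("B", 300L);
        totals.put("C", 200L);
        totals.put("D", 300L); // same total as B
        System.out.println(topN(totals, 2)); // prints [D, C]: B was replaced by D
    }
}
```

If ties must be preserved, one option is to extend the comparison with a tie-breaker such as the label, at the cost of changing how equal totals are grouped.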

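(4) Write the TopNDriver class

import org.apache.hadoop.conf.Configuration;
import org.apache.hadoop.fs.Path;
import org.apache.hadoop.io.Text;
import org.apache.hadoop.mapreduce.Job;
import org.apache.hadoop.mapreduce.lib.input.FileInputFormat;
import org.apache.hadoop.mapreduce.lib.output.FileOutputFormat;

public class TopNDriver {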

public static void main(String[] args) throws Exception {

String inputPath = "E:\\input\\input2";

String outputPath = "E:\\output\\output3";

Configuration configuration = new Configuration();

Job job = Job.getInstance(configuration);

job.setJarByClass(TopNDriver.class);

job.setMapperClass(TopNMapper.class);

job.setReducerClass(TopNReducer.class);

job.setMapOutputKeyClass(FlowBean.class);

job.setMapOutputValueClass(Text.class);

job.setOutputKeyClass(Text.class);

job.setOutputValueClass(FlowBean.class);

FileInputFormat.setInputPaths(job, new Path(inputPath));

FileOutputFormat.setOutputPath(job, new Path(outputPath));

boolean res = job.waitForCompletion(true);

System.exit(res ? 0 : 1);

}

}
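Taken together, the TopN classes select in two stages: every mapper keeps only its local top 10 and emits them in `cleanup`, and the reducer picks the global top 10 from those candidates. This only yields a global result when all candidates reach the same reducer; the driver above does not set the number of reduce tasks, so it relies on Hadoop's default of one. The plain-Java sketch below illustrates why the two-stage selection agrees with a direct selection over all data. `LocalThenGlobalTopN` and its methods are hypothetical names, and plain `Long` values stand in for the flow records.

```java
import java.util.ArrayList;
import java.util.Arrays;
import java.util.Comparator;
import java.util.List;

// Plain-Java sketch of local-then-global top-N selection (no Hadoop involved).
final class LocalThenGlobalTopN {

    // Direct selection: the n largest values, largest first.
    static List<Long> topN(List<Long> values, int n) {
        List<Long> sorted = new ArrayList<>(values);
        sorted.sort(Comparator.<Long>reverseOrder());
        return new ArrayList<>(sorted.subList(0, Math.min(n, sorted.size())));
    }

    // Two-stage selection: top n per split first, then top n of the candidates.
    static List<Long> topNViaSplits(List<List<Long>> splits, int n) {
        List<Long> candidates = new ArrayList<>();
        for (List<Long> split : splits) {
            candidates.addAll(topN(split, n)); // what each "mapper" would emit
        }
        return topN(candidates, n);            // what the single "reducer" keeps
    }

    public static void main(String[] args) {
        List<List<Long>> splits = Arrays.asList(
                Arrays.asList(5L, 1L, 9L),
                Arrays.asList(7L, 3L),
                Arrays.asList(8L, 2L, 6L));
        System.out.println(topNViaSplits(splits, 2)); // prints [9, 8]
    }
}
```

Any value in the global top N is necessarily in the top N of its own split, so discarding the rest on the map side loses nothing while reducing the data sent through the shuffle.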
