目录

2.1 Infiniband RDMA and RoCE (Infiniband RDMA和ROCE)

2.2 Priority Flow Control (优先级流控)

2.3 iWARP vs RoCE (iWARP对比RoCE )

3.1 IRN’s Loss Recovery Mechanism (IRN丢包恢复机制)

3.2 IRN’s BDP-FC Mechanism (IRN的BDP-FC机制)

4 Evaluating IRN’s Transport Logic (评估IRN的传输层逻辑)

4.1 Experimental Settings (实验设置)

4.3 Factor Analysis of IRN (IRN的因子分析)

4.4 Robustness of Basic Results (基础结果的鲁棒性)

4.5 Comparison with Resilient RoCE (与弹性RoCE比较)

4.6 Comparison with iWARP (与iWARP对比)

5 Implementation Considerations (实现考量)

5.2 Supporting RDMA Reads and Atomics (支持RDMA读和原子)

5.3 Supporting Out-of-order Packet Delivery (支持乱序包交付)

5.4 Other Considerations (其它考量)

原文:https://www.cnblogs.com/alpaca/p/9663655.html

转自:https://my.oschina.net/u/4267906/blog/3822372

PPT报告:http://conferences.sigcomm.org/sigcomm/2018/files/slides/paper_6.2.pdf

Abstract (摘要)

The advent of RoCE (RDMA over Converged Ethernet) has led to a signifcant increase in the use of RDMA in datacenter networks. To achieve good performance, RoCE requires a lossless network which is in turn achieved by enabling Priority Flow Control (PFC) within the network. However, PFC brings with it a host of problems such as head-of-the-line blocking, congestion spreading, and occasional deadlocks. Rather than seek to fix these issues, we instead ask: is PFC fundamentally required to support RDMA over Ethernet?

RoCE(RDMA over Converged Ethernet,基于融合以太网的RDMA)的出现使得RDMA在数据中心网络中的使用量显着增加。为了获得良好的性能,RoCE要求网络是不丢包网络,这通过在网络中启用优先级流控(Priority Flow Control, PFC)来实现。然而,PFC带来了许多问题,例如队头阻塞、拥塞扩散和偶尔的死锁。 我们不是解决这些问题,而是要询问:为了支持基于以太网上的RDMA,RFC是否是必须的?

We show that the need for PFC is an artifact of current RoCE NIC designs rather than a fundamental requirement. We propose an improved RoCE NIC (IRN) design that makes a few simple changes to the RoCE NIC for better handling of packet losses. We show that IRN (without PFC) outperforms RoCE (with PFC) by 6-83% for typical network scenarios. Thus not only does IRN eliminate the need for PFC, it improves performance in the process! We further show that the changes that IRN introduces can be implemented with modest overheads of about 3-10% to NIC resources. Based on our results, we argue that research and industry should rethink the current trajectory of network support for RDMA.

我们将展示对PFC的需求是当前RoCE NIC设计的一种人为因素,而不是基本要求。我们提出了一种改进的RoCE NIC (IRN)设计,通过对RoCE NIC进行一些简单的更改,以便更好地处理数据包丢失。我们指出对于典型的网络场景,IRN(没有PFC)优于RoCE(使用PFC) 6-83%。 因此,IRN不仅消除了对PFC的需求,而且还提高了处理过程中的性能!我们进一步展示,IRN引入的更改可以通过大约3-10%的适度NIC资源开销来实现。根据我们的结果,我们认为研究界和工业界应重新考虑当前RDMA的网络支持。

1 Introduction (引言)

Datacenter networks offer higher bandwidth and lower latency than traditional wide-area networks. However, traditional endhost networking stacks, with their high latencies and substantial CPU overhead, have limited the extent to which applications can make use of these characteristics. As a result, several large datacenters have recently adopted RDMA, which bypasses the traditional networking stacks in favor of direct memory accesses.

与传统的广域网相比,数据中心网络具有更高的带宽和更低的延迟。但是,传统的主机端网络协议栈具有高延迟和较大的CPU开销,这限制了应用程序利用数据中心网络高带宽和低延迟特性的程度。因此,最近几个大型数据中心采用了RDMA;RDMA绕过了传统的网络协议栈,采用直接内存访问。

RDMA over Converged Ethernet (RoCE) has emerged as the canonical method for deploying RDMA in Ethernet-based datacenters [23, 38]. The centerpiece of RoCE is a NIC that (i) provides mechanisms for accessing host memory without CPU involvement and (ii) supports very basic network transport functionality. Early experience revealed that RoCE NICs only achieve good end-to-end performance when run over a lossless network, so operators turned to Ethernet’s Priority Flow Control (PFC) mechanism to achieve minimal packet loss. The combination of RoCE and PFC has enabled a wave of datacenter RDMA deployments.

融合以太网上的RDMA(RoCE)已经成为在基于以太网的数据中心中部署RDMA的规范方法[23,38]。RoCE的核心是一个NIC,它(i)提供了在没有CPU参与的情况下访问主机内存的机制,并(ii)支持非常基本的网络传输功能。早期的经验表明,RoCE NIC只有在不丢包网络上运行时才能取得良好的端到端性能,因此运营商转向以太网优先级流控(PFC)机制,以实现最少的数据包丢失。 RoCE和PFC的组合成为数据中心RDMA部署的浪潮。

However, the current solution is not without problems. In particular, PFC adds management complexity and can lead to signifcant performance problems such as head-of-the-line blocking, congestion spreading, and occasional deadlocks [23, 24, 35, 37, 38]. Rather than continue down the current path and address the various problems with PFC, in this paper we take a step back and ask whether it was needed in the first place. To be clear, current RoCE NICs require a lossless fabric for good performance. However, the question we raise is: can the RoCE NIC design be altered so that we no longer need a lossless network fabric?

然而,目前的解决方案仍然存在问题。特别地,PFC增加了管理的复杂性,并可能导致严重的性能问题,如队头阻塞、拥塞传播和偶尔的死锁[23,24,35,37,38]。 与继续沿着当前路径解决PFC的各种问题不同,本文中我们退后一步,询问PFC是否是必须的。 需要明确的是,目前的RoCE NIC需要网络是不丢包的才能获得良好的性能。 但是,我们提出的问题是:RoCE网卡设计是否可以改变,以便我们不再需要不丢包网络?

We answer this question in the affirmative, proposing a new design called IRN (for Improved RoCE NIC) that makes two incremental changes to current RoCE NICs (i) more efficient loss recovery, and (ii) basic end-to-end flow control to bound the number of in-flight packets (§3). We show, via extensive simulations on a RoCE simulator obtained from a commercial NIC vendor, that IRN performs better than current RoCE NICs, and that IRN does not require PFC to achieve high performance; in fact, IRN often performs better without PFC (§4). We detail the extensions to the RDMA protocol that IRN requires (§5) and use comparative analysis and FPGA synthesis to evaluate the overhead that IRN introduces in terms of NIC hardware resources (§6). Our results suggest that adding IRN functionality to current RoCE NICs would add as little as 3-10% overhead in resource consumption, with no deterioration in message rates.

我们肯定地回答了这个问题,提出了一个名为IRN(Improved RoCE NIC,改进的RoCE NIC)的新设计,它对当前的RoCE NIC进行了两个增量式更改

(i)更有效的丢包恢复机制,以及

(ii)基本的端到端流控(限制飞行中(in-flight)数据包的数量(§3))。

我们在从商用NIC供应商处获得的RoCE仿真器上进行了大量仿真,结果表明IRN的性能优于当前的RoCE NIC,并且IRN不需要PFC来取得高性能。实际上,IRN在没有PFC的情况下通常表现更好(§4)。 我们详细介绍了IRN要求的对RDMA协议的扩展(§5),并使用比较分析和FPGA综合来评估IRN在NIC硬件资源方面引入的开销(§6)。我们的结果表明,在当前的RoCE网卡中添加IRN功能会增加3-10%的资源开销,而不会降低消息速率。

A natural question that arises is how IRN compares to iWARP? iWARP [33] long ago proposed a similar philosophy as IRN: handling packet losses efficiently in the NIC rather than making the network lossless. What we show is that iWARP’s failing was in its design choices. The differences between iWARP and IRN designs stem from their starting points: iWARP aimed for full generality which led them to put the full TCP/IP stack on the NIC, requiring multiple layers of translation between RDMA abstractions and traditional TCP bytestream abstractions. As a result, iWARP NICs are typically far more complex than RoCE ones, with higher cost and lower performance (§2). In contrast, IRN starts with the much simpler design of RoCE and asks what minimal features can be added to eliminate the need for PFC.

一个明显的问题是与iWARP比较,IRN如何?很久以前,iWARP [33]提出了与IRN类似的学说:在NIC中有效地处理数据包丢失而不是使网络不丢包。我们展示的是iWARP的失败在于其设计选择。iWARP和IRN设计之间的差异源于他们的出发点:iWARP旨在实现全面的通用性,这使得他们将完整的TCP/IP协议栈实现于NIC中,需要在RDMA抽象和传统的TCP字节流抽象之间进行多层转换。 因此,iWARP NIC通常比RoCE更复杂、成本更高,且性能更低(§2)。相比之下,IRN从更简单的RoCE设计开始,并询问可以通过添加哪些最小功能以消除对PFC的需求。

More generally: while the merits of iWARP vs. RoCE has been a long-running debate in industry, there is no conclusive or rigorous evaluation that compares the two architectures. Instead, RoCE has emerged as the de-facto winner in the marketplace, and brought with it the implicit (and still lingering) assumption that a lossless fabric is necessary to achieve RoCE’s high performance. Our results are the first to rigorously show that, counter to what market adoption might suggest, iWARP in fact had the right architectural philosophy, although a needlessly complex design approach.

更一般地说:虽然iWARP与RoCE的优点一直是业界长期争论的问题,但没有比较两种架构的结论性或严格的评估。相反,RoCE已成为市场上事实上的赢家,并带来了隐含(并且仍然挥之不去)的假设,即不丢包网络是实现RoCE高性能所必需的。我们的结果是第一个严格表明(与市场采用建议相反),尽管具有一种不必要的复杂设计方法,iWARP实际上具有正确的架构理念。

Hence, one might view IRN and our results in one of two ways: (i) a new design for RoCE NICs which, at the cost of a few incremental modifcations, eliminates the need for PFC and leads to better performance, or, (ii) a new incarnation of the iWARP philosophy which is simpler in implementation and faster in performance.

因此,可以通过以下两种方式之一来审视IRN和我们的结果:(i)RoCE NIC的新设计,以少量增量修改为代价,消除了对PFC的需求并导致更好的性能,或者,(ii)iWARP理念的新实现,其实现更简单,性能更好。

2 Background (背景)

We begin with reviewing some relevant background.

我们以回顾一些相关背景开始。

2.1 Infiniband RDMA and RoCE (Infiniband RDMA和ROCE)

RDMA has long been used by the HPC community in special-purpose Infniband clusters that use credit-based flow control to make the network lossless [4]. Because packet drops are rare in such clusters, the RDMA Infiniband transport (as implemented on the NIC) was not designed to efficiently recover from packet losses. When the receiver receives an out-of-order packet, it simply discards it and sends a negative acknowledgement (NACK) to the sender. When the sender sees a NACK, it retransmits all packets that were sent after the last acknowledged packet (i.e., it performs a go-back-N retransmission).

长期以来,HPC(高性能计算)社区一直在特定用途的Infiniband集群中使用RDMA,这些集群使用基于信用的流控制来使网络不丢包[4]。由于数据包丢失在此类群集中很少见,因此RDMA Infiniband传输层(在NIC上实现)并非旨在高效恢复数据包丢失。 当接收方收到无序数据包时,它只是丢弃该数据包并向发送方发送 否定确认(NACK)。当发送方看到NACK时,它重新发送在最后一个确认的数据包之后发送的所有数据包(即,它执行 go-back-N重传)。

To take advantage of the widespread use of Ethernet in datacenters, RoCE [5, 9] was introduced to enable the use of RDMA over Ethernet. RoCE adopted the same Infiniband transport design (including go-back-N loss recovery), and the network was made lossless using PFC.

为了利用以太网在数据中心中的广泛使用的优势,引入了RoCE [5,9],以便在以太网上使用RDMA(我们对RoCE [5]及其后继者RoCEv2 [9]使用术语RoCE,RoCEv2使得RDMA不仅可以通过以太网运行,还可以运行在IP路由网络(因为RoCEv2使用UDP,包含IP头,可以用IP路由)。RoCE采用了相同的Infiniband传输层设计(包括回退N(go-back-N)重传),并且使用PFC使网络不丢包。

2.2 Priority Flow Control (优先级流控)

Priority Flow Control (PFC) [6] is Ethernet’s flow control mechanism, in which a switch sends a pause (or X-OFF) frame to the upstream entity (a switch or a NIC), when the queue exceeds a certain confgured threshold. When the queue drains below this threshold, an X-ON frame is sent to resume transmission. When confgured correctly, PFC makes the network lossless (as long as all network elements remain functioning). However, this coarse reaction to congestion is agnostic to which flows are causing it and this results in various performance issues that have been documented in numerous papers in recent years [23, 24, 35, 37, 38]. These issues range from mild (e.g., unfairness and head-of-line blocking) to severe, such as “pause spreading” as highlighted in [23] and even network deadlocks [24, 35, 37]. In an attempt to mitigate these issues, congestion control mechanisms have been proposed for RoCE (e.g., DCQCN [38] and Timely [29]) which reduce the sending rate on detecting congestion, but are not enough to eradicate the need for PFC. Hence, there is now a broad agreement that PFC makes networks harder to understand and manage, and can lead to myriad performance problems that need to be dealt with.

优先级流控(PFC)[6]是以太网的流量控制机制,当队列超过某个特定的配置阈值时,交换机会向上游实体(交换机或NIC)发送暂停(或X-OFF)帧。当队列低于此阈值时,将发送X-ON帧以恢复传输。正确配置后,PFC使网络不丢包(只要所有网络元素保持正常运行)。然而,这种对拥堵的粗略反应对哪些流导致拥塞是不可知的,这导致了近年来在许多论文中记载的各种性能问题[23,24,35,37,38]。这些问题的范围从轻微(例如,不公平和head-of-line阻塞)到严重(例如[23]中突出显示的“暂停传播”),甚至是网络死锁[24,35,37]。为了缓解这些问题,已经为RoCE提出了拥塞控制机制(例如,DC-QCN [38]和Timely [29]),其降低了拥塞时的发送速率,但是不足以消除对PFC的需求。因此,现在普遍认为PFC使网络更难理解和管理,并且可能导致需要处理的无数性能问题。

2.3 iWARP vs RoCE (iWARP对比RoCE )

iWARP [33] was designed to support RDMA over a fully general (i.e., not loss-free) network. iWARP implements the entire TCP stack in hardware along with multiple other layers that it needs to translate TCP’s byte stream semantics to RDMA segments. Early in our work, we engaged with multiple NIC vendors and datacenter operators in an attempt to understand why iWARP was not more broadly adopted (since we believed the basic architectural premise underlying iWARP was correct). The consistent response we heard was that iWARP is signifcantly more complex and expensive than RoCE, with inferior performance [13].

iWARP [33]旨在通过完全通用(即非不丢包网络)网络支持RDMA。iWARP在硬件中实现了整个TCP栈以及将TCP的字节流语义转换为RDMA分段所需的多个其他层。 在我们的早期工作中,我们与多家NIC供应商和数据中心运营商合作,试图了解为什么iWARP没有得到更广泛的采用(因为我们认为iWARP的基本架构前提是正确的)。我们听到的一致反应是iWARP明显比RoCE更复杂和昂贵,性能较差[13]。

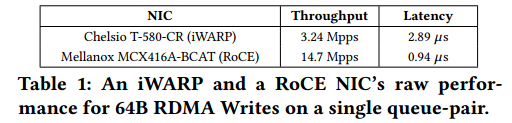

We also looked for empirical datapoints to validate or refute these claims. We ran RDMA Write benchmarks on two machines connected to one another, using Chelsio T-580-CR 40Gbps iWARP NICs on both machines for one set of experiments, and Mellanox MCX416A-BCAT 56Gbps RoCE NICs (with link speed set to 40Gbps) for another. Both NICs had similar specifcations, and at the time of purchase, the iWARP NIC cost 760, while the RoCE NIC cost760,whiletheRoCENICcost420. Raw NIC performance values for 64 bytes batched Writes on a single queue-pair are reported in Table 1. We fnd that iWARP has 3× higher latency and 4× lower throughput than RoCE.

我们还寻找经验数据点以验证或驳斥这些观点。我们在两台相互连接的机器上运行RDMA写入基准测试,两台机器上的Chelsio T-580-CR 40Gbps iWARP网卡用于一组实验,Mellanox MCX416A-BCAT 56Gbps RoCE网卡(链路速度设置为40Gbps)用于另一组实验。两个NIC的规格类似,在购买时,iWARP NIC售价760美元,而RoCE NIC售价420美元。表1中给出了单个队列对上64字节批量写入的原始NIC性能值。我们发现iWARP的延迟比RoCE高3倍,吞吐量低4倍。

表1: 单一队列对情形下64字节RDMA写操作的iWARP和RoCE NIC的原始性能

| NIC | 吞吐量 | 延迟 |

| Chelsio T-580-CR (iWARP) | 3.24 Mpps | 2.89 us |

| Mellanox MCX416A-BCAT (RoCE) | 14.7 Mpps | 0.94 us |

These price and performance differences could be attributed to many factors other than transport design complexity (such as differences in profit margins, supported features and engineering effort) and hence should be viewed as anecdotal evidence as best. Nonetheless, they show that our conjecture (in favor of implementing loss recovery at the endhost NIC) was certainly not obvious based on current iWARP NICs.

这些价格和性能差异可归因于除运输层设计复杂性之外的许多因素(例如,利润率差异、支持的特征和工程效率),因此应被视为最佳的轶事证据。尽管如此,他们表明:基于当前的iWARP NIC,我们的猜想(支持在终端主机NIC上实现丢包恢复)肯定是不明显的。

Our primary contribution is to show that iWARP, somewhat surprisingly, d

本文提出了一种名为IRN的新设计,旨在改进RoCE NIC,以应对PFC带来的问题。IRN通过更有效的丢包恢复和基本的端到端流控,实现了不依赖于无损网络的高性能。IRN在广泛仿真中表现优于现有RoCE NIC,且在没有PFC的情况下,性能通常更好。此外,IRN的实现开销相对较小,只需对当前RoCE NIC增加3-10%的资源消耗。

本文提出了一种名为IRN的新设计,旨在改进RoCE NIC,以应对PFC带来的问题。IRN通过更有效的丢包恢复和基本的端到端流控,实现了不依赖于无损网络的高性能。IRN在广泛仿真中表现优于现有RoCE NIC,且在没有PFC的情况下,性能通常更好。此外,IRN的实现开销相对较小,只需对当前RoCE NIC增加3-10%的资源消耗。

最低0.47元/天 解锁文章

最低0.47元/天 解锁文章

789

789

被折叠的 条评论

为什么被折叠?

被折叠的 条评论

为什么被折叠?