## TODO: Define your model with dropout added

import torch
from torch import nn, optim
import torch.nn.functional as F

class Classifier(nn.Module):
    def __init__(self):
        super().__init__()
        self.fc1 = nn.Linear(784, 256)
        self.fc2 = nn.Linear(256, 128)
        self.fc3 = nn.Linear(128, 64)
        self.fc4 = nn.Linear(64, 10)
        # Dropout module with 0.2 drop probability
        self.dropout = nn.Dropout(p=0.2)

    def forward(self, x):
        # make sure input tensor is flattened
        x = x.view(x.shape[0], -1)
        # hidden layers: linear -> ReLU -> dropout
        x = self.dropout(F.relu(self.fc1(x)))
        x = self.dropout(F.relu(self.fc2(x)))
        x = self.dropout(F.relu(self.fc3(x)))
        # output layer, so no dropout here; returns log-probabilities
        x = F.log_softmax(self.fc4(x), dim=1)
        return x
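To make concrete what a dropout layer with `p=0.2` does, here is a minimal plain-Python sketch of inverted dropout. It is an illustration only, not PyTorch's implementation; the function name `dropout` and its `rng` parameter are hypothetical. During training each value is zeroed with probability `p` and the survivors are scaled by `1 / (1 - p)` so the expected activation stays the same; in evaluation mode the input passes through unchanged.

```python
import random

def dropout(values, p=0.2, training=True, rng=None):
    """Inverted dropout on a list of floats (illustrative sketch)."""
    if not training or p == 0.0:
        # Evaluation mode: dropout is the identity
        return list(values)
    rng = rng or random.Random()
    keep = 1.0 - p
    # Zero each value with probability p; scale survivors by 1/keep
    return [v / keep if rng.random() >= p else 0.0 for v in values]

activations = [1.0] * 10000
train_out = dropout(activations, p=0.2, training=True, rng=random.Random(0))
eval_out = dropout(activations, p=0.2, training=False)

dropped_fraction = train_out.count(0.0) / len(train_out)
train_mean = sum(train_out) / len(train_out)

print("fraction dropped in training:", dropped_fraction)
print("mean activation in training:", train_mean)
print("eval output unchanged:", eval_out == activations)
```

This is why the model has to be switched between `model.train()` and `model.eval()`: with dropout left on, validation and prediction would run on a randomly thinned network.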

## TODO: Train your model with dropout, and monitor the training progress with the validation loss and accuracy

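%matplotlib inline
%config InlineBackend.figure_format = 'retina'

import matplotlib.pyplot as plt
import helper

print("############################# Start training")

# trainloader and testloader are assumed to be the Fashion-MNIST DataLoaders
# defined earlier in the notebook
model = Classifier()
optimizer = optim.Adam(model.parameters(), lr=0.001)
criterion = nn.NLLLoss()

epochs = 10
train_losses = []
test_losses = []

for e in range(epochs):
    train_loss = 0
    for images, labels in trainloader:
        optimizer.zero_grad()
        imgs_new = images.view(images.shape[0], -1)
        ps = model(imgs_new)
        loss = criterion(ps, labels)
        # .item() keeps a plain float instead of a tensor tied to the graph
        train_loss += loss.item()
        loss.backward()
        optimizer.step()
    else:
        test_loss = 0
        accuracy = 0
        model.eval()  # turn dropout off for validation
        with torch.no_grad():
            for images, labels in testloader:
                imgs_new = images.view(images.shape[0], -1)
                ps = model(imgs_new)  # log-probabilities
                test_loss += criterion(ps, labels).item()
                # exp() turns log-probabilities into probabilities for the accuracy calculation
                ps = torch.exp(ps)
                t_p, t_c = ps.topk(1, dim=1)
                # reshape labels to [batch, 1] so the comparison is element-wise
                equals = t_c == labels.view(*t_c.shape)
                # accumulate per-batch accuracy; averaged over all batches below
                accuracy += torch.mean(equals.type(torch.FloatTensor)).item()
        model.train()  # turn dropout back on for the next epoch

        tr = train_loss / len(trainloader)
        train_losses.append(tr)
        te = test_loss / len(testloader)
        test_losses.append(te)
        acc = accuracy / len(testloader)
        print("accuracy={}%".format(acc * 100), "train_loss={}".format(tr), "test_loss={}".format(te))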
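A pitfall worth knowing in validation loops like this one: `topk(1, dim=1)` returns class indices of shape `[batch, 1]`, while `labels` has shape `[batch]`. Comparing the two with `==` without reshaping broadcasts to a `[batch, batch]` matrix, so every prediction is compared with every label. The plain-Python sketch below (no PyTorch; both function names are hypothetical) mimics the two comparisons to show how much this distorts the accuracy.

```python
def broadcast_accuracy(pred_classes, labels):
    """Mimic comparing a [n, 1] column with a [n] row:
    every prediction is checked against every label."""
    n = len(labels)
    matches = sum(1 for p in pred_classes for y in labels if p == y)
    return matches / (n * n)

def elementwise_accuracy(pred_classes, labels):
    """Compare each prediction only with its own label."""
    matches = sum(1 for p, y in zip(pred_classes, labels) if p == y)
    return matches / len(labels)

labels = [0, 1, 2, 3, 4, 5, 6, 7, 8, 9]  # one sample per class
preds = list(labels)                      # a perfect classifier

print(broadcast_accuracy(preds, labels))    # 0.1
print(elementwise_accuracy(preds, labels))  # 1.0
```

With ten balanced classes, even a perfect classifier scores only 10% under the broadcast comparison, so accuracy readings that hover in the low teens while the loss keeps falling are a hint to check tensor shapes.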

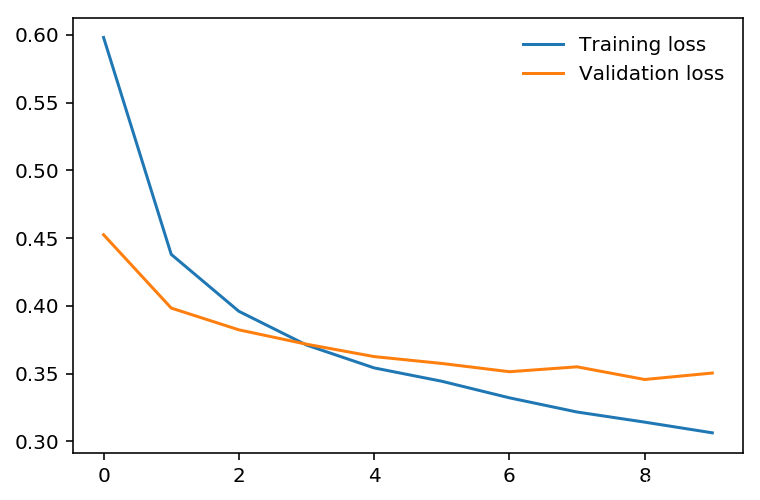

print("###################################### Observe the training and validation loss curves to spot where overfitting begins")

plt.plot(train_losses, label='Training loss')

plt.plot(test_losses, label='Validation loss')

plt.legend(frameon=False)

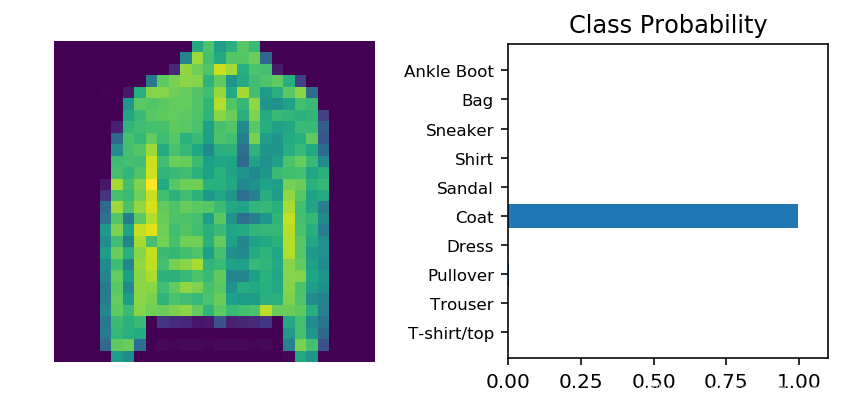
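Besides reading the plot by eye, the epoch with the lowest validation loss can be picked out programmatically. The sketch below is a minimal example; the helper name `best_epoch` is hypothetical, and the values are the `test_loss` figures from the training log printed in this post, rounded to four decimals.

```python
def best_epoch(val_losses):
    """Return (1-based epoch, loss) for the lowest validation loss."""
    idx = min(range(len(val_losses)), key=val_losses.__getitem__)
    return idx + 1, val_losses[idx]

# test_loss per epoch, rounded from the log in this post
val_losses = [0.4524, 0.3983, 0.3822, 0.3716, 0.3625,
              0.3574, 0.3513, 0.3549, 0.3456, 0.3503]

epoch, loss = best_epoch(val_losses)
print("lowest validation loss {} at epoch {}".format(loss, epoch))
```

For this run the minimum falls at epoch 9, and the validation loss is still close to the training loss, which suggests dropout is keeping overfitting in check over these ten epochs.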

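print("########################## Actual prediction")

model.eval()  # turn dropout off for inference
images, labels = next(iter(testloader))
imgs_new2 = images.view(images.shape[0], -1)
im = imgs_new2[0].view(-1, 784)
with torch.no_grad():
    # exp() converts the model's log-probabilities into class probabilities
    ps = torch.exp(model(im))
helper.view_classify(im.view(1, 28, 28), ps, version="Fashion")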

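# Validation results

The accuracy values in this log are not reliable: they match what results when `t_c` (shape `[batch, 1]`) is compared with `labels` (shape `[batch]`) without reshaping and only the last batch is kept. The loss values are not affected.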

############################# Start training

accuracy=17.1875% train_loss=0.5980074405670166 test_loss=0.45242178440093994

accuracy=15.234375% train_loss=0.43792349100112915 test_loss=0.39830338954925537

accuracy=13.28125% train_loss=0.3959130644798279 test_loss=0.38223353028297424

accuracy=12.5% train_loss=0.37101325392723083 test_loss=0.37162792682647705

accuracy=15.234375% train_loss=0.354133665561676 test_loss=0.3624916672706604

accuracy=14.453125% train_loss=0.34428462386131287 test_loss=0.357414573431015

accuracy=15.625% train_loss=0.3320519030094147 test_loss=0.3513256013393402

accuracy=17.578125% train_loss=0.32158225774765015 test_loss=0.354927122592926

accuracy=14.84375% train_loss=0.31410548090934753 test_loss=0.3455764949321747

accuracy=15.234375% train_loss=0.3062107563018799 test_loss=0.35034212470054626

###################################### Observe the training and validation loss curves to spot where overfitting begins

########################## Actual prediction

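### Notes

The last argument, `version`, exists to label the vertical axis with the class names; if it is removed, the class names no longer appear.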

helper.view_classify(img.view(1, 28, 28), ps, version='Fashion')

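This post presents a neural network model with Dropout layers, which guards against overfitting by randomly switching off a fraction of the neurons during training. It walks through the model definition, the training process, and the validation results, including how the training and validation losses evolve.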
