激活函数

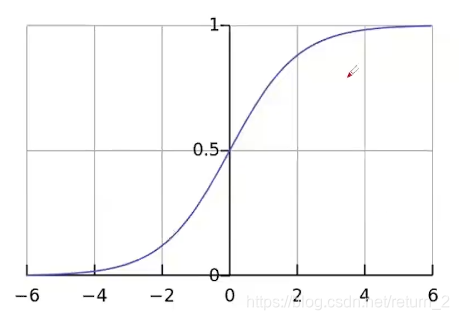

1. sigmoid函数

缺陷:

- 梯度消失

- 偏执现象:输出均大于0,使得输出均值不是0

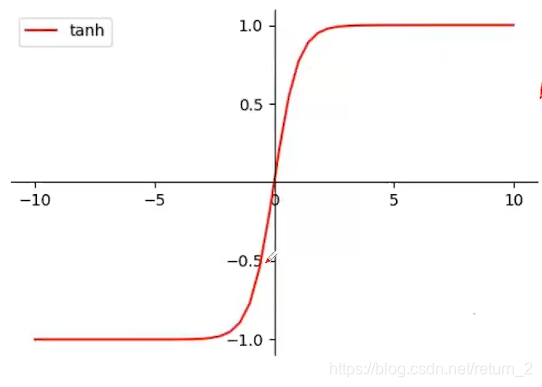

tanh函数

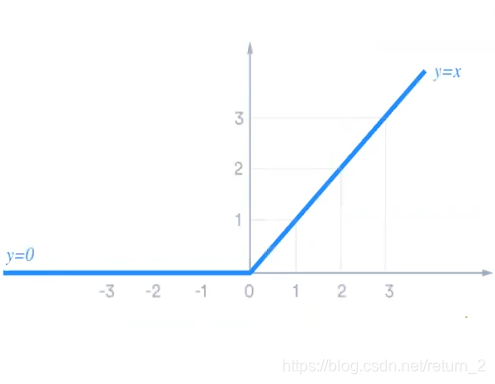

relu函数

优势:

优势:

- 计算简单

- 单边的输出特性和生物学意义上的神经元阈值机制相似

- 当x>0时,梯度不变,解决了sigmoid以及tanh常见的梯度消失问题

- 一般用于多层感知机以及卷积神经网络,在循环神经网络中并不常见

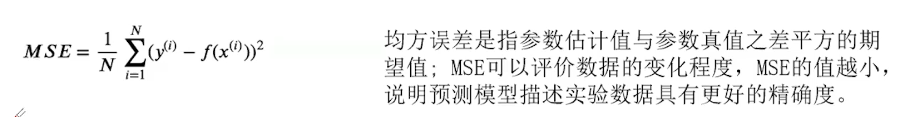

损失函数

回归问题

1.MSE 均方误差

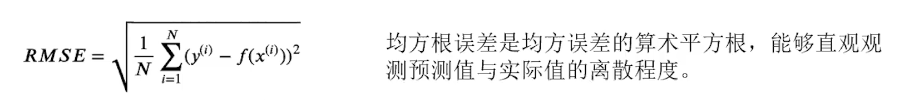

2. RMSE 均方根误差

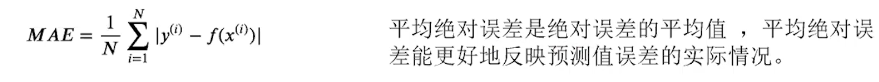

3. MAE 平均绝对误差

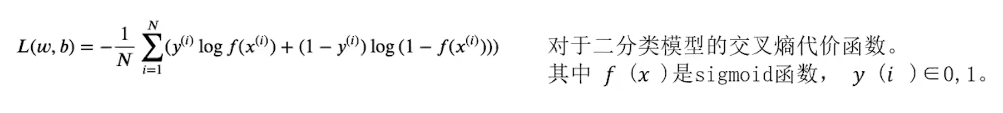

分类问题

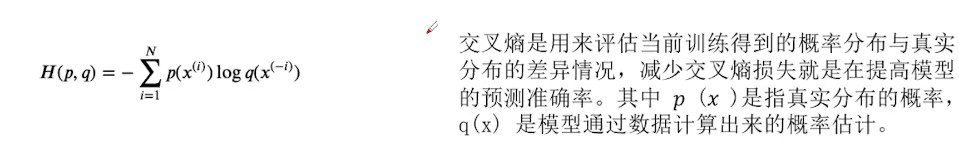

- 交叉熵:表示不同分布之间的差异

线性层完成MNIST

import torch

from torchvision import transforms

from torchvision import datasets

from torch.utils.data import DataLoader

import torch.nn.functional as F

import torch.optim as optim

# prepare dataset

'''

tranforms:变换数据形态

'''

batch_size = 64

transform = transforms.Compose([transforms.ToTensor(), transforms.Normalize((0.1307,), (0.3081,))]) # 归一化,均值和方差

train_dataset = datasets.MNIST(root='./data/mnist/', train=True, download=False, transform=transform)

train_loader = DataLoader(train_dataset, shuffle=True, batch_size=batch_size)

test_dataset = datasets.MNIST(root='./data/mnist/', train=False, download=False, transform=transform)

test_loader = DataLoader(test_dataset, shuffle=False, batch_size=batch_size)

# design model using class

class Net(torch.nn.Module):

def __init__(self):

super().__init__()

self.l1 = torch.nn.Linear(784, 512)

self.l2 = torch.nn.Linear(512, 256)

self.l3 = torch.nn.Linear(256, 128)

self.l4 = torch.nn.Linear(128, 64)

self.l5 = torch.nn.Linear(64, 10)

def forward(self, x):

x = x.view(-1, 784) # -1其实就是自动获取mini_batch

x = F.relu(self.l1(x))

x = F.relu(self.l2(x))

x = F.relu(self.l3(x))

x = F.relu(self.l4(x))

return self.l5(x) # 最后一层不做激活,不进行非线性变换

model = Net()

# construct loss and optimizer

criterion = torch.nn.CrossEntropyLoss()

optimizer = optim.SGD(model.parameters(), lr=0.01, momentum=0.5)

# training cycle forward, backward, update

def train(epoch):

running_loss = 0.0

for batch_idx, data in enumerate(train_loader):

# 获得一个批次的数据和标签

inputs, target = data

# 获得模型预测结果(64, 10)

outputs = model(inputs)

# 交叉熵代价函数outputs(64,10),target(64)

optimizer.zero_grad()

loss = criterion(outputs, target)

loss.backward()

optimizer.step()

running_loss += loss.item()

if batch_idx % 300 == 299:

print('[%d, %5d] loss: %.3f' % (epoch + 1, batch_idx + 1, running_loss / 300))

running_loss = 0.0

def test():

correct = 0

total = 0

with torch.no_grad():

for data in test_loader:

images, labels = data

outputs = model(images)

_, predicted = torch.max(outputs.data, dim=1) # dim = 1 列是第0个维度,行是第1个维度

total += labels.size(0)

correct += (predicted == labels).sum().item() # 张量之间的比较运算

print('accuracy on test set: %d %% ' % (100 * correct / total))

if __name__ == '__main__':

for epoch in range(10):

train(epoch)

test()

1324

1324

被折叠的 条评论

为什么被折叠?

被折叠的 条评论

为什么被折叠?