1. Multinomial Logistic Loss

2. Infogain Loss - a generalization of MultinomialLogisticLossLayer.

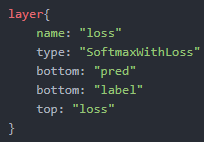

3. Softmax with Loss - computes the multinomial logistic loss of the softmax of its inputs. It’s conceptually identical to a softmax layer followed by a multinomial logistic loss layer, but provides a more numerically stable gradient.

4. Sum-of-Squares / Euclidean - computes the sum of squares of differences of its two inputs, 12N∑Ni=1∥x1i-x2i∥22.

5. Hinge / Margin - The hinge loss layer computes a one-vsall hinge (L1) or squared hinge loss (L2).

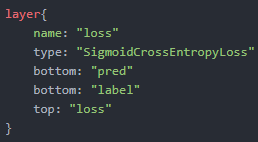

6. Sigmoid Cross-Entropy Loss - computes the cross-entropy (logistic) loss, often used for predicting targets interpreted as probabilities.

7. Accuracy / Top-k layer - scores the output as an accuracy with respect to target – it is not actually a loss and has no backward step.

8. Contrastive Loss

本文深入探讨了多种深度学习中常用的损失函数,包括Multinomial Logistic Loss、InfoGain Loss、Softmax with Loss、Sum-of-Squares/Euclidean Loss、Hinge/Margin Loss、Sigmoid Cross-Entropy Loss以及Contrastive Loss等,解析了它们的概念、计算方式及应用场景。

本文深入探讨了多种深度学习中常用的损失函数,包括Multinomial Logistic Loss、InfoGain Loss、Softmax with Loss、Sum-of-Squares/Euclidean Loss、Hinge/Margin Loss、Sigmoid Cross-Entropy Loss以及Contrastive Loss等,解析了它们的概念、计算方式及应用场景。

1万+

1万+

被折叠的 条评论

为什么被折叠?

被折叠的 条评论

为什么被折叠?