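Given n strings, count the pairs (si, sj) such that si is a substring of sj and there is no third string sk for which si is a substring of sk and sk is a substring of sj.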
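To pin down the definition, here is a brute-force reference sketch. It is not the intended solution, only a checker for small inputs. The function name `countDirectPairs` is illustrative, and the sketch assumes all input strings are distinct.

```cpp
#include <string>
#include <vector>

// Brute-force reference (illustrative, far too slow for large inputs).
// Counts ordered pairs (i, j), i != j, where s[i] is a substring of s[j]
// and no third string s[k] satisfies s[i] in s[k] in s[j].
// Assumption: all strings are distinct.
long long countDirectPairs(const std::vector<std::string>& s) {
    const int n = static_cast<int>(s.size());
    auto contains = [&](int big, int small) {
        return s[big].find(s[small]) != std::string::npos;
    };
    long long pairs = 0;
    for (int j = 0; j < n; ++j) {
        for (int i = 0; i < n; ++i) {
            if (i == j || !contains(j, i)) continue;
            bool blocked = false;  // true if some s[k] sits between s[i] and s[j]
            for (int k = 0; k < n && !blocked; ++k) {
                if (k == i || k == j) continue;
                blocked = contains(k, i) && contains(j, k);
            }
            if (!blocked) ++pairs;
        }
    }
    return pairs;
}
```

For the strings abcd, bcd, bc, cd this counts (bcd, abcd), (bc, bcd) and (cd, bcd); the pairs (bc, abcd) and (cd, abcd) are blocked by bcd.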

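First, an AC automaton (Aho-Corasick) can find, for every position i of a string, the longest dictionary string that is a suffix of the prefix ending at character i.

So we build the AC automaton over all strings, then take each string in turn as sj and count how many si it has.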

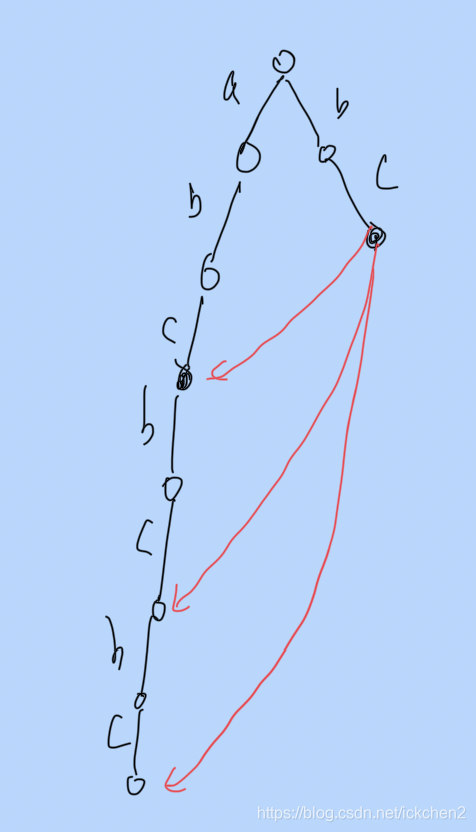

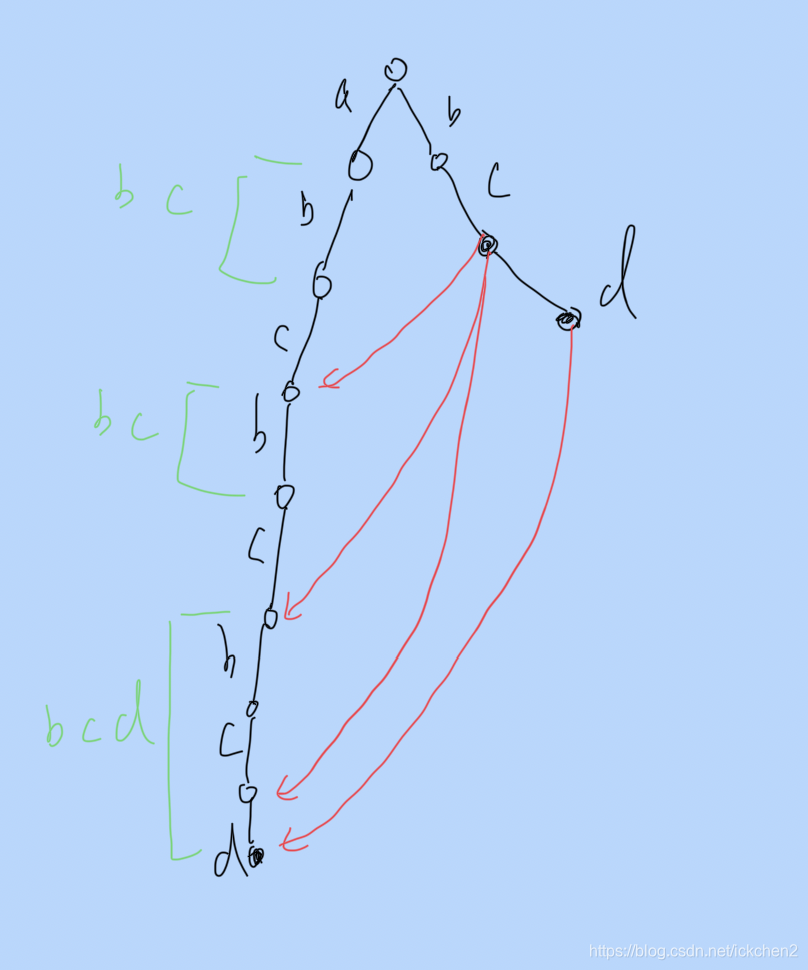

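For a string sj, run it through the AC automaton. At each node, following the fail link from that node gives the longest suffix that is a dictionary string, and therefore the corresponding string.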

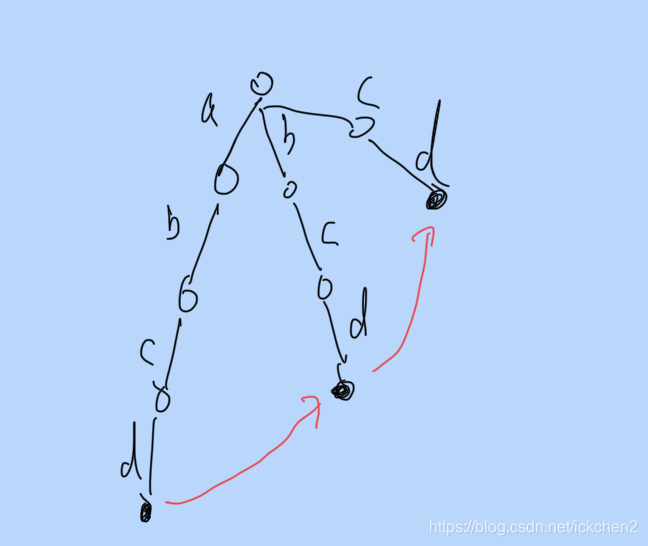

The node for abcd finds bcd.

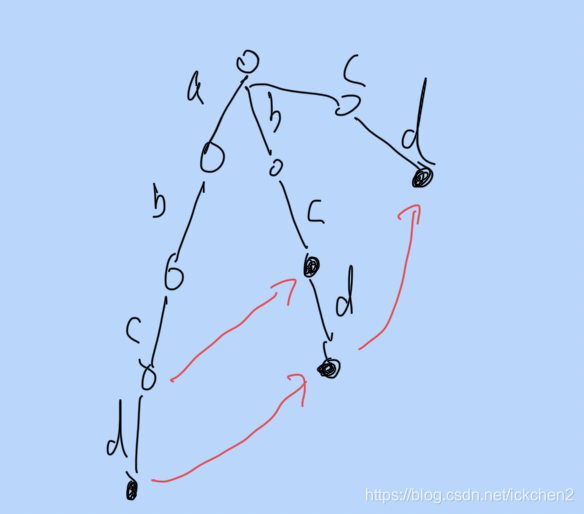

The node for abc finds c.

So the fail links lead directly from the current node to the first string above it (the current longest suffix).
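The lookup described above can be sketched as a small, self-contained automaton. This is a simplified illustration, not the solution code: the struct `Automaton` and its members (`go`, `wordLen`, `nearest`, `longestProperMatch`) are hypothetical names, the alphabet is assumed to be 'a'..'z', and all words are assumed to be non-empty.

```cpp
#include <array>
#include <queue>
#include <string>
#include <vector>

// Minimal Aho-Corasick sketch (illustrative names, lowercase letters only).
struct Automaton {
    std::vector<std::array<int, 26>> go;  // transitions, completed in build()
    std::vector<int> fail;                // failure link of each node
    std::vector<int> wordLen;             // length of the word ending here, 0 if none
    std::vector<int> nearest;             // nearest word node on the fail chain (self included), -1 if none

    Automaton() { newNode(); }            // node 0 is the root

    int newNode() {
        go.emplace_back();
        go.back().fill(-1);
        fail.push_back(0);
        wordLen.push_back(0);
        nearest.push_back(-1);
        return static_cast<int>(go.size()) - 1;
    }

    void insert(const std::string& w) {
        int u = 0;
        for (char c : w) {
            int x = c - 'a';
            if (go[u][x] == -1) {
                int v = newNode();        // allocate first: newNode may reallocate go
                go[u][x] = v;
            }
            u = go[u][x];
        }
        wordLen[u] = static_cast<int>(w.size());
    }

    void build() {
        std::queue<int> q;
        for (int x = 0; x < 26; ++x) {
            int v = go[0][x];
            if (v == -1) go[0][x] = 0;
            else { fail[v] = 0; q.push(v); }
        }
        while (!q.empty()) {
            int u = q.front();
            q.pop();
            // fail[u] is shallower than u, so nearest[fail[u]] is already final.
            nearest[u] = wordLen[u] ? u : nearest[fail[u]];
            for (int x = 0; x < 26; ++x) {
                int v = go[u][x];
                if (v == -1) go[u][x] = go[fail[u]][x];
                else { fail[v] = go[fail[u]][x]; q.push(v); }
            }
        }
    }

    // For each position p of text: length of the longest word ending at p,
    // excluding a match that is the whole text itself; 0 if there is none.
    std::vector<int> longestProperMatch(const std::string& text) const {
        std::vector<int> res(text.size(), 0);
        int u = 0;
        for (std::size_t p = 0; p < text.size(); ++p) {
            u = go[u][text[p] - 'a'];
            int w = nearest[u];
            // A word as long as the text is the text itself: step past it via fail.
            if (w != -1 && wordLen[w] == static_cast<int>(text.size())) w = nearest[fail[w]];
            res[p] = (w == -1) ? 0 : wordLen[w];
        }
        return res;
    }
};
```

With the words abcd, bcd and c inserted, running abcd through `longestProperMatch` gives lengths 0, 0, 1, 3: the node for abc reaches c, and the node for abcd reaches bcd, as in the figures.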

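However, we still need to check that the string found is a direct substring, with no intermediate layer in between.

Suppose the string found at position i is si, with length k. Then the strings found inside positions [i-k+1, i] (including substrings of those strings, and their substrings in turn) cannot be used.

Take abcd, bcd, bc, cd in the figure below. At the last position of abcd we find its si (bcd), so bc can no longer be used.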
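The domination rule can be sketched as a single right-to-left sweep over the match intervals. In this sketch, `matchLen` is an assumed input holding the length of the longest string found at each position (0 if none), and the function name `undominated` is illustrative.

```cpp
#include <vector>

// matchLen[p] = length of the longest dictionary string ending at position p
// (0 if none). Returns, for each position, whether the occurrence found there
// is NOT contained in the occurrence found at some later position.
std::vector<bool> undominated(const std::vector<int>& matchLen) {
    const int n = static_cast<int>(matchLen.size());
    std::vector<bool> keep(n, false);
    int minStart = n;  // smallest start index among occurrences kept so far
    for (int p = n - 1; p >= 0; --p) {
        if (matchLen[p] == 0) continue;
        int start = p - matchLen[p] + 1;
        // A later occurrence ends to the right, so it contains this one
        // exactly when it starts at or before `start`.
        if (start < minStart) {
            keep[p] = true;
            minStart = start;
        }
    }
    return keep;
}
```

For abcd with match lengths 0, 0, 2, 3 (bc at position 2, bcd at position 3), only the bcd occurrence is kept; bc lies inside it and is dropped.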

So how do we decide whether a string can be used?

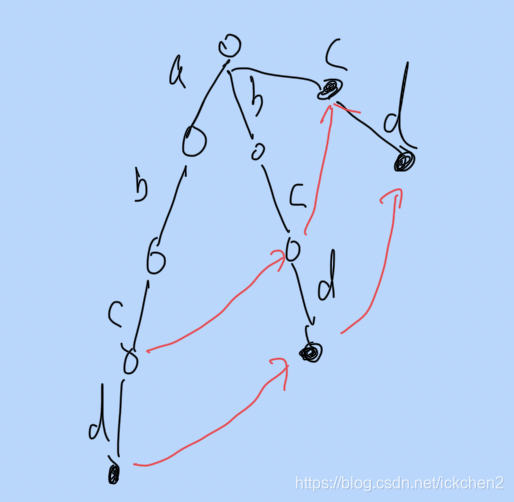

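If we build a tree from the fail links, every node in the subtree of a tree node corresponds to a string that has that node's string si as a suffix, so it contains si as a substring. In other words, if the subtree contains a visited node that falls inside the interval of some other string, this occurrence of si lies inside that other string and si cannot be used. For example, for bc, the subtree of its final c has a node that lies inside bcd.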

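Therefore, for each text sj:

First, walk sj through the automaton and add every node visited to a Fenwick tree (binary indexed tree).

The string found at the end of sj is sj itself, so at that position we take its fail node instead.

Next, count how many times each string occurs in sj without being dominated.

Scan sj from back to front. If the string found at position i is si, skip the strings that lie entirely inside [i-len(si)+1, i] (they are dominated), and record the occurrence with cnt[si]++.

Finally, decide whether each string found is usable.

Scan sj once more. For the string si found at each position, check whether the subtree of its tree node contains exactly cnt[si] marked nodes. If it contains more (it can never contain fewer), some sk lies in between. If it contains exactly cnt[si], we have found one valid string.

The number of marked nodes in a subtree can be read off the DFS order as get(out) - get(in - 1).

In abcbcbc, the string bc covers the text 3 times, so the node for the final c of bc has 3 marked nodes in its subtree.

In abcbcbcd, bcd covers the text once and bc covers it twice, but the node for the final c of bc has 3 marked nodes in its subtree. One occurrence therefore fell inside another string's interval (bcd), so bc is invalid.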
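The subtree query can be sketched with an Euler tour plus a Fenwick tree. This is a variant of the numbering used in the solution: here each node gets a single entry time `tin` and `tout` is the largest entry time in its subtree. The names `Fenwick`, `SubtreeCounter`, `mark` and `countInSubtree` are illustrative, and the tree is assumed to be given as child lists with every node reachable from the root.

```cpp
#include <utility>
#include <vector>

// Fenwick tree over 1-based positions.
struct Fenwick {
    std::vector<int> t;
    explicit Fenwick(int n) : t(n + 1, 0) {}
    void add(int i, int v) {
        for (; i < static_cast<int>(t.size()); i += i & -i) t[i] += v;
    }
    int prefix(int i) const {
        int r = 0;
        for (; i > 0; i -= i & -i) r += t[i];
        return r;
    }
};

// Counts marks inside the subtree of any node of a rooted tree.
struct SubtreeCounter {
    std::vector<int> tin, tout;  // entry time, and last entry time in the subtree
    Fenwick bit;

    SubtreeCounter(const std::vector<std::vector<int>>& children, int root)
        : tin(children.size()), tout(children.size()),
          bit(static_cast<int>(children.size())) {
        int timer = 0;
        // Iterative DFS, so a deep tree does not overflow the call stack.
        std::vector<std::pair<int, int>> stack;
        stack.push_back({root, 0});
        tin[root] = ++timer;
        while (!stack.empty()) {
            int u = stack.back().first;
            int i = stack.back().second++;  // index of the next child to visit
            if (i < static_cast<int>(children[u].size())) {
                int v = children[u][i];
                tin[v] = ++timer;
                stack.push_back({v, 0});
            } else {
                tout[u] = timer;
                stack.pop_back();
            }
        }
    }

    // Add delta marks on node u (use -1 to undo a mark).
    void mark(int u, int delta) { bit.add(tin[u], delta); }

    // Total marks on u and all of its descendants.
    int countInSubtree(int u) const {
        return bit.prefix(tout[u]) - bit.prefix(tin[u] - 1);
    }
};
```

In the solution's terms, `mark` is called once per visited automaton node of the text, and `countInSubtree` on the node of a candidate string gives its total number of occurrences, which is then compared with cnt.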

```cpp
#include <iostream>
#include <queue>
#include <string>
#include <vector>
using namespace std;

#define N 2001000

int ch[N][30], fail[N], ed[N], lt[N], v[N];
int id = 2;                          // node 1 is the root; node 0 is a virtual parent of the root
int in_dfn[N], out_dfn[N], dfn_id;
vector<int> g[N];                    // fail tree: g[fail[t]] lists t
string s[N];

// Insert str into the trie and mark its end node with the 1-based index idx.
void add(const string &str, int idx) {
    int root = 1;
    for (char c : str) {
        if (!ch[root][c - 'a']) ch[root][c - 'a'] = id++;
        root = ch[root][c - 'a'];
    }
    ed[root] = idx;
}

// DFS over the fail tree: record entry/exit times and, for each node, the
// nearest node on its fail chain (itself included) that ends a string.
void dfs(int t, int f) {
    lt[t] = ed[t] ? t : lt[f];
    in_dfn[t] = dfn_id++;
    for (int u : g[t]) dfs(u, t);
    out_dfn[t] = dfn_id++;
}

// Build fail links by BFS, complete the transitions, then number the fail tree.
void build() {
    queue<int> q;
    for (int i = 0; i < 30; ++i) ch[0][i] = 1;
    q.push(1);
    while (!q.empty()) {
        int t = q.front();
        q.pop();
        for (int i = 0; i < 30; ++i) {
            if (!ch[t][i]) ch[t][i] = ch[fail[t]][i];
            else {
                q.push(ch[t][i]);
                fail[ch[t][i]] = ch[fail[t]][i];
            }
        }
        g[fail[t]].push_back(t);
    }
    dfn_id = 1;
    dfs(1, 1);
}

int sum[N], cnt[N];

// Fenwick tree: point update at DFS time t.
void add(int t, int val) {
    for (; t < dfn_id; t += t & -t) sum[t] += val;
}

// Fenwick tree: prefix sum over DFS times [1, t].
int get(int t) {
    int ret = 0;
    for (; t > 0; t -= t & -t) ret += sum[t];
    return ret;
}

// Number of marked automaton nodes inside the fail subtree of x.
int count(int x) {
    return get(out_dfn[x]) - get(in_dfn[x] - 1);
}

int main() {
    int n;
    cin >> n;
    for (int i = 0; i < n; ++i) {
        cin >> s[i];
        add(s[i], i + 1);
    }
    build();
    int ans = 0;
    for (int i = 0; i < n; ++i) {
        const string &str = s[i];
        int len = str.size();
        int root = 1;
        vector<int> p;  // p[j]: end node of the longest string ending at position j (0 if none)
        for (int j = 0; j < len; ++j) {
            root = ch[root][str[j] - 'a'];
            p.push_back(lt[root]);
            add(in_dfn[root], 1);  // mark the visited node
        }
        // At the last position the longest match is str itself; use its fail node.
        p.back() = lt[fail[root]];

        // Right-to-left sweep: count only occurrences not covered by a later match.
        int cur = len;  // (smallest start index kept so far) - 1
        for (int j = len - 1; j >= 0; --j) {
            int x = p[j];
            if (!x) continue;
            int left = j - (int)s[ed[x] - 1].size();
            if (left < cur) {
                cnt[ed[x] - 1]++;
                cur = left;
            }
        }

        // A string is valid iff every one of its occurrences in str was counted.
        for (int j = len - 1; j >= 0; --j) {
            int x = p[j];
            if (ed[x] && !v[x] && count(x) == cnt[ed[x] - 1]) {
                v[x] = 1;  // count each string once per text
                ++ans;
            }
        }

        // Undo the marks and reset the per-text state.
        root = 1;
        for (int j = 0; j < len; ++j) {
            root = ch[root][str[j] - 'a'];
            add(in_dfn[root], -1);
            int x = lt[root];
            v[x] = 0;
            if (ed[x]) cnt[ed[x] - 1] = 0;
        }
        int x = lt[fail[root]];
        v[x] = 0;
        if (ed[x]) cnt[ed[x] - 1] = 0;
    }
    cout << ans << endl;
}
```

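This post describes how to use an AC automaton to count substring pairs among a set of strings, and how to check that no intermediate string lies between a found substring and the text. A Fenwick tree over the fail tree makes the counting and the check efficient, and worked examples illustrate the approach.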
