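I. Background and Development Environment

📌 Week J2: ResNet50V2 in Practice and Explained 📌

Language: Python 3, PyTorch

📌 This week's tasks: 📌

– 1. Based on the TensorFlow code in this article, write the corresponding PyTorch code (it is recommended to test with last week's data to check that the model is built correctly)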

– 2. Understand the difference between ResNetV2 and the original ResNet

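– 3. Can the improvement ideas be carried over to other settings? (free exploration)

**🔊 Note:** Starting a few weeks ago, the difficulty of the training camp has been rising gradually, which shows in the fact that source code is no longer provided directly. Instead, the tasks offer ideas and directions for improving the algorithms, and everyone is encouraged to explore them actively. (This exploration matters, and it is where you will learn the most.)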
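As a starting point for task 2, here is a toy sketch of the one ordering difference that is easy to show in isolation. The original ResNet applies ReLU after the addition, while ResNetV2 moves the activation in front of the residual branch and leaves the sum untouched. Everything below is an illustrative assumption: scalars stand in for feature maps, `branch` is a hypothetical stand-in for the convolution layers, and batch normalization is omitted.

```python
def relu(v: float) -> float:
    return v if v > 0.0 else 0.0


def branch(x: float, w: float) -> float:
    """Hypothetical stand-in for the conv layers of a residual branch."""
    return w * x


def post_activation_block(x: float, w: float) -> float:
    # Original ResNet ordering: add the shortcut first, then apply ReLU to the sum.
    return relu(x + branch(x, w))


def pre_activation_block(x: float, w: float) -> float:
    # ResNetV2 ordering: activate before the branch, nothing after the addition,
    # so the shortcut carries x through unchanged.
    return x + branch(relu(x), w)


v1 = v2 = -1.0
for _ in range(3):
    v1 = post_activation_block(v1, 0.5)
    v2 = pre_activation_block(v2, 0.5)

print(v1, v2)  # 0.0 -1.0
```

In this toy run the post-activation chain clips the negative input to zero in the first block, whereas the pre-activation chain passes it through all three blocks as an identity mapping.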

II. Model Reproduction

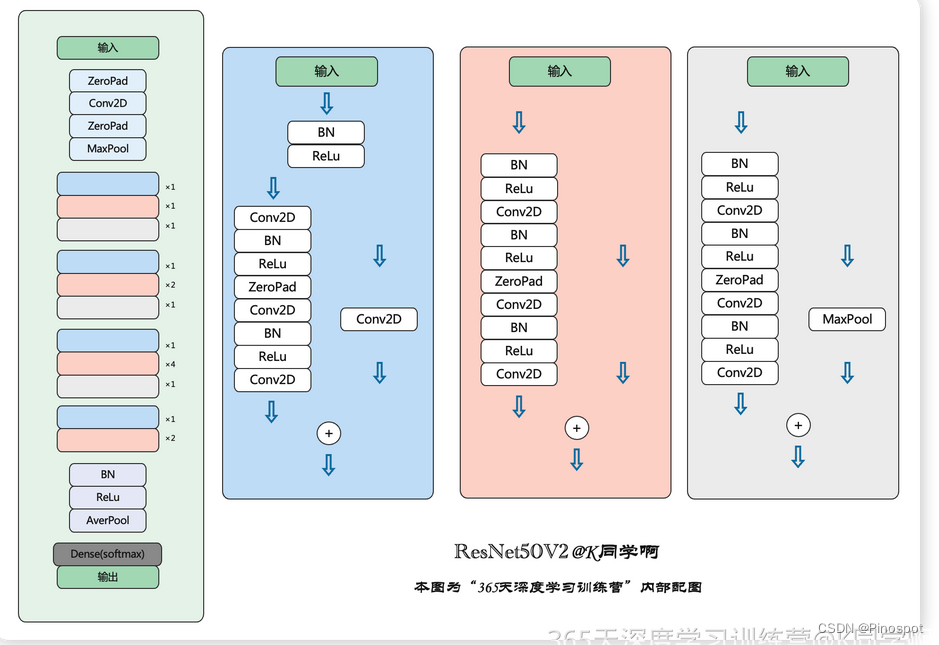

1. Residual structure

    import torch.nn as nn


    ''' Residual Block '''
    class Block2(nn.Module):
        def __init__(self, in_channel, filters, kernel_size=3, stride=1, conv_shortcut=False):
            super(Block2, self).__init__()
            # Pre-activation: BN + ReLU applied before any convolution
            self.preact = nn.Sequential(
                nn.BatchNorm2d(in_channel),
                nn.ReLU(True)
            )

            # Shortcut branch: 1x1 conv projection, strided subsampling, or identity
            self.shortcut = conv_shortcut
            if self.shortcut:
                self.short = nn.Conv2d(in_channel, 4*filters, 1, stride=stride, padding=0, bias=False)
            elif stride > 1:
                self.short = nn.MaxPool2d(kernel_size=1, stride=stride, padding=0)
            else:
                self.short = nn.Identity()

            # Main branch: 1x1 reduce -> kxk conv (carries the stride) -> 1x1 expand
            self.conv1 = nn.Sequential(
                nn.Conv2d(in_channel, filters, 1, stride=1, bias=False),
                nn.BatchNorm2d(filters),
                nn.ReLU(True)
            )
            self.conv2 = nn.Sequential(
                nn.Conv2d(filters, filters, kernel_size, stride=stride, padding=1, bias=False),
                nn.BatchNorm2d(filters),
                nn.ReLU(True)
            )
            self.conv3 = nn.Conv2d(filters, 4*filters, 1, stride=1, bias=False)

        def forward(self, x):
            x1 = self.preact(x)
            # The conv shortcut takes the pre-activated tensor; otherwise the raw input is used
            if self.shortcut:
                x2 = self.short(x1)
            else:
                x2 = self.short(x)
            x1 = self.conv1(x1)
            x1 = self.conv2(x1)
            x1 = self.conv3(x1)
            # No activation after the addition
            x = x1 + x2
            return x
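The addition `x1 + x2` only works if the main branch and the shortcut produce the same shape. The sketch below checks that with plain arithmetic instead of tensors, using the standard output-size formula for convolution and pooling layers (dilation 1, no ceil mode). The helper names `conv_out` and `block2_shapes` are hypothetical, and a square input is assumed.

```python
def conv_out(size: int, kernel: int, stride: int, padding: int) -> int:
    """Output length along one spatial axis for a conv or pooling layer."""
    return (size + 2 * padding - kernel) // stride + 1


def block2_shapes(in_channel, filters, size, kernel_size=3, stride=1, conv_shortcut=False):
    """Return ((channels, size) of the main branch, (channels, size) of the shortcut)."""
    # Main branch: 1x1 conv -> kxk conv with padding 1 and the block stride -> 1x1 conv
    h = conv_out(size, 1, 1, 0)
    h = conv_out(h, kernel_size, stride, 1)
    h = conv_out(h, 1, 1, 0)
    main = (4 * filters, h)

    # Shortcut branch mirrors the three cases in Block2.__init__
    if conv_shortcut:
        short = (4 * filters, conv_out(size, 1, stride, 0))
    elif stride > 1:
        short = (in_channel, conv_out(size, 1, stride, 0))
    else:
        short = (in_channel, size)
    return main, short


print(block2_shapes(64, 64, 56, conv_shortcut=True))  # ((256, 56), (256, 56))
print(block2_shapes(256, 64, 56, stride=2))           # ((256, 28), (256, 28))
print(block2_shapes(64, 64, 56))                      # ((256, 56), (64, 56))
```

The last call shows a mismatch (256 versus 64 channels). A block without a conv shortcut therefore only fits when the input already has `4*filters` channels, which is presumably why the first block of each stack uses `conv_shortcut=True`.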

2. Stack construction

    class Stack2(nn.Module):
        def __init__(self, in_channel, filters, blocks, stride=2):
            super(Stack2, self).__init__()
            self.conv = nn.Sequential()
            # First block: conv shortcut projects in_channel -> 4*filters
            self.conv.add_module(str(0), Block2(in_channel, filters, conv_shortcut=True))
            # Middle blocks: identity shortcut, shape unchanged
            for i in range(1, blocks-1):
                self.conv.add_module(str(i), Block2(4*filters, filters))
            # Last block: carries the stride of the stack
            self.conv.add_module(str(blocks-1), Block2(4*filters, filters, stride=stride))

        def forward(self, x):
            x = self.conv(x)
            return x
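To see which blocks a stack actually contains, the constructor logic above can be mirrored without building any layers. This is a minimal sketch: `stack2_plan` is a hypothetical helper, it assumes `blocks >= 2`, and each tuple reads `(in_channel, filters, stride, conv_shortcut)`.

```python
def stack2_plan(in_channel, filters, blocks, stride=2):
    """List the Block2 configurations a Stack2 would create (assumes blocks >= 2)."""
    plan = [(in_channel, filters, 1, True)]             # first block: conv shortcut
    for _ in range(1, blocks - 1):
        plan.append((4 * filters, filters, 1, False))   # middle blocks: identity shortcut
    plan.append((4 * filters, filters, stride, False))  # last block: applies the stride
    return plan


for cfg in stack2_plan(64, 64, 3):
    print(cfg)
# (64, 64, 1, True)
# (256, 64, 1, False)
# (256, 64, 2, False)

# The four stage sizes used for ResNet50V2: 3, 4, 6 and 3 blocks
stages = [(64, 64, 3, 2), (256, 128, 4, 2), (512, 256, 6, 2), (1024, 512, 3, 1)]
total_blocks = sum(len(stack2_plan(*s)) for s in stages)
print(total_blocks, 3 * total_blocks)  # 16 48
```

With 16 blocks of three main-branch convolutions each, the stacks contribute 48 layers. By the usual counting convention, the stem convolution and the final fully connected layer bring the total to the 50 in the network's name.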

3. Network construction

    ''' Build ResNet50V2 '''
    class ResNet50V2(nn.Module):
        def __init__(self,
                     include_top=True,  # whether to include the fully connected layer at the top of the network
                     preact=True,       # whether to use pre-activation
                     use_bias=True,     # whether the stem convolution uses a bias
                     input_shape=[224, 224, 3],
                     classes=1000,      # optional number of classes to classify images into
                     pooling=None):
            super(ResNet50V2, self).__init__()

            # Stem: 7x7 conv with stride 2, then 3x3 max pooling with stride 2
            self.conv1 = nn.Sequential()
            self.conv1.add_module('conv', nn.Conv2d(3, 64, 7, stride=2, padding=3, bias=use_bias, padding_mode='zeros'))
            if not preact:
                self.conv1.add_module('bn', nn.BatchNorm2d(64))
                self.conv1.add_module('relu', nn.ReLU())
            self.conv1.add_module('max_pool', nn.MaxPool2d(kernel_size=3, stride=2, padding=1))

            # Four stacks with 3, 4, 6 and 3 residual blocks
            self.conv2 = Stack2(64, 64, 3)
            self.conv3 = Stack2(256, 128, 4)
            self.conv4 = Stack2(512, 256, 6)
            self.conv5 = Stack2(1024, 512, 3, stride=1)

            # With pre-activation, a final BN + ReLU follows the last stack
            self.post = nn.Sequential()
            if preact:
                self.post.add_module('bn', nn.BatchNorm2d(2048))
                self.post.add_module('relu', nn.ReLU())
            if
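One way to sanity-check the layers visible in the listing above is to trace the feature-map shape through them with plain arithmetic. This is a minimal sketch: it covers only the stem and the four stacks, assumes a square input, and relies on the fact that only the last block of each stack changes the resolution. The helper names `conv_out` and `trace_resnet50v2` are hypothetical.

```python
def conv_out(size: int, kernel: int, stride: int, padding: int) -> int:
    """Output length along one spatial axis for a conv or pooling layer."""
    return (size + 2 * padding - kernel) // stride + 1


def trace_resnet50v2(size: int = 224):
    """Return (stage name, channels, spatial size) after each stage."""
    shapes = []
    channels = 64
    size = conv_out(size, 7, 2, 3)   # stem 7x7 conv, stride 2, padding 3
    shapes.append(("conv1", channels, size))
    size = conv_out(size, 3, 2, 1)   # 3x3 max pooling, stride 2, padding 1
    shapes.append(("max_pool", channels, size))

    # (stage name, filters, stride of the last block in the stack)
    stacks = [("conv2", 64, 2), ("conv3", 128, 2), ("conv4", 256, 2), ("conv5", 512, 1)]
    for name, filters, stride in stacks:
        size = conv_out(size, 3, stride, 1)  # the strided 3x3 conv in the last block
        channels = 4 * filters               # every block expands to 4*filters channels
        shapes.append((name, channels, size))
    return shapes


for row in trace_resnet50v2(224):
    print(row)
# ('conv1', 64, 112)
# ('max_pool', 64, 56)
# ('conv2', 256, 28)
# ('conv3', 512, 14)
# ('conv4', 1024, 7)
# ('conv5', 2048, 7)
```

The final 2048 channels agree with the `nn.BatchNorm2d(2048)` in `self.post`, and each stack's output channel count matches the `in_channel` argument of the next stack.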
