Week P8: Implementing the YOLOv5 C3 Module

I. Preparation

1. Setting up the GPU

import torch

import torch.nn as nn

import torchvision.transforms as transforms

import torchvision

from torchvision import transforms,datasets

import os,PIL,pathlib,warnings

warnings.filterwarnings("ignore")

device = torch.device("cuda" if torch.cuda.is_available() else "cpu")

device

device(type='cuda')

2. Loading the data

import os,PIL,random,pathlib

data_dir = r"D:\z_temp\data\weather_photos"

data_dir = pathlib.Path(data_dir)

data_paths = list(data_dir.glob("*"))

classNames = list(str(path).split("\\")[4] for path in data_paths)

classNames

['cloudy', 'rain', 'shine', 'sunrise']
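The index `[4]` in the split above only works for this exact directory depth and for Windows path separators. A minimal sketch of a depth-independent alternative, assuming the same layout of one sub-folder per class; the folder list is hard-coded here for illustration instead of being read from disk:

```python
from pathlib import PureWindowsPath

# Hypothetical stand-in for list(data_dir.glob("*")): one sub-folder per class.
root = PureWindowsPath(r"D:\z_temp\data\weather_photos")
data_paths = [root / name for name in ("cloudy", "rain", "shine", "sunrise")]

# Approach used above: depends on the number of path components before the class folder.
by_index = [str(path).split("\\")[4] for path in data_paths]

# Path.name returns the last component regardless of depth or separator.
by_name = [path.name for path in data_paths]

print(by_index == by_name, by_name)
```

With a real directory, `path.name` works the same way on the `pathlib.Path` objects returned by `glob`.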

train_transforms = transforms.Compose([

transforms.Resize([224,224]),

transforms.ToTensor(),

transforms.Normalize(

mean = [0.485,0.456,0.406],

std = [0.229,0.224,0.225])

])

test_transforms = transforms.Compose([

transforms.Resize([224,224]),

transforms.ToTensor(),

transforms.Normalize(

mean = [0.485,0.456,0.406],

std = [0.229,0.224,0.225])

])
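`transforms.Normalize` applies `(x - mean) / std` to each channel after `ToTensor()` has scaled the pixels to [0, 1]. The following sketch reproduces that arithmetic in plain Python to show the value range the network actually sees; the helper `normalize_pixel` is illustrative and not part of torchvision:

```python
MEAN = [0.485, 0.456, 0.406]  # per-channel means used above (ImageNet statistics)
STD = [0.229, 0.224, 0.225]   # per-channel standard deviations used above

def normalize_pixel(value, channel):
    """Apply (value - mean) / std for one channel; value is expected in [0, 1]."""
    return (value - MEAN[channel]) / STD[channel]

for c, name in enumerate("RGB"):
    darkest = normalize_pixel(0.0, c)
    brightest = normalize_pixel(1.0, c)
    print(f"{name}: black -> {darkest:.3f}, white -> {brightest:.3f}")
```

Each channel ends up roughly in the range -2 to +2.6 instead of 0 to 1.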

total_data = datasets.ImageFolder(r"D:\z_temp\data\weather_photos",transform = train_transforms)

total_data

Dataset ImageFolder

Number of datapoints: 1125

Root location: D:\z_temp\data\weather_photos

StandardTransform

Transform: Compose(

Resize(size=[224, 224], interpolation=bilinear, max_size=None, antialias=True)

ToTensor()

Normalize(mean=[0.485, 0.456, 0.406], std=[0.229, 0.224, 0.225])

)

total_data.class_to_idx

{'cloudy': 0, 'rain': 1, 'shine': 2, 'sunrise': 3}

3. Splitting the dataset

train_size = int(0.8*len(total_data))

test_size = len(total_data)-train_size

train_dataset,test_dataset = torch.utils.data.random_split(total_data,[train_size,test_size])

train_dataset,test_dataset

(<torch.utils.data.dataset.Subset at 0x19f98169700>,

<torch.utils.data.dataset.Subset at 0x19f981692e0>)

batch_size = 4

train_dl = torch.utils.data.DataLoader(train_dataset,

batch_size = batch_size,

shuffle = True,

num_workers = 1)

test_dl = torch.utils.data.DataLoader(test_dataset,

batch_size = batch_size,

shuffle = False,  # the evaluation order does not affect the metrics

num_workers = 1)

for X,y in test_dl:
    print("Shape of X [N,C,H,W]:",X.shape)
    print("Shape of y:",y.shape,y.dtype)
    break

Shape of X [N,C,H,W]: torch.Size([4, 3, 224, 224])

Shape of y: torch.Size([4]) torch.int64
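The split sizes and the number of batches per epoch follow directly from the dataset size and `batch_size`. A small arithmetic sketch, assuming the 1125 images reported by `ImageFolder` above:

```python
import math

num_images = 1125   # "Number of datapoints" reported by ImageFolder above
batch_size = 4

train_size = int(0.8 * num_images)
test_size = num_images - train_size

train_batches = math.ceil(train_size / batch_size)
test_batches = math.ceil(test_size / batch_size)
last_test_batch = test_size - (test_batches - 1) * batch_size

print(train_size, test_size)          # 900 225
print(train_batches, test_batches)    # 225 57
print(last_test_batch)                # 1
```

The training set divides evenly into batches of 4, while the last test batch holds a single image.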

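II. Building a model that contains the C3 module

1. Building the model

import torch.nn.functional as F

def autopad(k, p=None):
    # Default padding is half the kernel size, per dimension
    if p is None:
        p = k // 2 if isinstance(k, int) else [x // 2 for x in k]
    return p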
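To see what `autopad` achieves, the standard convolution output-size formula `floor((size + 2p - k) / s) + 1` can be evaluated for the kernel and stride combinations used by the model defined later in this section. This is a plain-Python sketch; `conv_out_size` is a helper introduced here for illustration and assumes dilation 1:

```python
def autopad(k, p=None):
    if p is None:
        p = k // 2 if isinstance(k, int) else [x // 2 for x in k]
    return p

def conv_out_size(size, k, s):
    """Output length of a convolution along one dimension (dilation 1)."""
    p = autopad(k)
    return (size + 2 * p - k) // s + 1

print(conv_out_size(224, k=3, s=2))  # 112: the stem Conv(3, 32, 3, 2)
print(conv_out_size(112, k=3, s=1))  # 112: 3x3 convolutions inside Bottleneck
print(conv_out_size(112, k=1, s=1))  # 112: 1x1 convolutions
```

Only the stride-2 stem changes the spatial size; every later layer keeps 112x112.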

class Conv(nn.Module):
    # Convolution -> BatchNorm -> activation
    def __init__(self, c1, c2, k=1, s=1, p=None, g=1, act=True):
        super().__init__()
        self.conv = nn.Conv2d(c1, c2, k, s, autopad(k, p), groups=g, bias=False)
        self.bn = nn.BatchNorm2d(c2)
        self.act = nn.SiLU() if act is True else (act if isinstance(act, nn.Module) else nn.Identity())

    def forward(self, x):
        return self.act(self.bn(self.conv(x)))

class Bottleneck(nn.Module):
    # 1x1 convolution followed by a 3x3 convolution, with an optional shortcut
    def __init__(self, c1, c2, shortcut=True, g=1, e=0.5):
        super().__init__()
        c_ = int(c2 * e)  # hidden channels
        self.cv1 = Conv(c1, c_, 1, 1)
        self.cv2 = Conv(c_, c2, 3, 1, g=g)
        self.add = shortcut and c1 == c2

    def forward(self, x):
        return x + self.cv2(self.cv1(x)) if self.add else self.cv2(self.cv1(x))

class C3(nn.Module):
    # Two parallel 1x1 branches, n Bottlenecks on one of them, then concat and a 1x1 convolution
    def __init__(self, c1, c2, n=1, shortcut=True, g=1, e=0.5):
        super().__init__()
        c_ = int(c2 * e)  # hidden channels
        self.cv1 = Conv(c1, c_, 1, 1)
        self.cv2 = Conv(c1, c_, 1, 1)
        self.cv3 = Conv(2 * c_, c2, 1)
        self.m = nn.Sequential(*(Bottleneck(c_, c_, shortcut, g, e=1.0) for _ in range(n)))

    def forward(self, x):
        return self.cv3(torch.cat((self.m(self.cv1(x)), self.cv2(x)), dim=1))

class model_K(nn.Module):
    def __init__(self):
        super(model_K, self).__init__()

        # Stem: 3x3 convolution with stride 2 (224x224 -> 112x112)
        self.Conv = Conv(3, 32, 3, 2)

        # C3 block with n=3 Bottlenecks; the fourth positional argument (2) is `shortcut` and is truthy
        self.C3_1 = C3(32, 64, 3, 2)

        # Classification head
        self.classifier = nn.Sequential(
            nn.Linear(in_features=802816, out_features=100),  # in_features = 64*112*112
            nn.ReLU(),
            nn.Linear(in_features=100, out_features=4)
        )

    def forward(self, x):
        x = self.Conv(x)
        x = self.C3_1(x)
        x = torch.flatten(x, start_dim=1)
        x = self.classifier(x)
        return x
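The `in_features = 802816` of the first linear layer is the flattened size of the C3 output. The arithmetic below checks that number and the resulting parameter count. The last line is only a comparison under an assumption: it shows what the same layer would cost if the feature map were first reduced to one value per channel (global average pooling), which this model does not do.

```python
channels, height, width = 64, 112, 112    # output of C3_1 for a 224x224 input
in_features = channels * height * width
hidden = 100

linear_params = in_features * hidden + hidden   # weights + biases

# Hypothetical alternative (not used in this post): pool each channel to one value first.
pooled_linear_params = channels * hidden + hidden

print(in_features)            # 802816
print(linear_params)          # 80281700
print(pooled_linear_params)   # 6500
```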

device = 'cuda' if torch.cuda.is_available() else 'cpu'

print('Using {} device'.format(device))

model = model_K().to(device)

model

model_K(

(Conv): Conv(

(conv): Conv2d(3, 32, kernel_size=(3, 3), stride=(2, 2), padding=(1, 1), bias=False)

(bn): BatchNorm2d(32, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(act): SiLU()

)

(C3_1): C3(

(cv1): Conv(

(conv): Conv2d(32, 32, kernel_size=(1, 1), stride=(1, 1), bias=False)

(bn): BatchNorm2d(32, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(act): SiLU()

)

(cv2): Conv(

(conv): Conv2d(32, 32, kernel_size=(1, 1), stride=(1, 1), bias=False)

(bn): BatchNorm2d(32, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(act): SiLU()

)

(cv3): Conv(

(conv): Conv2d(64, 64, kernel_size=(1, 1), stride=(1, 1), bias=False)

(bn): BatchNorm2d(64, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(act): SiLU()

)

(m): Sequential(

(0): Bottleneck(

(cv1): Conv(

(conv): Conv2d(32, 32, kernel_size=(1, 1), stride=(1, 1), bias=False)

(bn): BatchNorm2d(32, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(act): SiLU()

)

(cv2): Conv(

(conv): Conv2d(32, 32, kernel_size=(3, 3), stride=(1, 1), padding=(1, 1), bias=False)

(bn): BatchNorm2d(32, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(act): SiLU()

)

)

(1): Bottleneck(

(cv1): Conv(

(conv): Conv2d(32, 32, kernel_size=(1, 1), stride=(1, 1), bias=False)

(bn): BatchNorm2d(32, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(act): SiLU()

)

(cv2): Conv(

(conv): Conv2d(32, 32, kernel_size=(3, 3), stride=(1, 1), padding=(1, 1), bias=False)

(bn): BatchNorm2d(32, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(act): SiLU()

)

)

(2): Bottleneck(

(cv1): Conv(

(conv): Conv2d(32, 32, kernel_size=(1, 1), stride=(1, 1), bias=False)

(bn): BatchNorm2d(32, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(act): SiLU()

)

(cv2): Conv(

(conv): Conv2d(32, 32, kernel_size=(3, 3), stride=(1, 1), padding=(1, 1), bias=False)

(bn): BatchNorm2d(32, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(act): SiLU()

)

)

)

)

(classifier): Sequential(

(0): Linear(in_features=802816, out_features=100, bias=True)

(1): ReLU()

(2): Linear(in_features=100, out_features=4, bias=True)

)

)

2. Inspecting the model

# Report the number of parameters and other statistics of the model

import torchsummary as summary

summary.summary(model, (3, 224, 224))

Layer (type) Output Shape Param #

================================================================

Conv2d-1 [-1, 32, 112, 112] 864

BatchNorm2d-2 [-1, 32, 112, 112] 64

SiLU-3 [-1, 32, 112, 112] 0

Conv-4 [-1, 32, 112, 112] 0

Conv2d-5 [-1, 32, 112, 112] 1,024

BatchNorm2d-6 [-1, 32, 112, 112] 64

SiLU-7 [-1, 32, 112, 112] 0

Conv-8 [-1, 32, 112, 112] 0

Conv2d-9 [-1, 32, 112, 112] 1,024

BatchNorm2d-10 [-1, 32, 112, 112] 64

SiLU-11 [-1, 32, 112, 112] 0

Conv-12 [-1, 32, 112, 112] 0

Conv2d-13 [-1, 32, 112, 112] 9,216

BatchNorm2d-14 [-1, 32, 112, 112] 64

SiLU-15 [-1, 32, 112, 112] 0

Conv-16 [-1, 32, 112, 112] 0

Bottleneck-17 [-1, 32, 112, 112] 0

Conv2d-18 [-1, 32, 112, 112] 1,024

BatchNorm2d-19 [-1, 32, 112, 112] 64

SiLU-20 [-1, 32, 112, 112] 0

Conv-21 [-1, 32, 112, 112] 0

Conv2d-22 [-1, 32, 112, 112] 9,216

BatchNorm2d-23 [-1, 32, 112, 112] 64

SiLU-24 [-1, 32, 112, 112] 0

Conv-25 [-1, 32, 112, 112] 0

Bottleneck-26 [-1, 32, 112, 112] 0

Conv2d-27 [-1, 32, 112, 112] 1,024

BatchNorm2d-28 [-1, 32, 112, 112] 64

SiLU-29 [-1, 32, 112, 112] 0

Conv-30 [-1, 32, 112, 112] 0

Conv2d-31 [-1, 32, 112, 112] 9,216

BatchNorm2d-32 [-1, 32, 112, 112] 64

SiLU-33 [-1, 32, 112, 112] 0

Conv-34 [-1, 32, 112, 112] 0

Bottleneck-35 [-1, 32, 112, 112] 0

Conv2d-36 [-1, 32, 112, 112] 1,024

BatchNorm2d-37 [-1, 32, 112, 112] 64

SiLU-38 [-1, 32, 112, 112] 0

Conv-39 [-1, 32, 112, 112] 0

Conv2d-40 [-1, 64, 112, 112] 4,096

BatchNorm2d-41 [-1, 64, 112, 112] 128

SiLU-42 [-1, 64, 112, 112] 0

Conv-43 [-1, 64, 112, 112] 0

C3-44 [-1, 64, 112, 112] 0

Linear-45 [-1, 100] 80,281,700

ReLU-46 [-1, 100] 0

Linear-47 [-1, 4] 404

================================================================

Total params: 80,320,536

Trainable params: 80,320,536

Non-trainable params: 0

----------------------------------------------------------------

Input size (MB): 0.57

Forward/backward pass size (MB): 150.06

Params size (MB): 306.40

Estimated Total Size (MB): 457.04

----------------------------------------------------------------
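The totals in the summary can be reproduced by hand. The sketch below counts parameters from the layer definitions alone: a bias-free convolution has `(c1 / g) * c2 * k * k` weights and a BatchNorm layer has `2 * c2`. The helper names are introduced here for illustration.

```python
def conv_bn_params(c1, c2, k, g=1):
    """Parameters of a bias-free Conv2d followed by BatchNorm2d (weight + bias)."""
    return (c1 // g) * c2 * k * k + 2 * c2

def bottleneck_params(c1, c2, e=0.5):
    c_ = int(c2 * e)
    return conv_bn_params(c1, c_, 1) + conv_bn_params(c_, c2, 3)

def c3_params(c1, c2, n, e=0.5):
    c_ = int(c2 * e)
    return (2 * conv_bn_params(c1, c_, 1)          # cv1 and cv2
            + conv_bn_params(2 * c_, c2, 1)        # cv3
            + n * bottleneck_params(c_, c_, e=1.0))

def linear_params(n_in, n_out):
    return n_in * n_out + n_out

stem = conv_bn_params(3, 32, 3)
c3 = c3_params(32, 64, n=3)
head = linear_params(64 * 112 * 112, 100) + linear_params(100, 4)
total = stem + c3 + head

print(stem, c3, head, total)               # 928 37504 80282104 80320536
print(f"{total * 4 / 1024 ** 2:.2f} MB")   # 306.40 MB (float32 weights)
```

The convolutional part holds fewer than 40,000 parameters; almost everything sits in the first linear layer.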

III. Training the model

1. Writing the training function

# Training loop
def train(dataloader, model, loss_fn, optimizer):
    size = len(dataloader.dataset)  # number of training samples
    num_batches = len(dataloader)   # number of batches: ceil(size / batch_size)

    train_loss, train_acc = 0, 0    # running loss and number of correct predictions

    for X, y in dataloader:         # one batch of images and labels
        X, y = X.to(device), y.to(device)

        # Forward pass and loss
        pred = model(X)             # network output (logits)
        loss = loss_fn(pred, y)     # loss between the predictions and the true labels y

        # Backward pass
        optimizer.zero_grad()       # reset the gradients
        loss.backward()             # backpropagate
        optimizer.step()            # update the parameters

        # Accumulate accuracy and loss
        train_acc += (pred.argmax(1) == y).type(torch.float).sum().item()
        train_loss += loss.item()

    train_acc /= size
    train_loss /= num_batches

    return train_acc, train_loss

2. Writing the test function

def test(dataloader, model, loss_fn):
    size = len(dataloader.dataset)  # number of test samples
    num_batches = len(dataloader)   # number of batches: ceil(size / batch_size)
    test_loss, test_acc = 0, 0

    # No training happens here, so disable gradient tracking to save memory and compute
    with torch.no_grad():
        for imgs, target in dataloader:
            imgs, target = imgs.to(device), target.to(device)

            # Compute the loss
            target_pred = model(imgs)
            loss = loss_fn(target_pred, target)

            test_loss += loss.item()
            test_acc += (target_pred.argmax(1) == target).type(torch.float).sum().item()

    test_acc /= size
    test_loss /= num_batches

    return test_acc, test_loss
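Note that the accuracy is divided by the number of samples, while the loss is divided by the number of batches. With the default `reduction='mean'` of `nn.CrossEntropyLoss`, `loss.item()` is already a per-batch mean, so the reported loss is a mean of batch means. The two averages differ slightly when the last batch is smaller than the rest. A sketch with made-up numbers:

```python
# Hypothetical per-batch mean losses and batch sizes: the last batch is smaller,
# as happens when the dataset size is not a multiple of batch_size.
batch_losses = [1.0, 1.0, 5.0]
batch_sizes = [4, 4, 1]

mean_of_batch_means = sum(batch_losses) / len(batch_losses)

per_sample_mean = (
    sum(loss * n for loss, n in zip(batch_losses, batch_sizes)) / sum(batch_sizes)
)

print(round(mean_of_batch_means, 3))  # 2.333
print(round(per_sample_mean, 3))      # 1.444
```

For curves that are only compared across epochs the difference is usually negligible, but it is worth knowing when comparing absolute loss values between runs with different batch sizes.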

3. Running the training

import copy

optimizer = torch.optim.Adam(model.parameters(), lr=1e-4)
loss_fn = nn.CrossEntropyLoss()  # loss function

epochs = 20

train_loss = []
train_acc = []
test_loss = []
test_acc = []

best_acc = 0  # best test accuracy so far, used to select the best model

for epoch in range(epochs):
    model.train()
    epoch_train_acc, epoch_train_loss = train(train_dl, model, loss_fn, optimizer)

    model.eval()
    epoch_test_acc, epoch_test_loss = test(test_dl, model, loss_fn)

    # Keep a copy of the best model in best_model
    if epoch_test_acc > best_acc:
        best_acc = epoch_test_acc
        best_model = copy.deepcopy(model)

    train_acc.append(epoch_train_acc)
    train_loss.append(epoch_train_loss)
    test_acc.append(epoch_test_acc)
    test_loss.append(epoch_test_loss)

    # Current learning rate
    lr = optimizer.state_dict()['param_groups'][0]['lr']

    template = ('Epoch:{:2d}, Train_acc:{:.1f}%, Train_loss:{:.3f}, Test_acc:{:.1f}%, Test_loss:{:.3f}, Lr:{:.2E}')
    print(template.format(epoch+1, epoch_train_acc*100, epoch_train_loss,
                          epoch_test_acc*100, epoch_test_loss, lr))

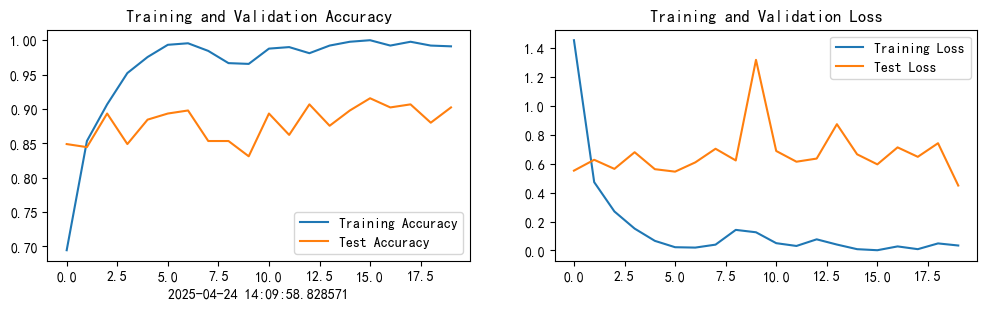

Epoch: 1, Train_acc:69.4%, Train_loss:1.455, Test_acc:84.9%, Test_loss:0.553, Lr:1.00E-04

Epoch: 2, Train_acc:85.3%, Train_loss:0.473, Test_acc:84.4%, Test_loss:0.628, Lr:1.00E-04

Epoch: 3, Train_acc:90.7%, Train_loss:0.270, Test_acc:89.3%, Test_loss:0.565, Lr:1.00E-04

Epoch: 4, Train_acc:95.2%, Train_loss:0.151, Test_acc:84.9%, Test_loss:0.680, Lr:1.00E-04

Epoch: 5, Train_acc:97.6%, Train_loss:0.066, Test_acc:88.4%, Test_loss:0.563, Lr:1.00E-04

Epoch: 6, Train_acc:99.3%, Train_loss:0.022, Test_acc:89.3%, Test_loss:0.546, Lr:1.00E-04

Epoch: 7, Train_acc:99.6%, Train_loss:0.020, Test_acc:89.8%, Test_loss:0.610, Lr:1.00E-04

Epoch: 8, Train_acc:98.4%, Train_loss:0.040, Test_acc:85.3%, Test_loss:0.704, Lr:1.00E-04

Epoch: 9, Train_acc:96.7%, Train_loss:0.142, Test_acc:85.3%, Test_loss:0.623, Lr:1.00E-04

Epoch:10, Train_acc:96.6%, Train_loss:0.126, Test_acc:83.1%, Test_loss:1.319, Lr:1.00E-04

Epoch:11, Train_acc:98.8%, Train_loss:0.050, Test_acc:89.3%, Test_loss:0.689, Lr:1.00E-04

Epoch:12, Train_acc:99.0%, Train_loss:0.031, Test_acc:86.2%, Test_loss:0.614, Lr:1.00E-04

Epoch:13, Train_acc:98.1%, Train_loss:0.077, Test_acc:90.7%, Test_loss:0.636, Lr:1.00E-04

Epoch:14, Train_acc:99.2%, Train_loss:0.041, Test_acc:87.6%, Test_loss:0.874, Lr:1.00E-04

Epoch:15, Train_acc:99.8%, Train_loss:0.009, Test_acc:89.8%, Test_loss:0.665, Lr:1.00E-04

Epoch:16, Train_acc:100.0%, Train_loss:0.002, Test_acc:91.6%, Test_loss:0.596, Lr:1.00E-04

Epoch:17, Train_acc:99.2%, Train_loss:0.028, Test_acc:90.2%, Test_loss:0.714, Lr:1.00E-04

Epoch:18, Train_acc:99.8%, Train_loss:0.009, Test_acc:90.7%, Test_loss:0.648, Lr:1.00E-04

Epoch:19, Train_acc:99.2%, Train_loss:0.049, Test_acc:88.0%, Test_loss:0.742, Lr:1.00E-04

Epoch:20, Train_acc:99.1%, Train_loss:0.034, Test_acc:90.2%, Test_loss:0.449, Lr:1.00E-04
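The best-model rule in the loop (`epoch_test_acc > best_acc`) can be replayed on the logged numbers to see which epochs triggered a `deepcopy`. This sketch uses the rounded test accuracies copied from the log above:

```python
# Test accuracy (%) per epoch, copied from the log above.
test_acc_log = [84.9, 84.4, 89.3, 84.9, 88.4, 89.3, 89.8, 85.3, 85.3, 83.1,
                89.3, 86.2, 90.7, 87.6, 89.8, 91.6, 90.2, 90.7, 88.0, 90.2]

best_acc, best_epoch, checkpoint_epochs = 0.0, None, []
for epoch, acc in enumerate(test_acc_log, start=1):
    if acc > best_acc:              # strict '>' keeps the earliest epoch on ties
        best_acc, best_epoch = acc, epoch
        checkpoint_epochs.append(epoch)

print(best_epoch, best_acc)     # 16 91.6
print(checkpoint_epochs)        # [1, 3, 7, 13, 16]
```

Because the best epoch is chosen on the same test set that is later used for evaluation, the 91.6% figure is likely somewhat optimistic; a separate validation split would be needed for an unbiased estimate.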

IV. Visualizing the results

1. Loss and accuracy curves

import matplotlib.pyplot as plt

# Suppress warnings
import warnings
warnings.filterwarnings("ignore")

plt.rcParams['font.sans-serif'] = ['SimHei']  # font that can render Chinese labels
plt.rcParams['axes.unicode_minus'] = False    # render the minus sign correctly
plt.rcParams['figure.dpi'] = 100              # figure resolution

from datetime import datetime
current_time = datetime.now()  # current time

epochs_range = range(epochs)

plt.figure(figsize=(12, 3))

plt.subplot(1, 2, 1)
plt.plot(epochs_range, train_acc, label='Training Accuracy')
plt.plot(epochs_range, test_acc, label='Test Accuracy')
plt.legend(loc='lower right')
plt.title('Training and Test Accuracy')
plt.xlabel(current_time)  # timestamp required for the course check-in; screenshots without it are not accepted

plt.subplot(1, 2, 2)
plt.plot(epochs_range, train_loss, label='Training Loss')
plt.plot(epochs_range, test_loss, label='Test Loss')
plt.legend(loc='upper right')
plt.title('Training and Test Loss')

plt.show()

2. Evaluating the model

best_model.eval()

epoch_test_acc, epoch_test_loss = test(test_dl, best_model, loss_fn)

epoch_test_acc, epoch_test_loss

# Check that this matches the best accuracy recorded during training

epoch_test_acc

0.9155555555555556
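As a quick sanity check, the printed accuracy can be converted back into a count of correct predictions. The sketch assumes the 225-image test split derived from the 1125 images:

```python
test_size = 225                      # 20% of the 1125 images
reported_acc = 0.9155555555555556    # value printed above

correct = round(reported_acc * test_size)

print(correct)                                            # 206
print(abs(correct / test_size - reported_acc) < 1e-12)    # True
print(round(100 * correct / test_size, 1))                # 91.6, the epoch-16 log entry
```

So the best model classifies 206 of the 225 test images correctly, which matches the best epoch in the training log.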

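V. Summary

1. Introduction to the YOLOv5 C3 module

The C3 module, short for Cross Stage Partial Bottleneck with 3 convolutions, is an improved residual block introduced in YOLOv5. Its design is inspired by CSPNet (Cross Stage Partial Network).

It serves two main purposes:

- Stronger feature extraction (greater representational capacity of the network)

- Less computation and fewer parameters (lighter than a conventional residual structure)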
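The parameter saving can be made concrete with a rough count. The comparison below is an illustration under stated assumptions, not a benchmark: it compares a C3 block with 64 input and output channels and `n = 3` against three residual bottlenecks applied at the full 64-channel width (`e = 1.0`). The helper names are introduced here for the example.

```python
def conv_bn_params(c1, c2, k):
    """Bias-free k x k convolution plus BatchNorm (weight and bias per channel)."""
    return c1 * c2 * k * k + 2 * c2

def bottleneck_params(c, e):
    c_ = int(c * e)
    return conv_bn_params(c, c_, 1) + conv_bn_params(c_, c, 3)

def c3_params(c, n, e=0.5):
    c_ = int(c * e)
    return (2 * conv_bn_params(c, c_, 1) + conv_bn_params(2 * c_, c, 1)
            + n * bottleneck_params(c_, e=1.0))

c, n = 64, 3
plain = n * bottleneck_params(c, e=1.0)   # n full-width residual blocks
csp = c3_params(c, n)                     # same depth, bottlenecks at half width

print(plain, csp, round(csp / plain, 2))  # 123648 39552 0.32
```

Under these assumptions the C3 block needs about a third of the parameters, because the 3x3 convolutions run on half of the channels.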

Structure of the C3 module

    input x
     ├── cv1 (1×1 Conv) ── Bottleneck × n ──┐
     │                                      │
     └── cv2 (1×1 Conv) ────────────────────┤
                                            ▼
                               Concat (channel dimension)
                                            │
                                     cv3 (1×1 Conv)
                                            │
                                            ▼
                                         output

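2. Building blocks of C3

Auto padding

Chooses the padding so that a stride-1 convolution leaves the spatial size of the feature map unchanged.

def autopad(k, p=None):  # pick the zero-padding from the kernel size k
    """
    :param k: kernel_size of the convolution
    :param p: padding of the convolution, usually None
    :return: the padding to apply (zero padding)
    """
    if p is None:
        # Integer k: floor-divide by 2; sequence of integers: floor-divide each element
        p = k // 2 if isinstance(k, int) else [x // 2 for x in k]
    return p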
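One caveat is worth spelling out: `k // 2` preserves the size only for odd kernel sizes. The sketch below evaluates the stride-1 output size for a few kernels; `out_size` is a helper introduced here and assumes dilation 1.

```python
def autopad(k, p=None):
    if p is None:
        p = k // 2 if isinstance(k, int) else [x // 2 for x in k]
    return p

def out_size(size, k, s=1):
    """Output length along one dimension for an integer kernel size k."""
    return (size + 2 * autopad(k) - k) // s + 1

for k in (1, 3, 5, 2, 4):
    print(k, autopad(k), out_size(112, k))
# odd kernels 1, 3, 5 keep 112; even kernels 2 and 4 give 113

print(autopad((3, 5)))  # [1, 2]: per-dimension padding for a non-square kernel
```

All kernels used in this post are 1x1 or 3x3, so the caveat does not affect the model here.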

Conv module

class Conv(nn.Module):
    def __init__(self, c1, c2, k=1, s=1, p=None, act=True, g=1):
        """
        :param c1: number of input channels
        :param c2: number of output channels
        :param k: kernel_size of the convolution
        :param s: stride of the convolution
        :param p: padding of the convolution, usually None
        :param act: activation; True uses SiLU(), False uses no activation
        :param g: groups of the convolution; 1 is an ordinary convolution, >1 is a grouped convolution (depthwise when g equals the channel count)
        """
        super(Conv, self).__init__()
        # BatchNorm follows, so a convolution bias would be redundant
        self.conv_1 = nn.Conv2d(c1, c2, k, s, autopad(k, p), groups=g, bias=False)
        self.bn = nn.BatchNorm2d(c2)
        self.act = nn.SiLU() if act else nn.Identity()  # act=True applies SiLU, act=False applies nothing

    def forward(self, x):
        return self.act(self.bn(self.conv_1(x)))
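The first definition of `Conv` in this post builds the convolution with `bias=False`. The reason is that BatchNorm subtracts the per-channel mean, so any constant added by the convolution cancels out. A plain-Python sketch of that effect for a single channel, using made-up activation values and leaving out BatchNorm's learnable scale and shift:

```python
from statistics import fmean, pstdev

def batch_norm(values, eps=1e-5):
    """Normalize one channel with its batch mean and (biased) batch variance."""
    mean = fmean(values)
    var = pstdev(values) ** 2
    return [(v - mean) / (var + eps) ** 0.5 for v in values]

conv_out = [0.2, -1.3, 0.7, 2.4]          # hypothetical activations of one channel
bias = 5.0
with_bias = [v + bias for v in conv_out]

a = batch_norm(conv_out)
b = batch_norm(with_bias)
print(max(abs(x - y) for x, y in zip(a, b)))  # ~0: the bias cancels out
```

BatchNorm's own learnable shift plays the role of the bias, so dropping the convolution bias saves parameters without losing expressiveness.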

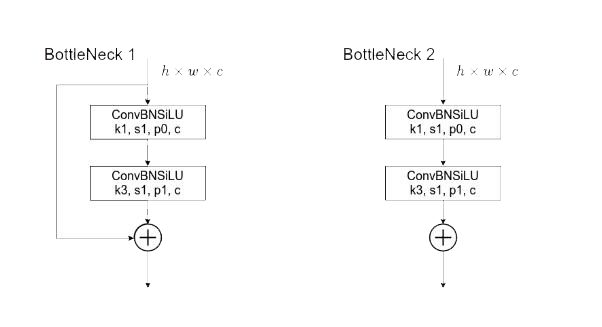

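Bottleneck

A Bottleneck comes in two variants: one with a residual (shortcut) connection (left) and one without (right). The `shortcut` argument selects between them, and the shortcut is applied only when the input and output channel counts match.

class Bottleneck(nn.Module):
    def __init__(self, c1, c2, e=0.5, shortcut=True, g=1):
        """
        :param c1: input channels of the whole Bottleneck
        :param c2: output channels of the whole Bottleneck
        :param e: expansion ratio; c2*e is the output channel count of the first convolution and the input channel count of the second
        :param shortcut: bool, whether the Bottleneck uses a shortcut, default True
        :param g: groups of the 3x3 convolution; 1 is an ordinary convolution, >1 is a grouped convolution
        """
        super(Bottleneck, self).__init__()
        c_ = int(c2*e)  # hidden channels; e=0.5 halves c2
        self.conv_1 = Conv(c1, c_, 1, 1)
        self.conv_2 = Conv(c_, c2, 3, 1, g=g)
        self.add = shortcut and c1 == c2

    def forward(self, x):
        return x + self.conv_2(self.conv_1(x)) if self.add else self.conv_2(self.conv_1(x))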
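The condition `shortcut and c1 == c2` decides whether the input is added back. The small truth table below mirrors it; `uses_shortcut` is a helper written for this illustration. The last case is relevant to the model built earlier, which calls `C3(32, 64, 3, 2)` and therefore passes the integer 2 as `shortcut`.

```python
def uses_shortcut(shortcut, c1, c2):
    """Mirror of `self.add = shortcut and c1 == c2` in Bottleneck."""
    return bool(shortcut and c1 == c2)

print(uses_shortcut(True, 32, 32))    # True
print(uses_shortcut(True, 32, 64))    # False: shapes differ, x cannot be added
print(uses_shortcut(False, 32, 32))   # False
print(uses_shortcut(2, 32, 32))       # True: any truthy value enables it
```

Passing `shortcut=True` by keyword would express the same intent more clearly than a positional 2.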

C3

class C3(nn.Module):
    def __init__(self, c1, c2, n=1, shortcut=True, g=1, e=0.5):
        """
        :param c1: input channels of the whole C3
        :param c2: output channels of the whole C3
        :param n: number of Bottlenecks
        :param shortcut: bool, whether the Bottlenecks use a shortcut, default True
        :param g: groups of the 3x3 convolutions in C3; 1 is an ordinary convolution, >1 is a grouped convolution
        :param e: expansion ratio
        """
        super(C3, self).__init__()
        c_ = int(c2 * e)
        self.cv_1 = Conv(c1, c_, 1, 1)
        self.cv_2 = Conv(c1, c_, 1, 1)
        # The * operator unpacks the list into separate arguments for Sequential, so m applies n Bottlenecks in a row
        self.m = nn.Sequential(*[Bottleneck(c_, c_, e=1, shortcut=shortcut, g=g) for _ in range(n)])
        self.cv_3 = Conv(2*c_, c2, 1, 1)

    def forward(self, x):
        return self.cv_3(torch.cat((self.m(self.cv_1(x)), self.cv_2(x)), dim=1))
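To follow the data flow through C3, it helps to trace only the channel counts, since the spatial size never changes. A sketch with a helper introduced for this purpose, evaluated for the `C3(32, 64, ...)` block used in this post:

```python
def c3_channel_trace(c1, c2, e=0.5):
    """Channel counts at each stage of C3 (spatial size is unchanged throughout)."""
    c_ = int(c2 * e)
    return {
        "input": c1,
        "cv_1 -> bottlenecks": c_,   # main branch
        "cv_2": c_,                  # bypass branch
        "concat": 2 * c_,
        "cv_3 (output)": c2,
    }

for stage, channels in c3_channel_trace(32, 64).items():
    print(f"{stage:>20}: {channels}")
```

Both branches carry `c_ = 32` channels, the concatenation restores 64, and `cv_3` mixes the two branches with a 1x1 convolution.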

Other modules

class BottleneckCSP(nn.Module):
    def __init__(self, c1, c2, e=0.5, n=1):
        """
        :param c1: input channels of the whole BottleneckCSP
        :param c2: output channels of the whole BottleneckCSP
        :param e: expansion ratio; c2*e is the channel count used by all intermediate layers
        :param n: number of Bottlenecks
        """
        super(BottleneckCSP, self).__init__()
        c_ = int(c2*e)
        self.conv_1 = Conv(c1, c_, 1, 1)
        self.m = nn.Sequential(*[Bottleneck(c_, c_, e=1, shortcut=True, g=1) for _ in range(n)])
        self.conv_3 = Conv(c_, c_, 1, 1)
        self.conv_2 = Conv(c1, c_, 1, 1)
        self.bn = nn.BatchNorm2d(2*c_)
        self.LeakyRelu = nn.LeakyReLU()
        self.conv_4 = Conv(2*c_, c2, 1, 1)

    def forward(self, x):
        x_1 = self.conv_3(self.m(self.conv_1(x)))  # main branch
        x_2 = self.conv_2(x)                       # bypass branch
        x_3 = torch.cat([x_1, x_2], dim=1)
        x_4 = self.LeakyRelu(self.bn(x_3))
        x = self.conv_4(x_4)
        return x

class SPP(nn.Module):
    def __init__(self, c1, c2, e=0.5, k1=5, k2=9, k3=13):
        """
        :param c1: input channels of the SPP module
        :param c2: output channels of the SPP module
        :param e: expansion ratio
        :param k1: kernel size of the first max-pooling layer
        :param k2: kernel size of the second max-pooling layer
        :param k3: kernel size of the third max-pooling layer
        """
        super(SPP, self).__init__()
        c_ = int(c2*e)
        self.cv_1 = Conv(c1, c_, 1, 1)
        self.pool_1 = nn.MaxPool2d(kernel_size=k1, stride=1, padding=k1 // 2)
        self.pool_2 = nn.MaxPool2d(kernel_size=k2, stride=1, padding=k2 // 2)
        self.pool_3 = nn.MaxPool2d(kernel_size=k3, stride=1, padding=k3 // 2)
        self.cv_2 = Conv(4*c_, c2, 1, 1)

    def forward(self, x):
        x = self.cv_1(x)  # compute the 1x1 convolution once and reuse it
        return self.cv_2(torch.cat((self.pool_1(x), self.pool_2(x), self.pool_3(x), x), dim=1))
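The three pooling layers can be concatenated only because stride 1 with padding `k // 2` keeps the spatial size for odd `k`. The arithmetic can be checked in plain Python; the feature-map size and channel counts below are hypothetical values chosen for the example:

```python
def pool_out_size(size, k, s=1):
    """Output length of MaxPool2d(kernel_size=k, stride=s, padding=k // 2)."""
    return (size + 2 * (k // 2) - k) // s + 1

size, c2, e = 20, 512, 0.5       # hypothetical feature-map size and channels
c_ = int(c2 * e)

sizes = [pool_out_size(size, k) for k in (5, 9, 13)]
concat_channels = 4 * c_         # three pooled maps plus the un-pooled one

print(sizes)             # [20, 20, 20]
print(concat_channels)   # 1024
```

This is why `cv_2` is declared with `4*c_` input channels.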
