- 🍨 This post is a study-log entry from the 🔗365-Day Deep Learning Training Camp

- 🍖 Original author: K同学啊

I. Preparation

1. Set up the GPU

```python
import os, PIL, pathlib
import torch
import torch.nn as nn
import torchvision
from torchvision import transforms, datasets

# Use the GPU when one is available, otherwise fall back to the CPU
device = torch.device("cuda" if torch.cuda.is_available() else "cpu")
device
```

device(type='cuda')

2. Load the data

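```python
import os, PIL, random, pathlib

data_dir = r"D:\z_temp\data\第4周"
data_dir = pathlib.Path(data_dir)

# Each sub-folder of data_dir is one class
data_paths = list(data_dir.glob('*'))
# path.name is the last path component (the folder name); this avoids hard-coding
# split("\\")[4], which only works on Windows and at this exact directory depth
classNames = [path.name for path in data_paths]
classNames
```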
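Why `path.name` is safer than splitting on `"\\"` and taking index 4 can be shown with the standard library alone. This sketch uses `pathlib.PureWindowsPath` so it behaves the same on any OS; the deeper second path is a hypothetical example, not a folder from this post.

```python
from pathlib import PureWindowsPath

# The class folder from this post, and a hypothetical copy one directory deeper
paths = [r"D:\z_temp\data\第4周\Monkeypox",
         r"D:\backup\z_temp\data\第4周\Monkeypox"]

for p in paths:
    by_index = p.split("\\")[4]         # original approach: depends on path depth
    by_name = PureWindowsPath(p).name   # last path component, independent of depth
    print(by_index, "|", by_name)
```

Only the first path gives `Monkeypox` with the index approach; `name` gives the folder name for both.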

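```python
total_datadir = r"D:\z_temp\data\第4周"

train_transforms = transforms.Compose([
    transforms.Resize([224, 224]),  # resize every image to 224x224
    transforms.ToTensor(),          # PIL image -> float tensor [C, H, W] scaled to [0, 1]
    transforms.Normalize(           # per-channel (x - mean) / std, using ImageNet statistics
        mean=[0.485, 0.456, 0.406],
        std=[0.229, 0.224, 0.225])
])

# ImageFolder treats every sub-folder as one class and labels images by folder
total_data = datasets.ImageFolder(total_datadir, transform=train_transforms)
total_data
```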

Dataset ImageFolder

Number of datapoints: 2142

Root location: D:\z_temp\data\第4周

StandardTransform

Transform: Compose(

Resize(size=[224, 224], interpolation=bilinear, max_size=None, antialias=True)

ToTensor()

Normalize(mean=[0.485, 0.456, 0.406], std=[0.229, 0.224, 0.225])

)
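To make the `ToTensor` + `Normalize` pair concrete, here is a stdlib-only sketch of what happens to a single RGB pixel. The pixel value (255, 0, 128) is chosen purely for illustration: `ToTensor` divides 8-bit values by 255, and `Normalize` then applies `(x - mean) / std` per channel with the ImageNet statistics used above.

```python
# Per-channel ImageNet statistics, the same values passed to transforms.Normalize
MEAN = [0.485, 0.456, 0.406]
STD = [0.229, 0.224, 0.225]

def normalize_pixel(rgb):
    """Mimic ToTensor (divide by 255) followed by Normalize ((x - mean) / std)."""
    scaled = [c / 255.0 for c in rgb]                      # ToTensor: [0, 255] -> [0, 1]
    return [(x - m) / s for x, m, s in zip(scaled, MEAN, STD)]

# Hypothetical pixel: full red, no green, mid-level blue
out = normalize_pixel((255, 0, 128))
print([round(v, 3) for v in out])
```

After normalization the values are no longer in [0, 1]; they are roughly centred around 0, which is what the network actually receives.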

3. Split the dataset


```python
# 80% of the images for training, the rest for testing
train_size = int(0.8 * len(total_data))
test_size = len(total_data) - train_size
train_dataset, test_dataset = torch.utils.data.random_split(total_data, [train_size, test_size])
train_dataset, test_dataset
```

(<torch.utils.data.dataset.Subset at 0x24c0c33d6a0>,

<torch.utils.data.dataset.Subset at 0x24c0c33da30>)
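With the 2142 images reported by `ImageFolder`, the 80/20 split works out as below. The sketch also mimics a seeded random split of indices with the standard library; the seed 42 and the helper `split_indices` are illustrative assumptions, not what `random_split` does internally. In PyTorch itself, passing `generator=torch.Generator().manual_seed(42)` to `random_split` is the usual way to make the split repeatable across runs.

```python
import random

TOTAL = 2142                      # number of images reported by ImageFolder above
train_size = int(0.8 * TOTAL)     # int() truncates 1713.6 down to 1713
test_size = TOTAL - train_size    # the remainder goes to the test set

def split_indices(n, n_train, seed):
    """Hypothetical helper: shuffle 0..n-1 with a fixed seed, then cut into two parts."""
    idx = list(range(n))
    random.Random(seed).shuffle(idx)
    return idx[:n_train], idx[n_train:]

train_idx, test_idx = split_indices(TOTAL, train_size, seed=42)
print(train_size, test_size)
```

Because the seed is fixed, calling `split_indices` again returns exactly the same two index lists, and the two parts never overlap.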

```python
batch_size = 32

train_dl = torch.utils.data.DataLoader(train_dataset,
                                       batch_size=batch_size,
                                       shuffle=True,    # reshuffle the training data every epoch
                                       num_workers=1)

test_dl = torch.utils.data.DataLoader(test_dataset,
                                      batch_size=batch_size,
                                      shuffle=False,   # order does not matter for evaluation
                                      num_workers=1)

# Inspect the shape of one batch
for X, y in test_dl:
    print('Shape of X [N,C,H,W]:', X.shape)
    print('Shape of y:', y.shape, y.dtype)
    break
```

Shape of X [N,C,H,W]: torch.Size([32, 3, 224, 224])

Shape of y: torch.Size([32]) torch.int64
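`len(dataloader)`, used later as `num_batches`, counts every batch including a final partial one, because `drop_last` defaults to `False`. For the 1713/429 split produced by `int(0.8 * 2142)` and a batch size of 32, the arithmetic can be sketched as:

```python
import math

BATCH_SIZE = 32

def batch_layout(n_samples, batch_size):
    """Return (number of batches, size of the last batch) when drop_last=False."""
    n_batches = math.ceil(n_samples / batch_size)
    last = n_samples - (n_batches - 1) * batch_size
    return n_batches, last

train_batches = batch_layout(1713, BATCH_SIZE)   # training subset size
test_batches = batch_layout(429, BATCH_SIZE)     # test subset size
print(train_batches, test_batches)
```

So one epoch runs 54 training batches (the last one holds 17 images) and 14 test batches (the last one holds 13 images).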

II. Build the CNN

```python
import torch.nn.functional as F

class Network_bn(nn.Module):
    def __init__(self):
        super(Network_bn, self).__init__()
        # Input: [N, 3, 224, 224]
        self.conv1 = nn.Conv2d(in_channels=3, out_channels=12, kernel_size=5, stride=1, padding=0)   # -> 220
        self.bn1 = nn.BatchNorm2d(12)
        self.conv2 = nn.Conv2d(in_channels=12, out_channels=12, kernel_size=5, stride=1, padding=0)  # -> 216
        self.bn2 = nn.BatchNorm2d(12)
        self.pool = nn.MaxPool2d(2, 2)                                                               # halves H and W
        self.conv4 = nn.Conv2d(in_channels=12, out_channels=24, kernel_size=5, stride=1, padding=0)  # 108 -> 104
        self.bn4 = nn.BatchNorm2d(24)
        self.conv5 = nn.Conv2d(in_channels=24, out_channels=24, kernel_size=5, stride=1, padding=0)  # -> 100
        self.bn5 = nn.BatchNorm2d(24)
        # After the second pooling: 100 -> 50, so the flattened size is 24*50*50 = 60000
        self.fc1 = nn.Linear(24*50*50, len(classNames))

    def forward(self, x):
        x = F.relu(self.bn1(self.conv1(x)))
        x = F.relu(self.bn2(self.conv2(x)))
        x = self.pool(x)
        x = F.relu(self.bn4(self.conv4(x)))
        x = F.relu(self.bn5(self.conv5(x)))
        x = self.pool(x)
        x = x.view(-1, 24*50*50)   # flatten to [N, 60000]
        x = self.fc1(x)            # raw logits; CrossEntropyLoss applies softmax internally
        return x

device = "cuda" if torch.cuda.is_available() else "cpu"
print("Using {} device".format(device))

model = Network_bn().to(device)
model
```

Network_bn(

(conv1): Conv2d(3, 12, kernel_size=(5, 5), stride=(1, 1))

(bn1): BatchNorm2d(12, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(conv2): Conv2d(12, 12, kernel_size=(5, 5), stride=(1, 1))

(bn2): BatchNorm2d(12, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(pool): MaxPool2d(kernel_size=2, stride=2, padding=0, dilation=1, ceil_mode=False)

(conv4): Conv2d(12, 24, kernel_size=(5, 5), stride=(1, 1))

(bn4): BatchNorm2d(24, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(conv5): Conv2d(24, 24, kernel_size=(5, 5), stride=(1, 1))

(bn5): BatchNorm2d(24, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(fc1): Linear(in_features=60000, out_features=2, bias=True)

)
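The `in_features=60000` of `fc1` follows from the standard output-size formula for convolution and pooling, `out = floor((in + 2*padding - kernel) / stride) + 1`. A small stdlib sketch that traces a 224×224 input through the layers of `Network_bn` (layer list transcribed from the code above):

```python
def out_size(size, kernel, stride=1, padding=0):
    """Spatial output size of a Conv2d / MaxPool2d layer (dilation 1, floor mode)."""
    return (size + 2 * padding - kernel) // stride + 1

# (name, kernel, stride) for each layer of Network_bn, in forward() order
LAYERS = [("conv1", 5, 1), ("conv2", 5, 1), ("pool", 2, 2),
          ("conv4", 5, 1), ("conv5", 5, 1), ("pool", 2, 2)]

size = 224
for name, k, s in LAYERS:
    size = out_size(size, k, s)
    print(f"{name:6s} -> {size}x{size}")

flat = 24 * size * size   # 24 channels after conv5
print("fc1 in_features:", flat)
```

The trace goes 224 → 220 → 216 → 108 → 104 → 100 → 50, and 24 × 50 × 50 = 60000 matches the printed `Linear(in_features=60000, ...)`.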

III. Train the model

1. Set the hyperparameters

```python
loss_fn = nn.CrossEntropyLoss()   # loss function
learn_rate = 1e-4                 # learning rate
opt = torch.optim.SGD(model.parameters(), lr=learn_rate)
```
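`nn.CrossEntropyLoss` takes the raw logits produced by the last linear layer plus a class index, and computes `-log(softmax(logits)[target])`, averaged over the batch by default. A stdlib sketch with made-up logits for illustration:

```python
import math

def cross_entropy(logits, target):
    """-log softmax(logits)[target], computed via log-sum-exp for numerical stability."""
    m = max(logits)
    log_sum_exp = m + math.log(sum(math.exp(z - m) for z in logits))
    return log_sum_exp - logits[target]

def mean_cross_entropy(batch_logits, targets):
    """Batch average, matching the default reduction='mean'."""
    losses = [cross_entropy(z, t) for z, t in zip(batch_logits, targets)]
    return sum(losses) / len(losses)

# Hypothetical two-class logits: the first sample favours class 0, the second class 1
batch = [[2.0, 0.5], [0.1, 1.2]]
labels = [0, 1]
print(round(mean_cross_entropy(batch, labels), 4))
```

If a two-class model outputs equal logits, the loss is exactly ln 2 ≈ 0.693, which is in the same range as the first-epoch losses (0.666 train, 0.646 test) in the log.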

2. Write the training function

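```python
# Training loop for one epoch
def train(dataloader, model, loss_fn, optimizer):
    size = len(dataloader.dataset)    # size of the training set (1713 images here)
    num_batches = len(dataloader)     # number of batches: ceil(1713 / 32) = 54
    train_loss, train_acc = 0, 0      # running loss and number of correct predictions

    for X, y in dataloader:           # images and their labels
        X, y = X.to(device), y.to(device)

        # Forward pass and prediction error
        pred = model(X)               # network output (logits)
        loss = loss_fn(pred, y)       # gap between the output and the true labels y

        # Backpropagation
        optimizer.zero_grad()         # reset the accumulated gradients
        loss.backward()               # compute gradients
        optimizer.step()              # update the parameters

        # Accumulate accuracy and loss
        train_acc += (pred.argmax(1) == y).type(torch.float).sum().item()
        train_loss += loss.item()

    train_acc /= size                 # fraction of correct predictions
    train_loss /= num_batches         # mean of the per-batch losses
    return train_acc, train_loss
```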
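The accuracy line checks, for each sample, whether the index of the largest logit (`argmax(1)`) equals the label, and sums the matches to get the number of correct predictions in the batch. The same bookkeeping in plain Python, on a hypothetical batch of logits:

```python
def argmax(values):
    """Index of the largest value (first index on ties)."""
    return max(range(len(values)), key=lambda i: values[i])

def count_correct(batch_logits, labels):
    """Number of samples whose predicted class equals the label."""
    return sum(1 for z, y in zip(batch_logits, labels) if argmax(z) == y)

# Hypothetical batch of 4 two-class logits and their labels
logits = [[1.5, -0.3], [0.2, 0.9], [2.1, 2.0], [-1.0, 0.4]]
labels = [0, 1, 1, 1]
correct = count_correct(logits, labels)
print(correct, correct / len(labels))
```

Dividing the accumulated count by the dataset size (not by the number of batches) gives the epoch accuracy, as `train()` does.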

3. Write the test function

```python
def test(dataloader, model, loss_fn):
    size = len(dataloader.dataset)    # size of the test set (429 images here)
    num_batches = len(dataloader)     # number of batches: ceil(429 / 32) = 14
    test_loss, test_acc = 0, 0

    # No training here, so disable gradient tracking to save memory and compute
    with torch.no_grad():
        for imgs, target in dataloader:
            imgs, target = imgs.to(device), target.to(device)

            # Compute the loss
            target_pred = model(imgs)
            loss = loss_fn(target_pred, target)

            test_loss += loss.item()
            test_acc += (target_pred.argmax(1) == target).type(torch.float).sum().item()

    test_acc /= size
    test_loss /= num_batches
    return test_acc, test_loss
```
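Both `train()` and `test()` divide the sum of per-batch mean losses by `num_batches`, so a final partial batch (13 images for a 429-image test set, since 429 = 13 × 32 + 13) counts as much as a full batch of 32. The bias is usually small; a sample-weighted mean avoids it. The sketch compares the two with made-up per-batch losses:

```python
def mean_of_batch_means(batch_losses):
    """What train()/test() do: every batch weighs the same."""
    return sum(batch_losses) / len(batch_losses)

def sample_weighted_mean(batch_losses, batch_sizes):
    """Every sample weighs the same: sum(loss_i * n_i) / sum(n_i)."""
    total = sum(l * n for l, n in zip(batch_losses, batch_sizes))
    return total / sum(batch_sizes)

# Test-set layout: 13 full batches of 32 plus a final batch of 13 (429 images)
sizes = [32] * 13 + [13]
# Hypothetical per-batch mean losses: all 0.40 except a harder last batch at 0.80
losses = [0.40] * 13 + [0.80]

print(round(mean_of_batch_means(losses), 4), round(sample_weighted_mean(losses, sizes), 4))
```

In the PyTorch loop, the weighted version amounts to accumulating `loss.item() * X.size(0)` and dividing by `size` instead of `num_batches`.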

4. Run the training

```python
epochs = 35
train_loss = []
train_acc = []
test_loss = []
test_acc = []

for epoch in range(epochs):
    model.train()   # training mode: BatchNorm normalizes with batch statistics and updates its running stats
    epoch_train_acc, epoch_train_loss = train(train_dl, model, loss_fn, opt)

    model.eval()    # evaluation mode: BatchNorm uses the stored running statistics
    epoch_test_acc, epoch_test_loss = test(test_dl, model, loss_fn)

    train_acc.append(epoch_train_acc)
    train_loss.append(epoch_train_loss)
    test_acc.append(epoch_test_acc)
    test_loss.append(epoch_test_loss)

    template = ('Epoch:{:2d}, Train_acc:{:.1f}%, Train_loss:{:.3f}, Test_acc:{:.1f}%,Test_loss:{:.3f}')
    print(template.format(epoch+1, epoch_train_acc*100, epoch_train_loss, epoch_test_acc*100, epoch_test_loss))

print('Done')
```
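Switching between `model.train()` and `model.eval()` matters here because the network uses `BatchNorm2d`. In training mode each batch is normalized with its own statistics and the layer updates running estimates as `running = (1 - momentum) * running + momentum * batch_stat`, with `momentum=0.1` as printed in the model summary; in eval mode those running estimates are used instead. A stdlib sketch of the running-mean update for one channel, with made-up batch means:

```python
MOMENTUM = 0.1   # BatchNorm2d default, matches the printed model summary

def update_running_mean(running, batch_mean, momentum=MOMENTUM):
    """Exponential moving average used by BatchNorm in training mode."""
    return (1 - momentum) * running + momentum * batch_mean

running_mean = 0.0   # BatchNorm initialises running_mean to zeros
# Hypothetical per-batch channel means fluctuating around 0.5 (100 batches)
for batch_mean in [0.52, 0.48, 0.51, 0.49, 0.50] * 20:
    running_mean = update_running_mean(running_mean, batch_mean)

print(round(running_mean, 3))
```

After enough batches the running mean settles near the typical batch mean, which is why evaluation results are stable only once the model has been switched to `eval()`.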

Epoch: 1, Train_acc:61.9%, Train_loss:0.666, Test_acc:64.1%,Test_loss:0.646

Epoch: 2, Train_acc:71.3%, Train_loss:0.578, Test_acc:68.3%,Test_loss:0.598

Epoch: 3, Train_acc:74.9%, Train_loss:0.521, Test_acc:69.7%,Test_loss:0.567

Epoch: 4, Train_acc:77.7%, Train_loss:0.487, Test_acc:71.6%,Test_loss:0.548

Epoch: 5, Train_acc:80.7%, Train_loss:0.449, Test_acc:70.2%,Test_loss:0.557

Epoch: 6, Train_acc:81.8%, Train_loss:0.432, Test_acc:75.8%,Test_loss:0.509

Epoch: 7, Train_acc:83.7%, Train_loss:0.398, Test_acc:72.0%,Test_loss:0.536

Epoch: 8, Train_acc:84.7%, Train_loss:0.390, Test_acc:72.0%,Test_loss:0.524

Epoch: 9, Train_acc:86.5%, Train_loss:0.368, Test_acc:76.7%,Test_loss:0.496

Epoch:10, Train_acc:87.5%, Train_loss:0.356, Test_acc:75.8%,Test_loss:0.496

Epoch:11, Train_acc:88.5%, Train_loss:0.337, Test_acc:79.0%,Test_loss:0.475

Epoch:12, Train_acc:88.1%, Train_loss:0.327, Test_acc:78.8%,Test_loss:0.462

Epoch:13, Train_acc:89.8%, Train_loss:0.315, Test_acc:78.3%,Test_loss:0.464

Epoch:14, Train_acc:89.8%, Train_loss:0.303, Test_acc:80.0%,Test_loss:0.488

Epoch:15, Train_acc:91.4%, Train_loss:0.287, Test_acc:80.0%,Test_loss:0.439

Epoch:16, Train_acc:90.9%, Train_loss:0.285, Test_acc:80.7%,Test_loss:0.442

Epoch:17, Train_acc:91.8%, Train_loss:0.272, Test_acc:79.3%,Test_loss:0.432

Epoch:18, Train_acc:92.2%, Train_loss:0.265, Test_acc:81.1%,Test_loss:0.419

Epoch:19, Train_acc:93.1%, Train_loss:0.253, Test_acc:81.4%,Test_loss:0.438

Epoch:20, Train_acc:92.5%, Train_loss:0.257, Test_acc:80.7%,Test_loss:0.405

Epoch:21, Train_acc:93.9%, Train_loss:0.239, Test_acc:82.8%,Test_loss:0.412

Epoch:22, Train_acc:93.5%, Train_loss:0.237, Test_acc:81.1%,Test_loss:0.408

Epoch:23, Train_acc:93.9%, Train_loss:0.231, Test_acc:83.2%,Test_loss:0.401

Epoch:24, Train_acc:93.6%, Train_loss:0.221, Test_acc:82.8%,Test_loss:0.403

Epoch:25, Train_acc:94.9%, Train_loss:0.215, Test_acc:82.1%,Test_loss:0.399

Epoch:26, Train_acc:94.8%, Train_loss:0.217, Test_acc:82.3%,Test_loss:0.402

Epoch:27, Train_acc:95.2%, Train_loss:0.210, Test_acc:83.7%,Test_loss:0.389

Epoch:28, Train_acc:94.7%, Train_loss:0.204, Test_acc:82.1%,Test_loss:0.400

Epoch:29, Train_acc:95.0%, Train_loss:0.202, Test_acc:82.3%,Test_loss:0.386

Epoch:30, Train_acc:95.2%, Train_loss:0.195, Test_acc:82.3%,Test_loss:0.388

Epoch:31, Train_acc:95.4%, Train_loss:0.190, Test_acc:82.1%,Test_loss:0.392

Epoch:32, Train_acc:94.9%, Train_loss:0.190, Test_acc:85.3%,Test_loss:0.383

Epoch:33, Train_acc:95.6%, Train_loss:0.185, Test_acc:81.6%,Test_loss:0.403

Epoch:34, Train_acc:95.9%, Train_loss:0.181, Test_acc:85.5%,Test_loss:0.370

Epoch:35, Train_acc:95.9%, Train_loss:0.179, Test_acc:83.7%,Test_loss:0.374

Done

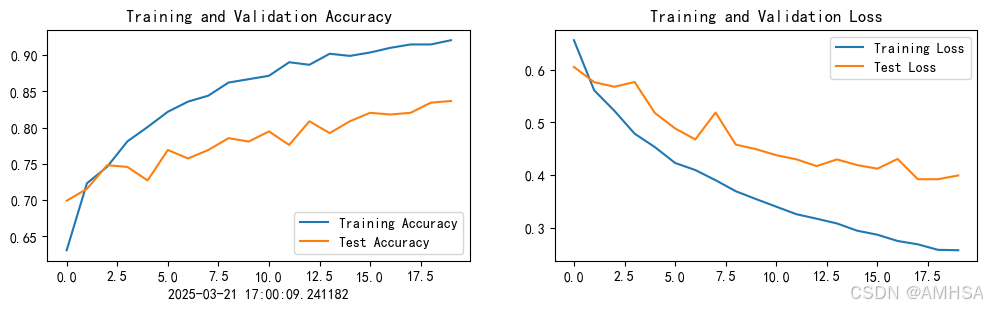

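The best test_acc is only 85.5% (epoch 34), which is clearly not accurate enough, while train_acc reaches 95.9%. A gap of 10 or more percentage points between training and test accuracy suggests the network overfits the training set to some degree, so the network structure needs to be adjusted.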
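One quick way to quantify that gap is to parse the printed log lines. This sketch uses the last three epochs from the log above; the regular expression simply matches the `template` string used in the training loop.

```python
import re

# Last three epochs copied from the training log above
LOG = """\
Epoch:33, Train_acc:95.6%, Train_loss:0.185, Test_acc:81.6%,Test_loss:0.403
Epoch:34, Train_acc:95.9%, Train_loss:0.181, Test_acc:85.5%,Test_loss:0.370
Epoch:35, Train_acc:95.9%, Train_loss:0.179, Test_acc:83.7%,Test_loss:0.374"""

PATTERN = re.compile(r"Epoch:\s*(\d+), Train_acc:([\d.]+)%.*?Test_acc:([\d.]+)%")

rows = [(int(e), float(tr), float(te)) for e, tr, te in PATTERN.findall(LOG)]
for epoch, tr, te in rows:
    print(f"epoch {epoch}: train {tr:.1f}%  test {te:.1f}%  gap {tr - te:.1f} pts")

best = max(rows, key=lambda r: r[2])
print("best test_acc:", best[2], "at epoch", best[0])
```

Over these epochs the train/test gap stays between roughly 10 and 14 points.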

IV. Visualize the results

```python
import matplotlib.pyplot as plt
# Hide warnings
import warnings
warnings.filterwarnings("ignore")                # ignore warning messages
plt.rcParams['font.sans-serif'] = ['SimHei']     # font that can render Chinese labels
plt.rcParams['axes.unicode_minus'] = False       # render minus signs correctly
plt.rcParams['figure.dpi'] = 100                 # resolution

from datetime import datetime
current_time = datetime.now()   # current time

epochs_range = range(epochs)

plt.figure(figsize=(12, 3))
plt.subplot(1, 2, 1)
plt.plot(epochs_range, train_acc, label='Training Accuracy')
plt.plot(epochs_range, test_acc, label='Test Accuracy')
plt.legend(loc='lower right')
plt.title('Training and Validation Accuracy')
plt.xlabel(current_time)   # include a timestamp when checking in, otherwise the code screenshot is not valid

plt.subplot(1, 2, 2)
plt.plot(epochs_range, train_loss, label='Training Loss')
plt.plot(epochs_range, test_loss, label='Test Loss')
plt.legend(loc='upper right')
plt.title('Training and Validation Loss')
plt.show()
```

V. Predict a single image

```python
from PIL import Image

classes = list(total_data.class_to_idx)   # class names, in label-index order

def predict_one_image(image_path, model, transform, classes):
    test_img = Image.open(image_path).convert('RGB')
    # plt.imshow(test_img)  # show the image being predicted

    test_img = transform(test_img)
    img = test_img.to(device).unsqueeze(0)   # add a batch dimension: [1, C, H, W]

    model.eval()
    with torch.no_grad():                    # inference only, no gradients needed
        output = model(img)

    _, pred = torch.max(output, 1)           # index of the largest logit
    pred_class = classes[pred.item()]
    print(f'Prediction: {pred_class}')

predict_one_image(image_path=str(pathlib.Path(data_dir)/"Monkeypox"/"M01_01_00.jpg"),
                  model=model,
                  transform=train_transforms,
                  classes=classes)
```

Prediction: Monkeypox
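`torch.max(output, 1)` returns both the largest logit and its index, and only the index is used above. To also report a confidence, the logits can be passed through softmax. A stdlib sketch; the logits and the second class name `"Other"` are hypothetical placeholders (the model has two outputs, but only the `Monkeypox` folder name appears in this post). `ImageFolder` assigns label indices to class folders in sorted order, which the sketch reproduces.

```python
import math

def softmax(logits):
    """Convert raw logits to probabilities that sum to 1."""
    m = max(logits)                           # subtract the max for numerical stability
    exps = [math.exp(z - m) for z in logits]
    total = sum(exps)
    return [e / total for e in exps]

# Hypothetical folder names; ImageFolder indexes classes in sorted order
class_to_idx = {name: i for i, name in enumerate(sorted(["Other", "Monkeypox"]))}
classes = list(class_to_idx)                  # names in index order

logits = [2.0, 0.5]                           # hypothetical model output for one image
probs = softmax(logits)
pred = max(range(len(probs)), key=lambda i: probs[i])
print(f"Prediction: {classes[pred]} ({probs[pred]:.1%})")
```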

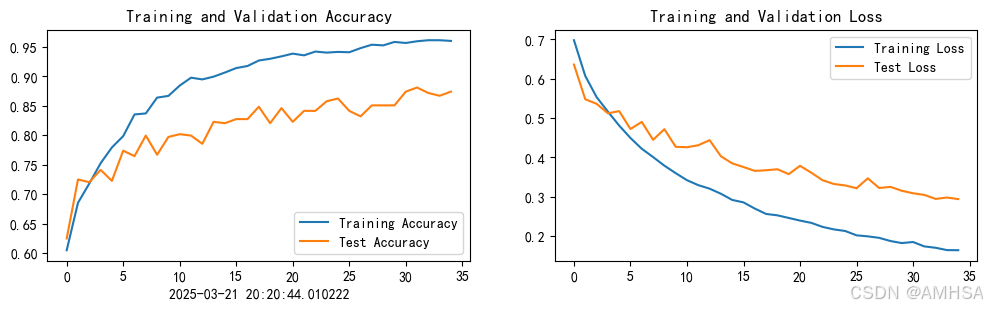

VI. Optimization: replace the two 5×5 convolutions (conv1, conv2) with three 3×3 convolutions (conv1, conv2, conv3)

```python
import torch.nn.functional as F

class Network_bn(nn.Module):
    def __init__(self):
        super(Network_bn, self).__init__()
        # Three 3x3 convolutions replace the first two 5x5 ones
        self.conv1 = nn.Conv2d(in_channels=3, out_channels=32, kernel_size=3, stride=1, padding=0)    # 224-3+1 = 222
        self.bn1 = nn.BatchNorm2d(32)
        self.conv2 = nn.Conv2d(in_channels=32, out_channels=32, kernel_size=3, stride=1, padding=0)   # 222-3+1 = 220
        self.bn2 = nn.BatchNorm2d(32)
        self.conv3 = nn.Conv2d(in_channels=32, out_channels=32, kernel_size=3, stride=1, padding=0)   # 220-3+1 = 218
        self.bn3 = nn.BatchNorm2d(32)
        self.pool = nn.MaxPool2d(2, 2)                                                                # 218 -> 109
        self.conv5 = nn.Conv2d(in_channels=32, out_channels=64, kernel_size=5, stride=1, padding=0)   # 109-5+1 = 105
        self.bn5 = nn.BatchNorm2d(64)
        self.conv6 = nn.Conv2d(in_channels=64, out_channels=64, kernel_size=5, stride=1, padding=0)   # 105-5+1 = 101
        self.bn6 = nn.BatchNorm2d(64)
        # Second pooling: 101 -> 50 (floor), so the flattened size is 64*50*50
        self.fc1 = nn.Linear(64*50*50, 64)
        # Note: forward() applies no activation between fc1 and fc2,
        # so together the two layers still compute a single linear map
        self.fc2 = nn.Linear(64, len(classNames))

    def forward(self, x):
        x = F.relu(self.bn1(self.conv1(x)))
        x = F.relu(self.bn2(self.conv2(x)))
        x = F.relu(self.bn3(self.conv3(x)))
        x = self.pool(x)
        x = F.relu(self.bn5(self.conv5(x)))
        x = F.relu(self.bn6(self.conv6(x)))
        x = self.pool(x)
        x = x.view(x.size(0), -1)   # flatten to [N, 64*50*50]
        x = self.fc1(x)
        x = self.fc2(x)
        return x

device = "cuda" if torch.cuda.is_available() else "cpu"
print("Using {} device".format(device))

model = Network_bn().to(device)
model
```
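The shape comments in the new model can be checked with the output-size formula `out = floor((in + 2*padding - kernel) / stride) + 1`, and the same arithmetic shows what the change costs. The sketch also computes the receptive field of the stacked stride-1 convolutions before the first pooling, `1 + Σ(k - 1)`. Stdlib only; the layer lists are transcribed from the two `Network_bn` versions in this post.

```python
def out_size(size, kernel, stride=1, padding=0):
    """Spatial output size of a Conv2d / MaxPool2d layer (dilation 1, floor mode)."""
    return (size + 2 * padding - kernel) // stride + 1

def trace(size, layers):
    """Apply out_size for each (kernel, stride) pair in order."""
    for kernel, stride in layers:
        size = out_size(size, kernel, stride)
    return size

def receptive_field(kernels):
    """Receptive field of stacked stride-1 convolutions: 1 + sum(k - 1)."""
    return 1 + sum(k - 1 for k in kernels)

# (kernel, stride) in forward() order; pooling is (2, 2)
OLD = [(5, 1), (5, 1), (2, 2), (5, 1), (5, 1), (2, 2)]
NEW = [(3, 1), (3, 1), (3, 1), (2, 2), (5, 1), (5, 1), (2, 2)]

old_side, new_side = trace(224, OLD), trace(224, NEW)
old_fc1 = 24 * old_side**2 * 2 + 2     # Linear(60000, 2): weights + biases
new_fc1 = 64 * new_side**2 * 64 + 64   # Linear(160000, 64): weights + biases

print(old_side, new_side)                                # spatial size before flattening
print(old_fc1, new_fc1)                                  # fc1 parameter counts
print(receptive_field([5, 5]), receptive_field([3, 3, 3]))
```

Both networks end at 50×50 before flattening, but `fc1` grows from about 0.12 M to about 10.24 M parameters, while the first block's receptive field shrinks from 9×9 (two 5×5) to 7×7 (three 3×3).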

Epoch: 1, Train_acc:60.5%, Train_loss:0.698, Test_acc:62.5%,Test_loss:0.636

Epoch: 2, Train_acc:68.5%, Train_loss:0.607, Test_acc:72.5%,Test_loss:0.548

Epoch: 3, Train_acc:71.8%, Train_loss:0.553, Test_acc:72.0%,Test_loss:0.536

Epoch: 4, Train_acc:75.2%, Train_loss:0.516, Test_acc:74.1%,Test_loss:0.512

Epoch: 5, Train_acc:77.9%, Train_loss:0.481, Test_acc:72.3%,Test_loss:0.518

Epoch: 6, Train_acc:79.9%, Train_loss:0.449, Test_acc:77.4%,Test_loss:0.472

Epoch: 7, Train_acc:83.5%, Train_loss:0.422, Test_acc:76.5%,Test_loss:0.490

Epoch: 8, Train_acc:83.7%, Train_loss:0.401, Test_acc:80.0%,Test_loss:0.445

Epoch: 9, Train_acc:86.4%, Train_loss:0.379, Test_acc:76.7%,Test_loss:0.472

Epoch:10, Train_acc:86.7%, Train_loss:0.360, Test_acc:79.7%,Test_loss:0.427

Epoch:11, Train_acc:88.4%, Train_loss:0.342, Test_acc:80.2%,Test_loss:0.426

Epoch:12, Train_acc:89.8%, Train_loss:0.330, Test_acc:80.0%,Test_loss:0.431

Epoch:13, Train_acc:89.5%, Train_loss:0.321, Test_acc:78.6%,Test_loss:0.444

Epoch:14, Train_acc:90.0%, Train_loss:0.308, Test_acc:82.3%,Test_loss:0.403

Epoch:15, Train_acc:90.7%, Train_loss:0.292, Test_acc:82.1%,Test_loss:0.385

Epoch:16, Train_acc:91.4%, Train_loss:0.286, Test_acc:82.8%,Test_loss:0.376

Epoch:17, Train_acc:91.8%, Train_loss:0.270, Test_acc:82.8%,Test_loss:0.366

Epoch:18, Train_acc:92.7%, Train_loss:0.257, Test_acc:84.8%,Test_loss:0.368

Epoch:19, Train_acc:93.0%, Train_loss:0.253, Test_acc:82.1%,Test_loss:0.370

Epoch:20, Train_acc:93.4%, Train_loss:0.246, Test_acc:84.6%,Test_loss:0.358

Epoch:21, Train_acc:93.9%, Train_loss:0.240, Test_acc:82.3%,Test_loss:0.379

Epoch:22, Train_acc:93.6%, Train_loss:0.234, Test_acc:84.1%,Test_loss:0.361

Epoch:23, Train_acc:94.2%, Train_loss:0.224, Test_acc:84.1%,Test_loss:0.342

Epoch:24, Train_acc:94.0%, Train_loss:0.217, Test_acc:85.8%,Test_loss:0.333

Epoch:25, Train_acc:94.2%, Train_loss:0.213, Test_acc:86.2%,Test_loss:0.329

Epoch:26, Train_acc:94.1%, Train_loss:0.202, Test_acc:84.1%,Test_loss:0.322

Epoch:27, Train_acc:94.8%, Train_loss:0.200, Test_acc:83.2%,Test_loss:0.347

Epoch:28, Train_acc:95.4%, Train_loss:0.196, Test_acc:85.1%,Test_loss:0.323

Epoch:29, Train_acc:95.3%, Train_loss:0.188, Test_acc:85.1%,Test_loss:0.325

Epoch:30, Train_acc:95.9%, Train_loss:0.183, Test_acc:85.1%,Test_loss:0.315

Epoch:31, Train_acc:95.7%, Train_loss:0.185, Test_acc:87.4%,Test_loss:0.309

Epoch:32, Train_acc:96.0%, Train_loss:0.174, Test_acc:88.1%,Test_loss:0.305

Epoch:33, Train_acc:96.1%, Train_loss:0.171, Test_acc:87.2%,Test_loss:0.295

Epoch:34, Train_acc:96.1%, Train_loss:0.165, Test_acc:86.7%,Test_loss:0.298

Epoch:35, Train_acc:96.0%, Train_loss:0.164, Test_acc:87.4%,Test_loss:0.294

Done

test_acc only just reaches 88% (peak 88.1% at epoch 32; 87.4% at the final epoch).
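Because the same test set is evaluated every epoch, the peak value is an optimistic figure. Averaging the last few epochs gives a steadier comparison of the two networks; the values below are copied from the two training logs (epochs 31 to 35).

```python
# Test accuracy (%) for epochs 31-35, copied from the two training logs
first_model = [82.1, 85.3, 81.6, 85.5, 83.7]    # Network_bn with 5x5 convolutions
second_model = [87.4, 88.1, 87.2, 86.7, 87.4]   # Network_bn with 3x3 convolutions

def mean(values):
    return sum(values) / len(values)

for name, accs in [("5x5 model", first_model), ("3x3 model", second_model)]:
    print(f"{name}: peak {max(accs):.1f}%, last-5 mean {mean(accs):.2f}%")

print(f"improvement in last-5 mean: {mean(second_model) - mean(first_model):.2f} points")
```

On this measure the 3×3 version improves from about 83.6% to about 87.4%, slightly more than the difference between the two peaks suggests.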
