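Learning PyTorch (Part 2)

CNN with 莫烦Python

I got started with PyTorch through the 莫烦Python tutorial videos. In this post I study it by working through a sample CNN program.

1. Full code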

import torch
import torch.nn as nn
from torch.autograd import Variable
import torch.utils.data as Data
import torchvision
import matplotlib.pyplot as plt

# Hyperparameters
EPOCH = 1
BATCH_SIZE = 50
LR = 0.001
DOWNLOAD_MNIST = False

train_data = torchvision.datasets.MNIST(
    root='./mnist',
    train=True,
    transform=torchvision.transforms.ToTensor(),
    download=DOWNLOAD_MNIST,
)

# # Plot one example
# print(train_data.train_data.size())
# print(train_data.train_labels.size())
# plt.imshow(train_data.train_data[0].numpy(), cmap='gray')
# plt.title('%i' % train_data.train_labels[0])
# plt.show()

train_loader = Data.DataLoader(
    dataset=train_data,
    batch_size=BATCH_SIZE,
    shuffle=True,
    num_workers=2,
)

test_data = torchvision.datasets.MNIST(
    root='./mnist',
    train=False,
)

test_x = Variable(torch.unsqueeze(test_data.test_data, dim=1), volatile=True).type(torch.FloatTensor)[:2000] / 255
test_y = test_data.test_labels[:2000]

class CNN(nn.Module):
    def __init__(self):
        super(CNN, self).__init__()
        self.conv1 = nn.Sequential(
            nn.Conv2d(
                in_channels=1,
                out_channels=16,
                kernel_size=5,
                stride=1,
                padding=2,
            ),
            nn.ReLU(),
            nn.MaxPool2d(kernel_size=2),
        )
        self.conv2 = nn.Sequential(
            nn.Conv2d(16, 32, 5, 1, 2),
            nn.ReLU(),
            nn.MaxPool2d(2),
        )
        self.out = nn.Linear(32 * 7 * 7, 10)

    def forward(self, x):
        x = self.conv1(x)
        x = self.conv2(x)  # (batch, 32, 7, 7)
        x = x.view(x.size(0), -1)  # (batch, 32 * 7 * 7); x.size(0) keeps the batch dimension
        output = self.out(x)
        return output

cnn = CNN()
print(cnn)

optimizer = torch.optim.Adam(cnn.parameters(), lr=LR)
loss_func = nn.CrossEntropyLoss()

# Training and testing
for epoch in range(EPOCH):
    for step, (x, y) in enumerate(train_loader):
        b_x = Variable(x)
        b_y = Variable(y)

        output = cnn(b_x)
        loss = loss_func(output, b_y)
        optimizer.zero_grad()
        loss.backward()
        optimizer.step()

        if step % 50 == 0:
            test_output = cnn(test_x)
            pred_y = torch.max(test_output, 1)[1].data.squeeze()
            accuracy = sum(pred_y == test_y) / float(test_y.size(0))
            # loss.item() needs PyTorch 0.4+; older versions used loss.data[0]
            print('EPOCH:', epoch, '| train_loss:', loss.item(), '| test accuracy:', accuracy)

# Print 10 predictions from the test data
test_output = cnn(test_x[:10])
pred_y = torch.max(test_output, 1)[1].data.numpy().squeeze()
print(pred_y, 'prediction number')
print(test_y[:10].numpy(), 'real number')

2. Step-by-step analysis

Import the required libraries

import torch
import torch.nn as nn
from torch.autograd import Variable
import torch.utils.data as Data
import torchvision
import matplotlib.pyplot as plt

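Hyperparameters

EPOCH = 1
BATCH_SIZE = 50
LR = 0.001
DOWNLOAD_MNIST = False

EPOCH: number of full passes over the training set

BATCH_SIZE: number of samples in each mini-batch

LR: learning rate

DOWNLOAD_MNIST: flag that controls whether the dataset is downloaded (set it to True on the first run and to False afterwards)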
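
To make the relationship between EPOCH and BATCH_SIZE concrete, here is a minimal sketch that counts how many parameter updates these settings produce. The helper name `steps_per_epoch` is my own, and the figure of 60,000 images is the size of the standard MNIST training split.

```python
import math

TRAIN_SAMPLES = 60_000  # size of the MNIST training split
EPOCH = 1
BATCH_SIZE = 50


def steps_per_epoch(num_samples: int, batch_size: int) -> int:
    """Number of mini-batches in one pass, keeping a final partial batch."""
    return math.ceil(num_samples / batch_size)


steps = steps_per_epoch(TRAIN_SAMPLES, BATCH_SIZE)
total_updates = EPOCH * steps
print(steps, total_updates)
```

With the values used in this post, one epoch is 1200 optimizer steps, so printing every 50 steps gives 24 progress lines.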

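Download the MNIST dataset via torchvision

train_data = torchvision.datasets.MNIST(
    root='./mnist',  # directory where the dataset is stored
    train=True,  # use the training split
    transform=torchvision.transforms.ToTensor(),  # convert each image to a Tensor
    download=DOWNLOAD_MNIST,  # flag: download the data or not
)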

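Prepare the training data with a DataLoader

train_loader = Data.DataLoader(  # wraps the dataset so it can be iterated in batches
    dataset=train_data,  # the dataset to load from
    batch_size=BATCH_SIZE,  # mini-batch size
    shuffle=True,  # flag: reshuffle the data every epoch
    num_workers=2,  # number of worker subprocesses used for loading
)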
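
To see what the loader actually yields, here is a small sketch on a stand-in dataset. The random tensors and the names `toy_dataset` and `toy_loader` are assumptions for illustration, and `num_workers=0` is used so the example runs in the main process.

```python
import torch
import torch.utils.data as Data

# Hypothetical stand-in for MNIST: 120 random "images" with integer labels.
images = torch.rand(120, 1, 28, 28)
labels = torch.randint(0, 10, (120,))
toy_dataset = Data.TensorDataset(images, labels)

toy_loader = Data.DataLoader(
    dataset=toy_dataset,
    batch_size=50,
    shuffle=True,
    num_workers=0,  # load in the main process to keep the sketch simple
)

# Collect the shape of every batch produced in one pass over the data.
batch_shapes = [(tuple(x.shape), tuple(y.shape)) for x, y in toy_loader]
for x_shape, y_shape in batch_shapes:
    print(x_shape, y_shape)
```

Each iteration returns an (inputs, labels) pair. The last batch is smaller when the dataset size is not a multiple of the batch size, which does not happen with MNIST's 60,000 images and a batch size of 50.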

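Prepare the test dataset

test_data = torchvision.datasets.MNIST(
    root='./mnist',
    train=False,  # use the test split
)

Take the first 2000 samples and wrap the test data in a Variable

# volatile=True is the pre-0.4 way to disable gradient tracking for inference
test_x = Variable(torch.unsqueeze(test_data.test_data, dim=1), volatile=True).type(torch.FloatTensor)[:2000] / 255
test_y = test_data.test_labels[:2000]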
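
The one-line preprocessing above does several things at once, so here is a sketch that reproduces it on a stand-in tensor. The random `raw_images` tensor is an assumption standing in for `test_data.test_data`, which holds raw uint8 images of shape (N, 28, 28).

```python
import torch

# Hypothetical stand-in for test_data.test_data: uint8 images, shape (N, 28, 28).
raw_images = torch.randint(0, 256, (3000, 28, 28), dtype=torch.uint8)

# Add a channel axis at dim=1, convert to float, keep 2000 samples, scale to [0, 1].
test_x = torch.unsqueeze(raw_images, dim=1).type(torch.FloatTensor)[:2000] / 255

print(tuple(raw_images.shape))
print(tuple(test_x.shape))
print(test_x.dtype)
```

The manual division by 255 is needed because the raw image tensor is read directly and never passes through `ToTensor()`, so without it the test inputs would be on a different scale from the training inputs.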

Build the network

class CNN(nn.Module):
    def __init__(self):
        super(CNN, self).__init__()
        self.conv1 = nn.Sequential(  # first convolutional block
            nn.Conv2d(
                in_channels=1,  # input channels
                out_channels=16,  # output channels
                kernel_size=5,  # size of the convolution kernel
                stride=1,
                padding=2,
            ),
            nn.ReLU(),
            nn.MaxPool2d(kernel_size=2),  # 2x2 max pooling
        )
        self.conv2 = nn.Sequential(
            nn.Conv2d(16, 32, 5, 1, 2),
            nn.ReLU(),
            nn.MaxPool2d(2),
        )
        self.out = nn.Linear(32 * 7 * 7, 10)

    def forward(self, x):  # defines how data flows through the network
        x = self.conv1(x)
        x = self.conv2(x)  # (batch, 32, 7, 7)
        x = x.view(x.size(0), -1)  # (batch, 32 * 7 * 7); x.size(0) keeps the batch dimension
        output = self.out(x)
        return output
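
The `32 * 7 * 7` input size of the final linear layer follows from the layer settings. Here is a sketch of that arithmetic using the standard output-size formula for convolution and pooling, floor((size + 2 * padding - kernel) / stride) + 1. The helper `conv_out` is my own name, not a PyTorch function.

```python
def conv_out(size: int, kernel: int, stride: int = 1, padding: int = 0) -> int:
    """Spatial output size of a convolution or pooling layer along one axis."""
    return (size + 2 * padding - kernel) // stride + 1


size = 28                                              # MNIST images are 28x28
size = conv_out(size, kernel=5, stride=1, padding=2)   # conv1 keeps 28
after_conv1 = size
size = conv_out(size, kernel=2, stride=2)              # 2x2 max pool halves it
after_pool1 = size
size = conv_out(size, kernel=5, stride=1, padding=2)   # conv2 keeps the size
size = conv_out(size, kernel=2, stride=2)              # second pool halves it again
flat_features = 32 * size * size                       # 32 channels from conv2

print(after_conv1, after_pool1, size, flat_features)
```

With kernel size 5 and stride 1, a padding of 2 keeps the spatial size unchanged, so only the two pooling layers shrink the image: 28 to 14 to 7.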

Define the optimizer and the loss function

cnn = CNN()  # instantiate the network
optimizer = torch.optim.Adam(cnn.parameters(), lr=LR)
loss_func = nn.CrossEntropyLoss()
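
One detail worth checking is that `nn.CrossEntropyLoss` takes raw scores (logits) and integer class labels, which is why the network above has no softmax layer. The sketch below compares the built-in loss with a hand computation on made-up numbers (the `logits` and `targets` values are arbitrary).

```python
import torch
import torch.nn as nn
import torch.nn.functional as F

# Two samples, three classes: raw, unnormalized scores.
logits = torch.tensor([[2.0, 0.5, -1.0],
                       [0.1, 0.2, 3.0]])
targets = torch.tensor([0, 2])  # class indices, not one-hot vectors

loss_func = nn.CrossEntropyLoss()
loss = loss_func(logits, targets)

# Same quantity by hand: mean negative log-probability of the true class.
log_probs = F.log_softmax(logits, dim=1)
manual = -log_probs[torch.arange(len(targets)), targets].mean()

print(loss.item(), manual.item())
```

Because the loss applies log-softmax internally, adding a softmax at the end of the network would apply the normalization twice.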

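Training loop

for epoch in range(EPOCH):
    for step, (x, y) in enumerate(train_loader):
        b_x = Variable(x)  # wrap the batch of input images in a Variable
        b_y = Variable(y)  # wrap the batch of labels in a Variable

        output = cnn(b_x)  # forward pass: predictions
        loss = loss_func(output, b_y)  # compute the loss
        optimizer.zero_grad()  # clear the gradients left over from the previous step
        loss.backward()  # backpropagation
        optimizer.step()  # update the parameters

        if step % 50 == 0:  # evaluate the network every 50 steps
            test_output = cnn(test_x)  # feed in the test data (as a Variable)
            pred_y = torch.max(test_output, 1)[1].data.squeeze()  # index of the highest score
            accuracy = sum(pred_y == test_y) / float(test_y.size(0))
            # loss.item() needs PyTorch 0.4+; older versions used loss.data[0]
            print('EPOCH:', epoch, '| train_loss:', loss.item(), '| test accuracy:', accuracy)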
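
The evaluation step above uses the older Variable-based API. On PyTorch 0.4 and later, the same accuracy check is usually written with `torch.no_grad()`. Here is a minimal sketch of that idea, not the tutorial's own code: the `evaluate` helper and the tiny `toy_model` are assumptions made up for the example.

```python
import torch
import torch.nn as nn


def evaluate(model: nn.Module, inputs: torch.Tensor, labels: torch.Tensor) -> float:
    """Return the fraction of inputs whose top-scoring class equals the label."""
    was_training = model.training
    model.eval()                      # switch off dropout/batch-norm updates, if any
    with torch.no_grad():             # no gradient tracking during inference
        logits = model(inputs)
    model.train(was_training)         # restore the previous mode
    predictions = logits.argmax(dim=1)
    return (predictions == labels).float().mean().item()


torch.manual_seed(0)
# Hypothetical tiny classifier standing in for the CNN.
toy_model = nn.Sequential(nn.Flatten(), nn.Linear(28 * 28, 10))
toy_x = torch.rand(8, 1, 28, 28)
with torch.no_grad():
    toy_y = toy_model(toy_x).argmax(dim=1)  # labels chosen to match the predictions

accuracy = evaluate(toy_model, toy_x, toy_y)
print(accuracy)
```

In the training loop this would be called as `evaluate(cnn, test_x, test_y)`, with `test_x` built as a plain float tensor instead of a Variable.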

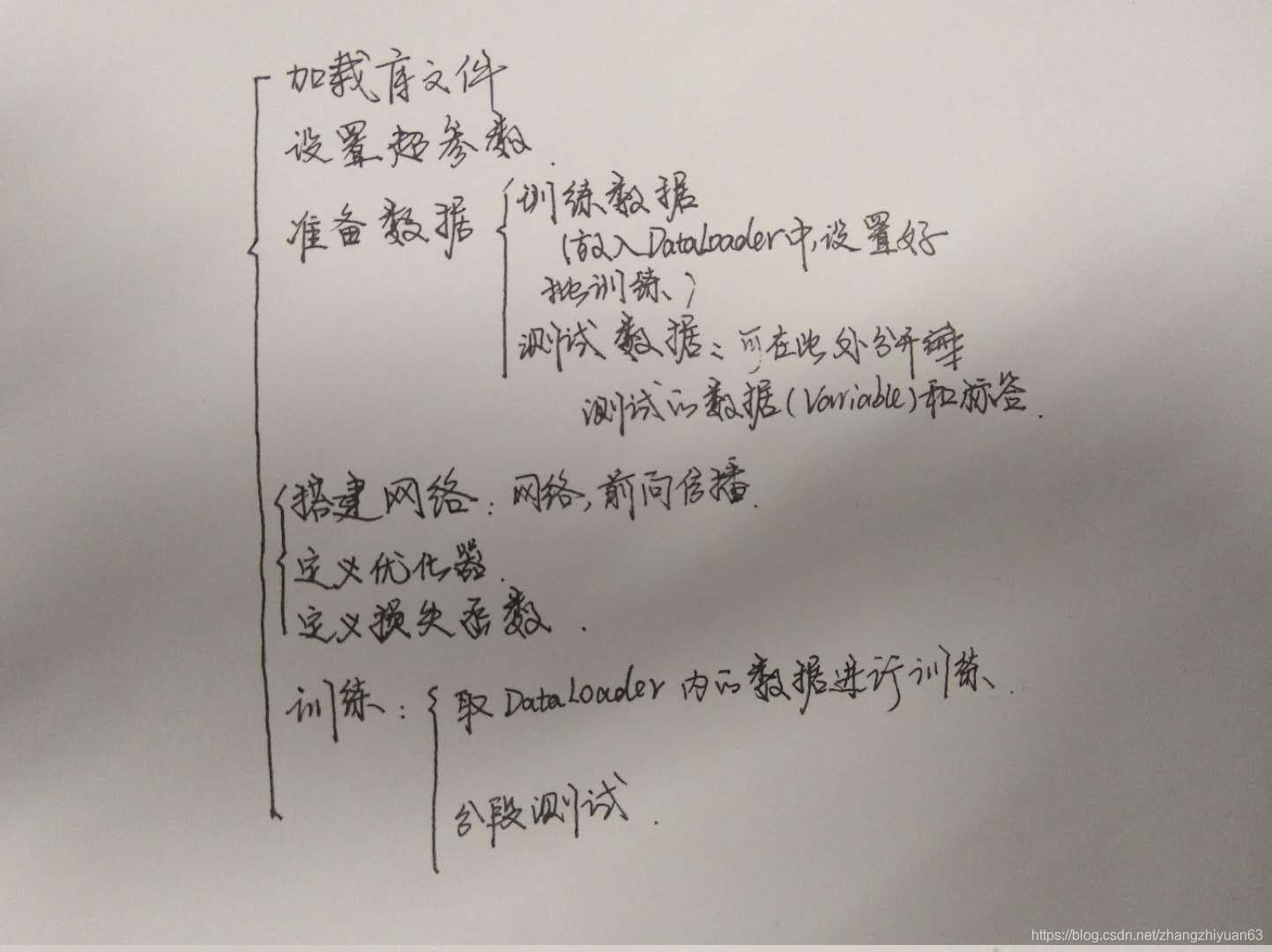

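3. Summary

To sum up, the workflow follows the order of the sections above: import the libraries, set the hyperparameters, download the MNIST training data, wrap it in a DataLoader, prepare the test data, build the network, define the optimizer and loss function, and run the training loop with periodic evaluation on the test data.

This post is based on the 莫烦Python tutorial videos and walks through a CNN example in PyTorch, covering data loading, network construction, the training loop, and the hyperparameter settings.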
