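MapReduce: Deduplication

Pattern description

This pattern filters the entire dataset. The final output of the filter is a set of unique records.

Purpose

Given a dataset whose records contain similar (duplicate) entries, find the set of unique records.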

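When to use

Use this pattern when the dataset contains duplicate values that must be removed. If the data has no duplicates, the pattern is unnecessary.

Typical use cases:

- Deduplicating data

- Extracting distinct values

- Protecting against inner-join explosion (the blow-up in output size when join keys are not unique)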
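To make the inner-join point concrete: if a key occurs m times in one dataset and n times in the other, an inner join emits m * n rows for that key. The plain-Java sketch below (not Hadoop code; the class and method names are illustrative) counts the rows an inner join would produce before and after deduplicating one side.

```java
import java.util.HashMap;
import java.util.LinkedHashSet;
import java.util.List;
import java.util.Map;

// Illustrates why deduplicating one side before an inner join limits output size:
// a key that occurs m times on the left and n times on the right yields m * n rows.
class JoinExplosion {

    // Count how many times each key occurs.
    static Map<String, Long> counts(List<String> keys) {
        Map<String, Long> c = new HashMap<>();
        for (String k : keys) {
            c.merge(k, 1L, Long::sum);
        }
        return c;
    }

    // Rows an inner join on the key would produce: sum over shared keys of m * n.
    static long innerJoinSize(List<String> left, List<String> right) {
        Map<String, Long> l = counts(left);
        Map<String, Long> r = counts(right);
        long rows = 0;
        for (Map.Entry<String, Long> e : l.entrySet()) {
            Long n = r.get(e.getKey());
            if (n != null) {
                rows += e.getValue() * n;
            }
        }
        return rows;
    }

    public static void main(String[] args) {
        List<String> left = List.of("u1", "u1", "u1", "u2");
        List<String> right = List.of("u1", "u1", "u2");
        System.out.println(innerJoinSize(left, right)); // 3*2 + 1*1 = 7
        // After deduplicating the left side: 1*2 + 1*1 = 3
        System.out.println(innerJoinSize(List.copyOf(new LinkedHashSet<>(left)), right));
    }
}
```

With thousands of copies of the same key on each side, the product grows quickly, and all of those rows for one key go to a single reducer.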

Problem description

Given a list of user comments, produce the set of distinct user IDs.
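Before running the job, a local reference for the expected result can be handy on small inputs. The following is a minimal in-memory sketch, assuming the record format written by the sample generator (`< id = NNN common = ... >`, with the 3-digit ID at characters 7 to 9); the class name `DistinctUserIds` is hypothetical.

```java
import java.util.List;
import java.util.Set;
import java.util.TreeSet;

// Local, single-process reference for the job's expected output:
// the set of distinct user IDs in the input records.
class DistinctUserIds {

    // Assumes records look like "< id = 123 common = ... >";
    // substring(7, 10) is the 3-digit ID. Shorter lines are skipped.
    static Set<String> distinctIds(List<String> records) {
        Set<String> ids = new TreeSet<>(); // sorted, like a single reducer's output
        for (String line : records) {
            if (line.length() >= 10) {
                ids.add(line.substring(7, 10));
            }
        }
        return ids;
    }

    public static void main(String[] args) {
        List<String> records = List.of(
                "< id = 205 common = ABC >",
                "< id = 117 common = XYZ >",
                "< id = 205 common = QQQ >");
        System.out.println(distinctIds(records)); // prints [117, 205]
    }
}
```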

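Sample input

The sample text is generated by the following code. Each line holds a user ID between 100 and 299 and a random 19-character comment: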

import java.io.BufferedWriter;
import java.io.File;
import java.io.FileWriter;
import java.io.IOException;
import java.util.Random;

public class create {

    private static final Random RANDOM = new Random();

    // Generate a random string of the given length from the letters A-Z and the space character
    public static String getRandomChar(int length) {
        char[] chr = {'A', 'B', 'C', ' ', 'D', 'E', 'F', 'G', 'H', 'I', 'J', 'K', 'L', 'M', 'N', 'O', 'P', 'Q', 'R', 'S', 'T', 'U', 'V', 'W', 'X', 'Y', 'Z'};
        StringBuilder buffer = new StringBuilder();
        for (int i = 0; i < length; i++) {
            buffer.append(chr[RANDOM.nextInt(chr.length)]);
        }
        return buffer.toString();
    }

    public static void main(String[] args) throws IOException {
        String path = "input/file.txt";
        File file = new File(path);
        if (!file.exists()) {
            file.getParentFile().mkdirs();
        }
        // Append mode: FileWriter creates the file if needed; re-running adds another 1000 lines
        try (BufferedWriter bw = new BufferedWriter(new FileWriter(file, true))) {
            for (int i = 0; i < 1000; i++) {
                int id = (int) (Math.random() * 200 + 100); // user ID in [100, 299]
                bw.write("< id = " + id + " common = " + getRandomChar(19) + " >\n");
            }
        }
    }
}

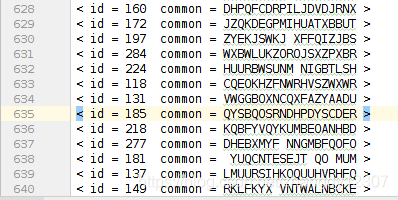

运行结果如下:

Mapper stage

The mapper extracts the user ID from each input record and emits it to the reducer as the key, with NullWritable as the value.

The mapper code is as follows:

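public static class DeduplicationMappper extends Mapper<Object, Text, Text, NullWritable> {

    private final Text userId = new Text(); // reused output key

    @Override
    public void map(Object key, Text value, Context context) throws IOException, InterruptedException {
        String line = value.toString();
        if (line.length() < 10) {
            return; // skip blank or malformed lines
        }
        // Characters 7-9 hold the 3-digit user ID ("< id = " is 7 characters long)
        userId.set(line.substring(7, 10));
        context.write(userId, NullWritable.get());
    }
}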
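`substring(7, 10)` works only because the generator always writes `< id = ` (7 characters) followed by a 3-digit ID. The sketch below shows a hypothetical helper, `UserIdParser.extractId`, that uses a regular expression instead, so it would also tolerate IDs of other lengths and skip lines that do not match. It is an alternative, not the original author's method.

```java
import java.util.Optional;
import java.util.regex.Matcher;
import java.util.regex.Pattern;

// Hypothetical helper: extract the user ID from a record with a regex
// instead of fixed character offsets.
class UserIdParser {

    // Matches "id = <digits>" and captures the digits.
    private static final Pattern ID = Pattern.compile("id\\s*=\\s*(\\d+)");

    static Optional<String> extractId(String line) {
        Matcher m = ID.matcher(line);
        return m.find() ? Optional.of(m.group(1)) : Optional.empty();
    }

    public static void main(String[] args) {
        System.out.println("< id = ".length());                    // 7: where the ID starts
        System.out.println(extractId("< id = 205 common = ABC >")); // Optional[205]
        System.out.println(extractId("< id = 42 common = ABC >"));  // Optional[42]
        System.out.println(extractId("malformed line"));           // Optional.empty
    }
}
```

Inside `map()`, the mapper could call `extractId(value.toString())` and emit the ID only when the result is present. With the generator's fixed format, both approaches yield the same IDs.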

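Reducer stage

Each reduce call receives one distinct key together with all of its NullWritable values. The reducer ignores the values and writes the key exactly once to the file system, so every user ID appears only once in the output.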

public static class DeduplicationReducer extends Reducer<Text, NullWritable, Text, NullWritable> {

    // Each call receives one distinct user ID; write it once and ignore the values
    @Override
    public void reduce(Text key, Iterable<NullWritable> values, Context context) throws IOException, InterruptedException {
        context.write(key, NullWritable.get());
    }
}
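Why one write per reduce call is enough can be seen by simulating the step between map and reduce. The plain-Java sketch below (an in-memory stand-in, not Hadoop's actual shuffle implementation; names are illustrative) sorts the map output keys, groups equal keys, and emits each group's key once, as `DeduplicationReducer` does.

```java
import java.util.ArrayList;
import java.util.Collections;
import java.util.List;

// In-memory stand-in for shuffle + reduce: sort map output by key,
// group equal keys, and emit each key once.
class ShuffleSimulation {

    static List<String> shuffleAndReduce(List<String> mapOutputKeys) {
        List<String> sorted = new ArrayList<>(mapOutputKeys);
        Collections.sort(sorted); // stands in for the framework's sort by key
        List<String> output = new ArrayList<>();
        int i = 0;
        while (i < sorted.size()) {
            String key = sorted.get(i);
            // Skip the run of equal keys (the values the reducer would iterate over)
            while (i < sorted.size() && sorted.get(i).equals(key)) {
                i++;
            }
            output.add(key); // reduce(): write the key once, ignore the values
        }
        return output;
    }

    public static void main(String[] args) {
        System.out.println(shuffleAndReduce(List.of("205", "117", "205", "150", "117")));
        // prints [117, 150, 205]
    }
}
```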

The complete code is as follows:

import org.apache.hadoop.conf.Configuration;
import org.apache.hadoop.fs.FileSystem;
import org.apache.hadoop.fs.Path;
import org.apache.hadoop.io.NullWritable;
import org.apache.hadoop.io.Text;
import org.apache.hadoop.mapreduce.Job;
import org.apache.hadoop.mapreduce.Mapper;
import org.apache.hadoop.mapreduce.Reducer;
import org.apache.hadoop.mapreduce.lib.input.FileInputFormat;
import org.apache.hadoop.mapreduce.lib.output.FileOutputFormat;

import java.io.IOException;

public class Deduplication {

    // Mapper: extract the user ID from each record and emit (ID, null)
    public static class DeduplicationMappper extends Mapper<Object, Text, Text, NullWritable> {

        private final Text userId = new Text(); // reused output key

        @Override
        public void map(Object key, Text value, Context context) throws IOException, InterruptedException {
            String line = value.toString();
            if (line.length() < 10) {
                return; // skip blank or malformed lines
            }
            // Characters 7-9 hold the 3-digit user ID ("< id = " is 7 characters long)
            userId.set(line.substring(7, 10));
            context.write(userId, NullWritable.get());
        }
    }

    // Reducer: each call receives one distinct ID; write it once and ignore the values
    public static class DeduplicationReducer extends Reducer<Text, NullWritable, Text, NullWritable> {

        @Override
        public void reduce(Text key, Iterable<NullWritable> values, Context context) throws IOException, InterruptedException {
            context.write(key, NullWritable.get());
        }
    }

    public static void main(String[] args) throws Exception {
        Configuration configuration = new Configuration();
        // Use command-line arguments if given, otherwise the local defaults
        String[] otherArgs = args.length > 0 ? args : new String[]{"input/file.txt", "output"};
        if (otherArgs.length != 2) {
            System.err.println("Usage: Deduplication <input path> <output path>");
            System.exit(2);
        }

        // Delete an existing output directory; otherwise the job fails at submission
        Path outputPath = new Path(otherArgs[1]);
        FileSystem fs = outputPath.getFileSystem(configuration);
        if (fs.exists(outputPath)) {
            fs.delete(outputPath, true);
        }

        Job job = Job.getInstance(configuration, "Deduplication");
        job.setJarByClass(Deduplication.class);
        job.setMapperClass(DeduplicationMappper.class);
        job.setReducerClass(DeduplicationReducer.class);
        job.setOutputKeyClass(Text.class);
        job.setOutputValueClass(NullWritable.class);
        FileInputFormat.addInputPath(job, new Path(otherArgs[0]));
        FileOutputFormat.setOutputPath(job, outputPath);
        System.exit(job.waitForCompletion(true) ? 0 : 1);
    }
}

Final thoughts

Deduplication is relatively simple. A natural next step is to add a combiner to speed up processing.
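Because `DeduplicationReducer` has the same input and output types (Text, NullWritable) and applying it more than once gives the same result, it could plausibly be registered as the combiner with `job.setCombinerClass(DeduplicationReducer.class);`. Hadoop may run a combiner zero, one, or several times, which is safe here for the same reason. The plain-Java sketch below (not Hadoop code; the split contents are made up, and it assumes the combiner runs once per map task) counts how many records would cross the shuffle with and without local deduplication.

```java
import java.util.LinkedHashSet;
import java.util.List;

// Plain-Java illustration of a combiner's effect for deduplication: each map
// task removes duplicates from its own output before the shuffle, so fewer
// (key, null) records are sent to the reducers. The final output is unchanged.
class CombinerEffect {

    // Without a combiner, every map output record is shuffled.
    static int shuffledWithoutCombiner(List<List<String>> splits) {
        int total = 0;
        for (List<String> split : splits) {
            total += split.size();
        }
        return total;
    }

    // With a combiner, only the distinct keys of each map task are shuffled.
    static int shuffledWithCombiner(List<List<String>> splits) {
        int total = 0;
        for (List<String> split : splits) {
            total += new LinkedHashSet<>(split).size();
        }
        return total;
    }

    public static void main(String[] args) {
        List<List<String>> splits = List.of(
                List.of("205", "117", "205", "205"),
                List.of("117", "150", "117"));
        System.out.println(shuffledWithoutCombiner(splits)); // 7
        System.out.println(shuffledWithCombiner(splits));    // 2 + 2 = 4
    }
}
```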
