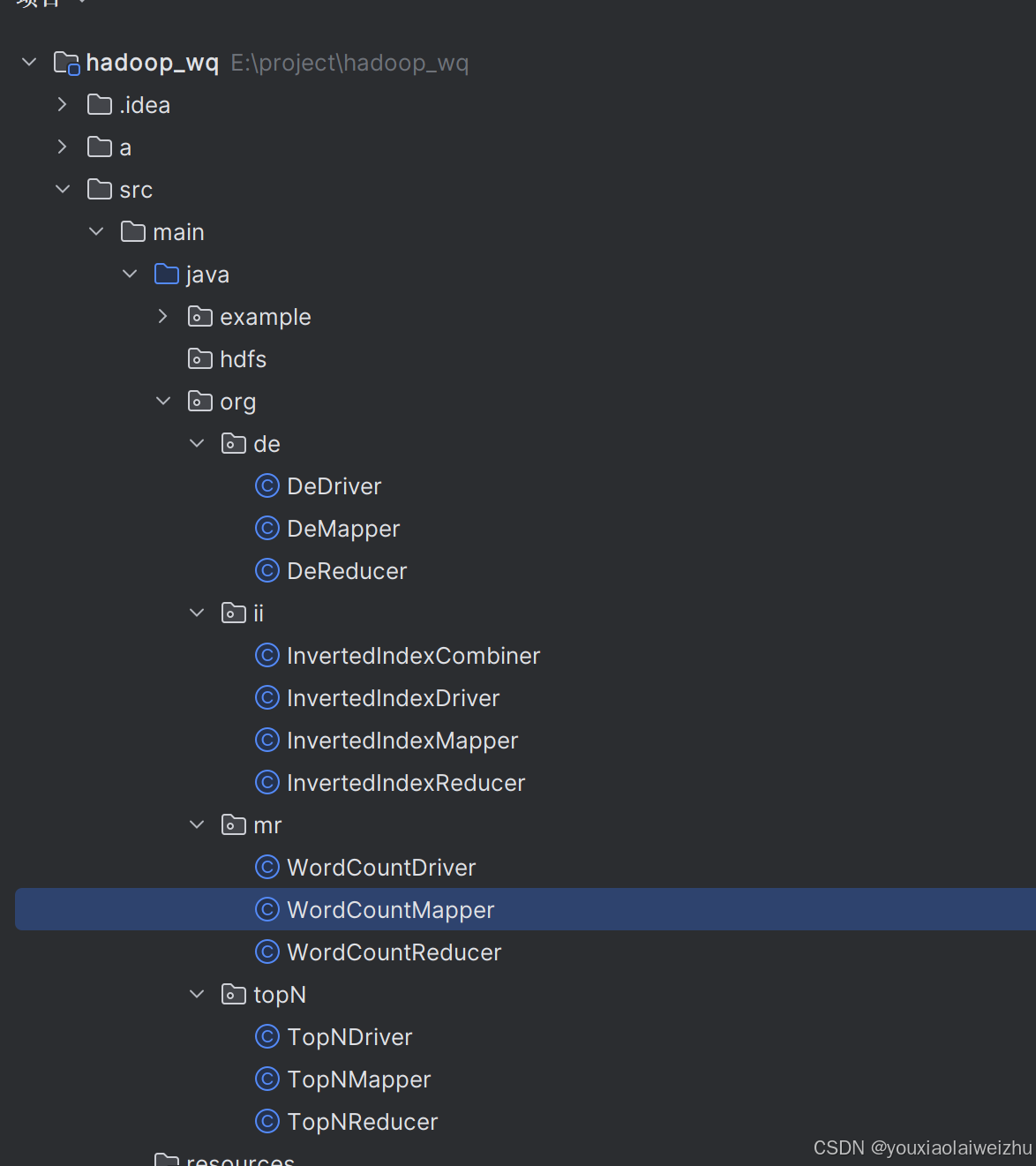

1.新建包(java rg下新建一个mr包,添加三个java类

WordCountMapper中

WordCountMapper中

package org.mr;

import org.apache.hadoop.io.IntWritable;

import org.apache.hadoop.io.LongWritable;

import org.apache.hadoop.io.Text;

import org.apache.hadoop.mapreduce.Mapper;

import java.io.IOException;

public class WordCountMapper extends Mapper<LongWritable, Text, Text, IntWritable> {

@Override

protected void map(LongWritable key, Text value, Mapper<LongWritable, Text, Text, IntWritable>.Context context) throws IOException, InterruptedException {

//输入 <0, "Hello World">

//输出<"Hello", 1> <"World", 1>

String line = value.toString(); //"Hello World"

String[] words = line.split(" "); //"Hello" , "World"

for(String word: words) {

context.write(new Text(word), new IntWritable(1) ); //输出

}

}

}

WordCountReducer

package org.mr;

import org.apache.hadoop.io.IntWritable;

import org.apache.hadoop.io.Text;

import org.apache.hadoop.mapreduce.Reducer;

import java.io.IOException;

public class WordCountReducer extends Reducer<Text, IntWritable, Text, IntWritable> {

//输入:<"Hello", (1,1,1)>

//输出:<"Hello", 3>

@Override

protected void reduce(Text key, Iterable<IntWritable> values, Reducer<Text, IntWritable, Text, IntWritable>.Context context) throws IOException, InterruptedException {

int count = 0; //求迭代器里的每个数字之和

for(IntWritable i: values) {

count += i.get();

}

context.write(key, new IntWritable(count));

}

}

WordCountDriver

package org.mr;

import org.apache.hadoop.conf.Configuration;

import org.apache.hadoop.fs.Path;

import org.apache.hadoop.io.IntWritable;

import org.apache.hadoop.io.Text;

import org.apache.hadoop.mapreduce.Job;

import org.apache.hadoop.mapreduce.lib.input.FileInputFormat;

import org.apache.hadoop.mapreduce.lib.output.FileOutputFormat;

import java.io.IOException;

public class WordCountDriver {

public static void main(String[] args) throws IOException, InterruptedException, ClassNotFoundException {

Configuration conf = new Configuration();

conf.set("mapreduce.framework.name", "local");

Job job = Job.getInstance(conf);

job.setJarByClass(WordCountDriver.class);

job.setMapperClass(WordCountMapper.class); //设置Mapper类

job.setReducerClass(WordCountReducer.class);//设置Reducer类

job.setMapOutputKeyClass(Text.class); //设置Map的输出的键的类型

job.setMapOutputValueClass(IntWritable.class);//设置Map的输出的值的类型

job.setOutputKeyClass(Text.class); //设置Reduce的输出的键的类型

job.setOutputValueClass(IntWritable.class); //设置Reduce的输出的值的类型

FileInputFormat.setInputPaths(job, new Path("/wordcount/input")); //设置输入目录

FileOutputFormat.setOutputPath(job, new Path("/wordcount/output")); //设置输出目录

boolean res = job.waitForCompletion(true);

System.exit(res? 0: 1);

}

}

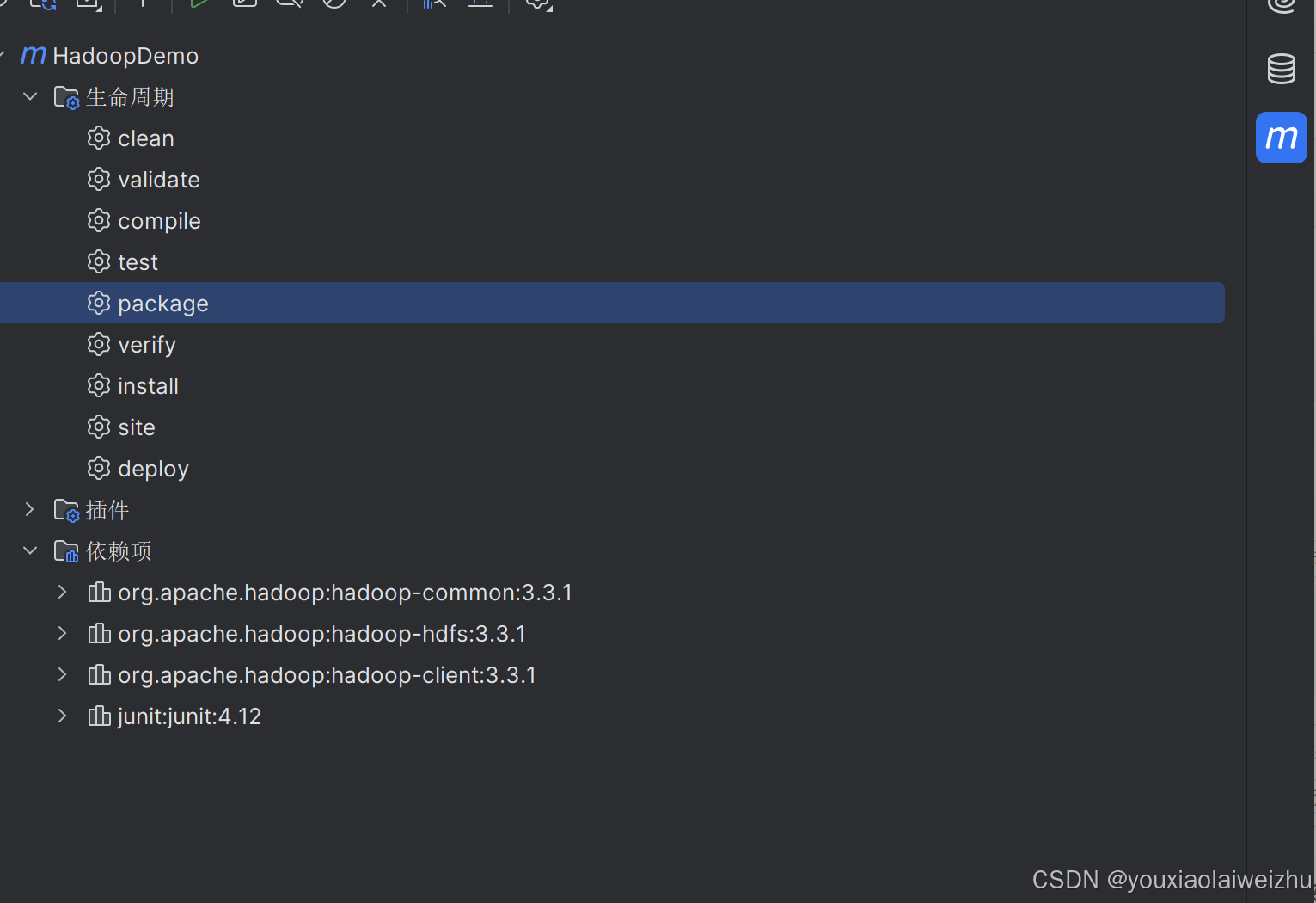

3.打包

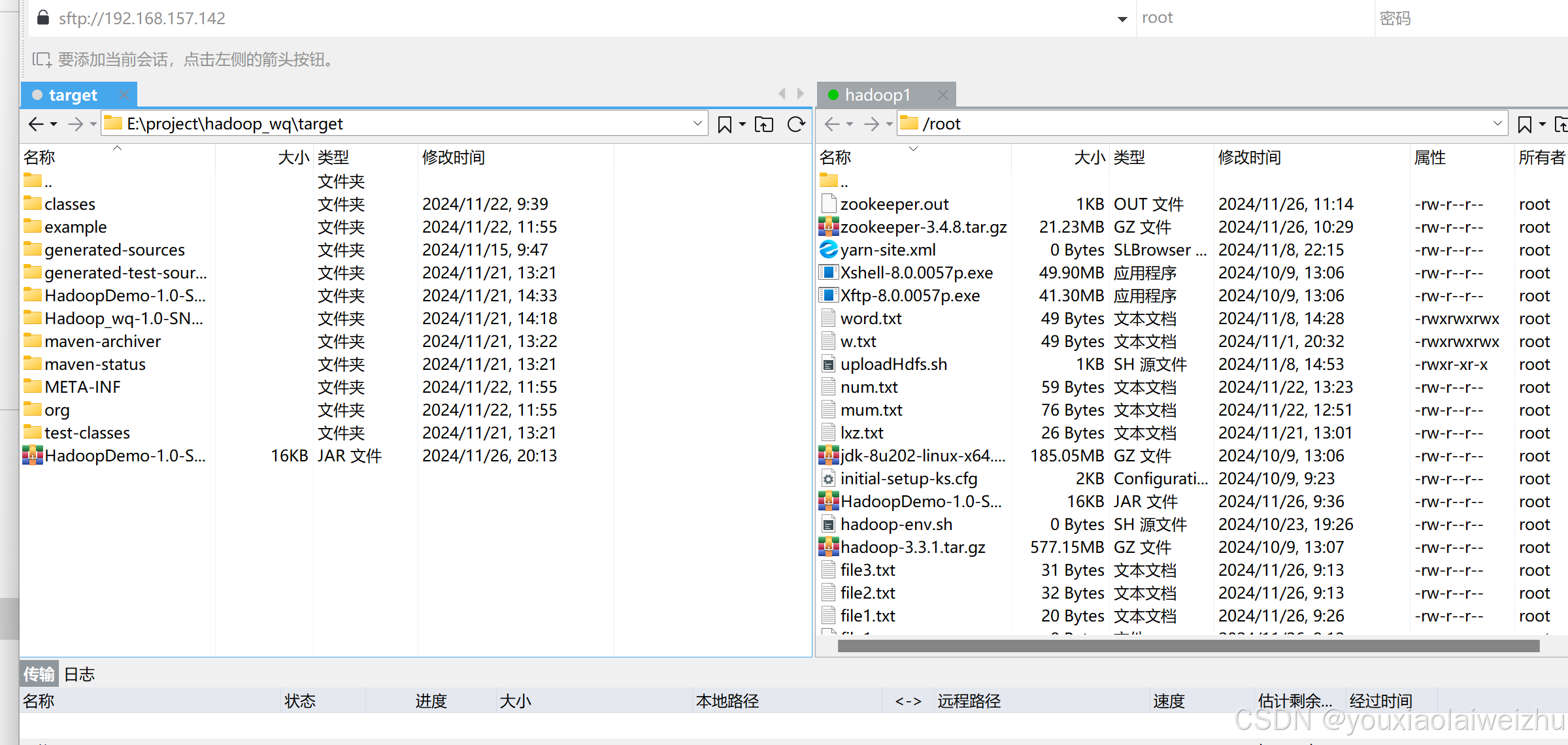

4.上传

5..启动hadoop集群

6.准备一个用于测试hadoop词频统计的文本文档,将这个上传。

vi wordcount_test.txt

hdfs dfs -mkdir -p /wordcount/input

dfs dfs -put wordcount_test.txt /wordcount/input

hadoop jar HadoopDemo-1.0-SNAPSHOT.jar org.mr.WordCountDriver

7.命令查看结果

2722

2722

被折叠的 条评论

为什么被折叠?

被折叠的 条评论

为什么被折叠?