import torch

import numpy as np

from matplotlib import pyplot as plt

from torch.utils.data import DataLoader

from torchvision import transforms

from torchvision import datasets

import torch.nn.functional as F

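"""
Convolution on the MNIST dataset. Similar to examples 10-4 and 11, except that here:
1. accuracy is printed during each training epoch; 2. the model is built with torch.nn.Sequential.
"""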

# Hyperparameters ------------------------------------------------------------------------------------

batch_size = 64

learning_rate = 0.01

momentum = 0.5

EPOCH = 10

# Prepare dataset ------------------------------------------------------------------------------------

transform = transforms.Compose([transforms.ToTensor(), transforms.Normalize((0.1307,), (0.3081,))])

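# Normalize standardizes each pixel as (x - mean) / std; 0.1307 is the mean and 0.3081 the standard deviation of MNIST.
# The note linked by the author (https://blog.youkuaiyun.com/lz_peter/article/details/84574716) covers softmax, the normalized exponential function, which is a separate operation.
train_dataset = datasets.MNIST(root='./data/mnist', train=True, transform=transform)  # add download=True if the data is not available locally
test_dataset = datasets.MNIST(root='./data/mnist', train=False, transform=transform)  # train=True selects the training set, train=False the test set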

train_loader = DataLoader(train_dataset, batch_size=batch_size, shuffle=True)

test_loader = DataLoader(test_dataset, batch_size=batch_size, shuffle=False)

fig = plt.figure()

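for i in range(12):
    plt.subplot(3, 4, i + 1)
    plt.tight_layout()
    # .data and .targets replace the deprecated .train_data and .train_labels attributes
    plt.imshow(train_dataset.data[i], cmap='gray', interpolation='none')
    plt.title("Labels: {}".format(int(train_dataset.targets[i])))
    plt.xticks([])
    plt.yticks([])
plt.show()
# The training set is shuffled; the test set keeps its original order.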

# Design model using class ------------------------------------------------------------------------------
class Net(torch.nn.Module):
    def __init__(self):
        super(Net, self).__init__()
        self.conv1 = torch.nn.Sequential(
            torch.nn.Conv2d(1, 10, kernel_size=5),
            torch.nn.ReLU(),
            torch.nn.MaxPool2d(kernel_size=2),
        )
        self.conv2 = torch.nn.Sequential(
            torch.nn.Conv2d(10, 20, kernel_size=5),
            torch.nn.ReLU(),
            torch.nn.MaxPool2d(kernel_size=2),
        )
        self.fc = torch.nn.Sequential(
            torch.nn.Linear(320, 50),
            torch.nn.Linear(50, 10),
        )

    def forward(self, x):
        batch_size = x.size(0)
        x = self.conv1(x)  # convolution, ReLU activation, max pooling (ReLU and max pooling commute, so their order makes little difference)
        x = self.conv2(x)  # same block again
        x = x.view(batch_size, -1)  # flatten for the fully connected layers: (batch, 20, 4, 4) ==> (batch, 320); the -1 is inferred as 320
        x = self.fc(x)
        return x  # the output has 10 values per sample, one score for each digit 0~9

model = Net()

# Construct loss and optimizer ------------------------------------------------------------------------------
criterion = torch.nn.CrossEntropyLoss()  # cross-entropy loss
optimizer = torch.optim.SGD(model.parameters(), lr=learning_rate, momentum=momentum)  # lr is the learning rate, momentum the momentum factor

# Train and Test CLASS --------------------------------------------------------------------------------------
# Wrap a single training epoch and a single test pass in their own functions

def train(epoch):
    running_loss = 0.0  # reset the loss accumulator at the start of the epoch
    running_total = 0
    running_correct = 0
    for batch_idx, data in enumerate(train_loader, 0):
        inputs, target = data
        optimizer.zero_grad()

        # forward + backward + update
        outputs = model(inputs)
        loss = criterion(outputs, target)
        loss.backward()
        optimizer.step()

        # accumulate the loss so it can be averaged over 300 batches below
        running_loss += loss.item()
        # track the running training accuracy
        _, predicted = torch.max(outputs.data, dim=1)
        running_total += inputs.shape[0]
        running_correct += (predicted == target).sum().item()

        if batch_idx % 300 == 299:  # printing every batch wastes time, so report the average loss and accuracy every 300 batches
            print('[%d, %5d]: loss: %.3f , acc: %.2f %%'
                  % (epoch + 1, batch_idx + 1, running_loss / 300, 100 * running_correct / running_total))
            running_loss = 0.0  # reset the loss for the next 300 batches
            running_total = 0
            running_correct = 0  # reset the accuracy counters for the next 300 batches

    # save the model and optimizer state at the end of each epoch
    torch.save(model.state_dict(), './model_Mnist.pth')
    torch.save(optimizer.state_dict(), './optimizer_Mnist.pth')

def test():
    correct = 0
    total = 0
    with torch.no_grad():  # no gradients are needed on the test set
        for data in test_loader:
            images, labels = data
            outputs = model(images)
            # dim=1 runs across the 10 class scores of each sample; torch.max returns (1) the maximum value and (2) its index
            _, predicted = torch.max(outputs.data, dim=1)
            total += labels.size(0)
            correct += (predicted == labels).sum().item()  # element-wise comparison between tensors
    acc = correct / total
    # test accuracy = correct predictions / total samples; epoch is read from the module-level training loop
    print('[%d / %d]: Accuracy on test set: %.1f %% ' % (epoch + 1, EPOCH, 100 * acc))
    return acc

# Start train and Test --------------------------------------------------------------------------------------
if __name__ == '__main__':
    acc_list_test = []
    for epoch in range(EPOCH):
        train(epoch)
        # if epoch % 10 == 9:  # test once every 10 training epochs
        acc_test = test()
        acc_list_test.append(acc_test)

    plt.plot(acc_list_test)
    plt.xlabel('Epoch')
    plt.ylabel('Accuracy On TestSet')
    plt.show()
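
The `Linear(320, 50)` input width in `Net` follows from the layer shapes: a 5x5 convolution without padding shrinks each side by 4, and a 2x2 max pool halves it. Below is a minimal sketch of that arithmetic in plain Python, so it can be checked without PyTorch. The helper names `conv_out` and `pool_out` are made up for illustration and are not part of the original script.

```python
def conv_out(size, kernel_size, stride=1, padding=0):
    """Output side length of a convolution (no dilation)."""
    return (size + 2 * padding - kernel_size) // stride + 1


def pool_out(size, kernel_size):
    """Output side length of max pooling whose stride equals its kernel size."""
    return size // kernel_size


side = 28                                # MNIST images are 28 x 28
side = pool_out(conv_out(side, 5), 2)    # conv1 block: 28 -> 24 -> 12
side = pool_out(conv_out(side, 5), 2)    # conv2 block: 12 -> 8 -> 4
flat_features = 20 * side * side         # 20 output channels from the second Conv2d
print(side, flat_features)               # prints: 4 320
```

If the kernel size or the input resolution changes, the same two helpers give the new value to use in the first `Linear` layer.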

# Append the following to the end of the original training script (make sure the Net class is defined)

import torch

import onnx

import onnxruntime as ort

# The model definition must match the one used for training exactly (the Net class from the original file is reused here)
class Net(torch.nn.Module):
    def __init__(self):
        super(Net, self).__init__()
        self.conv1 = torch.nn.Sequential(
            torch.nn.Conv2d(1, 10, kernel_size=5),
            torch.nn.ReLU(),
            torch.nn.MaxPool2d(kernel_size=2),
        )
        self.conv2 = torch.nn.Sequential(
            torch.nn.Conv2d(10, 20, kernel_size=5),
            torch.nn.ReLU(),
            torch.nn.MaxPool2d(kernel_size=2),
        )
        self.fc = torch.nn.Sequential(
            torch.nn.Linear(320, 50),
            torch.nn.Linear(50, 10),
        )

    def forward(self, x):
        batch_size = x.size(0)
        x = self.conv1(x)
        x = self.conv2(x)
        x = x.view(batch_size, -1)
        x = self.fc(x)
        return x

# Export configuration ------------------------------------------------------------------
PTH_PATH = './model_Mnist.pth'
ONNX_PATH = './model_Mnist.onnx'

# Initialize the model and load the trained weights
model = Net()
model.load_state_dict(torch.load(PTH_PATH))
model.eval()

# Create dummy data matching the MNIST input shape (1, 1, 28, 28)
dummy_input = torch.randn(1, 1, 28, 28)

# Run the export
torch.onnx.export(
    model,
    dummy_input,
    ONNX_PATH,
    input_names=["input"],
    output_names=["output"],
    opset_version=13,
    dynamic_axes={
        'input': {0: 'batch_size'},
        'output': {0: 'batch_size'}
    }
)

# Validate the exported model -------------------------------------------------------------
def validate_onnx():
    # Load the ONNX model and check that it is well formed
    onnx_model = onnx.load(ONNX_PATH)
    onnx.checker.check_model(onnx_model)

    # Compare the outputs of the original model and the ONNX model
    with torch.no_grad():
        torch_out = model(dummy_input)
    ort_session = ort.InferenceSession(ONNX_PATH)
    onnx_out = ort_session.run(
        None,
        {'input': dummy_input.numpy()}
    )[0]

    # Print both outputs and their largest difference
    print(f"PyTorch output: {torch_out.numpy()}")
    print(f"ONNX output: {onnx_out}")
    print(f"Max difference: {np.max(np.abs(torch_out.numpy() - onnx_out))}")

validate_onnx()
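
The printed maximum difference is easier to act on when it is compared against a tolerance. Here is a small sketch in plain Python. The function names and the `1e-5` threshold are assumptions chosen for illustration, not values from the original post.

```python
def max_abs_diff(a, b):
    """Largest element-wise absolute difference between two flat sequences."""
    if len(a) != len(b) or len(a) == 0:
        raise ValueError('inputs must be non-empty and of equal length')
    return max(abs(x - y) for x, y in zip(a, b))


def outputs_match(a, b, atol=1e-5):
    """True when every pair of values differs by at most atol (assumed threshold)."""
    return max_abs_diff(a, b) <= atol


# Made-up logits standing in for the PyTorch and ONNX Runtime outputs
torch_logits = [0.12, -1.5, 3.25]
onnx_logits = [0.120001, -1.500002, 3.249999]
print(outputs_match(torch_logits, onnx_logits))  # prints: True
```

With the arrays from `validate_onnx`, the inputs could be `torch_out.numpy().ravel()` and `onnx_out.ravel()`.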

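This is my handwritten digit recognition code, which produces an ONNX file. I now want to convert the ONNX model to an RKNN model on Ubuntu. Please generate RKNN conversion code adapted to my source, based on the structure and content of the code below. Note that the code below targets rknn-toolkit2 2.3.0, while my Ubuntu machine has version 1.6.0 installed, so the generated code has to be correct for that version.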

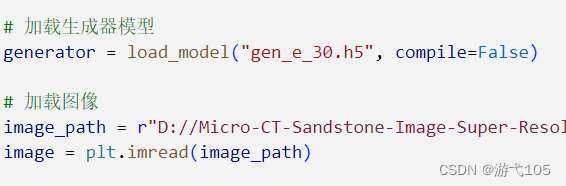

if __name__ == '__main__':
    # Create RKNN object
    rknn = RKNN(verbose=True)

    if not os.path.exists(ONNX_MODEL):
        print('ONNX_MODEL does not exist')
        exit(-1)

    # pre-process config
    print('--> config model')
    rknn.config(mean_values=None, std_values=None,
                target_platform='rk3588')
    print('done')

    # Load model
    print('--> Loading model')
    ret = rknn.load_onnx(model=ONNX_MODEL)
    if ret != 0:
        print('Load model failed!')
        exit(ret)
    print('done')

    # Build model
    print('--> Building model')
    ret = rknn.build(do_quantization=False)  # No quantization
    if ret != 0:
        print('Build model failed!')
        exit(ret)
    print('done')

    # Export rknn model
    print('--> Export rknn model')
    ret = rknn.export_rknn(RKNN_MODEL)
    if ret != 0:
        print('Export rknn model failed!')
        exit(ret)
    print('done')

    # Init runtime environment
    print('--> Init runtime environment')
    ret = rknn.init_runtime()  # target = 'rk3588'
    if ret != 0:
        print('Init runtime environment failed!')
        exit(ret)
    print('done')

    # Inference
    print('--> Running model')
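
One possible shape for the requested conversion script is sketched below. It stays with the calls that already appear in the reference snippet (`config`, `load_onnx`, `build`, `export_rknn`) plus `release`. This is a sketch under assumptions, not a verified 1.6.0 recipe: it assumes rknn-toolkit2 1.6.0 accepts these calls with the same arguments as the reference, that the target is `rk3588` as in the reference, and that the ONNX file is the one written to `./model_Mnist.onnx` by the export script. The names `convert_to_rknn`, `check`, `normalize_pixel`, and the output path `./model_Mnist.rknn` are hypothetical.

```python
import os

ONNX_MODEL = './model_Mnist.onnx'        # written by the export script above
RKNN_MODEL = './model_Mnist.rknn'        # hypothetical output path
MNIST_MEAN, MNIST_STD = 0.1307, 0.3081   # same constants as transforms.Normalize in training


def check(ret, step):
    """Raise if an RKNN call returned a non-zero status code."""
    if ret != 0:
        raise RuntimeError('%s failed with code %d' % (step, ret))


def normalize_pixel(value):
    """Map a raw 0-255 pixel to the value range the network was trained on.

    config() below leaves mean_values/std_values as None, so the caller
    has to apply the training normalization before inference.
    """
    return (value / 255.0 - MNIST_MEAN) / MNIST_STD


def convert_to_rknn(onnx_path=ONNX_MODEL, rknn_path=RKNN_MODEL, target='rk3588'):
    """Convert the ONNX model to an RKNN model without quantization."""
    # Imported lazily so the helpers above work without rknn-toolkit2 installed.
    from rknn.api import RKNN

    if not os.path.exists(onnx_path):
        raise FileNotFoundError(onnx_path)

    rknn = RKNN(verbose=True)
    try:
        # Assumption: 1.6.0 accepts the same arguments as the 2.3.0 reference.
        rknn.config(mean_values=None, std_values=None, target_platform=target)
        check(rknn.load_onnx(model=onnx_path), 'load_onnx')
        check(rknn.build(do_quantization=False), 'build')
        check(rknn.export_rknn(rknn_path), 'export_rknn')
    finally:
        rknn.release()
    return rknn_path
```

Calling `convert_to_rknn()` on the Ubuntu machine would then write the `.rknn` file. One caveat worth checking: the ONNX file was exported with a dynamic `batch_size` axis, and if the loader in 1.6.0 rejects it, re-running `torch.onnx.export` without the `dynamic_axes` argument produces a fixed `(1, 1, 28, 28)` input.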
