Bilibili uploader: 刘二大人, 《PyTorch深度学习实践》 (PyTorch Deep Learning Practice)

Tutorial: https://www.bilibili.com/video/BV1Y7411d7Ys?p=6&vd_source=715b347a0d6cb8aa3822e5a102f366fe

Five-layer model: torch.nn.Linear

Activation function: ReLU on the hidden layers (no sigmoid is applied; the output layer returns raw logits, which nn.CrossEntropyLoss passes through log-softmax internally)

Cross-entropy loss function: nn.CrossEntropyLoss

Optimizer: optim.SGD, lr=0.01, momentum=0.5

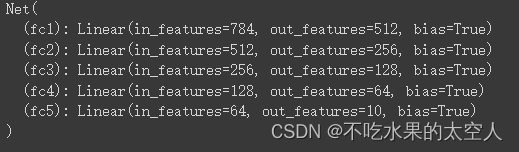

Network structure:

Source code:

import torch
from torchvision import transforms
from torchvision import datasets
from torch.utils.data import DataLoader
import torch.nn.functional as F
import torch.optim as optim
import numpy as np
import matplotlib.pyplot as plt

batch_size = 64

# Convert images to tensors in [0, 1], then standardize with the MNIST mean and std.
transform = transforms.Compose([
    transforms.ToTensor(),
    transforms.Normalize((0.1307, ), (0.3081, ))
])

train_dataset = datasets.MNIST(root='../dataset/mnist/',
                               train=True,
                               download=True,
                               transform=transform)
train_loader = DataLoader(train_dataset,
                          shuffle=True,
                          batch_size=batch_size)

test_dataset = datasets.MNIST(root='../dataset/mnist',
                              train=False,
                              download=True,
                              transform=transform)
test_loader = DataLoader(test_dataset,
                         shuffle=False,
                         batch_size=batch_size)
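As a quick check on the preprocessing, the sketch below reproduces the arithmetic of Normalize for a single pixel: after ToTensor has scaled the pixel into [0, 1], Normalize subtracts the mean and divides by the standard deviation. The `normalize` helper is a hypothetical stand-in written for illustration; it is not a torchvision function.

```python
MEAN, STD = 0.1307, 0.3081  # the values passed to transforms.Normalize above

def normalize(pixel):
    """Hypothetical helper mirroring Normalize for one pixel value in [0, 1]."""
    return (pixel - MEAN) / STD

black = normalize(0.0)  # background pixels
white = normalize(1.0)  # brightest stroke pixels
print(f"black -> {black:.4f}, white -> {white:.4f}")
```

A pixel equal to the mean maps to 0, so the network sees inputs roughly centered around zero rather than values confined to [0, 1].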

class Net(torch.nn.Module):
    def __init__(self):
        super(Net, self).__init__()
        self.fc1 = torch.nn.Linear(784, 512)
        self.fc2 = torch.nn.Linear(512, 256)
        self.fc3 = torch.nn.Linear(256, 128)
        self.fc4 = torch.nn.Linear(128, 64)
        self.fc5 = torch.nn.Linear(64, 10)

    def forward(self, x):
        x = x.reshape(-1, 784)  # flatten each 1x28x28 image into a 784-vector
        x = F.relu(self.fc1(x))
        x = F.relu(self.fc2(x))
        x = F.relu(self.fc3(x))
        x = F.relu(self.fc4(x))
        x = self.fc5(x)  # no activation here: CrossEntropyLoss expects raw logits
        return x
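For a sense of the model's size, the following sketch counts the trainable parameters implied by the layer widths in Net, assuming each Linear layer keeps its default bias term. The `count_parameters` helper is hypothetical and works on plain integers, so it runs without PyTorch.

```python
layer_sizes = [784, 512, 256, 128, 64, 10]  # widths taken from Net above

def count_parameters(sizes):
    """Hypothetical helper: weights (in * out) plus biases (out) per Linear layer."""
    return [n_in * n_out + n_out for n_in, n_out in zip(sizes, sizes[1:])]

per_layer = count_parameters(layer_sizes)
total = sum(per_layer)
print(per_layer, total)
```

The first layer dominates: mapping the 784 input pixels (28 x 28) to 512 units accounts for about 70% of all parameters.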

model = Net()
print(model, '\n')

criterion = torch.nn.CrossEntropyLoss()
optimizer = optim.SGD(model.parameters(), lr=0.01, momentum=0.5)

loss_val = []
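The effect of momentum=0.5 can be sketched without PyTorch. The update below follows the form PyTorch documents for SGD with momentum (velocity = momentum * velocity + grad, then parameter -= lr * velocity), applied to the toy objective f(w) = w². The function name `sgd_momentum_step` and the toy objective are assumptions chosen for illustration.

```python
def sgd_momentum_step(w, velocity, grad, lr=0.01, momentum=0.5):
    """Hypothetical helper: one SGD-with-momentum update on a scalar parameter."""
    velocity = momentum * velocity + grad  # accumulate a decaying sum of gradients
    w = w - lr * velocity                  # step along the accumulated direction
    return w, velocity

# Minimize f(w) = w**2, whose gradient is 2 * w.
w, velocity = 1.0, 0.0
history = [w]
for _ in range(200):
    w, velocity = sgd_momentum_step(w, velocity, grad=2 * w)
    history.append(w)
print(history[1], history[2], history[-1])
```

When gradients keep pointing the same way, the velocity approaches grad / (1 - momentum), so momentum=0.5 behaves roughly like doubling the step size along consistent directions.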

def train(epoch):

running_loss = 0.0

for i, data in enumerate(train_loader, 0):

inputs, target = data

optimizer.zero_grad()

outputs = model(inputs)

# print('inputs = ', inputs.shape)

# print('outputs = ', outputs.shape)

# print('target = ', target.shape)

loss = criterion(outputs, target)

loss.backward()

optimizer.step()

running_loss += loss.item()

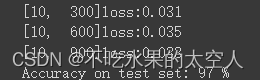

if i%300 == 299:

print('[%d,%5d]loss:%.3f'%(epoch+1,i+1,running_loss/300))

loss_val.append(running_loss)

running_loss = 0.0
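The logging condition `i % 300 == 299` determines how many points end up in loss_val. The arithmetic below is a sketch that assumes the standard 60,000-image MNIST training split and DataLoader's default drop_last=False; the variable names are chosen here for illustration.

```python
import math

train_samples = 60000  # assumed size of the MNIST training split
batch_size = 64        # as set in the post
log_every = 300        # from the `i % 300 == 299` check in train()
epochs = 10            # from the training loop in the post

batches_per_epoch = math.ceil(train_samples / batch_size)
logs_per_epoch = batches_per_epoch // log_every
unlogged_batches = batches_per_epoch % log_every
points_in_loss_val = logs_per_epoch * epochs
print(batches_per_epoch, logs_per_epoch, unlogged_batches, points_in_loss_val)
```

Under these assumptions each point on the loss curve covers 300 batches, and the losses of the last few batches in every epoch are never recorded because running_loss is reset when the next call to train() begins.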

def test():
    correct = 0
    total = 0
    with torch.no_grad():  # no gradients are needed for evaluation
        for data in test_loader:
            images, labels = data
            outputs = model(images)
            _, predicted = torch.max(outputs, dim=1)  # index of the largest output per sample, i.e. the predicted class
            total += labels.size(0)  # add this batch's size; the sum is the total number of test samples
            correct += (predicted == labels).sum().item()
    print('Accuracy on test set: %d %%' % (100 * correct / total))

for epoch in range(10):

train(epoch)

test()

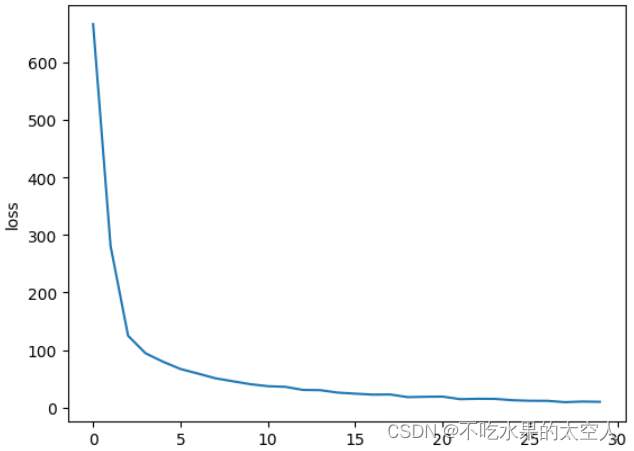

plt.plot(np.squeeze(loss_val))

plt.ylabel('loss')

plt.xlabel('Iteration')

plt.show()

Training process (last epoch):

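This tutorial shows how to practice deep learning with PyTorch by training a five-layer fully connected model on the MNIST dataset. The model uses linear layers with ReLU activations, nn.CrossEntropyLoss as the loss function, and an SGD optimizer for the parameter updates. The post shows the training process and how the loss changes during training.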
