第一步: 数据载入

import tensorflow as tf

#from tensorflow.contrib import rnn

from tensorflow.examples.tutorials.mnist import input_data

import numpy as np

import matplotlib.pyplot as plt

print ("Packages imported")

mnist = input_data.read_data_sets("data/", one_hot=True)

trainimgs, trainlabels, testimgs, testlabels \

= mnist.train.images, mnist.train.labels, mnist.test.images, mnist.test.labels

ntrain, ntest, dim, nclasses \

= trainimgs.shape[0], testimgs.shape[0], trainimgs.shape[1], trainlabels.shape[1]

print ("MNIST loaded")

第二步: 初始化参数

diminput = 28 #每个输入都是28个像素点

dimhidden = 128 #隐层128个神经元

dimoutput = nclasses #想要分为多少类别(10)

nsteps = 28 #有28个1x28的小步

weights = {

'hidden': tf.Variable(tf.random_normal([diminput, dimhidden])),

'out': tf.Variable(tf.random_normal([dimhidden, dimoutput]))

}

biases = {

'hidden': tf.Variable(tf.random_normal([dimhidden])),

'out': tf.Variable(tf.random_normal([dimoutput]))

}

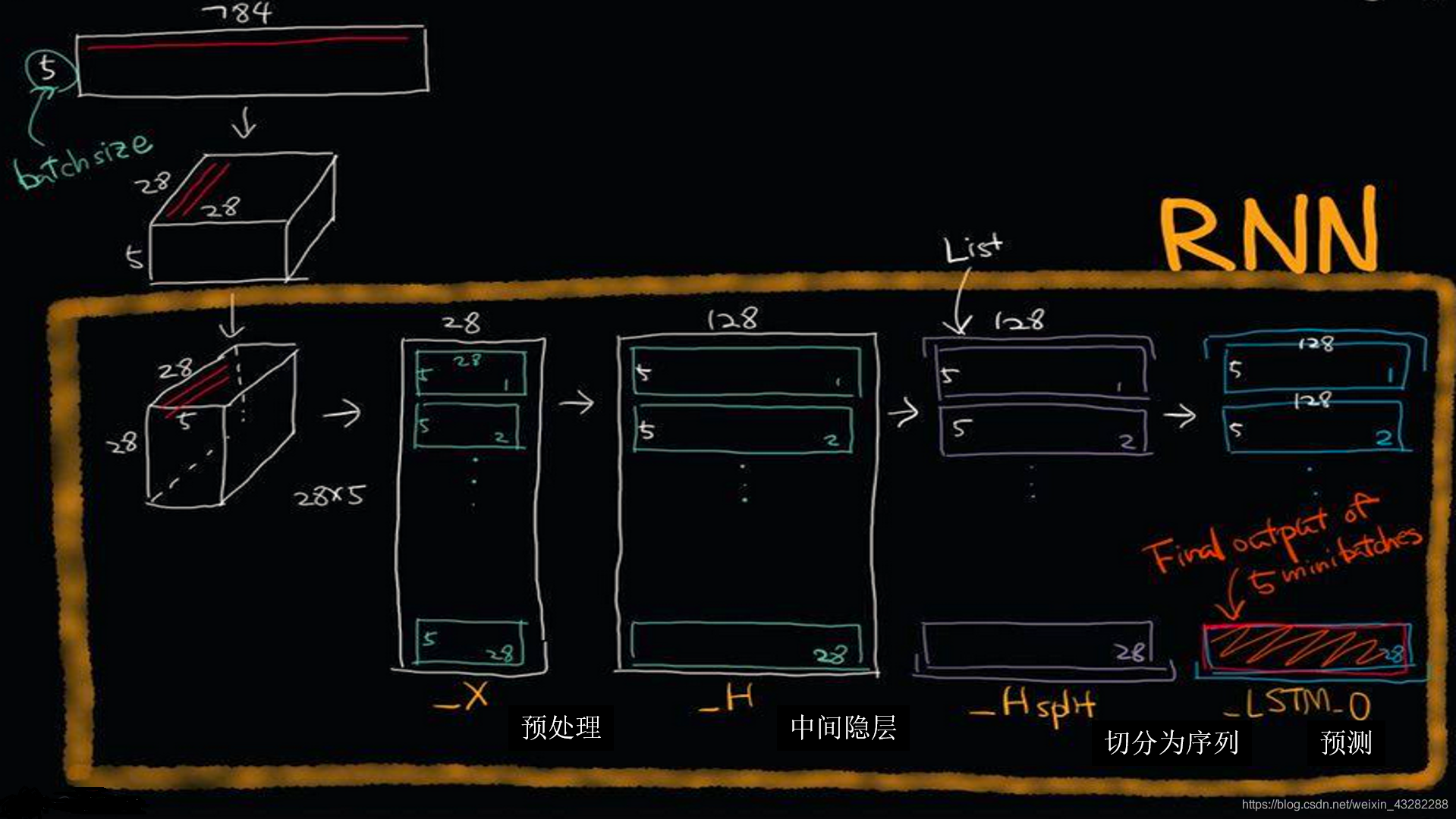

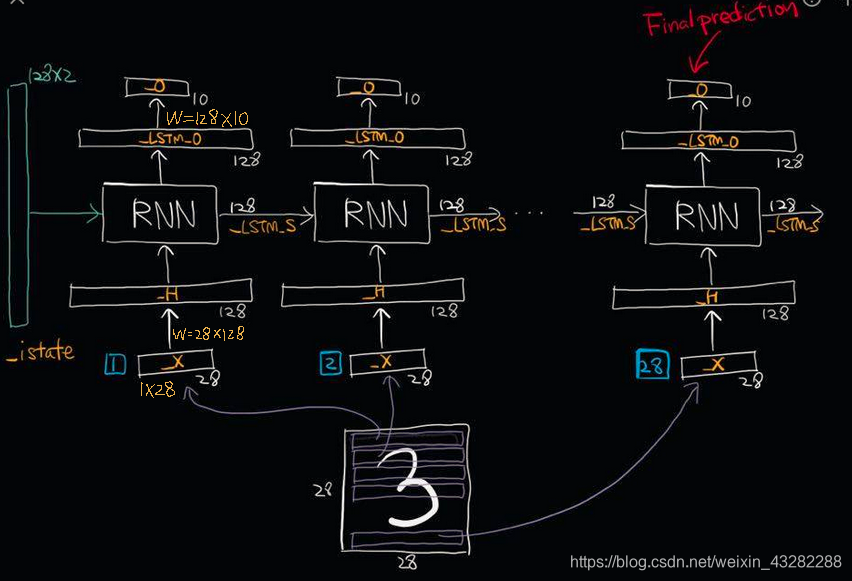

第三步: 构建RNN函数

def _RNN(_X, _W, _b, _nsteps, _name):

#1.转换输入,输入_X是还有batchSize=5的5张28*28图片,需要将输入从

# [batchSize,nsteps,diminput]==>[nsteps,batchSize,diminput]

_X = tf.transpose(_X, [1, 0, 2])

#2.reshape _X为[nsteps*batchSize,diminput]

_X = tf.reshape(_X, [-1, diminput])

#3.input layer -> hidden layer

_H = tf.matmul(_X, _W['hidden']) + _b['hidden']

#4.将数据切分为‘nsteps’个切片,第i个切片为第i个batch data

# tensoflow >0.12

_Hsplit = tf.split(_H, _nsteps, 0)

# tensoflow <0.12 _Hsplit = tf.split(0,_nsteps,_H)

# 5.计算LSTM final output(_LSTM_O) 和 state(_LSTM_S)

# _LSTM_O和_LSTM_S都有‘batchSize’个元素

# _LSTM_O用于预测输出

# 指定命名域

# with tf.variable_scope(tf.get_variable_scope(),reuse=True):先False 再True

with tf.variable_scope(_name) as scope:

#变量共享(运行时先注释再取消注释)

scope.reuse_variables()

# lstm_cell = tf.nn.rnn_cell.BasicLSTMCell(dimhidden,forget_bias = 1.0)# forget_bias = 1.0不忘记数据

# _LSTM_O,_SLTM_S = tf.nn.rnn(lstm_cell,_Hsplit,dtype=tf.float32)

lstm_cell = tf.contrib.rnn.BasicLSTMCell(dimhidden)

_LSTM_O, _LSTM_S = tf.contrib.rnn.static_rnn(lstm_cell, _Hsplit, dtype=tf.float32)

# 6.输出,需要最后一个RNN单元作为预测输出所以取_LSTM_O[-1]

_O = tf.matmul(_LSTM_O[-1], _W['out']) + _b['out']

# Return!

return {

'X': _X, 'H': _H, 'Hsplit': _Hsplit,

'LSTM_O': _LSTM_O, 'LSTM_S': _LSTM_S, 'O': _O

}

print ("Network ready")

第四步: 构建cost函数和准确度函数

learning_rate = 0.001

x = tf.placeholder("float", [None, nsteps, diminput])

y = tf.placeholder("float", [None, dimoutput])

myrnn = _RNN(x, weights, biases, nsteps, 'basic')

pred = myrnn['O']

#cost = tf.reduce_mean(tf.nn.softmax_cross_entropy_with_logits(pred,y))

cost = tf.reduce_mean(tf.nn.softmax_cross_entropy_with_logits(labels=y, logits=pred))

optm = tf.train.GradientDescentOptimizer(learning_rate).minimize(cost) # Adam Optimizer

accr = tf.reduce_mean(tf.cast(tf.equal(tf.argmax(pred,1), tf.argmax(y,1)), tf.float32))

init = tf.global_variables_initializer()

print ("Network Ready!")

第五步: 训练模型, 降低cost值,优化参数

training_epochs = 5

batch_size = 16

display_step = 1

sess = tf.Session()

sess.run(init)

print ("Start optimization")

for epoch in range(training_epochs):

avg_cost = 0.

#total_batch = int(mnist.train.num_examples/batch_size)

total_batch = 100

# Loop over all batches

for i in range(total_batch):

batch_xs, batch_ys = mnist.train.next_batch(batch_size)

batch_xs = batch_xs.reshape((batch_size, nsteps, diminput))

# Fit training using batch data

feeds = {x: batch_xs, y: batch_ys}

sess.run(optm, feed_dict=feeds)

# Compute average loss

avg_cost += sess.run(cost, feed_dict=feeds)/total_batch

# Display logs per epoch step

if epoch % display_step == 0:

print ("Epoch: %03d/%03d cost: %.9f" % (epoch, training_epochs, avg_cost))

feeds = {x: batch_xs, y: batch_ys}

train_acc = sess.run(accr, feed_dict=feeds)

print (" Training accuracy: %.3f" % (train_acc))

testimgs = testimgs.reshape((ntest, nsteps, diminput))

#feeds = {x: testimgs, y: testlabels, istate: np.zeros((ntest, 2*dimhidden))}

feeds = {x: testimgs, y: testlabels}

test_acc = sess.run(accr, feed_dict=feeds)

print (" Test accuracy: %.3f" % (test_acc))

print ("Optimization Finished.")

本文介绍了一个使用RNN在MNIST数据集上进行手写数字识别的深度学习项目。通过详细步骤展示了如何加载数据、初始化参数、构建RNN网络、定义损失函数和优化过程,最终实现模型训练并评估其性能。

本文介绍了一个使用RNN在MNIST数据集上进行手写数字识别的深度学习项目。通过详细步骤展示了如何加载数据、初始化参数、构建RNN网络、定义损失函数和优化过程,最终实现模型训练并评估其性能。

1246

1246

被折叠的 条评论

为什么被折叠?

被折叠的 条评论

为什么被折叠?