Ex1_ML by Andrew

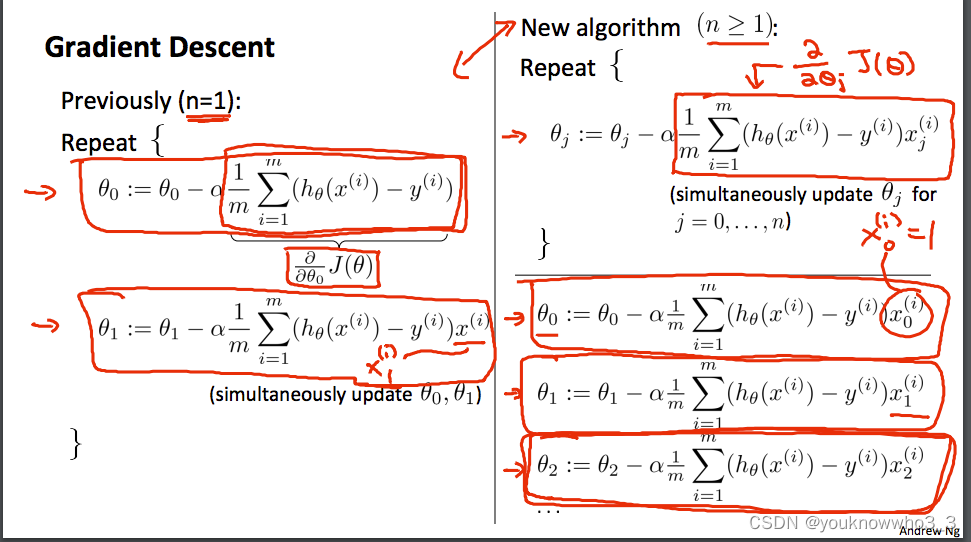

Gradient Descent

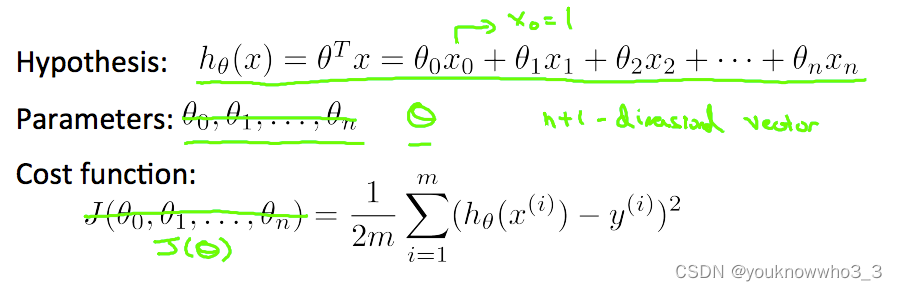

So, J(theta) = (1/(2*m)) * sum((h - y).^2), where h is the vector of predictions and the sum runs over all m training examples.

hθ(x) = X * theta, which has size m × 1.
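For reference, the same cost can be written in standard notation. This is only a restatement of the formula above, assuming x^(i) and y^(i) denote the i-th training example and its target; the matrix form on the right follows because the sum of squared entries of a vector v equals v'v.

```latex
J(\theta)
  = \frac{1}{2m} \sum_{i=1}^{m} \left( h_\theta(x^{(i)}) - y^{(i)} \right)^2
  = \frac{1}{2m} (X\theta - y)^{\top} (X\theta - y)
```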

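```octave
function J = computeCost(X, y, theta)
% COMPUTECOST Compute the cost for linear regression

m = length(y); % number of training examples
h = X * theta; % predictions, size m x 1

% Accumulate the squared errors one example at a time
temp = 0;
for i = 1:m
  temp = temp + (h(i) - y(i))^2;
end

J = (1/(2*m)) * temp;

% Equivalent vectorized form:
% J = (1/(2*m)) * sum((X*theta - y).^2);
% The dot in .^ applies the power to each element separately.

end
```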
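As a quick sanity check, the loop and the vectorized expression can be compared on a tiny data set. The sketch below uses made-up values for X, y, and theta (they are not from the exercise data) and inlines the loop instead of calling computeCost, so it runs on its own.

```octave
% Toy data (assumed for illustration): 3 examples, intercept column plus one feature
X = [1 1; 1 2; 1 3];
y = [1; 2; 3];
theta = [0; 0];
m = length(y);

% Loop version, same logic as computeCost
h = X * theta;
J_loop = 0;
for i = 1:m
  J_loop = J_loop + (h(i) - y(i))^2;
end
J_loop = J_loop / (2*m);

% Vectorized version: square each residual element-wise, then sum
J_vec = sum((X*theta - y).^2) / (2*m);

% Matrix version: residual' * residual gives the same sum of squares
r = X*theta - y;
J_mat = (r' * r) / (2*m);

fprintf('%f %f %f\n', J_loop, J_vec, J_mat);  % prints 2.333333 three times
```

With theta at zero the residuals are -1, -2, and -3, so the sum of squares is 14 and J = 14/6 in all three versions.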

Computing theta

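A matrix is indexed by row first, then column: rows run horizontally and columns run vertically.

xj(i) denotes the i-th entry of column j, that is, the value of feature j for training example i.

In this case X has 95 rows and 2 columns.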
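The row-then-column convention can be tried out directly in Octave. This is a minimal sketch with a small made-up matrix (4 rows instead of the real data), just to show how size and indexing behave.

```octave
% Toy design matrix (assumed values): 4 training examples, 2 columns
X = [1 6.1; 1 5.5; 1 8.5; 1 7.0];

[m, n] = size(X);   % m = number of rows (examples), n = number of columns

x32  = X(3, 2);     % row 3, column 2: feature 2 of training example 3
row3 = X(3, :);     % the whole 3rd row (one training example)
col2 = X(:, 2);     % the whole 2nd column (one feature across all examples)

fprintf('%d rows, %d columns, X(3,2) = %.1f\n', m, n, x32);
```

The first index always selects the row and the second selects the column, so X(3, 2) and X(2, 3) refer to different positions (and the latter does not exist here).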

```octave
function [theta, J_history] = gradientDescent(X, y, theta, alpha, num_iters)
%GRADIENTDESCENT Performs gradient descent to learn theta
%   theta = GRADIENTDESCENT(X, y, theta, alpha, num_iters) updates theta by
%   taking num_iters gradient steps with learning rate alpha

% Initialize some useful values
m = length(y); % number of training examples
J_history = zeros(num_iters, 1);

for iter = 1:num_iters
  % Update all parameters at once; X' is the transpose of X.
  % The trailing semicolon keeps theta from being printed on every iteration.
  theta = theta - (alpha/m) * X' * (X*theta - y);

  % Save the cost J in every iteration
  J_history(iter) = computeCost(X, y, theta);
end

end
```
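To see the update rule at work, it helps to run it on data where the answer is known. The sketch below is an illustration only: the data, alpha, and num_iters are assumed values chosen so that y = 1 + 2x exactly, and the loop is inlined so the snippet does not depend on the two functions above. Each step subtracts alpha times the gradient of J, which in matrix form is (1/m) * X' * (X*theta - y).

```octave
% Toy data (assumed): y = 1 + 2*x exactly, so theta should approach [1; 2]
X = [1 1; 1 2; 1 3; 1 4];   % intercept column plus one feature
y = [3; 5; 7; 9];
theta = zeros(2, 1);

alpha = 0.1;        % learning rate (assumed; small enough to converge on this data)
num_iters = 2000;   % number of gradient steps (assumed)
m = length(y);
J_history = zeros(num_iters, 1);

for iter = 1:num_iters
  % Same vectorized update as in gradientDescent
  theta = theta - (alpha/m) * X' * (X*theta - y);
  % Same cost as computeCost, written in vectorized form
  J_history(iter) = sum((X*theta - y).^2) / (2*m);
end

disp(theta);   % both entries should be very close to 1 and 2
```

Plotting J_history against the iteration number is a simple way to check the learning rate: the curve should fall steadily. If it grows instead, alpha is too large for the data.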
