Version matching

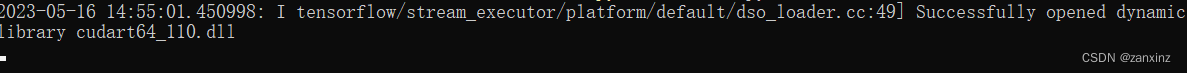

Error: Could not load dynamic library 'cupti64_110.dll'; dlerror: cupti64_110.dll not found

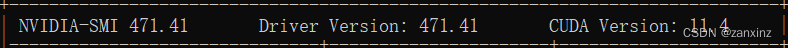

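This happens because the CUDA version on my machine used to be 10 and is now 11.4, so the matching version of cudatoolkit has to be installed.

Fix: in the corresponding Anaconda environment, run the following conda command: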

conda install cudatoolkit=11.0

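My environment is named tf.

To switch between conda environments, use conda activate tf or conda activate base. With the toolkit installed in the active environment, the log shows the library loading successfully.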
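One way to confirm that the library is now visible is to look for it in the directories listed on PATH. The sketch below uses only the standard library. The helper name `find_on_path` is made up for this post, and it only inspects PATH, so it may miss other locations the loader also searches.

```python
import os
from typing import Optional


def find_on_path(filename: str, search_path: Optional[str] = None) -> Optional[str]:
    """Return the full path of the first PATH entry that contains filename, else None."""
    if search_path is None:
        search_path = os.environ.get("PATH", "")
    for directory in search_path.split(os.pathsep):
        if not directory:
            continue  # skip empty entries such as a trailing separator
        candidate = os.path.join(directory.strip('"'), filename)
        if os.path.isfile(candidate):
            return candidate
    return None


if __name__ == "__main__":
    # DLL names taken from the error messages in this post.
    for dll in ("cupti64_110.dll", "cudnn64_8.dll"):
        print(dll, "->", find_on_path(dll) or "not found on PATH")
```

Run it inside the activated environment (tf in my case), since activating an environment changes PATH.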

Downgrading CUDA

My machine originally had CUDA 11.4, which does not match tensorflow 2.4.0, so it has to be downgraded to 11.0.
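The mismatch can be written down as a tiny lookup. This is only a sketch: the single table entry comes from this post (tensorflow 2.4.0 with CUDA 11.0), the names `REQUIRED_CUDA`, `major_minor` and `cuda_matches` are hypothetical, and entries for other releases would have to be taken from TensorFlow's tested build configurations.

```python
# Required CUDA version per TensorFlow release.
# Only the pair discussed in this post is listed; extend it from the official table.
REQUIRED_CUDA = {"2.4.0": "11.0"}


def major_minor(version: str) -> tuple:
    """Reduce a version string such as '11.4.2' to (11, 4)."""
    parts = version.split(".")
    major = int(parts[0])
    minor = int(parts[1]) if len(parts) > 1 else 0
    return major, minor


def cuda_matches(tf_version: str, installed_cuda: str) -> bool:
    """True if the installed CUDA major.minor equals the one TensorFlow expects."""
    required = REQUIRED_CUDA[tf_version]  # KeyError for releases not in the table
    return major_minor(required) == major_minor(installed_cuda)


if __name__ == "__main__":
    print(cuda_matches("2.4.0", "11.4"))  # my original setup
    print(cuda_matches("2.4.0", "11.0"))  # after the downgrade
```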

NVIDIA CUDA Toolkit 11.0 Downloads

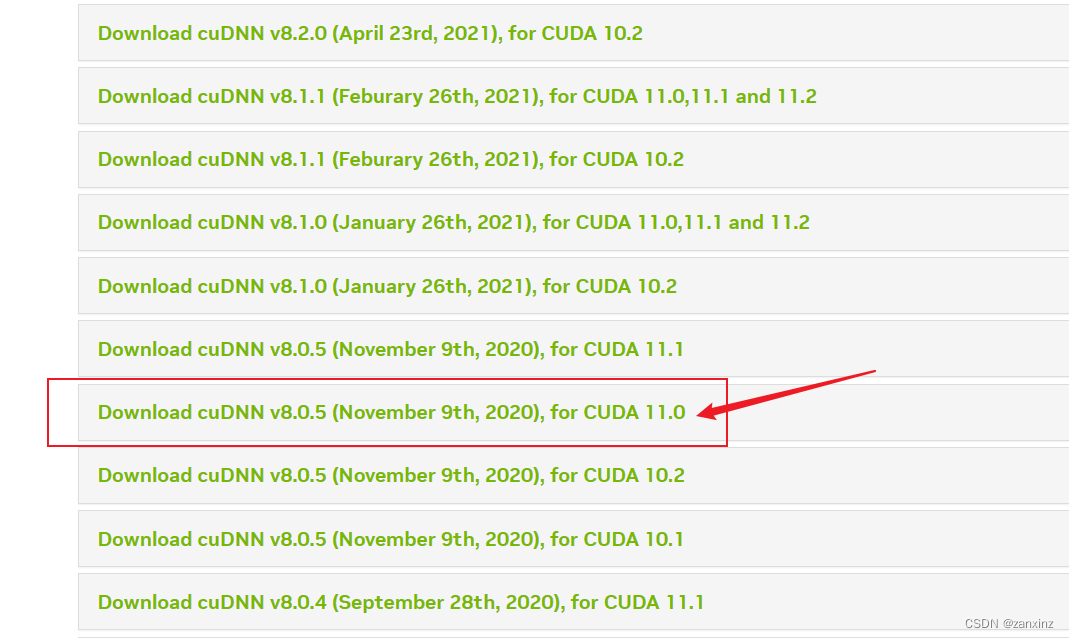

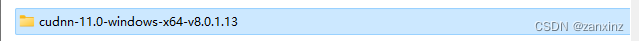

Error: cudnn64_8.dll not found

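Copy the files from the cuDNN bin directory into the corresponding …\NVIDIA GPU Computing Toolkit\CUDA\v11.0\bin folder.

Download the cuDNN build that matches your CUDA version (11.0 in my case).

The versions must match.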
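The copy step can also be scripted. The following is a minimal sketch, not an official installer: `copy_dlls` is a hypothetical helper, and both directories are parameters that you have to fill in with the cuDNN bin folder you unpacked and your own CUDA v11.0 bin folder.

```python
import shutil
from pathlib import Path


def copy_dlls(cudnn_bin: str, cuda_bin: str) -> list:
    """Copy every .dll from cudnn_bin into cuda_bin and return the copied file names."""
    source, target = Path(cudnn_bin), Path(cuda_bin)
    if not target.is_dir():
        raise NotADirectoryError(f"CUDA bin directory not found: {target}")
    copied = []
    for dll in sorted(source.glob("*.dll")):
        shutil.copy2(dll, target / dll.name)  # overwrites a file of the same name
        copied.append(dll.name)
    return copied
```

Call it as `copy_dlls(<your cuDNN bin>, <your CUDA v11.0 bin>)`. Writing into the CUDA directory may need administrator rights.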

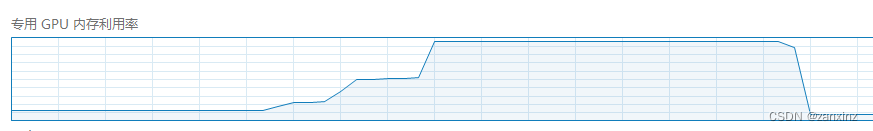

The GPU has little memory, so memory allocation needs to be set to grow on demand:

    import tensorflow as tf

    gpus = tf.config.experimental.list_physical_devices(device_type='GPU')
    for gpu in gpus:
        # Grow GPU memory on demand; must be set before the GPU is first used.
        tf.config.experimental.set_memory_growth(gpu, True)
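An alternative that leaves the GPU setup code untouched is the `TF_FORCE_GPU_ALLOW_GROWTH` environment variable, which TensorFlow reads when it initializes the GPU. A minimal sketch, assuming the variable is set before TensorFlow is imported; the TensorFlow import is optional here and only serves to list the devices:

```python
import os

# Set this before TensorFlow initializes the GPU (simplest: before importing it).
os.environ["TF_FORCE_GPU_ALLOW_GROWTH"] = "true"

try:
    import tensorflow as tf  # optional; the sketch still runs without it
    print("GPUs visible:", tf.config.list_physical_devices("GPU"))
except ImportError:
    print("TensorFlow is not installed in this environment")
```

The variable can also be set in the shell before starting Python, which avoids editing the script at all.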

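Out of GPU memory

This happens because the dataset is too large and has too many classes; my GTX 1650 has only 4 GB of memory.

Fixes:

- Use less data and reduce the number of classes to recognize.

- Switch to a graphics card with more memory.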
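The first fix can be sketched in plain Python. This is an illustration under assumptions, not the code I trained with: `subsample` is a hypothetical helper, and the dataset is assumed to be a list of (item, label) pairs such as (file name, class name).

```python
import random
from collections import defaultdict


def subsample(samples, keep_classes, max_per_class, seed=0):
    """Keep only the given classes and at most max_per_class samples of each.

    samples is a list of (item, label) pairs.
    """
    by_label = defaultdict(list)
    for item, label in samples:
        if label in keep_classes:
            by_label[label].append((item, label))
    rng = random.Random(seed)  # fixed seed so the selection is reproducible
    reduced = []
    for label in sorted(by_label):
        group = by_label[label]
        rng.shuffle(group)
        reduced.extend(group[:max_per_class])
    return reduced
```

For example, `subsample(samples, {"cat", "dog"}, 500)` would drop every other class and keep at most 500 samples each of the two that remain (the class names are placeholders).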
