[root@localhost service]# docker logs f469a8219fe9

Model 'bge-reranker-large' exists.

NPU_ID: 0, CHIP_ID: 0, CHIP_LOGIC_ID: 0 CHIP_TYPE: 310P3, MEMORY_TYPE: DDR, CAPACITY: 44280, USAGE_RATE: 85, AVAIL_CAPACITY: 6642

Using NPU: 0 start TEI service

Starting TEI service on 127.0.0.1:8080...

2025-06-12T03:43:19.463434Z INFO text_embeddings_router: router/src/main.rs:140: Args { model_id: "/hom*/**********/*****/***-********-**rge", revision: None, tokenization_workers: None, dtype: None, pooling: None, max_concurrent_requests: 512, max_batch_tokens: 16384, max_batch_requests: None, max_client_batch_size: 32, auto_truncate: false, hf_api_token: None, hostname: "127.0.0.1", port: 8080, uds_path: "/tmp/text-embeddings-inference-server", huggingface_hub_cache: None, payload_limit: 2000000, api_key: None, json_output: false, otlp_endpoint: None, cors_allow_origin: None }

2025-06-12T03:43:19.880754Z WARN text_embeddings_router: router/src/lib.rs:165: Could not find a Sentence Transformers config

2025-06-12T03:43:19.880797Z INFO text_embeddings_router: router/src/lib.rs:169: Maximum number of tokens per request: 512

2025-06-12T03:43:19.881707Z INFO text_embeddings_core::tokenization: core/src/tokenization.rs:23: Starting 96 tokenization workers

2025-06-12T03:43:35.456234Z INFO text_embeddings_router: router/src/lib.rs:198: Starting model backend

2025-06-12T03:43:35.456841Z INFO text_embeddings_backend_python::management: backends/python/src/management.rs:54: Starting Python backend

2025-06-12T03:43:39.258328Z WARN python-backend: text_embeddings_backend_python::logging: backends/python/src/logging.rs:39: Could not import Flash Attention enabled models: No module named 'dropout_layer_norm'

2025-06-12T03:43:45.496920Z INFO text_embeddings_backend_python::management: backends/python/src/management.rs:118: Waiting for Python backend to be ready...

2025-06-12T03:43:46.082016Z INFO python-backend: text_embeddings_backend_python::logging: backends/python/src/logging.rs:37: Server started at unix:///tmp/text-embeddings-inference-server

2025-06-12T03:43:46.085857Z INFO text_embeddings_backend_python::management: backends/python/src/management.rs:115: Python backend ready in 10.593263393s

2025-06-12T03:43:46.153985Z ERROR python-backend: text_embeddings_backend_python::logging: backends/python/src/logging.rs:40: Method Predict encountered an error.

Traceback (most recent call last):

File "/home/HwHiAiUser/.local/bin/python-text-embeddings-server", line 8, in <module>

sys.exit(app())

File "/home/HwHiAiUser/.local/lib/python3.11/site-packages/typer/main.py", line 311, in __call__

return get_command(self)(*args, **kwargs)

File "/home/HwHiAiUser/.local/lib/python3.11/site-packages/click/core.py", line 1157, in __call__

return self.main(*args, **kwargs)

File "/home/HwHiAiUser/.local/lib/python3.11/site-packages/typer/core.py", line 716, in main

return _main(

File "/home/HwHiAiUser/.local/lib/python3.11/site-packages/typer/core.py", line 216, in _main

rv = self.invoke(ctx)

File "/home/HwHiAiUser/.local/lib/python3.11/site-packages/click/core.py", line 1434, in invoke

return ctx.invoke(self.callback, **ctx.params)

File "/home/HwHiAiUser/.local/lib/python3.11/site-packages/click/core.py", line 783, in invoke

return __callback(*args, **kwargs)

File "/home/HwHiAiUser/.local/lib/python3.11/site-packages/typer/main.py", line 683, in wrapper

return callback(**use_params) # type: ignore

File "/home/HwHiAiUser/text-embeddings-inference/backends/python/server/text_embeddings_server/cli.py", line 50, in serve

server.serve(model_path, dtype, uds_path)

File "/home/HwHiAiUser/text-embeddings-inference/backends/python/server/text_embeddings_server/server.py", line 93, in serve

asyncio.run(serve_inner(model_path, dtype))

File "/usr/lib/python3.11/asyncio/runners.py", line 190, in run

return runner.run(main)

File "/usr/lib/python3.11/asyncio/runners.py", line 118, in run

return self._loop.run_until_complete(task)

File "/usr/lib/python3.11/asyncio/base_events.py", line 641, in run_until_complete

self.run_forever()

File "/usr/lib/python3.11/asyncio/base_events.py", line 608, in run_forever

self._run_once()

File "/usr/lib/python3.11/asyncio/base_events.py", line 1936, in _run_once

handle._run()

File "/usr/lib/python3.11/asyncio/events.py", line 84, in _run

self._context.run(self._callback, *self._args)

File "/home/HwHiAiUser/.local/lib/python3.11/site-packages/grpc_interceptor/server.py", line 159, in invoke_intercept_method

return await self.intercept(

> File "/home/HwHiAiUser/text-embeddings-inference/backends/python/server/text_embeddings_server/utils/interceptor.py", line 21, in intercept

return await response

File "/home/HwHiAiUser/.local/lib/python3.11/site-packages/opentelemetry/instrumentation/grpc/_aio_server.py", line 82, in _unary_interceptor

raise error

File "/home/HwHiAiUser/.local/lib/python3.11/site-packages/opentelemetry/instrumentation/grpc/_aio_server.py", line 73, in _unary_interceptor

return await behavior(request_or_iterator, context)

File "/home/HwHiAiUser/text-embeddings-inference/backends/python/server/text_embeddings_server/server.py", line 45, in Predict

predictions = self.model.predict(batch)

File "/usr/lib/python3.11/contextlib.py", line 81, in inner

return func(*args, **kwds)

File "/home/HwHiAiUser/text-embeddings-inference/backends/python/server/text_embeddings_server/models/rerank_model.py", line 50, in predict

scores = self.model(**kwargs).logits.cpu().tolist()

File "/home/HwHiAiUser/.local/lib/python3.11/site-packages/torch/nn/modules/module.py", line 1518, in _wrapped_call_impl

return self._call_impl(*args, **kwargs)

File "/home/HwHiAiUser/.local/lib/python3.11/site-packages/torch/nn/modules/module.py", line 1527, in _call_impl

return forward_call(*args, **kwargs)

File "/home/HwHiAiUser/.local/lib/python3.11/site-packages/transformers/models/xlm_roberta/modeling_xlm_roberta.py", line 1205, in forward

outputs = self.roberta(

File "/home/HwHiAiUser/.local/lib/python3.11/site-packages/torch/nn/modules/module.py", line 1518, in _wrapped_call_impl

return self._call_impl(*args, **kwargs)

File "/home/HwHiAiUser/.local/lib/python3.11/site-packages/torch/nn/modules/module.py", line 1527, in _call_impl

return forward_call(*args, **kwargs)

File "/home/HwHiAiUser/.local/lib/python3.11/site-packages/transformers/models/xlm_roberta/modeling_xlm_roberta.py", line 807, in forward

extended_attention_mask: torch.Tensor = self.get_extended_attention_mask(attention_mask, input_shape)

File "/home/HwHiAiUser/.local/lib/python3.11/site-packages/transformers/modeling_utils.py", line 1079, in get_extended_attention_mask

extended_attention_mask = extended_attention_mask.to(dtype=dtype) # fp16 compatibility

RuntimeError: call aclnnCast failed, detail:EZ9999: Inner Error!

EZ9999: [PID: 296] 2025-06-12-03:43:46.144.695 Parse dynamic kernel config fail.

TraceBack (most recent call last):

AclOpKernelInit failed opType

Cast ADD_TO_LAUNCHER_LIST_AICORE failed.

[ERROR] 2025-06-12-03:43:46 (PID:296, Device:0, RankID:-1) ERR01100 OPS call acl api failed

2025-06-12T03:43:46.154332Z ERROR health:predict:predict: backend_grpc_client: backends/grpc-client/src/lib.rs:25: Server error: call aclnnCast failed, detail:EZ9999: Inner Error!

EZ9999: [PID: 296] 2025-06-12-03:43:46.144.695 Parse dynamic kernel config fail.

TraceBack (most recent call last):

AclOpKernelInit failed opType

Cast ADD_TO_LAUNCHER_LIST_AICORE failed.

[ERROR] 2025-06-12-03:43:46 (PID:296, Device:0, RankID:-1) ERR01100 OPS call acl api failed

2025-06-12T03:43:46.277430Z INFO text_embeddings_backend_python::management: backends/python/src/management.rs:132: Python backend process terminated

Error: Model backend is not healthy

Caused by:

Server error: call aclnnCast failed, detail:EZ9999: Inner Error!

EZ9999: [PID: 296] 2025-06-12-03:43:46.144.695 Parse dynamic kernel config fail.

TraceBack (most recent call last):

AclOpKernelInit failed opType

Cast ADD_TO_LAUNCHER_LIST_AICORE failed.

[ERROR] 2025-06-12-03:43:46 (PID:296, Device:0, RankID:-1) ERR01100 OPS call acl api failed

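The output above comes from the rerank model (bge-reranker-large) that I need to call from Dify. It is running in a container: after starting, the container stays up for a short while and then exits. The text above is what `docker logs` shows for that container.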
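Since the same error block is repeated three times in the log, it can help to boil the output down to the failing operator, the error codes, and the last Python frame before the crash. Below is a minimal sketch of that, not an official tool: the function name `summarize_failure` and the regular expressions are my own assumptions, written only against the lines visible in this log.

```python
import re


def summarize_failure(log_text: str) -> dict:
    """Extract the key failure facts from `docker logs` output (illustrative helper)."""
    # The first "call aclnnXxx failed" names the operator call that failed.
    op = re.search(r"call (aclnn\w+) failed", log_text)
    # Error codes such as EZ9999 or ERR01100, de-duplicated in order of appearance.
    codes = list(dict.fromkeys(re.findall(r"\b(EZ\d{4}|ERR\d{5})\b", log_text)))
    # Python traceback frames look like: File "<path>", line N, in <func>
    frames = re.findall(r'File "([^"]+)", line (\d+), in (\S+)', log_text)
    return {
        "failed_op": op.group(1) if op else None,
        "error_codes": codes,
        "last_frame": frames[-1] if frames else None,
    }


# Three lines copied from the log above, used as sample input.
sample_log = '''File "/home/HwHiAiUser/.local/lib/python3.11/site-packages/transformers/modeling_utils.py", line 1079, in get_extended_attention_mask
RuntimeError: call aclnnCast failed, detail:EZ9999: Inner Error!
[ERROR] 2025-06-12-03:43:46 (PID:296, Device:0, RankID:-1) ERR01100 OPS call acl api failed
'''

summary = summarize_failure(sample_log)
print(summary)
```

On the full log this points at the `extended_attention_mask.to(dtype=dtype)` call in `get_extended_attention_mask`, which is where `aclnnCast` fails during the backend's first `Predict` health check.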

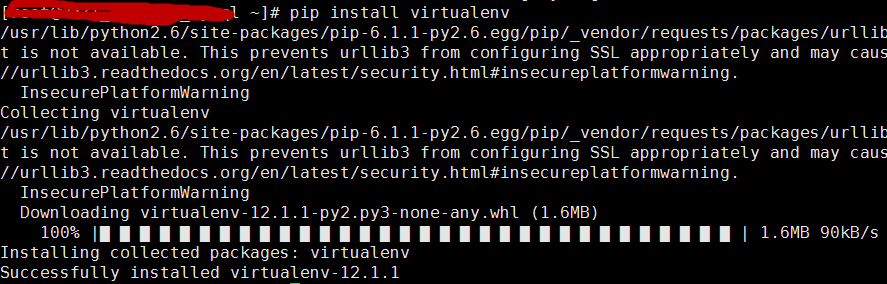

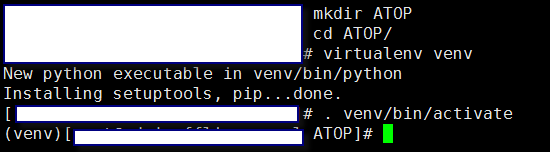

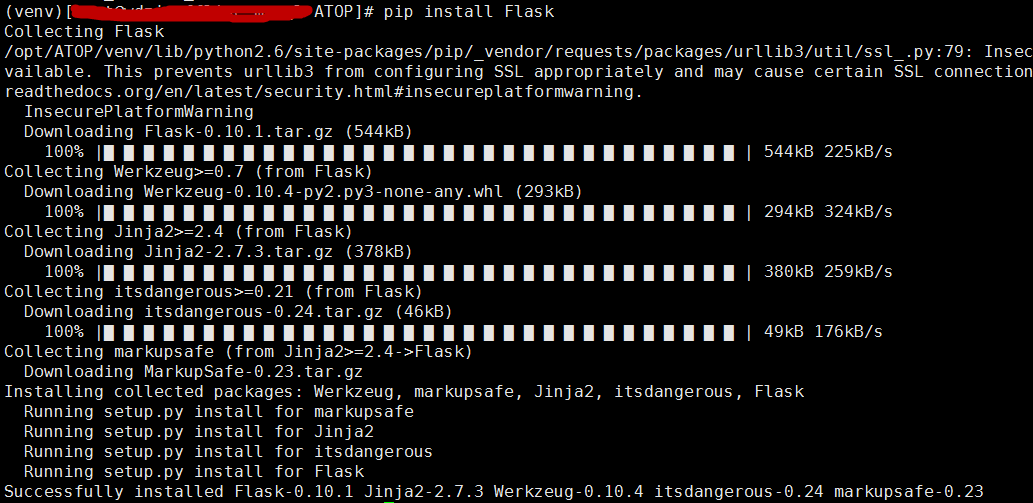
