[root@node ~]# mysql -u root -p

Enter password:

Welcome to the MySQL monitor. Commands end with ; or \g.

Your MySQL connection id is 8

Server version: 8.0.42 MySQL Community Server - GPL

Copyright (c) 2000, 2025, Oracle and/or its affiliates.

Oracle is a registered trademark of Oracle Corporation and/or its

affiliates. Other names may be trademarks of their respective

owners.

Type 'help;' or '\h' for help. Type '\c' to clear the current input statement.

mysql> CREATE DATABASE weblog_db;

ERROR 1064 (42000): You have an error in your SQL syntax; check the manual that corresponds to your MySQL server version for the right syntax to use near 'CREATE DATABASE weblog_db' at line 1

mysql> CREATE DATABASE weblog_db;

ERROR 1007 (HY000): Can't create database 'weblog_db'; database exists

mysql> USE weblog_db;

Reading table information for completion of table and column names

You can turn off this feature to get a quicker startup with -A

Database changed

mysql> DROP DATABASE IF EXISTS weblog_db;

Query OK, 2 rows affected (0.02 sec)

mysql> CREATE DATABASE weblog_db;

Query OK, 1 row affected (0.01 sec)

mysql> USE weblog_db;

Database changed

mysql> CREATE TABLE page_visits (

-> page VARCHAR(255) ,

-> visits BIGINT

-> );

ERROR 1064 (42000): You have an error in your SQL syntax; check the manual that corresponds to your MySQL server version for the right syntax to use near 'TABLE page_visits (

page VARCHAR(255) ,

visits BIGINT

)' at line 1

mysql> CREATE TABLE page_visits (

-> page VARCHAR(255),

-> visits BIGINT

-> );

Query OK, 0 rows affected (0.02 sec)

mysql> SHOW TABLES;

+---------------------+

| Tables_in_weblog_db |

+---------------------+

| page_visits |

+---------------------+

1 row in set (0.00 sec)

mysql> ^C

mysql> q

-> quit

-> exit

-> ^C

mysql> ^C

mysql> ^C

mysql> ^DBye

[root@node ~]# hive

SLF4J: Failed to load class "org.slf4j.impl.StaticLoggerBinder".

SLF4J: Defaulting to no-operation (NOP) logger implementation

SLF4J: See http://www.slf4j.org/codes.html#StaticLoggerBinder for further details.

Hive Session ID = 7bb79582-cc2b-49b6-abc7-020dcdc46542

Logging initialized using configuration in jar:file:/home/hive-3.1.3/lib/hive-common-3.1.3.jar!/hive-log4j2.properties Async: true

Hive-on-MR is deprecated in Hive 2 and may not be available in the future versions. Consider using a different execution engine (i.e. spark, tez) or using Hive 1.X releases.

Hive Session ID = 15d9da52-e18e-40b2-a80f-e76eda81df4c

hive> DESCRIBE FORMATTED page_visits;

OK

# col_name data_type comment

page string

visits bigint

# Detailed Table Information

Database: default

OwnerType: USER

Owner: root

CreateTime: Tue Jul 08 01:43:42 CST 2025

LastAccessTime: UNKNOWN

Retention: 0

Location: hdfs://node:9000/hive/warehouse/page_visits

Table Type: MANAGED_TABLE

Table Parameters:

COLUMN_STATS_ACCURATE {\"BASIC_STATS\":\"true\"}

bucketing_version 2

numFiles 1

numRows 4

rawDataSize 56

totalSize 60

transient_lastDdlTime 1751910222

# Storage Information

SerDe Library: org.apache.hadoop.hive.serde2.lazy.LazySimpleSerDe

InputFormat: org.apache.hadoop.mapred.TextInputFormat

OutputFormat: org.apache.hadoop.hive.ql.io.HiveIgnoreKeyTextOutputFormat

Compressed: No

Num Buckets: -1

Bucket Columns: []

Sort Columns: []

Storage Desc Params:

serialization.format 1

Time taken: 0.785 seconds, Fetched: 32 row(s)

hive> [root@node ~]#

[root@node ~]# sqoop export \

> --connect jdbc:mysql://localhost/weblog_db \

> --username root \

> --password Aa@123456 \

> --table page_visits \

> --export-dir hdfs://node:9000/hive/warehouse/page_visits \

> --input-fields-terminated-by '\001' \

> --num-mappers 1

Warning: /home/sqoop-1.4.7/../hcatalog does not exist! HCatalog jobs will fail.

Please set $HCAT_HOME to the root of your HCatalog installation.

Warning: /home/sqoop-1.4.7/../accumulo does not exist! Accumulo imports will fail.

Please set $ACCUMULO_HOME to the root of your Accumulo installation.

2025-07-08 15:28:12,550 INFO sqoop.Sqoop: Running Sqoop version: 1.4.7

2025-07-08 15:28:12,587 WARN tool.BaseSqoopTool: Setting your password on the command-line is insecure. Consider using -P instead.

2025-07-08 15:28:12,704 INFO manager.MySQLManager: Preparing to use a MySQL streaming resultset.

2025-07-08 15:28:12,708 INFO tool.CodeGenTool: Beginning code generation

Loading class `com.mysql.jdbc.Driver'. This is deprecated. The new driver class is `com.mysql.cj.jdbc.Driver'. The driver is automatically registered via the SPI and manual loading of the driver class is generally unnecessary.

2025-07-08 15:28:13,225 INFO manager.SqlManager: Executing SQL statement: SELECT t.* FROM `page_visits` AS t LIMIT 1

2025-07-08 15:28:13,266 INFO manager.SqlManager: Executing SQL statement: SELECT t.* FROM `page_visits` AS t LIMIT 1

2025-07-08 15:28:13,280 INFO orm.CompilationManager: HADOOP_MAPRED_HOME is /home/hadoop/hadoop3.3

Note: /tmp/sqoop-root/compile/363869e21c2078b9742685122c43a3cc/page_visits.java uses or overrides a deprecated API.

Note: Recompile with -Xlint:deprecation for details.

2025-07-08 15:28:16,377 INFO orm.CompilationManager: Writing jar file: /tmp/sqoop-root/compile/363869e21c2078b9742685122c43a3cc/page_visits.jar

2025-07-08 15:28:16,391 INFO mapreduce.ExportJobBase: Beginning export of page_visits

2025-07-08 15:28:16,391 INFO Configuration.deprecation: mapred.job.tracker is deprecated. Instead, use mapreduce.jobtracker.address

2025-07-08 15:28:16,484 INFO Configuration.deprecation: mapred.jar is deprecated. Instead, use mapreduce.job.jar

2025-07-08 15:28:17,339 INFO Configuration.deprecation: mapred.reduce.tasks.speculative.execution is deprecated. Instead, use mapreduce.reduce.speculative

2025-07-08 15:28:17,342 INFO Configuration.deprecation: mapred.map.tasks.speculative.execution is deprecated. Instead, use mapreduce.map.speculative

2025-07-08 15:28:17,343 INFO Configuration.deprecation: mapred.map.tasks is deprecated. Instead, use mapreduce.job.maps

2025-07-08 15:28:17,555 INFO client.DefaultNoHARMFailoverProxyProvider: Connecting to ResourceManager at node/192.168.196.122:8032

2025-07-08 15:28:17,782 INFO mapreduce.JobResourceUploader: Disabling Erasure Coding for path: /tmp/hadoop-yarn/staging/root/.staging/job_1751959003014_0001

2025-07-08 15:28:26,026 INFO input.FileInputFormat: Total input files to process : 1

2025-07-08 15:28:26,029 INFO input.FileInputFormat: Total input files to process : 1

2025-07-08 15:28:26,495 INFO mapreduce.JobSubmitter: number of splits:1

2025-07-08 15:28:26,528 INFO Configuration.deprecation: mapred.map.tasks.speculative.execution is deprecated. Instead, use mapreduce.map.speculative

2025-07-08 15:28:26,619 INFO mapreduce.JobSubmitter: Submitting tokens for job: job_1751959003014_0001

2025-07-08 15:28:26,620 INFO mapreduce.JobSubmitter: Executing with tokens: []

2025-07-08 15:28:26,805 INFO conf.Configuration: resource-types.xml not found

2025-07-08 15:28:26,805 INFO resource.ResourceUtils: Unable to find 'resource-types.xml'.

2025-07-08 15:28:27,226 INFO impl.YarnClientImpl: Submitted application application_1751959003014_0001

2025-07-08 15:28:27,264 INFO mapreduce.Job: The url to track the job: http://node:8088/proxy/application_1751959003014_0001/

2025-07-08 15:28:27,264 INFO mapreduce.Job: Running job: job_1751959003014_0001

2025-07-08 15:28:34,334 INFO mapreduce.Job: Job job_1751959003014_0001 running in uber mode : false

2025-07-08 15:28:34,335 INFO mapreduce.Job: map 0% reduce 0%

2025-07-08 15:28:38,374 INFO mapreduce.Job: map 100% reduce 0%

2025-07-08 15:28:38,381 INFO mapreduce.Job: Job job_1751959003014_0001 failed with state FAILED due to: Task failed task_1751959003014_0001_m_000000

Job failed as tasks failed. failedMaps:1 failedReduces:0 killedMaps:0 killedReduces: 0

2025-07-08 15:28:38,448 INFO mapreduce.Job: Counters: 8

Job Counters

Failed map tasks=1

Launched map tasks=1

Data-local map tasks=1

Total time spent by all maps in occupied slots (ms)=2061

Total time spent by all reduces in occupied slots (ms)=0

Total time spent by all map tasks (ms)=2061

Total vcore-milliseconds taken by all map tasks=2061

Total megabyte-milliseconds taken by all map tasks=2110464

2025-07-08 15:28:38,456 WARN mapreduce.Counters: Group FileSystemCounters is deprecated. Use org.apache.hadoop.mapreduce.FileSystemCounter instead

2025-07-08 15:28:38,457 INFO mapreduce.ExportJobBase: Transferred 0 bytes in 21.1033 seconds (0 bytes/sec)

2025-07-08 15:28:38,462 WARN mapreduce.Counters: Group org.apache.hadoop.mapred.Task$Counter is deprecated. Use org.apache.hadoop.mapreduce.TaskCounter instead

2025-07-08 15:28:38,462 INFO mapreduce.ExportJobBase: Exported 0 records.

2025-07-08 15:28:38,462 ERROR mapreduce.ExportJobBase: Export job failed!

2025-07-08 15:28:38,463 ERROR tool.ExportTool: Error during export:

Export job failed!

at org.apache.sqoop.mapreduce.ExportJobBase.runExport(ExportJobBase.java:445)

at org.apache.sqoop.manager.SqlManager.exportTable(SqlManager.java:931)

at org.apache.sqoop.tool.ExportTool.exportTable(ExportTool.java:80)

at org.apache.sqoop.tool.ExportTool.run(ExportTool.java:99)

at org.apache.sqoop.Sqoop.run(Sqoop.java:147)

at org.apache.hadoop.util.ToolRunner.run(ToolRunner.java:81)

at org.apache.sqoop.Sqoop.runSqoop(Sqoop.java:183)

at org.apache.sqoop.Sqoop.runTool(Sqoop.java:234)

at org.apache.sqoop.Sqoop.runTool(Sqoop.java:243)

at org.apache.sqoop.Sqoop.main(Sqoop.java:252)
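
The stack trace above only says that the export job failed. The exception thrown by the mapper itself normally ends up in the YARN container logs, not on the Sqoop console. The following is a minimal sketch of how one might fetch it, assuming log aggregation is enabled on the cluster and the Sqoop console output was saved to a file. The temp file and the `app_id_from_log` helper are illustrative and not part of the original session.

```shell
#!/usr/bin/env bash
# Pull the first YARN application ID out of saved Sqoop console output.
# (app_id_from_log is a hypothetical helper name.)
app_id_from_log() {
  grep -o 'application_[0-9]*_[0-9]*' "$1" | head -n 1
}

# Stand-in for the saved console output: one line copied from the failed run.
log_file=$(mktemp)
cat > "$log_file" <<'EOF'
2025-07-08 15:28:27,226 INFO impl.YarnClientImpl: Submitted application application_1751959003014_0001
EOF

app_id=$(app_id_from_log "$log_file")
echo "$app_id"

# On the cluster, print the part of the container logs around the root cause.
# Skipped automatically on machines without the yarn CLI.
if command -v yarn >/dev/null 2>&1; then
  yarn logs -applicationId "$app_id" | grep -B 2 -A 15 'Caused by' || true
fi

rm -f "$log_file"
```

The same information is usually reachable through the tracking URL printed in the log (`http://node:8088/proxy/application_1751959003014_0001/`), by opening the failed map attempt.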

[root@node ~]# sqoop export \

> --connect jdbc:mysql://localhost/weblog_db \

> --username root \

> --password Aa@123456 \

> --table page_visits \

> --export-dir hdfs://node:9000/hive/warehouse/page_visits \

> --input-fields-terminated-by ',' \

> --num-mappers 1

Warning: /home/sqoop-1.4.7/../hcatalog does not exist! HCatalog jobs will fail.

Please set $HCAT_HOME to the root of your HCatalog installation.

Warning: /home/sqoop-1.4.7/../accumulo does not exist! Accumulo imports will fail.

Please set $ACCUMULO_HOME to the root of your Accumulo installation.

2025-07-08 15:30:31,174 INFO sqoop.Sqoop: Running Sqoop version: 1.4.7

2025-07-08 15:30:31,218 WARN tool.BaseSqoopTool: Setting your password on the command-line is insecure. Consider using -P instead.

2025-07-08 15:30:31,333 INFO manager.MySQLManager: Preparing to use a MySQL streaming resultset.

2025-07-08 15:30:31,336 INFO tool.CodeGenTool: Beginning code generation

Loading class `com.mysql.jdbc.Driver'. This is deprecated. The new driver class is `com.mysql.cj.jdbc.Driver'. The driver is automatically registered via the SPI and manual loading of the driver class is generally unnecessary.

2025-07-08 15:30:31,771 INFO manager.SqlManager: Executing SQL statement: SELECT t.* FROM `page_visits` AS t LIMIT 1

2025-07-08 15:30:31,814 INFO manager.SqlManager: Executing SQL statement: SELECT t.* FROM `page_visits` AS t LIMIT 1

2025-07-08 15:30:31,821 INFO orm.CompilationManager: HADOOP_MAPRED_HOME is /home/hadoop/hadoop3.3

Note: /tmp/sqoop-root/compile/ab00e36d1f5084a0f7d522b4e9a975e5/page_visits.java uses or overrides a deprecated API.

Note: Recompile with -Xlint:deprecation for details.

2025-07-08 15:30:33,116 INFO orm.CompilationManager: Writing jar file: /tmp/sqoop-root/compile/ab00e36d1f5084a0f7d522b4e9a975e5/page_visits.jar

2025-07-08 15:30:33,129 INFO mapreduce.ExportJobBase: Beginning export of page_visits

2025-07-08 15:30:33,129 INFO Configuration.deprecation: mapred.job.tracker is deprecated. Instead, use mapreduce.jobtracker.address

2025-07-08 15:30:33,212 INFO Configuration.deprecation: mapred.jar is deprecated. Instead, use mapreduce.job.jar

2025-07-08 15:30:33,877 INFO Configuration.deprecation: mapred.reduce.tasks.speculative.execution is deprecated. Instead, use mapreduce.reduce.speculative

2025-07-08 15:30:33,880 INFO Configuration.deprecation: mapred.map.tasks.speculative.execution is deprecated. Instead, use mapreduce.map.speculative

2025-07-08 15:30:33,880 INFO Configuration.deprecation: mapred.map.tasks is deprecated. Instead, use mapreduce.job.maps

2025-07-08 15:30:34,097 INFO client.DefaultNoHARMFailoverProxyProvider: Connecting to ResourceManager at node/192.168.196.122:8032

2025-07-08 15:30:34,310 INFO mapreduce.JobResourceUploader: Disabling Erasure Coding for path: /tmp/hadoop-yarn/staging/root/.staging/job_1751959003014_0002

2025-07-08 15:30:39,127 INFO input.FileInputFormat: Total input files to process : 1

2025-07-08 15:30:39,131 INFO input.FileInputFormat: Total input files to process : 1

2025-07-08 15:30:39,995 INFO mapreduce.JobSubmitter: number of splits:1

2025-07-08 15:30:40,022 INFO Configuration.deprecation: mapred.map.tasks.speculative.execution is deprecated. Instead, use mapreduce.map.speculative

2025-07-08 15:30:40,532 INFO mapreduce.JobSubmitter: Submitting tokens for job: job_1751959003014_0002

2025-07-08 15:30:40,532 INFO mapreduce.JobSubmitter: Executing with tokens: []

2025-07-08 15:30:40,689 INFO conf.Configuration: resource-types.xml not found

2025-07-08 15:30:40,689 INFO resource.ResourceUtils: Unable to find 'resource-types.xml'.

2025-07-08 15:30:40,746 INFO impl.YarnClientImpl: Submitted application application_1751959003014_0002

2025-07-08 15:30:40,783 INFO mapreduce.Job: The url to track the job: http://node:8088/proxy/application_1751959003014_0002/

2025-07-08 15:30:40,784 INFO mapreduce.Job: Running job: job_1751959003014_0002

2025-07-08 15:30:46,847 INFO mapreduce.Job: Job job_1751959003014_0002 running in uber mode : false

2025-07-08 15:30:46,848 INFO mapreduce.Job: map 0% reduce 0%

2025-07-08 15:30:50,893 INFO mapreduce.Job: map 100% reduce 0%

2025-07-08 15:30:51,905 INFO mapreduce.Job: Job job_1751959003014_0002 failed with state FAILED due to: Task failed task_1751959003014_0002_m_000000

Job failed as tasks failed. failedMaps:1 failedReduces:0 killedMaps:0 killedReduces: 0

2025-07-08 15:30:51,973 INFO mapreduce.Job: Counters: 8

Job Counters

Failed map tasks=1

Launched map tasks=1

Data-local map tasks=1

Total time spent by all maps in occupied slots (ms)=2058

Total time spent by all reduces in occupied slots (ms)=0

Total time spent by all map tasks (ms)=2058

Total vcore-milliseconds taken by all map tasks=2058

Total megabyte-milliseconds taken by all map tasks=2107392

2025-07-08 15:30:51,979 WARN mapreduce.Counters: Group FileSystemCounters is deprecated. Use org.apache.hadoop.mapreduce.FileSystemCounter instead

2025-07-08 15:30:51,980 INFO mapreduce.ExportJobBase: Transferred 0 bytes in 18.0828 seconds (0 bytes/sec)

2025-07-08 15:30:51,983 WARN mapreduce.Counters: Group org.apache.hadoop.mapred.Task$Counter is deprecated. Use org.apache.hadoop.mapreduce.TaskCounter instead

2025-07-08 15:30:51,983 INFO mapreduce.ExportJobBase: Exported 0 records.

2025-07-08 15:30:51,983 ERROR mapreduce.ExportJobBase: Export job failed!

2025-07-08 15:30:51,984 ERROR tool.ExportTool: Error during export:

Export job failed!

at org.apache.sqoop.mapreduce.ExportJobBase.runExport(ExportJobBase.java:445)

at org.apache.sqoop.manager.SqlManager.exportTable(SqlManager.java:931)

at org.apache.sqoop.tool.ExportTool.exportTable(ExportTool.java:80)

at org.apache.sqoop.tool.ExportTool.run(ExportTool.java:99)

at org.apache.sqoop.Sqoop.run(Sqoop.java:147)

at org.apache.hadoop.util.ToolRunner.run(ToolRunner.java:81)

at org.apache.sqoop.Sqoop.runSqoop(Sqoop.java:183)

at org.apache.sqoop.Sqoop.runTool(Sqoop.java:234)

at org.apache.sqoop.Sqoop.runTool(Sqoop.java:243)

at org.apache.sqoop.Sqoop.main(Sqoop.java:252)

[root@node ~]#
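
Both attempts above failed, so the delimiter alone may not be the cause. It is still worth ruling out: the `DESCRIBE FORMATTED` output shows `LazySimpleSerDe` with `serialization.format 1` and no explicit field delimiter, which typically means the fields are separated by the Ctrl-A byte (`\001`) rather than a comma. The sketch below, under that assumption, shows how a wrong delimiter collapses each line into a single field. The sample rows are invented for illustration and are not the real table contents, and `fields_with` is a hypothetical helper.

```shell
#!/usr/bin/env bash
# Count the fields awk sees on the first line of a file for a given delimiter.
fields_with() {
  awk -F "$1" 'NR == 1 { print NF }' "$2"
}

# Made-up sample rows joined with Ctrl-A (\001), as Hive text tables usually are.
sample=$(mktemp)
printf '%s\001%s\n' /index.html 12 /about.html 7 > "$sample"

ctrl_a_fields=$(fields_with '\001' "$sample")
comma_fields=$(fields_with ',' "$sample")
printf 'fields with Ctrl-A: %s, fields with comma: %s\n' "$ctrl_a_fields" "$comma_fields"

# On the cluster, dump the raw bytes of the first stored line instead of guessing.
# Skipped automatically on machines without the hdfs CLI.
if command -v hdfs >/dev/null 2>&1; then
  hdfs dfs -cat 'hdfs://node:9000/hive/warehouse/page_visits/*' | head -n 1 | od -c
fi

rm -f "$sample"
```

In the `od -c` output, a `001` between the two values would confirm that `--input-fields-terminated-by '\001'` is the matching setting, and the remaining failure would then have to be diagnosed from the task logs.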

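6.2 Exporting data with Sqoop

6.2.1 Exporting data from Hive to MySQL

6.2.2 Sqoop export format

6.2.3 Exporting the page_visits table

6.2.4 Exporting to the ip_visits table

6.3 Verifying the exported data

6.3.1 Logging in to MySQL

6.3.2 Running the query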

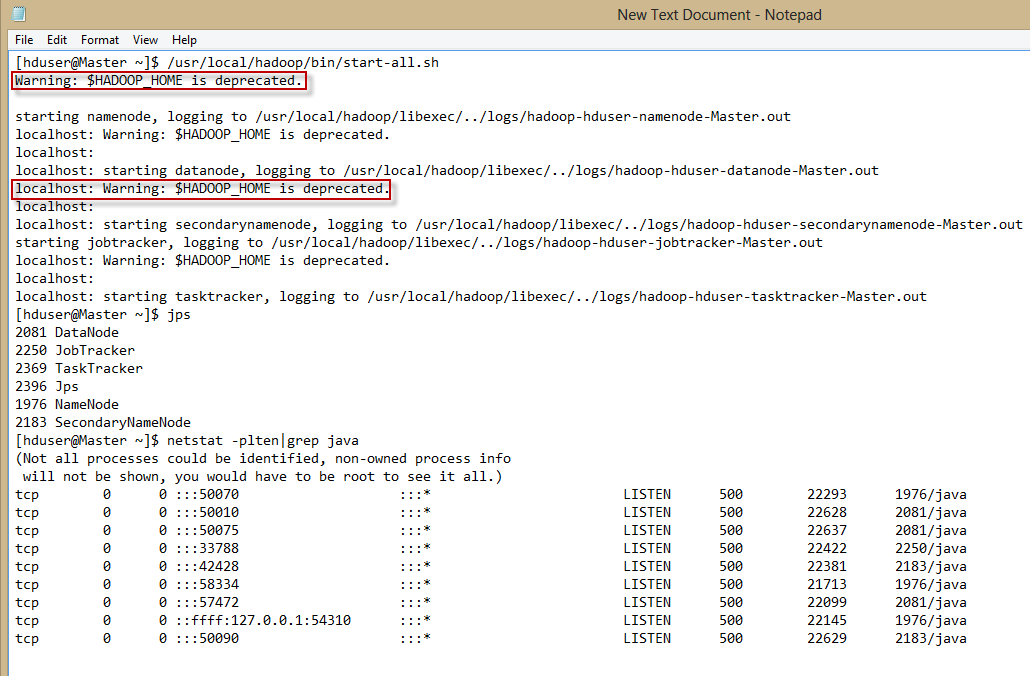
