I know, I know

On the other side of the world, you're here with me

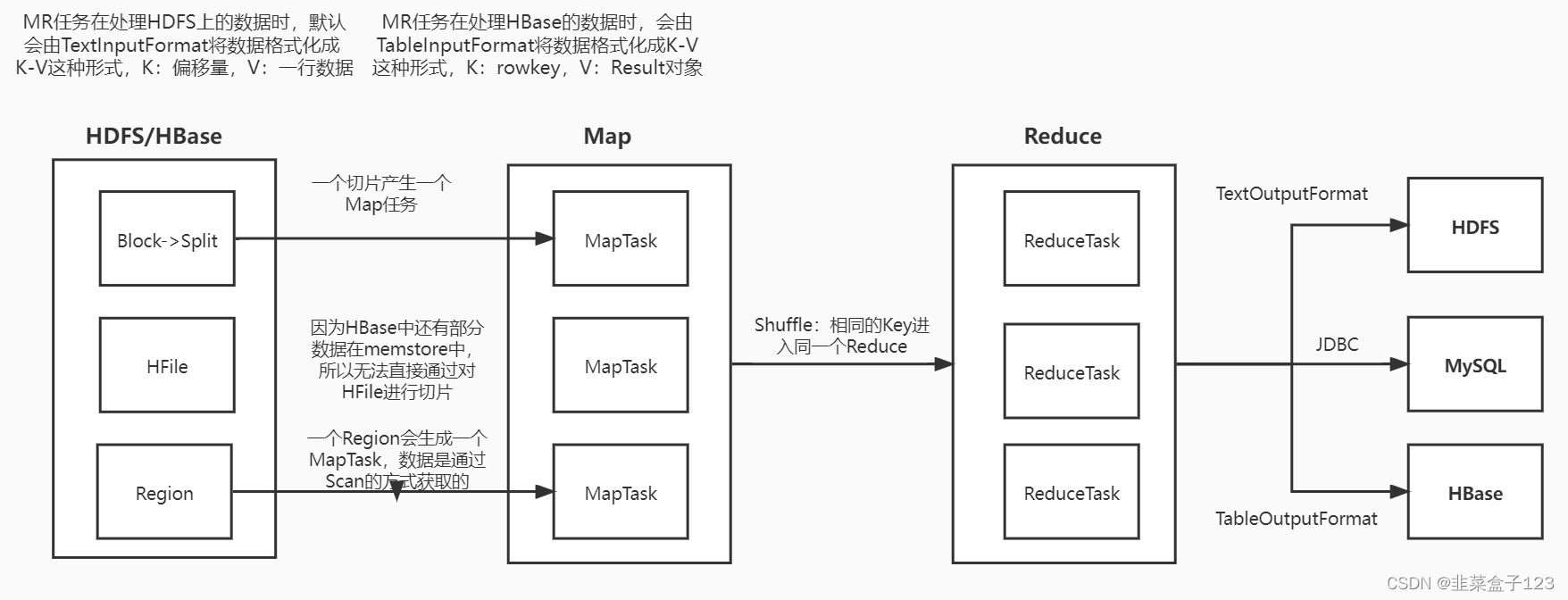

1. Connecting MapReduce to HBase

Count the students in each class of the HBase `students` table and write the result to HDFS:
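The job is word count with the class name playing the role of the word. The mapper emits `(clazz, 1)` for every row, the shuffle groups the pairs by class, and the reducer sums each group. Below is a minimal in-memory sketch of that data flow. It uses no Hadoop or HBase, and the class name `ClassCountSketch` and the sample values are made up for illustration.

```java
import java.util.AbstractMap.SimpleEntry;
import java.util.ArrayList;
import java.util.List;
import java.util.Map;
import java.util.TreeMap;

// Hypothetical, dependency-free sketch of the map -> shuffle -> reduce flow
// used by the class-count job. It does not touch Hadoop or HBase.
public class ClassCountSketch {

    // "map": one (clazz, 1) pair per row, skipping rows that have no class value
    static List<Map.Entry<String, Integer>> map(List<String> clazzColumn) {
        List<Map.Entry<String, Integer>> pairs = new ArrayList<>();
        for (String clazz : clazzColumn) {
            if (clazz != null) {
                pairs.add(new SimpleEntry<>(clazz, 1));
            }
        }
        return pairs;
    }

    // "shuffle": group values by key; TreeMap keeps keys sorted, as MapReduce does
    static Map<String, List<Integer>> shuffle(List<Map.Entry<String, Integer>> pairs) {
        Map<String, List<Integer>> groups = new TreeMap<>();
        for (Map.Entry<String, Integer> p : pairs) {
            groups.computeIfAbsent(p.getKey(), k -> new ArrayList<>()).add(p.getValue());
        }
        return groups;
    }

    // "reduce": sum the 1s for each class
    static Map<String, Integer> reduce(Map<String, List<Integer>> groups) {
        Map<String, Integer> counts = new TreeMap<>();
        for (Map.Entry<String, List<Integer>> g : groups.entrySet()) {
            int sum = 0;
            for (int v : g.getValue()) {
                sum += v;
            }
            counts.put(g.getKey(), sum);
        }
        return counts;
    }

    public static void main(String[] args) {
        // Made-up values of the cf1:clazz column, one per row
        List<String> clazzColumn = List.of("class1", "class2", "class1", "class3", "class1");
        System.out.println(reduce(shuffle(map(clazzColumn)))); // {class1=3, class2=1, class3=1}
    }
}
```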

package day51;

import org.apache.hadoop.conf.Configuration;
import org.apache.hadoop.fs.FileSystem;
import org.apache.hadoop.fs.Path;
import org.apache.hadoop.hbase.HBaseConfiguration;
import org.apache.hadoop.hbase.TableName;
import org.apache.hadoop.hbase.client.Result;
import org.apache.hadoop.hbase.client.Scan;
import org.apache.hadoop.hbase.io.ImmutableBytesWritable;
import org.apache.hadoop.hbase.mapreduce.TableMapReduceUtil;
import org.apache.hadoop.hbase.mapreduce.TableMapper;
import org.apache.hadoop.hbase.util.Bytes;
import org.apache.hadoop.io.IntWritable;
import org.apache.hadoop.io.Text;
import org.apache.hadoop.mapreduce.Job;
import org.apache.hadoop.mapreduce.Reducer;
import org.apache.hadoop.mapreduce.lib.output.FileOutputFormat;

import java.io.IOException;

public class MRHBase {

    // To read from HBase, extend TableMapper. Its type parameters only declare
    // the map OUTPUT types; the input is always (rowkey, Result).
    public static class readHBaseMapper
            extends TableMapper<Text, IntWritable> {
        @Override
        protected void map(ImmutableBytesWritable key, Result value,
                           Context context) throws IOException, InterruptedException {
            // Here key is the rowkey and value is the Result read for that row
            // rowkey: decode only the valid slice of the backing array
            String id = Bytes.toString(key.get(), key.getOffset(), key.getLength());
            byte[] clazzBytes = value.getValue(Bytes.toBytes("cf1"), Bytes.toBytes("clazz"));
            if (clazzBytes == null) {
                return; // skip rows without a cf1:clazz cell (new Text(null) would throw)
            }
            context.write(new Text(Bytes.toString(clazzBytes)), new IntWritable(1));
        }
    }

    public static class myReducer
            extends Reducer<Text, IntWritable, Text, IntWritable> {
        @Override
        protected void reduce(Text key, Iterable<IntWritable> values,
                              Context context) throws IOException, InterruptedException {
            int sum = 0;
            for (IntWritable value : values) {
                sum += value.get();
            }
            context.write(key, new IntWritable(sum));
        }
    }

    public static void main(String[] args) throws Exception {
        // Configuration: connect to HBase through ZooKeeper
        Configuration conf = HBaseConfiguration.create();
        conf.set("hbase.zookeeper.quorum", "master:2181,node1:2181,node2:2181");

        // Pass conf to the job, otherwise the ZooKeeper quorum set above is not used
        Job job = Job.getInstance(conf);
        job.setJobName("countclazz");
        job.setJarByClass(MRHBase.class);

        // Configure the map phase: full scan of the "students" table
        Scan scan = new Scan();
        scan.setCaching(500);       // fetch more rows per RPC for MapReduce scans
        scan.setCacheBlocks(false); // don't fill the RegionServer block cache with a full scan
        TableMapReduceUtil.initTableMapperJob(
                TableName.valueOf("students"),
                scan,
                readHBaseMapper.class,
                Text.class,
                IntWritable.class,
                job
        );

        // Configure the reduce phase
        job.setReducerClass(myReducer.class);
        job.setOutputKeyClass(Text.class);
        job.setOutputValueClass(IntWritable.class);

        // Configure the output path (the input is the HBase table); delete it if it exists
        Path outputPath = new Path("/hbaseMR/output1");
        FileSystem fs = FileSystem.get(conf);
        if (fs.exists(outputPath)) {
            fs.delete(outputPath, true);
        }
        FileOutputFormat.setOutputPath(job, outputPath);

        System.exit(job.waitForCompletion(true) ? 0 : 1);
    }
}

/*
Package the project and submit it to Hadoop:
hadoop jar hbase-1.0-SNAPSHOT-jar-with-dependencies.jar day51.MRHBase
*/
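With the default `TextOutputFormat`, each line that the first job writes under `/hbaseMR/output1` (in files such as `part-r-00000`) is the key, a tab, and then the value. To load that result back into a program, for example after copying it locally with `hdfs dfs -get`, a small parser is enough. `OutputParser` is a hypothetical helper, and the sample text is illustrative rather than real job output.

```java
import java.io.BufferedReader;
import java.io.IOException;
import java.io.Reader;
import java.io.StringReader;
import java.util.LinkedHashMap;
import java.util.Map;

// Hypothetical helper: parse TextOutputFormat lines of the form "key<TAB>value"
// into a map of class name -> student count, preserving file order.
public class OutputParser {

    static Map<String, Integer> parse(Reader in) throws IOException {
        Map<String, Integer> counts = new LinkedHashMap<>();
        try (BufferedReader reader = new BufferedReader(in)) {
            String line;
            while ((line = reader.readLine()) != null) {
                if (line.isEmpty()) {
                    continue; // ignore blank lines
                }
                // Split on the LAST tab: the value is a plain integer, so any
                // earlier tab must belong to the key
                int tab = line.lastIndexOf('\t');
                if (tab < 0) {
                    throw new IOException("Malformed line: " + line);
                }
                counts.put(line.substring(0, tab),
                        Integer.parseInt(line.substring(tab + 1).trim()));
            }
        }
        return counts;
    }

    public static void main(String[] args) throws IOException {
        // Illustrative content of a part-r-00000 file (made-up numbers)
        String sample = "class1\t3\nclass2\t1\n";
        System.out.println(parse(new StringReader(sample))); // {class1=3, class2=1}
    }
}
```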

Second version: the same per-class count, but the reducer writes the result back into the HBase table `test` instead of HDFS. The `test` table, with column family `cf1`, must already exist before the job runs.

package day51;

import org.apache.hadoop.conf.Configuration;
import org.apache.hadoop.hbase.HBaseConfiguration;
import org.apache.hadoop.hbase.TableName;
import org.apache.hadoop.hbase.client.Put;
import org.apache.hadoop.hbase.client.Result;
import org.apache.hadoop.hbase.client.Scan;
import org.apache.hadoop.hbase.io.ImmutableBytesWritable;
import org.apache.hadoop.hbase.mapreduce.TableMapReduceUtil;
import org.apache.hadoop.hbase.mapreduce.TableMapper;
import org.apache.hadoop.hbase.mapreduce.TableReducer;
import org.apache.hadoop.hbase.util.Bytes;
import org.apache.hadoop.io.IntWritable;
import org.apache.hadoop.io.NullWritable;
import org.apache.hadoop.io.Text;
import org.apache.hadoop.mapreduce.Job;

import java.io.IOException;

public class MRHBase2 {

    public static class readHBaseMapper
            extends TableMapper<Text, IntWritable> {
        @Override
        protected void map(ImmutableBytesWritable key, Result value,
                           Context context) throws IOException, InterruptedException {
            byte[] clazzBytes = value.getValue(Bytes.toBytes("cf1"), Bytes.toBytes("clazz"));
            if (clazzBytes == null) {
                return; // skip rows without a cf1:clazz cell
            }
            context.write(new Text(Bytes.toString(clazzBytes)), new IntWritable(1));
        }
    }

    // To write to HBase, extend TableReducer<KEYIN, VALUEIN, KEYOUT>;
    // the output value is a Mutation (here a Put)
    public static class writeHBaseReducer
            extends TableReducer<Text, IntWritable, NullWritable> {
        @Override
        protected void reduce(Text key, Iterable<IntWritable> values,
                              Context context) throws IOException, InterruptedException {
            int sum = 0;
            for (IntWritable v : values) {
                sum += v.get();
            }
            // The class name is the rowkey. Text.getBytes() returns the backing array,
            // which can be longer than the data, so convert through toString()
            Put put = new Put(Bytes.toBytes(key.toString()));
            // Store the count as its decimal string in cf1:cnt
            put.addColumn(Bytes.toBytes("cf1"), Bytes.toBytes("cnt"),
                    Bytes.toBytes(String.valueOf(sum)));
            // The output value is the Put; TableOutputFormat ignores the key
            context.write(NullWritable.get(), put);
        }
    }

    public static void main(String[] args) throws Exception {
        Configuration conf = HBaseConfiguration.create();
        conf.set("hbase.zookeeper.quorum", "master:2181,node1:2181,node2:2181");

        Job job = Job.getInstance(conf);
        job.setJobName("Demo6MRReadAndWriteHBase");
        job.setJarByClass(MRHBase2.class);

        // Configure the map phase: read the "students" table
        Scan scan = new Scan();
        scan.setCaching(500);
        scan.setCacheBlocks(false);
        TableMapReduceUtil.initTableMapperJob(
                TableName.valueOf("students"),
                scan,
                readHBaseMapper.class,
                Text.class,
                IntWritable.class,
                job
        );

        // Configure the reduce phase: write the Puts into the "test" table
        TableMapReduceUtil.initTableReducerJob(
                "test",
                writeHBaseReducer.class,
                job
        );

        System.exit(job.waitForCompletion(true) ? 0 : 1);
    }
}
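The reducer stores each count as the decimal text of `sum`, not as a binary int. That is why `hbase shell` shows a readable value such as `3`. The alternative, HBase's `Bytes.toBytes(int)`, writes a 4-byte big-endian integer that the shell displays as escaped bytes such as `\x00\x00\x00\x03`. Whatever reads the `test` table must decode with the matching method. The sketch below uses only the JDK and substitutes `ByteBuffer.putInt` for `Bytes.toBytes(int)`, on the assumption that both produce the same big-endian layout. `CountEncoding` is a hypothetical class name.

```java
import java.nio.ByteBuffer;
import java.nio.charset.StandardCharsets;
import java.util.Arrays;

// Compares two common ways of encoding a count for an HBase cell,
// using only the JDK (no HBase dependency).
public class CountEncoding {

    // Decimal text: one byte per digit, readable in hbase shell
    static byte[] asString(int sum) {
        return String.valueOf(sum).getBytes(StandardCharsets.UTF_8);
    }

    // 4-byte big-endian int, the same layout as HBase's Bytes.toBytes(int)
    static byte[] asInt(int sum) {
        return ByteBuffer.allocate(4).putInt(sum).array();
    }

    // Readers must use the decoder that matches the writer
    static int fromString(byte[] cell) {
        return Integer.parseInt(new String(cell, StandardCharsets.UTF_8));
    }

    static int fromInt(byte[] cell) {
        return ByteBuffer.wrap(cell).getInt();
    }

    public static void main(String[] args) {
        int sum = 300;
        System.out.println(Arrays.toString(asString(sum))); // [51, 48, 48]
        System.out.println(Arrays.toString(asInt(sum)));    // [0, 0, 1, 44]
        System.out.println(fromString(asString(sum)) + " " + fromInt(asInt(sum))); // 300 300
    }
}
```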

/*
Package the project and submit it to Hadoop:
hadoop jar hbase-1.0-SNAPSHOT-jar-with-dependencies.jar day51.MRHBase2
*/
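Passing `key.getBytes()` straight to `new Put(...)` is a common trap. `Text.getBytes()` returns Text's internal buffer, and only the first `getLength()` bytes of it are valid. Hadoop reuses the same key object across `reduce()` calls, and `Text` keeps its larger buffer when a shorter value is set. As a result, a short class name can become a rowkey with leftover bytes from an earlier, longer one. The sketch below imitates that buffer reuse with a hypothetical `ReusableBuffer` class (not a Hadoop type) to show why you should use only the first `length` bytes. Converting through `key.toString()`, or calling `Arrays.copyOf(key.getBytes(), key.getLength())`, achieves that.

```java
import java.nio.charset.StandardCharsets;
import java.util.Arrays;

// Hypothetical stand-in for a reusable Writable such as Text: the buffer only
// grows, so after a shorter value is set the array still holds stale trailing bytes.
public class ReusableBuffer {
    private byte[] bytes = new byte[0];
    private int length = 0;

    void set(String s) {
        byte[] src = s.getBytes(StandardCharsets.UTF_8);
        if (src.length > bytes.length) {
            bytes = new byte[src.length]; // grow only when needed, never shrink
        }
        System.arraycopy(src, 0, bytes, 0, src.length);
        length = src.length;
    }

    byte[] getBytes() { return bytes; }  // whole backing array, like Text.getBytes()
    int getLength() { return length; }   // number of valid bytes, like Text.getLength()

    // Safe copy: only the valid prefix of the buffer
    byte[] copyValid() { return Arrays.copyOf(bytes, length); }

    public static void main(String[] args) {
        ReusableBuffer key = new ReusableBuffer();
        key.set("class10"); // key of the first reduce() call
        key.set("class2");  // same object reused for the next, shorter key

        String unsafe = new String(key.getBytes(), StandardCharsets.UTF_8);
        String safe = new String(key.copyValid(), StandardCharsets.UTF_8);
        System.out.println(unsafe); // class20 (stale '0' left over from "class10")
        System.out.println(safe);   // class2
    }
}
```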

Summary

1. Parameter hints like this one

In Settings, search for "Parameter Info" (under Keymap) and change its keyboard shortcut.
