Source: https://en.wikipedia.org/wiki/Backpropagation

Backpropagation


Backpropagation, short for "backward propagation of errors", is a common method of training artificial neural networks, used in conjunction with an optimization method such as gradient descent. The algorithm repeats a two-phase cycle: propagation and weight update. When an input vector is presented to the network, it is propagated forward through the network, layer by layer, until it reaches the output layer. The output of the network is then compared to the desired output using a loss function, and an error value is calculated for each of the neurons in the output layer. The error values are then propagated backwards, starting from the output, until each neuron has an associated error value that roughly represents its contribution to the original output.

Backpropagation uses these error values to calculate the gradient of the loss function with respect to the weights in the network. In the second phase, this gradient is fed to the optimization method, which in turn uses it to update the weights, in an attempt to minimize the loss function.

The importance of this process is that, as the network is trained, the neurons in the intermediate layers organize themselves so that different neurons learn to recognize different characteristics of the total input space. After training, suppose an arbitrary input pattern is presented that contains noise or is incomplete. Neurons in the hidden layer of the network will respond with an active output if the new input contains a pattern that resembles a feature the individual neurons learned to recognize during training.

Backpropagation requires a known, desired output for each input value in order to calculate the loss function gradient – it is therefore usually considered to be a supervised learning method; nonetheless, it is also used in some unsupervised networks such as autoencoders. It is a generalization of the delta rule to multi-layered feedforward networks, made possible by using the chain rule to iteratively compute gradients for each layer. Backpropagation requires that the activation function used by the artificial neurons (or "nodes") be differentiable.

Motivation

The goal of any supervised learning algorithm is to find a function that best maps a set of inputs to their correct outputs. An example would be a classification task, where the input is an image of an animal, and the correct output is the name of the animal.

The motivation for developing the backpropagation algorithm was to find a way to train a multi-layered neural network such that it can learn the appropriate internal representations to allow it to learn any arbitrary mapping of input to output.[1] The goal of backpropagation is to compute the partial derivative, or gradient, $\partial E / \partial w$ of a loss function $E$ with respect to any weight $w$ in the network.

Loss function

Sometimes referred to as the cost function or error function (not to be confused with the Gauss error function), the loss function is a function that maps values of one or more variables onto a real number intuitively representing some "cost" associated with the event. For backpropagation, the loss function calculates the difference between the network's output for a training example and the expected output, after the example has been propagated through the network.

Assumptions about the loss function

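For backpropagation to work, two assumptions are made about the form of the error function.[2] The first is that it can be written as an average $E = \frac{1}{n}\sum_x E_x$ over error functions $E_x$ for $n$ individual training examples $x$. The reason for this assumption is that the backpropagation algorithm calculates the gradient of the error function for a single training example, which then needs to be generalized to the overall error function. The second assumption is that it can be written as a function of the outputs of the neural network.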

Example loss function

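Let $y, y'$ be vectors in $\mathbb{R}^n$.

Select an error function $E(y, y')$ measuring the difference between two outputs. The standard choice is the square of the Euclidean distance between the vectors $y$ and $y'$, scaled by one half:

$$E(y, y') = \tfrac{1}{2}\lVert y - y' \rVert^2.$$

The factor of $\tfrac{1}{2}$ conveniently cancels the exponent when the error function is differentiated. Over $n$ training examples, the error is the average $E = \frac{1}{2n}\sum_x \lVert y(x) - y'(x) \rVert^2$. The partial derivative with respect to the outputs is $\frac{\partial E}{\partial y'} = y' - y$.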
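Below is a minimal Python sketch of this loss. It assumes outputs are plain lists of floats, and the function names are illustrative rather than taken from any library. It computes $E(y, y') = \tfrac{1}{2}\lVert y - y'\rVert^2$, its gradient $y' - y$, and the average over several examples. It then checks the gradient against a finite difference.

```python
def squared_error(y, y_pred):
    """E(y, y') = 1/2 * ||y - y'||^2 for two equal-length sequences of floats."""
    return 0.5 * sum((a - b) ** 2 for a, b in zip(y, y_pred))


def squared_error_grad(y, y_pred):
    """Partial derivatives dE/dy'_k = y'_k - y_k."""
    return [b - a for a, b in zip(y, y_pred)]


def average_error(pairs):
    """Average of per-example errors, E = (1/n) * sum_x E_x."""
    return sum(squared_error(y, y_pred) for y, y_pred in pairs) / len(pairs)


# Check the analytic gradient against a forward finite difference.
y, y_pred = [1.0, 0.0], [0.8, 0.3]
h = 1e-6
analytic = squared_error_grad(y, y_pred)
for k in range(len(y_pred)):
    bumped = list(y_pred)
    bumped[k] += h
    numeric = (squared_error(y, bumped) - squared_error(y, y_pred)) / h
    assert abs(numeric - analytic[k]) < 1e-4
```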

Algorithm

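Let $N$ be a neural network with $e$ connections, $m$ inputs and $n$ outputs.

Below, $x_1, x_2, \dots$ denote vectors in $\mathbb{R}^m$, $y_1, y_2, \dots$ vectors in $\mathbb{R}^n$, and $w_0, w_1, w_2, \dots$ vectors in $\mathbb{R}^e$. These are called inputs, outputs and weights, respectively. The neural network corresponds to a function $y = f_N(w, x)$ which, given a weight $w$, maps an input $x$ to an output $y$.

The backpropagation algorithm takes as input a sequence of training examples $(x_1, y_1), \dots, (x_p, y_p)$. It produces a sequence of weights $w_0, w_1, \dots, w_p$, starting from some initial weight $w_0$ that is usually chosen at random. These weights are computed in turn: $w_i$ is computed using only $(x_i, y_i, w_{i-1})$, for $i = 1, \dots, p$. The output of the algorithm is $w_p$, giving a new function $x \mapsto f_N(w_p, x)$. The computation is the same in each step, so only the case $i = 1$ is described.

Calculating $w_1$ from $(x_1, y_1, w_0)$ is done by considering a variable weight $w$ and applying gradient descent to the function $w \mapsto E(f_N(w, x_1), y_1)$ to find a local minimum, starting at $w = w_0$.

This makes $w_1$ the minimizing weight found by gradient descent.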
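The weight sequence can be sketched in Python as below. Several simplifications are assumed for illustration:

- The hypothetical `f_N` is a single linear unit rather than a full network.
- The loss is $\tfrac{1}{2}(f_N(w, x) - y)^2$.
- "Applying gradient descent" is approximated by a fixed number of steps with step size `eta`.

As in the text, each $w_i$ is computed only from $(x_i, y_i, w_{i-1})$.

```python
def f_N(w, x):
    """Hypothetical stand-in for the network: one linear unit, y = w . x."""
    return sum(wk * xk for wk, xk in zip(w, x))


def grad_E(w, x, y):
    """Gradient of E(w) = 1/2 (f_N(w, x) - y)^2: dE/dw_k = (f_N(w, x) - y) * x_k."""
    err = f_N(w, x) - y
    return [err * xk for xk in x]


def backprop_sequence(examples, w0, eta=0.1, steps=1):
    """Return [w_0, w_1, ..., w_p], where w_i depends only on (x_i, y_i, w_{i-1})."""
    weights = [list(w0)]
    for x, y in examples:
        w = list(weights[-1])            # start from w_{i-1}
        for _ in range(steps):           # a few gradient-descent steps on E
            g = grad_E(w, x, y)
            w = [wk - eta * gk for wk, gk in zip(w, g)]
        weights.append(w)
    return weights


ws = backprop_sequence([([1.0, 2.0], 1.0), ([0.5, -1.0], 0.0)], w0=[0.0, 0.0])
print(ws[-1])
```

Raising `steps` brings each $w_i$ closer to a minimizer of the per-example error, matching the description of $w_1$ as "the minimizing weight found by gradient descent".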

Algorithm in code

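To implement the algorithm above, explicit formulas are required for the gradient of the function $w \mapsto E(f_N(w, x), y)$, where $E(y, y') = \tfrac{1}{2}\lVert y - y' \rVert^2$ is the squared-error loss.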

The backpropagation learning algorithm can be divided into two phases: propagation and weight update.

Phase 1: Propagation

Each propagation involves the following steps:

- Forward propagation of a training pattern's input through the neural network in order to generate the network's output value(s).

- Backward propagation of the output activations through the neural network, using the training pattern's target, in order to generate the deltas of all output and hidden neurons. The deltas are error signals derived from the difference between the targeted and actual output values.

Phase 2: Weight update

For each weight, the following steps must be followed:

- The weight's output delta and input activation are multiplied to find the gradient of the weight.

- A ratio (percentage) of the weight's gradient is subtracted from the weight.

This ratio (percentage) influences the speed and quality of learning; it is called the learning rate. A larger ratio makes the neuron train faster, but if it is too large the updates can overshoot the minimum. A smaller ratio makes training slower but more precise. The sign of the gradient of a weight indicates whether the error varies directly with, or inversely to, the weight. Therefore, the weight must be updated in the opposite direction, "descending" the gradient.
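As a small illustration of Phase 2, the hypothetical helper below updates one weight. The gradient is the output delta times the input activation, and the learning rate sets what fraction of that gradient is subtracted.

```python
def update_weight(weight, output_delta, input_activation, learning_rate):
    """Phase 2 for a single weight (a sketch).

    The gradient of the error with respect to the weight is the product of the
    delta at the weight's output end and the activation at its input end; a
    fraction (the learning rate) of that gradient is then subtracted.
    """
    gradient = output_delta * input_activation
    return weight - learning_rate * gradient


# A positive gradient lowers the weight; a negative gradient raises it.
print(update_weight(0.5, 0.2, 1.0, 0.1))
print(update_weight(0.5, -0.2, 1.0, 0.1))
```

Because the gradient's sign is subtracted, the weight always moves against the gradient, whichever direction that is.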

Phases 1 and 2 are repeated until the performance of the network is satisfactory.

Code

The following is pseudocode for a stochastic gradient descent algorithm for training a three-layer network (only one hidden layer):

    initialize network weights (often small random values)
    do
        forEach training example named ex
            prediction = neural-net-output(network, ex)  // forward pass
            actual = teacher-output(ex)
            compute error (prediction - actual) at the output units
            compute Δw_h for all weights from hidden layer to output layer  // backward pass
            compute Δw_i for all weights from input layer to hidden layer  // backward pass continued
            update network weights  // input layer not modified by error estimate
    until all examples classified correctly or another stopping criterion satisfied
    return the network

The lines labeled "backward pass" can be implemented using the backpropagation algorithm, which calculates the gradient of the network's error with respect to its modifiable weights.[3] The term "backpropagation" is often used in a more general sense, for the entire procedure: both the calculation of the gradient and its use in stochastic gradient descent. However, the gradients computed by backpropagation can be used with any gradient-based optimizer, such as L-BFGS or truncated Newton.
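The pseudocode can be turned into a runnable sketch. The version below is one possible plain-Python implementation, not a reference one. It assumes:

- logistic activations;
- bias weights implemented as a constant input of 1;
- the squared-error loss;
- a fixed number of epochs in place of the stopping criterion;
- the XOR problem as a toy data set.

All function names are hypothetical.

```python
import math
import random


def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))


def init_network(n_in, n_hidden, n_out, rng):
    """Small random weights; the last weight in each row multiplies a constant bias input of 1."""
    w_ih = [[rng.uniform(-0.5, 0.5) for _ in range(n_in + 1)] for _ in range(n_hidden)]
    w_ho = [[rng.uniform(-0.5, 0.5) for _ in range(n_hidden + 1)] for _ in range(n_out)]
    return w_ih, w_ho


def forward(net, x):
    """Forward pass; returns bias-extended inputs, bias-extended hidden outputs, and outputs."""
    w_ih, w_ho = net
    xb = list(x) + [1.0]
    hidden = [sigmoid(sum(w * v for w, v in zip(row, xb))) for row in w_ih]
    hb = hidden + [1.0]
    out = [sigmoid(sum(w * v for w, v in zip(row, hb))) for row in w_ho]
    return xb, hb, out


def total_error(net, data):
    """Sum over examples of 1/2 * ||output - target||^2."""
    return sum(0.5 * sum((o - t) ** 2 for o, t in zip(forward(net, x)[2], target))
               for x, target in data)


def train(net, data, eta=0.5, epochs=2000, rng=None):
    """Stochastic gradient descent: one weight update per training example."""
    w_ih, w_ho = net
    rng = rng or random.Random(0)
    for _ in range(epochs):                      # fixed budget instead of "until ..."
        examples = list(data)
        rng.shuffle(examples)
        for x, target in examples:
            xb, hb, out = forward(net, x)        # forward pass
            # backward pass: output deltas (o - t) * o * (1 - o)
            d_out = [(o - t) * o * (1 - o) for o, t in zip(out, target)]
            # backward pass continued: hidden deltas (sum_k d_k * w_jk) * h_j * (1 - h_j)
            d_hid = [sum(d_out[k] * w_ho[k][j] for k in range(len(w_ho))) * hb[j] * (1 - hb[j])
                     for j in range(len(w_ih))]
            # update network weights: Delta w = -eta * delta * input (inputs themselves unchanged)
            for k, row in enumerate(w_ho):
                for j in range(len(row)):
                    row[j] -= eta * d_out[k] * hb[j]
            for j, row in enumerate(w_ih):
                for i in range(len(row)):
                    row[i] -= eta * d_hid[j] * xb[i]
    return net


xor = [([0.0, 0.0], [0.0]), ([0.0, 1.0], [1.0]), ([1.0, 0.0], [1.0]), ([1.0, 1.0], [0.0])]
net = init_network(2, 3, 1, random.Random(42))
before = total_error(net, xor)
train(net, xor)
after = total_error(net, xor)
print(f"total error before: {before:.4f}, after: {after:.4f}")
```

Whether XOR is solved exactly depends on the random initialization and the number of epochs. The sketch only shows how the forward pass, the backward pass and the weight update fit together. The hidden deltas are computed before any weight in that step is changed.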

Backpropagation networks are necessarily multilayer perceptrons, usually with one input layer, one or more hidden layers, and one output layer. For the hidden layers to serve any useful function, multilayer networks must use non-linear activation functions, because a multilayer network using only linear activation functions is equivalent to some single-layer, linear network. Commonly used non-linear activation functions include the rectifier, the logistic function, the softmax function and the Gaussian function.
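The collapse of purely linear layers can be checked numerically. In this sketch the weights are chosen arbitrarily and biases are omitted for brevity. Applying a linear layer $W_1$ and then $W_2$ gives the same result as the single linear layer $W = W_2 W_1$.

```python
def matmul(A, B):
    """Plain-Python matrix product of nested lists."""
    return [[sum(A[i][k] * B[k][j] for k in range(len(B))) for j in range(len(B[0]))]
            for i in range(len(A))]


def matvec(A, x):
    return [sum(a * v for a, v in zip(row, x)) for row in A]


W1 = [[1.0, 2.0], [0.0, -1.0], [3.0, 1.0]]   # 2 inputs -> 3 hidden units (linear)
W2 = [[1.0, -1.0, 0.5]]                      # 3 hidden units -> 1 output (linear)
x = [0.5, 2.0]

two_layer = matvec(W2, matvec(W1, x))
one_layer = matvec(matmul(W2, W1), x)        # single linear layer with W = W2 W1
print(two_layer, one_layer)
```

Inserting a non-linear function such as the logistic function between the two layers breaks this equivalence.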

The backpropagation algorithm for calculating a gradient has been rediscovered a number of times, and is a special case of a more general technique called automatic differentiation in the reverse accumulation mode.
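To illustrate reverse accumulation, here is a toy scalar reverse-mode differentiator. It is a sketch only: the `Var` class and the `backward` function are hypothetical names, and only addition, multiplication and the logistic function are supported. Each node records its parents together with the local derivatives. `backward` then sweeps the graph from the output towards the inputs, accumulating gradients with the chain rule. Backpropagation performs the same bookkeeping layer by layer.

```python
import math


class Var:
    """Scalar node in a computation graph for reverse-mode differentiation."""

    def __init__(self, value, parents=()):
        self.value = value
        self.parents = parents   # pairs (parent_node, local_derivative)
        self.grad = 0.0

    def __add__(self, other):
        other = other if isinstance(other, Var) else Var(other)
        return Var(self.value + other.value, ((self, 1.0), (other, 1.0)))

    def __mul__(self, other):
        other = other if isinstance(other, Var) else Var(other)
        return Var(self.value * other.value, ((self, other.value), (other, self.value)))

    def sigmoid(self):
        s = 1.0 / (1.0 + math.exp(-self.value))
        return Var(s, ((self, s * (1.0 - s)),))


def backward(output):
    """Topologically order the graph, then propagate d(output)/d(node) in reverse."""
    order, seen = [], set()

    def visit(node):
        if id(node) not in seen:
            seen.add(id(node))
            for parent, _ in node.parents:
                visit(parent)
            order.append(node)

    visit(output)
    output.grad = 1.0
    for node in reversed(order):
        for parent, local in node.parents:
            parent.grad += local * node.grad


# One logistic neuron: o = sigmoid(w1*x1 + w2*x2).
w1, w2 = Var(0.5), Var(-0.3)
o = (w1 * 1.0 + w2 * 2.0).sigmoid()
backward(o)
print(w1.grad, w2.grad)
```

Here the accumulated gradients equal $o(1 - o)\,x_1$ and $o(1 - o)\,x_2$ with $x_1 = 1$ and $x_2 = 2$. A single backward sweep yields the derivative with respect to every weight at once.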

It is also closely related to the Gauss–Newton algorithm, and is also part of continuing research in neural backpropagation.

Intuition

Learning as an optimization problem

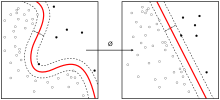

Before showing the mathematical derivation of the backpropagation algorithm, it helps to develop some intuition about the relationship between the actual output of a neuron and the correct output for a particular training case. Consider a simple neural network with two input units, one output unit and no hidden units. Each neuron uses a linear output[note 1] that is the weighted sum of its inputs.

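Initially, before training, the weights are set randomly. The neuron then learns from training examples, which in this case consist of a set of tuples $(x_1, x_2, t)$. Here $x_1$ and $x_2$ are the inputs to the network, and $t$ is the correct output (the output the network should eventually produce given those inputs). Given $x_1$ and $x_2$, the initial network computes an output $y$ that likely differs from $t$, since the weights are random. A common method for measuring the discrepancy between the expected output $t$ and the actual output $y$ is the squared error measure:

$$E = (t - y)^2,$$

where $E$ is the discrepancy or error.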

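As an example, consider the network on a single training case $(x_1, x_2, t)$. If the actual output $y$ is plotted on the horizontal axis against the error $E$ on the vertical axis, the result is a parabola. The minimum of the parabola corresponds to the output $y$ that minimizes the error $E$. For a single training case, the minimum also touches the horizontal axis at $y = t$. This means the error will be zero and the network can produce an output $y$ that exactly matches the expected output $t$. Therefore, the problem of mapping inputs to outputs can be reduced to an optimization problem of finding a function that will produce the minimal error.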

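However, the output of a neuron depends on the weighted sum of all its inputs:

$$y = x_1 w_1 + x_2 w_2,$$

where $w_1$ and $w_2$ are the weights on the connections from the input units to the output unit. Therefore, the error also depends on the incoming weights to the neuron, which are ultimately what needs to be changed in the network to enable learning. If each weight is plotted on a separate horizontal axis and the error on the vertical axis, the result is a paraboloid-shaped error surface. For a neuron with $k$ weights, the same plot would require a paraboloid in $k + 1$ dimensions.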

The backpropagation algorithm aims to find the set of weights that minimizes the error. There are several methods for finding the minimum of a parabola, or of any function in any number of dimensions. One way is to solve a system of equations analytically. However, this relies on the network being a linear system, and the goal is to also be able to train multi-layer, non-linear networks (a multi-layered linear network is equivalent to a single-layer network). The method used in backpropagation is gradient descent.
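Below is a minimal gradient-descent sketch for the linear neuron. For illustration it assumes the training case $(x_1, x_2, t) = (1, 1, 0)$, arbitrary starting weights, and a step size of 0.1. With $y = x_1 w_1 + x_2 w_2$, the gradient of $E = (t - y)^2$ is $\partial E / \partial w_k = -2(t - y)\,x_k$.

```python
def linear_output(w, x):
    return w[0] * x[0] + w[1] * x[1]              # y = x1*w1 + x2*w2


def error(w, x, t):
    return (t - linear_output(w, x)) ** 2         # E = (t - y)^2


def gradient(w, x, t):
    y = linear_output(w, x)
    return [-2.0 * (t - y) * xk for xk in x]      # dE/dw_k = -2 (t - y) x_k


x, t = (1.0, 1.0), 0.0          # assumed training case (1, 1, 0)
w = [0.8, -0.2]                 # assumed starting weights
eta = 0.1                       # assumed step size
for _ in range(20):
    g = gradient(w, x, t)
    w = [wk - eta * gk for wk, gk in zip(w, g)]
print(w, error(w, x, t))
```

With a single training case, every weight pair with $w_1 = -w_2$ gives zero error. Gradient descent stops at one of these pairs, here close to $(0.5, -0.5)$.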

An analogy for understanding gradient descent

The basic intuition behind gradient descent can be illustrated by a hypothetical scenario. A person is stuck in the mountains and is trying to get down (i.e. trying to find the minimum). There is heavy fog, so visibility is extremely low and the path down the mountain is not visible; he must use local information to find the minimum. He can use the method of gradient descent: he looks at the steepness of the hill at his current position, then proceeds in the direction of steepest descent (i.e. downhill). If he were trying to find the top of the mountain (i.e. the maximum), he would proceed in the direction of steepest ascent (i.e. uphill). Using this method, he would eventually find his way down the mountain. However, assume also that the steepness of the hill is not obvious from simple observation. Instead, measuring it requires a sophisticated instrument, which the person happens to have with him. Each measurement with the instrument takes quite some time, so he should use it as little as possible if he wants to get down the mountain before sunset. The difficulty then is choosing how often to measure the steepness of the hill so as not to go off track.

In this analogy, the person represents the backpropagation algorithm, and the path taken down the mountain represents the sequence of parameter settings that the algorithm will explore. The steepness of the hill represents the slope of the error surface at that point. The instrument used to measure steepness is differentiation (the slope of the error surface can be calculated by taking the derivative of the squared error function at that point). The direction he chooses to travel in is opposite to the gradient of the error surface at that point, i.e. the direction of steepest descent. The amount of time he travels before taking another measurement is the learning rate of the algorithm. See the Limitations section for a discussion of the limitations of this type of "hill climbing" algorithm.

Derivation

Since backpropagation uses the gradient descent method, one needs to calculate the derivative of the squared error function with respect to the weights of the network. Assuming one output neuron,[note 2] the squared error function is:

$$E = \tfrac{1}{2}(t - y)^2,$$

where

- $E$ is the squared error,

- $t$ is the target output for a training sample, and

- $y$ is the actual output of the output neuron.

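The factor of $\tfrac{1}{2}$ is included to cancel the exponent when differentiating. Later, the expression will be multiplied by an arbitrary learning rate, so it does not matter if a constant coefficient is introduced now.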

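For each neuron $j$, its output $o_j$ is defined as

$$o_j = \varphi(\mathrm{net}_j) = \varphi\left(\sum_{k=1}^{n} w_{kj}\, o_k\right).$$

The input $\mathrm{net}_j$ to a neuron is the weighted sum of the outputs $o_k$ of the previous neurons. If the neuron is in the first layer after the input layer, the $o_k$ of the input layer are simply the inputs $x_k$ to the network. The number of input units to the neuron is $n$. The variable $w_{ij}$ denotes the weight between neurons $i$ and $j$.

The activation function $\varphi$ is in general non-linear and differentiable. A commonly used activation function is the logistic function:

$$\varphi(z) = \frac{1}{1 + e^{-z}},$$

which has a convenient derivative:

$$\frac{d\varphi(z)}{dz} = \varphi(z)\bigl(1 - \varphi(z)\bigr).$$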
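The logistic function, its derivative, and a neuron's output $o_j = \varphi(\mathrm{net}_j)$ can be sketched as follows (the function names are illustrative). The loop checks $\varphi(z)(1 - \varphi(z))$ against a central finite difference.

```python
import math


def logistic(z):
    return 1.0 / (1.0 + math.exp(-z))


def logistic_prime(z):
    s = logistic(z)
    return s * (1.0 - s)          # dphi/dz = phi(z) * (1 - phi(z))


def neuron_output(weights, inputs):
    """o_j = phi(net_j) with net_j = sum_k w_kj * o_k."""
    net = sum(w * o for w, o in zip(weights, inputs))
    return logistic(net)


for z in (-2.0, 0.0, 1.5):
    h = 1e-5
    numeric = (logistic(z + h) - logistic(z - h)) / (2 * h)
    assert abs(numeric - logistic_prime(z)) < 1e-8
```

The derivative reuses the already-computed output $\varphi(z)$, so no extra exponential is needed during the backward pass.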

Finding the derivative of the error

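The partial derivative of the error with respect to a weight $w_{ij}$ is calculated by applying the chain rule twice:

$$\frac{\partial E}{\partial w_{ij}} = \frac{\partial E}{\partial o_j}\,\frac{\partial o_j}{\partial \mathrm{net}_j}\,\frac{\partial \mathrm{net}_j}{\partial w_{ij}}.$$

In the last factor of the right-hand side of the above, only one term in the sum $\mathrm{net}_j$ depends on $w_{ij}$, so that

$$\frac{\partial \mathrm{net}_j}{\partial w_{ij}} = \frac{\partial}{\partial w_{ij}}\left(\sum_{k=1}^{n} w_{kj}\, o_k\right) = o_i.$$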

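If the neuron is in the first layer after the input layer, $o_i$ is just $x_i$.

The derivative of the output of neuron $j$ with respect to its input is simply the partial derivative of the activation function (assuming here that the logistic function is used):

$$\frac{\partial o_j}{\partial \mathrm{net}_j} = \frac{\partial}{\partial \mathrm{net}_j}\varphi(\mathrm{net}_j) = \varphi(\mathrm{net}_j)\bigl(1 - \varphi(\mathrm{net}_j)\bigr) = o_j(1 - o_j).$$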

This is the reason why backpropagation requires the activation function to be differentiable.

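The first factor is straightforward to evaluate if the neuron is in the output layer, because then $o_j = y$ and

$$\frac{\partial E}{\partial o_j} = \frac{\partial E}{\partial y} = \frac{\partial}{\partial y}\,\tfrac{1}{2}(t - y)^2 = y - t.$$

However, if $j$ is in an arbitrary inner layer of the network, finding the derivative of $E$ with respect to $o_j$ is less obvious.

Consider $E$ as a function of the inputs of all neurons $L = \{u, v, \dots, z\}$ that receive input from neuron $j$:

$$\frac{\partial E(o_j)}{\partial o_j} = \frac{\partial E(\mathrm{net}_u, \mathrm{net}_v, \dots, \mathrm{net}_z)}{\partial o_j}.$$

Taking the total derivative with respect to $o_j$ gives a recursive expression for the derivative:

$$\frac{\partial E}{\partial o_j} = \sum_{\ell \in L}\left(\frac{\partial E}{\partial \mathrm{net}_\ell}\,\frac{\partial \mathrm{net}_\ell}{\partial o_j}\right) = \sum_{\ell \in L}\left(\frac{\partial E}{\partial o_\ell}\,\frac{\partial o_\ell}{\partial \mathrm{net}_\ell}\,w_{j\ell}\right).$$

Therefore, the derivative with respect to $o_j$ can be calculated if all the derivatives with respect to the outputs $o_\ell$ of the next layer (the one closer to the output neuron) are known.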

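Putting it all together:

$$\frac{\partial E}{\partial w_{ij}} = \delta_j\, o_i$$

with

$$\delta_j = \frac{\partial E}{\partial o_j}\,\frac{\partial o_j}{\partial \mathrm{net}_j} = \begin{cases} (o_j - t_j)\, o_j (1 - o_j) & \text{if } j \text{ is an output neuron,}\\ \left(\sum_{\ell \in L} \delta_\ell\, w_{j\ell}\right) o_j (1 - o_j) & \text{if } j \text{ is an inner neuron.} \end{cases}$$

To update the weight $w_{ij}$ using gradient descent, one must choose a learning rate $\eta > 0$. The change in weight, which is added to the old weight, is equal to the product of the learning rate and the gradient, multiplied by $-1$:

$$\Delta w_{ij} = -\eta \frac{\partial E}{\partial w_{ij}} = -\eta\, \delta_j\, o_i.$$

The factor of $-1$ is required in order to move towards a minimum, not a maximum, of the error function.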
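The two cases of $\delta_j$ can be checked numerically on a tiny network. The sketch assumes two inputs, two logistic hidden neurons and one logistic output neuron, without biases. It also assumes the loss $E = \tfrac{1}{2}(t - y)^2$ and arbitrary example weights. The analytic gradients $\delta_j o_i$ are compared with central finite differences. All names are hypothetical.

```python
import math


def phi(z):
    """Logistic activation."""
    return 1.0 / (1.0 + math.exp(-z))


def forward(W1, W2, x):
    """W1[i][j]: weight from input i to hidden neuron j; W2[j]: hidden j -> output."""
    hidden = [phi(sum(x[i] * W1[i][j] for i in range(len(x)))) for j in range(len(W2))]
    out = phi(sum(hidden[j] * W2[j] for j in range(len(W2))))
    return hidden, out


def error(W1, W2, x, t):
    """E = 1/2 (t - y)^2 for the single output neuron."""
    return 0.5 * (t - forward(W1, W2, x)[1]) ** 2


def gradients(W1, W2, x, t):
    hidden, o = forward(W1, W2, x)
    delta_out = (o - t) * o * (1 - o)                        # output neuron
    delta_hid = [delta_out * W2[j] * hidden[j] * (1 - hidden[j])
                 for j in range(len(W2))]                    # inner neurons
    dW2 = [delta_out * hidden[j] for j in range(len(W2))]    # dE/dw_ij = delta_j * o_i
    dW1 = [[delta_hid[j] * x[i] for j in range(len(W2))] for i in range(len(x))]
    return dW1, dW2


def numeric_grad_W1(W1, W2, x, t, i, j, h=1e-5):
    """Central finite difference of E with respect to W1[i][j]."""
    Wp = [row[:] for row in W1]
    Wm = [row[:] for row in W1]
    Wp[i][j] += h
    Wm[i][j] -= h
    return (error(Wp, W2, x, t) - error(Wm, W2, x, t)) / (2 * h)


W1 = [[0.1, -0.4], [0.7, 0.2]]
W2 = [0.5, -0.6]
x, t = [1.0, 0.5], 1.0
dW1, dW2 = gradients(W1, W2, x, t)
print(dW1[0][1], numeric_grad_W1(W1, W2, x, t, 0, 1))
```

A finite-difference check like this is a common way to catch mistakes in a hand-written backward pass.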

For a single-layer network, this expression becomes the delta rule. A worked example of how backpropagation works is given in The Back Propagation Algorithm, page 20.

Extension

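The choice of learning rate $\eta$ is important. A high value can cause too strong a change, so that the minimum is missed, while a learning rate that is too low slows training unnecessarily.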
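The effect of the learning rate can be seen on the simplest possible error function. This sketch assumes a one-dimensional quadratic $E(w) = \tfrac{a}{2} w^2$ with $a = 4$. Each gradient step multiplies $w$ by $1 - \eta a$, which leads to three regimes:

- a small $\eta$ converges slowly;
- a moderate $\eta$ converges quickly;
- any $\eta > 2/a$ diverges.

```python
def descend_quadratic(eta, a=4.0, w0=1.0, steps=25):
    """Gradient descent on E(w) = (a/2) * w**2 (gradient a*w); returns the final |w|.

    Each step multiplies w by (1 - eta*a), so the iteration converges only
    when 0 < eta < 2/a and diverges for larger learning rates.
    """
    w = w0
    for _ in range(steps):
        w -= eta * a * w
    return abs(w)


for eta in (0.01, 0.1, 0.6):
    print(f"eta = {eta}: |w| after 25 steps = {descend_quadratic(eta):.3g}")
```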

Various optimizations of backpropagation, such as Quickprop, are primarily aimed at speeding up the error minimization; other improvements mainly try to increase reliability.

Backpropagation with adaptive learning rate

In order to avoid oscillation inside the network, such as alternating connection weights, and to improve the rate of convergence, there are refinements of this algorithm that use an adaptive learning rate.[4]
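One example of an adaptive learning rate is a simple heuristic sometimes called the "bold driver", sketched below. The rate grows slightly after every step that lowers the error. A step that raises the error is rejected, and the rate is halved. This is not necessarily the method of reference [4]. The growth and shrink factors and the test function are assumptions.

```python
def bold_driver(loss, grad, w, eta=0.01, up=1.1, down=0.5, steps=100):
    """Gradient descent with a simple adaptive learning rate (a sketch)."""
    current = loss(w)
    for _ in range(steps):
        g = grad(w)
        candidate = [wk - eta * gk for wk, gk in zip(w, g)]
        new = loss(candidate)
        if new < current:      # error fell: accept the step and grow the rate
            w, current, eta = candidate, new, eta * up
        else:                  # error rose: reject the step and shrink the rate
            eta *= down
    return w, eta


def ravine_loss(w):
    """Ill-conditioned quadratic E(w) = 0.5 * (w0**2 + 25 * w1**2)."""
    return 0.5 * (w[0] ** 2 + 25.0 * w[1] ** 2)


def ravine_grad(w):
    return [w[0], 25.0 * w[1]]


w, eta = bold_driver(ravine_loss, ravine_grad, [1.0, 1.0])
print(w, ravine_loss(w), eta)
```

Rejecting any step that raises the error keeps the error non-increasing, which damps the oscillations that a fixed, too-large rate would cause.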

Backpropagation with inertia

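A variable inertia term (momentum) $\alpha$ weights the gradient against the last change, so that the weight adjustment also depends on the previous change. If the momentum $\alpha$ is equal to 0, the change depends solely on the gradient, while a value of 1 makes it depend only on the last change.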

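Similar to a ball rolling down a mountain, whose current speed is determined not only by the current slope of the mountain but also by its own inertia, inertia can be added to backpropagation:

$$\Delta w_{ij}(t + 1) = -(1 - \alpha)\,\eta\,\delta_j\, o_i + \alpha\, \Delta w_{ij}(t),$$

where

- $\Delta w_{ij}(t + 1)$ is the change in weight $w_{ij}$ in the connection of neuron $i$ to neuron $j$ at time $t + 1$,

- $\eta > 0$ is a learning rate,

- $\delta_j$ is the error signal of neuron $j$,

- $o_i$ is the output of neuron $i$, which is also an input of neuron $j$, and

- $\alpha \in [0, 1]$ is the influence of the inertial term $\Delta w_{ij}(t)$, the weight change at the previous point in time.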

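The current weight change $\Delta w_{ij}(t + 1)$ therefore depends both on the current gradient of the error function (the slope of the mountain, first summand) and on the weight change from the previous point in time (the inertia, second summand).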

With inertia, plain backpropagation's tendency to get stuck in steep ravines and on flat plateaus is reduced. For example, the gradient of the error function becomes very small on flat plateaus, so plain gradient descent would immediately "decelerate" there. The inertia term delays this deceleration, so that a flat plateau can be crossed more quickly.
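The inertia update $\Delta w_{ij}(t + 1) = -(1 - \alpha)\,\eta\,\delta_j o_i + \alpha\,\Delta w_{ij}(t)$ can be sketched for a general gradient, whose entries play the role of $\delta_j o_i$. The test function (a narrow quadratic ravine), $\eta = 0.1$, $\alpha = 0.9$ and the step count are assumptions for illustration.

```python
def momentum_descent(grad, w, eta=0.1, alpha=0.9, steps=300):
    """Gradient descent with the inertia term
    Delta_w(t+1) = -(1 - alpha) * eta * gradient + alpha * Delta_w(t)."""
    delta = [0.0] * len(w)
    for _ in range(steps):
        g = grad(w)
        delta = [-(1 - alpha) * eta * gk + alpha * dk for gk, dk in zip(g, delta)]
        w = [wk + dk for wk, dk in zip(w, delta)]
    return w


def ravine_grad(w):
    """Gradient of E(w) = 0.5 * (w0**2 + 50 * w1**2)."""
    return [w[0], 50.0 * w[1]]


w = momentum_descent(ravine_grad, [1.0, 1.0])
print(w)
```

Setting `alpha=0.0` recovers plain gradient descent with $\Delta w = -\eta \cdot \text{gradient}$.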

Modes of learning

There are two modes of learning to choose from: stochastic and batch. In stochastic learning, each propagation is followed immediately by a weight update. In batch learning, many propagations occur before the weights are updated, accumulating errors over the samples within a batch. Stochastic learning introduces "noise" into the gradient descent process, since it uses the local gradient calculated from one data point; this reduces the chance of the network getting stuck in a local minimum. Batch learning, in contrast, typically yields a faster, more stable descent to a local minimum, since each update is performed in the direction of the average error of the batch samples. In modern applications a common compromise is to use "mini-batches", i.e. batch learning with a small batch size and stochastically selected samples.
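A mini-batch loop can be sketched as follows, assuming a per-example gradient function is available. The batch gradient is the average of the per-example gradients. Setting `batch_size=1` corresponds to stochastic learning, and `batch_size=len(data)` corresponds to batch learning. The linear model, the noise-free data and all hyperparameters are assumptions for illustration.

```python
import random


def minibatch_sgd(data, grad_example, w, eta=0.05, batch_size=4, epochs=50, seed=0):
    """Average per-example gradients over small, randomly drawn batches."""
    rng = random.Random(seed)
    data = list(data)
    for _ in range(epochs):
        rng.shuffle(data)                              # stochastically selected samples
        for start in range(0, len(data), batch_size):
            batch = data[start:start + batch_size]
            g = [0.0] * len(w)
            for x, y in batch:
                for k, gk in enumerate(grad_example(w, x, y)):
                    g[k] += gk / len(batch)            # average gradient of the batch
            w = [wk - eta * gk for wk, gk in zip(w, g)]
    return w


def grad_example(w, x, y):
    """Gradient of 1/2 (w . x - y)^2 for one example."""
    err = sum(wk * xk for wk, xk in zip(w, x)) - y
    return [err * xk for xk in x]


xs = [i / 10 for i in range(-10, 11)]
data = [([x, 1.0], 2.0 * x + 1.0) for x in xs]         # features [x, 1], target 2x + 1
w = minibatch_sgd(data, grad_example, [0.0, 0.0], eta=0.1, epochs=200)
print(w)
```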

Training data collection

Online learning is used for dynamic environments that provide a continuous stream of new training data patterns. Offline learning makes use of a training set of static patterns.

Limitations

- Gradient descent with backpropagation is not guaranteed to find the global minimum of the error function, but only a local minimum; also, it has trouble crossing plateaux in the error function landscape. This issue, caused by the non-convexity of error functions in neural networks, was long thought to be a major drawback, but in a 2015 review article, Yann LeCun et al. argue that in many practical problems, it is not.[5]

- Backpropagation learning does not require normalization of input vectors; however, normalization could improve performance.[6]
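A common form of input normalization is standardization, sketched below under the assumption that inputs are lists of floats. Each feature is shifted to zero mean and scaled to unit standard deviation. The means and standard deviations are also returned, so that new inputs can be transformed the same way.

```python
import statistics


def standardize(rows):
    """Scale every input feature to zero mean and unit (population) standard deviation."""
    columns = list(zip(*rows))
    means = [statistics.mean(col) for col in columns]
    stds = [statistics.pstdev(col) or 1.0 for col in columns]  # constant feature: keep scale
    scaled = [[(v - m) / s for v, m, s in zip(row, means, stds)] for row in rows]
    return scaled, means, stds


rows = [[1.0, 200.0], [2.0, 400.0], [3.0, 600.0]]
scaled, means, stds = standardize(rows)
print(scaled)
```

After scaling, both features lie on the same scale even though their raw ranges differ by a factor of 200.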

History

According to various sources,[7][8][9][10] basics of continuous backpropagation were derived in the context of control theory by Henry J. Kelley[11] in 1960 and by Arthur E. Bryson in 1961,[12] using principles of dynamic programming. In 1962, Stuart Dreyfus published a simpler derivation based only on the chain rule.[13] Vapnik cites reference[14] in his book on Support Vector Machines. Arthur E. Bryson and Yu-Chi Ho described it as a multi-stage dynamic system optimization method in 1969.[15][16]

In 1970, Seppo Linnainmaa finally published the general method for automatic differentiation (AD) of discrete connected networks of nested differentiable functions.[17][18] This corresponds to the modern version of backpropagation which is efficient even when the networks are sparse.[9][10][19][20]

In 1973, Stuart Dreyfus used backpropagation to adapt parameters of controllers in proportion to error gradients.[21] In 1974, Paul Werbos mentioned the possibility of applying this principle to artificial neural networks,[22] and in 1982, he applied Linnainmaa's AD method to neural networks in the way that is widely used today.[10][23]

In 1986, David E. Rumelhart, Geoffrey E. Hinton and Ronald J. Williams showed through computer experiments that this method can generate useful internal representations of incoming data in hidden layers of neural networks.[1] [24] In 1993, Eric A. Wan was the first[9] to win an international pattern recognition contest through backpropagation.[25]

During the 2000s it fell out of favour, but it returned in the 2010s, now able to train much larger networks using modern computing power such as GPUs. In the context of this new hardware it is sometimes referred to as deep learning, though this is often seen[by whom?] as marketing hype. For example, in 2014, backpropagation was used to train a deep neural network for state-of-the-art speech recognition.[26]

Notes

- ^ One may notice that multi-layer neural networks use non-linear activation functions, so an example with linear neurons may seem obscure. However, even though the error surface of a multi-layer network is much more complicated, it can locally be approximated by a paraboloid. Therefore, linear neurons are used for simplicity and easier understanding.

- ^ There can be multiple output neurons, in which case the error is the squared norm of the difference vector.
