DolphinScheduler Cluster Mode Deployment

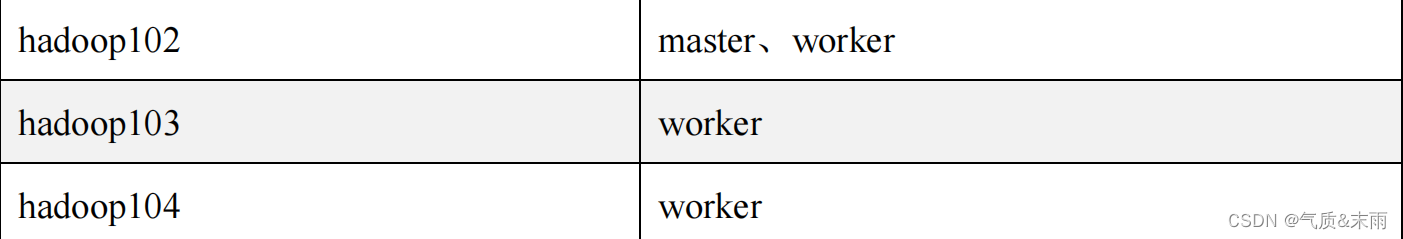

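I. Cluster Planning

In cluster mode, multiple Masters and multiple Workers can be configured. A typical setup uses 2 to 3 Masters and several Workers. Because cluster resources are limited here, this guide configures one Master (hadoop102) and three Workers (hadoop102, hadoop103, hadoop104).

1. Prerequisites

(1) JDK (1.8+) must be installed on all three nodes, with the relevant environment variables configured.

(2) A database must be deployed; MySQL (5.7+) or PostgreSQL (8.2.15+) is supported.

(3) ZooKeeper (3.4.6+) must be deployed.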

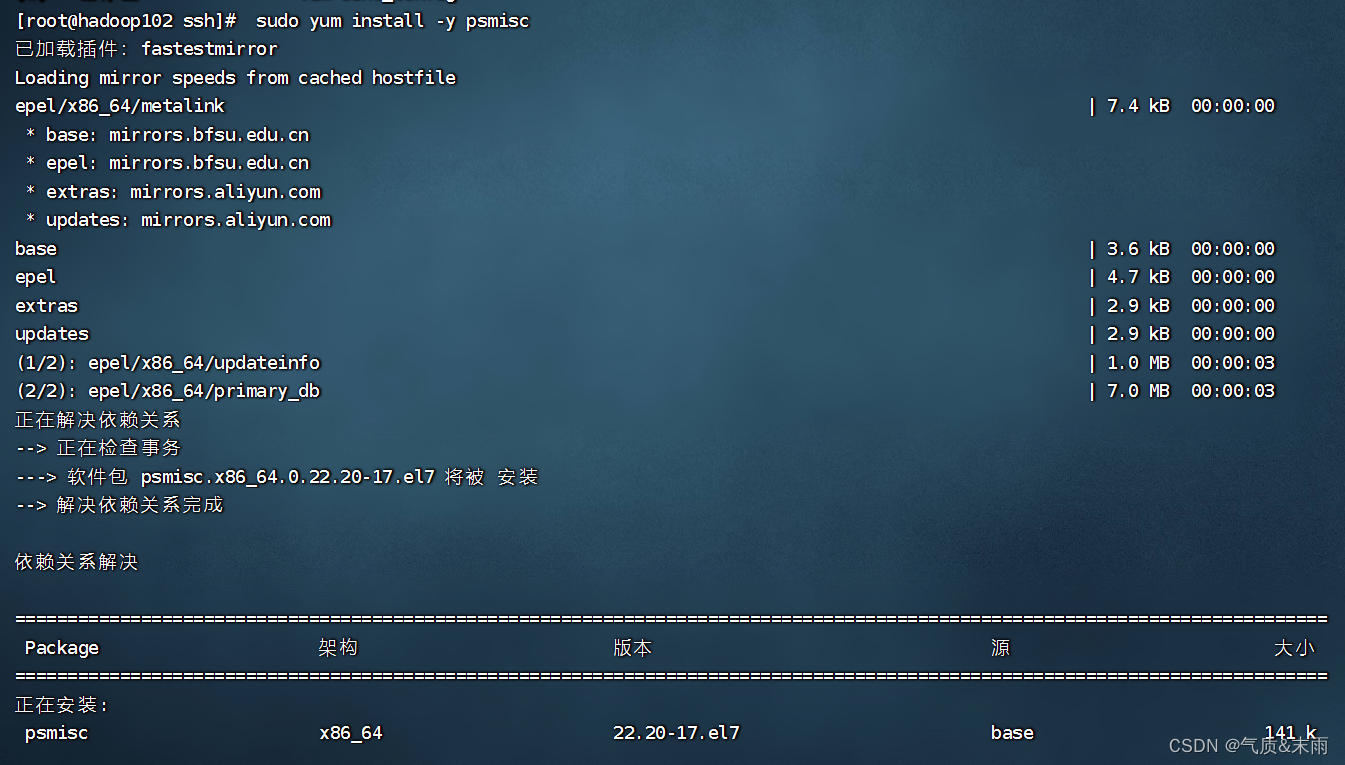

(4) The process-tree analysis tool psmisc must be installed on all three nodes.

Run on all three nodes: sudo yum install -y psmisc
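Rather than logging in to each node, the same install can be driven from one machine. The sketch below assumes passwordless SSH and sudo rights for the current user on every node (neither is stated in this article), and the function name `install_psmisc_everywhere` is made up for illustration. It only prints the commands unless `DRY_RUN=0` is set.

```shell
# Hosts taken from the cluster plan in this article.
HOSTS="hadoop102 hadoop103 hadoop104"

# Print (DRY_RUN=1, the default) or run the psmisc install on every host.
# Assumption: passwordless SSH and sudo for the current user on each node.
install_psmisc_everywhere() {
  for host in $HOSTS; do
    if [ "${DRY_RUN:-1}" = "1" ]; then
      echo "ssh $host sudo yum install -y psmisc"
    else
      ssh "$host" "sudo yum install -y psmisc"
    fi
  done
}

# Preview the commands; use `DRY_RUN=0 install_psmisc_everywhere` to execute them.
install_psmisc_everywhere
```

Defaulting to a dry run makes it easy to confirm the host list before anything is installed.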

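2. Extract the DolphinScheduler installation package

(1) Upload the DolphinScheduler installation package to the /opt/software directory on node hadoop102.

(2) Extract the package into the current directory.

Run: tar -zxvf apache-dolphinscheduler-2.0.5-bin.tar.gz -C /opt/software/

3. Create the metadata database and user

DolphinScheduler stores its metadata in a relational database, so a dedicated database and user must be created.

(1) Create the database

Run: CREATE DATABASE dolphinscheduler DEFAULT CHARACTER SET utf8 DEFAULT COLLATE utf8_general_ci;

(2) Create the user

Run: CREATE USER 'dolphinscheduler'@'%' IDENTIFIED BY 'dolphinscheduler';

(3) Grant the user the required privileges

Run: GRANT ALL PRIVILEGES ON dolphinscheduler.* TO 'dolphinscheduler'@'%';

Run: FLUSH PRIVILEGES;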
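The user name has to be identical in the CREATE USER and GRANT statements and in the datasource settings of the deployment config, so generating all statements from one variable avoids a mismatch. This is only a sketch: `ds_metadata_sql` is a hypothetical helper, and piping into `mysql -uroot -p` assumes the MySQL client is installed and that root may create users.

```shell
# Hypothetical helper: emit the metadata-database SQL from this section
# for a given user name ($1) and password ($2).
ds_metadata_sql() {
  user="$1"
  password="$2"
  cat <<EOF
CREATE DATABASE dolphinscheduler DEFAULT CHARACTER SET utf8 DEFAULT COLLATE utf8_general_ci;
CREATE USER '${user}'@'%' IDENTIFIED BY '${password}';
GRANT ALL PRIVILEGES ON dolphinscheduler.* TO '${user}'@'%';
FLUSH PRIVILEGES;
EOF
}

# Preview the statements. To apply them, pipe into the MySQL client instead:
#   ds_metadata_sql dolphinscheduler dolphinscheduler | mysql -uroot -p
ds_metadata_sql dolphinscheduler dolphinscheduler
```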

II. Configure the One-Click Deployment Script

Run: cd apache-dolphinscheduler-2.0.5-bin

Run: vim conf/config/install_config.conf, then set the file contents as follows.

# Licensed to the Apache Software Foundation (ASF) under one or more

# contributor license agreements. See the NOTICE file distributed with

# this work for additional information regarding copyright ownership.

# The ASF licenses this file to You under the Apache License, Version 2.0

# (the "License"); you may not use this file except in compliance with

# the License. You may obtain a copy of the License at

#

# http://www.apache.org/licenses/LICENSE-2.0

#

# Unless required by applicable law or agreed to in writing, software

# distributed under the License is distributed on an "AS IS" BASIS,

# WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.

# See the License for the specific language governing permissions and

# limitations under the License.

#

# ---------------------------------------------------------

# INSTALL MACHINE

# ---------------------------------------------------------

# A comma separated list of machine hostname or IP would be installed DolphinScheduler,

# including master, worker, api, alert. If you want to deploy in pseudo-distributed

# mode, just write a pseudo-distributed hostname

# Example for hostnames: ips="ds1,ds2,ds3,ds4,ds5", Example for IPs: ips="192.168.8.1,192.168.8.2,192.168.8.3,192.168.8.4,192.168.8.5"

ips="hadoop102,hadoop103,hadoop104"

# Port of SSH protocol, default value is 22. For now we only support same port in all `ips` machine

# modify it if you use different ssh port

sshPort="22"

# A comma separated list of machine hostname or IP would be installed Master server, it

# must be a subset of configuration `ips`.

# Example for hostnames: masters="ds1,ds2", Example for IPs: masters="192.168.8.1,192.168.8.2"

masters="hadoop102"

# A comma separated list of machine <hostname>:<workerGroup> or <IP>:<workerGroup>.All hostname or IP must be a

# subset of configuration `ips`, And workerGroup have default value as `default`, but we recommend you declare behind the hosts

# Example for hostnames: workers="ds1:default,ds2:default,ds3:default", Example for IPs: workers="192.168.8.1:default,192.168.8.2:default,192.168.8.3:default"

workers="hadoop102:default,hadoop103:default,hadoop104:default"

# A comma separated list of machine hostname or IP would be installed Alert server, it

# must be a subset of configuration `ips`.

# Example for hostname: alertServer="ds3", Example for IP: alertServer="192.168.8.3"

alertServer="hadoop102"

# A comma separated list of machine hostname or IP would be installed API server, it

# must be a subset of configuration `ips`.

# Example for hostname: apiServers="ds1", Example for IP: apiServers="192.168.8.1"

apiServers="hadoop102"

# A comma separated list of machine hostname or IP would be installed Python gateway server, it

# must be a subset of configuration `ips`.

# Example for hostname: pythonGatewayServers="ds1", Example for IP: pythonGatewayServers="192.168.8.1"

pythonGatewayServers="hadoop102"

# The directory to install DolphinScheduler for all machine we config above. It will automatically be created by `install.sh` script if not exists.

# Do not set this configuration same as the current path (pwd)

installPath="/opt/dolphinscheduler"

# The user to deploy DolphinScheduler for all machine we config above. For now user must create by yourself before running `install.sh`

# script. The user needs to have sudo privileges and permissions to operate hdfs. If hdfs is enabled then the root directory needs

# to be created by this user

deployUser="root"

# The directory to store local data for all machine we config above. Make sure user `deployUser` have permissions to read and write this directory.

dataBasedirPath="/tmp/dolphinscheduler"

# ---------------------------------------------------------

# DolphinScheduler ENV

# ---------------------------------------------------------

# JAVA_HOME, we recommend use same JAVA_HOME in all machine you going to install DolphinScheduler

# and this configuration only support one parameter so far.

javaHome="/opt/jdk1.8"

# DolphinScheduler API service port, also this is your DolphinScheduler UI component's URL port, default value is 12345

apiServerPort="12345"

# ---------------------------------------------------------

# Database

# NOTICE: If database value has special characters, such as `.*[]^${}\+?|()@#&`, Please add prefix `\` for escaping.

# ---------------------------------------------------------

# The type for the metadata database

# Supported values: `postgresql`, `mysql`, `h2`.

DATABASE_TYPE="mysql"

# Spring datasource url, following <HOST>:<PORT>/<database>?<parameter> format, If you using mysql, you could use jdbc

# string jdbc:mysql://127.0.0.1:3306/dolphinscheduler?useUnicode=true&characterEncoding=UTF-8 as example

SPRING_DATASOURCE_URL="jdbc:mysql://hadoop102:3306/dolphinscheduler?useUnicode=true&characterEncoding=UTF-8"

# Spring datasource username

SPRING_DATASOURCE_USERNAME="dolphinscheduler"

# Spring datasource password

SPRING_DATASOURCE_PASSWORD="dolphinscheduler"

# ---------------------------------------------------------

# Registry Server

# ---------------------------------------------------------

# Registry Server plugin name, should be a substring of `registryPluginDir`, DolphinScheduler use this for verifying configuration consistency

registryPluginName="zookeeper"

# Registry Server address.

registryServers="hadoop102:2181,hadoop103:2181,hadoop104:2181"

# Registry Namespace

registryNamespace="dolphinscheduler"

# ---------------------------------------------------------

# Worker Task Server

# ---------------------------------------------------------

# Worker Task Server plugin dir. DolphinScheduler will find and load the worker task plugin jar package from this dir.

taskPluginDir="lib/plugin/task"

# resource storage type: HDFS, S3, NONE

resourceStorageType="HDFS"

# resource store on HDFS/S3 path, resource file will store to this hdfs path, self configuration, please make sure the directory exists on hdfs and has read write permissions. "/dolphinscheduler" is recommended

resourceUploadPath="/dolphinscheduler"

# if resourceStorageType is HDFS,defaultFS write namenode address,HA, you need to put core-site.xml and hdfs-site.xml in the conf directory.

# if S3,write S3 address,HA,for example :s3a://dolphinscheduler,

# Note,S3 be sure to create the root directory /dolphinscheduler

defaultFS="hdfs://hadoop102:8020"

# if resourceStorageType is S3, the following three configuration is required, otherwise please ignore

s3Endpoint="http://192.168.xx.xx:9010"

s3AccessKey="xxxxxxxxxx"

s3SecretKey="xxxxxxxxxx"

# resourcemanager port, the default value is 8088 if not specified

resourceManagerHttpAddressPort="8088"

# if resourcemanager HA is enabled, please set the HA IPs; if resourcemanager is single node, keep this value empty

yarnHaIps=""

# if resourcemanager HA is enabled or not use resourcemanager, please keep the default value; If resourcemanager is single node, you only need to replace 'yarnIp1' to actual resourcemanager hostname

singleYarnIp="hadoop103"

# who has permission to create directory under HDFS/S3 root path

# Note: if kerberos is enabled, please config hdfsRootUser=

hdfsRootUser="root"

# kerberos config

# whether kerberos starts, if kerberos starts, following four items need to config, otherwise please ignore

kerberosStartUp="false"

# kdc krb5 config file path

krb5ConfPath="$installPath/conf/krb5.conf"

# keytab username, watch out: the @ sign should be followed by \\

keytabUserName="hdfs-mycluster\\@ESZ.COM"

# username keytab path

keytabPath="$installPath/conf/hdfs.headless.keytab"

# kerberos expire time, the unit is hour

kerberosExpireTime="2"

# use sudo or not

sudoEnable="true"

# worker tenant auto create

workerTenantAutoCreate="false"
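The comments in install_config.conf require the masters, workers, alertServer, apiServers and pythonGatewayServers values to be subsets of ips. A quick check along these lines could catch a stray host name before install.sh is run. `hosts_in_ips` is a hypothetical helper, not part of DolphinScheduler, and the values are copied inline here rather than read from the file.

```shell
# Hypothetical helper (not part of DolphinScheduler): succeeds only if every
# host in the comma-separated list $2 also appears in the list $1.
# Entries written as host:group (the `workers` format) are compared by host.
hosts_in_ips() {
  local all entry host
  local IFS=','
  all=",$1,"
  for entry in $2; do
    host="${entry%%:*}"
    case "$all" in
      *",$host,"*) ;;
      *) echo "not in ips: $host" >&2; return 1 ;;
    esac
  done
}

# Values as used in this article's install_config.conf.
ips="hadoop102,hadoop103,hadoop104"
masters="hadoop102"
workers="hadoop102:default,hadoop103:default,hadoop104:default"

hosts_in_ips "$ips" "$masters" && hosts_in_ips "$ips" "$workers" && echo "host lists are consistent"
```

Wrapping both lists in commas before matching means a partial name such as hadoop10 does not pass just because hadoop102 is listed.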

1. Initialize the database

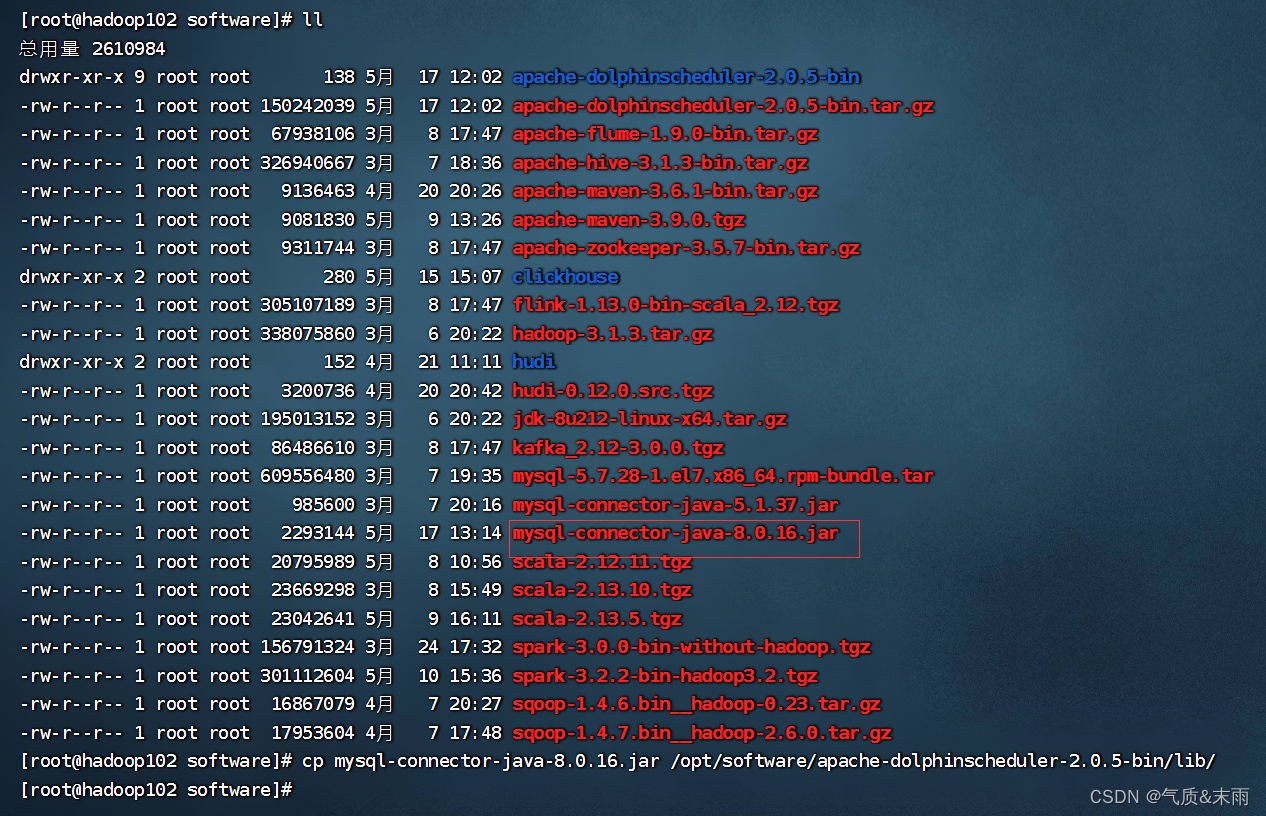

(1) Copy the MySQL 8.0 driver

Copy the MySQL driver into the lib directory under the DolphinScheduler extraction directory. MySQL JDBC Driver 8.0.16 is required, so make sure the version matches.

Run: cp mysql-connector-java-8.0.16.jar /opt/software/apache-dolphinscheduler-2.0.5-bin/lib/

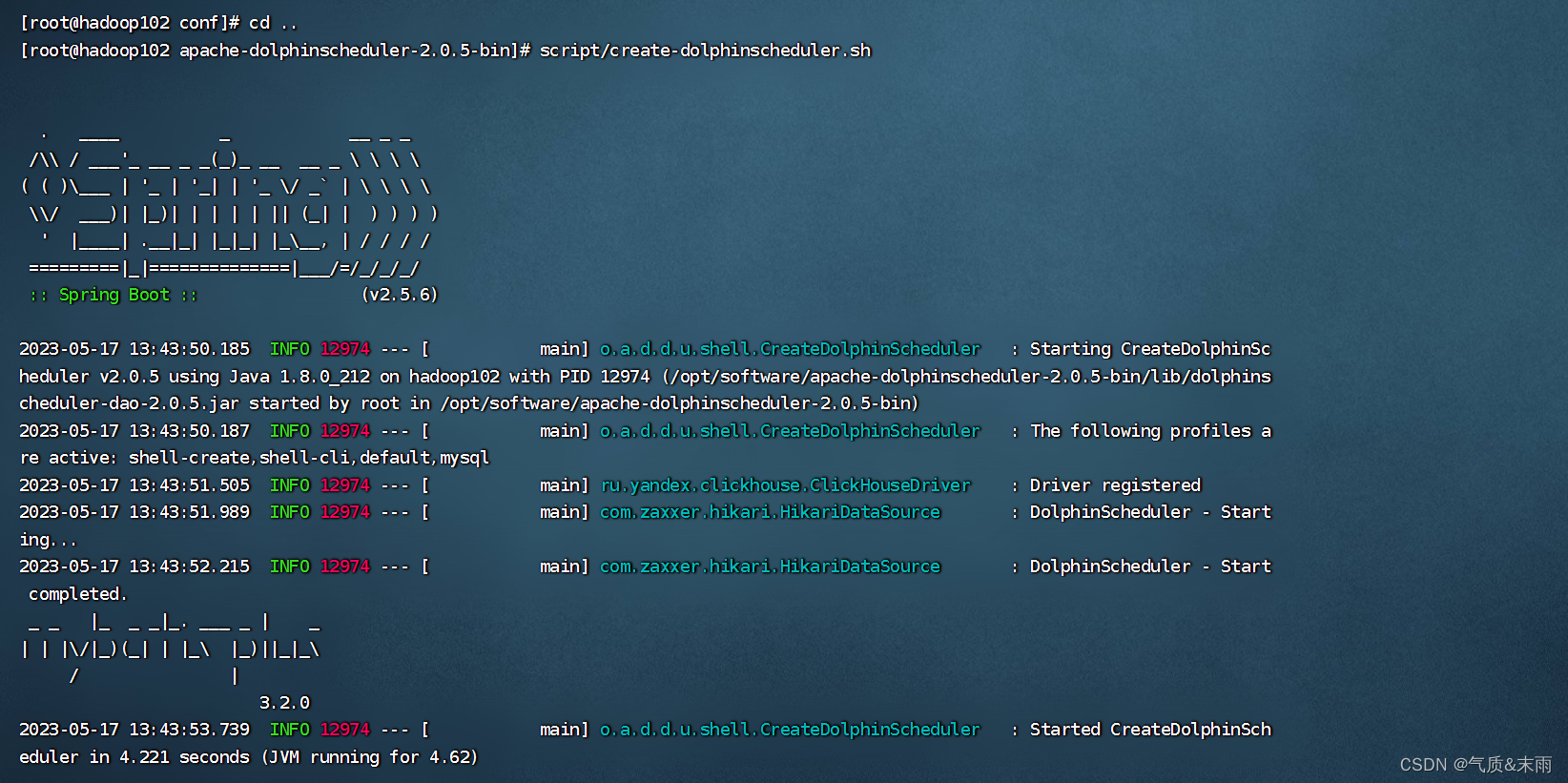

(2) Run the database initialization script

The database initialization script is in the script directory under the DolphinScheduler extraction directory, i.e. /opt/software/apache-dolphinscheduler-2.0.5-bin/script/.

Run: /opt/software/apache-dolphinscheduler-2.0.5-bin/script/

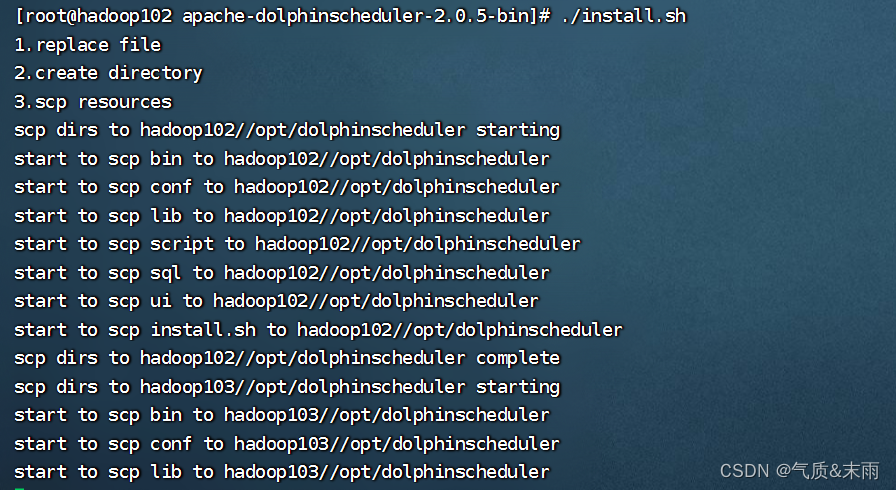

2. One-click deployment of DolphinScheduler

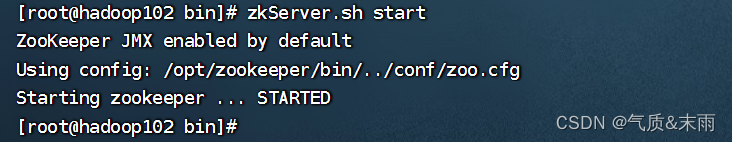

(1) Start the ZooKeeper cluster

Run on all three nodes: zkServer.sh start (the script is in ZooKeeper's bin directory)

(2) Deploy and start DolphinScheduler in one step

Run: ./install.sh

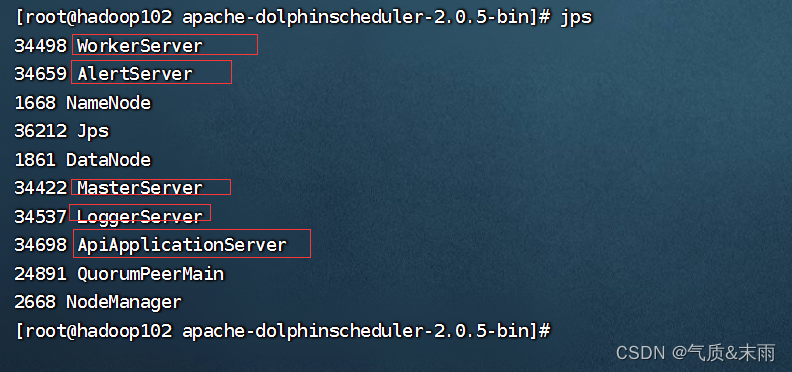

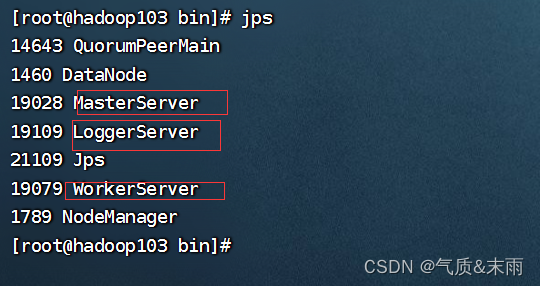

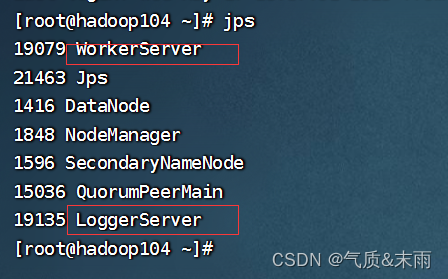

(3) Check the DolphinScheduler processes
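One way to check the processes is to run jps on each node and compare its output with the services that node is supposed to host. The sketch below assumes the Java process names that a 2.0.x deployment typically shows (MasterServer, WorkerServer, LoggerServer, ApiApplicationServer, AlertServer); treat those names, and the helper `missing_processes`, as assumptions rather than something this article confirms.

```shell
# Hypothetical helper: print every expected process name that is absent
# from jps-style input ("<pid> <name>" per line) read on stdin.
# Usage on a real node: jps | missing_processes MasterServer WorkerServer ...
missing_processes() {
  local listing name
  listing="$(cat)"
  for name in "$@"; do
    printf '%s\n' "$listing" | awk '{print $2}' | grep -qx "$name" || echo "$name"
  done
}

# Example with captured text instead of a live jps call (PIDs are made up).
sample="2101 MasterServer
2188 WorkerServer
2254 LoggerServer
2390 Jps"
printf '%s\n' "$sample" | missing_processes MasterServer WorkerServer LoggerServer ApiApplicationServer AlertServer
```

With the sample input the helper prints ApiApplicationServer and AlertServer, the two names that do not appear in the listing. Empty output means everything expected is running.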

(4) Access the DolphinScheduler UI

The DolphinScheduler UI address is: http://hadoop102:12345/dolphinscheduler

Initial user: admin. Initial password: dolphinscheduler123


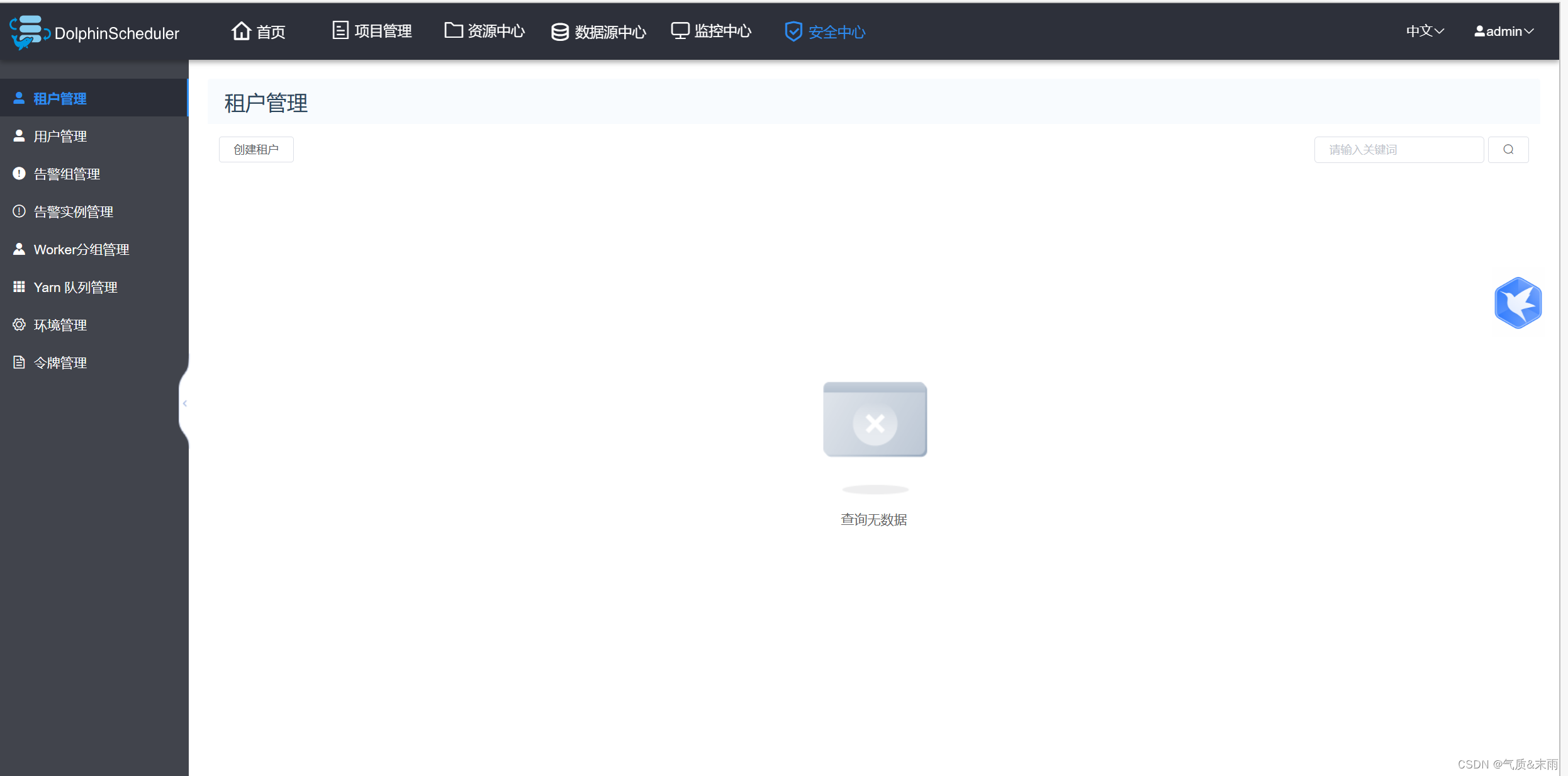

Reaching this page means the deployment succeeded.

3. DolphinScheduler start and stop commands

All DolphinScheduler start and stop scripts are in the bin directory under its installation directory.

1) Start or stop all services at once

./bin/start-all.sh

./bin/stop-all.sh

Do not confuse these with Hadoop's start and stop scripts of the same names.

2) Start or stop the Master

./bin/dolphinscheduler-daemon.sh start master-server

./bin/dolphinscheduler-daemon.sh stop master-server

3) Start or stop the Worker

./bin/dolphinscheduler-daemon.sh start worker-server

./bin/dolphinscheduler-daemon.sh stop worker-server

4) Start or stop the API server

./bin/dolphinscheduler-daemon.sh start api-server

./bin/dolphinscheduler-daemon.sh stop api-server

5) Start or stop the Logger

./bin/dolphinscheduler-daemon.sh start logger-server

./bin/dolphinscheduler-daemon.sh stop logger-server

6) Start or stop the Alert server

./bin/dolphinscheduler-daemon.sh start alert-server

./bin/dolphinscheduler-daemon.sh stop alert-server
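Since every per-service command has the same shape, a small wrapper can guard against a mistyped action or service name. `ds_daemon_cmd` below is a hypothetical helper that only accepts the actions and services listed in this section. It prints the command instead of running it.

```shell
# Hypothetical helper: build the dolphinscheduler-daemon.sh command line for
# an action ($1) and service ($2), rejecting anything not listed above.
ds_daemon_cmd() {
  case "$1" in
    start|stop) ;;
    *) echo "unknown action: $1" >&2; return 1 ;;
  esac
  case "$2" in
    master-server|worker-server|api-server|logger-server|alert-server) ;;
    *) echo "unknown service: $2" >&2; return 1 ;;
  esac
  echo "./bin/dolphinscheduler-daemon.sh $1 $2"
}

# Print a restart sequence for the Worker. The printed lines are meant to be
# run from the installation directory (/opt/dolphinscheduler in this config).
ds_daemon_cmd stop worker-server && ds_daemon_cmd start worker-server
```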

After all services have started, the cluster deployment is complete.
