[Note] Source: the Bilibili video "PyTorch深度学习快速入门教程(绝对通俗易懂!)" (PyTorch Deep Learning Quick-Start Tutorial) by 小土堆.

There are two ways to train on a GPU.

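Method 1

Call .cuda() on three objects: the model, the loss function, and each batch of data.

    # 1. The model
    mymodel = Model()
    mymodel = mymodel.cuda()

    # 2. The loss function
    loss_fun = nn.CrossEntropyLoss()
    loss_fun = loss_fun.cuda()

    # 3. The data (images and labels)
    imgs, targets = data
    imgs = imgs.cuda()
    targets = targets.cuda()

Method 2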

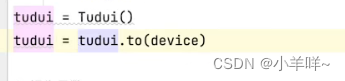

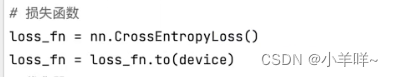

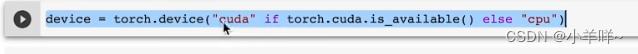

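Call .to(device) on the same three objects, where device is created with torch.device:

    device = torch.device("cpu")   # train on the CPU
    device = torch.device("cuda")  # train on the GPU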
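A common way to choose between the two devices is to check whether a GPU is available at runtime. The snippet below is a minimal sketch of that idea, not code from the video: `toy_model` is a hypothetical stand-in for the real network, and the random tensors stand in for a batch of data.

```python
import torch
import torch.nn as nn

# Use the GPU when one is available, otherwise fall back to the CPU.
device = torch.device("cuda" if torch.cuda.is_available() else "cpu")

# Hypothetical stand-in model, only for illustration.
# nn.Module.to moves the parameters in place and returns the module itself.
toy_model = nn.Linear(4, 2).to(device)
loss_fun = nn.CrossEntropyLoss().to(device)

# Tensor.to is not in-place, so the result has to be assigned.
inputs = torch.randn(8, 4).to(device)
labels = torch.randint(0, 2, (8,)).to(device)

loss = loss_fun(toy_model(inputs), labels)
print(device, loss.item())
```

With this pattern the same script runs unchanged on machines with and without a GPU.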

If there are multiple GPUs, select one by index:

    device = torch.device("cuda:0")  # first GPU
    device = torch.device("cuda:1")  # second GPU

Code for method 1

    import torch
    import torch.nn as nn
    import torchvision
    from torch.utils.data import DataLoader
    # from model import *
    import time

    # Prepare the datasets (download=False assumes CIFAR-10 is already in ./data)
    train_data = torchvision.datasets.CIFAR10(root="./data", train=True,
                                              transform=torchvision.transforms.ToTensor(),
                                              download=False)
    test_data = torchvision.datasets.CIFAR10(root="./data", train=False,
                                             transform=torchvision.transforms.ToTensor(),
                                             download=False)

    # Dataset lengths

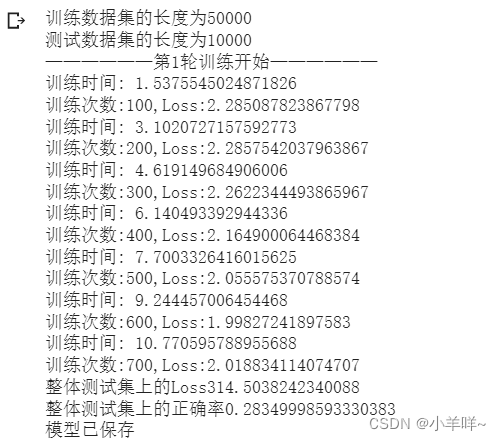

    train_data_size = len(train_data)
    test_data_size = len(test_data)
    print("Length of the training dataset: {}".format(train_data_size))
    print("Length of the test dataset: {}".format(test_data_size))

    # Load the datasets with DataLoader
    train_dataloder = DataLoader(dataset=train_data, batch_size=64)
    test_dataloader = DataLoader(dataset=test_data, batch_size=64)

    # Build the neural network
    class Model(nn.Module):
        def __init__(self) -> None:
            super().__init__()
            self.model = nn.Sequential(
                nn.Conv2d(3, 32, 5, 1, 2),
                nn.MaxPool2d(2),
                nn.Conv2d(32, 32, 5, 1, 2),
                nn.MaxPool2d(2),
                nn.Conv2d(32, 64, 5, 1, 2),
                nn.MaxPool2d(2),
                nn.Flatten(),
                nn.Linear(64*4*4, 64),
                nn.Linear(64, 10)
            )

        def forward(self, input):
            input = self.model(input)
            return input

    mymodel = Model()
    mymodel = mymodel.cuda()  # move the model to the GPU

    # Loss function
    loss_fun = nn.CrossEntropyLoss()
    loss_fun = loss_fun.cuda()  # move the loss function to the GPU

    # Optimizer
    optim = torch.optim.SGD(mymodel.parameters(), lr=0.01)

    # Training settings
    total_strain_step = 0  # number of training steps so far
    total_test_step = 0  # number of test rounds so far
    epoch = 10  # number of epochs

    start_time = time.time()  # time at which training starts
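The 64*4*4 passed to the first nn.Linear in the Model class above follows from the layer sizes: each Conv2d with kernel 5, stride 1, and padding 2 keeps the spatial size, and each MaxPool2d(2) halves it. The short check below uses the standard output-size formula with dilation ignored; `conv2d_out` is a hypothetical helper name, not a PyTorch function.

```python
def conv2d_out(size, kernel, stride=1, padding=0):
    """Output height/width of a convolution or pooling window (no dilation)."""
    return (size + 2 * padding - kernel) // stride + 1


size = 32  # CIFAR-10 images are 32x32
for _ in range(3):
    size = conv2d_out(size, kernel=5, stride=1, padding=2)  # Conv2d(..., 5, 1, 2)
    size = conv2d_out(size, kernel=2, stride=2)             # MaxPool2d(2)

flat_features = 64 * size * size  # 64 channels after the last convolution
print(size, flat_features)  # 4 1024
```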

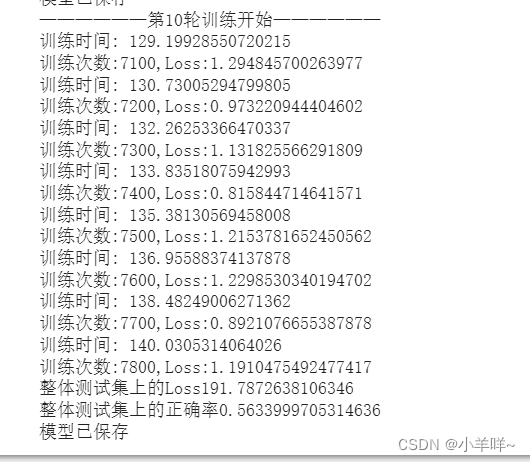

    for i in range(epoch):
        print("------ Epoch {} training starts ------".format(i + 1))

        # Training

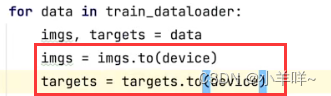

        for data in train_dataloder:
            imgs, targets = data
            imgs = imgs.cuda()  # move the images to the GPU
            targets = targets.cuda()  # move the labels to the GPU
            outputs = mymodel(imgs)
            loss = loss_fun(outputs, targets)  # compute the loss

            # Optimize the model
            optim.zero_grad()  # reset the gradients
            loss.backward()  # backpropagate the loss
            optim.step()  # update the network parameters

            total_strain_step = total_strain_step + 1
            if total_strain_step % 100 == 0:
                end_time = time.time()  # current time, used to report elapsed time
                print("Elapsed training time: {}".format(end_time - start_time))
                print("Training step: {}, Loss: {}".format(total_strain_step, loss.item()))

        # Testing
        total_test_loss = 0
        total_accuracy = 0  # number of correct predictions over the test set
        with torch.no_grad():

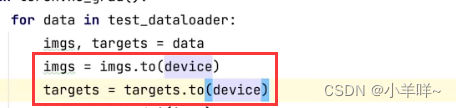

            for data in test_dataloader:
                imgs, targets = data
                targets = targets.cuda()
                imgs = imgs.cuda()
                outputs = mymodel(imgs)
                loss = loss_fun(outputs, targets)
                total_test_loss = total_test_loss + loss.item()
                # Count the correct predictions in this batch
                accuracy = (outputs.argmax(1) == targets).sum()
                total_accuracy = total_accuracy + accuracy.item()

        print("Loss on the whole test set: {}".format(total_test_loss))
        print("Accuracy on the whole test set: {}".format(total_accuracy / test_data_size))
        torch.save(mymodel, "./data/mymodel_train{}.pth".format(i))
        print("Model saved")
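The listing saves the model after it has been moved to the GPU, so the saved file contains CUDA tensors. To load such a file on a machine without a GPU, torch.load accepts a map_location argument. The sketch below is one possible approach, not the method from the video: it saves only the state_dict instead of the whole model object, and `toy_model` and the temporary file name are hypothetical.

```python
import os
import tempfile

import torch
import torch.nn as nn

device = torch.device("cuda" if torch.cuda.is_available() else "cpu")
toy_model = nn.Linear(4, 2).to(device)  # hypothetical stand-in for mymodel

with tempfile.TemporaryDirectory() as tmp_dir:
    path = os.path.join(tmp_dir, "toy_model.pth")
    # Save only the parameters rather than the whole pickled model object.
    torch.save(toy_model.state_dict(), path)
    # map_location remaps any stored CUDA tensors onto the CPU while loading.
    state_dict = torch.load(path, map_location=torch.device("cpu"))

# Rebuild the architecture and copy the loaded parameters into it.
restored = nn.Linear(4, 2)
restored.load_state_dict(state_dict)
print(next(restored.parameters()).device)  # cpu
```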

Code for method 2
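A minimal sketch of how the Method 1 listing might look with .to(device) in place of .cuda(). The assumptions here are not from the video: random tensors (`fake_data`) stand in for CIFAR-10 so the script runs without the dataset, the device falls back to the CPU when no GPU is present, and only two short epochs are run.

```python
import torch
import torch.nn as nn
from torch.utils.data import DataLoader, TensorDataset

# Assumption: fall back to the CPU when no GPU is present.
device = torch.device("cuda" if torch.cuda.is_available() else "cpu")

# Random stand-in for CIFAR-10: 256 images of shape 3x32x32, labels in 0..9.
fake_data = TensorDataset(torch.randn(256, 3, 32, 32), torch.randint(0, 10, (256,)))
fake_dataloader = DataLoader(fake_data, batch_size=64)


class Model(nn.Module):
    def __init__(self) -> None:
        super().__init__()
        self.model = nn.Sequential(
            nn.Conv2d(3, 32, 5, 1, 2),
            nn.MaxPool2d(2),
            nn.Conv2d(32, 32, 5, 1, 2),
            nn.MaxPool2d(2),
            nn.Conv2d(32, 64, 5, 1, 2),
            nn.MaxPool2d(2),
            nn.Flatten(),
            nn.Linear(64 * 4 * 4, 64),
            nn.Linear(64, 10),
        )

    def forward(self, input):
        return self.model(input)


mymodel = Model().to(device)                 # 1. the model
loss_fun = nn.CrossEntropyLoss().to(device)  # 2. the loss function
optim = torch.optim.SGD(mymodel.parameters(), lr=0.01)

for epoch_index in range(2):
    total_loss = 0.0
    total_correct = 0
    for imgs, targets in fake_dataloader:
        imgs = imgs.to(device)               # 3. the data
        targets = targets.to(device)
        outputs = mymodel(imgs)
        loss = loss_fun(outputs, targets)
        optim.zero_grad()
        loss.backward()
        optim.step()
        total_loss += loss.item()
        total_correct += (outputs.argmax(1) == targets).sum().item()
    print("epoch {}: loss {:.4f}, accuracy {:.4f}".format(
        epoch_index + 1, total_loss, total_correct / len(fake_data)))
```

Because only the device line decides where everything runs, switching between CPU, a single GPU, or a specific GPU such as "cuda:1" takes a one-line change.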
