Kalman Filter (KF)

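References:

Kalman Filter: From Derivation to Application (Part 1), 白巧克力亦唯心's blog, CSDN

A Brief Introduction to the Principle of the Kalman Filter, Aromash's blog, CSDN

From Giving Up to Mastery! Kalman Filter from Theory to Practice, bilibili

When I first came across the Kalman filter, the complicated derivation left me dizzy, so here I go back over its basic principle and how it is implemented.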

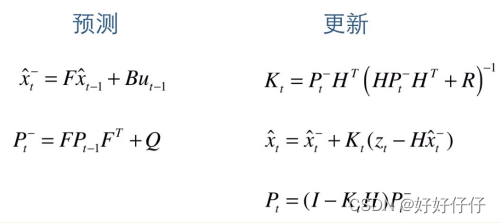

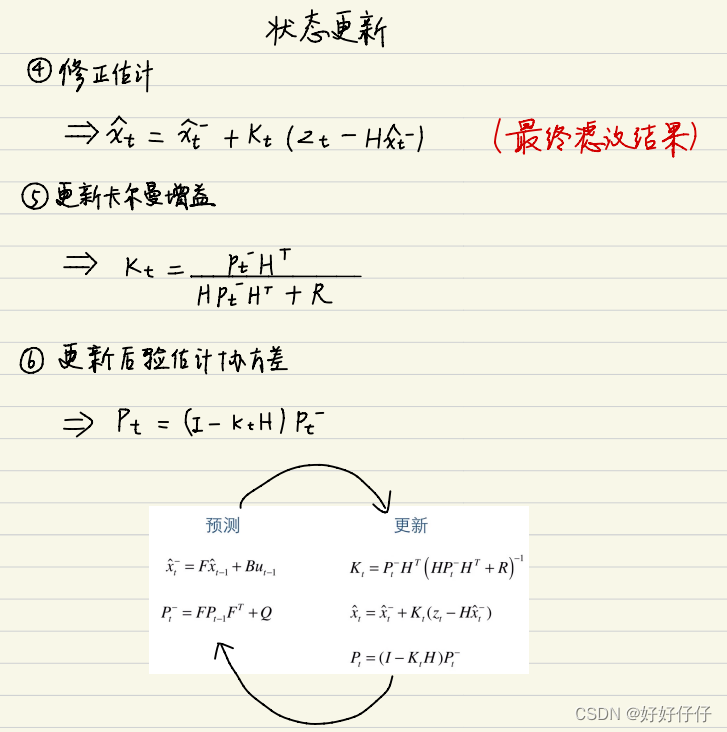

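The Kalman filter works in two steps:

1. Predict the current value from the previous optimal estimate.

2. Correct that prediction with the current observation to obtain the optimal estimate.

Viewed as a whole, the Kalman filter behaves like a closed-loop control system with feedback: it fuses an established mathematical model with actual measurements to infer the optimal solution.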
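For reference, the two steps are commonly written as the equations below. The notation is an assumption on my part (the figures in this post may use different symbols): A is the state transition matrix, B the control matrix, H the observation matrix, and Q and R the process and measurement noise covariances.

```latex
% Prediction
\hat{x}_k^- = A\,\hat{x}_{k-1} + B\,u_k, \qquad
P_k^- = A\,P_{k-1}\,A^T + Q

% Correction
K_k = P_k^- H^T \left( H P_k^- H^T + R \right)^{-1}, \qquad
\hat{x}_k = \hat{x}_k^- + K_k \left( z_k - H\,\hat{x}_k^- \right), \qquad
P_k = (I - K_k H)\,P_k^-
```

The Kalman gain K_k is the feedback term: a large R (noisy sensor) shrinks it so the model dominates, while a large predicted covariance pushes the estimate toward the measurement.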

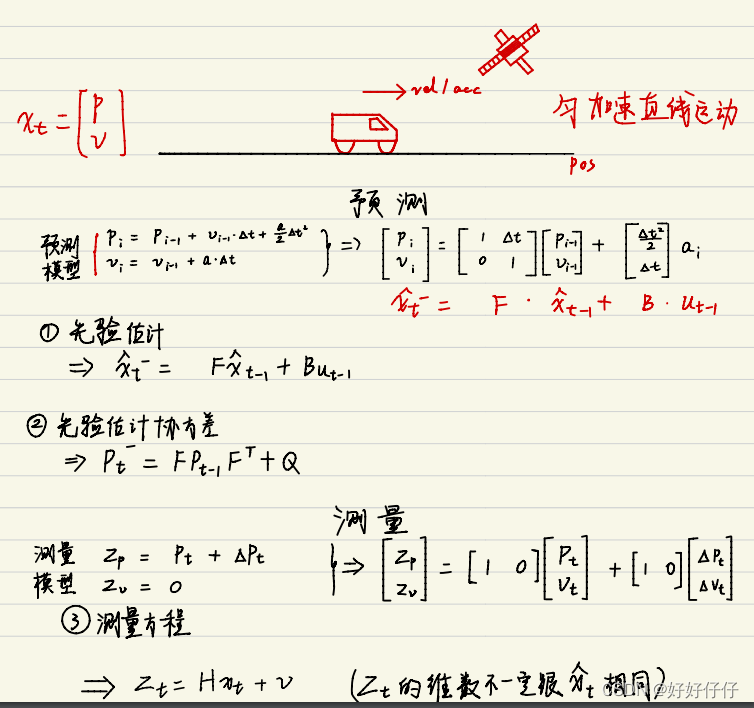

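Take a car that drives forward and carries a GPS receiver as an example. The following state equations can be constructed:

Source: From Giving Up to Mastery! Kalman Filter from Theory to Practice, bilibili

As the example shows, using a Kalman filter comes down to the following steps:

1. Choose the state variables and the observed variables.

2. Build the state and observation equations.

3. Initialize the parameters.

4. Plug everything into the Kalman filter equations and iterate.

5. Tune the hyperparameters, such as the noise covariances.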
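The steps above can be sketched for the car example as a small C++ filter. This is only a minimal sketch under my own assumptions: a constant-velocity model, a state of position and velocity, and a GPS that observes position only. The struct name `ConstantVelocityKf` and all parameter values are hypothetical and do not come from the referenced video.

```cpp
#include <cassert>
#include <cmath>

// Hypothetical 1D constant-velocity Kalman filter (illustrative names only).
// Step 1: state = [position, velocity], observation = position (e.g. GPS).
struct ConstantVelocityKf {
    // Step 3: initial state and a large initial uncertainty.
    double pos = 0.0, vel = 0.0;
    double P[2][2] = {{1e3, 0.0}, {0.0, 1e3}};
    // Step 5: hyperparameters to tune (diagonal Q and scalar R).
    double q_pos = 1e-4, q_vel = 1e-4;
    double r = 1.0;

    // Step 2 and 4, prediction with F = [[1, dt], [0, 1]]:
    // x = F x,  P = F P F^T + Q.
    void Predict(double dt) {
        assert(dt >= 0.0);
        pos += dt * vel;
        const double p00 = P[0][0] + dt * (P[0][1] + P[1][0]) + dt * dt * P[1][1] + q_pos;
        const double p01 = P[0][1] + dt * P[1][1];
        const double p10 = P[1][0] + dt * P[1][1];
        const double p11 = P[1][1] + q_vel;
        P[0][0] = p00; P[0][1] = p01; P[1][0] = p10; P[1][1] = p11;
    }

    // Step 2 and 4, correction with H = [1, 0]:
    // K = P H^T / (H P H^T + R),  x += K (z - H x),  P = (I - K H) P.
    void Update(double z) {
        const double s = P[0][0] + r;   // innovation variance
        const double k0 = P[0][0] / s;  // gain on position
        const double k1 = P[1][0] / s;  // gain on velocity
        const double innovation = z - pos;
        pos += k0 * innovation;
        vel += k1 * innovation;
        const double p00 = (1.0 - k0) * P[0][0];
        const double p01 = (1.0 - k0) * P[0][1];
        const double p10 = P[1][0] - k1 * P[0][0];
        const double p11 = P[1][1] - k1 * P[0][1];
        P[0][0] = p00; P[0][1] = p01; P[1][0] = p10; P[1][1] = p11;
    }
};
```

A typical loop calls `Predict(dt)` once per time step and `Update(z)` whenever a GPS reading arrives. Although only position is measured, the velocity estimate is corrected as well, through the off-diagonal terms of P.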

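Extended Kalman Filter (EKF)

Because the motion and observation equations in SLAM are usually nonlinear, extending the Kalman filter to nonlinear systems requires a first-order Taylor expansion of both equations, which approximates them as a linear system. In other words, for a linear Gaussian system the Kalman filter gives the optimal unbiased estimate, while for a nonlinear system the EKF gives the optimal unbiased estimate under a single linearization.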
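The linearization can be written out as follows. The symbols are my own assumptions, since the post does not define them: f is the motion model, h the observation model, and F and H are their Jacobians at the current estimate.

```latex
x_k = f(x_{k-1}, u_k) + w_k, \qquad z_k = h(x_k) + v_k

f(x_{k-1}, u_k) \approx f(\hat{x}_{k-1}, u_k) + F\,(x_{k-1} - \hat{x}_{k-1}),
\qquad F = \left.\frac{\partial f}{\partial x}\right|_{\hat{x}_{k-1}}

h(x_k) \approx h(\hat{x}_k^-) + H\,(x_k - \hat{x}_k^-),
\qquad H = \left.\frac{\partial h}{\partial x}\right|_{\hat{x}_k^-}
```

With F and H in place of the constant system matrices, the prediction and correction take the same form as in the linear filter, except that the state is propagated through f and the innovation is computed as z_k - h(\hat{x}_k^-).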

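Limitations of the EKF:

1. It assumes the Markov property, i.e. the state at time k depends only on the state at time k-1.

2. The EKF expands the motion and observation equations to first order, approximates them as a linear system, and computes the posterior directly from that approximation, so the result carries a linearization error.

3. The EKF has to store the mean and covariance of the state. The covariance matrix grows quadratically with the state dimension, so the EKF is not suited to large-scale scenes.

4. It has no outlier detection mechanism, so it diverges easily.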
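To put a number on the third limitation, here is a small sketch that counts the covariance entries for a state made of one pose plus a set of landmarks. The function name `CovarianceEntries` and the default dimensions (6-DoF pose, 3D landmarks) are assumptions for illustration.

```cpp
#include <cassert>
#include <cstddef>

// Number of entries in the covariance matrix of a state that holds one pose
// and num_landmarks landmarks. The default dimensions are assumptions.
std::size_t CovarianceEntries(std::size_t num_landmarks,
                              std::size_t pose_dim = 6,
                              std::size_t landmark_dim = 3) {
    assert(pose_dim > 0 && landmark_dim > 0);
    const std::size_t n = pose_dim + landmark_dim * num_landmarks;
    return n * n;  // dense n x n matrix
}
```

Under these assumptions, 1000 landmarks already give a 3006 x 3006 matrix with 9,036,036 entries, roughly 72 MB in double precision, and every update has to touch that matrix.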

BA and Graph Optimization

Marginalization effectively reduces the dimension of the H matrix that has to be solved.
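In BA this marginalization is usually carried out with the Schur complement. The block names below are an assumed notation: B is the camera block, C the landmark block, and E the coupling block.

```latex
\begin{bmatrix} B & E \\ E^T & C \end{bmatrix}
\begin{bmatrix} \Delta x_c \\ \Delta x_p \end{bmatrix}
=
\begin{bmatrix} v \\ w \end{bmatrix}

\left( B - E\,C^{-1} E^T \right) \Delta x_c = v - E\,C^{-1} w,
\qquad
\Delta x_p = C^{-1} \left( w - E^T \Delta x_c \right)
```

Because each landmark only interacts with the cameras that observe it, C is block diagonal with 3 x 3 blocks and is cheap to invert. The reduced system has only the dimension of the camera parameters, which is typically far smaller than the full system.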

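The purpose of a robust kernel is to keep the error of any single edge from growing so large that it drowns out the other edges.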
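As a sketch of the idea, the textbook Huber kernel is quadratic for small errors and linear for large ones. The function name `HuberCost` is hypothetical, and libraries such as Ceres and g2o apply their own scaling conventions to the squared error, so this is not their exact implementation.

```cpp
#include <cassert>
#include <cmath>

// Textbook Huber cost for a scalar error e with threshold delta.
// Quadratic inside [-delta, delta], linear outside.
double HuberCost(double e, double delta) {
    assert(delta > 0.0);
    const double a = std::fabs(e);
    if (a <= delta) {
        return 0.5 * e * e;            // ordinary least-squares cost
    }
    return delta * (a - 0.5 * delta);  // grows only linearly for outliers
}
```

With delta = 1, an error of 3 costs 2.5 instead of the 4.5 it would cost under a plain quadratic, so a wrong match pulls on the solution much less.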

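Solving BA with Ceres

Note that Sophus cannot be installed against the Eigen 3.2 series, so that version has to be uninstalled first.

Uninstalling and installing Eigen on Ubuntu 16.04, TB81266's blog, CSDN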

```cpp
#include <iostream>
#include <ceres/ceres.h>
#include "common.h"
#include "SnavelyReprojectionError.h"

using namespace std;

void SolveBA(BALProblem &bal_problem);

int main(int argc, char **argv) {
    if (argc != 2) {
        cout << "usage: bundle_adjustment_ceres bal_data.txt" << endl;
        return 1;
    }

    BALProblem bal_problem(argv[1]);
    bal_problem.Normalize();
    bal_problem.Perturb(0.1, 0.5, 0.5);
    bal_problem.WriteToPLYFile("initial.ply");
    SolveBA(bal_problem);
    bal_problem.WriteToPLYFile("final.ply");

    return 0;
}

void SolveBA(BALProblem &bal_problem) {
    const int point_block_size = bal_problem.point_block_size();
    const int camera_block_size = bal_problem.camera_block_size();
    double *points = bal_problem.mutable_points();
    double *cameras = bal_problem.mutable_cameras();

    // observations is an array of length 2 * num_observations:
    // [u_1, u_2, ... u_n], where each u_i is two dimensional, the x
    // and y position of the observation.
    const double *observations = bal_problem.observations();
    ceres::Problem problem;

    for (int i = 0; i < bal_problem.num_observations(); ++i) {
        ceres::CostFunction *cost_function;

        // Each residual block takes a point and a camera as input
        // and outputs a 2 dimensional residual.
        cost_function = SnavelyReprojectionError::Create(observations[2 * i + 0], observations[2 * i + 1]);

        // Use Huber's loss function as the robust kernel.
        ceres::LossFunction *loss_function = new ceres::HuberLoss(1.0);

        // Each observation corresponds to a pair of a camera and a point
        // which are identified by camera_index()[i] and point_index()[i]
        // respectively.
        double *camera = cameras + camera_block_size * bal_problem.camera_index()[i];
        double *point = points + point_block_size * bal_problem.point_index()[i];

        problem.AddResidualBlock(cost_function, loss_function, camera, point);
    }

    // show some information here ...
    std::cout << "bal problem file loaded..." << std::endl;
    std::cout << "bal problem have " << bal_problem.num_cameras() << " cameras and "
              << bal_problem.num_points() << " points. " << std::endl;
    std::cout << "Forming " << bal_problem.num_observations() << " observations. " << std::endl;

    std::cout << "Solving ceres BA ... " << endl;
    ceres::Solver::Options options;
    options.linear_solver_type = ceres::LinearSolverType::SPARSE_SCHUR;
    options.minimizer_progress_to_stdout = true;
    ceres::Solver::Summary summary;
    ceres::Solve(options, &problem, &summary);
    std::cout << summary.FullReport() << "\n";
}
```

The reprojection error is defined in SnavelyReprojectionError.h:

```cpp
#ifndef SnavelyReprojection_H
#define SnavelyReprojection_H

#include <iostream>
#include "ceres/ceres.h"
#include "rotation.h"

class SnavelyReprojectionError {
public:
    SnavelyReprojectionError(double observation_x, double observation_y) : observed_x(observation_x),
                                                                           observed_y(observation_y) {}

    // operator() is the interface Ceres calls to evaluate the residual.
    // It takes three arguments: the camera parameters, the point
    // coordinates, and the output residual.
    template<typename T>
    bool operator()(const T *const camera,
                    const T *const point,
                    T *residuals) const {
        // camera[0,1,2] are the angle-axis rotation
        T predictions[2];  // 2D buffer that receives the projected point
        CamProjectionWithDistortion(camera, point, predictions);
        residuals[0] = predictions[0] - T(observed_x);
        residuals[1] = predictions[1] - T(observed_y);

        return true;
    }

    // camera : 9 dims array
    // [0-2] : angle-axis rotation
    // [3-5] : translation
    // [6-8] : camera parameter, [6] focal length, [7-8] second and fourth order radial distortion
    // point : 3D location.
    // predictions : 2D predictions with center of the image plane.
    template<typename T>
    static inline bool CamProjectionWithDistortion(const T *camera, const T *point, T *predictions) {
        // Rodrigues' formula
        T p[3];
        AngleAxisRotatePoint(camera, point, p);
        // camera[3,4,5] are the translation
        p[0] += camera[3];
        p[1] += camera[4];
        p[2] += camera[5];

        // Compute the center of distortion
        T xp = -p[0] / p[2];
        T yp = -p[1] / p[2];

        // Apply second and fourth order radial distortion
        const T &l1 = camera[7];
        const T &l2 = camera[8];

        T r2 = xp * xp + yp * yp;
        T distortion = T(1.0) + r2 * (l1 + l2 * r2);

        const T &focal = camera[6];
        predictions[0] = focal * distortion * xp;
        predictions[1] = focal * distortion * yp;

        return true;
    }

    static ceres::CostFunction *Create(const double observed_x, const double observed_y) {
        // 2D residual, 9D camera block (6D pose, 1D focal length,
        // 2D distortion parameters), 3D point block.
        return (new ceres::AutoDiffCostFunction<SnavelyReprojectionError, 2, 9, 3>(
            new SnavelyReprojectionError(observed_x, observed_y)));
    }

private:
    double observed_x;
    double observed_y;
};

#endif // SnavelyReprojection_H
```

Solving BA with g2o

```cpp
#include <g2o/core/base_vertex.h>
#include <g2o/core/base_binary_edge.h>
#include <g2o/core/block_solver.h>
#include <g2o/core/optimization_algorithm_levenberg.h>
#include <g2o/solvers/csparse/linear_solver_csparse.h>
#include <g2o/core/robust_kernel_impl.h>
#include <iostream>

#include "common.h"
#include "sophus/se3.hpp"

using namespace Sophus;
using namespace Eigen;
using namespace std;

/// Pose and intrinsics of one camera
struct PoseAndIntrinsics {
    PoseAndIntrinsics() {}

    /// set from given data address
    explicit PoseAndIntrinsics(double *data_addr) {
        rotation = SO3d::exp(Vector3d(data_addr[0], data_addr[1], data_addr[2]));
        translation = Vector3d(data_addr[3], data_addr[4], data_addr[5]);
        focal = data_addr[6];
        k1 = data_addr[7];
        k2 = data_addr[8];
    }

    /// write the estimate back to memory
    void set_to(double *data_addr) {
        auto r = rotation.log();
        for (int i = 0; i < 3; ++i) data_addr[i] = r[i];
        for (int i = 0; i < 3; ++i) data_addr[i + 3] = translation[i];
        data_addr[6] = focal;
        data_addr[7] = k1;
        data_addr[8] = k2;
    }

    SO3d rotation;
    Vector3d translation = Vector3d::Zero();
    double focal = 0;
    double k1 = 0, k2 = 0;
};

/// Vertex for pose plus intrinsics, 9 dims: the first three are so3, followed by t, f, k1, k2
class VertexPoseAndIntrinsics : public g2o::BaseVertex<9, PoseAndIntrinsics> {
public:
    EIGEN_MAKE_ALIGNED_OPERATOR_NEW;

    VertexPoseAndIntrinsics() {}

    virtual void setToOriginImpl() override {
        _estimate = PoseAndIntrinsics();
    }

    virtual void oplusImpl(const double *update) override {
        _estimate.rotation = SO3d::exp(Vector3d(update[0], update[1], update[2])) * _estimate.rotation;
        _estimate.translation += Vector3d(update[3], update[4], update[5]);
        _estimate.focal += update[6];
        _estimate.k1 += update[7];
        _estimate.k2 += update[8];
    }

    /// project a point using the current estimate
    Vector2d project(const Vector3d &point) {
        Vector3d pc = _estimate.rotation * point + _estimate.translation;
        pc = -pc / pc[2];
        // Use only x and y for the radial distance, as in the Ceres version;
        // pc.squaredNorm() would also add the normalized z component.
        double r2 = pc.head<2>().squaredNorm();
        double distortion = 1.0 + r2 * (_estimate.k1 + _estimate.k2 * r2);
        return Vector2d(_estimate.focal * distortion * pc[0],
                        _estimate.focal * distortion * pc[1]);
    }

    // I/O is not implemented; return a value so the functions are well-formed.
    virtual bool read(istream &) { return false; }

    virtual bool write(ostream &) const { return false; }
};

class VertexPoint : public g2o::BaseVertex<3, Vector3d> {
public:
    EIGEN_MAKE_ALIGNED_OPERATOR_NEW;

    VertexPoint() {}

    virtual void setToOriginImpl() override {
        _estimate = Vector3d(0, 0, 0);
    }

    virtual void oplusImpl(const double *update) override {
        _estimate += Vector3d(update[0], update[1], update[2]);
    }

    virtual bool read(istream &) { return false; }

    virtual bool write(ostream &) const { return false; }
};

class EdgeProjection :
    public g2o::BaseBinaryEdge<2, Vector2d, VertexPoseAndIntrinsics, VertexPoint> {
public:
    EIGEN_MAKE_ALIGNED_OPERATOR_NEW;

    virtual void computeError() override {
        auto v0 = (VertexPoseAndIntrinsics *) _vertices[0];
        auto v1 = (VertexPoint *) _vertices[1];
        auto proj = v0->project(v1->estimate());
        _error = proj - _measurement;
    }

    // use numeric derivatives
    virtual bool read(istream &) { return false; }

    virtual bool write(ostream &) const { return false; }
};

void SolveBA(BALProblem &bal_problem);

int main(int argc, char **argv) {
    if (argc != 2) {
        cout << "usage: bundle_adjustment_g2o bal_data.txt" << endl;
        return 1;
    }

    BALProblem bal_problem(argv[1]);
    bal_problem.Normalize();
    bal_problem.Perturb(0.1, 0.5, 0.5);
    bal_problem.WriteToPLYFile("initial.ply");
    SolveBA(bal_problem);
    bal_problem.WriteToPLYFile("final.ply");

    return 0;
}

void SolveBA(BALProblem &bal_problem) {
    const int point_block_size = bal_problem.point_block_size();
    const int camera_block_size = bal_problem.camera_block_size();
    double *points = bal_problem.mutable_points();
    double *cameras = bal_problem.mutable_cameras();

    // pose dimension 9, landmark is 3
    typedef g2o::BlockSolver<g2o::BlockSolverTraits<9, 3>> BlockSolverType;
    typedef g2o::LinearSolverCSparse<BlockSolverType::PoseMatrixType> LinearSolverType;
    // use LM
    auto solver = new g2o::OptimizationAlgorithmLevenberg(
        g2o::make_unique<BlockSolverType>(g2o::make_unique<LinearSolverType>()));
    g2o::SparseOptimizer optimizer;
    optimizer.setAlgorithm(solver);
    optimizer.setVerbose(true);

    /// build g2o problem
    const double *observations = bal_problem.observations();
    // vertex
    vector<VertexPoseAndIntrinsics *> vertex_pose_intrinsics;
    vector<VertexPoint *> vertex_points;
    for (int i = 0; i < bal_problem.num_cameras(); ++i) {
        VertexPoseAndIntrinsics *v = new VertexPoseAndIntrinsics();
        double *camera = cameras + camera_block_size * i;
        v->setId(i);
        v->setEstimate(PoseAndIntrinsics(camera));
        optimizer.addVertex(v);
        vertex_pose_intrinsics.push_back(v);
    }
    for (int i = 0; i < bal_problem.num_points(); ++i) {
        VertexPoint *v = new VertexPoint();
        double *point = points + point_block_size * i;
        v->setId(i + bal_problem.num_cameras());
        v->setEstimate(Vector3d(point[0], point[1], point[2]));
        // In BA, g2o needs the vertices to be marginalized flagged by hand
        v->setMarginalized(true);
        optimizer.addVertex(v);
        vertex_points.push_back(v);
    }

    // edge
    for (int i = 0; i < bal_problem.num_observations(); ++i) {
        EdgeProjection *edge = new EdgeProjection;
        edge->setVertex(0, vertex_pose_intrinsics[bal_problem.camera_index()[i]]);
        edge->setVertex(1, vertex_points[bal_problem.point_index()[i]]);
        edge->setMeasurement(Vector2d(observations[2 * i + 0], observations[2 * i + 1]));
        edge->setInformation(Matrix2d::Identity());
        edge->setRobustKernel(new g2o::RobustKernelHuber());
        optimizer.addEdge(edge);
    }

    optimizer.initializeOptimization();
    optimizer.optimize(40);

    // set to bal problem
    for (int i = 0; i < bal_problem.num_cameras(); ++i) {
        double *camera = cameras + camera_block_size * i;
        auto vertex = vertex_pose_intrinsics[i];
        auto estimate = vertex->estimate();
        estimate.set_to(camera);
    }
    for (int i = 0; i < bal_problem.num_points(); ++i) {
        double *point = points + point_block_size * i;
        auto vertex = vertex_points[i];
        for (int k = 0; k < 3; ++k) point[k] = vertex->estimate()[k];
    }
}
```
