Advanced CNN

Video link: https://www.bilibili.com/video/BV1Y7411d7Ys?p=11

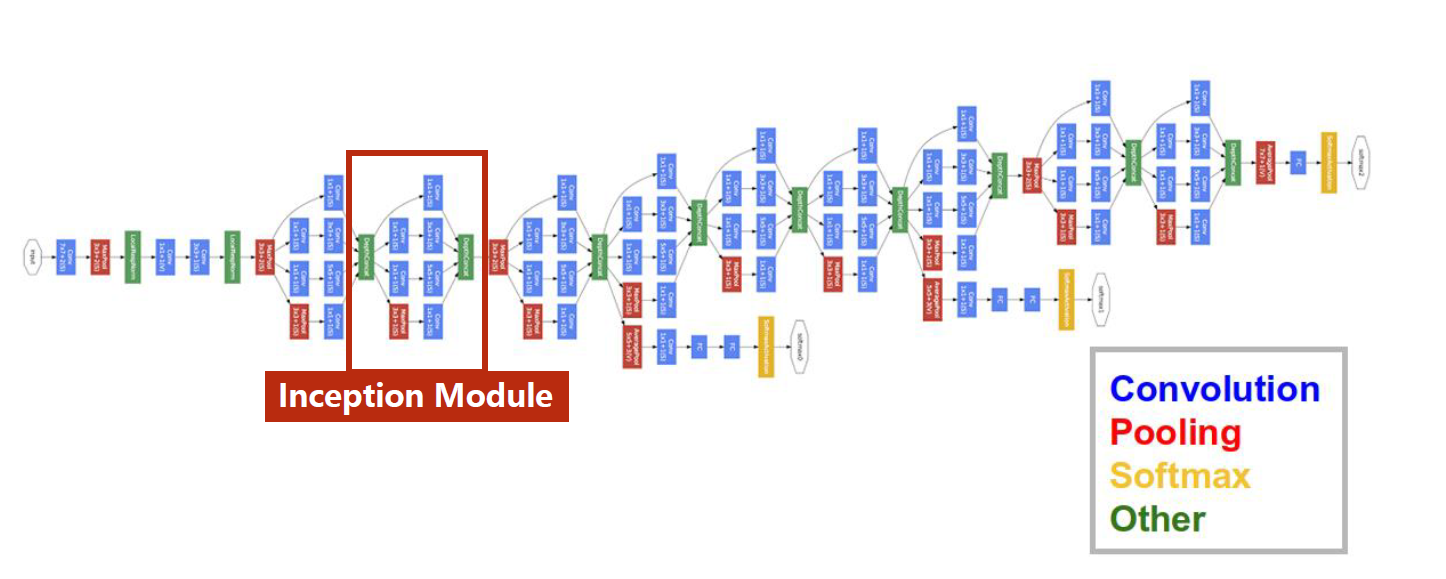

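Basic networks considerably limit what we can achieve, while more advanced architectures can effectively improve model accuracy. This section uses two backbones: the Inception module from GoogLeNet and the residual block from ResNet. After tuning, both reach an accuracy above 99%.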

GoogLeNet

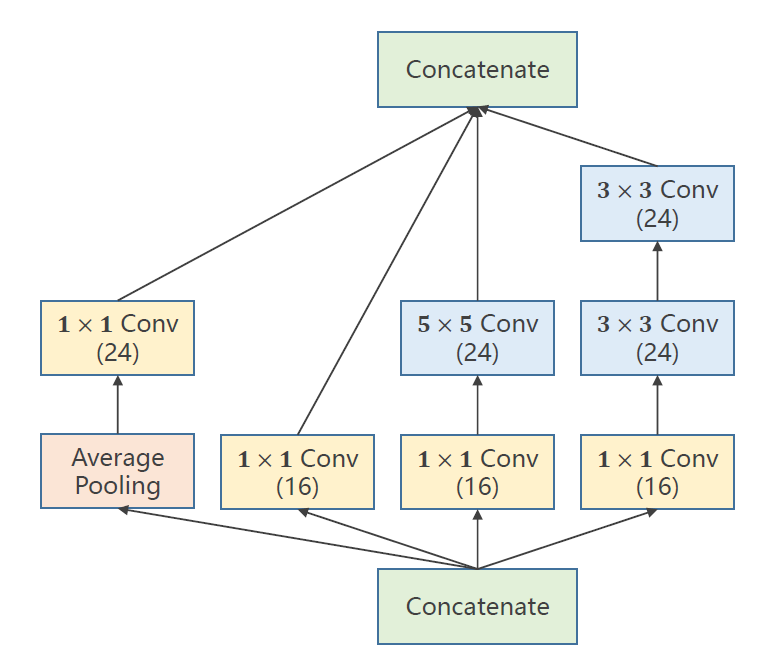

Inception Module

Code

import torch
from torch.utils.data import DataLoader
from torchvision import transforms
from torchvision import datasets
import torch.nn.functional as F
import torch.optim as optim
import matplotlib.pyplot as plt

# Outline:
# 1. prepare dataset
# 2. design model using class
# 3. construct loss and optimizer
# 4. training cycle + test

# 1. Prepare the dataset
batch_size = 32
transform = transforms.Compose([
    transforms.ToTensor(),
    transforms.Normalize((0.1307,), (0.3081,))  # MNIST mean and standard deviation
])
train_dataset = datasets.MNIST(root='./dataset/mnist',
                               train=True,
                               transform=transform,
                               download=True)
print(train_dataset[0])  # inspect the first (image tensor, label) pair
test_dataset = datasets.MNIST(root='./dataset/mnist',
                              train=False,
                              transform=transform,
                              download=True)
train_loader = DataLoader(dataset=train_dataset,
                          batch_size=batch_size,
                          shuffle=True)
test_loader = DataLoader(dataset=test_dataset,
                         batch_size=batch_size,
                         shuffle=False)
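The `Normalize((0.1307,), (0.3081,))` transform used above subtracts a mean and divides by a standard deviation for each channel. The sketch below reproduces that arithmetic in plain PyTorch on a random stand-in image; the helper name `normalize` and the fake image are assumptions made for illustration, not part of the original script.

```python
import torch

# The MNIST statistics passed to transforms.Normalize in the script.
MEAN, STD = 0.1307, 0.3081


def normalize(x: torch.Tensor, mean: float = MEAN, std: float = STD) -> torch.Tensor:
    """Apply (x - mean) / std, the formula Normalize uses per channel."""
    return (x - mean) / std


# A fake 1x28x28 image with values in [0, 1), standing in for ToTensor() output.
fake_image = torch.rand(1, 28, 28)
normalized = normalize(fake_image)

# A pixel equal to the mean maps to 0; a black pixel maps to -MEAN / STD.
at_mean = normalize(torch.tensor([MEAN]))
black = normalize(torch.zeros(1))
print(normalized.shape, at_mean.item(), black.item())
```

After this step the inputs are roughly zero-centered with unit scale, which generally makes optimization with SGD better behaved.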

# --------------------- Inception -----------------------
class InceptionA(torch.nn.Module):
    def __init__(self, in_channels):
        super(InceptionA, self).__init__()
        # Branch 1: 1x1 convolution -> 16 channels
        self.branch1x1 = torch.nn.Conv2d(in_channels, 16, kernel_size=1)
        # Branch 2: 1x1 then 5x5 convolution -> 24 channels
        self.branch5x5_1 = torch.nn.Conv2d(in_channels, 16, kernel_size=1)
        self.branch5x5_2 = torch.nn.Conv2d(16, 24, kernel_size=5, padding=2)
        # Branch 3: 1x1 then two 3x3 convolutions -> 24 channels
        self.branch3x3_1 = torch.nn.Conv2d(in_channels, 16, kernel_size=1)
        self.branch3x3_2 = torch.nn.Conv2d(16, 24, kernel_size=3, padding=1)
        self.branch3x3_3 = torch.nn.Conv2d(24, 24, kernel_size=3, padding=1)
        # Branch 4: average pooling then 1x1 convolution -> 24 channels
        self.branch_pool = torch.nn.Conv2d(in_channels, 24, kernel_size=1)

    def forward(self, x):
        branch1x1 = self.branch1x1(x)
        branch5x5 = self.branch5x5_1(x)
        branch5x5 = self.branch5x5_2(branch5x5)
        branch3x3 = self.branch3x3_1(x)
        branch3x3 = self.branch3x3_2(branch3x3)
        branch3x3 = self.branch3x3_3(branch3x3)
        branch_pool = F.avg_pool2d(x, kernel_size=3, stride=1, padding=1)
        branch_pool = self.branch_pool(branch_pool)
        # Every branch keeps the spatial size, so the outputs can be
        # concatenated along the channel dimension: 16 + 24 + 24 + 24 = 88.
        outputs = [branch1x1, branch5x5, branch3x3, branch_pool]
        return torch.cat(outputs, dim=1)
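The script goes on to call `Net()`, but the Inception listing does not include a `Net` class. Below is a minimal sketch of one way to assemble it, assuming two 5x5 convolutions, each followed by max pooling and an `InceptionA` block, and a single linear classifier. The channel widths 10 and 20 and the layer names are assumptions; the values 88 and 1408 follow from the `InceptionA` definition and a 28x28 input.

```python
import torch
import torch.nn.functional as F


class InceptionA(torch.nn.Module):
    """Same four-branch block as in the listing above."""

    def __init__(self, in_channels):
        super().__init__()
        self.branch1x1 = torch.nn.Conv2d(in_channels, 16, kernel_size=1)
        self.branch5x5_1 = torch.nn.Conv2d(in_channels, 16, kernel_size=1)
        self.branch5x5_2 = torch.nn.Conv2d(16, 24, kernel_size=5, padding=2)
        self.branch3x3_1 = torch.nn.Conv2d(in_channels, 16, kernel_size=1)
        self.branch3x3_2 = torch.nn.Conv2d(16, 24, kernel_size=3, padding=1)
        self.branch3x3_3 = torch.nn.Conv2d(24, 24, kernel_size=3, padding=1)
        self.branch_pool = torch.nn.Conv2d(in_channels, 24, kernel_size=1)

    def forward(self, x):
        b1 = self.branch1x1(x)
        b5 = self.branch5x5_2(self.branch5x5_1(x))
        b3 = self.branch3x3_3(self.branch3x3_2(self.branch3x3_1(x)))
        bp = self.branch_pool(F.avg_pool2d(x, kernel_size=3, stride=1, padding=1))
        return torch.cat([b1, b5, b3, bp], dim=1)


class Net(torch.nn.Module):
    """Hypothetical network built from InceptionA blocks (layer sizes are assumptions)."""

    def __init__(self):
        super().__init__()
        self.conv1 = torch.nn.Conv2d(1, 10, kernel_size=5)
        # InceptionA always outputs 16 + 24 + 24 + 24 = 88 channels.
        self.conv2 = torch.nn.Conv2d(88, 20, kernel_size=5)
        self.incep1 = InceptionA(in_channels=10)
        self.incep2 = InceptionA(in_channels=20)
        self.mp = torch.nn.MaxPool2d(2)
        # 88 channels * 4 * 4 spatial positions = 1408 features for 28x28 inputs.
        self.fc = torch.nn.Linear(1408, 10)

    def forward(self, x):
        in_size = x.size(0)
        x = F.relu(self.mp(self.conv1(x)))  # (N, 10, 12, 12)
        x = self.incep1(x)                  # (N, 88, 12, 12)
        x = F.relu(self.mp(self.conv2(x)))  # (N, 20, 4, 4)
        x = self.incep2(x)                  # (N, 88, 4, 4)
        x = x.view(in_size, -1)             # (N, 1408)
        return self.fc(x)                   # (N, 10) raw logits


# Quick shape check on a random batch of two MNIST-sized images.
logits = Net()(torch.randn(2, 1, 28, 28))
print(logits.shape)
```

If the input resolution or the channel widths change, the `1408` in the linear layer has to be recomputed accordingly.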

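# Net is the network assembled from InceptionA blocks; define it before this line.
model = Net()
# Use the GPU when one is available
device = torch.device("cuda:0" if torch.cuda.is_available() else "cpu")
model.to(device)

# 3. Construct the loss and optimizer
criterion = torch.nn.CrossEntropyLoss()
optimizer = optim.SGD(model.parameters(), lr=0.01, momentum=0.5)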
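`torch.nn.CrossEntropyLoss` expects raw logits and integer class indices, which is why the network has no softmax layer at the end. The sketch below, using made-up logits and targets, shows that it matches a log-softmax followed by the negative log-likelihood loss.

```python
import torch
import torch.nn.functional as F

torch.manual_seed(0)
logits = torch.randn(4, 10)          # raw scores: 4 samples, 10 classes
target = torch.tensor([3, 0, 9, 1])  # class indices, not one-hot vectors

criterion = torch.nn.CrossEntropyLoss()
loss = criterion(logits, target)

# The same value computed in two explicit steps.
manual = F.nll_loss(F.log_softmax(logits, dim=1), target)
print(loss.item(), manual.item())
```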

# 4. Training and test loops
def train(epoch):
    running_loss = 0.0
    for batch_idx, (inputs, target) in enumerate(train_loader):
        # Move the batch to the same device as the model
        inputs, target = inputs.to(device), target.to(device)
        optimizer.zero_grad()
        outputs = model(inputs)
        loss = criterion(outputs, target)
        loss.backward()
        optimizer.step()
        running_loss += loss.item()
        # Report the mean loss every 300 batches
        if batch_idx % 300 == 299:
            print('[%d, %5d] loss: %.3f' % (epoch+1, batch_idx+1, running_loss/300))
            running_loss = 0.0

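def test():
    correct = 0
    total = 0
    with torch.no_grad():
        for data in test_loader:
            images, labels = data
            # The model lives on `device`, so the test batch must be moved there too
            images, labels = images.to(device), labels.to(device)
            outputs = model(images)
            # The index of the largest logit is the predicted class
            _, predicted = torch.max(outputs, dim=1)
            total += labels.size(0)
            correct += (predicted == labels).sum().item()
    print('Accuracy on test set: %.2f %%' % (100 * correct / total))
    return correct / total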
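The accuracy count in `test()` relies on `torch.max(..., dim=1)` returning both the maximum values and their indices; the indices serve as the predicted classes. A small sketch with made-up scores for four samples and three classes (the numbers are illustrative only):

```python
import torch

# Made-up logits: one row per sample, one column per class.
outputs = torch.tensor([[0.1, 2.0, -1.0],
                        [1.5, 0.2, 0.3],
                        [0.0, 0.1, 0.9],
                        [2.2, 2.1, 0.0]])
labels = torch.tensor([1, 0, 2, 1])

# torch.max along dim=1 returns (values, indices); only the indices are needed.
_, predicted = torch.max(outputs, dim=1)

# Comparing element-wise gives a boolean tensor; summing it counts the hits.
correct = (predicted == labels).sum().item()
accuracy = correct / labels.size(0)
print(predicted.tolist(), correct, accuracy)
```

Here the last sample is misclassified (class 0 narrowly beats class 1), so three of four predictions are correct.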

if __name__ == '__main__':
    epoch_list = []
    acc_list = []
    for epoch in range(10):
        train(epoch)
        acc = test()
        epoch_list.append(epoch)
        acc_list.append(acc)
        # if epoch % 10 == 9:
        #     test()

    import os
    # Tolerate duplicate OpenMP runtimes, which can otherwise abort the plot on some setups
    os.environ['KMP_DUPLICATE_LIB_OK'] = 'TRUE'
    plt.plot(epoch_list, acc_list)
    plt.xlabel('epoch')
    plt.ylabel('accuracy')
    plt.show()

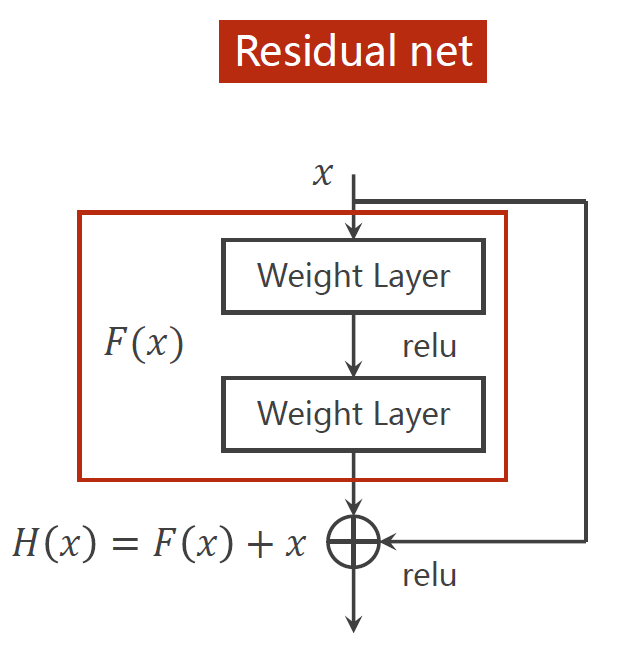

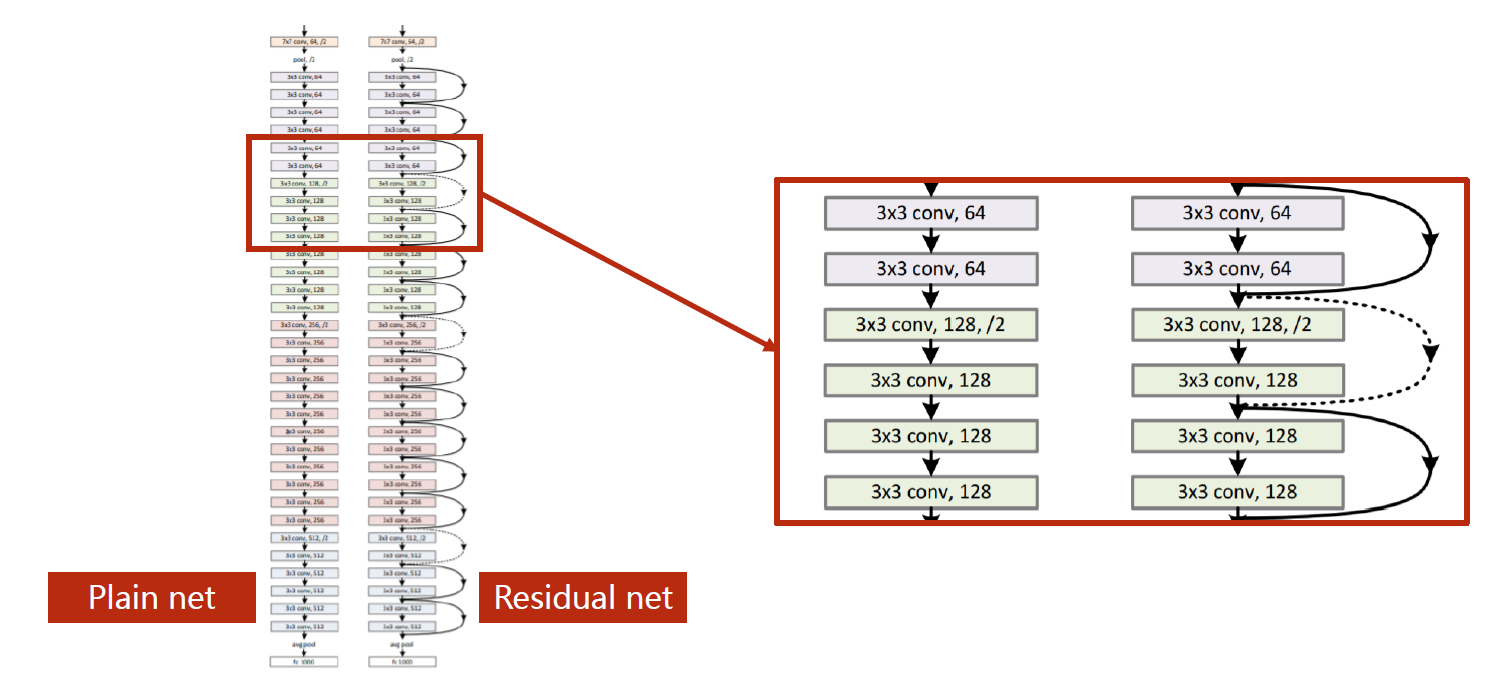

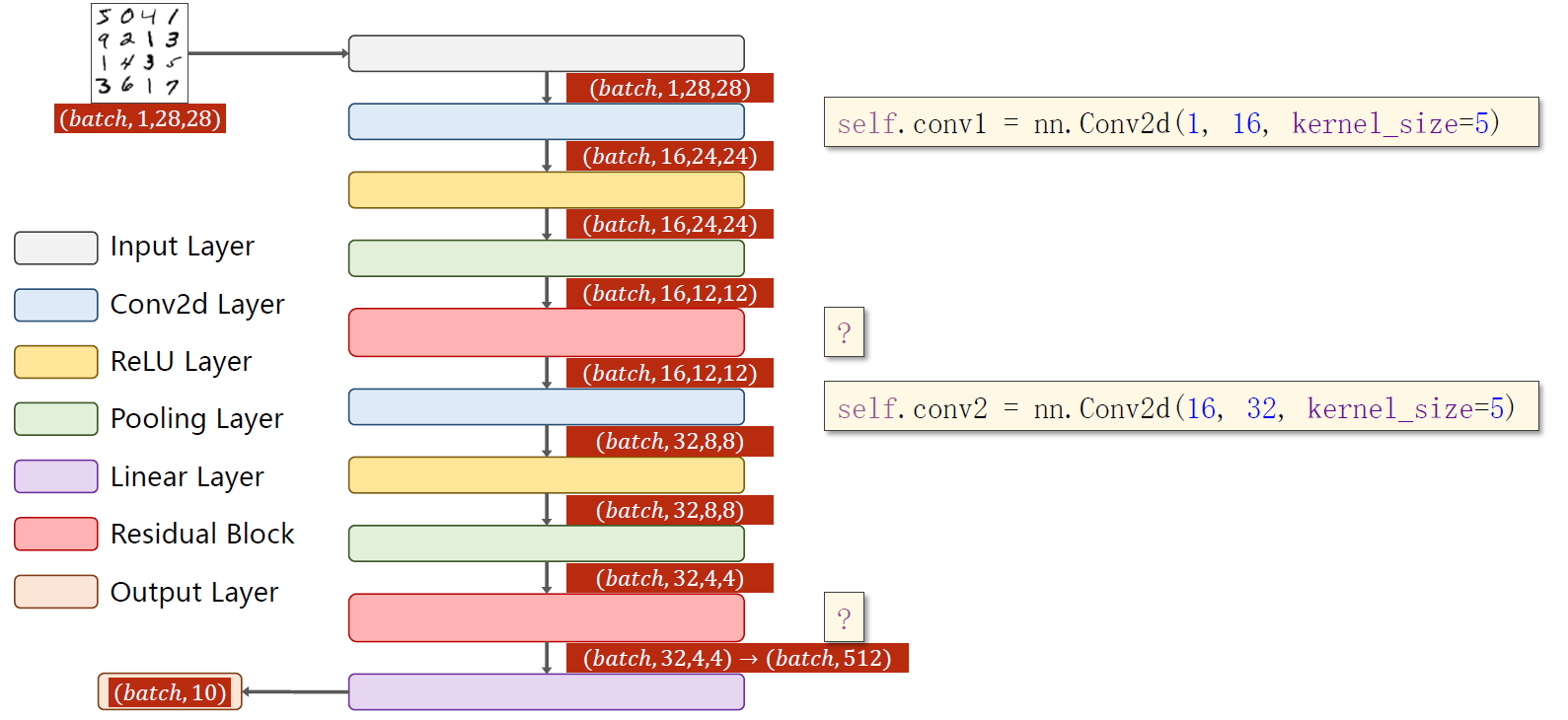

ResNet

import torch
from torch.utils.data import DataLoader
from torchvision import transforms
from torchvision import datasets
import torch.nn.functional as F
import torch.optim as optim
import matplotlib.pyplot as plt

# Outline:
# 1. prepare dataset
# 2. design model using class
# 3. construct loss and optimizer
# 4. training cycle + test

# 1. Prepare the dataset
batch_size = 32
transform = transforms.Compose([
    transforms.ToTensor(),
    transforms.Normalize((0.1307,), (0.3081,))  # MNIST mean and standard deviation
])
train_dataset = datasets.MNIST(root='./dataset/mnist',
                               train=True,
                               transform=transform,
                               download=True)
print(train_dataset[0])  # inspect the first (image tensor, label) pair
test_dataset = datasets.MNIST(root='./dataset/mnist',
                              train=False,
                              transform=transform,
                              download=True)
train_loader = DataLoader(dataset=train_dataset,
                          batch_size=batch_size,
                          shuffle=True)
test_loader = DataLoader(dataset=test_dataset,
                         batch_size=batch_size,
                         shuffle=False)

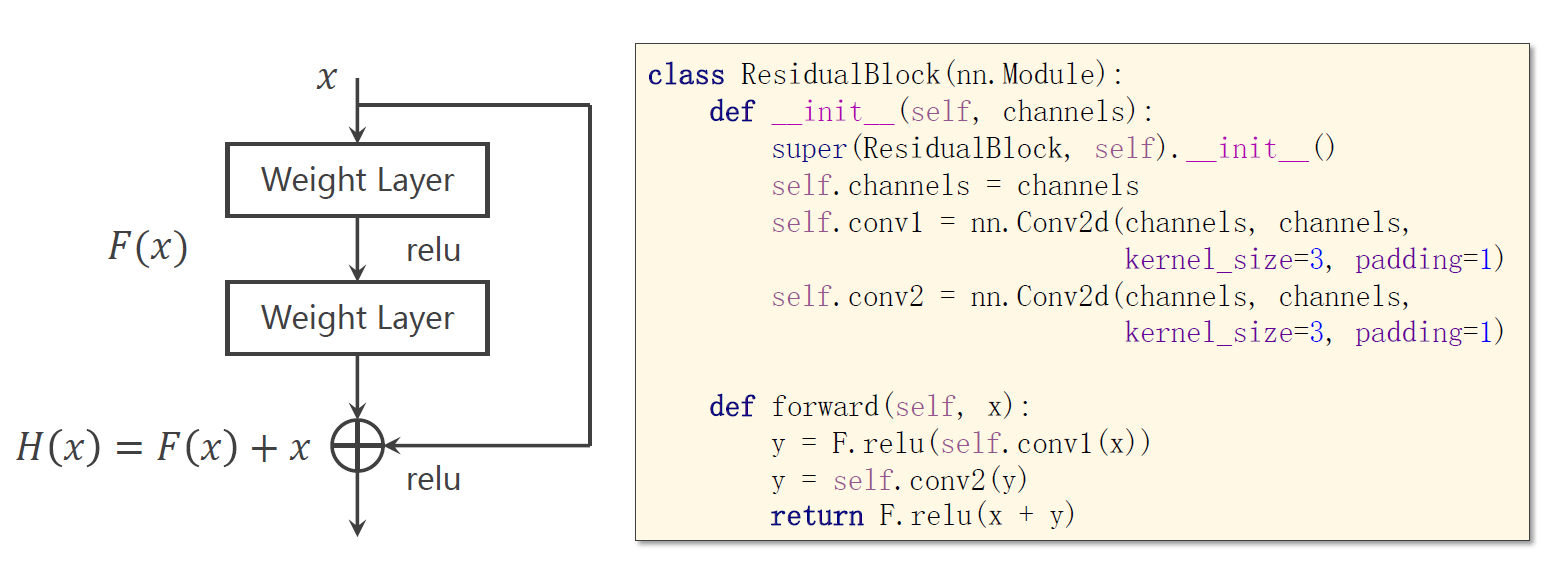

# -------------------------- ResNet ------------------------
class ResidualBlock(torch.nn.Module):
    def __init__(self, channels):
        super(ResidualBlock, self).__init__()
        self.channels = channels
        # Both convolutions keep the channel count and, with padding=1, the spatial size
        self.conv1 = torch.nn.Conv2d(channels, channels, kernel_size=3, padding=1)
        self.conv2 = torch.nn.Conv2d(channels, channels, kernel_size=3, padding=1)

    def forward(self, x):
        y = F.relu(self.conv1(x))
        y = self.conv2(y)
        # Skip connection: add the input before the final activation
        return F.relu(x + y)
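The addition `x + y` only works because the block leaves the tensor shape unchanged: the channel count stays the same and a 3x3 kernel with `padding=1` preserves height and width. A short check on a random tensor, repeating the `ResidualBlock` definition so the snippet runs on its own (the batch size and the 12x12 resolution are arbitrary choices):

```python
import torch
import torch.nn.functional as F


class ResidualBlock(torch.nn.Module):
    """Same block as in the listing above."""

    def __init__(self, channels):
        super().__init__()
        self.conv1 = torch.nn.Conv2d(channels, channels, kernel_size=3, padding=1)
        self.conv2 = torch.nn.Conv2d(channels, channels, kernel_size=3, padding=1)

    def forward(self, x):
        y = F.relu(self.conv1(x))
        y = self.conv2(y)
        return F.relu(x + y)


block = ResidualBlock(16)
x = torch.randn(2, 16, 12, 12)  # arbitrary batch of 16-channel feature maps
out = block(x)
print(x.shape, out.shape)
```

Because input and output shapes match, such blocks can be inserted between existing layers without changing the sizes of anything downstream.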

class Net(torch.nn.Module):
    def __init__(self):
        super(Net, self).__init__()
        self.conv1 = torch.nn.Conv2d(1, 16, kernel_size=5)
        self.conv2 = torch.nn.Conv2d(16, 32, kernel_size=5)
        self.mp = torch.nn.MaxPool2d(2)
        self.rblock1 = ResidualBlock(16)
        self.rblock2 = ResidualBlock(32)
        self.fc1 = torch.nn.Linear(512, 256)  # 512 = 32 channels * 4 * 4
        self.fc2 = torch.nn.Linear(256, 128)
        self.fc3 = torch.nn.Linear(128, 64)
        self.fc4 = torch.nn.Linear(64, 10)

    def forward(self, x):
        in_size = x.size(0)
        x = self.mp(F.relu(self.conv1(x)))  # (N, 16, 12, 12)
        x = self.rblock1(x)
        x = self.mp(F.relu(self.conv2(x)))  # (N, 32, 4, 4)
        x = self.rblock2(x)
        x = x.view(in_size, -1)  # flatten to (N, 512)
        # There is no activation between the linear layers,
        # so together they act as a single linear map.
        x = self.fc1(x)
        x = self.fc2(x)
        x = self.fc3(x)
        x = self.fc4(x)
        return x  # raw logits; CrossEntropyLoss applies log-softmax internally
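The input size of `fc1` (512) follows from tracing the spatial size of a 28x28 image through the layers. The sketch below does that arithmetic with the standard convolution output-size formula; the helper names `conv_out` and `pool_out` are introduced here for illustration only.

```python
def conv_out(size: int, kernel: int, padding: int = 0, stride: int = 1) -> int:
    """Output width/height of a convolution: floor((size + 2p - k) / s) + 1."""
    return (size + 2 * padding - kernel) // stride + 1


def pool_out(size: int, kernel: int) -> int:
    """Output width/height of max pooling with stride equal to the kernel size."""
    return size // kernel


size = 28                            # MNIST images are 28x28
size = conv_out(size, kernel=5)      # conv1: 28 -> 24
size = pool_out(size, kernel=2)      # max pool: 24 -> 12
size = conv_out(size, 3, padding=1)  # residual block convs keep 12
size = conv_out(size, kernel=5)      # conv2: 12 -> 8
size = pool_out(size, kernel=2)      # max pool: 8 -> 4
size = conv_out(size, 3, padding=1)  # residual block convs keep 4

flat_features = 32 * size * size     # 32 channels after conv2
print(size, flat_features)
```

The same trace explains why the flattened size must be recomputed whenever the kernel sizes, pooling, or input resolution change.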

model = Net()
# Use the GPU when one is available
device = torch.device("cuda:0" if torch.cuda.is_available() else "cpu")
model.to(device)

# 3. Construct the loss and optimizer
criterion = torch.nn.CrossEntropyLoss()
optimizer = optim.SGD(model.parameters(), lr=0.01, momentum=0.5)
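With `momentum=0.5`, each SGD step moves along a running combination of the current and past gradients rather than the current gradient alone. As a hedged illustration, the sketch below applies the update `v = momentum * v + grad; p = p - lr * v` by hand on a toy quadratic loss and compares it with `optim.SGD` using the same `lr` and `momentum` as the script. The toy parameter and loss are assumptions made for the example.

```python
import torch
import torch.optim as optim

lr, momentum = 0.01, 0.5

# Parameter updated by the library optimizer.
p = torch.tensor([1.0, -2.0], requires_grad=True)
optimizer = optim.SGD([p], lr=lr, momentum=momentum)

# The same starting point, updated by hand.
q = torch.tensor([1.0, -2.0])
velocity = torch.zeros_like(q)

for _ in range(3):
    # Library update on the toy loss sum(p^2), whose gradient is 2 * p.
    optimizer.zero_grad()
    loss = (p ** 2).sum()
    loss.backward()
    optimizer.step()

    # Manual update with the momentum rule.
    grad = 2 * q
    velocity = momentum * velocity + grad
    q = q - lr * velocity

print(p.detach(), q)
```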

# 4. Training and test loops
def train(epoch):
    running_loss = 0.0
    for batch_idx, (inputs, target) in enumerate(train_loader):
        # Move the batch to the same device as the model
        inputs, target = inputs.to(device), target.to(device)
        optimizer.zero_grad()
        outputs = model(inputs)
        loss = criterion(outputs, target)
        loss.backward()
        optimizer.step()
        running_loss += loss.item()
        # Report the mean loss every 300 batches
        if batch_idx % 300 == 299:
            print('[%d, %5d] loss: %.3f' % (epoch+1, batch_idx+1, running_loss/300))
            running_loss = 0.0

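def test():
    correct = 0
    total = 0
    with torch.no_grad():
        for data in test_loader:
            images, labels = data
            # The model lives on `device`, so the test batch must be moved there too
            images, labels = images.to(device), labels.to(device)
            outputs = model(images)
            # The index of the largest logit is the predicted class
            _, predicted = torch.max(outputs, dim=1)
            total += labels.size(0)
            correct += (predicted == labels).sum().item()
    print('Accuracy on test set: %.2f %%' % (100 * correct / total))
    return correct / total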

if __name__ == '__main__':
    epoch_list = []
    acc_list = []
    for epoch in range(10):
        train(epoch)
        acc = test()
        epoch_list.append(epoch)
        acc_list.append(acc)
        # if epoch % 10 == 9:
        #     test()

    import os
    # Tolerate duplicate OpenMP runtimes, which can otherwise abort the plot on some setups
    os.environ['KMP_DUPLICATE_LIB_OK'] = 'TRUE'
    plt.plot(epoch_list, acc_list)
    plt.xlabel('epoch')
    plt.ylabel('accuracy')
    plt.show()

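This post shows how to improve a basic model with the Inception module from GoogLeNet and the residual structure from ResNet, reaching an accuracy above 99% on the MNIST dataset. The code examples cover building the InceptionA module and the ResidualBlock, along with the complete training and testing workflow.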
