ImportError: This modeling file requires the following packages that were not found in your environment: flash_attn. flash-attn installs successfully, but importing it fails

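Problem description:

Running Llama2 requires flash-attn, so I looked for the matching whl file at https://github.com/Dao-AILab/flash-attention/releases and installed it.

Using nvidia-smi, pip show torch, and pip debug, I found that the environment is CUDA 12.2, torch 2.1.2, and cp310-cp310-manylinux_2_35_x86_64.

The matching whl file parameters are therefore cu12, torch2.1, and cp310-cp310-linux_x86_64.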
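
The mapping from version numbers to filename tags can be sketched in a few lines of Python. This is only an illustration: the helper name `wheel_tags` is made up here, and the tag format is inferred from the whl filenames quoted in this post, not from any official specification.

```python
import sys


def wheel_tags(cuda_version: str, torch_version: str, py_version=None):
    """Return the (CUDA, torch, Python) tags as they appear in the whl filename."""
    if py_version is None:
        py_version = sys.version_info[:2]
    # "12.2" -> "cu12": only the CUDA major version appears in the filename.
    cuda_tag = "cu" + cuda_version.split(".")[0]
    # "2.1.2+cu121" -> "torch2.1": drop the local suffix and the patch number.
    major, minor = torch_version.split("+")[0].split(".")[:2]
    torch_tag = f"torch{major}.{minor}"
    # (3, 10) -> "cp310"
    py_tag = f"cp{py_version[0]}{py_version[1]}"
    return cuda_tag, torch_tag, py_tag


print(wheel_tags("12.2", "2.1.2", (3, 10)))  # ('cu12', 'torch2.1', 'cp310')
```

Note that these three tags do not fully determine the file: the filename also carries a cxx11abi field.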

Run the following install command:

pip install https://github.com/Dao-AILab/flash-attention/releases/download/v2.7.1.post4/flash_attn-2.7.1.post4+cu12torch2.1cxx11abiTRUE-cp310-cp310-linux_x86_64.whl --no-build-isolation

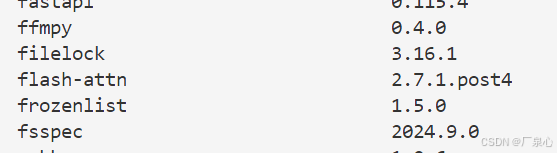

pip list shows that flash-attn is installed.

Even though the installation succeeded, the import fails with:

ImportError: This modeling file requires the following packages that were not found in your environment: flash_attn.

undefined symbol: _ZN3c105ErrorC2ENS_14SourceLocationENSt7__cxx1112basic_stringIcSt11char_traitsIcESaIcEEE
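
The undefined symbol itself hints at the cause. The fragment `NSt7__cxx1112basic_string` is the mangled form of `std::__cxx11::basic_string`, the string type libstdc++ uses when its newer C++11 ABI is enabled, so the extension appears to expect a `c10::Error` constructor that the installed torch does not export. A rough heuristic check, with a hypothetical helper name:

```python
def built_for_cxx11_abi(mangled_symbol: str) -> bool:
    """Heuristic: True if the mangled symbol references the std::__cxx11 namespace."""
    return "__cxx11" in mangled_symbol


# The symbol reported in the ImportError above.
symbol = (
    "_ZN3c105ErrorC2ENS_14SourceLocationE"
    "NSt7__cxx1112basic_stringIcSt11char_traitsIcESaIcEEE"
)
print(built_for_cxx11_abi(symbol))  # True
```

This is a string match on the symbol name only, not a real demangler, so treat the result as a hint and not as proof.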

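Solution:

Originally downloaded file: flash_attn-2.7.1.post4+cu12torch2.1cxx11abiTRUE-cp310-cp310-linux_x86_64.whl

In this environment, a whl file whose name contains abiTRUE produces the error above, because its C++11 ABI setting does not match the one the installed torch was built with. Install the whl file whose name contains abiFALSE instead.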
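
Instead of guessing, the ABI setting can be read from the installed torch with `torch.compiled_with_cxx11_abi()`. The sketch below assumes the `cxx11abiTRUE` / `cxx11abiFALSE` naming seen in the filenames in this post; `abi_tag` and `wheel_matches_abi` are illustrative helper names, not part of torch or flash-attn.

```python
def abi_tag(cxx11_abi: bool) -> str:
    """Map PyTorch's ABI flag to the tag used in the whl filename."""
    return "cxx11abiTRUE" if cxx11_abi else "cxx11abiFALSE"


def wheel_matches_abi(wheel_name: str, cxx11_abi: bool) -> bool:
    """True if the whl filename carries the tag that matches the given ABI flag."""
    return abi_tag(cxx11_abi) in wheel_name


try:
    import torch
except ImportError:
    print("torch is not installed in this environment")
else:
    # Reports whether this torch build was compiled with _GLIBCXX_USE_CXX11_ABI=1.
    flag = torch.compiled_with_cxx11_abi()
    print("torch built with C++11 ABI:", flag)
    print("pick a whl file containing:", abi_tag(flag))
```

If the flag is False, as it evidently was for the torch 2.1.2 build used here, the abiFALSE file is the one to download.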

Uninstall the existing flash-attn:

pip uninstall flash-attn

Replacement whl file: flash_attn-2.7.1.post4+cu12torch2.1cxx11abiFALSE-cp310-cp310-linux_x86_64.whl

pip install https://github.com/Dao-AILab/flash-attention/releases/download/v2.7.1.post4/flash_attn-2.7.1.post4+cu12torch2.1cxx11abiFALSE-cp310-cp310-linux_x86_64.whl --no-build-isolation
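
After reinstalling, it is worth importing the package directly, since pip list only shows that the package is installed, not that its compiled extension loads. A minimal sketch (the helper `try_import` is made up for this example):

```python
import importlib


def try_import(module_name: str):
    """Import a module; return (True, None) or (False, error text) on failure."""
    try:
        importlib.import_module(module_name)
    except Exception as exc:
        # A missing symbol in a compiled extension surfaces as ImportError,
        # and its message contains the "undefined symbol" text.
        return False, f"{type(exc).__name__}: {exc}"
    return True, None


print(try_import("flash_attn"))
```

A result of `(True, None)` means the extension loaded. Otherwise the second element holds the underlying error, which is more specific than the "not found in your environment" message.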
