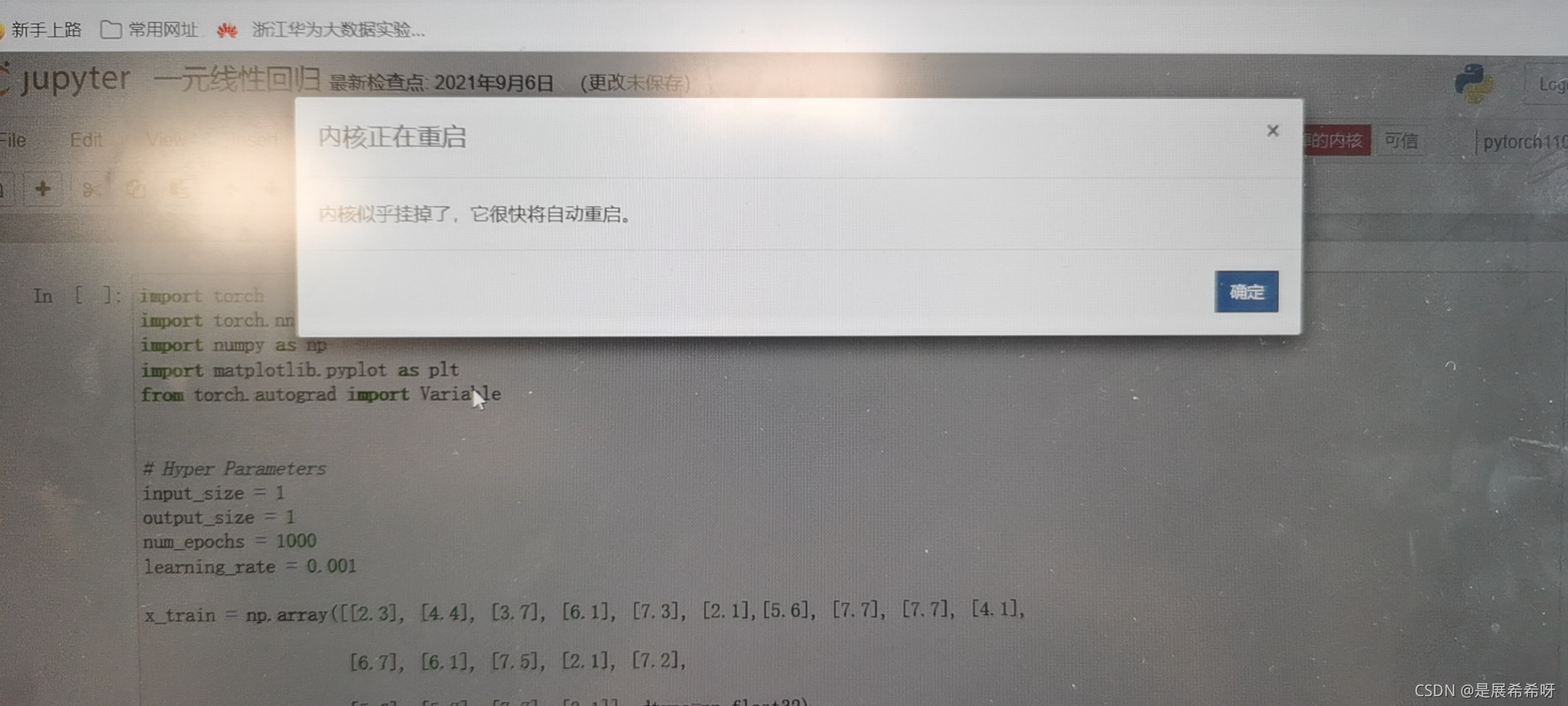

Fixing a Jupyter Notebook kernel crash when running code

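After installing and configuring PyCharm, running `import torch as t` crashed the notebook kernel. I later learned that I had ticked an extra option while installing notebook, which left the kernel empty. I reinstalled notebook and set up the PyTorch environment again, but the crash still occurred.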
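A notebook usually reports only that the kernel died, so the underlying error message is easy to miss. One way to surface it, sketched here on the assumption that the notebook kernel uses the same Python interpreter, is to attempt the import in a child process and read its stderr. The helper name `try_import` is hypothetical and not part of the original post.

```python
import subprocess
import sys


def try_import(module: str, timeout: float = 120.0):
    """Import `module` in a fresh interpreter; return (exit code, stderr text)."""
    proc = subprocess.run(
        [sys.executable, "-c", f"import {module}"],
        capture_output=True,
        text=True,
        timeout=timeout,
    )
    return proc.returncode, proc.stderr


if __name__ == "__main__":
    # A non-zero exit code means the import failed or the process was killed;
    # stderr then holds whatever message the notebook UI did not show.
    code, err = try_import("torch")
    print("exit code:", code)
    print(err or "(no error output)")
```

Because the import runs in a separate process, a hard crash there does not take the calling session down with it.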

Here is the original code:

    import torch
    import torch.nn as nn
    import numpy as np
    import matplotlib.pyplot as plt

    # Hyperparameters
    input_size = 1
    output_size = 1
    num_epochs = 1000
    learning_rate = 0.001

    # Training inputs as a (19, 1) float32 matrix
    x_train = np.array([[2.3], [4.4], [3.7], [6.1], [7.3], [2.1], [5.6], [7.7], [7.7], [4.1],
                        [6.7], [6.1], [7.5], [2.1], [7.2],
                        [5.6], [5.7], [7.7], [3.1]], dtype=np.float32)

    # Training targets, same shape as x_train
    y_train = np.array([[2.7], [4.76], [4.1], [7.1], [7.6], [3.5], [5.4], [7.6], [7.9], [5.3],
                        [7.3], [7.5], [7.5], [3.2], [7.7],
                        [6.4], [6.6], [7.9], [4.9]], dtype=np.float32)

    # Scatter plot of the training data
    plt.figure()
    plt.scatter(x_train, y_train)
    plt.xlabel('x_train')  # x-axis label
    plt.ylabel('y_train')  # y-axis label
    plt.show()             # display the figure


    # Linear regression model
    class LinearRegression(nn.Module):
        def __init__(self, input_size, output_size):
            super().__init__()
            self.linear = nn.Linear(input_size, output_size)

        def forward(self, x):
            return self.linear(x)


    model = LinearRegression(input_size, output_size)

    # Loss and optimizer
    criterion = nn.MSELoss()
    optimizer = torch.optim.SGD(model.parameters(), lr=learning_rate)

    # Convert the NumPy arrays to tensors (Variable is no longer needed)
    inputs = torch.from_numpy(x_train)
    targets = torch.from_numpy(y_train)

    # Train the model
    for epoch in range(num_epochs):
        # Forward + backward + optimize
        optimizer.zero_grad()
        outputs = model(inputs)
        loss = criterion(outputs, targets)
        loss.backward()
        optimizer.step()

        if (epoch + 1) % 50 == 0:
            print(f'Epoch [{epoch + 1}/{num_epochs}], Loss: {loss.item():.4f}')

    # Plot the fitted line over the training data
    model.eval()
    predicted = model(inputs).detach().numpy()
    plt.plot(x_train, y_train, 'ro')
    plt.plot(x_train, predicted, label='predict')
    plt.legend()
    plt.show()

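Running this code crashed the kernel. I later concluded that the cause was Jupyter Notebook not actually being shut down after each session, so the leftover instances were occupying a large amount of memory.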
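To check whether earlier notebook servers are in fact still running, one option is to ask Jupyter for its list of active servers. The following is a minimal sketch that assumes the `jupyter` command is on `PATH` and that the classic `jupyter notebook list` subcommand is available; on newer installations the equivalent may be `jupyter server list`. The function name `list_jupyter_servers` is hypothetical.

```python
import shutil
import subprocess


def list_jupyter_servers(timeout: float = 30.0):
    """Return the output of `jupyter notebook list`, or None if it cannot be run."""
    exe = shutil.which("jupyter")
    if exe is None:
        return None  # jupyter is not on PATH in this environment
    try:
        proc = subprocess.run(
            [exe, "notebook", "list"],
            capture_output=True,
            text=True,
            timeout=timeout,
        )
    except (OSError, subprocess.TimeoutExpired):
        return None
    # Keep stderr as well, since some versions print notices there.
    return proc.stdout + proc.stderr


if __name__ == "__main__":
    print(list_jupyter_servers() or "jupyter command not available")
```

A server that still appears in the list can be stopped by pressing Ctrl+C in the terminal where it was started.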

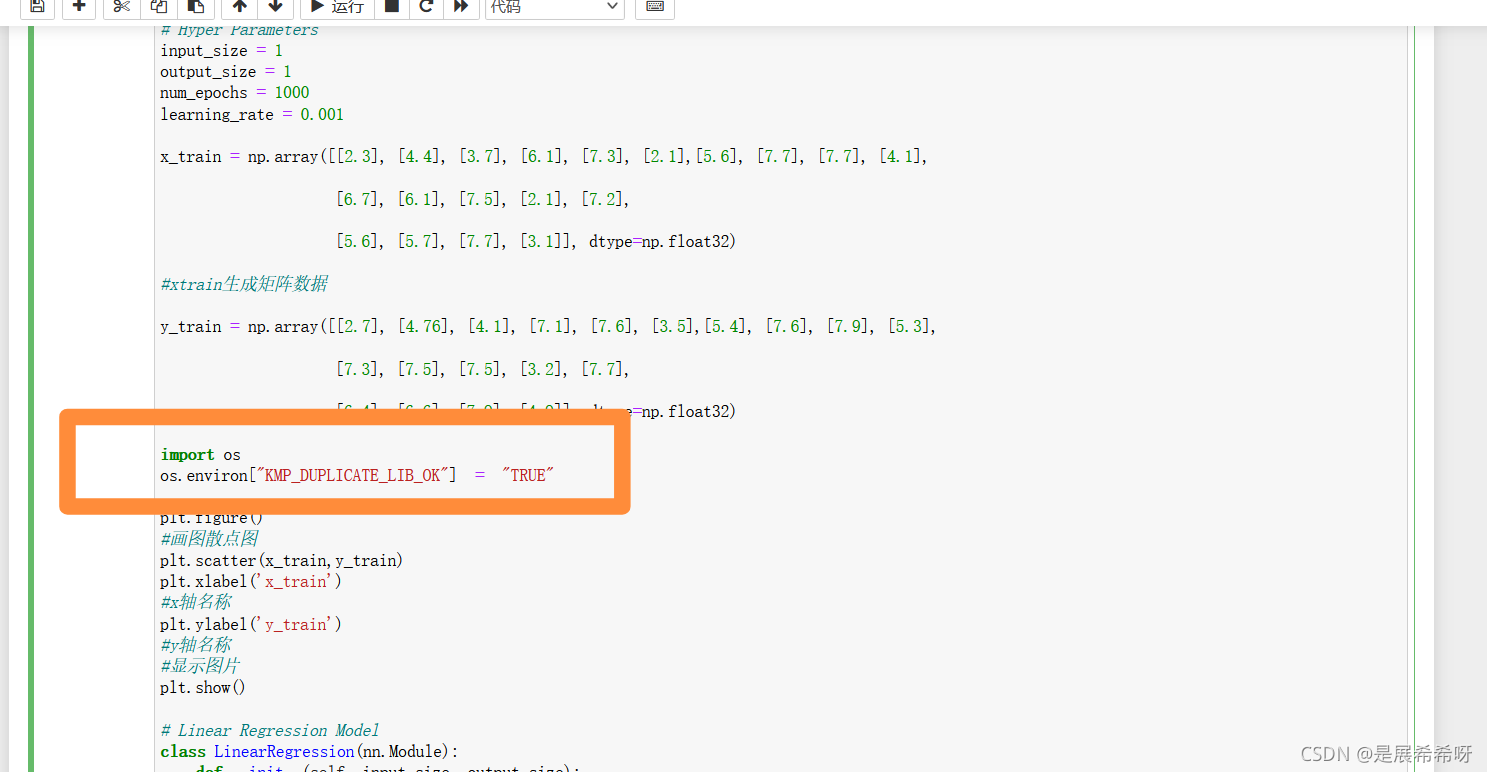

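Adding the following at the top of the code was enough:

    import os
    os.environ["KMP_DUPLICATE_LIB_OK"] = "TRUE"

After that, the kernel no longer crashed. This variable tells Intel's OpenMP runtime to keep running when more than one copy of it has been loaded into the same process, a situation that can arise when PyTorch and NumPy or matplotlib each ship their own copy.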
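The order matters here: the variable has to be set before the libraries that load the OpenMP runtime are imported. A minimal sketch of a small helper that does this without overwriting a value the user has already chosen is shown below. The function name `allow_duplicate_openmp` and its `env` parameter are hypothetical and exist only for illustration.

```python
import os


def allow_duplicate_openmp(env=None) -> str:
    """Set KMP_DUPLICATE_LIB_OK to "TRUE" unless a value is already present.

    `env` defaults to the real process environment; passing a plain dict
    makes the behaviour easy to check without touching os.environ.
    """
    if env is None:
        env = os.environ
    return env.setdefault("KMP_DUPLICATE_LIB_OK", "TRUE")


# Call this first, then import torch, numpy and matplotlib afterwards.
allow_duplicate_openmp()
```

In a notebook, this belongs in the very first cell, and the kernel should be restarted so that no library has been imported before the variable is set.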

The final code is the original script with these two lines added at the very top.

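While configuring PyCharm and using PyTorch, the notebook kernel crashed. The first suspected cause was a mistake made during installation, but reinstalling notebook and reconfiguring the environment did not resolve it. The problem was eventually attributed to Jupyter Notebook not being shut down properly, which led to high memory usage. The fix is to add `import os; os.environ["KMP_DUPLICATE_LIB_OK"] = "TRUE"` at the start of the code.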
