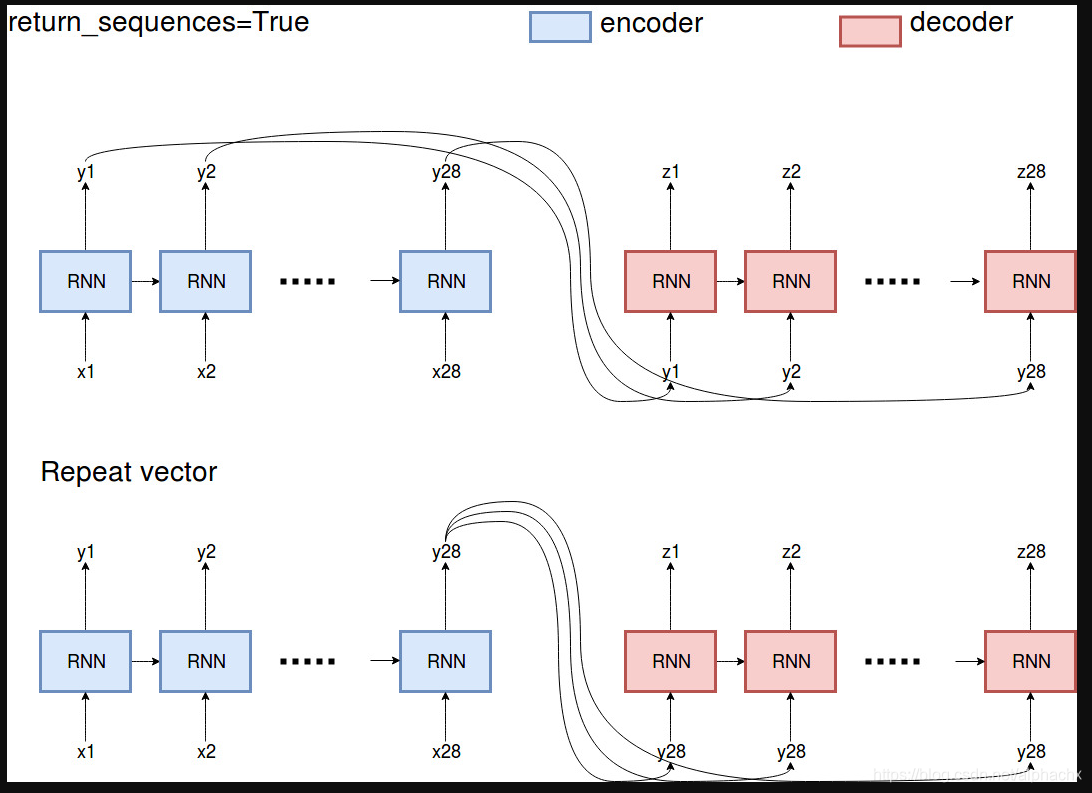

Essentially, return_sequences=True returns the encoder's output at every timestep, whereas RepeatVector takes only the encoder's last output (what you get with return_sequences=False) and repeats it once per decoder timestep.
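To make the difference in shapes concrete, here is a minimal NumPy sketch. It is not Keras code: the tanh RNN cell, the weights `W_x` and `W_h`, and the helper `run_rnn` are made up for illustration, and only the resulting shapes are meant to mirror what the Keras options produce.

```python
import numpy as np

rng = np.random.default_rng(0)
timesteps, n_features, units = 5, 3, 4

# Toy weights for a plain tanh RNN cell (hypothetical, not Keras internals).
W_x = rng.normal(size=(n_features, units))
W_h = rng.normal(size=(units, units))

def run_rnn(x):
    """Return the hidden state after every timestep, shape (timesteps, units)."""
    h = np.zeros(units)
    states = []
    for x_t in x:
        h = np.tanh(x_t @ W_x + h @ W_h)
        states.append(h)
    return np.stack(states)

x = rng.normal(size=(timesteps, n_features))

all_states = run_rnn(x)      # analogous to return_sequences=True
last_state = all_states[-1]  # analogous to return_sequences=False

target_len = 6
# Analogous to RepeatVector(target_len): copy the last state once per output step.
repeated = np.tile(last_state, (target_len, 1))

print(all_states.shape)  # (5, 4)
print(last_state.shape)  # (4,)
print(repeated.shape)    # (6, 4)
```

Every row of `repeated` is the same vector, so a decoder fed this way sees an identical summary of the input at each step, while `all_states` keeps one distinct vector per input timestep.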

Some tips on when to use each of the two:

One example is machine translation. If you have a seq2seq model, do not want to use teacher forcing, and want a quick-and-dirty solution, you can pass the last state of the encoder RNN (the last blue box) to the decoder by using return_sequences=False followed by RepeatVector. If you instead want to compute attention weights over the encoder states, you need return_sequences=True, because attention requires every encoder state, not just the last one.
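The two setups can be sketched in Keras roughly as follows. This is an illustrative sketch rather than a full translation model: the sizes (`src_len`, `tgt_len`, `n_features`, `units`, `vocab`) are arbitrary assumptions, the attention mechanism itself is omitted, and it assumes TensorFlow with its bundled Keras is installed.

```python
import numpy as np
from tensorflow import keras
from tensorflow.keras import layers

# Arbitrary sizes chosen for illustration only.
src_len, tgt_len, n_features, units, vocab = 5, 4, 3, 8, 10

enc_in = keras.Input(shape=(src_len, n_features))

# Option 1: no teacher forcing, no attention.
# Keep only the last encoder output and repeat it for every decoder step.
last = layers.LSTM(units, return_sequences=False)(enc_in)    # (batch, units)
dec_in = layers.RepeatVector(tgt_len)(last)                   # (batch, tgt_len, units)
dec_out = layers.LSTM(units, return_sequences=True)(dec_in)   # (batch, tgt_len, units)
probs = layers.TimeDistributed(
    layers.Dense(vocab, activation="softmax")
)(dec_out)                                                    # (batch, tgt_len, vocab)
repeat_model = keras.Model(enc_in, probs)

# Option 2: encoder intended for attention.
# Expose every encoder state so attention weights can be computed over them.
all_states = layers.LSTM(units, return_sequences=True)(enc_in)  # (batch, src_len, units)
attn_encoder = keras.Model(enc_in, all_states)

batch = np.zeros((2, src_len, n_features), dtype="float32")
repeat_out_shape = tuple(repeat_model(batch).shape)
attn_out_shape = tuple(attn_encoder(batch).shape)
print(repeat_out_shape)  # (2, 4, 10)
print(attn_out_shape)    # (2, 5, 8)
```

In the first model the decoder length is fixed by `RepeatVector(tgt_len)` and is independent of the source length. In the second, the encoder output keeps the source time axis, which is what an attention layer would score against at each decoder step.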

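This article discusses when to use return_sequences and RepeatVector in seq2seq models. Specifically, return_sequences=True returns the outputs from all past timesteps and suits cases such as computing attention weights, while RepeatVector repeats the encoder's last output and suits a quick implementation without teacher forcing.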
