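Knowledge review:

1. The concept of pretraining

2. Common pretrained classification models

3. A history of pretrained image models

4. Pretraining strategies

5. Hands-on pretraining code: resnet18

Homework:

1. Compare other pretrained models on CIFAR-10 and observe the differences; where possible, pick models that differ from those chosen by others.

2. Use Ctrl+click to step into the ResNet source code and see what a residual actually is.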

import torch
import torch.nn as nn
import torch.optim as optim
from torchvision import datasets, transforms
from torchvision.models import resnet18, densenet121
from torchsummary import summary  # inspect model structure
import matplotlib.pyplot as plt

# Device configuration
device = torch.device("cuda" if torch.cuda.is_available() else "cpu")

# CIFAR-10 preprocessing
normalize = transforms.Normalize((0.4914, 0.4822, 0.4465), (0.2023, 0.1994, 0.2010))

# Training set: augmentation plus normalization
transform = transforms.Compose([
    transforms.RandomCrop(32, padding=4),
    transforms.RandomHorizontalFlip(),
    transforms.ToTensor(),
    normalize,
])

# Test set: no augmentation, but the same normalization as the training set
test_transform = transforms.Compose([
    transforms.ToTensor(),
    normalize,
])

train_set = datasets.CIFAR10(root='./data', train=True, download=True, transform=transform)
test_set = datasets.CIFAR10(root='./data', train=False, download=True, transform=test_transform)

train_loader = torch.utils.data.DataLoader(train_set, batch_size=128, shuffle=True)
test_loader = torch.utils.data.DataLoader(test_set, batch_size=128, shuffle=False)

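class DenseBlock(nn.Module):
    """Dense block: every layer receives the concatenation of all earlier feature maps."""

    def __init__(self, in_channels, growth_rate, num_layers):
        super().__init__()
        self.layers = nn.ModuleList()
        for _ in range(num_layers):
            self.layers.append(nn.Sequential(
                nn.Conv2d(in_channels, growth_rate, kernel_size=3, padding=1, bias=False),
                nn.BatchNorm2d(growth_rate),
                nn.ReLU(inplace=True),
            ))
            in_channels += growth_rate  # the next layer also sees this layer's output
        self.out_channels = in_channels

    def forward(self, x):
        for layer in self.layers:
            x = torch.cat([x, layer(x)], dim=1)  # concatenate along the channel axis
        return x


class DenseNetC10(nn.Module):
    def __init__(self, num_classes=10, growth_rate=32):
        super().__init__()
        # Slimmed-down take on DenseNet121: fewer layers and fewer channels
        stem = nn.Sequential(
            nn.Conv2d(3, 32, kernel_size=3, padding=1, bias=False),
            nn.BatchNorm2d(32),
            nn.ReLU(inplace=True),
        )
        # 3 dense blocks of 3 layers each; channels grow 32 -> 128 -> 224 -> 320
        block1 = DenseBlock(32, growth_rate, num_layers=3)
        block2 = DenseBlock(block1.out_channels, growth_rate, num_layers=3)
        block3 = DenseBlock(block2.out_channels, growth_rate, num_layers=3)
        self.features = nn.Sequential(
            stem, block1, block2, block3,
            nn.BatchNorm2d(block3.out_channels),
            nn.ReLU(inplace=True),
            nn.AdaptiveAvgPool2d((1, 1)),
        )
        self.classifier = nn.Linear(block3.out_channels, num_classes)

    def forward(self, x):
        features = self.features(x)
        out = features.view(features.size(0), -1)
        return self.classifier(out)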

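# Initialize the models
# MobileViT and RepVGG are not defined or imported anywhere in this post;
# uncomment those entries only after supplying your own implementations.
models = {
    'DenseNet-C10': DenseNetC10().to(device),
    # 'MobileViT': MobileViT().to(device),
    # 'RepVGG': RepVGG().to(device),
    'ResNet18': resnet18(weights=None, num_classes=10).to(device),  # baseline for comparison
}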

# Training setup
# train_model and test_model must be defined before this loop: each runs one epoch
# over train_loader / test_loader, and test_model returns accuracy in percent.
criterion = nn.CrossEntropyLoss()
accuracies = {}

for model_name, model in models.items():
    print(f'\nTraining {model_name}...')
    optimizer = optim.SGD(model.parameters(), lr=0.01, momentum=0.9, weight_decay=5e-4)
    scheduler = optim.lr_scheduler.CosineAnnealingLR(optimizer, T_max=200)
    best_acc = 0.0
    for epoch in range(1, 201):
        train_model(model, criterion, optimizer, epoch)
        acc = test_model(model, criterion)
        scheduler.step()  # advance the cosine schedule once per epoch
        best_acc = max(best_acc, acc)
    accuracies[model_name] = best_acc

# Print the comparison
print('\nFinal Accuracy Comparison:')
for name, acc in accuracies.items():
    print(f'{name}: {acc:.2f}%')

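def visualize_residual(model, data):
    # Register a forward hook to capture what the block adds to its input
    residuals = []

    def hook(module, inputs, output):
        # output - input equals the residual branch F(x) wherever the block's final
        # ReLU does not clip; where it clips, the difference is -x instead
        residual = output - inputs[0]
        residuals.append(residual.detach().cpu())

    # Hook the first residual block of ResNet18 (layer1[0])
    handle = model.layer1[0].register_forward_hook(hook)
    model.eval()  # use BatchNorm running statistics for a single-image batch
    with torch.no_grad():
        model(data.to(device))
    handle.remove()  # avoid stacking hooks on repeated calls

    # First sample, first channel; for a 32x32 input to torchvision's resnet18 this map is 8x8
    residual = residuals[0][0, 0, :, :]

    # Min-max rescale the input for display so imshow receives values in [0, 1]
    image = data[0].permute(1, 2, 0).cpu()
    image = (image - image.min()) / (image.max() - image.min() + 1e-8)

    plt.figure(figsize=(6, 4))
    plt.subplot(1, 2, 1)
    plt.imshow(image)  # original image
    plt.title('Input Image')
    plt.subplot(1, 2, 2)
    plt.imshow(residual, cmap='coolwarm')  # residual heat map
    plt.title('Residual Map')
    plt.colorbar()
    plt.show()

# Try the residual visualization with an untrained ResNet18 and one test image
resnet_model = resnet18(num_classes=10).to(device)
data, _ = next(iter(test_loader))
visualize_residual(resnet_model, data[:1])  # first sample only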
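For homework 2, it may help to see the residual idea stripped down to a few lines. The block below is a simplified, hypothetical stand-in (`TinyResidualBlock` is not torchvision's class, and it omits stride and downsampling). It computes `relu(F(x) + x)`, where `F` is the two-convolution branch. Zeroing the last BatchNorm scale forces `F(x) = 0`, which makes the shortcut visible: the block then passes a non-negative input through unchanged.

```python
import torch
import torch.nn as nn


class TinyResidualBlock(nn.Module):
    """Simplified residual block: out = relu(F(x) + x), same channels in and out."""

    def __init__(self, channels):
        super().__init__()
        self.conv1 = nn.Conv2d(channels, channels, kernel_size=3, padding=1, bias=False)
        self.bn1 = nn.BatchNorm2d(channels)
        self.conv2 = nn.Conv2d(channels, channels, kernel_size=3, padding=1, bias=False)
        self.bn2 = nn.BatchNorm2d(channels)
        self.relu = nn.ReLU()

    def residual_branch(self, x):
        # F(x): the part the block has to learn
        out = self.relu(self.bn1(self.conv1(x)))
        return self.bn2(self.conv2(out))

    def forward(self, x):
        # The shortcut adds the input back before the final activation
        return self.relu(self.residual_branch(x) + x)


block = TinyResidualBlock(channels=4).eval()
nn.init.zeros_(block.bn2.weight)  # force F(x) = 0 so only the shortcut remains
x = torch.rand(1, 4, 8, 8)        # non-negative input, so the final ReLU leaves it as is
with torch.no_grad():
    y = block(x)
print(torch.allclose(y, x))
```

The check prints `True`: with the branch silenced, the block is an identity map. This is why stacking many such blocks does not have to hurt optimization, since each block only needs to learn a correction on top of its input.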

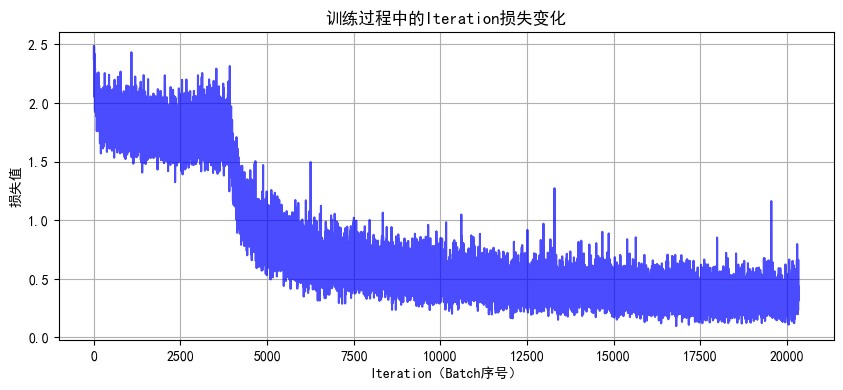

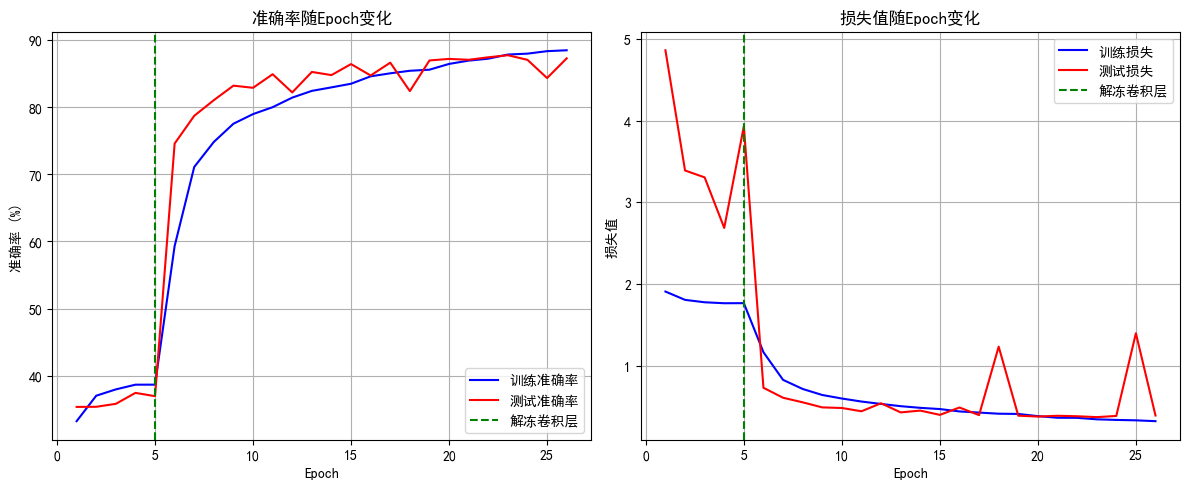

Result with the ResNet50 model:

The best test accuracy reached during training was 88.05%.
