1. Use Kafka 2.2.1 built for Scala 2.11.

The pom.xml file:

<dependency>
    <groupId>org.apache.kafka</groupId>
    <artifactId>kafka_2.11</artifactId>
    <version>2.2.1</version>
</dependency>

2. Producer implemented in Java:

package com.demo.kafka;

import java.util.Properties;

import org.apache.kafka.clients.producer.KafkaProducer;
import org.apache.kafka.clients.producer.ProducerConfig;
import org.apache.kafka.clients.producer.ProducerRecord;
import org.apache.kafka.clients.producer.RecordMetadata;
import org.apache.kafka.common.serialization.StringSerializer;

public class Producer {

    public static void main(String[] args) {
        Properties p = new Properties();
        p.put(ProducerConfig.BOOTSTRAP_SERVERS_CONFIG, "xxx:9092,xxx:9092,xxx:9092"); // broker host:port list
        p.put(ProducerConfig.KEY_SERIALIZER_CLASS_CONFIG, StringSerializer.class.getName()); // key serializer
        p.put(ProducerConfig.VALUE_SERIALIZER_CLASS_CONFIG, StringSerializer.class.getName()); // value serializer
        p.put(ProducerConfig.CLIENT_ID_CONFIG, "producer.client.id.demo");

        KafkaProducer<String, String> producer = new KafkaProducer<>(p);
        try {
            for (int i = 1; i <= 10; i++) { // send 10 messages
                ProducerRecord<String, String> record = new ProducerRecord<>("topic-test-0", "message-" + i);
                // send() is asynchronous; get() blocks until the broker acknowledges the record
                RecordMetadata meta = producer.send(record).get();
                System.out.println("Sent data: topic=" + meta.topic() + "; partition=" + meta.partition() + "; offset=" + meta.offset());
                Thread.sleep(1000);
            }
        } catch (Exception e) {
            e.printStackTrace(); // report failures instead of silently swallowing them
        } finally {
            producer.flush(); // send any buffered records now and block until all of them have been sent
            producer.close();
        }
    }
}

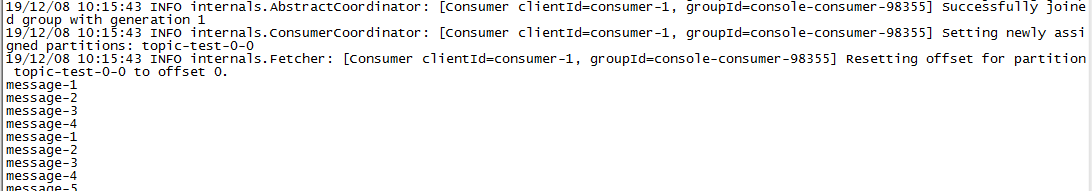
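
The `ProducerConfig` constants used in the producer are ordinary string keys, so the same settings can be kept in a plain `Properties` object or loaded from a `.properties` file. Below is a minimal sketch that uses only the JDK. The class name `ProducerProps`, the helper `build`, and the `localhost:9092` address are illustrative assumptions and not part of the original program.

```java
import java.util.Properties;

// Hypothetical helper (not part of the original program): builds the producer
// settings with the literal keys that the ProducerConfig constants resolve to.
final class ProducerProps {

    private ProducerProps() {
    }

    static Properties build(String bootstrapServers, String clientId) {
        Properties p = new Properties();
        p.setProperty("bootstrap.servers", bootstrapServers); // ProducerConfig.BOOTSTRAP_SERVERS_CONFIG
        p.setProperty("key.serializer",
                "org.apache.kafka.common.serialization.StringSerializer"); // KEY_SERIALIZER_CLASS_CONFIG
        p.setProperty("value.serializer",
                "org.apache.kafka.common.serialization.StringSerializer"); // VALUE_SERIALIZER_CLASS_CONFIG
        p.setProperty("client.id", clientId); // ProducerConfig.CLIENT_ID_CONFIG
        return p;
    }

    public static void main(String[] args) {
        // "localhost:9092" is a placeholder address used for illustration only
        Properties p = build("localhost:9092", "producer.client.id.demo");
        p.forEach((k, v) -> System.out.println(k + "=" + v));
    }
}
```

The returned object can be passed to `new KafkaProducer<>(p)` in the same way as the one built with the constants.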

3. Simulate a consumer

./kafka-console-consumer --bootstrap-server xxx:9092,xxx:9092,xxx:9092 --topic topic-test-0 --from-beginning

Run the Java producer program and watch the messages that the console consumer prints.

4. Consumer Java code:

package com.demo.kafka;

import java.time.Duration;
import java.util.Arrays;
import java.util.Properties;

import org.apache.kafka.clients.consumer.ConsumerConfig;
import org.apache.kafka.clients.consumer.ConsumerRecord;
import org.apache.kafka.clients.consumer.ConsumerRecords;
import org.apache.kafka.clients.consumer.KafkaConsumer;

public class Consumer {

    private static final String TOPIC_EXACTLY_ONCE = "topic-test-0";

    private final KafkaConsumer<String, String> consumer;

    private Consumer() {
        Properties props = new Properties();
        props.put(ConsumerConfig.BOOTSTRAP_SERVERS_CONFIG, "bigdata001:9092,bigdata002:9092,bigdata003:9092");
        props.put(ConsumerConfig.GROUP_ID_CONFIG, "test");
        // Auto-commit is disabled, and this demo never commits offsets itself
        props.put(ConsumerConfig.ENABLE_AUTO_COMMIT_CONFIG, "false");
        // Has no effect while auto-commit is disabled
        props.put(ConsumerConfig.AUTO_COMMIT_INTERVAL_MS_CONFIG, "1000");
        props.put(ConsumerConfig.KEY_DESERIALIZER_CLASS_CONFIG, "org.apache.kafka.common.serialization.StringDeserializer");
        props.put(ConsumerConfig.VALUE_DESERIALIZER_CLASS_CONFIG, "org.apache.kafka.common.serialization.StringDeserializer");
        // Start from the beginning when the group has no committed offset
        props.put(ConsumerConfig.AUTO_OFFSET_RESET_CONFIG, "earliest");
        consumer = new KafkaConsumer<>(props);
    }

    private void consume() {
        consumer.subscribe(Arrays.asList(TOPIC_EXACTLY_ONCE));
        Duration timeout = Duration.ofMillis(100);
        try {
            while (true) {
                ConsumerRecords<String, String> records = consumer.poll(timeout);
                for (ConsumerRecord<String, String> record : records) {
                    System.out.printf("offset = %d, key = %s, value = %s%n", record.offset(), record.key(), record.value());
                }
            }
        } finally {
            consumer.close(); // release the connections if polling fails
        }
    }

    public static void main(String[] args) {
        Consumer kafkaConsumerDemo = new Consumer();
        kafkaConsumerDemo.consume();
    }
}
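
`auto.offset.reset` only applies when the group has no committed offset for a partition. The consumer above disables auto-commit and never commits, so unless the group `test` already has offsets committed by some other run, `earliest` makes it read from the beginning on every start. The rule can be sketched as a small JDK-only model. `StartPosition`, `resolve`, and the offsets in `main` are hypothetical names and values chosen for illustration. This is not Kafka client code, and it ignores the case where a committed offset is out of range.

```java
import java.util.OptionalLong;

// Simplified, hypothetical model of how a consumer picks its first offset
// for one partition. It is not Kafka client code.
final class StartPosition {

    private StartPosition() {
    }

    /**
     * @param committed   offset committed by the group, if any
     * @param resetPolicy value of auto.offset.reset: "earliest", "latest" or "none"
     * @param logStart    first offset available in the partition
     * @param logEnd      offset that the next produced record will receive
     */
    static long resolve(OptionalLong committed, String resetPolicy, long logStart, long logEnd) {
        if (committed.isPresent()) {
            return committed.getAsLong(); // a committed offset always wins
        }
        switch (resetPolicy) {
            case "earliest":
                return logStart;
            case "latest":
                return logEnd;
            default:
                // with "none" the real client raises an error instead of guessing
                throw new IllegalStateException("no committed offset and no usable reset policy");
        }
    }

    public static void main(String[] args) {
        // Assumed partition that holds 10 records, offsets 0..9
        System.out.println(resolve(OptionalLong.empty(), "earliest", 0, 10)); // 0
        System.out.println(resolve(OptionalLong.empty(), "latest", 0, 10));   // 10
        System.out.println(resolve(OptionalLong.of(4), "earliest", 0, 10));   // 4
    }
}
```

To resume where the previous run stopped instead, the consumer would need to commit offsets, for example by calling `consumer.commitSync()` after each processed batch.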
