Introduction

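Loki is the latest open-source project from the Grafana Labs team: a horizontally scalable, highly available, multi-tenant log aggregation system. It is designed to be cost-effective and easy to operate, because it does not index the contents of the logs; instead, it indexes a set of labels for each log stream. This document explores how the index over those labels is implemented.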

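Index Structure

The index is the most important data structure for Loki queries. To understand how queries work as a whole, we first have to understand how the index is implemented.

This article uses boltdb-shipper as the index store type. Only two properties matter here: key-value storage and prefix queries. The storage model is shown in the figure below:

Notes on the storage structure: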

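Field explanations:

- seriesID: ID of the log stream
- shard: the shard, shard = seriesID % number of shards (configurable)
- userID: tenant ID
- labelName: label name
- labelValue: label value
- labelValueHash: hash of the label value
- chunkID: ID of the chunk (the key in COS object storage)
- bucketID: the bucket, timestamp / secondsInDay (split by day)
- chunkThrough: timestamp of the last entry in the chunk
- metricName: always `logs`

Index queries (the four colors in the figure represent the following index types, from top to bottom):

- Data type 1: look up the IDs of all log streams by user ID
- Data type 2: look up all label names of a log stream by stream ID
- Data type 3: look up log stream IDs by user ID and label
- Data type 4: look up the chunk IDs in the underlying store by user ID and stream ID

Data types 1 and 2 are used for label queries; data types 3 and 4 are used to query the actual log data, which is the common case.

How boltdb is used (boltdb: https://github.com/boltdb/bolt):

- "Bolt stores its keys in byte-sorted order within a bucket."
- key: HashValue + "\000" + RangeValue
- value: Value
- The seriesID-related information is placed in the key to take advantage of boltdb keeping keys in byte-sorted order. Values cannot be sorted, so all the work is done in the key, and lookups use prefix matching.
- The key is split into HashValue and RangeValue so that different schema versions can be designed on top of it.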

The following code shows how the streamID, seriesID, and chunkID are generated:

// How the streamID (Fingerprint) is obtained

func (i *instance) getHashForLabels(ls labels.Labels) model.Fingerprint {

var fp uint64

fp, i.buf = ls.HashWithoutLabels(i.buf, []string(nil)...)

return i.mapper.mapFP(model.Fingerprint(fp), ls)

}

// How the seriesID is obtained; it corresponds to a stream

seriesID := labelsSeriesID(labels)

func labelsSeriesID(ls labels.Labels) []byte {

h := sha256.Sum256([]byte(labelsString(ls)))

return encodeBase64Bytes(h[:])

}

// labelsString(ls) --> logs{ls[0].name=ls[0].value, ls[1].name=ls[1].value, ..., ls[n].name=ls[n].value}

// How the chunkID is generated

// ExternalKey returns the key you can use to fetch this chunk from external

// storage. For newer chunks, this key includes a checksum.

func (c *Chunk) ExternalKey() string {

// Some chunks have a checksum stored in dynamodb, some do not. We must

// generate keys appropriately.

if c.ChecksumSet {

// This is the inverse of parseNewExternalKey.

return fmt.Sprintf("%s/%x:%x:%x:%x", c.UserID, uint64(c.Fingerprint), int64(c.From), int64(c.Through), c.Checksum)

}

// This is the inverse of parseLegacyExternalKey, with "<user id>/" prepended.

// Legacy chunks had the user ID prefix on s3/memcache, but not in DynamoDB.

// See comment on parseExternalKey.

return fmt.Sprintf("%s/%d:%d:%d", c.UserID, uint64(c.Fingerprint), int64(c.From), int64(c.Through))

}
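As a rough illustration of the two ID formats above, the sketch below derives a seriesID from a label set and formats a chunk key with a checksum. The `label` type is a simplified stand-in for Prometheus labels, and the use of unpadded standard base64 for `encodeBase64Bytes` is an assumption; the sample values are made up.

```go
package main

import (
	"crypto/sha256"
	"encoding/base64"
	"fmt"
	"strconv"
	"strings"
)

// label is a simplified stand-in for a Prometheus label.
type label struct{ Name, Value string }

// labelsString renders labels as metricName{name="value", ...}.
func labelsString(metricName string, ls []label) string {
	var b strings.Builder
	b.WriteString(metricName)
	b.WriteByte('{')
	for i, l := range ls {
		if i > 0 {
			b.WriteString(", ")
		}
		b.WriteString(l.Name)
		b.WriteByte('=')
		b.WriteString(strconv.Quote(l.Value))
	}
	b.WriteByte('}')
	return b.String()
}

// seriesID hashes the rendered labels with SHA-256 and base64-encodes the
// digest. Unpadded standard base64 is an assumption made for this sketch.
func seriesID(metricName string, ls []label) string {
	h := sha256.Sum256([]byte(labelsString(metricName, ls)))
	return base64.RawStdEncoding.EncodeToString(h[:])
}

// chunkKey mirrors the checksum branch of ExternalKey shown above.
func chunkKey(userID string, fp uint64, from, through int64, checksum uint32) string {
	return fmt.Sprintf("%s/%x:%x:%x:%x", userID, fp, from, through, checksum)
}

func main() {
	ls := []label{{"app", "api"}, {"env", "prod"}}
	fmt.Println(labelsString("logs", ls))
	fmt.Println(seriesID("logs", ls))
	fmt.Println(chunkKey("tenant-a", 0xabc, 1000, 2000, 0xff))
}
```

The same label set always yields the same seriesID, which is what lets the index treat it as the identity of a stream.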

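Index Writes

Index Write Overview

The data flow for index writes is shown in the figure below:

The processing flow inside the ingester's memory is shown in the figure below:

Each time a chunk is flushed, the following index entries must be written: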

data, err := jsoniter.ConfigFastest.Marshal(labelNames)

entries := []IndexEntry{

// Entry for metricName -> seriesID (data type 1)

{

TableName: bucket.tableName,

HashValue: fmt.Sprintf("%02d:%s:%s", shard, bucket.hashKey, metricName),

RangeValue: encodeRangeKey(seriesRangeKeyV1, seriesID, nil, nil),

Value: empty,

},

// Entry for seriesID -> label names (data type 2)

{

TableName: bucket.tableName,

HashValue: string(seriesID),

RangeValue: encodeRangeKey(labelNamesRangeKeyV1, nil, nil, nil),

Value: data,

},

}

// Entries for metricName:labelName -> hash(value):seriesID (data type 3)

for _, v := range labels {

if v.Name == model.MetricNameLabel {

continue

}

valueHash := sha256bytes(v.Value)

entries = append(entries, IndexEntry{

TableName: bucket.tableName,

HashValue: fmt.Sprintf("%02d:%s:%s:%s", shard, bucket.hashKey, metricName, v.Name),

RangeValue: encodeRangeKey(labelSeriesRangeKeyV1, valueHash, seriesID, nil),

Value: []byte(v.Value),

})

}

// Entry for seriesID -> chunkID (data type 4)

chunkEntries := []IndexEntry{

// Entry for seriesID -> chunkID

{

TableName: bucket.tableName,

HashValue: bucket.hashKey + ":" + string(seriesID),

RangeValue: encodeRangeKey(chunkTimeRangeKeyV3, encodedThroughBytes, nil, []byte(chunkID)),

Value: empty,

},

}

The RangeValue in the code above is built with encodeRangeKey, which is implemented as follows:

// Encode a complete key including type marker (which goes at the end)

func encodeRangeKey(keyType byte, ss ...[]byte) []byte {

output := buildRangeValue(2, ss...)

output[len(output)-2] = keyType

return output

}

// Build an index key, encoded as multiple parts separated by a 0 byte, with extra space at the end.

func buildRangeValue(extra int, ss ...[]byte) []byte {

length := extra

for _, s := range ss {

length += len(s) + 1

}

output, i := make([]byte, length), 0

for _, s := range ss {

i += copy(output[i:], s) + 1

}

return output

}

Detailed Code Analysis

The index-related structs in Loki are as follows:

// IndexEntry describes an entry in the chunk index

type IndexEntry struct {

TableName string

HashValue string

// For writes, RangeValue will always be set.

RangeValue []byte

// New for v6 schema, label value is not written as part of the range key.

Value []byte

}

// WriteBatch

type TableWrites struct {

puts map[string][]byte

deletes map[string]struct{}

}

type BoltWriteBatch struct {

Writes map[string]TableWrites

}

Computing the IndexEntry items that need to be written for a chunk (partly simplified):

// calculateIndexEntries creates a set of batched WriteRequests for all the chunks it is given.

func (c *seriesStore) calculateIndexEntries(ctx context.Context, from, through model.Time, chunk Chunk) (WriteBatch, []string, error) {

keys, labelEntries, err := c.schema.GetCacheKeysAndLabelWriteEntries(from, through, chunk.UserID, metricName, chunk.Metric, chunk.ExternalKey())

for _, missingKey := range missing {

for i, key := range keys {

if key == missingKey {

entries = append(entries, labelEntries[i]...)

}

}

}

chunkEntries, err := c.schema.GetChunkWriteEntries(from, through, chunk.UserID, metricName, chunk.Metric, chunk.ExternalKey())

result := c.index.NewWriteBatch()

for _, entry := range entries {

key := fmt.Sprintf("%s:%s:%x", entry.TableName, entry.HashValue, entry.RangeValue)

if _, ok := seenIndexEntries[key]; !ok {

seenIndexEntries[key] = struct{}{}

result.Add(entry.TableName, entry.HashValue, entry.RangeValue, entry.Value)

}

}

return result, missing, nil

}

// Adding an entry to the WriteBatch

func (b *BoltWriteBatch) Add(tableName, hashValue string, rangeValue []byte, value []byte) {

writes := b.getOrCreateTableWrites(tableName)

// separator = "\000"

key := hashValue + separator + string(rangeValue)

writes.puts[key] = value

}
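The effect of `Add` can be sketched with plain maps: the boltdb key is the HashValue, a NUL separator, and the RangeValue, so writing the same entry twice simply overwrites the same key. The lowercase types and the table name below are simplified, hypothetical stand-ins for the structs shown earlier.

```go
package main

import "fmt"

const separator = "\x00"

// tableWrites collects pending puts for one table, keyed by the full key.
type tableWrites struct{ puts map[string][]byte }

// writeBatch groups pending writes per table.
type writeBatch struct{ writes map[string]tableWrites }

func newWriteBatch() *writeBatch {
	return &writeBatch{writes: map[string]tableWrites{}}
}

// add stores value under hashValue + separator + rangeValue.
func (b *writeBatch) add(table, hashValue string, rangeValue, value []byte) {
	w, ok := b.writes[table]
	if !ok {
		w = tableWrites{puts: map[string][]byte{}}
		b.writes[table] = w
	}
	w.puts[hashValue+separator+string(rangeValue)] = value
}

func main() {
	b := newWriteBatch()
	b.add("index_18000", "tenant-a:d18000:series1", []byte("rv1"), nil)
	b.add("index_18000", "tenant-a:d18000:series1", []byte("rv1"), nil) // same key again
	b.add("index_18000", "tenant-a:d18000:series1", []byte("rv2"), nil)
	fmt.Println(len(b.writes["index_18000"].puts))
}
```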

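Obtaining the IndexEntry items happens in two parts:

- GetCacheKeysAndLabelWriteEntries returns data types 1, 2, and 3 of the storage structure, that is, the IndexEntry items for labels

- GetChunkWriteEntries returns data type 4 of the storage structure, that is, the mapping between chunkID and seriesID

GetCacheKeysAndLabelWriteEntries (label index)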

// returns cache key string and []IndexEntry per bucket, matched in order

func (s seriesStoreSchema) GetCacheKeysAndLabelWriteEntries(from, through model.Time, userID string, metricName string, labels labels.Labels, chunkID string) ([]string, [][]IndexEntry, error) {

var keys []string

var indexEntries [][]IndexEntry

for _, bucket := range s.buckets(from, through, userID) {

key := strings.Join([]string{

bucket.tableName,

bucket.hashKey,

string(labelsSeriesID(labels)),

},

"-",

)

// This is just encoding to remove invalid characters so that we can put them in memcache.

// We're not hashing them as the length of the key is well within memcache bounds. tableName + userid + day + 32Byte(seriesID)

key = hex.EncodeToString([]byte(key))

keys = append(keys, key)

entries, err := s.entries.GetLabelWriteEntries(bucket, metricName, labels, chunkID)

if err != nil {

return nil, nil, err

}

indexEntries = append(indexEntries, entries)

}

return keys, indexEntries, nil

}

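This uses two functions, s.buckets and GetLabelWriteEntries: the first splits the time range into buckets, and the second produces the actual IndexEntry items.

The function registered as the bucket splitter looks like this:

// Bucket-splitting function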

func (cfg *PeriodConfig) dailyBuckets(from, through model.Time, userID string) []Bucket {

var (

fromDay = from.Unix() / secondsInDay

throughDay = through.Unix() / secondsInDay

result = []Bucket{}

)

for i := fromDay; i <= throughDay; i++ {

relativeFrom := math.Max64(0, int64(from)-(i*millisecondsInDay))

relativeThrough := math.Min64(millisecondsInDay, int64(through)-(i*millisecondsInDay))

result = append(result, Bucket{

from: uint32(relativeFrom),

through: uint32(relativeThrough),

tableName: cfg.IndexTables.TableFor(model.TimeFromUnix(i * secondsInDay)),

hashKey: fmt.Sprintf("%s:d%d", userID, i),

bucketSize: uint32(millisecondsInDay), // helps with deletion of series ids in series store

})

}

return result

}
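A worked example helps with the relative offsets. The sketch below reimplements the same arithmetic on plain millisecond timestamps (the `bucket` type, the helper names, and the sample day number are hypothetical) and splits a two-hour range that crosses midnight.

```go
package main

import "fmt"

const (
	secondsInDay      = int64(24 * 60 * 60)
	millisecondsInDay = secondsInDay * 1000
)

// bucket holds offsets in milliseconds relative to the start of its day.
type bucket struct {
	from, through uint32
	hashKey       string
}

func minInt64(a, b int64) int64 {
	if a < b {
		return a
	}
	return b
}

func maxInt64(a, b int64) int64 {
	if a > b {
		return a
	}
	return b
}

// dailyBuckets splits [fromMs, throughMs] into one bucket per UTC day.
func dailyBuckets(fromMs, throughMs int64, userID string) []bucket {
	fromDay := fromMs / 1000 / secondsInDay
	throughDay := throughMs / 1000 / secondsInDay
	var result []bucket
	for day := fromDay; day <= throughDay; day++ {
		relFrom := maxInt64(0, fromMs-day*millisecondsInDay)
		relThrough := minInt64(millisecondsInDay, throughMs-day*millisecondsInDay)
		result = append(result, bucket{
			from:    uint32(relFrom),
			through: uint32(relThrough),
			hashKey: fmt.Sprintf("%s:d%d", userID, day),
		})
	}
	return result
}

func main() {
	const hour = int64(3600 * 1000)
	from := 18000*millisecondsInDay + 23*hour // 23:00 on day 18000
	through := 18001*millisecondsInDay + hour // 01:00 on day 18001
	for _, b := range dailyBuckets(from, through, "tenant-a") {
		fmt.Printf("%s from=%d through=%d\n", b.hashKey, b.from, b.through)
	}
}
```

A chunk that spans midnight therefore gets index entries in both daily buckets, each with offsets clipped to that day.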

GetLabelWriteEntries is implemented as follows:

func (s v11Entries) GetLabelWriteEntries(bucket Bucket, metricName string, labels labels.Labels, chunkID string) ([]IndexEntry, error) {

seriesID := labelsSeriesID(labels)

// read first 32 bits of the hash and use this to calculate the shard

shard := binary.BigEndian.Uint32(seriesID) % s.rowShards

labelNames := make([]string, 0, len(labels))

for _, l := range labels {

if l.Name == model.MetricNameLabel {

continue

}

labelNames = append(labelNames, l.Name)

}

data, err := jsoniter.ConfigFastest.Marshal(labelNames)

if err != nil {

return nil, err

}

entries := []IndexEntry{

// Entry for metricName -> seriesID

{

TableName: bucket.tableName,

HashValue: fmt.Sprintf("%02d:%s:%s", shard, bucket.hashKey, metricName),

RangeValue: encodeRangeKey(seriesRangeKeyV1, seriesID, nil, nil),

Value: empty,

},

// Entry for seriesID -> label names

{

TableName: bucket.tableName,

HashValue: string(seriesID),

RangeValue: encodeRangeKey(labelNamesRangeKeyV1, nil, nil, nil),

Value: data,

},

}

// Entries for metricName:labelName -> hash(value):seriesID

// We use a hash of the value to limit its length.

for _, v := range labels {

if v.Name == model.MetricNameLabel {

continue

}

valueHash := sha256bytes(v.Value)

entries = append(entries, IndexEntry{

TableName: bucket.tableName,

HashValue: fmt.Sprintf("%02d:%s:%s:%s", shard, bucket.hashKey, metricName, v.Name),

RangeValue: encodeRangeKey(labelSeriesRangeKeyV1, valueHash, seriesID, nil),

Value: []byte(v.Value),

})

}

return entries, nil

}
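The shard prefix is the least obvious part of the HashValue, so here is a small sketch of how it is derived. `shardFor` and `labelHashValue` are hypothetical helper names, and the seriesID bytes and the shard count of 16 are made-up sample values.

```go
package main

import (
	"encoding/binary"
	"fmt"
)

// shardFor reads the first 32 bits of the seriesID (big-endian) and takes
// them modulo the number of row shards. seriesID must be at least 4 bytes.
func shardFor(seriesID []byte, rowShards uint32) uint32 {
	return binary.BigEndian.Uint32(seriesID) % rowShards
}

// labelHashValue formats the HashValue of a data type 3 entry.
func labelHashValue(shard uint32, bucketHashKey, metricName, labelName string) string {
	return fmt.Sprintf("%02d:%s:%s:%s", shard, bucketHashKey, metricName, labelName)
}

func main() {
	seriesID := []byte("abcdEFGH") // "abcd" is 0x61626364
	shard := shardFor(seriesID, 16)
	fmt.Println(labelHashValue(shard, "tenant-a:d18000", "logs", "app"))
}
```

Sharding spreads the entries of one very common label name across several rows, at the cost of having to query every shard on reads.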

GetChunkWriteEntries (chunkID index)

GetChunkWriteEntries is implemented as follows:

func (s seriesStoreSchema) GetChunkWriteEntries(from, through model.Time, userID string, metricName string, labels labels.Labels, chunkID string) ([]IndexEntry, error) {

var result []IndexEntry

for _, bucket := range s.buckets(from, through, userID) {

entries, err := s.entries.GetChunkWriteEntries(bucket, metricName, labels, chunkID)

if err != nil {

return nil, err

}

result = append(result, entries...)

}

return result, nil

}

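buckets splits the time range into buckets; see the bucket-splitting function described earlier.

The schema-level GetChunkWriteEntries is implemented as follows: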

func (v10Entries) GetChunkWriteEntries(bucket Bucket, metricName string, labels labels.Labels, chunkID string) ([]IndexEntry, error) {

seriesID := labelsSeriesID(labels)

encodedThroughBytes := encodeTime(bucket.through)

entries := []IndexEntry{

// Entry for seriesID -> chunkID

{

TableName: bucket.tableName,

HashValue: bucket.hashKey + ":" + string(seriesID),

// "encodedThroughBytes chunkID chunkTimeRangeKeyV3 "

RangeValue: encodeRangeKey(chunkTimeRangeKeyV3, encodedThroughBytes, nil, []byte(chunkID)),

Value: empty,

},

}

return entries, nil

}

This completes the index write path.

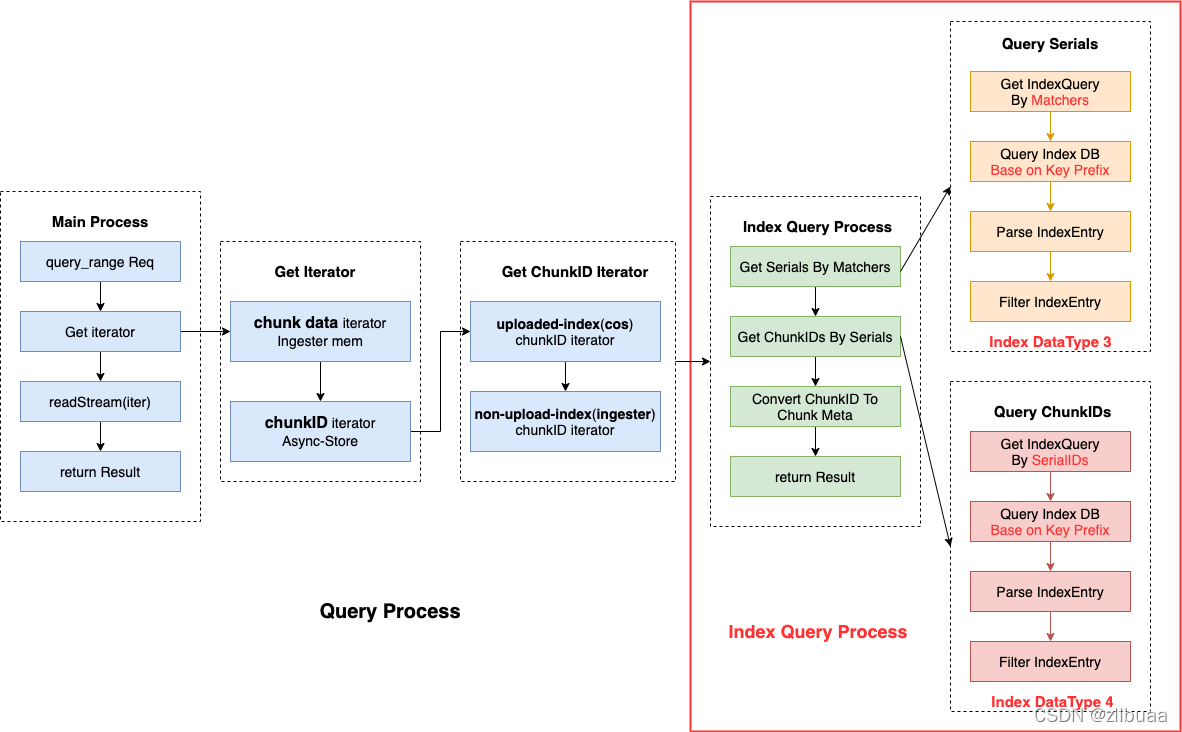

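Index Queries

Index Query Overview

Here we focus on the index query path, which has two main steps:

- Look up series by label matchers, using data type 3

- Look up chunkIDs by series, using data type 4

The most important idea used throughout the query path is the key prefix query.

Detailed Code Analysis

The struct used to describe an index query is as follows: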

// IndexQuery describes a query for entries

type IndexQuery struct {

TableName string

HashValue string

// Used for prefix matching; for example, when looking up chunkIDs, supplying more of the RangeValue reduces the number of index entries scanned

RangeValuePrefix []byte

RangeValueStart []byte

ValueEqual []byte

// If the result of this lookup is immutable or not (for caching).

Immutable bool

}

The main index query flow:

func (c *seriesStore) GetChunkRefs(ctx context.Context, userID string, from, through model.Time, allMatchers ...*labels.Matcher) ([][]Chunk, []*Fetcher, error) {

metricName, matchers, shortcut, err := c.validateQuery(ctx, userID, &from, &through, allMatchers)

_, matchers = util.SplitFiltersAndMatchers(matchers)

// Look up the seriesIDs

seriesIDs, err := c.lookupSeriesByMetricNameMatchers(ctx, from, through, userID, metricName, matchers)

// Look up the chunkIDs

chunkIDs, err := c.lookupChunksBySeries(ctx, from, through, userID, seriesIDs)

// Build the chunk metadata from the chunkIDs

chunks, err := c.convertChunkIDsToChunks(ctx, userID, chunkIDs)

return [][]Chunk{chunks}, []*Fetcher{c.baseStore.fetcher}, nil

}

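The following sections analyze lookupSeriesByMetricNameMatchers and lookupChunksBySeries in detail.

lookupSeriesByMetricNameMatchers (looking up series)

Looking up series takes two steps:

- Build the IndexQuery

- Run the IndexQuery against the database to obtain the series

The code that builds the IndexQuery is shown below for two kinds of label matching: regex (=~) and equality (=).

Building the IndexQuery (label MatchRegexp)

For regex matching, the label value is not used when building the query: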

func (s baseSchema) GetReadQueriesForMetricLabel(from, through model.Time, userID string, metricName string, labelName string) ([]IndexQuery, error) {

var result []IndexQuery

buckets := s.buckets(from, through, userID)

for _, bucket := range buckets {

entries, err := s.entries.GetReadMetricLabelQueries(bucket, metricName, labelName)

if err != nil {

return nil, err

}

result = append(result, entries...)

}

return result, nil

}

func (s v10Entries) GetReadMetricLabelQueries(bucket Bucket, metricName string, labelName string) ([]IndexQuery, error) {

result := make([]IndexQuery, 0, s.rowShards)

for i := uint32(0); i < s.rowShards; i++ {

result = append(result, IndexQuery{

TableName: bucket.tableName,

HashValue: fmt.Sprintf("%02d:%s:%s:%s", i, bucket.hashKey, metricName, labelName),

})

}

return result, nil

}

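Building the IndexQuery (label MatchEqual)

For equality matching, value information is added to the query, which further reduces the number of IndexEntry items matched: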

func (s baseSchema) GetReadQueriesForMetricLabelValue(from, through model.Time, userID string, metricName string, labelName string, labelValue string) ([]IndexQuery, error) {

var result []IndexQuery

buckets := s.buckets(from, through, userID)

for _, bucket := range buckets {

entries, err := s.entries.GetReadMetricLabelValueQueries(bucket, metricName, labelName, labelValue)

if err != nil {

return nil, err

}

result = append(result, entries...)

}

return result, nil

}

func (s v10Entries) GetReadMetricLabelValueQueries(bucket Bucket, metricName string, labelName string, labelValue string) ([]IndexQuery, error) {

valueHash := sha256bytes(labelValue)

result := make([]IndexQuery, 0, s.rowShards)

for i := uint32(0); i < s.rowShards; i++ {

result = append(result, IndexQuery{

TableName: bucket.tableName,

HashValue: fmt.Sprintf("%02d:%s:%s:%s", i, bucket.hashKey, metricName, labelName),

RangeValueStart: rangeValuePrefix(valueHash),

ValueEqual: []byte(labelValue),

})

}

return result, nil

}
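The sketch below shows what the equality query looks like once built: one query per shard, each starting at the hash of the label value and filtering on the exact value. The `indexQuery` type is a trimmed stand-in, `equalityQueries` is a hypothetical name, and the unpadded base64 encoding of the SHA-256 digest is an assumption about `sha256bytes`.

```go
package main

import (
	"crypto/sha256"
	"encoding/base64"
	"fmt"
)

// indexQuery is a trimmed stand-in for IndexQuery.
type indexQuery struct {
	HashValue       string
	RangeValueStart []byte
	ValueEqual      []byte
}

// valueHash hashes a label value; the base64 variant is an assumption.
func valueHash(v string) []byte {
	h := sha256.Sum256([]byte(v))
	return []byte(base64.RawStdEncoding.EncodeToString(h[:]))
}

// equalityQueries builds one query per shard, because the shard of a
// matching series is not known at query time.
func equalityQueries(rowShards uint32, bucketHashKey, metricName, name, value string) []indexQuery {
	start := append(valueHash(value), 0) // hash followed by the 0-byte separator
	queries := make([]indexQuery, 0, rowShards)
	for shard := uint32(0); shard < rowShards; shard++ {
		queries = append(queries, indexQuery{
			HashValue:       fmt.Sprintf("%02d:%s:%s:%s", shard, bucketHashKey, metricName, name),
			RangeValueStart: start,
			ValueEqual:      []byte(value),
		})
	}
	return queries
}

func main() {
	qs := equalityQueries(16, "tenant-a:d18000", "logs", "app", "api")
	fmt.Println(len(qs), qs[0].HashValue, qs[15].HashValue)
}
```

Compared with the regex case, the scan inside each shard starts at the hashed value rather than at the beginning of the row.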

Querying the Index

Once the IndexQuery items have been built, the index is queried. The core function is:

func DoParallelQueries(ctx context.Context, tableQuerier TableQuerier, queries []chunk.IndexQuery, callback chunk_util.Callback) error {

errs := make(chan error)

id := NewIndexDeduper(callback)

for i := 0; i < len(queries); i += maxQueriesPerGoroutine {

q := queries[i:util_math.Min(i+maxQueriesPerGoroutine, len(queries))]

go func(queries []chunk.IndexQuery) {

errs <- tableQuerier.MultiQueries(ctx, queries, id.Callback)

}(q)

}

var lastErr error

for i := 0; i < len(queries); i += maxQueriesPerGoroutine {

err := <-errs

if err != nil {

lastErr = err

}

}

return lastErr

}

MultiQueries here calls the corresponding function on the boltdb table:

// MultiQueries runs multiple queries without having to take lock multiple times for each query.

func (lt *Table) MultiQueries(ctx context.Context, queries []chunk.IndexQuery, callback chunk_util.Callback) error {

lt.dbSnapshotsMtx.RLock()

defer lt.dbSnapshotsMtx.RUnlock()

for _, db := range lt.dbSnapshots {

err := db.boltdb.View(func(tx *bbolt.Tx) error {

bucket := tx.Bucket(bucketName)

if bucket == nil {

return nil

}

for _, query := range queries {

if err := lt.boltdbIndexClient.QueryWithCursor(ctx, bucket.Cursor(), query, callback); err != nil {

return err

}

}

return nil

})

if err != nil {

return err

}

}

return nil

}

Finally, the boltdb client is called to query the database:

func (b *BoltIndexClient) QueryWithCursor(_ context.Context, c *bbolt.Cursor, query chunk.IndexQuery, callback func(chunk.IndexQuery, chunk.ReadBatch) (shouldContinue bool)) error {

var start []byte

// Use as much of the prefix as possible to seek to the exact key, then start iterating

if len(query.RangeValuePrefix) > 0 {

start = []byte(query.HashValue + separator + string(query.RangeValuePrefix))

} else if len(query.RangeValueStart) > 0 {

start = []byte(query.HashValue + separator + string(query.RangeValueStart))

} else {

start = []byte(query.HashValue + separator)

}

rowPrefix := []byte(query.HashValue + separator)

var batch boltReadBatch

for k, v := c.Seek(start); k != nil; k, v = c.Next() {

if len(query.ValueEqual) > 0 && !bytes.Equal(v, query.ValueEqual) {

continue

}

if len(query.RangeValuePrefix) > 0 && !bytes.HasPrefix(k, start) {

break

}

if !bytes.HasPrefix(k, rowPrefix) {

break

}

// make a copy since k, v are only valid for the life of the transaction.

// See: https://godoc.org/github.com/boltdb/bolt#Cursor.Seek

batch.rangeValue = make([]byte, len(k)-len(rowPrefix))

copy(batch.rangeValue, k[len(rowPrefix):])

batch.value = make([]byte, len(v))

copy(batch.value, v)

if !callback(query, &batch) {

break

}

}

return nil

}
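The three seek modes (RangeValuePrefix, RangeValueStart, or neither) are easier to compare side by side. This sketch emulates the cursor with `sort.Search` over a sorted in-memory slice; the `kv` and `query` types and the `scan` function are hypothetical, and unlike the original loop it checks the row and prefix bounds before the value filter.

```go
package main

import (
	"fmt"
	"sort"
	"strings"
)

const separator = "\x00"

type kv struct{ key, value string }

// query is a trimmed, string-based stand-in for IndexQuery.
type query struct {
	HashValue, RangeValuePrefix, RangeValueStart, ValueEqual string
}

// scan seeks to the start key and walks forward while keys stay inside the
// row (and inside the prefix, if one was given).
func scan(sorted []kv, q query) []string {
	rowPrefix := q.HashValue + separator
	start := rowPrefix
	switch {
	case q.RangeValuePrefix != "":
		start = rowPrefix + q.RangeValuePrefix
	case q.RangeValueStart != "":
		start = rowPrefix + q.RangeValueStart
	}
	i := sort.Search(len(sorted), func(i int) bool { return sorted[i].key >= start })
	var out []string
	for ; i < len(sorted); i++ {
		k, v := sorted[i].key, sorted[i].value
		if q.RangeValuePrefix != "" && !strings.HasPrefix(k, start) {
			break
		}
		if !strings.HasPrefix(k, rowPrefix) {
			break
		}
		if q.ValueEqual != "" && v != q.ValueEqual {
			continue
		}
		out = append(out, k[len(rowPrefix):])
	}
	return out
}

func main() {
	data := []kv{
		{"r1\x00a1", "x"}, {"r1\x00a2", "y"}, {"r1\x00b1", "x"}, {"r2\x00a1", "x"},
	}
	fmt.Println(scan(data, query{HashValue: "r1", RangeValuePrefix: "a"}))
	fmt.Println(scan(data, query{HashValue: "r1", RangeValueStart: "a2"}))
	fmt.Println(scan(data, query{HashValue: "r1", ValueEqual: "x"}))
}
```

A prefix query stops as soon as the prefix no longer matches, while a start query keeps going until the end of the row.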

Parsing Index Entries

func (c *baseStore) parseIndexEntries(_ context.Context, entries []IndexEntry, matcher *labels.Matcher) ([]string, error) {

// Nothing to do if there are no entries.

if len(entries) == 0 {

return nil, nil

}

matchSet := map[string]struct{}{}

if matcher != nil && matcher.Type == labels.MatchRegexp {

set := FindSetMatches(matcher.Value)

for _, v := range set {

matchSet[v] = struct{}{}

}

}

result := make([]string, 0, len(entries))

for _, entry := range entries {

chunkKey, labelValue, err := parseChunkTimeRangeValue(entry.RangeValue, entry.Value)

if err != nil {

return nil, err

}

// If the matcher is like a set (=~"a|b|c|d|...") and

// the label value is not in that set move on.

if len(matchSet) > 0 {

if _, ok := matchSet[string(labelValue)]; !ok {

continue

}

// If its in the set, then add it to set, we don't need to run

// matcher on it again.

result = append(result, chunkKey)

continue

}

if matcher != nil && !matcher.Matches(string(labelValue)) {

continue

}

result = append(result, chunkKey)

}

// Return ids sorted and deduped because they will be merged with other sets.

sort.Strings(result)

result = uniqueStrings(result)

return result, nil

}
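The set-match shortcut in parseIndexEntries can be illustrated with a much simplified version. In the sketch below, `findSetMatches` is a hypothetical reimplementation that only recognizes plain alternations such as `a|b|c` (the real FindSetMatches handles more cases), and `filterValues` applies the same "set first, regex otherwise" logic to a list of label values.

```go
package main

import (
	"fmt"
	"regexp"
	"sort"
	"strings"
)

// findSetMatches returns the alternatives of a pattern like "a|b|c", or nil
// if the pattern uses any other regex syntax. Simplified for illustration.
func findSetMatches(pattern string) []string {
	if pattern == "" || strings.ContainsAny(pattern, `.+*?()[]{}^$\`) {
		return nil
	}
	return strings.Split(pattern, "|")
}

// uniqueStrings removes adjacent duplicates from a sorted slice in place.
func uniqueStrings(sorted []string) []string {
	out := sorted[:0]
	for _, s := range sorted {
		if len(out) == 0 || out[len(out)-1] != s {
			out = append(out, s)
		}
	}
	return out
}

// filterValues keeps the values matched by pattern, using a set lookup when
// the pattern is a plain alternation and the anchored regex otherwise.
func filterValues(values []string, pattern string) ([]string, error) {
	re, err := regexp.Compile("^(?:" + pattern + ")$")
	if err != nil {
		return nil, err
	}
	set := map[string]struct{}{}
	for _, v := range findSetMatches(pattern) {
		set[v] = struct{}{}
	}
	var result []string
	for _, v := range values {
		if len(set) > 0 {
			if _, ok := set[v]; ok {
				result = append(result, v)
			}
			continue
		}
		if re.MatchString(v) {
			result = append(result, v)
		}
	}
	sort.Strings(result)
	return uniqueStrings(result), nil
}

func main() {
	values := []string{"web", "db", "api", "web"}
	fmt.Println(filterValues(values, "api|web"))
	fmt.Println(filterValues([]string{"api", "db", "auth"}, "a.*"))
}
```

The map lookup avoids running the regex engine on every entry for the common `a|b|c` style of matcher.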

This concludes the series lookup. The next section covers looking up chunkIDs by series.

lookupChunksBySeries (looking up chunkIDs)

Building the IndexQuery

// Look up chunks by seriesID

func (s seriesStoreSchema) GetChunksForSeries(from, through model.Time, userID string, seriesID []byte) ([]IndexQuery, error) {

var result []IndexQuery

buckets := s.buckets(from, through, userID)

for _, bucket := range buckets {

entries, err := s.entries.GetChunksForSeries(bucket, seriesID)

if err != nil {

return nil, err

}

result = append(result, entries...)

}

return result, nil

}

func (v10Entries) GetChunksForSeries(bucket Bucket, seriesID []byte) ([]IndexQuery, error) {

encodedFromBytes := encodeTime(bucket.from)

return []IndexQuery{

{

TableName: bucket.tableName,

HashValue: bucket.hashKey + ":" + string(seriesID),

RangeValueStart: rangeValuePrefix(encodedFromBytes),

},

}, nil

}

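Note that encodedFromBytes in GetChunksForSeries is derived from the start time of the request, whereas the index entry was written using the chunk's through time.

The reason is that the key of data type 4 contains the chunk's through time, and the encoded time sorts correctly as a string. A chunk can only contain data for the request if its through time is at or after the request's start time. Using the start time as the RangeValueStart therefore seeks to the first such chunk and scans forward from there, so no relevant index entry is missed.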

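Querying the index

Same as querying the index in the series lookup.

Parsing index entries

Same as parsing index entries in the series lookup.

The log data is then fetched from the corresponding backing store using the chunkIDs.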

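This article examined the structure of the Loki index and its write and query paths. Loki indexes labels rather than log contents, and the boltdb-shipper storage model relies on key-value storage and prefix queries. The write path was analyzed through GetCacheKeysAndLabelWriteEntries and GetChunkWriteEntries, and the query path through lookupSeriesByMetricNameMatchers and lookupChunksBySeries.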
