1. ip代理

- 什么是代理ip?它的作用?

- HTTP和HTTPS的区别

- 获取ip地址并验证代理IP地址的有效性

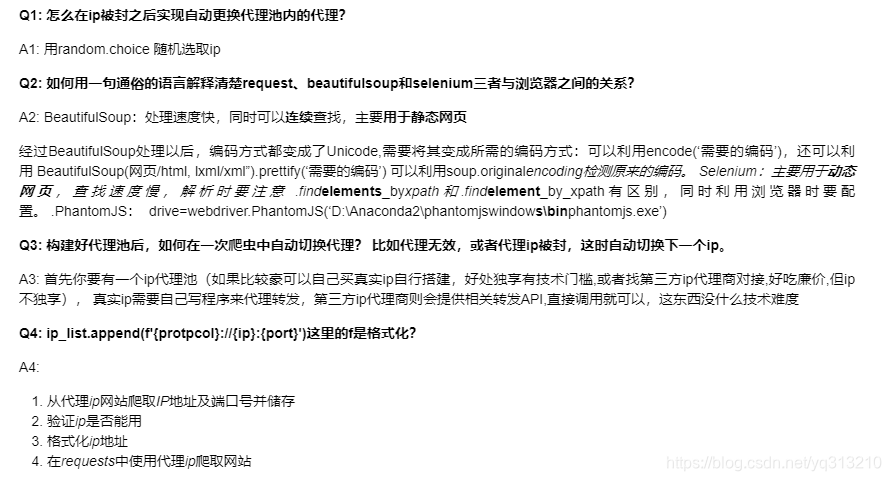

A1: 我们知道IP是上网需要唯一的身份地址,身份凭证,代理IP是我们上网过程中的一个中间平台,是由自己的电脑先访问代理IP,之后由代理IP访问点开的页面。所以在这次访问记录里留下的是代理IP的地址,而不少自己电脑本机IP。

使用代理IP的好处有:1.加快访问速度 2. 保护隐私信息 3. 提高下载速度 4.可以当防火墙。

A2:

代码如下:

from bs4 import BeautifulSoup

import requests

import re

import json

def open_proxy_url(url):

user_agent = 'Mozilla/5.0 (Macintosh; Intel Mac OS X 10_14_2) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/72.0.3626.119 Safari/537.36'

headers = {'User-Agent': user_agent}

try:

r = requests.get(url, headers = headers, timeout = 10)

r.raise_for_status()

r.encoding = r.apparent_encoding

return r.text

except:

print('无法访问网页' + url)

def get_proxy_ip(response):

proxy_ip_list = []

soup = BeautifulSoup(response, 'html.parser')

proxy_ips = soup.find(id = 'ip_list').find_all('tr')

for proxy_ip in proxy_ips:

if len(proxy_ip.select('td')) >=8:

ip = proxy_ip.select('td')[1].text

port = proxy_ip.select('td')[2].text

protocol = proxy_ip.select('td')[5].text

if protocol in ('HTTP','HTTPS','http','https'):

proxy_ip_list.append(f'{protocol}://{ip}:{port}')

return proxy_ip_list

def open_url_using_proxy(url, proxy):

user_agent = 'Mozilla/5.0 (Macintosh; Intel Mac OS X 10_14_2) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/72.0.3626.119 Safari/537.36'

headers = {'User-Agent': user_agent}

proxies = {}

if proxy.startswith(('HTTPS','https')):

proxies['https'] = proxy

else:

proxies['http'] = proxy

try:

r = requests.get(url, headers = headers, proxies = proxies, timeout = 10)

r.raise_for_status()

r.encoding = r.apparent_encoding

return (r.text, r.status_code)

except:

print('无法访问网页' + url)

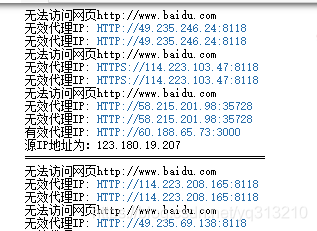

print('无效代理IP: ' + proxy)

return False

def check_proxy_avaliability(proxy):

url = 'http://www.baidu.com'

result = open_url_using_proxy(url, proxy)

VALID_PROXY = False

if result:

text, status_code = result

if status_code == 200:

r_title = re.findall('<title>.*</title>', text)

if r_title:

if r_title[0] == '<title>百度一下,你就知道</title>':

VALID_PROXY = True

if VALID_PROXY:

check_ip_url = 'https://jsonip.com/'

try:

text, status_code = open_url_using_proxy(check_ip_url, proxy)

except:

return

print('有效代理IP: ' + proxy)

with open('valid_proxy_ip.txt','a') as f:

f.writelines(proxy)

try:

source_ip = json.loads(text).get('ip')

print(f'源IP地址为:{source_ip}')

print('='*40)

except:

print('返回的非json,无法解析')

print(text)

else:

print('无效代理IP: ' + proxy)

if __name__ == '__main__':

proxy_url = 'https://www.xicidaili.com/'

text = open_proxy_url(proxy_url)

proxy_ip_filename = 'proxy_ip.txt'

with open(proxy_ip_filename, 'w') as f:

f.write(text)

text = open(proxy_ip_filename, 'r').read()

proxy_ip_list = get_proxy_ip(text)

for proxy in proxy_ip_list:

check_proxy_avaliability(proxy)

运行结果:

2.session 和 cookie

- 静态网页和动态网页

- 模拟登陆163

import time

from selenium import webdriver

from selenium.webdriver.common.by import By

"""

使用selenium进行模拟登陆

1.初始化ChromDriver

2.打开163登陆页面

3.找到用户名的输入框,输入用户名

4.找到密码框,输入密码

5.提交用户信息

"""

name = '*'

passwd = '*'

driver = webdriver.Chrome()

driver.get('https://mail.163.com/')

# 将窗口调整最大

driver.maximize_window()

# 休息5s

time.sleep(5)

current_window_1 = driver.current_window_handle

print(current_window_1)

button = driver.find_element_by_id('lbNormal')

button.click()

driver.switch_to.frame(driver.find_element_by_xpath("//iframe[starts-with(@id, 'x-URS-iframe')]"))

email = driver.find_element_by_name('email')

#email = driver.find_element_by_xpath('//input[@name="email"]')

email.send_keys(name)

password = driver.find_element_by_name('password')

#password = driver.find_element_by_xpath("//input[@name='password']")

password.send_keys(passwd)

submit = driver.find_element_by_id("dologin")

time.sleep(15)

submit.click()

time.sleep(10)

print(driver.page_source)

driver.quit()

3. 总结及作业

本文介绍了在爬虫中如何使用IP代理,包括代理IP的作用、HTTP与HTTPS的区别,以及验证代理IP的有效性。同时,讨论了Session和Cookie在爬虫中的应用,如在动态网页和模拟登录中的角色。最后,文章总结了相关内容并给出了实践作业。

本文介绍了在爬虫中如何使用IP代理,包括代理IP的作用、HTTP与HTTPS的区别,以及验证代理IP的有效性。同时,讨论了Session和Cookie在爬虫中的应用,如在动态网页和模拟登录中的角色。最后,文章总结了相关内容并给出了实践作业。

448

448

被折叠的 条评论

为什么被折叠?

被折叠的 条评论

为什么被折叠?