package org.apache.hadoop.examples;

import java.io.IOException;

import java.util.Iterator;

import java.util.StringTokenizer;

import org.apache.hadoop.conf.Configuration;

import org.apache.hadoop.fs.FileSystem;

import org.apache.hadoop.fs.Path;

import org.apache.hadoop.io.IntWritable;

import org.apache.hadoop.io.Text;

import org.apache.hadoop.mapreduce.Job;

import org.apache.hadoop.mapreduce.Mapper;

import org.apache.hadoop.mapreduce.Reducer;

import org.apache.hadoop.mapreduce.lib.input.FileInputFormat;

import org.apache.hadoop.mapreduce.lib.output.FileOutputFormat;

public class MyWordCount

{

// main method

public static void main(String[] args) throws Exception

{

// Initialize the Configuration and point it at HDFS

Configuration conf = new Configuration();

conf.set("fs.defaultFS", "hdfs://localhost:9000/user/root");

// Hard-coded input and output directories

String[] otherArgs = new String[]{"/user/root/input","/user/root/output"};

// Exit with a usage message unless both an input and an output path are given (checked before otherArgs[1] is read)

if(otherArgs.length < 2)

{

System.err.println("Usage: wordcount <in> <out>");

System.exit(2);

}

Path path = new Path(otherArgs[1]);

// Delete the output path if it already exists

FileSystem fileSystem = path.getFileSystem(conf);

if (fileSystem.exists(path))

{

fileSystem.delete(path,true);

}

Job job = Job.getInstance(conf, "word count"); // Job.getInstance(Configuration conf, String jobName): create the job and set its name

job.setJarByClass(MyWordCount.class);

job.setMapperClass(MyWordCount.TokenizerMapper.class); // Set the Mapper class for the job

job.setCombinerClass(MyWordCount.IntSumReducer.class); // Set the Combiner class for the job (the reducer is reused)

job.setReducerClass(MyWordCount.IntSumReducer.class); // Set the Reducer class for the job

job.setOutputKeyClass(Text.class); // Set the output key type

job.setOutputValueClass(IntWritable.class); // Set the output value type

FileInputFormat.addInputPath(job, new Path(otherArgs[0])); // Add the input path for the MapReduce job

FileOutputFormat.setOutputPath(job, new Path(otherArgs[1])); // Set the output path for the MapReduce job

System.exit(job.waitForCompletion(true)?0:1);

}

// Map class; extends Hadoop's Mapper base class

public static class TokenizerMapper extends Mapper<Object, Text, Text, IntWritable>

{

// Every word is written to the Context with a count of 1

private static final IntWritable one = new IntWritable(1);

// Text extends BinaryComparable and implements WritableComparable, so it can be used as a key

private Text word = new Text();

public void map(Object key, Text value, Mapper<Object, Text, Text, IntWritable>.Context context) throws IOException, InterruptedException

{

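StringTokenizer itr = new StringTokenizer(value.toString()); // StringTokenizer splits the line into whitespace-delimited tokens

// While itr still has another token

while(itr.hasMoreTokens())

{

// word is a Text, so its value is assigned with set()

this.word.set(itr.nextToken());

// Write the (word, 1) pair to the context, which passes it on to the reduce phase

context.write(this.word, one);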

}

}

}

// Reduce class; extends Hadoop's Reducer base class

public static class IntSumReducer extends Reducer<Text, IntWritable, Text, IntWritable>

{

private IntWritable result = new IntWritable();

public void reduce(Text key, Iterable<IntWritable> values, Reducer<Text, IntWritable, Text, IntWritable>.Context context) throws IOException, InterruptedException

{

int sum = 0;

// For each distinct key (a word), sum the counts received from all map tasks

for(IntWritable val : values)

{

sum += val.get();

}

this.result.set(sum);

// key is the word, result is its total count

context.write(key, this.result);

}

}

}

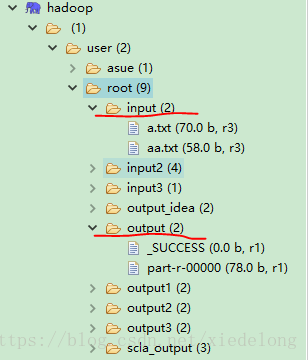
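To see what the job computes without a cluster, the map, shuffle and reduce phases can be imitated in plain Java. This is only a rough sketch of the idea: `LocalWordCount` and its methods are hypothetical names, not Hadoop APIs, and the sample lines are made up rather than taken from the input files.

```java
import java.util.AbstractMap;
import java.util.ArrayList;
import java.util.Arrays;
import java.util.List;
import java.util.Map;
import java.util.StringTokenizer;
import java.util.TreeMap;

// Hypothetical helper, not part of Hadoop: imitates the word count job in memory.
class LocalWordCount {

    // Map phase: emit one (word, 1) pair per whitespace-delimited token.
    static List<Map.Entry<String, Integer>> map(String line) {
        List<Map.Entry<String, Integer>> pairs = new ArrayList<>();
        StringTokenizer itr = new StringTokenizer(line);
        while (itr.hasMoreTokens()) {
            pairs.add(new AbstractMap.SimpleEntry<>(itr.nextToken(), 1));
        }
        return pairs;
    }

    // Shuffle phase: group emitted values by key; the TreeMap keeps keys sorted.
    static Map<String, List<Integer>> shuffle(List<Map.Entry<String, Integer>> pairs) {
        Map<String, List<Integer>> grouped = new TreeMap<>();
        for (Map.Entry<String, Integer> pair : pairs) {
            grouped.computeIfAbsent(pair.getKey(), k -> new ArrayList<>()).add(pair.getValue());
        }
        return grouped;
    }

    // Reduce phase: sum all values collected for one key.
    static int reduce(Iterable<Integer> values) {
        int sum = 0;
        for (int v : values) {
            sum += v;
        }
        return sum;
    }

    // Run the three phases over a list of input lines.
    static Map<String, Integer> wordCount(List<String> lines) {
        List<Map.Entry<String, Integer>> pairs = new ArrayList<>();
        for (String line : lines) {
            pairs.addAll(map(line));
        }
        Map<String, Integer> counts = new TreeMap<>();
        for (Map.Entry<String, List<Integer>> e : shuffle(pairs).entrySet()) {
            counts.put(e.getKey(), reduce(e.getValue()));
        }
        return counts;
    }

    public static void main(String[] args) {
        // Made-up sample input; prints {hadoop=1, hello=2, world=1}
        System.out.println(wordCount(Arrays.asList("hello world", "hello hadoop")));
    }
}
```

Because the reduce step is a plain sum, which is associative and commutative, the same class can also act as the combiner, which is what the job does with `IntSumReducer`. Note that tokens are split on whitespace only, so `Hello` and `hello` would be counted as different words.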

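The code is thoroughly commented; if anything is unclear, leave me a comment. The files in the input directory and the resulting output directory are shown below:

DFS file directory: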

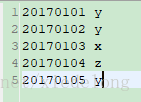

/input/a.txt

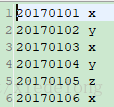

/input/aa.txt

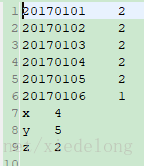

/output/part-r-00000
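The job sets no output format, so Hadoop's default `TextOutputFormat` applies and each line of `part-r-00000` holds a word, a tab character, and its count. A minimal sketch of reading such lines back into a map follows; `PartFileParser` is a hypothetical name, and the sample lines are made up for illustration, not the actual output of this run.

```java
import java.util.Arrays;
import java.util.LinkedHashMap;
import java.util.Map;

// Hypothetical helper, not part of Hadoop: parses "word<TAB>count" lines.
class PartFileParser {

    // Turn the lines of a reducer output file into a word -> count map,
    // preserving the order in which the lines appear.
    static Map<String, Integer> parse(Iterable<String> lines) {
        Map<String, Integer> counts = new LinkedHashMap<>();
        for (String line : lines) {
            if (line.isEmpty()) {
                continue; // skip blank lines
            }
            int tab = line.indexOf('\t');
            if (tab < 0) {
                throw new IllegalArgumentException("No tab separator in line: " + line);
            }
            String word = line.substring(0, tab);
            int count = Integer.parseInt(line.substring(tab + 1).trim());
            counts.put(word, count);
        }
        return counts;
    }

    public static void main(String[] args) {
        // Made-up sample lines in the key<TAB>value layout.
        Map<String, Integer> counts = parse(Arrays.asList("hadoop\t1", "hello\t2", "world\t1"));
        System.out.println(counts.get("hello"));
    }
}
```

In practice the lines would come from the file itself, for example after copying it out of HDFS to the local disk.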
