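Data format

Goal

Compute the total number of visits for each page, then output the results sorted globally in descending order of visit count.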

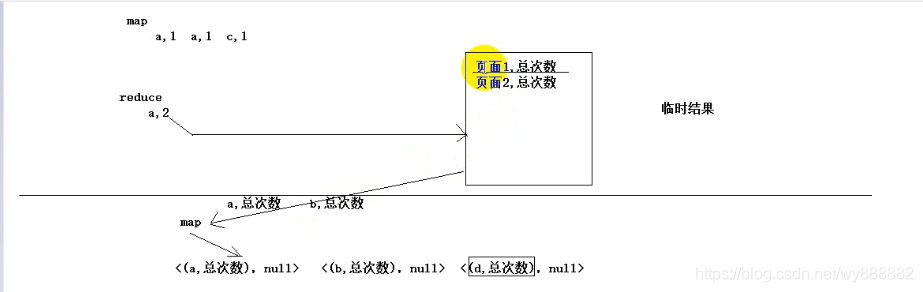

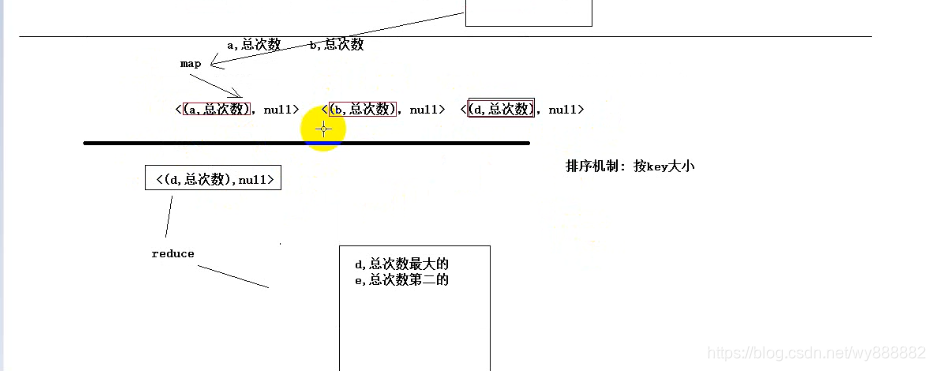

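Map output is sorted by key (and optionally combined) before it reaches the reducers, so the framework's own sort can do the ordering for us, provided the field we want to sort on is part of the map output key.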

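In other words, we can write two MapReduce jobs. The first outputs the total visit count for each page. The second takes the first job's output as input, lets the framework sort the keys, and simply writes the sorted records out on the reduce side.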
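To make the data flow of the two jobs concrete, here is a minimal plain-Java sketch that runs without Hadoop. It assumes each log line is space-separated with the page in the second field, which is how the mapper in this post reads it. The class name `TwoStepSketch`, its method names, the malformed-line check, and the sample lines are all made up for illustration.

```java
import java.util.ArrayList;
import java.util.Arrays;
import java.util.HashMap;
import java.util.List;
import java.util.Map;

// Hypothetical stand-in for the two jobs; plain Java, no Hadoop involved.
final class TwoStepSketch {

    // Step 1: count visits per page (what the first job's map + reduce compute).
    static Map<String, Integer> countPages(List<String> lines) {
        Map<String, Integer> counts = new HashMap<>();
        for (String line : lines) {
            String[] fields = line.split(" ");
            if (fields.length < 2) {
                continue; // skip malformed lines (a safeguard added in this sketch)
            }
            counts.merge(fields[1], 1, Integer::sum);
        }
        return counts;
    }

    // Step 2: order by count descending, then by page ascending for ties.
    static List<Map.Entry<String, Integer>> sortByCountDesc(Map<String, Integer> counts) {
        List<Map.Entry<String, Integer>> entries = new ArrayList<>(counts.entrySet());
        entries.sort((a, b) -> {
            int byCount = Integer.compare(b.getValue(), a.getValue());
            return byCount != 0 ? byCount : a.getKey().compareTo(b.getKey());
        });
        return entries;
    }

    public static void main(String[] args) {
        // Invented sample input: "<user> <page>"
        List<String> lines = Arrays.asList(
                "u1 /index.html", "u2 /about.html", "u3 /index.html", "u1 /cart.html");
        for (Map.Entry<String, Integer> e : sortByCountDesc(countPages(lines))) {
            System.out.println(e.getKey() + "," + e.getValue());
        }
    }
}
```

In the real jobs the counting is spread across mappers and reducers and the ordering is done by the framework's key sort, but the intermediate and final records have the same shape as in this sketch.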

import java.io.IOException;

import org.apache.hadoop.conf.Configuration;
import org.apache.hadoop.fs.Path;
import org.apache.hadoop.io.IntWritable;
import org.apache.hadoop.io.LongWritable;
import org.apache.hadoop.io.Text;
import org.apache.hadoop.mapreduce.Job;
import org.apache.hadoop.mapreduce.Mapper;
import org.apache.hadoop.mapreduce.Reducer;
import org.apache.hadoop.mapreduce.lib.input.FileInputFormat;
import org.apache.hadoop.mapreduce.lib.output.FileOutputFormat;

public class PageCountStep1 {

    public static class PageCountStep1Mapper extends Mapper<LongWritable, Text, Text, IntWritable> {

        @Override
        protected void map(LongWritable key, Text value, Mapper<LongWritable, Text, Text, IntWritable>.Context context)
                throws IOException, InterruptedException {
            // Input lines are space-separated; the page is the second field.
            String line = value.toString();
            String[] split = line.split(" ");
            // Emit (page, 1) for every visit.
            context.write(new Text(split[1]), new IntWritable(1));
        }
    }

    public static class PageCountStep1Reducer extends Reducer<Text, IntWritable, Text, IntWritable> {

        @Override
        protected void reduce(Text key, Iterable<IntWritable> values,
                Reducer<Text, IntWritable, Text, IntWritable>.Context context) throws IOException, InterruptedException {
            // Sum the 1s emitted for this page.
            int count = 0;
            for (IntWritable v : values) {
                count += v.get();
            }
            context.write(key, new IntWritable(count));
        }
    }

    public static void main(String[] args) throws Exception {
        /**
         * Configuration loads its parameters from the *-site.xml files on the classpath.
         */
        Configuration conf = new Configuration();
        Job job = Job.getInstance(conf);

        job.setJarByClass(PageCountStep1.class);

        job.setMapperClass(PageCountStep1Mapper.class);
        job.setReducerClass(PageCountStep1Reducer.class);

        job.setMapOutputKeyClass(Text.class);
        job.setMapOutputValueClass(IntWritable.class);

        job.setOutputKeyClass(Text.class);
        job.setOutputValueClass(IntWritable.class);

        FileInputFormat.setInputPaths(job, new Path("F:\\mrdata\\url\\input"));
        FileOutputFormat.setOutputPath(job, new Path("F:\\mrdata\\url\\countout"));

        // Counting does not need a global order, so several reduce tasks are fine here.
        job.setNumReduceTasks(3);

        // Report failure through the exit code instead of ignoring the result.
        System.exit(job.waitForCompletion(true) ? 0 : 1);
    }
}

// Custom key type that defines how pages are compared

import java.io.DataInput;
import java.io.DataOutput;
import java.io.IOException;

import org.apache.hadoop.io.WritableComparable;

public class PageCount implements WritableComparable<PageCount> {

    // Hadoop creates key instances by reflection, so the implicit
    // no-arg constructor must stay available.
    private String page;
    private int count;

    public void set(String page, int count) {
        this.page = page;
        this.count = count;
    }

    public String getPage() {
        return page;
    }

    public void setPage(String page) {
        this.page = page;
    }

    public int getCount() {
        return count;
    }

    public void setCount(int count) {
        this.count = count;
    }

    @Override
    public int compareTo(PageCount o) {
        // Descending by count; ties are broken by page name, ascending.
        // Integer.compare avoids the overflow that subtraction can hit for arbitrary ints.
        int byCount = Integer.compare(o.getCount(), this.count);
        return byCount != 0 ? byCount : this.page.compareTo(o.getPage());
    }

    @Override
    public void write(DataOutput out) throws IOException {
        // Fields must be written in the same order readFields reads them.
        out.writeUTF(this.page);
        out.writeInt(this.count);
    }

    @Override
    public void readFields(DataInput in) throws IOException {
        this.page = in.readUTF();
        this.count = in.readInt();
    }

    @Override
    public String toString() {
        return this.page + "," + this.count;
    }
}
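The key type has two contracts to honor: `readFields` must read exactly what `write` wrote, in the same order, and `compareTo` must put higher counts first. The following is a small plain-Java check of both, using a hypothetical stand-in class `PageCountSketch` that mirrors `PageCount` but does not implement Hadoop's `WritableComparable`, so it runs with the JDK alone. The `roundTrip` helper is likewise invented for this sketch.

```java
import java.io.ByteArrayInputStream;
import java.io.ByteArrayOutputStream;
import java.io.DataInputStream;
import java.io.DataOutputStream;
import java.io.IOException;
import java.io.UncheckedIOException;

// Hypothetical stand-in mirroring PageCount's write/readFields/compareTo.
final class PageCountSketch implements Comparable<PageCountSketch> {
    String page;
    int count;

    PageCountSketch() { }

    PageCountSketch(String page, int count) {
        this.page = page;
        this.count = count;
    }

    void write(DataOutputStream out) throws IOException {
        out.writeUTF(page);
        out.writeInt(count);
    }

    void readFields(DataInputStream in) throws IOException {
        page = in.readUTF();   // same order as write()
        count = in.readInt();
    }

    @Override
    public int compareTo(PageCountSketch o) {
        int byCount = Integer.compare(o.count, count); // descending by count
        return byCount != 0 ? byCount : page.compareTo(o.page);
    }

    // Serializes to bytes and reads the bytes back into a fresh instance.
    static PageCountSketch roundTrip(PageCountSketch original) {
        try {
            ByteArrayOutputStream buffer = new ByteArrayOutputStream();
            try (DataOutputStream out = new DataOutputStream(buffer)) {
                original.write(out);
            }
            PageCountSketch copy = new PageCountSketch();
            try (DataInputStream in =
                    new DataInputStream(new ByteArrayInputStream(buffer.toByteArray()))) {
                copy.readFields(in);
            }
            return copy;
        } catch (IOException e) {
            throw new UncheckedIOException(e);
        }
    }

    public static void main(String[] args) {
        PageCountSketch a = new PageCountSketch("/index.html", 5);
        PageCountSketch b = new PageCountSketch("/about.html", 9);
        PageCountSketch copy = roundTrip(a);
        System.out.println(copy.page + "," + copy.count);
        System.out.println(b.compareTo(a) < 0); // true: the higher count sorts first
    }
}
```

If the read order in `readFields` were swapped relative to `write`, the round trip would fail or return garbage, which is the most common mistake when writing a custom key.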

// Step 2: output the Step 1 results sorted by visit count

import java.io.IOException;

import org.apache.hadoop.conf.Configuration;
import org.apache.hadoop.fs.Path;
import org.apache.hadoop.io.LongWritable;
import org.apache.hadoop.io.NullWritable;
import org.apache.hadoop.io.Text;
import org.apache.hadoop.mapreduce.Job;
import org.apache.hadoop.mapreduce.Mapper;
import org.apache.hadoop.mapreduce.Reducer;
import org.apache.hadoop.mapreduce.lib.input.FileInputFormat;
import org.apache.hadoop.mapreduce.lib.output.FileOutputFormat;

public class PageCountStep2 {

    public static class PageCountStep2Mapper extends Mapper<LongWritable, Text, PageCount, NullWritable> {

        @Override
        protected void map(LongWritable key, Text value,
                Mapper<LongWritable, Text, PageCount, NullWritable>.Context context)
                throws IOException, InterruptedException {
            // Step 1 wrote each record as page<TAB>count (the default text output separator).
            String[] split = value.toString().split("\t");

            PageCount pageCount = new PageCount();
            pageCount.set(split[0], Integer.parseInt(split[1]));

            // The whole record is the key, so the framework sorts it via PageCount.compareTo.
            context.write(pageCount, NullWritable.get());
        }
    }

    public static class PageCountStep2Reducer extends Reducer<PageCount, NullWritable, PageCount, NullWritable> {

        @Override
        protected void reduce(PageCount key, Iterable<NullWritable> values,
                Reducer<PageCount, NullWritable, PageCount, NullWritable>.Context context)
                throws IOException, InterruptedException {
            // Keys arrive already sorted; just write them out.
            context.write(key, NullWritable.get());
        }
    }

    public static void main(String[] args) throws Exception {
        Configuration conf = new Configuration();
        Job job = Job.getInstance(conf);

        job.setJarByClass(PageCountStep2.class);

        job.setMapperClass(PageCountStep2Mapper.class);
        job.setReducerClass(PageCountStep2Reducer.class);

        job.setMapOutputKeyClass(PageCount.class);
        job.setMapOutputValueClass(NullWritable.class);

        job.setOutputKeyClass(PageCount.class);
        job.setOutputValueClass(NullWritable.class);

        FileInputFormat.setInputPaths(job, new Path("F:\\mrdata\\url\\countout"));
        FileOutputFormat.setOutputPath(job, new Path("F:\\mrdata\\url\\sortout"));

        // A single reduce task is what makes the sorted output global.
        job.setNumReduceTasks(1);

        System.exit(job.waitForCompletion(true) ? 0 : 1);
    }
}
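Step 2 uses a single reduce task, while Step 1 uses three. The plain-Java sketch below illustrates why the sorting job needs one: keys are sorted within each reduce task, but the default hash partitioning assigns keys to tasks without regard to their order, so the concatenated output files are only sorted piecewise. The partition formula matches Hadoop's default `HashPartitioner`; everything else (the class `PartitionSketch`, using bare `Integer` counts as keys, the sample values) is a simplifying assumption for illustration.

```java
import java.util.ArrayList;
import java.util.Arrays;
import java.util.Collections;
import java.util.List;

// Hypothetical simulation of per-reducer sorting; no Hadoop involved.
final class PartitionSketch {

    // Same formula as Hadoop's default HashPartitioner.
    static int partitionFor(Object key, int numReduceTasks) {
        return (key.hashCode() & Integer.MAX_VALUE) % numReduceTasks;
    }

    // One list per reduce task, each sorted descending, then concatenated
    // in task order (like reading the part files one after another).
    static List<Integer> simulate(List<Integer> counts, int numReduceTasks) {
        List<List<Integer>> partitions = new ArrayList<>();
        for (int i = 0; i < numReduceTasks; i++) {
            partitions.add(new ArrayList<>());
        }
        for (Integer c : counts) {
            partitions.get(partitionFor(c, numReduceTasks)).add(c);
        }
        List<Integer> concatenated = new ArrayList<>();
        for (List<Integer> p : partitions) {
            p.sort(Collections.reverseOrder());
            concatenated.addAll(p);
        }
        return concatenated;
    }

    static boolean isSortedDescending(List<Integer> values) {
        for (int i = 1; i < values.size(); i++) {
            if (values.get(i - 1) < values.get(i)) {
                return false;
            }
        }
        return true;
    }

    public static void main(String[] args) {
        List<Integer> counts = Arrays.asList(7, 3, 12, 5, 9, 1); // invented counts
        System.out.println(simulate(counts, 1)); // [12, 9, 7, 5, 3, 1]
        System.out.println(simulate(counts, 3)); // [12, 9, 3, 7, 1, 5]
    }
}
```

With one task the result is globally ordered; with three, each partition is ordered but the whole is not. A single reducer is the simplest fix and works well when the Step 1 output is small, which is typically the case for per-page totals.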
