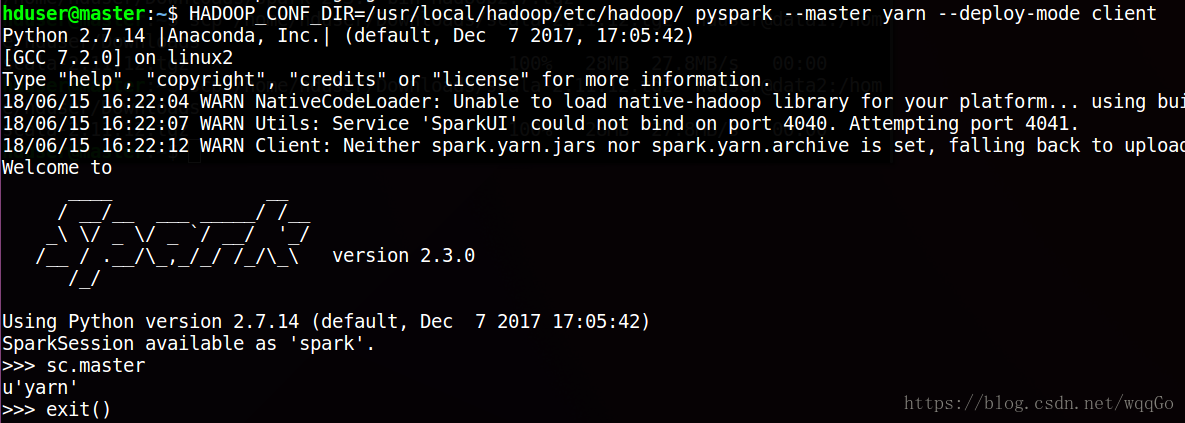

hduser@master:/usr/local/hadoop/etc/hadoop$ HADOOP_CONF_DIR=/usr/local/hadoop/etc/hadoop/ pyspark --master yarn --deploy-mode client

Python 2.7.14 |Anaconda, Inc.| (default, Dec 7 2017, 17:05:42)

[GCC 7.2.0] on linux2

Type "help", "copyright", "credits" or "license" for more information.

18/06/15 10:25:33 WARN NativeCodeLoader: Unable to load native-hadoop library for your platform... using builtin-java classes where applicable

18/06/15 10:25:39 WARN Client: Neither spark.yarn.jars nor spark.yarn.archive is set, falling back to uploading libraries under SPARK_HOME.

18/06/15 10:26:39 ERROR YarnClientSchedulerBackend: Yarn application has already exited with state FINISHED!

18/06/15 10:26:39 ERROR TransportClient: Failed to send RPC 7707247702813566843 to /10.200.68.191:56658: java.nio.channels.ClosedChannelException

java.nio.channels.ClosedChannelException

at io.netty.channel.AbstractChannel$AbstractUnsafe.write(...)(Unknown Source)

18/06/15 10:26:39 ERROR YarnSchedulerBackend$YarnSchedulerEndpoint: Sending RequestExecutors(0,0,Map(),Set()) to AM was unsuccessful

java.io.IOException: Failed to send RPC 7707247702813566843 to /10.200.68.191:56658: java.nio.channels.ClosedChannelException

at org.apache.spark.network.client.TransportClient.lambda$sendRpc$2(TransportClient.java:237)

at io.netty.util.concurrent.DefaultPromise.notifyListener0(DefaultPromise.java:507)

at io.netty.util.concurrent.DefaultPromise.notifyListenersNow(DefaultPromise.java:481)

at io.netty.util.concurrent.DefaultPromise.access$000(DefaultPromise.java:34)

at io.netty.util.concurrent.DefaultPromise$1.run(DefaultPromise.java:431)

at io.netty.util.concurrent.AbstractEventExecutor.safeExecute(AbstractEventExecutor.java:163)

at io.netty.util.concurrent.SingleThreadEventExecutor.runAllTasks(SingleThreadEventExecutor.java:403)

at io.netty.channel.nio.NioEventLoop.run(NioEventLoop.java:463)

at io.netty.util.concurrent.SingleThreadEventExecutor$5.run(SingleThreadEventExecutor.java:858)

at io.netty.util.concurrent.DefaultThreadFactory$DefaultRunnableDecorator.run(DefaultThreadFactory.java:138)

at java.lang.Thread.run(Thread.java:748)

Caused by: java.nio.channels.ClosedChannelException

at io.netty.channel.AbstractChannel$AbstractUnsafe.write(...)(Unknown Source)

18/06/15 10:26:39 ERROR Utils: Uncaught exception in thread Yarn application state monitor

org.apache.spark.SparkException: Exception thrown in awaitResult:

at org.apache.spark.util.ThreadUtils$.awaitResult(ThreadUtils.scala:205)

at org.apache.spark.rpc.RpcTimeout.awaitResult(RpcTimeout.scala:75)

at org.apache.spark.scheduler.cluster.CoarseGrainedSchedulerBackend.requestTotalExecutors(CoarseGrainedSchedulerBackend.scala:566)

at org.apache.spark.scheduler.cluster.YarnSchedulerBackend.stop(YarnSchedulerBackend.scala:95)

at org.apache.spark.scheduler.cluster.YarnClientSchedulerBackend.stop(YarnClientSchedulerBackend.scala:155)

at org.apache.spark.scheduler.TaskSchedulerImpl.stop(TaskSchedulerImpl.scala:508)

at org.apache.spark.scheduler.DAGScheduler.stop(DAGScheduler.scala:1752)

at org.apache.spark.SparkContext$$anonfun$stop$8.apply$mcV$sp(SparkContext.scala:1924)

at org.apache.spark.util.Utils$.tryLogNonFatalError(Utils.scala:1357)

at org.apache.spark.SparkContext.stop(SparkContext.scala:1923)

at org.apache.spark.scheduler.cluster.YarnClientSchedulerBackend$MonitorThread.run(YarnClientSchedulerBackend.scala:112)

Caused by: java.io.IOException: Failed to send RPC 7707247702813566843 to /10.200.68.191:56658: java.nio.channels.ClosedChannelException

at org.apache.spark.network.client.TransportClient.lambda$sendRpc$2(TransportClient.java:237)

at io.netty.util.concurrent.DefaultPromise.notifyListener0(DefaultPromise.java:507)

at io.netty.util.concurrent.DefaultPromise.notifyListenersNow(DefaultPromise.java:481)

at io.netty.util.concurrent.DefaultPromise.access$000(DefaultPromise.java:34)

at io.netty.util.concurrent.DefaultPromise$1.run(DefaultPromise.java:431)

at io.netty.util.concurrent.AbstractEventExecutor.safeExecute(AbstractEventExecutor.java:163)

at io.netty.util.concurrent.SingleThreadEventExecutor.runAllTasks(SingleThreadEventExecutor.java:403)

at io.netty.channel.nio.NioEventLoop.run(NioEventLoop.java:463)

at io.netty.util.concurrent.SingleThreadEventExecutor$5.run(SingleThreadEventExecutor.java:858)

at io.netty.util.concurrent.DefaultThreadFactory$DefaultRunnableDecorator.run(DefaultThreadFactory.java:138)

at java.lang.Thread.run(Thread.java:748)

Caused by: java.nio.channels.ClosedChannelException

at io.netty.channel.AbstractChannel$AbstractUnsafe.write(...)(Unknown Source)

Traceback (most recent call last):

File "/usr/local/spark/python/pyspark/shell.py", line 45, in <module>

spark = SparkSession.builder\

File "/usr/local/spark/python/pyspark/sql/session.py", line 183, in getOrCreate

session._jsparkSession.sessionState().conf().setConfString(key, value)

File "/usr/local/spark/python/lib/py4j-0.10.6-src.zip/py4j/java_gateway.py", line 1160, in __call__

File "/usr/local/spark/python/pyspark/sql/utils.py", line 79, in deco

raise IllegalArgumentException(s.split(': ', 1)[1], stackTrace)

pyspark.sql.utils.IllegalArgumentException: u"Error while instantiating 'org.apache.spark.sql.hive.HiveSessionStateBuilder':"

>>> sc.master

Traceback (most recent call last):

File "<stdin>", line 1, in <module>

NameError: name 'sc' is not defined
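The driver-side log above only says that the YARN ApplicationMaster exited early. It does not say why; the reason is normally in the container logs, which you can fetch with `yarn logs -applicationId <application id>` when log aggregation is enabled. One frequently reported cause, and the one the `yarn-site.xml` change below targets, is the NodeManager's virtual-memory check. A container is killed once its virtual memory exceeds its physical allocation multiplied by `yarn.nodemanager.vmem-pmem-ratio` (2.1 by default). The following is a simplified model of that rule, not the NodeManager's actual code. The function names and the example numbers are hypothetical.

```python
def vmem_limit_mb(physical_mb: float, vmem_pmem_ratio: float = 2.1) -> float:
    """Virtual-memory ceiling for a container: physical allocation times
    yarn.nodemanager.vmem-pmem-ratio (2.1 is YARN's default)."""
    return physical_mb * vmem_pmem_ratio


def would_be_killed(vmem_used_mb: float, physical_mb: float,
                    vmem_pmem_ratio: float = 2.1,
                    vmem_check_enabled: bool = True) -> bool:
    """Simplified model: True if the vmem check would kill the container.
    Setting yarn.nodemanager.vmem-check-enabled=false turns the check off."""
    if not vmem_check_enabled:
        return False
    return vmem_used_mb > vmem_limit_mb(physical_mb, vmem_pmem_ratio)


if __name__ == "__main__":
    # Hypothetical numbers: a 1024 MB ApplicationMaster container whose JVM
    # has reserved 2400 MB of virtual address space.
    print(f"limit: {vmem_limit_mb(1024):.1f} MB")                 # limit: 2150.4 MB
    print(would_be_killed(2400, 1024))                            # True
    print(would_be_killed(2400, 1024, vmem_check_enabled=False))  # False
```

JVMs often reserve far more virtual address space than they actually touch, which is why a container with modest physical usage can still trip this check.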

References:

https://www.cnblogs.com/tibit/p/7337045.html

https://blog.youkuaiyun.com/pucao_cug/article/details/72453382

Add the following to yarn-site.xml:

<property>
  <name>yarn.nodemanager.vmem-check-enabled</name>
  <value>false</value>
</property>
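If you would rather script the change than edit each node's file by hand, here is a minimal sketch using Python's standard `xml.etree.ElementTree`. The function name `set_yarn_property` and the sample configuration are hypothetical. ElementTree does not keep XML comments, the `<?xml ...?>` declaration, or processing instructions, so keep a backup of the original file.

```python
import xml.etree.ElementTree as ET


def set_yarn_property(xml_text: str, name: str, value: str) -> str:
    """Return yarn-site.xml text with property `name` set to `value`,
    appending a new <property> element if it is not present yet."""
    root = ET.fromstring(xml_text)  # the <configuration> element
    for prop in root.findall("property"):
        if prop.findtext("name") == name:
            value_el = prop.find("value")
            if value_el is None:
                value_el = ET.SubElement(prop, "value")
            value_el.text = value
            break
    else:
        # No existing entry: add <property><name/><value/></property>
        prop = ET.SubElement(root, "property")
        ET.SubElement(prop, "name").text = name
        ET.SubElement(prop, "value").text = value
    return ET.tostring(root, encoding="unicode")


if __name__ == "__main__":
    # Hypothetical existing configuration with one unrelated property.
    original = """<configuration>
  <property>
    <name>yarn.nodemanager.aux-services</name>
    <value>mapreduce_shuffle</value>
  </property>
</configuration>"""
    print(set_yarn_property(original, "yarn.nodemanager.vmem-check-enabled", "false"))
```

Updating an existing entry instead of always appending avoids leaving two conflicting definitions of the same property in the file.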

After restarting and reformatting the cluster, the problem was resolved.
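The vmem check runs inside each NodeManager, and NodeManagers read `yarn-site.xml` at startup. The property therefore has to be present on every worker node before YARN is restarted (for example with `stop-yarn.sh` and `start-yarn.sh` from Hadoop's `sbin` directory). Below is a small pre-restart check. It assumes you have collected each node's `yarn-site.xml` contents into a dict; the hostnames and helper names are hypothetical. The property defaults to `true`, so a node with no entry still enforces the check.

```python
import xml.etree.ElementTree as ET

VMEM_CHECK = "yarn.nodemanager.vmem-check-enabled"


def vmem_check_enabled(xml_text: str) -> bool:
    """Effective value of the vmem check in one yarn-site.xml (simplified:
    ignores variable substitution and other config files)."""
    root = ET.fromstring(xml_text)
    value = None
    for prop in root.findall("property"):
        if prop.findtext("name") == VMEM_CHECK:
            # If listed twice, the last entry is taken here.
            value = (prop.findtext("value") or "").strip().lower()
    # Missing or unrecognised values fall back to the default, true.
    return value != "false"


def nodes_still_checking(configs: dict) -> list:
    """Hostnames whose yarn-site.xml would still enforce the vmem check."""
    return sorted(host for host, text in configs.items() if vmem_check_enabled(text))


if __name__ == "__main__":
    # Hypothetical cluster: each value is that node's yarn-site.xml text.
    configs = {
        "master": "<configuration><property><name>yarn.nodemanager.vmem-check-enabled"
                  "</name><value>false</value></property></configuration>",
        "slave1": "<configuration></configuration>",
    }
    print(nodes_still_checking(configs))  # ['slave1']
```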

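Summary: following the tutorials referenced above, I adjusted the yarn-site.xml configuration file, then restarted and reformatted the cluster, which resolved the error Spark hit when running on Hadoop YARN.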
