Map Join Overview

Processing too many tables on the Reduce side very easily leads to data skew.
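A quick way to see the problem: in a Reduce-side join, every record that shares a join key is routed to the same reducer. The plain-Java sketch below has no Hadoop dependency. It reproduces the formula of Hadoop's default `HashPartitioner`, `(hash & Integer.MAX_VALUE) % numReduceTasks`, and counts how many records each reducer would receive. It assumes `String.hashCode()` as the hash. Hadoop's `Text` hashes its UTF-8 bytes, so real reducer assignments can differ. The class name `SkewDemo` and the sample pids are hypothetical.

```java
import java.util.List;

// Plain-Java sketch (no Hadoop dependency): counts how many records each
// reducer would receive if records were partitioned by join key with the
// same formula as Hadoop's default HashPartitioner.
// Assumption: String.hashCode() stands in for the key's hash; Hadoop's Text
// hashes its UTF-8 bytes, so real reducer assignments may differ.
class SkewDemo {

    static int partitionFor(String key, int numReduceTasks) {
        // Mask the sign bit so a negative hash code still maps to a valid partition.
        return (key.hashCode() & Integer.MAX_VALUE) % numReduceTasks;
    }

    static int[] reducerLoads(List<String> joinKeys, int numReduceTasks) {
        int[] loads = new int[numReduceTasks];
        for (String key : joinKeys) {
            loads[partitionFor(key, numReduceTasks)]++;
        }
        return loads;
    }

    public static void main(String[] args) {
        // Hypothetical workload: one "hot" pid dominates the order records.
        List<String> pids = List.of("01", "01", "01", "01", "01", "01", "01", "01", "02", "03");
        int[] loads = reducerLoads(pids, 2);
        for (int i = 0; i < loads.length; i++) {
            System.out.println("reducer " + i + ": " + loads[i] + " records");
        }
    }
}
```

With this hypothetical input, reducer 1 receives 9 of the 10 records. All eight records for pid `01` must land on the same reducer, no matter how many reducers are configured.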

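Instead, we can cache one or more tables on the Map side and apply the business logic there in advance. This moves work onto the Map side, relieves the data pressure on the Reduce side, and reduces data skew as much as possible. It only works when the cached tables are small enough to fit in each Map task's memory.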

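Approach: use the distributed cache (DistributedCache).

(1) In the Mapper's setup phase, read the cached file into an in-memory collection.

(2) In the Driver class, register the file to be cached.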

```java
// Cache a regular file to the nodes where the tasks run (local run)
job.addCacheFile(new URI("file:///D:/input/tableCache/pd.txt"));

// When running on a cluster, use an HDFS path instead
job.addCacheFile(new URI("hdfs://hadoop102:8020/Cache/pd.txt"));
```
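Step (1) puts the loading in `setup()` because Hadoop calls a Mapper's `setup()` once per task, then `map()` once per input record, then `cleanup()`. The cached table is therefore read once per task rather than once per record. The plain-Java sketch below imitates that call order without depending on Hadoop. `MiniMapTask`, `CachedLookupTask`, and the cached entry are hypothetical names used only for illustration.

```java
import java.util.ArrayList;
import java.util.HashMap;
import java.util.List;
import java.util.Map;

// Plain-Java imitation of the order in which Hadoop calls a Mapper's methods:
// setup() once per task, map() once per input record, then cleanup().
// MiniMapTask is a hypothetical class, not part of Hadoop.
abstract class MiniMapTask {
    protected abstract void setup();
    protected abstract void map(String record, List<String> out);
    protected void cleanup() { }

    // Mirrors the structure of Mapper.run(): setup, a loop over records, cleanup in finally.
    final List<String> run(List<String> records) {
        List<String> out = new ArrayList<>();
        setup();
        try {
            for (String record : records) {
                map(record, out);
            }
        } finally {
            cleanup();
        }
        return out;
    }
}

// Hypothetical task that loads a lookup table in setup() and uses it in map().
class CachedLookupTask extends MiniMapTask {
    final Map<String, String> cache = new HashMap<>();
    int setupCalls = 0;

    @Override
    protected void setup() {
        // Stand-in for reading pd.txt from the distributed cache: runs once, not per record.
        setupCalls++;
        cache.put("01", "hypothetical-product-A");
    }

    @Override
    protected void map(String pid, List<String> out) {
        out.add(pid + "\t" + cache.getOrDefault(pid, "UNKNOWN"));
    }
}
```

Running `new CachedLookupTask().run(List.of("01", "01", "02"))` calls `setup()` once and `map()` three times. The unmatched pid `02` is mapped to `UNKNOWN` by `getOrDefault`.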

Map Join Example

1. Requirements Analysis

Our dataset and the result we want are the same as in the previous post: (link to the previous post)

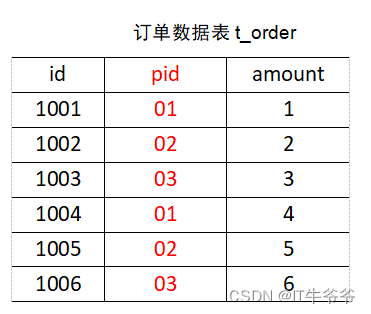

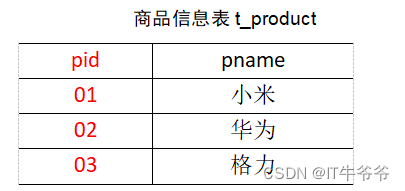

Once again there are two tables:

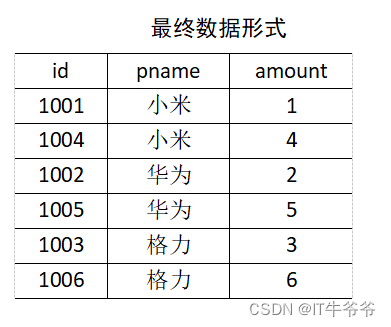

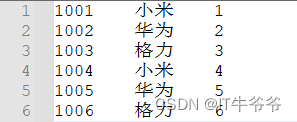

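We want to merge the data in the product table into the order table by product pid. Each final output record contains the order id, the product name, and the order quantity.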
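Without Hadoop, this requirement is a lookup (hash) join. You load the small product table into a map keyed by pid, then stream the order table and replace each pid with its name. The plain-Java sketch below assumes the tab-separated layouts that the Mapper in this post indexes: product rows `pid\tpname` and order rows `id\tpid\tamount`. The class name `PidJoinSketch` and the sample rows are hypothetical.

```java
import java.util.ArrayList;
import java.util.HashMap;
import java.util.List;
import java.util.Map;

// Plain-Java sketch of the pid join, with no Hadoop dependency.
// Assumed tab-separated layouts: product rows "pid\tpname", order rows "id\tpid\tamount".
class PidJoinSketch {

    // Build phase: load the small product table into memory, keyed by pid.
    static Map<String, String> loadProducts(List<String> productLines) {
        Map<String, String> pidToName = new HashMap<>();
        for (String line : productLines) {
            String[] fields = line.split("\t");
            pidToName.put(fields[0], fields[1]);
        }
        return pidToName;
    }

    // Probe phase: stream the large order table and replace each pid with its pname.
    static List<String> join(List<String> orderLines, Map<String, String> pidToName) {
        List<String> result = new ArrayList<>();
        for (String line : orderLines) {
            String[] fields = line.split("\t");
            // HashMap.get returns null for an unknown pid; concatenation renders it as "null".
            String pname = pidToName.get(fields[1]);
            result.add(fields[0] + "\t" + pname + "\t" + fields[2]);
        }
        return result;
    }

    public static void main(String[] args) {
        // Hypothetical sample rows, for illustration only.
        Map<String, String> products = loadProducts(List.of("01\tproductA", "02\tproductB"));
        List<String> orders = List.of("1001\t01\t1", "1002\t02\t2", "1003\t03\t3");
        join(orders, products).forEach(System.out::println);
    }
}
```

An order whose pid is missing from the product table comes out with `null` as its product name, the same as the Mapper in this post. Java string concatenation renders a `null` reference as `null`.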

2. Code and Result Analysis

package mapJoin;

import org.apache.hadoop.conf.Configuration;

import org.apache.hadoop.fs.Path;

import org.apache.hadoop.io.NullWritable;

import org.apache.hadoop.io.Text;

import org.apache.hadoop.mapreduce.Job;

import org.apache.hadoop.mapreduce.lib.input.FileInputFormat;

import org.apache.hadoop.mapreduce.lib.output.FileOutputFormat;

import java.io.IOException;

import java.net.URI;

import java.net.URISyntaxException;

public class MapJoinDriver {

public static void main(String[] args) throws IOException, URISyntaxException, ClassNotFoundException, InterruptedException {

// 1 获取job信息

Configuration conf = new Configuration();

Job job = Job.getInstance(conf);

// 2 设置加载jar包路径

job.setJarByClass(MapJoinDriver.class);

// 3 关联mapper

job.setMapperClass(MapJoinMapper.class);

// 4 设置Map输出KV类型

job.setMapOutputKeyClass(Text.class);

job.setMapOutputValueClass(NullWritable.class);

// 5 设置最终输出KV类型

job.setOutputKeyClass(Text.class);

job.setOutputValueClass(NullWritable.class);

// 加载缓存数据

job.addCacheFile(new URI("file:///D:/input/tableCache/pd.txt"));

// Map端Join的逻辑不需要Reduce阶段,设置reduceTask数量为0

job.setNumReduceTasks(0);

// 6 设置输入输出路径

FileInputFormat.setInputPaths(job, new Path("D:\\input\\inputTable"));

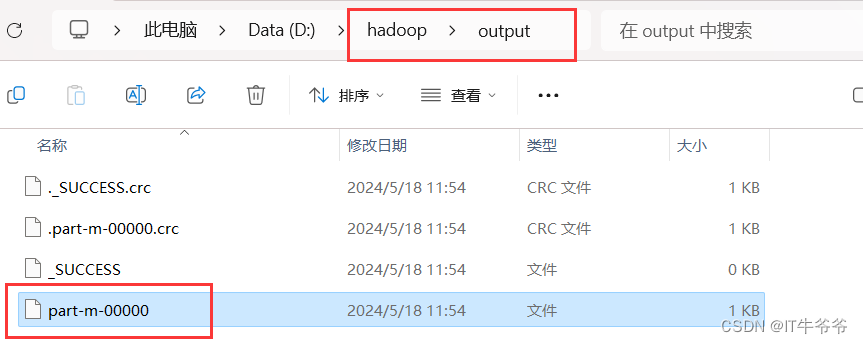

FileOutputFormat.setOutputPath(job, new Path("D:\\hadoop\\output"));

// 7 提交

boolean b = job.waitForCompletion(true);

System.exit(b ? 0 : 1);

}

}

```java
package mapJoin;

import org.apache.hadoop.fs.FSDataInputStream;
import org.apache.hadoop.fs.FileSystem;
import org.apache.hadoop.fs.Path;
import org.apache.hadoop.io.IOUtils;
import org.apache.hadoop.io.LongWritable;
import org.apache.hadoop.io.NullWritable;
import org.apache.hadoop.io.Text;
import org.apache.hadoop.mapreduce.Mapper;

import java.io.BufferedReader;
import java.io.IOException;
import java.io.InputStreamReader;
import java.net.URI;
import java.nio.charset.StandardCharsets;
import java.util.HashMap;

public class MapJoinMapper extends Mapper<LongWritable, Text, Text, NullWritable> {

    // pid -> pname, loaded from the cached pd.txt
    private final HashMap<String, String> pdMap = new HashMap<>();
    private final Text outK = new Text();

    @Override
    protected void setup(Context context) throws IOException, InterruptedException {
        // Get the cached file (pd.txt) and load its contents into pdMap
        URI[] cacheFiles = context.getCacheFiles();
        // Resolve the file system from the cache URI itself (file:// locally, hdfs:// on a cluster)
        FileSystem fs = FileSystem.get(cacheFiles[0], context.getConfiguration());
        FSDataInputStream fis = fs.open(new Path(cacheFiles[0]));

        // Read the data from the stream
        BufferedReader reader = new BufferedReader(new InputStreamReader(fis, StandardCharsets.UTF_8));
        String line;
        // Stops at end of file or at the first empty line
        while ((line = reader.readLine()) != null && !line.isEmpty()) {
            // Split: pid \t pname
            String[] fields = line.split("\t");
            pdMap.put(fields[0], fields[1]);
        }

        // Close the stream
        IOUtils.closeStream(reader);
    }

    @Override
    protected void map(LongWritable key, Text value, Context context) throws IOException, InterruptedException {
        // Process order.txt: id \t pid \t amount
        String line = value.toString();
        String[] fields = line.split("\t");

        // Look up the product name by pid
        String pname = pdMap.get(fields[1]);

        // Emit: order id \t product name \t order quantity
        outK.set(fields[0] + "\t" + pname + "\t" + fields[2]);
        context.write(outK, NullWritable.get());
    }
}
```
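One caveat about the `setup()` loop above: it ends at the first empty line, so a blank line in the middle of pd.txt would silently drop every product after it. The plain-Java sketch below shows one alternative that skips blank and malformed lines and keeps reading until end of file. The class name `PdCacheParser` and the sample contents are hypothetical. Reading from a `Reader` (rather than an `FSDataInputStream`) is an assumption made so the sketch runs without Hadoop.

```java
import java.io.BufferedReader;
import java.io.IOException;
import java.io.Reader;
import java.io.StringReader;
import java.io.UncheckedIOException;
import java.util.HashMap;
import java.util.Map;

// Plain-Java sketch of the pd.txt parsing step. Unlike a loop that ends at the
// first empty line, it skips blank or malformed lines and reads to end of file.
class PdCacheParser {

    static Map<String, String> parse(Reader source) {
        Map<String, String> pidToName = new HashMap<>();
        // try-with-resources closes the reader even if reading fails.
        try (BufferedReader reader = new BufferedReader(source)) {
            String line;
            while ((line = reader.readLine()) != null) {
                if (line.trim().isEmpty()) {
                    continue; // skip blank lines instead of stopping
                }
                String[] fields = line.split("\t");
                if (fields.length < 2) {
                    continue; // skip lines that lack a pid and a pname
                }
                pidToName.put(fields[0], fields[1]);
            }
        } catch (IOException e) {
            throw new UncheckedIOException(e);
        }
        return pidToName;
    }

    public static void main(String[] args) {
        // Hypothetical contents: the blank line would end the original loop early.
        String contents = "01\tproductA\n\n02\tproductB\n";
        System.out.println(parse(new StringReader(contents)).size());
    }
}
```

Inside `setup()`, the same method could be given `new InputStreamReader(fis, StandardCharsets.UTF_8)` in place of the `StringReader`.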

Run result:
