1. pre-flight (pre-flight checks)

Checks run before installation to verify that the environment meets the requirements.

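One of them checks whether the Docker version is on the list of validated versions; if it is not, kubeadm prints a warning and continues.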
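The warning can be illustrated with a small version comparison. This is only a sketch: the value 20.10 is copied from the warning text in the init output later in this post, and the "newer than the latest validated release" rule and both helper names are assumptions made for illustration, not kubeadm's actual code.

```shell
# Latest Docker release reported as validated (copied from the warning
# text in this post; treat it as an assumption for other kubeadm builds).
LATEST_VALIDATED="20.10"

# Succeeds when version $1 (major.minor[.patch]) is newer than the
# major.minor release given as $2.
is_newer_than() {
  local rest1 rest2 maj1 min1 maj2 min2
  maj1=${1%%.*}; rest1=${1#*.}; min1=${rest1%%.*}
  maj2=${2%%.*}; rest2=${2#*.}; min2=${rest2%%.*}
  [ "$maj1" -gt "$maj2" ] || { [ "$maj1" -eq "$maj2" ] && [ "$min1" -gt "$min2" ]; }
}

# Hypothetical helper: print a message in the spirit of the pre-flight warning.
check_docker_version() {
  if is_newer_than "$1" "$LATEST_VALIDATED"; then
    echo "WARNING: Docker $1 is newer than the latest validated version $LATEST_VALIDATED"
  else
    echo "OK: Docker $1"
  fi
}
```

On a machine with Docker installed, the installed version could be passed in with check_docker_version "$(docker version --format '{{.Server.Version}}')".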

2. Pulling the images required to set up the Kubernetes cluster

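kubeadm pulls the images from the default registry (k8s.gcr.io), the same action as kubeadm config images pull. Google's registry is often unreachable from mainland China; in that case the images can be pulled manually from a domestic mirror.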

The following command lists the images that will be pulled:

kubeadm config images list

/**

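remote version is much newer: v1.28.4; falling back to: stable-1.23

could not fetch a Kubernetes version from the internet: unable to get URL "https://dl.k8s.io/release/stable-1.23.txt": Get "https://cdn.dl.k8s.io/release/stable-1.23.txt": context deadline exceeded (Client.Timeout exceeded while awaiting headers)

falling back to the local client version: v1.23.1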

k8s.gcr.io/kube-apiserver:v1.23.1

k8s.gcr.io/kube-controller-manager:v1.23.1

k8s.gcr.io/kube-scheduler:v1.23.1

k8s.gcr.io/kube-proxy:v1.23.1

k8s.gcr.io/pause:3.6

k8s.gcr.io/etcd:3.5.1-0

k8s.gcr.io/coredns/coredns:v1.8.6

**/

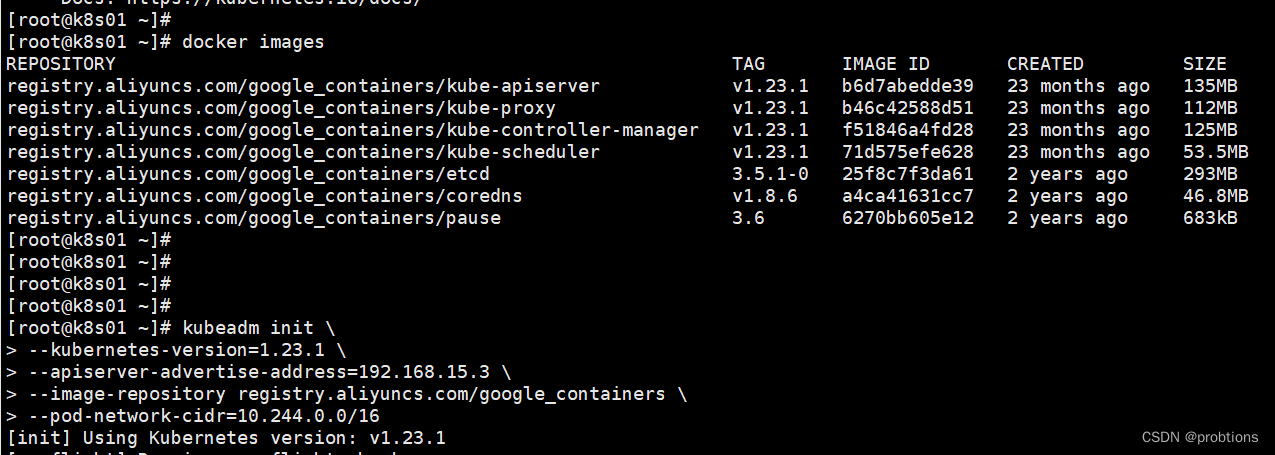

docker image pull registry.aliyuncs.com/google_containers/kube-apiserver:v1.23.1

docker image pull registry.aliyuncs.com/google_containers/kube-controller-manager:v1.23.1

docker image pull registry.aliyuncs.com/google_containers/kube-scheduler:v1.23.1

docker image pull registry.aliyuncs.com/google_containers/kube-proxy:v1.23.1

docker image pull registry.aliyuncs.com/google_containers/pause:3.6

docker image pull registry.aliyuncs.com/google_containers/etcd:3.5.1-0

docker image pull registry.aliyuncs.com/google_containers/coredns/coredns:v1.8.6  # wrong: the nested coredns/coredns path fails on this mirror; use the next command

docker image pull registry.aliyuncs.com/google_containers/coredns:v1.8.6
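The manual pulls above can be scripted. The sketch below assumes the mirror keeps every image directly under google_containers (which is why coredns/coredns has to become coredns); mirror_name and pull_via_mirror are hypothetical helper names, and nothing is pulled until pull_via_mirror is called.

```shell
MIRROR="registry.aliyuncs.com/google_containers"

# Map an upstream image name to its name on the mirror.
# Only the last path component is kept, so coredns/coredns becomes coredns.
mirror_name() {
  echo "${MIRROR}/${1##*/}"
}

# Read upstream image names on stdin, pull each one from the mirror and
# re-tag it with the upstream name so that it is available locally
# under the name kubeadm looks for by default.
pull_via_mirror() {
  local upstream
  while read -r upstream; do
    [ -n "$upstream" ] || continue
    docker image pull "$(mirror_name "$upstream")" || return 1
    docker image tag "$(mirror_name "$upstream")" "$upstream" || return 1
  done
}
```

A possible invocation is kubeadm config images list --kubernetes-version v1.23.1 | pull_via_mirror; pinning the version avoids the lookup on dl.k8s.io that timed out above. The re-tagging step is only needed when kubeadm init uses the default registry. If init is run with --image-repository pointing at the mirror, as in the init command later in this post, the mirror names are used directly.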

3. certs

Generates the certificates and keys for the Kubernetes components under /etc/kubernetes/pki.
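To see whether this phase completed, the PKI directory can be compared with the files kubeadm is expected to write. The list below is derived from the [certs] lines in the init output in this post (one .crt and one .key per certificate, plus the sa key pair); missing_pki_files is a hypothetical helper, and the default path is the one named above.

```shell
# Files expected after the certs phase, relative to the PKI directory.
EXPECTED_PKI_FILES="ca.crt ca.key apiserver.crt apiserver.key
apiserver-kubelet-client.crt apiserver-kubelet-client.key
front-proxy-ca.crt front-proxy-ca.key
front-proxy-client.crt front-proxy-client.key
etcd/ca.crt etcd/ca.key etcd/server.crt etcd/server.key
etcd/peer.crt etcd/peer.key
etcd/healthcheck-client.crt etcd/healthcheck-client.key
apiserver-etcd-client.crt apiserver-etcd-client.key
sa.key sa.pub"

# Print every expected file that is absent from the given PKI directory.
missing_pki_files() {
  local dir="${1:-/etc/kubernetes/pki}" f
  for f in $EXPECTED_PKI_FILES; do
    [ -f "$dir/$f" ] || echo "$f"
  done
}
```

Running missing_pki_files with no argument on the master should print nothing after a successful init. The expiry date of a single certificate can be read with openssl x509 -in /etc/kubernetes/pki/apiserver.crt -noout -enddate.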

4. kubeconfig

Generates the kubeconfig files for the components under /etc/kubernetes/:

admin.conf

kubelet.conf

controller-manager.conf

scheduler.conf

5. kubelet-start

Writes the kubelet environment file and the kubelet configuration file, then starts the kubelet:

/var/lib/kubelet/kubeadm-flags.env

/var/lib/kubelet/config.yaml

6. control-plane

Creates the static Pod manifests for the control-plane components under /etc/kubernetes/manifests.
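The kubelet runs each manifest in that directory as a static Pod, and the API server shows it as a mirror Pod named after the Pod with the node name appended. The sketch below derives those names from the file names; it assumes each file is called <pod-name>.yaml, which matches the names kubeadm writes, and mirror_pod_names is a hypothetical helper.

```shell
# Print the expected mirror Pod name for every manifest in a directory.
# $1: manifest directory, $2: node name.
# Assumption: the file name (minus .yaml) equals the Pod's metadata.name.
mirror_pod_names() {
  local dir="$1" node="$2" f
  for f in "$dir"/*.yaml; do
    [ -e "$f" ] || continue      # directory without manifests
    f=${f##*/}                   # strip the directory part
    echo "${f%.yaml}-${node}"    # e.g. kube-apiserver-k8s01
  done
}
```

On the master in this post, mirror_pod_names /etc/kubernetes/manifests k8s01 should list names that also appear in kubectl get pods -n kube-system.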

7. mark-control-plane

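Adds labels and a taint to the node to mark it as a control-plane node; the NoSchedule taint keeps ordinary workloads off it.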

8. bootstrap-token

Creates the bootstrap token and configures the RBAC rules that allow joining nodes to use it.
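A bootstrap token has a fixed shape: a 6-character token ID, a dot, and a 16-character secret, all lowercase letters and digits. A minimal format check, with hypothetical helper names, could look like this:

```shell
# Succeeds when $1 has the bootstrap token format <id>.<secret>.
is_bootstrap_token() {
  printf '%s\n' "$1" | grep -Eq '^[a-z0-9]{6}\.[a-z0-9]{16}$'
}

# Print the public token ID, i.e. the part before the dot.
token_id() {
  echo "${1%%.*}"
}
```

The tokens currently known to the cluster can be listed with kubeadm token list. By default a token expires after 24 hours, and kubeadm token create --print-join-command issues a new one together with the matching join command.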

9. kubelet-finalize

Updates kubelet.conf so that it points to a rotatable client certificate, that is, one the kubelet can renew automatically:

[kubelet-finalize] Updating "/etc/kubernetes/kubelet.conf" to point to a rotatable kubelet client certificate and key

10. addons

Installs the essential (required) addons: CoreDNS and kube-proxy.

11. Set an environment variable or create the config file

mkdir -p $HOME/.kube

sudo cp -i /etc/kubernetes/admin.conf $HOME/.kube/config

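kubectl talks to the API server directly. By default it loads the TLS certificates referenced in this config file, so the connection is authenticated and encrypted.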

View the kubeconfig that kubectl is now using (the copied admin.conf):

kubectl config view
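Which file kubectl reads depends on the two options in the heading of this step: the KUBECONFIG environment variable if it is set, otherwise $HOME/.kube/config. The sketch below mimics that lookup in a simplified form. KUBECONFIG may hold a colon-separated list, and only its first entry is considered here; both function names are hypothetical.

```shell
# Print the kubeconfig path that would be used.
# Simplification: only the first entry of a colon-separated KUBECONFIG.
active_kubeconfig() {
  local cfg="${KUBECONFIG:-}"
  cfg="${cfg%%:*}"
  echo "${cfg:-$HOME/.kube/config}"
}

# Print the first API server URL found in a kubeconfig file.
kubeconfig_server() {
  awk '$1 == "server:" { print $2; exit }' "$1"
}
```

For the cluster in this post, kubeconfig_server "$(active_kubeconfig)" would be expected to print https://192.168.15.3:6443, the address given with --apiserver-advertise-address.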

Question

Right after kubelet is installed, running systemctl start kubelet does not bring it up. So when does kubelet actually start?

Answer: on the master node, kubelet is started during initialization (the kubelet-start phase of kubeadm init); on worker nodes, it is started during kubeadm join.
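A likely reason for the failed manual start is that the two files written in the kubelet-start phase do not exist yet. The check below is a sketch under that assumption; kubelet_bootstrap_files_missing is a hypothetical helper, and the default directory is the one named in step 5.

```shell
# Print the kubelet files from the kubelet-start phase that are missing.
# No output means both files are present.
kubelet_bootstrap_files_missing() {
  local dir="${1:-/var/lib/kubelet}" f
  for f in kubeadm-flags.env config.yaml; do
    [ -f "$dir/$f" ] || echo "$dir/$f"
  done
}
```

Before kubeadm init or kubeadm join has run, the function would be expected to print both paths; afterwards it should print nothing. The kubelet's own error messages can be read with journalctl -u kubelet.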

[root@k8s01 ~]# kubeadm init \

> --kubernetes-version=1.23.1 \

> --apiserver-advertise-address=192.168.15.3 \

> --image-repository registry.aliyuncs.com/google_containers \

> --pod-network-cidr=10.244.0.0/16

[init] Using Kubernetes version: v1.23.1

[preflight] Running pre-flight checks

[WARNING SystemVerification]: this Docker version is not on the list of validated versions: 24.0.7. Latest validated version: 20.10

[WARNING Service-Kubelet]: kubelet service is not enabled, please run 'systemctl enable kubelet.service'

[preflight] Pulling images required for setting up a Kubernetes cluster

[preflight] This might take a minute or two, depending on the speed of your internet connection

[preflight] You can also perform this action in beforehand using 'kubeadm config images pull'

[certs] Using certificateDir folder "/etc/kubernetes/pki"

[certs] Generating "ca" certificate and key

[certs] Generating "apiserver" certificate and key

[certs] apiserver serving cert is signed for DNS names [k8s01 kubernetes kubernetes.default kubernetes.default.svc kubernetes.default.svc.cluster.local] and IPs [10.96.0.1 192.168.15.3]

[certs] Generating "apiserver-kubelet-client" certificate and key

[certs] Generating "front-proxy-ca" certificate and key

[certs] Generating "front-proxy-client" certificate and key

[certs] Generating "etcd/ca" certificate and key

[certs] Generating "etcd/server" certificate and key

[certs] etcd/server serving cert is signed for DNS names [k8s01 localhost] and IPs [192.168.15.3 127.0.0.1 ::1]

[certs] Generating "etcd/peer" certificate and key

[certs] etcd/peer serving cert is signed for DNS names [k8s01 localhost] and IPs [192.168.15.3 127.0.0.1 ::1]

[certs] Generating "etcd/healthcheck-client" certificate and key

[certs] Generating "apiserver-etcd-client" certificate and key

[certs] Generating "sa" key and public key

[kubeconfig] Using kubeconfig folder "/etc/kubernetes"

[kubeconfig] Writing "admin.conf" kubeconfig file

[kubeconfig] Writing "kubelet.conf" kubeconfig file

[kubeconfig] Writing "controller-manager.conf" kubeconfig file

[kubeconfig] Writing "scheduler.conf" kubeconfig file

[kubelet-start] Writing kubelet environment file with flags to file "/var/lib/kubelet/kubeadm-flags.env"

[kubelet-start] Writing kubelet configuration to file "/var/lib/kubelet/config.yaml"

[kubelet-start] Starting the kubelet

[control-plane] Using manifest folder "/etc/kubernetes/manifests"

[control-plane] Creating static Pod manifest for "kube-apiserver"

[control-plane] Creating static Pod manifest for "kube-controller-manager"

[control-plane] Creating static Pod manifest for "kube-scheduler"

[etcd] Creating static Pod manifest for local etcd in "/etc/kubernetes/manifests"

[wait-control-plane] Waiting for the kubelet to boot up the control plane as static Pods from directory "/etc/kubernetes/manifests". This can take up to 4m0s

[apiclient] All control plane components are healthy after 13.005676 seconds

[upload-config] Storing the configuration used in ConfigMap "kubeadm-config" in the "kube-system" Namespace

[kubelet] Creating a ConfigMap "kubelet-config-1.23" in namespace kube-system with the configuration for the kubelets in the cluster

NOTE: The "kubelet-config-1.23" naming of the kubelet ConfigMap is deprecated. Once the UnversionedKubeletConfigMap feature gate graduates to Beta the default name will become just "kubelet-config". Kubeadm upgrade will handle this transition transparently.

[upload-certs] Skipping phase. Please see --upload-certs

[mark-control-plane] Marking the node k8s01 as control-plane by adding the labels: [node-role.kubernetes.io/master(deprecated) node-role.kubernetes.io/control-plane node.kubernetes.io/exclude-from-external-load-balancers]

[mark-control-plane] Marking the node k8s01 as control-plane by adding the taints [node-role.kubernetes.io/master:NoSchedule]

[bootstrap-token] Using token: 9ouumw.zuukj1hnqa0m724j

[bootstrap-token] Configuring bootstrap tokens, cluster-info ConfigMap, RBAC Roles

[bootstrap-token] configured RBAC rules to allow Node Bootstrap tokens to get nodes

[bootstrap-token] configured RBAC rules to allow Node Bootstrap tokens to post CSRs in order for nodes to get long term certificate credentials

[bootstrap-token] configured RBAC rules to allow the csrapprover controller automatically approve CSRs from a Node Bootstrap Token

[bootstrap-token] configured RBAC rules to allow certificate rotation for all node client certificates in the cluster

[bootstrap-token] Creating the "cluster-info" ConfigMap in the "kube-public" namespace

[kubelet-finalize] Updating "/etc/kubernetes/kubelet.conf" to point to a rotatable kubelet client certificate and key

[addons] Applied essential addon: CoreDNS

[addons] Applied essential addon: kube-proxy

Your Kubernetes control-plane has initialized successfully!

To start using your cluster, you need to run the following as a regular user:

mkdir -p $HOME/.kube

sudo cp -i /etc/kubernetes/admin.conf $HOME/.kube/config

sudo chown $(id -u):$(id -g) $HOME/.kube/config

Alternatively, if you are the root user, you can run:

export KUBECONFIG=/etc/kubernetes/admin.conf

You should now deploy a pod network to the cluster.

Run "kubectl apply -f [podnetwork].yaml" with one of the options listed at:

https://kubernetes.io/docs/concepts/cluster-administration/addons/

Then you can join any number of worker nodes by running the following on each as root:

kubeadm join 192.168.15.3:6443 --token 9ouumw.zuukj1hnqa0m724j \

--discovery-token-ca-cert-hash sha256:c8f53b84d38297eb0352a3e0e1597c083d767bfd287f126a8ce82036ef8511be

[root@k8s01 ~]#
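The value passed to --discovery-token-ca-cert-hash in the join command is the SHA-256 digest of the cluster CA's public key. If the init output is lost, it can be recomputed from ca.crt on the master. The sketch below follows the openssl pipeline given in the kubeadm documentation and assumes an RSA CA key, which is kubeadm's default; ca_cert_hash is a hypothetical wrapper name.

```shell
# Print the discovery hash (sha256:<hex>) for a CA certificate.
# $1: path to the CA certificate, defaulting to kubeadm's location.
ca_cert_hash() {
  local ca="${1:-/etc/kubernetes/pki/ca.crt}" hex
  hex=$(openssl x509 -pubkey -noout -in "$ca" \
    | openssl rsa -pubin -outform der 2>/dev/null \
    | openssl dgst -sha256 -hex \
    | sed 's/^.* //')          # drop the "(stdin)= " style prefix
  echo "sha256:${hex}"
}
```

Run on the master in this post, ca_cert_hash should reproduce the sha256 value shown in the join command above.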

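This article describes in detail how to install Kubernetes on Linux, covering the key steps: pre-flight checks, image pulling, certificate generation, kubeconfig setup, kubelet startup, control-plane setup, marking the control-plane node, and creating the bootstrap token.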
