原理

AdaBoost,是英文"Adaptive Boosting"(自适应增强)的缩写,由Yoav Freund和Robert Schapire在1995年提出。它的自适应在于:前一个基本分类器分错的样本会得到加强,加权后的全体样本再次被用来训练下一个基本分类器。同时,在每一轮中加入一个新的弱分类器,直到达到某个预定的足够小的错误率或达到预先指定的最大迭代次数。

整个Adaboost 迭代算法就3步:

- 初始化训练数据的权值分布。如果有N个样本,则每一个训练样本最开始时都被赋予相同的权值:1/N。

- 训练弱分类器。具体训练过程中,如果某个样本点已经被准确地分类,那么在构造下一个训练集中,它的权值就被降低;相反,如果某个样本点没有被准确地分类,那么它的权值就得到提高。然后,权值更新过的样本集被用于训练下一个分类器,整个训练过程如此迭代地进行下去。

- 将各个训练得到的弱分类器组合成强分类器。各个弱分类器的训练过程结束后,加大分类误差率小的弱分类器的权重,使其在最终的分类函数中起着较大的决定作用,而降低分类误差率大的弱分类器的权重,使其在最终的分类函数中起着较小的决定作用。换言之,误差率低的弱分类器在最终分类器中占的权重较大,否则较小。

AdaBoost可以表示为基分类器的线性组合:

H

(

x

)

=

∑

i

=

1

N

α

i

h

i

(

x

)

H(\boldsymbol{x})=\sum_{i=1}^{N} \alpha_{i} h_{i}(\boldsymbol{x})

H(x)=i=1∑Nαihi(x)

其中

h

i

(

x

)

,

i

=

1

,

2

,

.

.

.

h_i(x),i=1,2,...

hi(x),i=1,2,...表示基分类器,

α

i

\alpha_i

αi是每个基分类器对应的权重,表示如下:

α

i

=

1

2

ln

(

1

−

ϵ

i

ϵ

i

)

\alpha_{i}=\frac{1}{2} \ln \left(\frac{1-\epsilon_{i}}{\epsilon_{i}}\right)

αi=21ln(ϵi1−ϵi)

其中

ϵ

i

\epsilon_{i}

ϵi是每个弱分类器的错误率。

实战一

from sklearn.ensemble import AdaBoostClassifier

from sklearn import datasets

from sklearn.model_selection import train_test_split

from sklearn import metrics

iris = datasets.load_iris()

X = iris.data

y = iris.target

X_train, X_test, y_train, y_test = train_test_split(X, y, test_size=0.2)

print(f"训练数据量:{len(X_train)},测试数据量:{len(X_test)}")

# 定义模型

model = AdaBoostClassifier(n_estimators=50,learning_rate=1.5)

# 训练

model.fit(X_train, y_train)

# 预测

y_pred = model.predict(X_test)

acc = metrics.accuracy_score(y_test, y_pred) # 准确率

print(f"准确率:{acc:.2}")

训练数据量:120,测试数据量:30

准确率:0.93

实战二

import pandas as pd

from sklearn.datasets import load_wine

from sklearn.model_selection import train_test_split

from sklearn.metrics import accuracy_score

from sklearn.tree import DecisionTreeClassifier

from sklearn.ensemble import AdaBoostClassifier

from sklearn.model_selection import GridSearchCV

import matplotlib.pyplot as plt

wine = load_wine()

print(f"所有特征:{wine.feature_names}")

X = pd.DataFrame(wine.data, columns=wine.feature_names)

y = pd.Series(wine.target)

X_train, X_test, y_train, y_test = train_test_split(X, y, test_size=0.20, random_state=1)

所有特征:['alcohol', 'malic_acid', 'ash', 'alcalinity_of_ash', 'magnesium', 'total_phenols', 'flavanoids', 'nonflavanoid_phenols', 'proanthocyanins', 'color_intensity', 'hue', 'od280/od315_of_diluted_wines', 'proline']

base_model = DecisionTreeClassifier(max_depth=1, criterion='gini',random_state=1).fit(X_train, y_train)

y_pred = base_model.predict(X_test)

print(f"决策树的准确率:{accuracy_score(y_test,y_pred):.3f}")

决策树的准确率:0.694

from sklearn.ensemble import AdaBoostClassifier

model = AdaBoostClassifier(base_estimator=base_model,

n_estimators=50,

learning_rate=0.5,

algorithm='SAMME.R',

random_state=1)

model.fit(X_train, y_train)

y_pred = model.predict(X_test)

print(f"AdaBoost的准确率:{accuracy_score(y_test,y_pred):.3f}")

AdaBoost的准确率:0.972

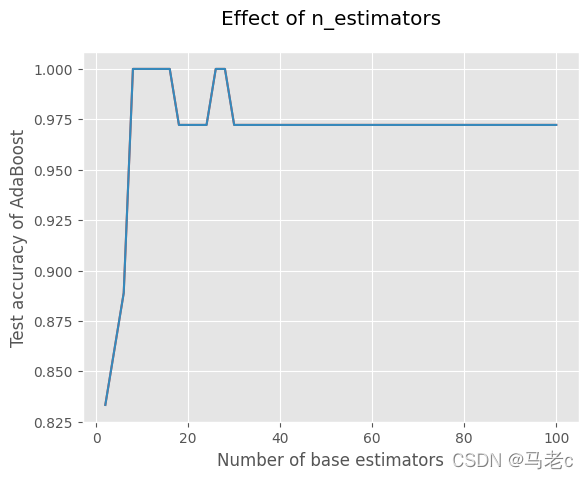

测试估计器个数的影响

x = list(range(2, 102, 2))

y = []

for i in x:

model = AdaBoostClassifier(base_estimator=base_model,

n_estimators=i,

learning_rate=0.5,

algorithm='SAMME.R',

random_state=1)

model.fit(X_train, y_train)

model_test_sc = accuracy_score(y_test, model.predict(X_test))

y.append(model_test_sc)

plt.style.use('ggplot')

plt.title("Effect of n_estimators", pad=20)

plt.xlabel("Number of base estimators")

plt.ylabel("Test accuracy of AdaBoost")

plt.plot(x, y)

plt.show()

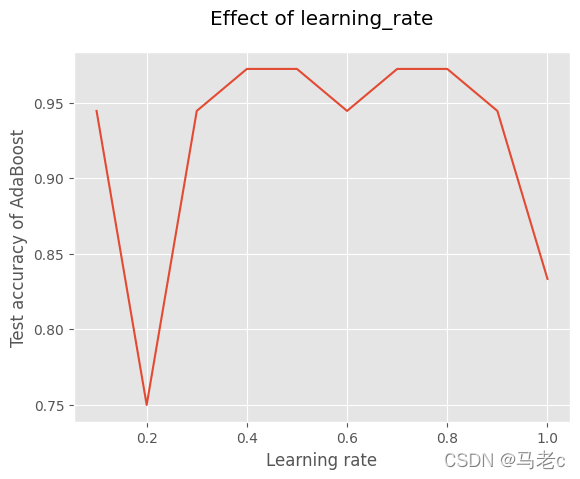

测试学习率的影响

x = [0.1, 0.2, 0.3, 0.4, 0.5, 0.6, 0.7, 0.8, 0.9, 1]

y = []

for i in x:

model = AdaBoostClassifier(base_estimator=base_model,

n_estimators=50,

learning_rate=i,

algorithm='SAMME.R',

random_state=1)

model.fit(X_train, y_train)

model_test_sc = accuracy_score(y_test, model.predict(X_test))

y.append(model_test_sc)

plt.title("Effect of learning_rate", pad=20)

plt.xlabel("Learning rate")

plt.ylabel("Test accuracy of AdaBoost")

plt.plot(x, y)

plt.show()

使用GridSearchCV自动调参

hyperparameter_space = {'n_estimators':list(range(2, 102, 2)),

'learning_rate':[0.1, 0.2, 0.3, 0.4, 0.5, 0.6, 0.7, 0.8, 0.9, 1]}

gs = GridSearchCV(AdaBoostClassifier(base_estimator=base_model,

algorithm='SAMME.R',

random_state=1),

param_grid=hyperparameter_space,

scoring="accuracy", n_jobs=-1, cv=5)

gs.fit(X_train, y_train)

print("最优超参数:", gs.best_params_)

最优超参数: {'learning_rate': 0.8, 'n_estimators': 42}

本文深入介绍了AdaBoost算法的原理,包括其自适应增强的特性及迭代过程。通过两个实战例子展示了如何使用AdaBoost提升分类器性能,分别在鸢尾花数据集和葡萄酒数据集上实现。接着探讨了估计器个数和学习率对模型准确性的影响,并利用GridSearchCV进行超参数调优,找到了最优的n_estimators和learning_rate组合,进一步提高了模型的预测精度。

本文深入介绍了AdaBoost算法的原理,包括其自适应增强的特性及迭代过程。通过两个实战例子展示了如何使用AdaBoost提升分类器性能,分别在鸢尾花数据集和葡萄酒数据集上实现。接着探讨了估计器个数和学习率对模型准确性的影响,并利用GridSearchCV进行超参数调优,找到了最优的n_estimators和learning_rate组合,进一步提高了模型的预测精度。

430

430

被折叠的 条评论

为什么被折叠?

被折叠的 条评论

为什么被折叠?