Workflow:

1. Package the runnable project as a jar (click package under Lifecycle in the Maven panel on the left) and upload it to the Linux cluster (I uploaded it to hdp-2).

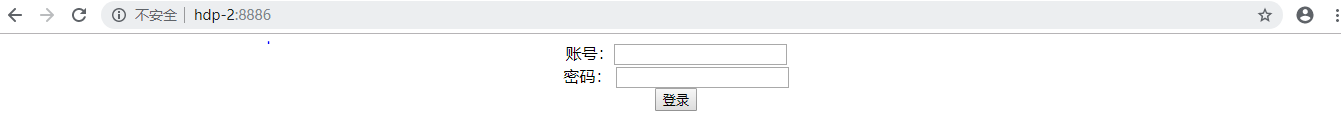

Run java -jar neiminda-0.0.1-SNAPSHOT.jar. To test, access hdp-2:8886.
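The availability check can also be scripted. The following is a minimal sketch, assuming the application answers plain HTTP on hdp-2:8886 at the root path; the class name `ServiceCheck` and the 3-second timeouts are illustrative choices, not part of the original project.

```java
import java.io.IOException;
import java.net.HttpURLConnection;
import java.net.URL;

// Hypothetical helper, not part of the original project.
final class ServiceCheck {

    // Returns the HTTP status code, or -1 if the request could not be completed.
    static int statusOf(String address) {
        try {
            HttpURLConnection conn = (HttpURLConnection) new URL(address).openConnection();
            conn.setRequestMethod("GET");
            conn.setConnectTimeout(3000); // assumed timeout, adjust as needed
            conn.setReadTimeout(3000);
            try {
                return conn.getResponseCode();
            } finally {
                conn.disconnect();
            }
        } catch (IOException e) {
            // Covers malformed URLs, refused connections, and timeouts.
            return -1;
        }
    }

    public static void main(String[] args) {
        // Address taken from the article; pass another one as the first argument.
        String address = args.length > 0 ? args[0] : "http://hdp-2:8886/";
        System.out.println(address + " -> " + statusOf(address));
    }
}
```

Any status other than -1 means the jar is up and answering requests; whether the root path returns 200 depends on the application's own routes.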

2. Start nginx. Nginx is started here to generate access logs; it can also do load balancing and reverse proxying (load balancing is not used here).

The configuration file is nginx.conf under /usr/local/nginx/conf.

Start nginx: ./nginx

Stop nginx: ./nginx -s quit

Reload the nginx configuration: ./nginx -s reload

#user nobody;

worker_processes 1;

#error_log logs/error.log;

#error_log logs/error.log notice;

#error_log logs/error.log info;

#pid logs/nginx.pid;

events {

worker_connections 1024;

}

http {

include mime.types;

default_type application/octet-stream;

# format of the generated log

log_format main '$remote_addr';

#access_log logs/access.log main;

sendfile on;

#tcp_nopush on;

#keepalive_timeout 0;

keepalive_timeout 65;

#gzip on;

upstream frame-tomcat {

# address that nginx forwards requests to

server hdp-2:8886 ;

}

server {

listen 80;

# server name of nginx

server_name hdp-1;

#charset koi8-r;

# where nginx stores the access log it generates

access_log logs/log.frame.access.log main;

location / {

# root html;

# index index.html index.htm;

proxy_pass http://frame-tomcat;

}

error_page 500 502 503 504 /50x.html;

location = /50x.html {

root html;

}

}

server {

listen 80;

server_name localhost;

#charset koi8-r;

#access_log logs/host.access.log main;

location / {

root html;

index index.html index.htm;

}

#error_page 404 /404.html;

# redirect server error pages to the static page /50x.html

#

error_page 500 502 503 504 /50x.html;

location = /50x.html {

root html;

}

# proxy the PHP scripts to Apache listening on 127.0.0.1:80

#

#location ~ \.php$ {

# proxy_pass http://127.0.0.1;

#}

# pass the PHP scripts to FastCGI server listening on 127.0.0.1:9000

#

#location ~ \.php$ {

# root html;

# fastcgi_pass 127.0.0.1:9000;

# fastcgi_index index.php;

# fastcgi_param SCRIPT_FILENAME /scripts$fastcgi_script_name;

# include fastcgi_params;

#}

# deny access to .htaccess files, if Apache's document root

# concurs with nginx's one

#

#location ~ /\.ht {

# deny all;

#}

}

# another virtual host using mix of IP-, name-, and port-based configuration

#

#server {

# listen 8000;

# listen somename:8080;

# server_name somename alias another.alias;

# location / {

# root html;

# index index.html index.htm;

# }

#}

# HTTPS server

#

#server {

# listen 443;

# server_name localhost;

# ssl on;

# ssl_certificate cert.pem;

# ssl_certificate_key cert.key;

# ssl_session_timeout 5m;

# ssl_protocols SSLv2 SSLv3 TLSv1;

# ssl_ciphers HIGH:!aNULL:!MD5;

# ssl_prefer_server_ciphers on;

# location / {

# root html;

# index index.html index.htm;

# }

#}

}

Note: at this point the logs generated by nginx are written to log.frame.access.log under nginx's logs directory.

3. Start Flume to collect the logs generated by nginx and sink them to Kafka.

Check the Flume configuration file (tail-kafka.conf under the flume directory):

# source: where the data comes from

a1.sources = source1

# sink: where the data is delivered

a1.sinks = k1

# channel: the pipe between source and sink

a1.channels = c1

# exec: the data comes from an executable command

a1.sources.source1.type = exec

# the command to run; it follows the content of a file

a1.sources.source1.command = tail -F /usr/local/nginx/logs/log.frame.access.log

# Describe the sink

# sink type for delivering to Kafka

a1.sinks.k1.type = org.apache.flume.sink.kafka.KafkaSink

# the topic is the link between producers and consumers

a1.sinks.k1.topic = test

# Kafka broker addresses

a1.sinks.k1.brokerList = hdp-1:9092, hdp-2:9092, hdp-3:9092

a1.sinks.k1.requiredAcks = 1

a1.sinks.k1.batchSize = 20

a1.sinks.k1.channel = c1

# Use a channel which buffers events in memory

a1.channels.c1.type = memory

a1.channels.c1.capacity = 1000

a1.channels.c1.transactionCapacity = 100

# Bind the source and sink to the channel

a1.sources.source1.channels = c1

a1.sinks.k1.channel = c1

Start Flume: from Flume's bin directory, run ./flume-ng agent -c ../conf/ -f ../tail-kafka.conf -n a1 -Dflume.root.logger=INFO,console

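Note: the access_log path configured in nginx.conf (under nginx's conf directory) must point to the same file that the exec source in Flume's tail-kafka.conf tails, i.e. the path being tracked.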
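The path check described in the note can be sketched in a few lines of Java. This is an illustration only: the class `LogPathCheck` and both helper methods are hypothetical, and the sketch assumes that nginx resolves a relative access_log path against its install prefix (/usr/local/nginx here) and that the tailed file is the last token of the Flume command.

```java
// Hypothetical helper, not part of the original project.
final class LogPathCheck {

    // Extracts the file from a command such as "tail -F /path/to/file"
    // (assumes the file is the last whitespace-separated token).
    static String tailedFile(String command) {
        String[] parts = command.trim().split("\\s+");
        return parts[parts.length - 1];
    }

    // Resolves an access_log value against the nginx prefix when it is relative.
    static String resolveAccessLog(String prefix, String accessLog) {
        if (accessLog.startsWith("/")) {
            return accessLog;
        }
        return prefix.endsWith("/") ? prefix + accessLog : prefix + "/" + accessLog;
    }

    public static void main(String[] args) {
        // Values copied from nginx.conf and tail-kafka.conf in this article.
        String nginxLog = resolveAccessLog("/usr/local/nginx", "logs/log.frame.access.log");
        String flumeLog = tailedFile("tail -F /usr/local/nginx/logs/log.frame.access.log");
        System.out.println(nginxLog.equals(flumeLog)
                ? "paths match"
                : "MISMATCH: " + nginxLog + " vs " + flumeLog);
    }
}
```

With the two values used in this article, both sides resolve to /usr/local/nginx/logs/log.frame.access.log, so the program reports that the paths match.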

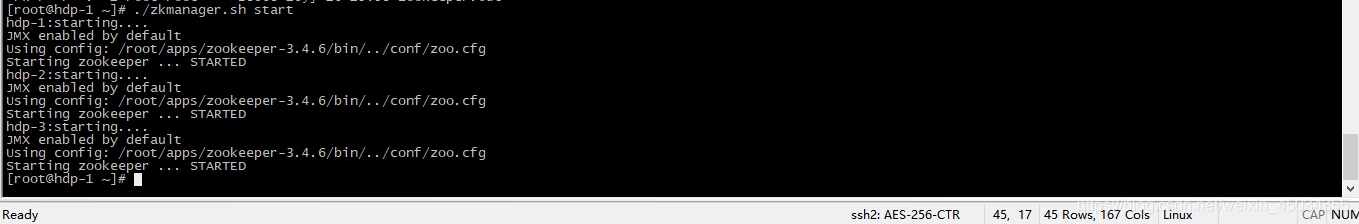

4. Start Kafka. ZooKeeper must be started before Kafka. A Kafka consumer then receives the data and writes it to a temporary file.

Start ZooKeeper

Start Kafka

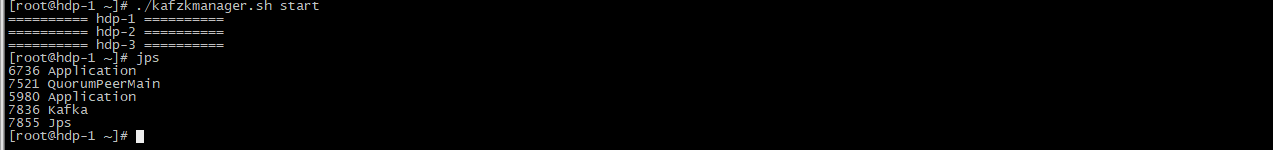

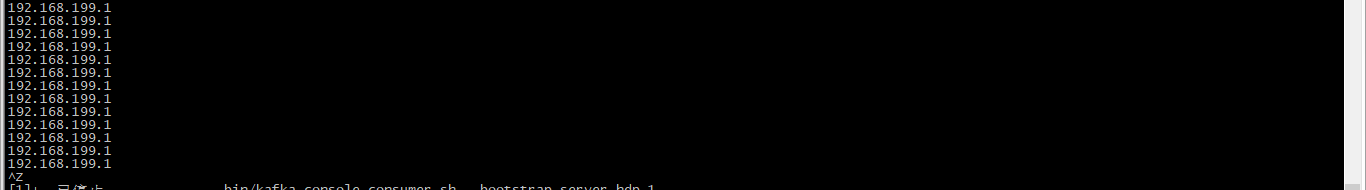

First start a console consumer on Kafka to check whether the data is arriving:

bin/kafka-console-consumer.sh --bootstrap-server hdp-1:9092,hdp-2:9092,hdp-3:9092 --topic test --from-beginning

5. Use Java code to implement a consumer that receives the data and writes a temporary file, d:/testlog/access.log

package com.zpark.kafkatest.one;

import org.apache.hadoop.conf.Configuration;

import org.apache.hadoop.fs.FSDataOutputStream;

import org.apache.hadoop.fs.FileSystem;

import org.apache.hadoop.fs.Path;

import org.apache.kafka.clients.consumer.ConsumerRecord;

import org.apache.kafka.clients.consumer.ConsumerRecords;

import org.apache.kafka.clients.consumer.KafkaConsumer;

import org.apache.log4j.Logger;

import java.io.BufferedWriter;

import java.io.FileOutputStream;

import java.io.OutputStreamWriter;

import java.net.URI;

import java.net.URISyntaxException;

import java.util.Collections;

import java.util.Properties;

public class ConsumerLocal {

public static void main(String[] args) {

Logger logger = Logger.getLogger("logRollingFile");

// call the method that receives messages

receiveMsg();

}

/**

* Read the data from the Kafka topic (test)

*/

private static void receiveMsg() {

Logger logger = Logger.getLogger("logRollingFile");

Properties properties = new Properties();

properties.put("bootstrap.servers", "hdp-2:9092");

properties.put("key.deserializer", "org.apache.kafka.common.serialization.StringDeserializer");

properties.put("value.deserializer", "org.apache.kafka.common.serialization.StringDeserializer");

properties.put("group.id","aaaa");

properties.put("enable.auto.commit", true);

// create the consumer and subscribe to the topic

KafkaConsumer<String, String> consumer = new KafkaConsumer<String, String>(properties);

consumer.subscribe(Collections.singleton("test"));

// URI uri = null;

// Configuration conf = null;

// String user = "root";

// try {

// uri = new URI("hdfs://hdp-1:9000");

// conf = new Configuration();

// conf = new Configuration();

// //dfs.replication: number of replicas in the distributed file system

// conf.set("dfs.replication", "2");

// //dfs.blocksize: block size of the distributed file system (a 100M file is split into 64M + 36M)

// conf.set("dfs.blocksize", "64m");

//

// } catch (URISyntaxException e) {

// e.printStackTrace();

// }

try {

FileOutputStream fos = new FileOutputStream("d:/testlog/access.log");

OutputStreamWriter osw = new OutputStreamWriter(fos);

// create the writer once, outside the loop

BufferedWriter bw = new BufferedWriter(osw);

// FileSystem fs = FileSystem.get(uri, conf, user);

// FSDataOutputStream fdos = fs.create(new Path("/cf.txt"));

while(true) {

// poll Kafka for new records

ConsumerRecords<String, String> records = consumer.poll(100);

for(ConsumerRecord<String, String> record: records) {

System.out.printf("key=%s,value=%s,offset=%s,topic=%s%n",record.key() , record.value() , record.offset(), record.topic());

logger.debug(record.value());

// write only the log line itself, so each line of the file is one value for the ip column of the Hive table

bw.write(record.value() + "\n");

}

// flush after every batch so the temporary file stays up to date

bw.flush();

}

}catch (Exception e) {

e.printStackTrace();

} finally {

consumer.close();

}

}

}

6. Upload the generated temporary file to the location of the Hive table in HDFS

Create the table in Hive: create external table flumetable2 (ip string) row format delimited location '/usr/';

Upload the local file to HDFS; the code:

package com.zpark.kafkatest.one;

import org.apache.hadoop.conf.Configuration;

import org.apache.hadoop.fs.FileSystem;

import org.apache.hadoop.fs.Path;

import java.io.IOException;

import java.net.URI;

import java.net.URISyntaxException;

public class toHDFS {

public static void main(String[] args) {

URI uri = null;

Configuration conf = null;

String user = "root";

FileSystem fs = null;

try {

uri = new URI("hdfs://hdp-1:9000");

conf = new Configuration();

//dfs.replication: number of replicas in the distributed file system

conf.set("dfs.replication", "2");

//dfs.blocksize: block size of the distributed file system (a 100M file is split into 64M + 36M)

conf.set("dfs.blocksize", "64m");

fs = FileSystem.get(uri, conf, user);

fs.copyFromLocalFile(new Path("d:/testlog/access.log"),new Path("/usr/a.txt"));

/**

* Write a file directly into HDFS

*/

// FSDataOutputStream out = fs.create(new Path("/bc.txt"));

// OutputStreamWriter outWriter = new OutputStreamWriter(out);

// BufferedWriter bw = new BufferedWriter(outWriter);

// bw.write("hello");

// bw.close();

// out.close();

fs.close();

} catch (URISyntaxException e) {

e.printStackTrace();

} catch (InterruptedException e) {

e.printStackTrace();

} catch (IOException e) {

e.printStackTrace();

} finally {

}

}

}

Note: start the Java program that uploads the local file to HDFS only after the consumer has received data and produced the temporary file.
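To make that ordering harder to get wrong, the upload can be guarded by a check that the temporary file exists and is not empty. The following is a minimal sketch using only the JDK; the class `UploadGuard` and the method `readyForUpload` are hypothetical names, and in the upload program the check would run before the call to `fs.copyFromLocalFile`.

```java
import java.io.IOException;
import java.nio.file.Files;
import java.nio.file.Path;
import java.nio.file.Paths;

// Hypothetical helper, not part of the original project.
final class UploadGuard {

    // True only if the path is a regular file that already contains data.
    static boolean readyForUpload(Path file) {
        try {
            return Files.isRegularFile(file) && Files.size(file) > 0;
        } catch (IOException e) {
            // Treat an unreadable file the same as a missing one.
            return false;
        }
    }

    public static void main(String[] args) {
        // Path taken from the article; pass another one as the first argument.
        Path file = Paths.get(args.length > 0 ? args[0] : "d:/testlog/access.log");
        if (readyForUpload(file)) {
            System.out.println("ready to upload: " + file);
        } else {
            System.out.println("not ready, run the consumer first: " + file);
        }
    }
}
```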

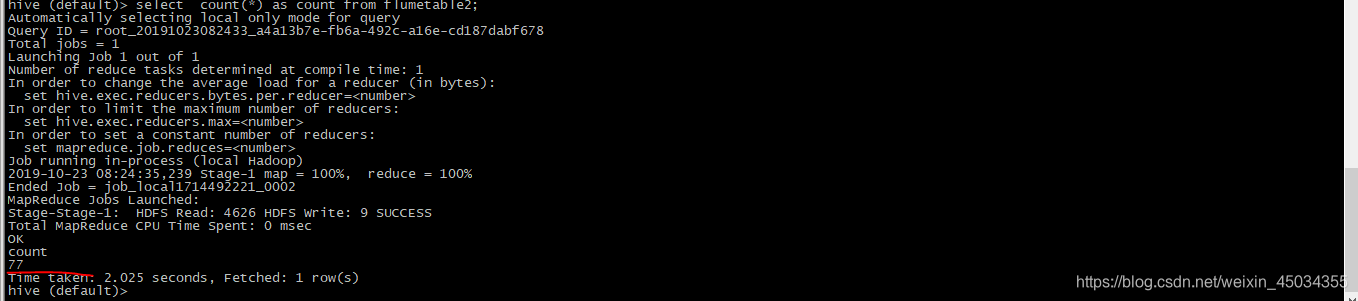

7. Analyze with Hive: select count(*) from flumetable2; returns the total number of page views (PV).
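As a cross-check on the Hive result, the same count can be taken from the local temporary file before it is uploaded. This is a sketch under the assumption that each line of the file is one logged request; the class `LocalPvCount` is hypothetical. It skips blank lines, whereas Hive counts every line of the file as a row, so the two numbers can differ if the file contains empty lines.

```java
import java.io.IOException;
import java.nio.charset.StandardCharsets;
import java.nio.file.Files;
import java.nio.file.Paths;
import java.util.List;

// Hypothetical helper, not part of the original project.
final class LocalPvCount {

    // Counts non-blank lines; each one is treated as a single page view.
    static long countPv(List<String> lines) {
        long pv = 0;
        for (String line : lines) {
            if (!line.trim().isEmpty()) {
                pv++;
            }
        }
        return pv;
    }

    public static void main(String[] args) throws IOException {
        // Path taken from the article; pass another one as the first argument.
        String file = args.length > 0 ? args[0] : "d:/testlog/access.log";
        List<String> lines = Files.readAllLines(Paths.get(file), StandardCharsets.UTF_8);
        System.out.println("pv=" + countPv(lines));
    }
}
```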

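This article describes how to package a Java project, deploy it to a Linux cluster, start Nginx and configure its access log, collect the log into Kafka with Flume, consume the data with a Java consumer that writes a temporary file, and finally upload that file to HDFS and count page views (PV) with Hive.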
